In this article, you’ll learn the architectural differences between structured outputs and function calling in modern language model systems.

Topics we’ll cover include:

- How structured outputs and function calling work under the hood.

- When to use each approach in real-world machine learning systems.

- The performance, cost, and reliability trade-offs between the two.

Introduction

Language models (LMs), at their core, are text-in and text-out systems. For a human conversing with one via a chat interface, this is perfectly fine. But for machine learning practitioners building autonomous agents and reliable software pipelines, raw unstructured text is a nightmare to parse, route, and integrate into deterministic systems.

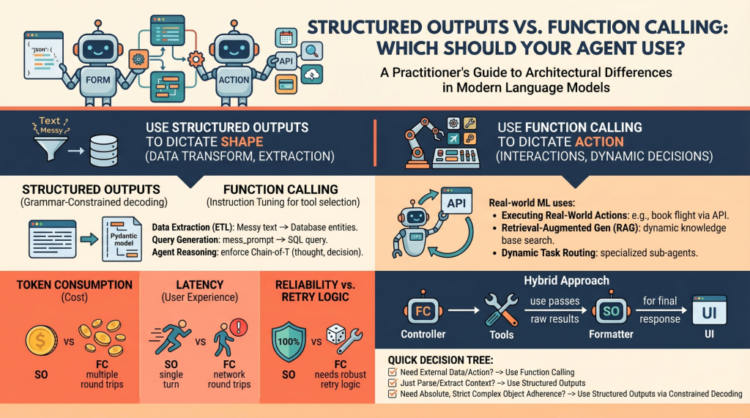

To build reliable agents, we need predictable, machine-readable outputs and the ability to interact seamlessly with external environments. To bridge this gap, modern LM API providers (like OpenAI, Anthropic, and Google Gemini) have introduced two primary mechanisms:

- Structured Outputs: Forcing the model to respond by adhering exactly to a predefined schema (most commonly a JSON schema or a Python Pydantic model)

- Function Calling (Tool Use): Equipping the model with a library of function definitions that it can choose to invoke dynamically based on the context of the prompt

At first glance, these two capabilities look very similar. Both typically rely on passing JSON schemas to the API under the hood, and both result in the model outputting structured key-value pairs instead of conversational prose. However, they serve fundamentally different architectural purposes in agent design.

Conflating the two is a common pitfall. Choosing the wrong mechanism for a feature can lead to brittle architectures, excessive latency, and unnecessarily inflated API costs. Let’s unpack the architectural distinctions between these methods and provide a decision-making framework for when to use each.

Unpacking the Mechanics: How They Work Under the Hood

To understand when to use these features, it’s necessary to understand how they differ at the mechanical and API levels.

Structured Outputs Mechanics

Historically, getting a model to output raw JSON relied on prompt engineering (“You are a helpful assistant that *only* speaks in JSON…”). This was error-prone, requiring extensive retry logic and validation.

Modern “structured outputs” fundamentally change this through grammar-constrained decoding. Libraries like Outlines, or native features like OpenAI’s Structured Outputs, mathematically restrict the token probabilities at generation time. If the chosen schema dictates that the next token must be a quotation mark or a specific boolean value, all non-compliant tokens are masked out (their probabilities are set to zero).

This is a single-turn generation strictly focused on form. The model is answering the prompt directly, but its vocabulary is confined to the exact structure you defined, with the aim of ensuring near 100% schema compliance.
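
To make the masking step concrete, here is a minimal sketch in plain Python. The tiny vocabulary, the scores, and the `allowed` set are all made up for illustration; real implementations derive the allowed set from a grammar or schema and work on the model’s full logit vector.

```python
import math

def masked_softmax(logits, allowed):
    """Turn raw scores into probabilities, giving zero mass to disallowed tokens."""
    # Only schema-compliant tokens take part in the normalization.
    exps = {
        tok: math.exp(score)
        for tok, score in logits.items()
        if tok in allowed
    }
    total = sum(exps.values())
    return {tok: exps.get(tok, 0.0) / total for tok in logits}

# Hypothetical next-token scores at a position where the schema requires a boolean.
logits = {"true": 1.2, "false": 0.4, "maybe": 2.5, '"': 0.1}
allowed = {"true", "false"}

probs = masked_softmax(logits, allowed)
next_token = max(probs, key=probs.get)  # greedy pick among the surviving tokens
```

Even though `maybe` has the highest raw score here, it can never be selected, which is why the output shape holds regardless of what the unconstrained model would have preferred.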

Function Calling Mechanics

Function calling, on the other hand, relies heavily on instruction tuning. During training, the model is fine-tuned to recognize situations where it lacks the necessary information to complete a prompt, or when the prompt explicitly asks it to take an action.

When you provide a model with a list of tools, you are telling it, “If you need to, you can pause your text generation, select a tool from this list, and generate the necessary arguments to run it.”

This is an inherently multi-turn, interactive flow:

- The model decides to call a tool and outputs the tool name and arguments.

- The model pauses. It cannot execute the code itself.

- Your application code executes the chosen function locally using the generated arguments.

- Your application returns the result of the function back to the model.

- The model synthesizes this new information and continues generating its final response.
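
The five steps above can be sketched as a small loop. Everything here is a stand-in: `fake_model` is a hypothetical scripted function playing the role of the LM API, `get_weather` is an invented tool, and the message dictionaries are a simplified format. No real provider SDK is called, and actual message shapes vary between providers.

```python
import json

def get_weather(city: str) -> dict:
    """Hypothetical local tool; a real one would call a weather service."""
    return {"city": city, "temp_c": 18}

TOOLS = {"get_weather": get_weather}

def fake_model(messages: list) -> dict:
    """Scripted stand-in for an LM API call (an assumption, not a real SDK)."""
    last = messages[-1]
    if last["role"] == "user":
        # Step 1: the "model" asks for a tool instead of answering.
        return {"type": "tool_call", "name": "get_weather",
                "arguments": json.dumps({"city": "Paris"})}
    # Step 5: a tool result is in context, so produce the final answer.
    result = json.loads(last["content"])
    return {"type": "text",
            "content": f"It is {result['temp_c']} C in {result['city']}."}

def run_agent(user_prompt: str, max_turns: int = 5) -> str:
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_turns):
        reply = fake_model(messages)
        if reply["type"] == "text":
            return reply["content"]
        # Steps 2-3: generation has stopped; the application runs the tool.
        args = json.loads(reply["arguments"])
        output = TOOLS[reply["name"]](**args)
        # Step 4: hand the result back to the model as a new message.
        messages.append({"role": "tool", "content": json.dumps(output)})
    raise RuntimeError("model did not finish within max_turns")

answer = run_agent("What is the weather in Paris?")
```

The `max_turns` cap is a design choice worth keeping in real systems, since nothing else stops a model from requesting tools indefinitely.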

When to Choose Structured Outputs

Structured outputs should be your default approach whenever the goal is pure data transformation, extraction, or standardization.

Primary Use Case: The model has all the necessary information within the prompt and context window; it just needs to reshape it.

Examples for Practitioners:

- Data Extraction (ETL): Processing raw, unstructured text like a customer support transcript and extracting entities (names, dates, complaint types, and sentiment scores) into a strict database schema.

- Query Generation: Converting a messy natural language user prompt into a strict, validated SQL query or a GraphQL payload. If the schema is broken, the query fails, making 100% adherence critical.

- Internal Agent Reasoning: Structuring an agent’s “thoughts” before it acts. You can enforce a Pydantic model that requires a `thought_process` field, an `assumptions` field, and finally a `determination` field. This forces a Chain-of-Thought process that is easily parsed by your backend logging systems.
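
As a rough illustration of the agent-reasoning case, the sketch below uses a standard-library dataclass as a dependency-free stand-in for the Pydantic model mentioned above. The field types, the `parse_step` helper, and the sample payload are assumptions; with Pydantic you would declare the same three fields on a `BaseModel` subclass and let it do the validation.

```python
import json
from dataclasses import dataclass, fields

@dataclass
class AgentStep:
    thought_process: str
    assumptions: list
    determination: str

def parse_step(raw: str) -> AgentStep:
    """Parse model output and reject anything that does not match the schema."""
    data = json.loads(raw)
    expected = {f.name for f in fields(AgentStep)}
    if not isinstance(data, dict) or set(data) != expected:
        raise ValueError(f"expected an object with keys {sorted(expected)}")
    if not isinstance(data["assumptions"], list):
        raise ValueError("assumptions must be a list")
    return AgentStep(**data)

# Invented example of what a schema-constrained response might look like.
raw_output = json.dumps({
    "thought_process": "The user wants a refund for a duplicate charge.",
    "assumptions": ["The second charge is the duplicate."],
    "determination": "escalate_to_billing",
})
step = parse_step(raw_output)
```

Because every step arrives as the same three named fields, a logging backend can store and query the reasoning without any free-text parsing.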

The Verdict: Use structured outputs when the “action” is simply formatting. Because there is no mid-generation interaction with external systems, this approach offers high reliability, lower latency, and, when constrained decoding is enforced, effectively no schema-parsing errors.

When to Choose Function Calling

Function calling is the engine of agentic autonomy. If structured outputs dictate the shape of the data, function calling dictates the control flow of the application.

Primary Use Case: External interactions, dynamic decision-making, and cases where the model needs to fetch information it doesn’t currently possess.

Examples for Practitioners:

- Executing Real-World Actions: Triggering external APIs based on conversational intent. If a user says, “Book my usual flight to New York,” the model uses function calling to trigger the `book_flight(destination="JFK")` tool.

- Retrieval-Augmented Generation (RAG): Instead of a naive RAG pipeline that always searches a vector database, an agent can use a `search_knowledge_base` tool. The model dynamically decides what search terms to use based on the context, or decides not to search at all if it already knows the answer.

- Dynamic Task Routing: For complex systems, a router model might use function calling to select the best specialized sub-agent (e.g., calling `delegate_to_billing_agent` versus `delegate_to_tech_support`) to handle a specific query.
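
The routing example can be sketched as a dispatch table. The two tool names come from the text above; the handler bodies, the `dispatch` helper, and the shape of the `tool_call` dictionary are assumptions made for illustration, since each provider returns tool calls in its own format.

```python
import json

def delegate_to_billing_agent(query: str) -> str:
    return f"[billing] handling: {query}"

def delegate_to_tech_support(query: str) -> str:
    return f"[tech support] handling: {query}"

ROUTES = {
    "delegate_to_billing_agent": delegate_to_billing_agent,
    "delegate_to_tech_support": delegate_to_tech_support,
}

def dispatch(tool_call: dict) -> str:
    """Run the tool the router model picked, guarding against bad output."""
    name = tool_call.get("name")
    if name not in ROUTES:
        # The model can name a tool that does not exist.
        raise KeyError(f"unknown tool: {name!r}")
    args = json.loads(tool_call.get("arguments", "{}"))
    if set(args) != {"query"}:
        # The model can also produce missing or extra arguments.
        raise ValueError(f"unexpected arguments: {sorted(args)}")
    return ROUTES[name](**args)

# Hypothetical router output for "I was charged twice this month".
call = {
    "name": "delegate_to_billing_agent",
    "arguments": json.dumps({"query": "I was charged twice this month"}),
}
result = dispatch(call)
```

Checking the tool name and arguments before execution keeps a bad model choice from turning into an unhandled exception deep inside a handler.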

The Verdict: Choose function calling when the model must interact with the outside world, fetch hidden data, or conditionally execute software logic mid-thought.

Performance, Latency, and Cost Implications

When deploying agents to production, the architectural choice between these two methods directly impacts your unit economics and user experience.

- Token Consumption: Function calling often requires multiple round trips. You send the system prompt, the model sends tool arguments, you send back the tool results, and the model finally sends the answer. Each step appends to the context window, accumulating input and output token usage. Structured outputs are typically resolved in a single, cheaper turn.

- Latency Overhead: The round trips inherent to function calling introduce significant network and processing latency. Your application has to wait for the model, execute local code, and wait for the model again. If your primary goal is just getting data into a specific format, structured outputs will typically be much faster.

- Reliability vs. Retry Logic: Strict structured outputs (via constrained decoding) offer near 100% schema fidelity. You can trust the output shape without complex parsing blocks. Function calling, however, is statistically unpredictable. The model might hallucinate an argument, select the wrong tool, or get stuck in a diagnostic loop. Production-grade function calling requires robust retry logic, fallback mechanisms, and careful error handling.
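
A back-of-the-envelope calculation shows why the extra round trip costs more. All token counts below are invented round numbers, and the sketch assumes the full conversation is re-sent as input on every call, which is how stateless chat APIs generally behave (prompt caching, where available, can reduce what is billed).

```python
def total_tokens(calls):
    """Sum input and output tokens over a list of (input, output) API calls."""
    return sum(inp + out for inp, out in calls)

PROMPT = 500        # system prompt + user message (assumed)
TOOL_ARGS = 40      # tool name and arguments emitted by the model (assumed)
TOOL_RESULT = 200   # tool output appended by the application (assumed)
ANSWER = 150        # final response (assumed)

# Structured output: one call, prompt in, answer out.
structured = [(PROMPT, ANSWER)]

# Function calling: the second call re-sends the prompt plus the tool exchange.
function_calling = [
    (PROMPT, TOOL_ARGS),
    (PROMPT + TOOL_ARGS + TOOL_RESULT, ANSWER),
]

structured_total = total_tokens(structured)              # 650
function_calling_total = total_tokens(function_calling)  # 1430
```

With these assumed numbers, a single tool call more than doubles the token count, and each further tool call re-sends an even longer history. Tool definitions also count as input tokens, which this sketch leaves out.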

Hybrid Approaches and Best Practices

In advanced agent architectures, the line between these two mechanisms often blurs, leading to hybrid approaches.

The Overlap:

It’s worth noting that modern function calling often relies on structured outputs under the hood to ensure the generated arguments match your function signatures. Conversely, you can design an agent that only uses structured outputs to return a JSON object describing an action that your deterministic system should execute after the generation is complete, effectively faking tool use without the multi-turn latency.
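
The second idea, using a structured output as an action descriptor, might look like the sketch below. The `action`/`params` schema, the `ALLOWED_ACTIONS` table, and the handler are hypothetical; the point is only that the application, not the model, decides whether and how to execute the described action once generation has ended.

```python
import json

def book_flight(destination: str) -> str:
    """Hypothetical handler; a real one would call a booking API."""
    return f"booked flight to {destination}"

ALLOWED_ACTIONS = {"book_flight": book_flight}

def execute_action(raw_json: str) -> str:
    """Validate a model-produced action descriptor, then run it once."""
    descriptor = json.loads(raw_json)
    action = descriptor["action"]
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not permitted: {action!r}")
    return ALLOWED_ACTIONS[action](**descriptor["params"])

# Assumed single-turn structured output from the model.
model_output = '{"action": "book_flight", "params": {"destination": "JFK"}}'
outcome = execute_action(model_output)
```

The trade-off is that the result is never fed back to the model, so this pattern only fits cases where the model does not need to see the outcome to finish its job.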

Architectural Recommendation:

- The “Controller” Pattern: Use function calling for the orchestrator or “brain” agent. Let it freely call tools to gather context, query databases, and execute APIs until it is satisfied it has accumulated the necessary state.

- The “Formatter” Pattern: Once the action is complete, pass the raw results through a final, cheaper model using only structured outputs. This ensures the final response matches your UI components or downstream REST API expectations.

Wrapping Up

LM engineering is rapidly transitioning from crafting conversational chatbots to building reliable, programmatic, autonomous agents. Understanding how to constrain and direct your models is the key to that transition.

TL;DR

- Use structured outputs to dictate the shape of the data

- Use function calling to dictate actions and interactions

The Practitioner’s Decision Tree

When building a new feature, run through this quick 3-step checklist:

- Do I need external data mid-thought or need to execute an action? ⭢ Use function calling

- Am I just parsing, extracting, or translating unstructured context into structured data? ⭢ Use structured outputs

- Do I need absolute, strict adherence to a complex nested object? ⭢ Use structured outputs via constrained decoding
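
For teams that like to encode such rules, the checklist can be written as a small function. The function name, parameter names, and return strings are illustrative only. The strict-schema check is tested before the general reshaping check because it is the more specific recommendation, and the final fallback is an assumption for inputs the checklist does not cover.

```python
def choose_mechanism(needs_external_data_or_action: bool,
                     reshaping_existing_context: bool,
                     needs_strict_nested_schema: bool) -> str:
    """Map the three checklist questions to a recommendation."""
    if needs_external_data_or_action:
        return "function calling"
    if needs_strict_nested_schema:
        return "structured outputs via constrained decoding"
    if reshaping_existing_context:
        return "structured outputs"
    # None of the checklist questions applied.
    return "no recommendation from this checklist"

# Example: an extraction feature that must fill a deeply nested schema.
recommendation = choose_mechanism(
    needs_external_data_or_action=False,
    reshaping_existing_context=True,
    needs_strict_nested_schema=True,
)
```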

Final Thought

The most effective AI engineers treat function calling as a powerful but unpredictable capability, one that should be used sparingly and surrounded by robust error handling. Conversely, structured outputs should be treated as the reliable, foundational glue that holds modern AI data pipelines together.
