In this article, you'll learn how to build production-ready AI agents in Python using Pydantic AI, with structured outputs, custom tools, and dependency injection.

Topics we will cover include:

- How to define Pydantic models for type-safe, validated agent outputs.

- How to register Python functions as tools the agent can invoke during its reasoning cycle.

- How to inject runtime dependencies such as database connections and API clients using a typed RunContext.

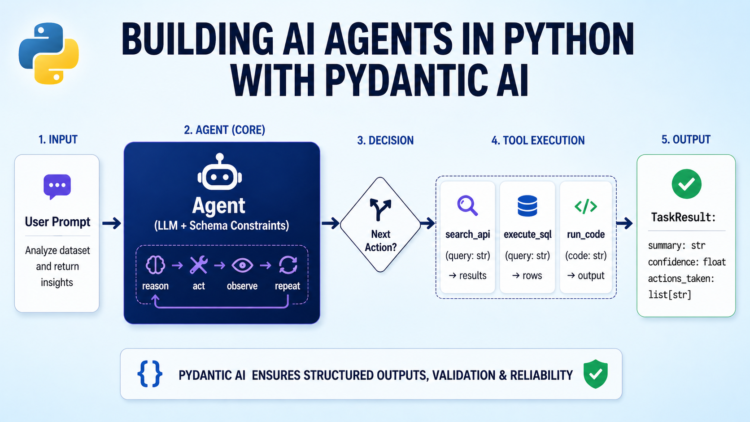

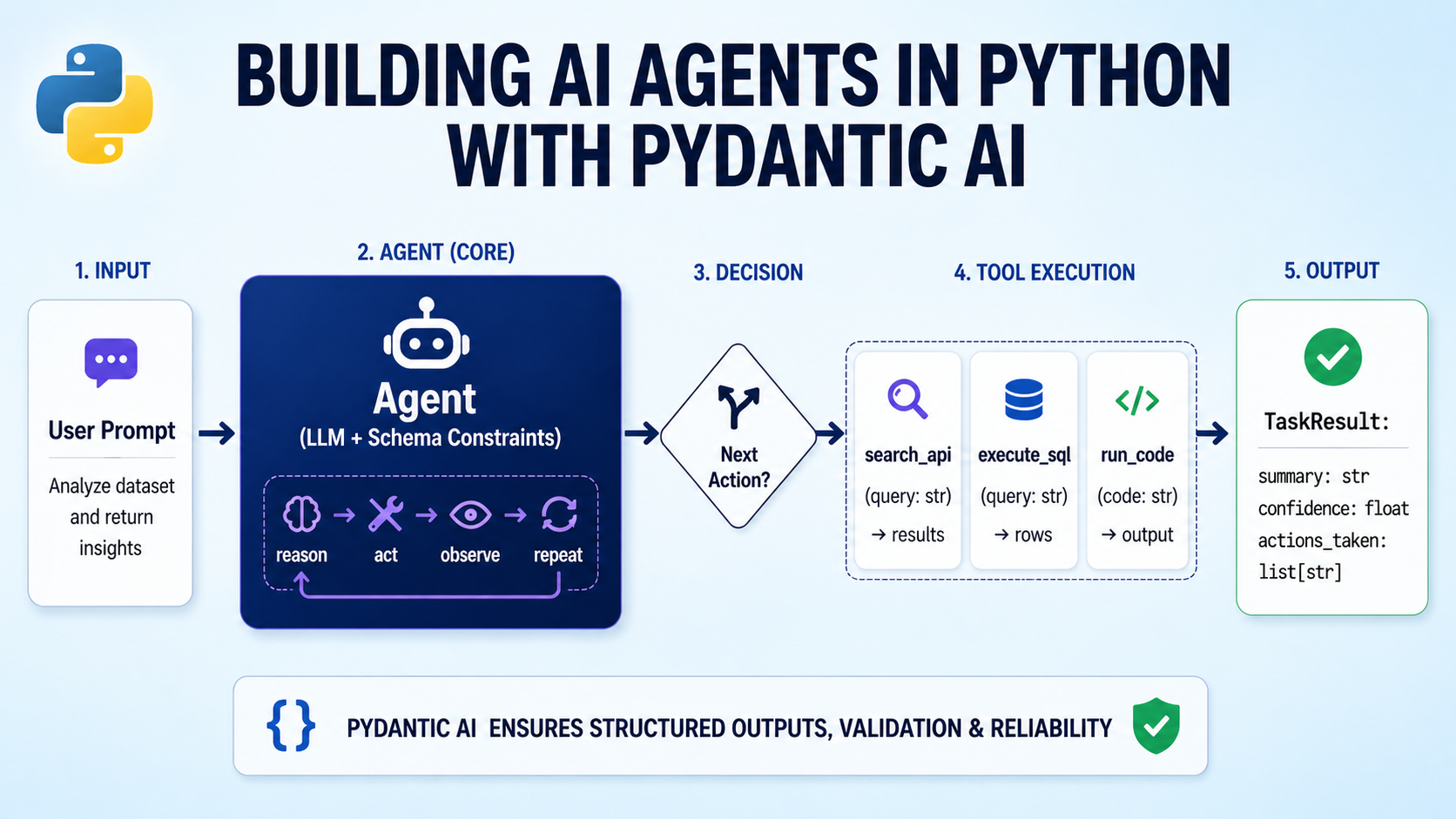

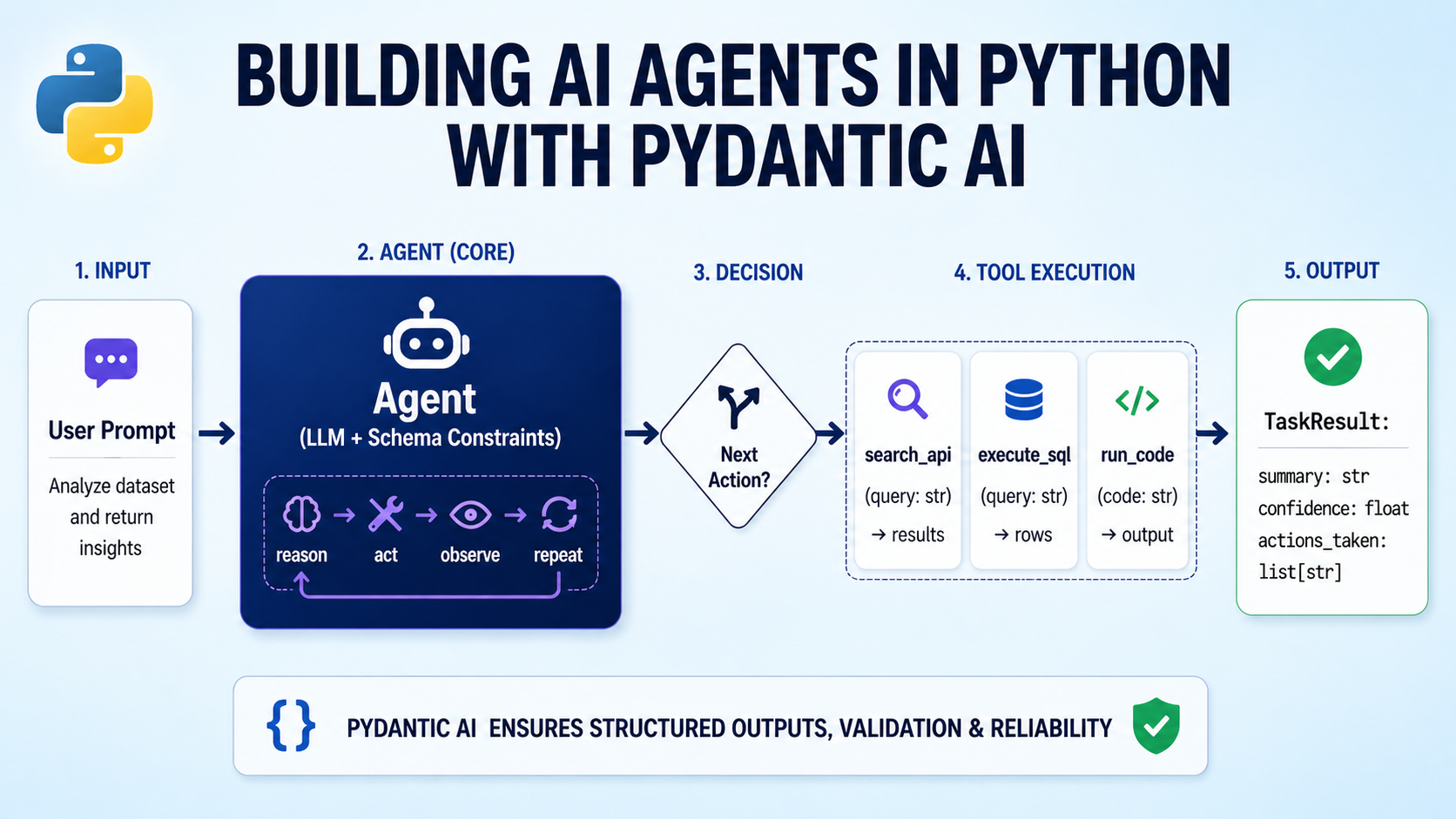

Building AI Agents in Python with Pydantic AI

Image by Author

Introduction

AI agents are becoming a core part of production software. They query databases, call APIs, reason over results, and return structured outputs. However, most AI agent orchestration frameworks used to build them still feel like glue code. They are often untyped, hard to test, and easy to break as systems grow.

Pydantic AI takes a different approach and brings strong typing, validation, and clear structure to agentic development. You do not have to stitch together loosely connected components, because Pydantic AI lets you work with familiar Python patterns. It also lets you validate inputs and outputs, define tools cleanly, and make agent behavior easier to understand. As a result, you can build agents that are more reliable and easier to maintain in real-world systems.

In this article, you'll learn how to:

- Build a structured agent with clear input and output models using Pydantic AI

- Add tools that the agent can call safely

- Inject runtime dependencies in a clean and testable way

- Use built-in capabilities in Pydantic AI like web search and extended reasoning

By the end, you'll have a solid foundation for building useful agents with Pydantic AI. The Colab notebook for this article is available on GitHub for reference.

Why Pydantic AI?

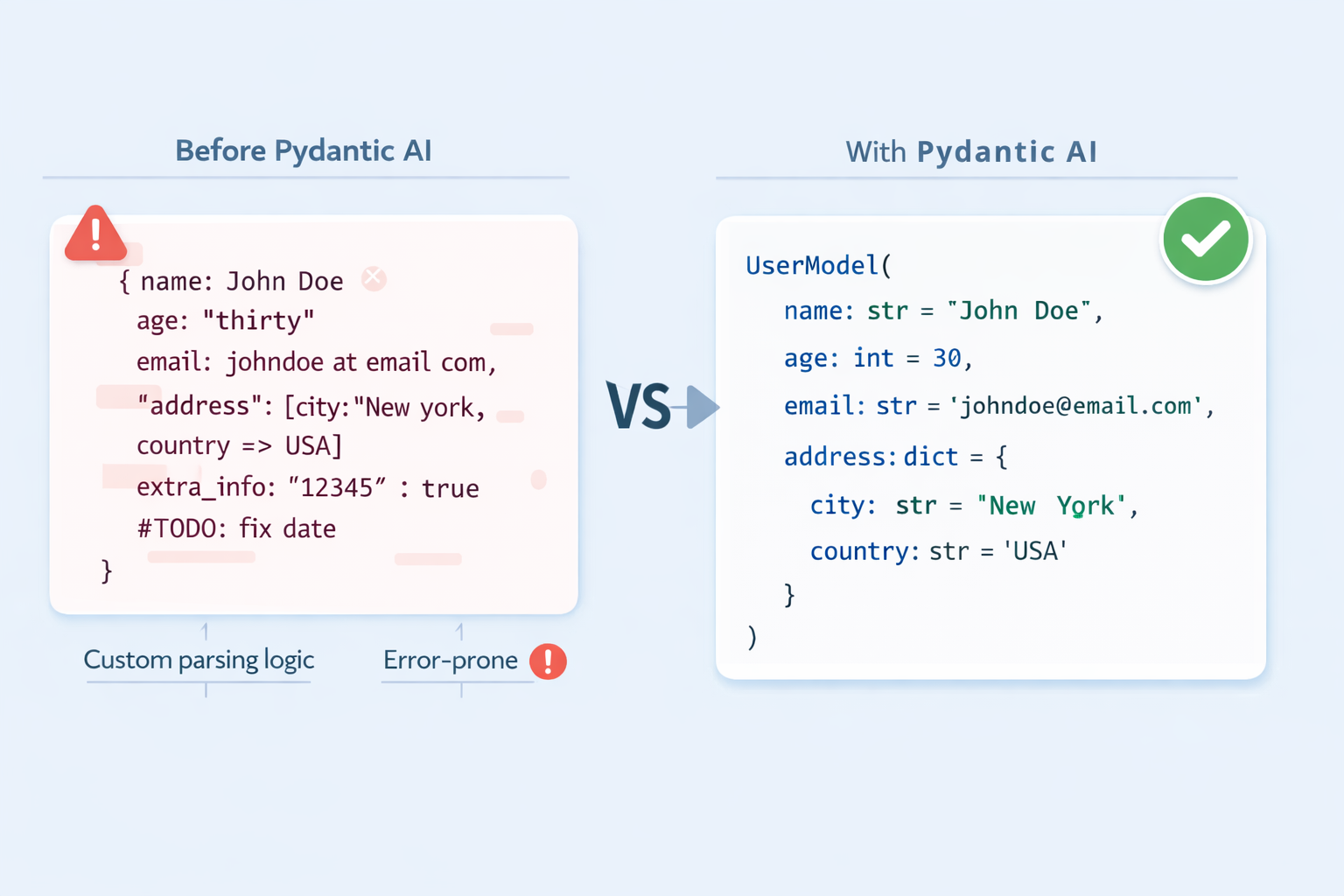

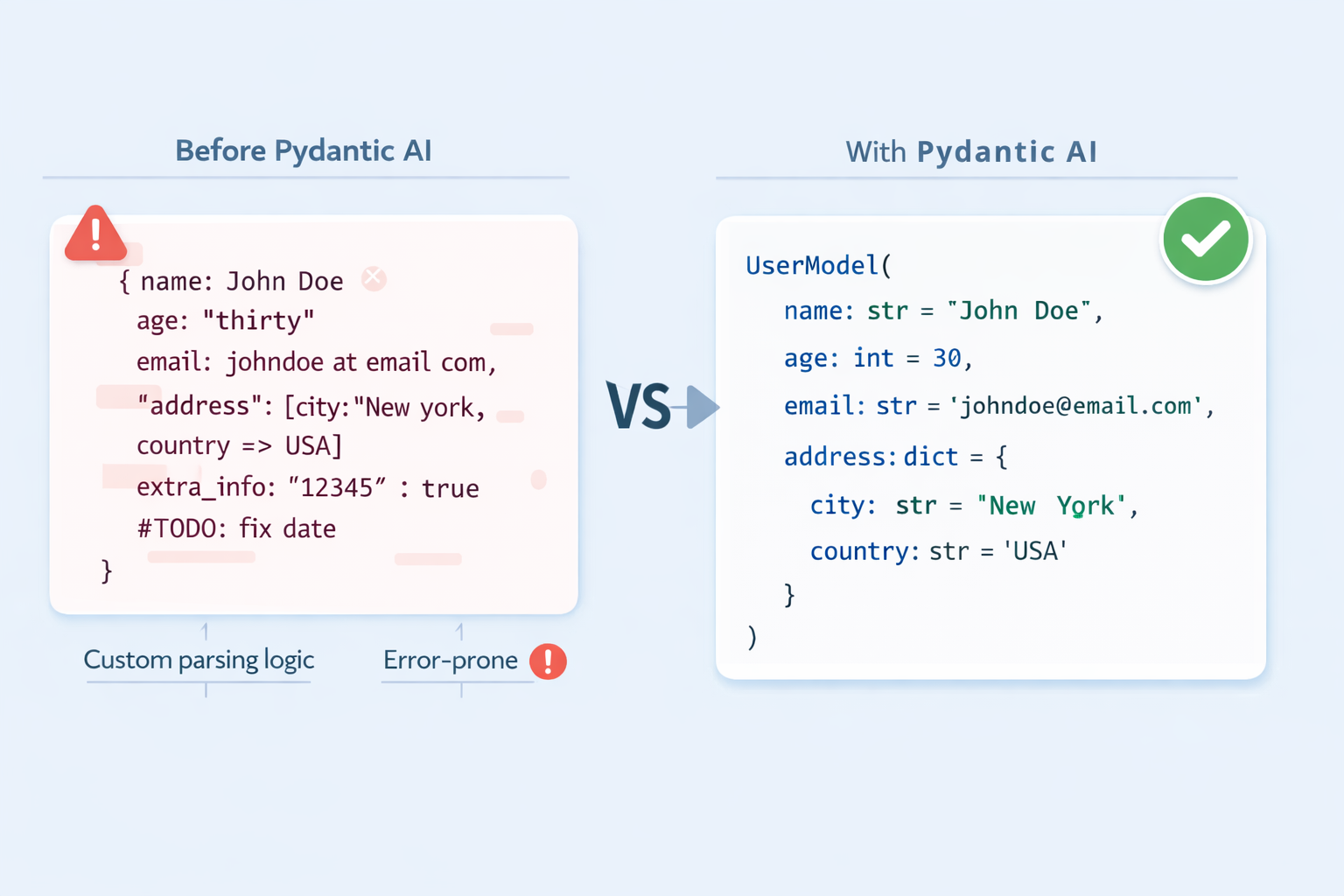

When you call an LLM directly, the response is a string. It might be JSON, Markdown, or something entirely unexpected. To parse that string into a structured object, you need custom logic, error handling, and the hope that the model keeps its formatting consistent. In agents that call tools across multiple steps, this fragility compounds quickly. Pydantic AI addresses the problem with the following ideas:

Type-safe structured outputs. You define a BaseModel for your agent's response. The framework instructs the LLM to conform to that schema, validates the response, and retries automatically on failure. You receive a validated Python object instead of a string.

Structured output with Pydantic AI

Function tools with docstring-driven dispatch. You register plain Python functions as tools. The LLM reads their type hints and docstrings to understand what each tool does and when to use it, so your tool definitions also serve as documentation.

Dependency injection without global state. Agents in production need database connections, API clients, and session data. Pydantic AI provides a type-safe pattern for injecting these at runtime through a RunContext. This keeps agent definitions clean and makes them easy to test.

Prerequisites

- Python 3.10+ installed in your working environment (the code below uses the dict | None annotation syntax)

- Familiarity with Pydantic BaseModel and type hints

- A basic understanding of how LLM prompts work

- An API key from a supported provider. This tutorial uses openai:gpt-4o-mini throughout, and the same patterns work with Anthropic's Claude, Google Gemini, and others by changing only the model string

Installing Pydantic AI

First, install the package and make your API key available in the environment. Create a virtual environment and install Pydantic AI:

```bash
python -m venv venv
source venv/bin/activate
pip install pydantic-ai
```

Then set your API key:

```bash
export OPENAI_API_KEY="YOUR-API-KEY-HERE"
```

Other model providers work the same way. Set the corresponding key instead, for example ANTHROPIC_API_KEY for Anthropic.

Building Your First Agent with Pydantic AI

With the package installed, you can create and run an agent in just a few lines. This step confirms that your setup works and introduces the two core arguments every agent needs.

```python
from pydantic_ai import Agent

agent = Agent(
    "openai:gpt-4o-mini",
    instructions="You are a concise assistant. Answer in one or two sentences.",
)
```

The model string follows the "provider:model-name" format. To switch providers, change only the string (for example, to an anthropic: or google-gla: model). Nothing else in the code needs to change. The instructions argument sets the system-level persona and behavior for every run.
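The model string splits into a provider prefix and a model name at the first colon. The split_model_string helper below is hypothetical and only shows the format. It is not how Pydantic AI resolves models internally.

```python
def split_model_string(model: str) -> tuple[str, str]:
    """Split a 'provider:model-name' string at the first colon (hypothetical helper)."""
    provider, sep, name = model.partition(":")
    if not sep or not provider or not name:
        raise ValueError(f"expected 'provider:model-name', got {model!r}")
    return provider, name


print(split_model_string("openai:gpt-4o-mini"))  # ('openai', 'gpt-4o-mini')
```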

Now run the agent and print its output:

```python
result = agent.run_sync("What is a large language model?")
print(result.output)
```

Here's a sample output:

```
A large language model is a type of AI system trained on vast amounts of text data to understand and generate human language. It learns statistical patterns across billions of parameters, enabling it to answer questions, summarise content, write code, and more.
```

agent.run_sync(...) sends the prompt and blocks until the response arrives. The .output attribute holds the result, which is a plain string for now. In async applications, use await agent.run(...) instead. The API surface is the same.

Getting Structured, Validated Outputs

A string response is fine for simple Q&A. Most production applications, however, need the LLM to return data in a shape your code can consume directly: a typed object rather than a blob of text to parse. Pydantic AI handles this through the output_type argument. The structured output docs give a detailed overview.

Start by defining a Pydantic model that describes the data you want back:

```python
from pydantic import BaseModel, Field


class JobPosting(BaseModel):
    job_title: str
    company_name: str
    required_skills: list[str] = Field(description="Technical skills explicitly stated")
    seniority_level: str = Field(description="e.g. Junior, Mid-level, Senior, Lead")
    is_remote: bool
```

Each field maps directly to something you expect the LLM to extract. Field(description=...) annotations give the model extra hints, which helps reduce validation retries.
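The descriptions take effect through the schema the model receives. Pydantic generates that schema for you, and JobPosting.model_json_schema() returns it. The stdlib sketch below only approximates the idea by reading descriptions from dataclass field metadata. The simple_schema helper and the JobPostingSketch class are hypothetical, and the output is a simplified approximation rather than Pydantic's exact schema.

```python
import dataclasses
import json
from typing import get_args, get_origin, get_type_hints

# Simplified mapping from Python types to JSON Schema type names
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def _json_type(tp) -> dict:
    if get_origin(tp) is list:
        (item,) = get_args(tp)
        return {"type": "array", "items": _json_type(item)}
    return {"type": _JSON_TYPES[tp]}


def simple_schema(cls) -> dict:
    """Build a minimal JSON-Schema-like dict from a dataclass (illustrative only)."""
    hints = get_type_hints(cls)
    properties = {}
    for f in dataclasses.fields(cls):
        prop = _json_type(hints[f.name])
        if "description" in f.metadata:
            prop["description"] = f.metadata["description"]
        properties[f.name] = prop
    return {"type": "object", "properties": properties, "required": list(properties)}


@dataclasses.dataclass
class JobPostingSketch:
    job_title: str
    company_name: str
    required_skills: list[str] = dataclasses.field(
        metadata={"description": "Technical skills explicitly stated"}
    )
    seniority_level: str = dataclasses.field(
        metadata={"description": "e.g. Junior, Mid-level, Senior, Lead"}
    )
    is_remote: bool


schema = simple_schema(JobPostingSketch)
print(json.dumps(schema["properties"]["seniority_level"]))
# {"type": "string", "description": "e.g. Junior, Mid-level, Senior, Lead"}
```

The description travels with the field into the schema. As a result, the model sees "e.g. Junior, Mid-level, Senior, Lead" next to seniority_level and does not have to guess the expected vocabulary.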

Next, create the agent with output_type set to your model and run it against some raw text:

```python
from pydantic_ai import Agent

agent = Agent(
    "openai:gpt-4o-mini",
    output_type=JobPosting,
    instructions="Extract structured job posting info. Only include what's explicitly stated.",
)

result = agent.run_sync("""
We're hiring a Senior Python Engineer at CoolData Inc.
The role is fully remote. Required: FastAPI, PostgreSQL, Docker.
Kubernetes is a plus.
""")

posting = result.output
print(posting.job_title, posting.seniority_level, posting.is_remote)
```

You should see output similar to this:

```
Senior Python Engineer Senior True
```

You can also inspect the full validated object like so:

```python
print(posting.model_dump())
```

This outputs:

```
{'job_title': 'Senior Python Engineer', 'company_name': 'CoolData Inc.', 'required_skills': ['FastAPI', 'PostgreSQL', 'Docker'], 'seniority_level': 'Senior', 'is_remote': True}
```

When output_type is set, Pydantic AI converts your model's fields into a JSON schema and sends it to the model with the prompt. It validates the response when it arrives. If any field is missing or has the wrong type, the framework retries automatically before raising an error.
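The validate-then-retry cycle can be sketched in plain Python. This is not Pydantic AI's implementation. fake_model is a hypothetical scripted stand-in that first returns a reply with a wrong type and then a valid one. validate_job is a hand-written check that does the job Pydantic normally does. The sketch shows that the validation error is sent back to the model on the next attempt.

```python
import json

seen_messages: list[str] = []


def validate_job(raw: str) -> dict:
    """Hand-written stand-in for Pydantic validation; raises ValueError on bad data."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if not isinstance(data.get("job_title"), str):
        raise ValueError("job_title: expected a string")
    if not isinstance(data.get("is_remote"), bool):
        raise ValueError("is_remote: expected a boolean")
    return data


def run_with_retries(model, prompt: str, max_retries: int = 2) -> dict:
    """Call the model, validate its reply, and retry with the error message on failure."""
    message = prompt
    for _ in range(max_retries + 1):
        raw = model(message)
        try:
            return validate_job(raw)
        except ValueError as exc:
            # Feed the validation error back so the model can correct itself
            message = f"{prompt}\n\nYour last reply was invalid: {exc}. Please fix it."
    raise RuntimeError(f"no valid output after {max_retries + 1} attempts")


# Hypothetical scripted model: the first reply has the wrong type, the second is valid
_replies = iter([
    '{"job_title": "Senior Python Engineer", "is_remote": "yes"}',
    '{"job_title": "Senior Python Engineer", "is_remote": true}',
])


def fake_model(message: str) -> str:
    seen_messages.append(message)
    return next(_replies)


result = run_with_retries(fake_model, "Extract the job posting.")
print(result)  # {'job_title': 'Senior Python Engineer', 'is_remote': True}
```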

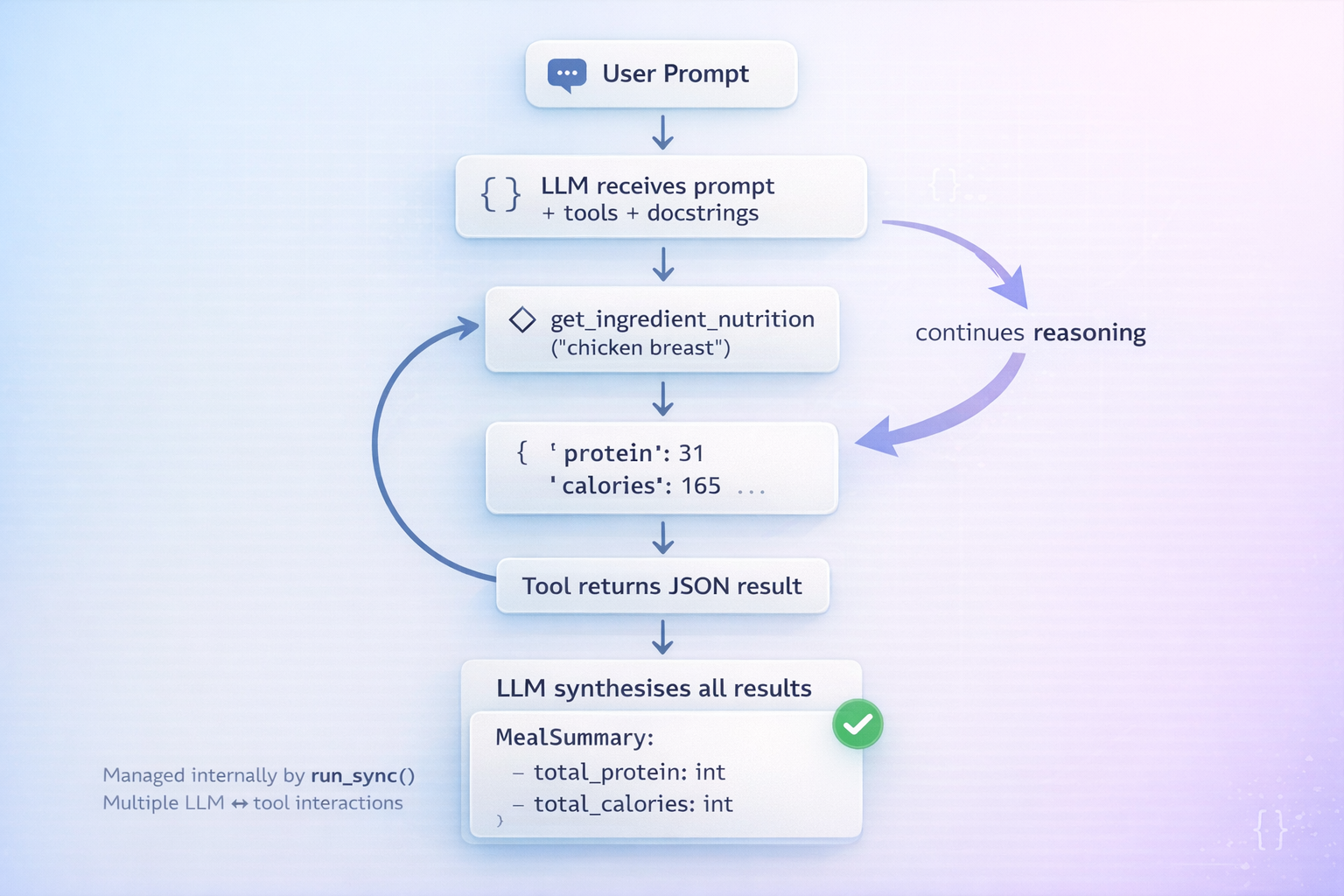

Giving Your Agent Tools

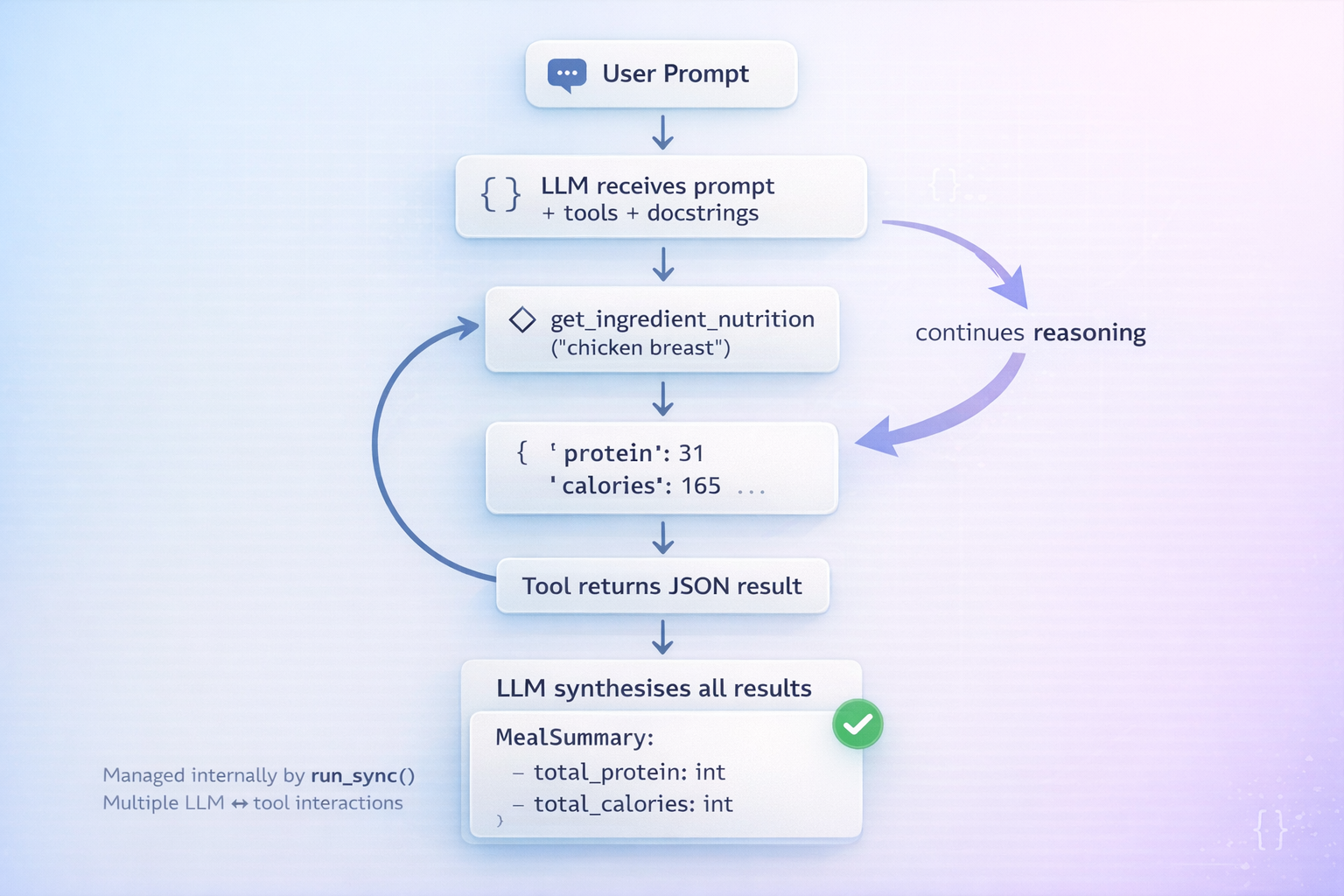

On their own, language models have no access to the outside world. Tools bridge this gap. You register Python functions that the LLM can invoke during its reasoning cycle. The LLM receives the results and continues reasoning before it produces its final output.
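At its core, the reasoning cycle is a loop. On each turn, the model either requests a tool call or returns a final answer. The stdlib sketch below shows only that control flow and rests on two assumptions. scripted_model is a hypothetical model that follows a fixed script, and TOOLS is a plain dict standing in for a tool registry. Real frameworks, Pydantic AI included, add message formats, schemas, and validation on top of this.

```python
from dataclasses import dataclass
from typing import Callable, Union


@dataclass
class ToolCall:
    name: str
    args: dict


@dataclass
class FinalAnswer:
    text: str


def lookup_calories(ingredient: str) -> int:
    # Toy tool backed by a tiny in-memory table
    return {"broccoli": 55, "brown rice": 216}[ingredient]


TOOLS: dict[str, Callable[..., object]] = {"lookup_calories": lookup_calories}


def run_agent(model: Callable[[list], Union[ToolCall, FinalAnswer]], prompt: str, max_steps: int = 5) -> str:
    history: list = [("user", prompt)]
    for _ in range(max_steps):
        step = model(history)
        if isinstance(step, FinalAnswer):
            return step.text
        # Execute the requested tool and append its result for the next model turn
        result = TOOLS[step.name](**step.args)
        history.append(("tool", step.name, result))
    raise RuntimeError("agent did not finish within max_steps")


def scripted_model(history: list) -> Union[ToolCall, FinalAnswer]:
    """Hypothetical model: asks for each ingredient once, then sums the tool results."""
    tool_results = [entry[2] for entry in history if entry[0] == "tool"]
    pending = ["broccoli", "brown rice"][len(tool_results):]
    if pending:
        return ToolCall("lookup_calories", {"ingredient": pending[0]})
    return FinalAnswer(f"Total: {sum(tool_results)} kcal")


answer = run_agent(scripted_model, "Calories in broccoli and brown rice?")
print(answer)  # Total: 271 kcal
```

The max_steps guard stops a model that keeps requesting tools from looping forever.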

First, define the data source and the output model the agent will return:

```python
import json

from pydantic import BaseModel
from pydantic_ai import Agent

# Stand-in for a real data source; the lookup tool treats values as per 100g
NUTRITION_DB = {
    "chicken breast": {"calories": 165, "protein_g": 31, "carbs_g": 0, "fat_g": 3.6},
    "brown rice": {"calories": 216, "protein_g": 5, "carbs_g": 45, "fat_g": 1.8},
    "broccoli": {"calories": 55, "protein_g": 3.7, "carbs_g": 11, "fat_g": 0.6},
    "olive oil": {"calories": 119, "protein_g": 0, "carbs_g": 0, "fat_g": 13.5},
}


class MealSummary(BaseModel):
    total_calories: int
    total_protein_g: float
    total_carbs_g: float
    total_fat_g: float
    health_verdict: str
    advice: str
```

Here, NUTRITION_DB stands in for any external data source, such as a real database or an API. MealSummary is what we want the agent to return after it reasons over the tool results.

Now create the agent and register a lookup tool with @agent.tool_plain:

```python
agent = Agent(
    "openai:gpt-4o-mini",
    output_type=MealSummary,
    instructions="Use tools to look up ingredient data, compute totals, and give a verdict.",
)


@agent.tool_plain
def get_ingredient_nutrition(ingredient: str) -> str:
    """
    Look up calories, protein, carbs, and fat per 100g for a single ingredient.
    Returns an error message if the ingredient is not found in the database.
    """
    data = NUTRITION_DB.get(ingredient.lower().strip())
    if data:
        return json.dumps({"ingredient": ingredient, **data})
    return f"Not found. Available: {', '.join(NUTRITION_DB)}"
```

@agent.tool_plain is for functions that need no access to the run context, only their own arguments. You must write the docstring, because the LLM reads it to decide when to call the tool and how to interpret the result. If the docstring is vague or missing, the model may call the tool at the wrong time.
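The stdlib sketch below shows which parts of a function reach the model. It turns a function's signature, type hints, and docstring into a tool description. Pydantic AI builds its own tool schemas. describe_tool and the shape of its output are hypothetical simplifications.

```python
import inspect
from typing import get_type_hints

_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(func) -> dict:
    """Build a simplified tool description from a function (illustrative only)."""
    hints = get_type_hints(func)
    properties = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        properties[name] = {"type": _JSON_TYPES[hints[name]]}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def get_ingredient_nutrition(ingredient: str) -> str:
    """Look up calories, protein, carbs, and fat per 100g for a single ingredient."""
    return "{}"


print(describe_tool(get_ingredient_nutrition)["description"])
```

A function without a docstring would produce an empty description here. The model would then have only the name and parameter types to decide when to call it.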

Giving your agent tools in Pydantic AI

Finally, run the agent:

```python
result = agent.run_sync(
    "Analyse: 200g chicken breast, 150g brown rice, 100g broccoli, 10g olive oil."
)
print(result.output.model_dump())
```

Here's a sample output:

```
{'total_calories': 662, 'total_protein_g': 75.55, 'total_carbs_g': 101.5, 'total_fat_g': 17.25, 'health_verdict': 'Well-balanced, high-protein meal.', 'advice': 'Good post-workout option. Consider adding healthy fats like avocado if increasing caloric intake.'}
```

The agent calls get_ingredient_nutrition once per ingredient, accumulates the results, computes totals, and returns a validated MealSummary. Each tool call adds a round-trip with the LLM. Keep tool functions lightweight and scope their docstrings tightly.

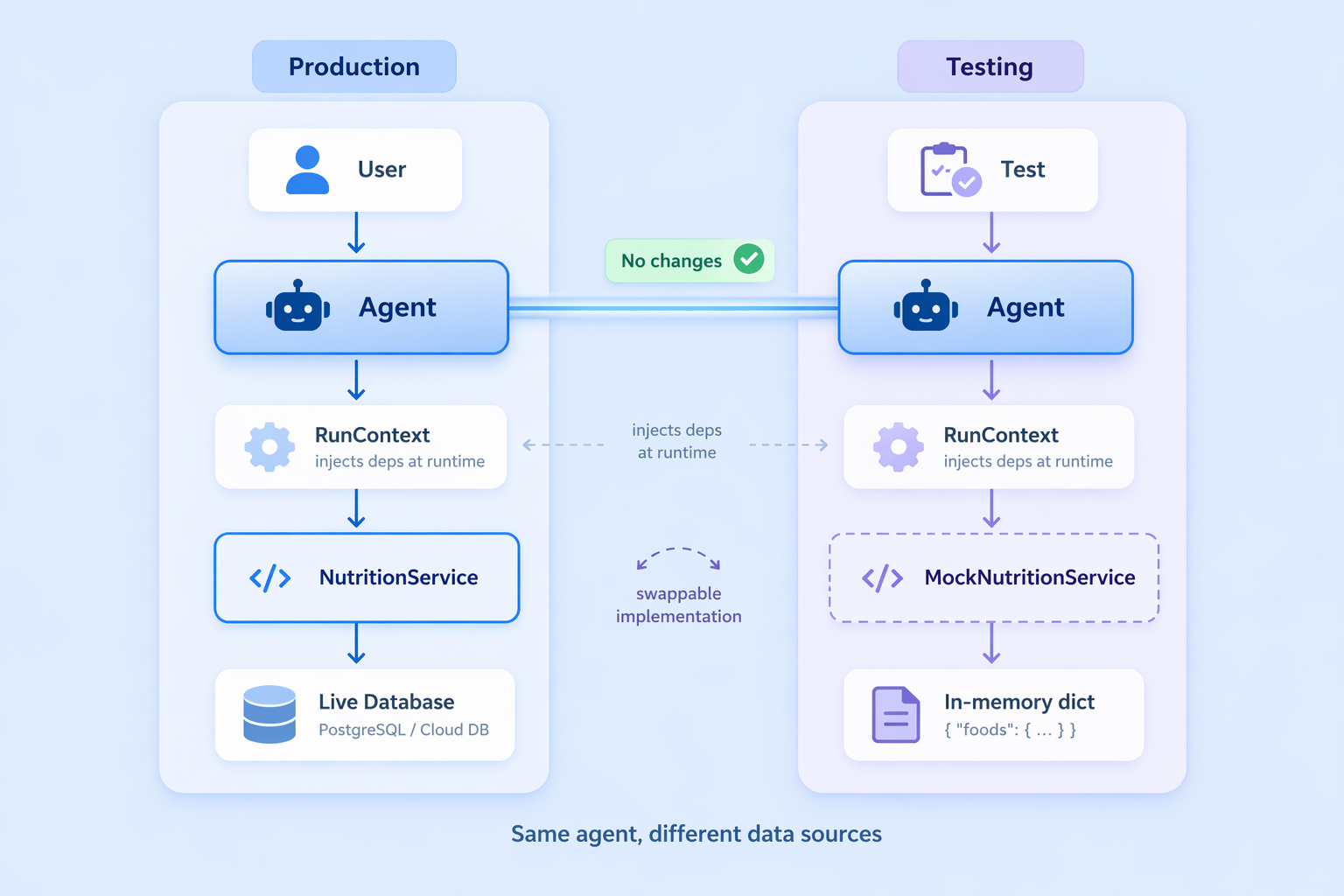

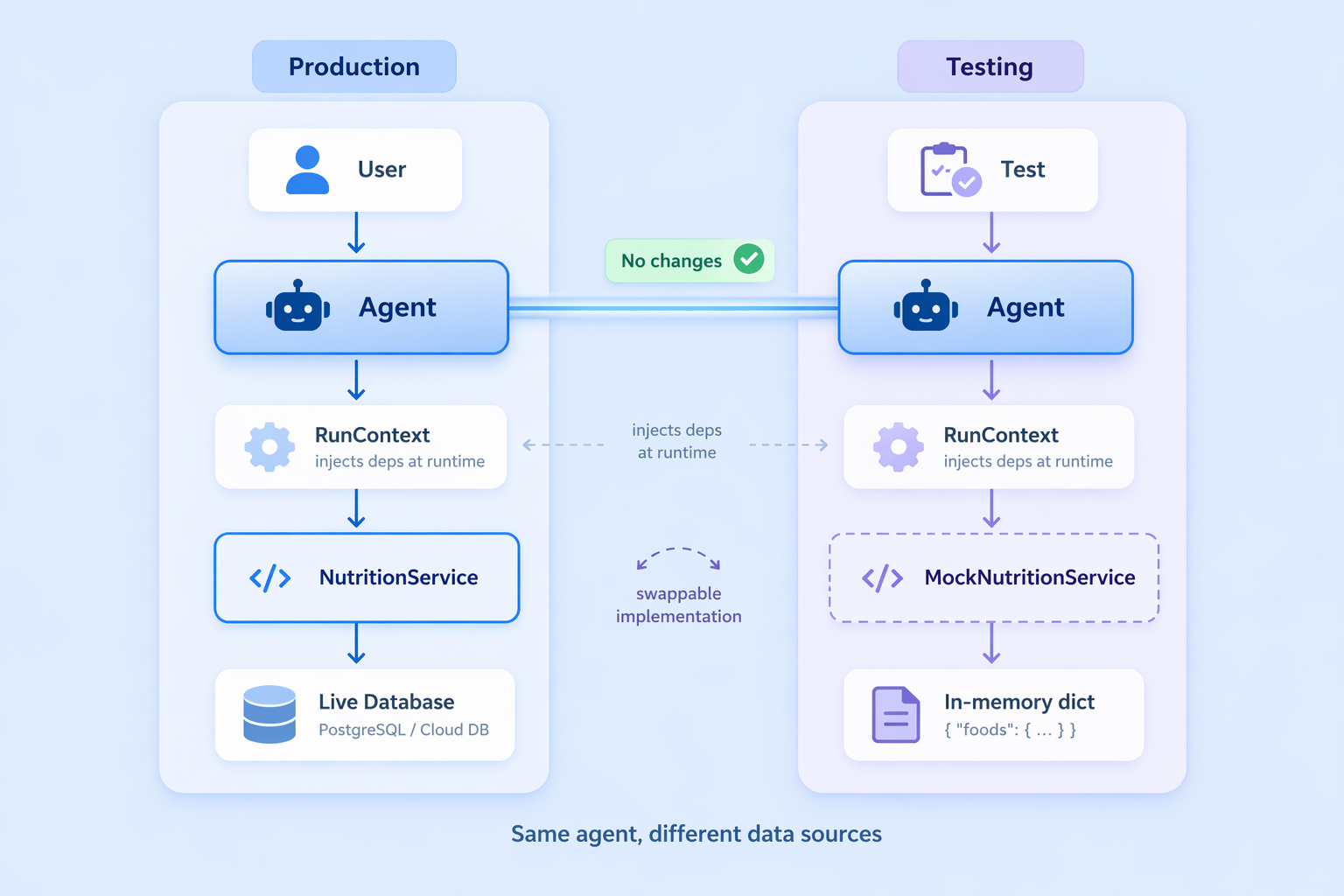

Dependency Injection in Practice

Hardcoding the nutrition database in the module works for demos. In production, however, your agent needs access to things created at runtime, such as a live database connection, an authenticated API client, or user session data. Pydantic AI's dependency injection pattern handles this cleanly through a typed RunContext.

Start by wrapping the data source in a service class:

```python
from dataclasses import dataclass

from pydantic_ai import Agent, RunContext


@dataclass
class NutritionService:
    database: dict

    def lookup(self, ingredient: str) -> dict | None:
        return self.database.get(ingredient.lower().strip())

    def all_ingredients(self) -> list[str]:
        return list(self.database.keys())
```

NutritionService is the contract between the agent and the data layer. In production it would hold a real database connection. In tests you swap in a mock, and the agent needs no changes.
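To make that contract explicit rather than implied by one concrete class, you can use typing.Protocol. This goes beyond the tutorial's approach and is not something Pydantic AI requires. NutritionSource, InMemoryNutrition, FakeNutrition, and describe are hypothetical names. Any object with matching methods satisfies the protocol structurally, without inheritance.

```python
from typing import Optional, Protocol, runtime_checkable


@runtime_checkable
class NutritionSource(Protocol):
    """Hypothetical contract: anything with these two methods qualifies."""

    def lookup(self, ingredient: str) -> Optional[dict]: ...

    def all_ingredients(self) -> list[str]: ...


class InMemoryNutrition:
    def __init__(self, database: dict) -> None:
        self.database = database

    def lookup(self, ingredient: str) -> Optional[dict]:
        return self.database.get(ingredient.lower().strip())

    def all_ingredients(self) -> list[str]:
        return list(self.database)


class FakeNutrition:
    """Test double that returns the same record for every ingredient."""

    def lookup(self, ingredient: str) -> Optional[dict]:
        return {"calories": 100, "protein_g": 10, "carbs_g": 10, "fat_g": 5}

    def all_ingredients(self) -> list[str]:
        return ["anything"]


def describe(source: NutritionSource, ingredient: str) -> str:
    # Code written against the protocol never sees which implementation it got
    data = source.lookup(ingredient)
    return f"{ingredient}: {data['calories']} kcal" if data else f"{ingredient}: not found"


real = InMemoryNutrition({"broccoli": {"calories": 55, "protein_g": 3.7, "carbs_g": 11, "fat_g": 0.6}})
print(describe(real, "Broccoli"))         # Broccoli: 55 kcal
print(describe(FakeNutrition(), "kale"))  # kale: 100 kcal
```

You could use a protocol like this as the deps_type annotation. The production service and the test double would then share one checked interface.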

Dependency injection in Pydantic AI

Declare the dependency type on the agent, then update the tool to accept a RunContext:

```python
agent = Agent(
    "openai:gpt-4o-mini",
    output_type=MealSummary,
    deps_type=NutritionService,
    instructions="Use tools to compute meal totals and provide a verdict.",
)


@agent.tool
def get_ingredient_nutrition(ctx: RunContext[NutritionService], ingredient: str) -> str:
    """Look up nutritional info (per 100g) for a single ingredient."""
    data = ctx.deps.lookup(ingredient)
    if data:
        return json.dumps({"ingredient": ingredient, **data})
    return f"Not found. Available: {', '.join(ctx.deps.all_ingredients())}"
```

Use @agent.tool (not tool_plain) when the function needs the run context. ctx.deps holds the injected service instance, fully typed.
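The two decorators differ in one respect: whether the framework passes a context object as the tool's first argument. The sketch below imitates that with a hypothetical MiniRunContext and a MiniAgent that records which tools want the context. It illustrates the pattern only and is not Pydantic AI's RunContext or registration code.

```python
from dataclasses import dataclass
from typing import Callable, Generic, TypeVar

DepsT = TypeVar("DepsT")


@dataclass
class MiniRunContext(Generic[DepsT]):
    """Hypothetical stand-in for a run context carrying injected dependencies."""
    deps: DepsT


class MiniAgent(Generic[DepsT]):
    def __init__(self) -> None:
        # name -> (function, whether it takes the context as its first argument)
        self._tools: dict[str, tuple[Callable, bool]] = {}

    def tool(self, func: Callable) -> Callable:
        self._tools[func.__name__] = (func, True)
        return func

    def tool_plain(self, func: Callable) -> Callable:
        self._tools[func.__name__] = (func, False)
        return func

    def call_tool(self, name: str, deps: DepsT, **kwargs):
        func, takes_ctx = self._tools[name]
        if takes_ctx:
            # Wrap the dependencies in a context and inject it as the first argument
            return func(MiniRunContext(deps=deps), **kwargs)
        return func(**kwargs)


agent: MiniAgent[dict] = MiniAgent()


@agent.tool
def lookup(ctx: MiniRunContext[dict], ingredient: str) -> str:
    return str(ctx.deps.get(ingredient, "not found"))


@agent.tool_plain
def shout(text: str) -> str:
    return text.upper()


deps = {"broccoli": 55}
print(agent.call_tool("lookup", deps, ingredient="broccoli"))  # 55
print(agent.call_tool("shout", deps, text="done"))             # DONE
```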

Inject the service at call time by passing it to deps:

```python
service = NutritionService(database=NUTRITION_DB)
result = agent.run_sync("Analyse: 150g chicken breast, 200g brown rice.", deps=service)
```

The payoff is clean, isolated testing. You can swap in a mock without modifying the agent definition at all:

```python
mock_service = NutritionService(
    database={"test item": {"calories": 100, "protein_g": 10, "carbs_g": 10, "fat_g": 5}}
)

# override() supplies the mock deps to every run inside this block
with agent.override(deps=mock_service):
    result = agent.run_sync("Analyse 100g test item.")
    assert result.output.total_calories == 100
```

The agent neither knows nor cares where the data comes from. The NutritionService interface is the only contract.
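The override idea is a context manager that swaps a value in and restores it on exit. Here is a stdlib sketch using a hypothetical DepsHolder class. It shows the behavior the test above relies on, where the mock applies only inside the with block. It does not reproduce how Agent.override is implemented.

```python
from contextlib import contextmanager
from typing import Any, Iterator, Optional


class DepsHolder:
    """Hypothetical object whose runs use an overridable dependency."""

    def __init__(self) -> None:
        self._override: Optional[Any] = None

    @contextmanager
    def override(self, deps: Any) -> Iterator[None]:
        previous = self._override
        self._override = deps
        try:
            yield
        finally:
            # Restore the previous state even if the block raises
            self._override = previous

    def run(self, deps: Any = None) -> Any:
        # An active override wins over whatever the caller passed in
        return self._override if self._override is not None else deps


holder = DepsHolder()
with holder.override("mock"):
    print(holder.run(deps="real"))  # mock
print(holder.run(deps="real"))      # real
```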

Using Built-in Capabilities in Pydantic AI

Pydantic AI ships with composable capabilities that extend your agent without cluttering the constructor. Pass them through the capabilities argument. They work with any supported provider.

Web search gives the agent live internet access. For finer control over domains and usage limits, see the built-in tools docs:

```python
from pydantic_ai import Agent
from pydantic_ai.capabilities import WebSearch

agent = Agent("openai:gpt-4o-mini", capabilities=[WebSearch()])
result = agent.run_sync("What is the current price of gold?")
```

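Thinking enables step-by-step reasoning before the final answer, which helps with complex or ambiguous tasks. The effort levels "low", "medium", and "high" map to each provider's native format. Reasoning settings only take effect on models that support them, and gpt-4o-mini is not a reasoning model, so in practice you would pair Thinking with a reasoning-capable model. See the thinking docs for provider-specific details: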

```python
from pydantic_ai import Agent
from pydantic_ai.capabilities import Thinking

agent = Agent("openai:gpt-4o-mini", capabilities=[Thinking(effort="high")])
result = agent.run_sync("Design a schema for a multi-tenant SaaS billing system.")
```

Capabilities compose cleanly. To combine them, pass both in the list:

```python
from pydantic_ai import Agent
from pydantic_ai.capabilities import Thinking, WebSearch

agent = Agent(
    "openai:gpt-4o-mini",
    instructions="You are a research assistant.",
    capabilities=[Thinking(effort="high"), WebSearch()],
)
result = agent.run_sync("What were the biggest AI research breakthroughs this month?")
```

The agent reasons about what to search for, fetches live results, and synthesizes them into a single response.

Summary and Next Steps

Here is what you built:

- A basic agent with run_sync and a model string, followed by structured output with output_type, turning LLM responses into validated Python objects

- Custom function tools using @agent.tool_plain and @agent.tool, with docstring-driven dispatch

- Runtime dependency injection via RunContext and deps_type, keeping agent definitions decoupled from data sources

- Built-in capabilities for web search and extended thinking, composed via the capabilities parameter

To go deeper, you can explore advanced toolsets and MCP server integration through function tools, along with the full suite of built-in tools. Thinking configurations let you fine-tune reasoning behavior for each provider, and dependency injection patterns make your system easier to test and maintain. If you pair Pydantic AI with Logfire, you also get real-time observability across every LLM call, tool invocation, and validation retry. This gives you clear insight into how your system behaves in practice.
