NVIDIA said it has achieved a record large language model (LLM) inference speed, asserting that an NVIDIA DGX B200 node with eight NVIDIA Blackwell GPUs achieved more than 1,000 tokens per second (TPS) per user on the 400-billion-parameter Llama 4 Maverick model.

NVIDIA said the model is the largest and most powerful in the Llama 4 collection and that the speed was independently measured by the AI benchmarking service Artificial Analysis.

NVIDIA added that Blackwell reaches 72,000 TPS/server in its highest-throughput configuration.

The company said it made software optimizations using TensorRT-LLM and trained a speculative decoding draft model using EAGLE-3 techniques. Combining these approaches, NVIDIA has achieved a 4x speed-up relative to the best prior Blackwell baseline, NVIDIA said.
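For readers unfamiliar with speculative decoding, the following is a minimal sketch of the general draft-and-verify idea in its simplest greedy form. It is not NVIDIA's implementation and does not reproduce EAGLE-3; `target`, `draft`, and the toy models are hypothetical stand-ins, and a production system would verify all drafted positions in one batched forward pass of the large model rather than calling it per position.

```python
from typing import Callable, List

Token = int
NextToken = Callable[[List[Token]], Token]  # hypothetical "model": context -> next token


def greedy_decode(model: NextToken, prompt: List[Token], max_new: int) -> List[Token]:
    """Plain autoregressive decoding, one model call per generated token."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(model(out))
    return out


def speculative_decode(target: NextToken, draft: NextToken,
                       prompt: List[Token], max_new: int, k: int = 3) -> List[Token]:
    """Greedy draft-and-verify loop; the result matches greedy_decode(target, ...)."""
    out = list(prompt)
    while len(out) - len(prompt) < max_new:
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: List[Token] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        # 2. The target model checks each drafted position in order.
        accepted: List[Token] = []
        for i in range(k):
            expected = target(out + proposal[:i])
            accepted.append(expected)  # the target's token is always kept
            if expected != proposal[i]:
                break  # first mismatch: discard the rest of the draft
        out.extend(accepted)
    return out[:len(prompt) + max_new]


# Toy models (assumptions for illustration only): the target counts upward
# modulo 10, and the draft agrees except right after a 4.
def toy_target(ctx: List[Token]) -> Token:
    return (ctx[-1] + 1) % 10


def toy_draft(ctx: List[Token]) -> Token:
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10


spec_result = speculative_decode(toy_target, toy_draft, [0], max_new=8)
greedy_result = greedy_decode(toy_target, [0], max_new=8)
```

The speed-up comes from the acceptance rate: whenever the draft model guesses correctly, several tokens are committed for one verification step of the large model, while the output stays identical to what the large model would have produced on its own.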

“The optimizations described below significantly increase performance while preserving response accuracy,” NVIDIA said in a blog posted yesterday. “We leveraged FP8 data types for GEMMs, Mixture of Experts (MoE), and Attention operations to reduce the model size and make use of the high FP8 throughput possible with Blackwell Tensor Core technology. Accuracy when using the FP8 data format matches that of Artificial Analysis BF16 across many metrics….”

“Most generative AI application contexts require a balance of throughput and latency, ensuring that many customers can simultaneously enjoy a ‘good enough’ experience. However, for critical applications that must make important decisions at speed, minimizing latency for a single client becomes paramount. As the TPS/user record shows, Blackwell hardware is the best choice for any task, whether you need to maximize throughput, balance throughput and latency, or minimize latency for a single user (the focus of this post).”
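As a rough back-of-envelope illustration of the figures quoted above, the sketch below works out what 1,000 TPS per user means per token and how much weight storage FP8 saves over BF16. It assumes every one of the 400 billion parameters is stored in a single format and ignores the KV cache, activations, and quantization scale factors, so the numbers are upper-level estimates rather than measured values.

```python
PARAMS = 400e9        # parameter count quoted in the article
BYTES_BF16 = 2        # a BF16 value occupies 2 bytes
BYTES_FP8 = 1         # an FP8 value occupies 1 byte
TPS_PER_USER = 1000   # tokens per second per user quoted in the article


def weight_footprint_gb(params: float, bytes_per_param: int) -> float:
    """Weight storage in gigabytes (10**9 bytes), weights only."""
    return params * bytes_per_param / 1e9


def per_token_latency_ms(tokens_per_second: float) -> float:
    """Average time budget per generated token, in milliseconds."""
    return 1000.0 / tokens_per_second


bf16_gb = weight_footprint_gb(PARAMS, BYTES_BF16)
fp8_gb = weight_footprint_gb(PARAMS, BYTES_FP8)
latency_ms = per_token_latency_ms(TPS_PER_USER)

print(f"BF16 weights: {bf16_gb:.0f} GB, FP8 weights: {fp8_gb:.0f} GB")
print(f"Per-token budget at {TPS_PER_USER} TPS/user: {latency_ms:.1f} ms")
```

Halving the bytes per weight halves the data that must be read from memory, which matters because a budget of about one millisecond per token leaves little room for memory traffic.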

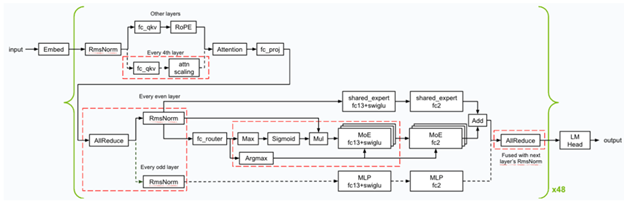

Below is an overview of the kernel optimizations and fusions (denoted in red-dashed squares) NVIDIA applied during inference. NVIDIA implemented several low-latency GEMM kernels and applied various kernel fusions (like FC13 + SwiGLU, FC_QKV + attn_scaling and AllReduce + RMSnorm) to make sure Blackwell excels in the minimum-latency scenario.

Overview of the kernel optimizations & fusions used for Llama 4 Maverick
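To make the idea of kernel fusion concrete, here is a small CPU-side sketch of what an "FC13 + SwiGLU" fusion computes. It assumes, as a reading not confirmed by the article, that FC13 means the gate (FC1) and up (FC3) projections of a gated MLP stacked into one weight matrix. All function names are hypothetical, and this is plain Python rather than a GPU kernel.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # row-major: one row per output feature


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def silu(v: float) -> float:
    """SiLU (swish) activation: v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


def swiglu_unfused(w1: Matrix, w3: Matrix, x: Vector) -> Vector:
    """Three separate steps, each materializing an intermediate vector."""
    gate = [dot(row, x) for row in w1]   # FC1
    up = [dot(row, x) for row in w3]     # FC3
    return [silu(g) * u for g, u in zip(gate, up)]


def swiglu_fused(w13: Matrix, x: Vector) -> Vector:
    """One pass over a stacked [w1; w3] matrix, activation applied in place."""
    half = len(w13) // 2
    out: Vector = []
    for r in range(half):
        g = dot(w13[r], x)           # gate row
        u = dot(w13[half + r], x)    # matching up row
        out.append(silu(g) * u)      # no intermediate gate/up vectors stored
    return out


w1 = [[0.2, -0.5, 1.0], [1.5, 0.3, -0.7]]
w3 = [[-1.0, 0.4, 0.6], [0.8, 0.8, -0.2]]
x = [0.5, -1.0, 2.0]

unfused = swiglu_unfused(w1, w3, x)
fused = swiglu_fused(w1 + w3, x)  # stacking rows builds the FC13 matrix
```

Both paths give the same result. On a GPU the fused form avoids writing the intermediate vectors to memory and launching separate kernels, which is where the latency saving would come from.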

NVIDIA optimized the CUDA kernels for GEMMs, MoE, and Attention operations to achieve the best performance on the Blackwell GPUs.

- Used spatial partitioning (also known as warp specialization) and designed the GEMM kernels to load data from memory efficiently, maximizing utilization of the enormous memory bandwidth that the NVIDIA DGX system offers: 64TB/s HBM3e bandwidth in total.

- Shuffled the GEMM weight in a swizzled format to allow a better layout when loading the computation result from Tensor Memory after the matrix multiplication computations using Blackwell’s fifth-generation Tensor Cores.

- Optimized the performance of the attention kernels by dividing the computations along the sequence length dimension of the K and V tensors, allowing computations to run in parallel across multiple CUDA thread blocks. In addition, NVIDIA used distributed shared memory to efficiently reduce results across the thread blocks in the same thread block cluster without the need to access global memory.
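The attention optimization in the last bullet, splitting work along the K/V sequence dimension and then reducing the partial results, can be sketched for a single query vector as follows. This is an illustrative CPU version under stated assumptions and not NVIDIA's kernel: each chunk stands in for one thread block, the final loop stands in for the cross-block reduction, the usual 1/sqrt(d) score scaling is omitted for brevity, and all names are hypothetical.

```python
import math
from typing import List, Tuple

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def partial_attention(q: Vector, keys: List[Vector],
                      values: List[Vector]) -> Tuple[float, float, Vector]:
    """Work of one chunk: local max, local softmax sum, unnormalized output."""
    scores = [dot(q, k) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    acc = [sum(w * v[d] for w, v in zip(weights, values))
           for d in range(len(values[0]))]
    return m, sum(weights), acc


def attention_reference(q: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Ordinary softmax attention over the whole sequence at once."""
    _, total, acc = partial_attention(q, keys, values)
    return [a / total for a in acc]


def attention_split_kv(q: Vector, keys: List[Vector], values: List[Vector],
                       num_chunks: int) -> Vector:
    """Split K and V along the sequence, then merge the partial results."""
    size = math.ceil(len(keys) / num_chunks)
    partials = [partial_attention(q, keys[s:s + size], values[s:s + size])
                for s in range(0, len(keys), size)]
    m_global = max(m for m, _, _ in partials)
    total = 0.0
    out = [0.0] * len(values[0])
    for m, l, acc in partials:
        scale = math.exp(m - m_global)  # rescale each chunk to the global max
        total += l * scale
        for d, a in enumerate(acc):
            out[d] += a * scale
    return [o / total for o in out]


q = [0.5, -1.0]
keys = [[1.0, 0.0], [0.0, 1.0], [2.0, -1.0], [-1.0, 0.5], [0.3, 0.3]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]

full = attention_reference(q, keys, values)
split = attention_split_kv(q, keys, values, num_chunks=2)
```

Each chunk only needs to hand over its maximum, its softmax sum, and its partial output, so the merge step is small. That is what makes a reduction through shared memory within a thread block cluster attractive compared with a round trip through global memory.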

The rest of the blog can be found here.