As we head into the final quarter of 2025, it’s time to step back and look at the trends that will shape data and AI in 2026.

While the headlines might focus on the latest model releases and benchmark wars, those are far from the most transformative developments on the ground. The real change is playing out in the trenches, where data scientists, data + AI engineers, and AI/ML teams are putting these complex systems and technologies into production. And unsurprisingly, the push toward production AI, along with the headwinds that come with it, is steering the ship.

Here are the ten trends defining this evolution, and what they mean heading into the final quarter of 2025.

1. “Data + AI leaders” are on the rise

If you’ve been on LinkedIn at all recently, you might have noticed a suspicious rise in the number of data + AI titles in your newsfeed, even among your own team members.

No, there wasn’t a restructuring you didn’t know about.

While this is largely a voluntary change among those traditionally categorized as data or AI/ML professionals, the shift in titles reflects a reality on the ground that Monte Carlo has been discussing for almost a year now: data and AI are no longer two separate disciplines.

From the resources and skills they require to the problems they solve, data and AI are two sides of the same coin. And that reality is having a demonstrable impact on the way both teams and technologies have been evolving in 2025 (as you’ll soon see).

2. Conversational BI is hot, but it needs a temperature check

Data democratization has been trending in one form or another for nearly a decade now, and conversational BI is the latest chapter in that story.

What sets conversational BI apart from every other BI tool is the speed and elegance with which it promises to deliver on that utopian vision, even for the most non-technical domain users.

The premise is simple: if you can ask for it, you can access it. It’s a win-win for owners and users alike…in theory. The challenge (as with all democratization efforts) isn’t the tool itself; it’s the reliability of the thing you’re democratizing.

The only thing worse than bad insights is bad insights delivered quickly. Connect a chat interface to an ungoverned database, and you won’t just accelerate access; you’ll accelerate the consequences.

3. Context engineering is becoming a core discipline

Input costs for AI models are roughly 300-400x larger than output costs. If your context data is saddled with problems like incomplete metadata, unstripped HTML, or empty vector arrays, your team is going to face massive cost overruns when processing at scale. What’s more, confused or incomplete context is also a major AI reliability issue. Ambiguous product names and poor chunking confuse retrievers, while small changes to prompts or models can lead to dramatically different outputs.

So it’s no surprise that context engineering has become the buzziest buzzword for data + AI teams in mid-2025. Context engineering is the systematic process of preparing, optimizing, and maintaining context data for AI models. Teams that master upstream context monitoring (ensuring a reliable corpus and embeddings before they hit expensive processing jobs) will see much better results from their AI models. But it won’t work in a silo.

The reality is that visibility into the context data alone can’t address AI quality, and neither can AI observability solutions like evaluations. Teams need a comprehensive approach that provides visibility into the full system in production, from the context data to the model and its outputs. A socio-technical approach that brings data + AI together is the only path to reliable AI at scale.
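To make the upstream half of that picture concrete, here is a minimal sketch of pre-processing checks for the three context problems named above: incomplete metadata, unstripped HTML, and empty vector arrays. The `Chunk` layout, the required metadata keys, and the HTML regex are all hypothetical assumptions chosen for illustration. They are not a standard schema, and they are not Monte Carlo’s implementation.

```python
import re
from dataclasses import dataclass, field

# Hypothetical metadata keys every chunk is expected to carry.
REQUIRED_METADATA = {"source", "doc_id", "updated_at"}

# Crude heuristic for leftover HTML tags; an HTML parser would be more robust.
HTML_TAG = re.compile(r"</?[a-zA-Z][^>]*>")


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)
    embedding: list = field(default_factory=list)


def context_issues(chunk: Chunk) -> list[str]:
    """Return the problems found in one context chunk (empty list means clean)."""
    issues = []
    missing = REQUIRED_METADATA - set(chunk.metadata)
    if missing:
        issues.append(f"missing metadata: {sorted(missing)}")
    if HTML_TAG.search(chunk.text):
        issues.append("unstripped HTML")
    if not chunk.embedding:
        issues.append("empty embedding")
    return issues


def split_clean(chunks: list[Chunk]) -> tuple[list[Chunk], dict[int, list[str]]]:
    """Separate clean chunks from flagged ones before an expensive processing job."""
    clean, flagged = [], {}
    for i, chunk in enumerate(chunks):
        problems = context_issues(chunk)
        if problems:
            flagged[i] = problems
        else:
            clean.append(chunk)
    return clean, flagged
```

In this design, flagged chunks would be routed to a fix-up queue rather than embedded or sent to a model. That routing is where any cost savings would come from.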

4. The AI enthusiasm gap widens

The latest MIT report said it all. AI has a value problem. And the blame rests, at least in part, with the executive team.

“We still have a lot of people who believe that AI is magic and will do whatever you want it to do with no thought.”

That’s a real quote, and it echoes a common story for data + AI teams:

- An executive who doesn’t understand the technology sets the priority

- The project fails to deliver value

- The pilot is scrapped

- Rinse and repeat

Companies are spending billions on AI pilots with no clear understanding of where or how AI will drive impact. That is hurting not only pilot performance but also AI enthusiasm as a whole.

Getting to value needs to be the first, second, and third priority. That means giving the data + AI teams who understand both the technology and the data that will power it the autonomy to address real business problems, along with the resources to make those use cases reliable.

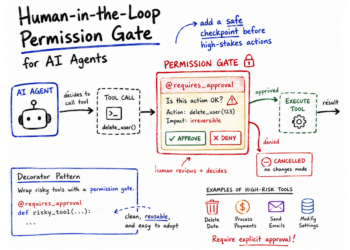

5. Cracking the code on agents vs. agentic workflows

While agentic aspirations have been fueling the hype machine for the last 18 months, the semantic debate between “agentic AI” and “agents” was finally settled on the hallowed ground of LinkedIn’s comments section this summer.

At the heart of the issue is a material difference in the performance and cost of these two seemingly identical but surprisingly divergent tactics.

- Single-purpose agents are workhorses for specific, well-defined tasks where the scope is clear and outcomes are predictable. Deploy them for focused, repetitive work.

- Agentic workflows tackle messy, multi-step processes by breaking them into manageable parts. The trick is breaking big problems into discrete tasks that smaller models can handle, then using larger models to validate and aggregate the results.

For example, Monte Carlo’s Troubleshooting Agent uses an agentic workflow to orchestrate hundreds of sub-agents that investigate the root causes of data + AI quality issues.
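The orchestration pattern described above has three steps: decompose the problem, fan the pieces out to a smaller model, and have a larger model validate and aggregate the findings. The sketch below is a minimal illustration of that pattern, assuming each model is a plain function from prompt to text. `demo_decompose`, `demo_small_model`, and `demo_large_model` are hypothetical stubs standing in for real model calls. None of this is how Monte Carlo’s Troubleshooting Agent is built.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# A "model" here is any function that takes a prompt and returns text.
ModelFn = Callable[[str], str]


def run_agentic_workflow(
    problem: str,
    decompose: Callable[[str], list[str]],
    small_model: ModelFn,
    large_model: ModelFn,
    max_workers: int = 8,
) -> str:
    """Split a problem into subtasks, fan them out to a small model in
    parallel, then hand all findings to a larger model to validate and combine."""
    subtasks = decompose(problem)
    # map() preserves input order, so answers line up with subtasks.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        answers = list(pool.map(small_model, subtasks))
    findings = "\n".join(f"- {task}: {answer}" for task, answer in zip(subtasks, answers))
    prompt = (
        f"Problem: {problem}\n"
        f"Sub-agent findings:\n{findings}\n"
        "Validate each finding and aggregate them into one answer."
    )
    return large_model(prompt)


# --- Hypothetical stubs standing in for real model calls ---
def demo_decompose(problem: str) -> list[str]:
    return [f"freshness of {problem}", f"schema of {problem}", f"volume of {problem}"]


def demo_small_model(task: str) -> str:
    return "column dropped" if task.startswith("schema") else "ok"


def demo_large_model(prompt: str) -> str:
    # Stub "validator": keep only the findings that report a problem.
    findings = [line[2:] for line in prompt.splitlines() if line.startswith("- ")]
    problems = [f for f in findings if not f.endswith(": ok")]
    return "; ".join(problems) or "no issues found"


# prints "schema of orders: column dropped"
print(run_agentic_workflow("orders", demo_decompose, demo_small_model, demo_large_model))
```

Threads suit this sketch because real sub-agent calls would mostly wait on network I/O. A production system would also need retries, timeouts, and cost limits, none of which are shown here.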

6. Embedding quality is in the spotlight, and monitoring is right behind it

Unlike the data products of the past, AI in its various forms isn’t deterministic by nature. What goes in isn’t always what comes out. So defining what good looks like in this context means measuring not just the outputs, but also the systems, code, and inputs that feed them.

Embeddings are one such system.

When embeddings fail to represent the semantic meaning of the source data, the AI will receive the wrong context regardless of how well the vector database or model performs. That is precisely why embedding quality is becoming a mission-critical priority in 2025.

The most common embedding breaks are basic data issues: empty arrays, wrong dimensionality, corrupted vector values, and so on. The problem is that most teams only discover these issues when a response is obviously wrong.

One Monte Carlo customer captured the problem perfectly: “We don’t have any insight into how embeddings are being generated, what the new data is, and how it affects the training process. We’re afraid of switching embedding models because we don’t know how retraining will affect it. Do we have to retrain our models that use this stuff? Do we have to completely start over?”

As the key dimensions of quality and performance come into focus, teams are beginning to define new monitoring strategies to support embeddings in production, covering factors like dimensionality, consistency, and vector completeness, among others.
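The following is a minimal sketch of batch checks for the embedding breaks listed above: empty arrays, wrong dimensionality, and corrupted (NaN or infinite) values. It adds one more assumed check, all-zero vectors, and reports a completeness ratio. The check names and the definition of completeness (the share of vectors with no issues) are illustrative assumptions, not an established metric. Consistency across model versions is a separate concern and is not covered here.

```python
import math
from collections import Counter


def vector_issues(vec: list[float], expected_dim: int) -> list[str]:
    """Return the embedding breaks found in one vector (empty list means healthy)."""
    if not vec:
        return ["empty"]
    issues = []
    if len(vec) != expected_dim:
        issues.append("wrong_dim")
    if any(not math.isfinite(x) for x in vec):
        issues.append("non_finite")  # NaN or +/-inf, i.e. corrupted values
    elif all(x == 0.0 for x in vec):
        issues.append("all_zero")  # assumption: may be a placeholder from a failed call
    return issues


def embedding_report(vectors: list[list[float]], expected_dim: int) -> dict:
    """Summarize a batch: counts per issue plus a vector-completeness ratio."""
    counts = Counter()
    healthy = 0
    for vec in vectors:
        issues = vector_issues(vec, expected_dim)
        counts.update(issues)
        if not issues:
            healthy += 1
    completeness = healthy / len(vectors) if vectors else 1.0
    return {"issues": dict(counts), "completeness": completeness}
```

Running a report like this on every batch as it is written to the vector store would surface these breaks before they show up as wrong answers.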

7. Vector databases need a reality check

Vector databases aren’t new for 2025. What IS new is that data + AI teams are beginning to realize the vector databases they’ve been relying on might not be as reliable as they thought.

Over the last 24 months, vector databases (which store data as high-dimensional vectors that capture semantic meaning) have become the de facto infrastructure for RAG applications. And in recent months, they’ve also become a source of consternation for data + AI teams.

Embeddings drift. Chunking strategies shift. Embedding models get updated. All this change creates silent performance degradation that is often misdiagnosed as hallucination, sending teams down expensive rabbit holes to fix it.

The challenge is that, unlike traditional databases with built-in monitoring, most teams lack the visibility into vector search, embeddings, and agent behavior needed to catch vector problems before they cause damage. This is likely to drive a rise in vector database monitoring, along with other observability solutions aimed at improving response accuracy.
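One simple way to catch the kind of silent drift described above is to compare the centroid of a baseline sample of embeddings with the centroid of a recent sample, and alert when their cosine similarity falls below a threshold. The sketch below assumes this approach. The 0.95 threshold is arbitrary. Centroid comparison only detects shifts in the average direction, so it can miss distribution changes that leave the mean intact.

```python
import math


def centroid(vectors: list[list[float]]) -> list[float]:
    """Element-wise mean of a non-empty batch of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # cosine is undefined for a zero vector; report no similarity
    return dot / (norm_a * norm_b)


def centroid_drift(
    baseline: list[list[float]],
    current: list[list[float]],
    threshold: float = 0.95,
) -> tuple[bool, float]:
    """Flag drift when the batch centroids point in noticeably different directions.

    Both batches must come from the same embedding model: vectors from
    different models live in different spaces and are not comparable.
    """
    similarity = cosine_similarity(centroid(baseline), centroid(current))
    return similarity < threshold, similarity
```

Because vectors from different embedding models are not comparable, a model update calls for re-embedding the baseline sample rather than comparing across models.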

8. Leading model architectures prioritize simplicity over performance

The AI model hosting landscape is consolidating around two clear winners: Databricks and AWS Bedrock. Both platforms are succeeding by embedding AI capabilities directly into existing data infrastructure rather than requiring teams to learn entirely new systems.

Databricks wins with tight integration between model training, deployment, and data processing. Teams can fine-tune models on the same platform where their data lives, eliminating the complexity of moving data between systems. Meanwhile, AWS Bedrock succeeds through breadth and enterprise-grade security, offering access to multiple foundation models from Anthropic, Meta, and others while maintaining strict data governance and compliance standards.

What’s causing others to fall behind? Fragmentation and complexity. Platforms that require extensive custom integration work or force teams to adopt entirely new toolchains are losing to solutions that fit into existing workflows.

Teams are choosing AI platforms based on operational simplicity and data integration capabilities rather than raw model performance. The winners understand that the best model is useless if it’s too complicated to deploy and maintain reliably.

9. Model Context Protocol (MCP) is the MVP

Model Context Protocol (MCP) has emerged as the game-changing “USB-C for AI”: a universal standard that lets AI applications connect to any data source without custom integrations.

Instead of building separate connectors for every database, CRM, or API, teams can use one protocol to give LLMs access to all of them at once. And when models can pull from multiple data sources seamlessly, they deliver faster, more accurate responses.
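MCP messages use JSON-RPC 2.0. A client discovers a server’s tools with a `tools/list` request and invokes one with `tools/call`. The sketch below only builds those two request messages, to make the “one protocol, many sources” idea concrete. It omits the `initialize` handshake, the transport (for example stdio or HTTP), and response handling, all of which the official MCP SDKs take care of. The tool names `query_warehouse` and `lookup_account` are hypothetical.

```python
import json
from typing import Optional


def jsonrpc_request(request_id: int, method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP is built on."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def list_tools_request(request_id: int) -> str:
    # Ask an MCP server which tools it exposes.
    return jsonrpc_request(request_id, "tools/list")


def call_tool_request(request_id: int, name: str, arguments: dict) -> str:
    # Invoke one tool by name; arguments should match the tool's declared input schema.
    return jsonrpc_request(request_id, "tools/call", {"name": name, "arguments": arguments})


# The same client code works against any MCP server; only tool names and arguments differ.
# "query_warehouse" and "lookup_account" are hypothetical tool names.
print(call_tool_request(1, "query_warehouse", {"sql": "SELECT 1"}))
print(call_tool_request(2, "lookup_account", {"account_id": "A-42"}))
```

The design point is that the warehouse and the CRM differ only in the tool names and arguments the server advertises. The message shape the client sends stays the same.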

Early adopters are already reporting major reductions in integration complexity and maintenance work by standardizing on a single MCP implementation that works across their entire data ecosystem.

As a bonus, MCP also standardizes governance and logging, both of which matter for enterprise deployment.

But don’t expect MCP to stay static. Many data and AI leaders expect an Agent Context Protocol (ACP) to emerge within the next year to handle even more complex context-sharing scenarios. Teams that adopt MCP now will be ready for these advances as the standard evolves.

10. Unstructured data is the new gold (but is it fool’s gold?)

Most AI applications rely on unstructured data, such as emails, documents, images, audio files, and support tickets, to provide the rich context that makes AI responses useful.

But while teams can monitor structured data with established tools, unstructured data has long sat in a blind spot. Traditional data quality monitoring can’t handle text files, images, or documents the way it tracks database tables.

Solutions like Monte Carlo’s unstructured data monitoring are closing this gap by bringing automated quality checks to text and image fields across Snowflake, Databricks, and BigQuery.
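As a generic illustration of what automated checks on a text field can look like, here is a minimal sketch that counts null or blank values, suspiciously short values, Unicode replacement characters (U+FFFD, a common sign of a failed decode upstream), and case-insensitive duplicates. The check set and the `min_chars` default are hypothetical choices. This does not reflect how Monte Carlo’s product works, and image fields would need entirely different checks.

```python
from collections import Counter
from typing import Optional


def text_field_checks(values: list[Optional[str]], min_chars: int = 20) -> dict[str, int]:
    """Count simple quality problems in a column of free-text values."""
    counts = {"null_or_blank": 0, "too_short": 0, "replacement_char": 0, "duplicates": 0}
    seen = Counter()
    for value in values:
        if value is None or not value.strip():
            counts["null_or_blank"] += 1
            continue
        text = value.strip()
        if len(text) < min_chars:
            counts["too_short"] += 1
        if "\ufffd" in text:  # U+FFFD usually signals a failed decode upstream
            counts["replacement_char"] += 1
        seen[text.casefold()] += 1
    # Every extra copy of an already-seen value counts as one duplicate.
    counts["duplicates"] = sum(n - 1 for n in seen.values() if n > 1)
    return counts
```

Tracking these counts over time, rather than checking them once, is what would turn them into monitoring.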

Looking ahead, unstructured data monitoring will become as standard as traditional data quality checks. Organizations will implement comprehensive quality frameworks that treat all data, structured and unstructured, as critical assets requiring active monitoring and governance.

Looking ahead to 2026

If 2025 has taught us anything so far, it’s that the teams winning with AI aren’t the ones with the biggest budgets or the flashiest demos. They’re the teams that have figured out how to deliver reliable, scalable, and trustworthy AI in production.

Winners aren’t made in a testing environment. They’re made in the hands of real users. Deliver adoptable AI solutions, and you’ll deliver demonstrable AI value. It’s that simple.