If you have studied causal inference before, you probably already have a solid grasp of the basics, such as the potential outcomes framework, propensity score matching, and basic difference-in-differences. However, foundational methods often break down when they meet real-world challenges. Sometimes the confounders are unmeasured, treatments roll out at different points in time, or effects vary across a population.

This article is geared towards people who have a solid grasp of the fundamentals and are now looking to expand their skill set with more advanced techniques. To make things more relatable and tangible, we’ll use a recurring scenario as a case study: assessing whether a job training program has a positive impact on earnings. This classic causal question is particularly well suited to our purposes, as it is fraught with complexities that arise in real-world situations, such as self-selection, unmeasured ability, and dynamic effects, making it an ideal test case for the advanced methods we’ll be exploring.

“The most important aspect of a statistical analysis is not what you do with the data, but what data you use and how it was collected.”

Andrew Gelman, Jennifer Hill, and Aki Vehtari, Regression and Other Stories

Contents

Introduction

1. Doubly Robust Estimation: Insurance Against Misspecification

2. Instrumental Variables: When Confounders Are Unmeasured

3. Regression Discontinuity: The Credible Quasi-Experiment

4. Difference-in-Differences: Navigating Staggered Adoption

5. Heterogeneous Treatment Effects: Moving Beyond the Average

6. Sensitivity Analysis: The Honest Researcher’s Toolkit

Putting It All Together: A Decision Framework

Closing Thoughts

Part 1: Doubly Robust Estimation

Imagine we’re evaluating a training program where individuals self-select into treatment. To estimate its effect on their earnings, we must account for confounders like age and education. Traditionally, we have two paths: outcome regression (modeling earnings) or propensity score weighting (modeling the probability of treatment). Both paths share a critical vulnerability: if we specify our model incorrectly, e.g., omit an interaction or misspecify a functional form, our estimate of the treatment effect will be biased. Doubly Robust (DR) Estimation, also known as Augmented Inverse Probability Weighting (AIPW), combines both to offer a powerful solution. Our estimate will be consistent if either the outcome model or the propensity model is correctly specified. It uses the propensity model to create balance, while the outcome model clears residual imbalance. If one model is wrong, the other corrects it.

In practice, this involves three steps:

- Model the outcome: Fit two separate regression models (e.g., linear regression, machine learning models) to predict the outcome (earnings) from the covariates. One model is trained only on the treated group to predict μ̂₁(X), and another is trained only on the untreated group to predict μ̂₀(X).

- Model treatment: Fit a classification model (e.g., logistic regression, gradient boosting) to estimate the propensity score, π̂(X) = P(T = 1 | X), where X denotes the covariates. For each individual, this is a number between 0 and 1 representing their estimated probability of joining the training program, based on their characteristics.

- Combine: Plug these predictions into the Augmented Inverse Probability Weighting estimator. This formula combines the regression predictions with the inverse-propensity-weighted residuals to create a final, doubly robust estimate of the Average Treatment Effect (ATE).
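Written out, the combination step takes the following form. This is the standard AIPW expression using the same symbols as the steps above, with n the number of individuals and i indexing them:

```latex
\hat{\tau}_{\text{AIPW}}
= \frac{1}{n}\sum_{i=1}^{n}\left[
\hat{\mu}_1(X_i) - \hat{\mu}_0(X_i)
+ \frac{T_i\,\bigl(Y_i - \hat{\mu}_1(X_i)\bigr)}{\hat{\pi}(X_i)}
- \frac{(1 - T_i)\,\bigl(Y_i - \hat{\mu}_0(X_i)\bigr)}{1 - \hat{\pi}(X_i)}
\right]
```

The first two terms are the regression-based prediction of the effect. The last two are inverse-propensity-weighted residuals, which correct that prediction when the outcome models are off.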

Here is the code for a simple implementation in Python:

import numpy as np
from sklearn.ensemble import GradientBoostingClassifier, GradientBoostingRegressor
from sklearn.model_selection import KFold

def doubly_robust_ate(Y, T, X, n_folds=5):
    """Cross-fitted AIPW estimate of the ATE and its standard error.

    Y, T, X are NumPy arrays (outcome, binary treatment, covariate matrix).
    """
    n = len(Y)
    e = np.zeros(n)    # propensity scores
    mu1 = np.zeros(n)  # E[Y|X,T=1]
    mu0 = np.zeros(n)  # E[Y|X,T=0]

    # Cross-fitting: each unit is predicted by models that never saw it
    kf = KFold(n_folds, shuffle=True, random_state=42)
    for train_idx, test_idx in kf.split(X):
        X_tr, X_te = X[train_idx], X[test_idx]
        T_tr = T[train_idx]
        Y_tr = Y[train_idx]

        # Propensity model
        ps = GradientBoostingClassifier(n_estimators=200)
        ps.fit(X_tr, T_tr)
        e[test_idx] = ps.predict_proba(X_te)[:, 1]

        # Outcome models (fit only on the appropriate groups)
        mu1_model = GradientBoostingRegressor(n_estimators=200)
        mu1_model.fit(X_tr[T_tr == 1], Y_tr[T_tr == 1])
        mu1[test_idx] = mu1_model.predict(X_te)

        mu0_model = GradientBoostingRegressor(n_estimators=200)
        mu0_model.fit(X_tr[T_tr == 0], Y_tr[T_tr == 0])
        mu0[test_idx] = mu0_model.predict(X_te)

    # Clip extreme propensities
    e = np.clip(e, 0.05, 0.95)

    # AIPW estimator
    treated_term = mu1 + T * (Y - mu1) / e
    control_term = mu0 + (1 - T) * (Y - mu0) / (1 - e)
    ate = np.mean(treated_term - control_term)

    # Standard error via the influence function
    influence = (treated_term - control_term) - ate
    se = np.std(influence, ddof=1) / np.sqrt(n)
    return ate, se
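To see the "either model may be wrong" property in action, here is a small simulation sketch. The data-generating process, the variable names, and the deliberately wrong models are all assumptions made for illustration; the true ATE is fixed at 2.0, and the AIPW formula is applied once with a useless outcome model and once with a useless propensity model.

```python
import numpy as np

def aipw_ate(Y, T, mu1, mu0, e):
    """AIPW point estimate from outcome predictions (mu1, mu0) and propensities e."""
    treated_term = mu1 + T * (Y - mu1) / e
    control_term = mu0 + (1 - T) * (Y - mu0) / (1 - e)
    return np.mean(treated_term - control_term)

# Hypothetical data-generating process; the true ATE is 2.0 by construction.
rng = np.random.default_rng(0)
n = 200_000
X = rng.normal(size=n)
true_e = 1 / (1 + np.exp(-X))           # enrolment is more likely for high X
T = rng.binomial(1, true_e)
Y = 2.0 * T + X + rng.normal(size=n)    # X also raises the outcome: confounding

# Naive comparison ignores X and is biased upward.
naive = Y[T == 1].mean() - Y[T == 0].mean()

# Case 1: outcome model is wrong (predicts 0 for everyone), propensity is right.
zeros = np.zeros(n)
ate_wrong_outcome = aipw_ate(Y, T, mu1=zeros, mu0=zeros, e=true_e)

# Case 2: outcome model is right, propensity is wrong (constant 0.5).
ate_wrong_propensity = aipw_ate(Y, T, mu1=2.0 + X, mu0=X, e=np.full(n, 0.5))

print(f"naive difference in means:    {naive:.2f}")
print(f"AIPW, wrong outcome model:    {ate_wrong_outcome:.2f}")
print(f"AIPW, wrong propensity model: {ate_wrong_propensity:.2f}")
```

Both AIPW estimates should land close to 2.0 while the naive comparison does not. If both models were wrong at once, nothing would guarantee a good answer.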

When it falls short: The DR property protects against functional form misspecification, but it cannot protect against unmeasured confounding. If a key confounder is missing from both models, our estimate remains biased. This is a fundamental limitation of all methods that rely on the selection-on-observables assumption, which leads us to our next method.

Part 2: Instrumental Variables

Sometimes, no amount of covariate adjustment can save us. Consider the problem of unmeasured ability. People who voluntarily enrol in job training are likely more motivated and capable than those who don’t. These traits directly affect earnings, creating confounding that no set of observed covariates can fully capture.

Now, suppose the training program sends promotional mailers to randomly selected households to encourage enrolment. Not everyone who receives a mailer enrols, and some people enrol without receiving one. But the mailer creates exogenous variation in enrolment, which is unrelated to ability or motivation.

This mailer is our instrument. Its effect on earnings flows entirely through its effect on program participation. We can use this clean variation to estimate the program’s causal impact.

The Core Insight: Instrumental variables (IV) exploit a powerful idea: find something that nudges people toward treatment but has no direct effect on the outcome except through that treatment. This “instrument” provides variation in treatment that is free of confounding.

The Three Requirements: For an instrument Z to be valid, three conditions must hold:

- Relevance: Z must affect treatment (a common rule of thumb is a first-stage F-statistic > 10).

- Exclusion Restriction: Z affects the outcome only through treatment.

- Independence: Z is unrelated to unmeasured confounders.

Conditions 2 and 3 are fundamentally untestable and require deep domain knowledge.

Two-Stage Least Squares: The most common IV estimator is Two-Stage Least Squares (2SLS). It isolates the variation in treatment driven by the instrument and uses only that variation to estimate the effect. As the name suggests, it works in two stages:

- First stage: Regress treatment on the instrument (and any controls). This isolates the portion of treatment variation driven by the instrument.

- Second stage: Regress the outcome on the predicted treatment from stage one. Because predicted treatment reflects only instrument-driven variation, the second-stage coefficient captures the causal effect for compliers.

Here is a simple implementation in Python:

import pandas as pd
import statsmodels.formula.api as smf
from linearmodels.iv import IV2SLS

# Assume 'data' is a pandas DataFrame with columns:
# earnings, training (endogenous), mailer (instrument), age, education

# ---- 1. Check instrument strength (first stage) ----
first_stage = smf.ols('training ~ mailer + age + education', data=data).fit()

# For a single instrument, the t-statistic on 'mailer' tests relevance,
# and its square is the first-stage F-statistic
t_stat = first_stage.tvalues['mailer']
f_stat = t_stat ** 2
print(f"First-stage F-statistic on instrument: {f_stat:.1f} (t = {t_stat:.2f})")
# Rule of thumb: F > 10 indicates a strong instrument

# ---- 2. Two-Stage Least Squares ----
# Formula syntax: outcome ~ exogenous + [endogenous ~ instrument]
iv_formula = 'earnings ~ 1 + age + education + [training ~ mailer]'
iv_result = IV2SLS.from_formula(iv_formula, data=data).fit()

# Display key results
print("\nIV Estimates (2SLS)")
print(f"Coefficient for training: {iv_result.params['training']:.3f}")
print(f"Standard error: {iv_result.std_errors['training']:.3f}")
print(f"95% CI: {iv_result.conf_int().loc['training'].values}")

# Optional: full summary
# print(iv_result.summary)
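To make the two stages concrete, here is a minimal hand-rolled sketch on simulated data. The data-generating process, the `ols` helper, and all variable names are assumptions for illustration only. The effect is constant across people (3.0), so the complier effect and the average effect coincide and the estimate can be checked against the truth.

```python
import numpy as np

def ols(y, *regressors):
    """Least-squares coefficients of y on an intercept plus the given regressors."""
    design = np.column_stack([np.ones(len(y)), *regressors])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef, design

# Hypothetical data: unmeasured ability drives both enrolment and earnings.
rng = np.random.default_rng(1)
n = 200_000
ability = rng.normal(size=n)                      # never observed by the analyst
mailer = rng.binomial(1, 0.5, size=n)             # randomly assigned instrument
training = (mailer + ability + rng.normal(size=n) > 0.5).astype(float)
earnings = 3.0 * training + 2.0 * ability + rng.normal(size=n)  # true effect = 3.0

# Naive OLS of earnings on training absorbs part of the ability effect.
naive_coef, _ = ols(earnings, training)

# First stage: predict training from the instrument.
first_coef, first_design = ols(training, mailer)
training_hat = first_design @ first_coef

# Second stage: regress earnings on the predicted training.
second_coef, _ = ols(earnings, training_hat)

print(f"naive OLS:    {naive_coef[1]:.2f}")
print(f"2SLS by hand: {second_coef[1]:.2f}")
```

The hand-rolled version reproduces the point estimate only. Standard errors taken from a manual second stage are not valid, which is one reason to rely on a dedicated 2SLS routine for inference.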

The Interpretation Trap (LATE vs. ATE): A key nuance of IV is that it does not estimate the treatment effect for everyone. It estimates the Local Average Treatment Effect (LATE), i.e., the effect for compliers. These are the people who take the treatment only because they received the instrument. In our example, compliers are people who enroll because they got the mailer. We learn nothing about “always-takers” (who enroll regardless) or “never-takers” (who never enroll). This is essential context when interpreting results.
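With a binary instrument Z and a binary treatment T, and no covariates, the quantity that 2SLS targets reduces to the Wald ratio below. Reading it as the complier effect also relies on the standard monotonicity assumption (nobody enrolls less because of the mailer), which is not among the three conditions listed earlier.

```latex
\text{LATE}
= \frac{E[Y \mid Z = 1] - E[Y \mid Z = 0]}{E[T \mid Z = 1] - E[T \mid Z = 0]}
```

The numerator is the effect of the mailer on earnings, and the denominator is the share of compliers. A small complier share makes the ratio noisy, which is the weak-instrument problem in another guise.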

Part 3: Regression Discontinuity (RD)

Now, let’s imagine the job training program is offered only to workers who scored below 70 on a skills assessment. A worker scoring 69.5 is essentially identical to one scoring 70.5. The tiny difference in score is practically random noise. Yet one receives training and the other doesn’t.

By comparing outcomes for people just below the cutoff (who received training) with those just above (who didn’t), we approximate a randomized experiment in the neighbourhood of the threshold. This is a regression discontinuity (RD) design, and it provides causal estimates that rival the credibility of actual randomized controlled trials.

Unlike most observational methods, RD allows for powerful diagnostic checks:

- The Density Test: If people can manipulate their score to land just below the cutoff, those just below will differ systematically from those just above. We can check this by plotting the density of the running variable around the threshold. A smooth density supports the design, whereas a suspicious spike just below the cutoff suggests gaming.

- Covariate Continuity: Pre-treatment characteristics should be smooth through the cutoff. If age, education, or other covariates jump discontinuously at the threshold, something other than treatment is changing, and our RD estimate is not reliable. We formally test this by checking whether the covariates themselves show a discontinuity at the cutoff.

The Bandwidth Dilemma: RD design faces an inherent dilemma:

- Narrow bandwidth (looking only at scores very close to the cutoff): This is more credible, because people are truly comparable, but less precise, because we are using fewer observations.

- Wide bandwidth (including scores farther from the cutoff): This is more precise, because we have more data; however, it is also riskier, because people far from the cutoff may differ systematically.

Modern practice uses data-driven bandwidth selection methods developed by Calonico, Cattaneo, and Titiunik, which formalize this trade-off. But the golden rule is to always check that our conclusions don’t flip when we change the bandwidth.

Modeling RD: In practice, we fit separate local linear regressions on either side of the cutoff and weight the observations by their proximity to the threshold. This gives more weight to observations closest to the cutoff, where comparability is highest. The treatment effect is the difference between the two regression lines at the cutoff point.
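The local linear estimator just described can be sketched by hand in a few lines. This is a simplified illustration under stated assumptions: the simulated data, the triangular kernel choice, and the function names are hypothetical, and the sketch omits the bias correction and robust inference that dedicated RD software provides.

```python
import numpy as np

def local_linear_at_cutoff(x, y, cutoff, bandwidth):
    """Fitted value at the cutoff from a triangular-kernel weighted linear fit."""
    dist = x - cutoff
    weights = np.clip(1 - np.abs(dist) / bandwidth, 0, None)  # triangular kernel
    keep = weights > 0
    root_w = np.sqrt(weights[keep])
    design = np.column_stack([np.ones(keep.sum()), dist[keep]])
    # Weighted least squares via rescaling rows by sqrt(weight)
    coef, *_ = np.linalg.lstsq(design * root_w[:, None], y[keep] * root_w, rcond=None)
    return coef[0]  # intercept = fitted value exactly at the cutoff

def rd_estimate(score, outcome, cutoff, bandwidth):
    """Treated side (below cutoff) minus untreated side (at or above cutoff)."""
    below = score < cutoff
    left = local_linear_at_cutoff(score[below], outcome[below], cutoff, bandwidth)
    right = local_linear_at_cutoff(score[~below], outcome[~below], cutoff, bandwidth)
    return left - right

# Hypothetical data: training (score < 70) raises earnings by 3,000.
rng = np.random.default_rng(2)
n = 20_000
score = rng.uniform(40, 100, size=n)
trained = score < 70
earnings = 20_000 + 100 * score + 3_000 * trained + rng.normal(0, 1_000, size=n)

for h in (3, 5, 10):
    print(f"bandwidth={h}: effect={rd_estimate(score, earnings, 70, h):,.0f}")
```

Because the simulated relationship is exactly linear, every bandwidth should recover roughly the same effect. With real data, curvature away from the cutoff is what makes wide bandwidths risky.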

Here is a simple implementation in Python:

import numpy as np
from rdrobust import rdrobust

# Assume 'data' is a pandas DataFrame with columns earnings and score.
# Note: rdrobust reports the jump from just below to just above the cutoff.
# Training is given below 70 here, so the training effect has the opposite sign.

# Estimate at default (optimal) bandwidth
result = rdrobust(y=data['earnings'], x=data['score'], c=70)
print(result)

# Sensitivity analysis over fixed bandwidths
for bw in [3, 5, 7, 10]:
    r = rdrobust(y=data['earnings'], x=data['score'], c=70, h=bw)
    # Rows: 0 = conventional, 1 = bias-corrected, 2 = robust.
    # np.asarray lets positional indexing work on array-like or DataFrame output.
    eff = np.asarray(r.coef).ravel()[0]
    ci_low, ci_high = np.asarray(r.ci)[0]
    print(f"Bandwidth={bw}: Effect={eff:.2f}, "
          f"95% CI=[{ci_low:.2f}, {ci_high:.2f}]")

Part 4: Difference-in-Differences

If you have studied causal inference, you’ve probably encountered the basic difference-in-differences (DiD) method, which compares the change in outcomes for a treated group before and after treatment, subtracts the corresponding change for a control group, and attributes the remaining difference to the treatment. The logic is intuitive and powerful. But over the past few years, methodological researchers have uncovered a serious problem with how it is commonly applied.

The Problem with Staggered Adoption: In the real world, treatments rarely switch on at a single point in time for a single group. Our job training program might roll out city by city over several years. The standard approach, throwing all our data into a two-way fixed effects (TWFE) regression with unit and time fixed effects, feels natural, but it can be badly wrong.

The problem, formalized by Goodman-Bacon (2021), is that with staggered treatment timing and heterogeneous effects, the TWFE estimator does not produce a clean weighted average of treatment effects. Instead, it makes comparisons that can include already-treated units as controls for later-treated units. Imagine City A is treated in 2018 and City B in 2020. When estimating the effect in 2021, TWFE may compare the change in City B to the change in City A. But if City A’s treatment effect evolves over time (e.g., grows or diminishes), that comparison is contaminated because we are using a treated unit as a control. The resulting estimate can be biased.

The Solution: Recent work by Callaway and Sant’Anna (2021), Sun and Abraham (2021), and others provides practical solutions to the staggered adoption problem:

- Group-time specific effects: Instead of estimating a single treatment coefficient, estimate separate effects for each treatment cohort at each post-treatment time period. This avoids the problematic implicit comparisons in TWFE.

- Clean control groups: Only use never-treated or not-yet-treated units as controls. This prevents already-treated units from contaminating your comparison group.

- Careful aggregation: Once you have clean cohort-specific estimates, aggregate them into summary measures using appropriate weights, typically weighting by cohort size.
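The first two ideas can be sketched directly with pandas. This is a simplified illustration of the group-time logic with never-treated controls and no covariates, not the implementation used by any particular package; the simulated panel, the column names, and the `group_time_att` helper are assumptions. The true effect is built to grow by 1,000 per year of exposure.

```python
import numpy as np
import pandas as pd

# Hypothetical panel: cohorts first treated in 2018, in 2020, or never (coded 0).
rng = np.random.default_rng(3)
years = list(range(2016, 2022))
rows = []
for unit in range(600):
    cohort = (2018, 2020, 0)[unit % 3]
    unit_effect = rng.normal(0, 500)
    for year in years:
        exposure = year - cohort + 1 if cohort and year >= cohort else 0
        y = (30_000 + unit_effect + 400 * (year - 2016)   # common trend
             + 1_000 * exposure                            # effect grows with exposure
             + rng.normal(0, 50))
        rows.append((unit, cohort, year, y))
df = pd.DataFrame(rows, columns=["unit", "cohort", "year", "earnings"])

def group_time_att(df, cohort, year):
    """ATT(g, t): change since g-1 for cohort g minus the same change for never-treated."""
    wide = df.pivot(index="unit", columns="year", values="earnings")
    cohort_of = df.groupby("unit")["cohort"].first()
    change = wide[year] - wide[cohort - 1]
    return change[cohort_of == cohort].mean() - change[cohort_of == 0].mean()

att = {(g, t): group_time_att(df, g, t)
       for g in (2018, 2020) for t in years if t >= g}
for (g, t), value in att.items():
    print(f"cohort {g}, year {t}: ATT = {value:,.0f}")
```

Each cohort is only ever compared with units that are never treated, so the growing effect in the 2018 cohort cannot leak into the estimate for the 2020 cohort.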

Diagnostic (The Event Study Plot): Before reporting any summary estimate, we should visualize the dynamics of treatment effects. The DiD approach rests on the parallel trends assumption: in the absence of treatment, the average outcomes for the treated and control groups would have followed parallel paths, i.e., any difference between them would have stayed constant over time. An event study plot shows estimated effects in periods before and after treatment, relative to a reference period (usually the period just before treatment). Pre-treatment coefficients near zero support the parallel trends assumption.

These approaches are implemented in the did package in R and the csdid package in Python. Here is a simple Python helper for plotting an event study:

import matplotlib.pyplot as plt
import numpy as np

def plot_event_study(estimates, ci_lower, ci_upper, event_times):
    """
    Visualize dynamic treatment effects with confidence intervals.

    Parameters
    ----------
    estimates : array-like
        Estimated effects for each event time.
    ci_lower, ci_upper : array-like
        Lower and upper bounds of confidence intervals.
    event_times : array-like
        Time periods relative to treatment (e.g., -5, -4, ..., +5).
    """
    fig, ax = plt.subplots(figsize=(10, 6))
    ax.fill_between(event_times, ci_lower, ci_upper, alpha=0.2, color='steelblue')
    ax.plot(event_times, estimates, 'o-', color='steelblue', linewidth=2)
    ax.axhline(y=0, color='gray', linestyle='--', linewidth=1)
    ax.axvline(x=-0.5, color='firebrick', linestyle='--', alpha=0.7,
               label='Treatment onset')
    ax.set_xlabel('Periods Relative to Treatment', fontsize=12)
    ax.set_ylabel('Estimated Effect', fontsize=12)
    ax.set_title('Event Study', fontsize=14)
    ax.legend(fontsize=11)
    ax.grid(alpha=0.3)
    return fig

# Pseudocode: did_multiplegt and the attributes on `results` are hypothetical
# placeholders, not the actual csdid API. Substitute the estimator you use.
# results = did_multiplegt(df, 'earnings', 'treatment', 'city', 'year')
# plot_event_study(results.estimates, results.ci_lower,
#                  results.ci_upper, results.event_times)

Part 5: Heterogeneous Treatment Effects

All the methods we have seen so far target the average effect, but that can be misleading. Suppose training increases earnings by $5,000 for non-graduates but has zero effect for graduates. Reporting just the ATE misses the most actionable insight: who should we target?

Treatment effect heterogeneity is the norm, not the exception. A drug might help younger patients while harming older ones. An educational intervention might benefit struggling students while having no impact on high performers.

Understanding this heterogeneity serves three purposes:

- Targeting: Allocate treatments to those who benefit most.

- Mechanism: Patterns of heterogeneity reveal how a treatment works. If training only helps workers in specific industries, that tells us something about the underlying mechanism.

- Generalisation: If we run a study in one population and want to apply findings elsewhere, we need to know whether effects depend on characteristics that differ across populations.

The Conditional Average Treatment Effect (CATE): CATE captures how effects vary with observable characteristics:

τ(x) = E[Y(1) − Y(0) | X = x]

This function tells us the expected treatment effect for individuals with characteristics X = x.
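When X is a single binary characteristic and treatment is randomized, the CATE is just a difference in means within each subgroup. The sketch below simulates the graduate versus non-graduate example; the sample size, baseline earnings, and the 50/50 split are assumptions chosen for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical randomized rollout: +5,000 for non-graduates, 0 for graduates.
rng = np.random.default_rng(4)
n = 40_000
graduate = rng.binomial(1, 0.5, size=n)
trained = rng.binomial(1, 0.5, size=n)            # randomized, so no confounding
earnings = (30_000 + 8_000 * graduate
            + 5_000 * trained * (1 - graduate)
            + rng.normal(0, 2_000, size=n))
df = pd.DataFrame({"graduate": graduate, "trained": trained, "earnings": earnings})

def diff_in_means(frame):
    """Mean earnings of trained minus untrained within the given rows."""
    means = frame.groupby("trained")["earnings"].mean()
    return means[1] - means[0]

ate = diff_in_means(df)
cate = {g: diff_in_means(df[df["graduate"] == g]) for g in (0, 1)}

print(f"ATE:                 {ate:,.0f}")
print(f"CATE, non-graduates: {cate[0]:,.0f}")
print(f"CATE, graduates:     {cate[1]:,.0f}")
```

The ATE comes out near 2,500, a number that describes neither group. With many covariates or continuous ones, subgroup means stop being practical, which is where flexible estimators come in.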

Modern Tools to Estimate CATE: Causal Forests, developed by Athey and Wager, extend random forests to estimate CATEs. The key innovation is that the data used to determine tree structure is separate from the data used to estimate effects within leaves, enabling valid confidence intervals. The algorithm works by:

- Growing many trees, each on a subsample of the data.

- At each split, choosing the variable that maximizes the heterogeneity in treatment effects between the resulting child nodes.

- Within each leaf, estimating a treatment effect (typically using a difference-in-means or a small linear model).

- Averaging the leaf-specific estimates across all trees to obtain the final CATE for a new point.

Here is a snippet of a Python implementation:

from econml.dml import CausalForestDML
from sklearn.ensemble import GradientBoostingRegressor, GradientBoostingClassifier
import numpy as np
import pandas as pd

# X: covariates (age, education, industry, prior earnings, etc.)
# T: binary treatment (enrolled in training)
# Y: outcome (earnings after program)

# For feature importance later, we need feature names
feature_names = ['age', 'education', 'industry_manufacturing', 'industry_services',
                 'prior_earnings', 'unemployment_duration']

causal_forest = CausalForestDML(
    model_y=GradientBoostingRegressor(n_estimators=200, max_depth=4),   # outcome model
    model_t=GradientBoostingClassifier(n_estimators=200, max_depth=4),  # propensity model
    discrete_treatment=True,   # required when model_t is a classifier
    n_estimators=2000,         # number of trees
    min_samples_leaf=20,       # minimum leaf size
    random_state=42
)
causal_forest.fit(Y=Y, T=T, X=X)

# Individual treatment effect estimates (ITE, but interpreted as CATE given X)
individual_effects = causal_forest.effect(X)

# Summary statistics
print(f"Average effect (ATE): ${individual_effects.mean():,.0f}")
print(f"Bottom quartile: ${np.percentile(individual_effects, 25):,.0f}")
print(f"Top quartile: ${np.percentile(individual_effects, 75):,.0f}")

# Variable importance: which features drive heterogeneity?
# (econml returns an importance for each feature; we sort and show the top ones)
importances = causal_forest.feature_importances_
for name, imp in sorted(zip(feature_names, importances), key=lambda x: -x[1]):
    if imp > 0.05:  # show only features with >5% importance
        print(f"  {name}: {imp:.3f}")

Part 6: Sensitivity Analysis

We’ve now covered five sophisticated methods. Each of them requires assumptions we cannot fully verify. The question is not whether our assumptions are exactly correct but whether violations are severe enough to overturn our conclusions. This is where sensitivity analysis comes in. It won’t make our results look more impressive, but it can show how robust our causal conclusions are.

If an unmeasured confounder would need to be implausibly strong, i.e., more powerful than any observed covariate, to overturn our findings, those findings are robust. If modest confounding could eliminate the effect, we have to interpret the results with caution.

The Omitted Variable Bias Framework: A practical approach, formalized by Cinelli and Hazlett (2020), frames sensitivity in terms of two quantities:

- How strongly would the confounder relate to treatment assignment? (partial R² with treatment)

- How strongly would the confounder relate to the outcome? (partial R² with outcome)

Using these two quantities, we can create a sensitivity contour plot, which is a map showing which regions of confounder strength would overturn our findings and which would not.
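The two partial R² values translate into a bias bound, and from that bound one can compute a single "robustness value": the partial R² a confounder would need with both treatment and outcome to wipe out the estimate. The sketch below writes out these formulas as I understand them from Cinelli and Hazlett (2020) for a linear regression estimate. The function names, the illustrative estimate and standard error, and the residual degrees of freedom (`dof`) are assumptions.

```python
import numpy as np

def robustness_value(t_stat, dof, q=1.0):
    """Partial R² a confounder needs with both treatment and outcome
    to reduce the estimate by a fraction q (q=1 means down to zero)."""
    f_q = q * abs(t_stat) / np.sqrt(dof)
    return 0.5 * (np.sqrt(f_q**4 + 4 * f_q**2) - f_q**2)

def bias_bound(se, dof, r2_outcome, r2_treatment):
    """Absolute bias implied by a confounder with the given partial R² values."""
    return se * np.sqrt(dof * r2_outcome * r2_treatment / (1 - r2_treatment))

# Illustrative inputs (hypothetical): estimate, standard error, residual dof.
estimate, se, dof = 3200.0, 800.0, 1000
rv = robustness_value(estimate / se, dof)

print(f"Robustness value: {rv:.3f}")
print(f"Bias when both partial R² equal it: {bias_bound(se, dof, rv, rv):,.0f}")
```

By construction, plugging the robustness value into the bias bound returns the estimate itself. Evaluating `bias_bound` over a grid of the two R² values gives the numbers behind a contour plot.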

Here is a simple Python implementation:

def sensitivity_summary(estimated_effect, se, r2_benchmarks):
    """
    Illustrate robustness to unmeasured confounding using a simplified approach.

    r2_benchmarks: dict mapping covariate names to their partial R²
    with the outcome, providing real-world anchors for "how strong is strong?"
    """
    print("SENSITIVITY ANALYSIS")
    print("=" * 55)
    print(f"Estimated effect: ${estimated_effect:,.0f} (SE: ${se:,.0f})")
    print(f"Effect would be fully explained by bias >= ${abs(estimated_effect):,.0f}")
    print()
    print("Benchmarking against observed covariates:")
    print("-" * 55)

    for name, r2 in r2_benchmarks.items():
        # Simplified: assume a confounder with the same R² as the observed
        # covariate would induce bias proportional to that R²
        implied_bias = abs(estimated_effect) * r2
        remaining = estimated_effect - implied_bias
        print(f"\n If unobserved confounder is as strong as '{name}':")
        print(f" Implied bias: ${implied_bias:,.0f}")
        print(f" Adjusted effect: ${remaining:,.0f}")
        would_overturn = "YES" if abs(remaining) < 1.96 * se else "No"
        print(f" Would overturn conclusion? {would_overturn}")

# Example usage
sensitivity_summary(
    estimated_effect=3200,
    se=800,
    r2_benchmarks={
        'education': 0.15,
        'prior_earnings': 0.25,
        'age': 0.05
    }
)

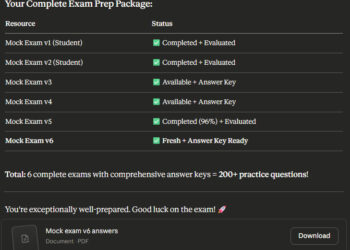

Putting It All Together: A Decision Framework

With five methods and a sensitivity toolkit at your disposal, the practical question now is which one should we use, and when? Here is a flowchart to help with that decision.

Closing Thoughts

Causal inference is fundamentally about reasoning carefully under uncertainty. The methods we have explored in this article are all powerful tools, but they remain tools. Each demands judgment about when it applies and vigilance about when it fails. A simple method applied thoughtfully will usually outperform a complex method applied blindly.

Recommended Resources for Going Deeper

Here are the resources I’ve found most valuable:

For building statistical intuition around causal thinking:

- Regression and Other Stories by Gelman, Hill, and Vehtari

- For rigorous potential outcomes framework: Causal Inference for Statistics, Social, and Biomedical Sciences by Imbens and Rubin

- For domain-grounded causal reasoning: Causal Inference in Public Health by Glass et al.

- Athey and Imbens (2019), “Machine Learning Methods That Economists Should Know About”: Bridges ML and causal inference.

- Callaway and Sant’Anna (2021): Essential reading on modern DiD with staggered adoption.

- Cinelli and Hazlett (2020): The definitive practical guide to sensitivity analysis.

Note: The featured image for this article was created using Google’s Gemini AI image generation tool.