Enterprises exploring AI implementation (which describes most enterprises as of 2024) are currently assessing how to do so safely and sustainably. AI ethics can be an essential part of that conversation. Questions of particular interest include:

- How diverse or representative is the training data of your AI engines? How can a lack of representation impact AI’s outputs?

- When should AI be trusted with a sensitive task vs. a human? What level of oversight should organizations enact over AI?

- When, and how, should organizations inform stakeholders that AI has been used to complete a certain task?

Organizations, especially those leveraging proprietary AI engines, must answer these questions thoroughly and transparently to satisfy all stakeholder concerns. To ease this process, let’s review a few pressing developments in AI ethics over the past six months.

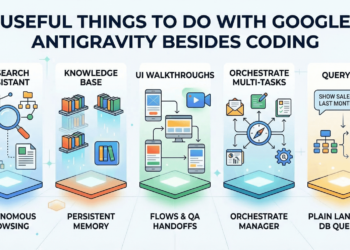

The rise of agentic AI

We’re quietly entering a new era in AI. “Agentic AI,” as it’s known, can act as an “agent” that analyzes situations, engages other technologies for decision-making, and ultimately reaches complex, multi-step decisions without constant human oversight. This level of sophistication sets agentic AI apart from the versions of generative AI that first came on the market and couldn’t tell users the time or add simple numbers.

Agentic AI systems can process and “reason” through a complex dilemma with multiple criteria. For example, consider planning a trip to Mumbai. You’d like this trip to align with your mother’s birthday, and you’d like to book a flight that cashes in on your reward miles. Additionally, you’d like a hotel close to your mother’s house, and you’re looking to make reservations for a nice dinner on your trip’s first and final nights. Agentic AI systems can ingest these disparate needs and propose a workable itinerary for your trip, then book your stay and travel, interfacing with multiple online platforms to do so.

These capabilities will likely have enormous implications for many businesses, including ramifications for very data-intensive industries like financial services. Imagine being able to synthesize, analyze, and query your AI systems about diverse customer activities and profiles in just minutes. The possibilities are exciting.

However, agentic AI also raises a critical question about AI oversight. Booking travel might be harmless, but other tasks in compliance-focused industries may need parameters set around how and when AI can make executive decisions.

Emerging compliance frameworks

Financial institutions (FIs) have an opportunity to codify certain expectations around AI right now, with the goal of improving client relations and proactively prioritizing the well-being of their customers. Areas of interest in this regard include:

- Security and safety

- Responsible development

- Bias and illegal discrimination

- Privacy

Although we can’t predict the timeline or likelihood of regulations, organizations can conduct due diligence to help mitigate risk and underscore their commitment to client outcomes. Important considerations include AI transparency and consumer data privacy.

Risk-based approaches to AI governance

Most AI experts agree that a one-size-fits-all approach to governance is insufficient. After all, the ramifications of unethical AI differ significantly based on application. For this reason, risk-based approaches, such as the one adopted by the EU’s comprehensive AI Act, are gaining traction.

In a risk-based compliance system, the strength of punitive measures is based on an AI system’s potential impact on human rights, safety, and societal well-being. For example, high-risk industries like healthcare and financial services might be scrutinized more thoroughly for AI use because unethical practices in these industries can significantly impact a consumer’s well-being.

Organizations in high-risk industries must remain especially vigilant about ethical AI deployment. The most effective way to do this is to prioritize human-in-the-loop decision-making. In other words, humans should retain the final say when validating outputs, checking for bias, and enforcing ethical standards.
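To make the human-in-the-loop idea concrete, here is a minimal sketch of a risk-gated approval step. Everything in it is an illustrative assumption rather than a reference to any real framework: the `RiskTier` levels, the `Proposal` record, and the `decide` function are hypothetical names, and a real deployment would define risk tiers according to its own regulatory obligations.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class RiskTier(Enum):
    # Hypothetical two-level scheme; real frameworks define more tiers.
    LOW = "low"
    HIGH = "high"


@dataclass
class Proposal:
    # An action the AI system wants to take, plus its stated rationale.
    action: str
    risk: RiskTier
    rationale: str


def decide(proposal: Proposal, human_review: Callable[[Proposal], bool]) -> str:
    """Route a proposal: low-risk actions proceed, high-risk ones need a human."""
    if proposal.risk is RiskTier.HIGH:
        # The human reviewer has the final say on high-risk actions.
        approved = human_review(proposal)
        return "approved_by_human" if approved else "rejected_by_human"
    return "auto_approved"


if __name__ == "__main__":
    dinner = Proposal("book dinner reservation", RiskTier.LOW, "matches itinerary")
    loan = Proposal("decline loan application", RiskTier.HIGH, "model score below cutoff")
    # Stand-in reviewer that rejects everything; a real one would be a person.
    print(decide(dinner, lambda p: False))
    print(decide(loan, lambda p: False))
```

The design choice worth noting is that the reviewer is passed in as a function, so the AI system never decides for itself whether its high-risk output is acceptable.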

How to balance innovation and ethics

Conversations about AI ethics often reference the need for innovation. These phenomena (innovation and ethics) are depicted as counteractive forces. However, I believe that progressive innovation requires a commitment to ethical decision-making. When we build upon ethical systems, we create more viable, long-term, and inclusive technologies.

Arguably, the most critical consideration in this realm is explainable AI, or systems with decision-making processes that humans can understand, audit, and explain.

Many AI systems currently operate as “black boxes.” In short, we can’t understand the logic informing these systems’ outputs. Non-explainable AI can be problematic when it limits humans’ abilities to verify, intellectually and ethically, the accuracy of a system’s rationale. In these instances, humans can’t prove the truth behind an AI’s response or action. Perhaps even more troublingly, non-explainable AI is more difficult to iterate upon. Leaders should consider prioritizing the deployment of AI that humans can regularly test, vet, and understand.

The balance between ethical and innovative AI may seem delicate, but it’s critical nonetheless. Leaders who interrogate the ethics of their AI providers and systems can improve their longevity and performance.

About the Author

Vall Herard is the CEO of Saifr.ai, a Fidelity Labs company. He brings extensive experience and subject matter expertise to this topic and can shed light on where the industry is headed, as well as what industry participants should anticipate for the future of AI. Throughout his career, he’s seen the evolution in the use of AI within the financial services industry. Vall has previously worked at top banks such as BNY Mellon, BNP Paribas, UBS Investment Bank, and more. Vall holds an MS in Quantitative Finance from New York University (NYU), a certificate in data & AI from the Massachusetts Institute of Technology (MIT), and a BS in Mathematical Economics from Syracuse and Pace Universities.

Sign up for the free insideAI News newsletter.