how neural networks learned. Train them, watch the loss go down, save checkpoints each epoch. Standard workflow. Then I measured training dynamics at 5-step intervals instead of at epoch level, and everything I thought I knew fell apart.

The question that started this journey: does a neural network’s capacity expand during training, or is it fixed from initialization? Until 2019, we all assumed the answer was obvious: parameters are fixed, so capacity must be fixed too. But Ansuini et al. discovered something that shouldn’t be possible: the effective representational dimensionality increases during training. Yang et al. confirmed it in 2024.

This changes everything. If the learning space expands while the network learns, how can we mechanistically understand what it’s actually doing?

High-Frequency Training Checkpoints

When we train a DNN for 10,000 steps, we typically save checkpoints every 100 or 200 steps. Measuring at 5-step intervals generates a lot of data that isn’t easy to manage. But these high-frequency checkpoints reveal very valuable information about how a DNN learns.
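To get a feel for the difference in volume, here is a quick back-of-the-envelope count of how many measurements each interval produces over a 10,000-step run. The helper name `measurement_steps` is made up for this illustration, and counting step 0 as a measurement is an assumption.

```python
def measurement_steps(total_steps: int, interval: int) -> list[int]:
    """Steps at which a measurement is taken, including step 0 (assumed)."""
    return list(range(0, total_steps + 1, interval))


TOTAL_STEPS = 10_000

for interval in (200, 100, 5):
    count = len(measurement_steps(TOTAL_STEPS, interval))
    print(f"every {interval:>3} steps: {count} measurements")
```

One way to keep this manageable, offered here as a suggestion rather than a description of the original setup, is to store only a few scalar summaries per measurement instead of full model weights.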

High-frequency checkpoints provide information about:

- Whether early training mistakes can be recovered from (they often can’t)

- Why some architectures work and others fail

- When interpretability analysis should happen (spoiler: way earlier than we thought)

- How to design better training approaches

During an applied research project I measured DNN training at high resolution: every 5 steps instead of every 100 or 500. I used a basic MLP architecture with the same dataset I’ve been using for the last 10 years.

rolling statistics:

The results were surprising. Deep neural networks, even simple architectures, expand their effective parameter space during training. I had assumed this space was predetermined by the architecture itself. Instead, DNNs undergo discrete transitions: small jumps that increase the effective dimensionality of their learning space.

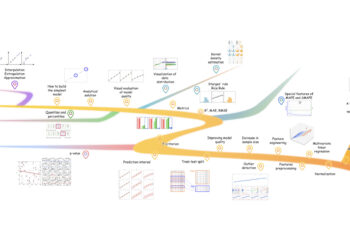

distinct developmental window. Image by author.

Figure 2 tracks the effective dimensionality of the activations during training. The transitions concentrate in the first 25% of training and are hidden at larger checkpoint intervals (100-1000 steps). We needed high-frequency checkpointing (every 5 steps) to detect most of them. The curve also shows an interesting behavior. The initial collapse represents loss landscape restructuring, where random initialization gives way to a task-aligned structure. Then we see an expansion phase with gradual dimensionality growth. Between 2000-3000 steps, there’s a stabilization that reflects the DNN’s architectural capacity limits.
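One common way to estimate effective dimensionality is the participation ratio of the activation covariance spectrum. The sketch below assumes that estimator; it is not necessarily the one behind the figures, and the function name and synthetic data are purely illustrative. It shows the measurement for one layer at one checkpoint.

```python
import numpy as np


def participation_ratio(activations: np.ndarray) -> float:
    """Effective dimensionality of an (n_samples, n_features) activation matrix.

    PR = (sum_i lambda_i)^2 / sum_i lambda_i^2, where lambda_i are the
    eigenvalues of the feature covariance matrix. It ranges from 1 (all
    variance in one direction) to n_features (variance spread evenly).
    """
    centered = activations - activations.mean(axis=0, keepdims=True)
    cov = centered.T @ centered / (activations.shape[0] - 1)
    # eigvalsh is for symmetric matrices; clip tiny negative round-off values
    eigvals = np.clip(np.linalg.eigvalsh(cov), 0.0, None)
    total = eigvals.sum()
    if total == 0.0:
        return 0.0  # constant activations carry no variance at all
    return float(total**2 / np.sum(eigvals**2))


rng = np.random.default_rng(0)
# Synthetic stand-ins for a layer's activations on a fixed probe batch
isotropic = rng.normal(size=(2000, 16))                            # 16 active directions
low_rank = rng.normal(size=(2000, 2)) @ rng.normal(size=(2, 16))   # only 2 directions

print("isotropic:", participation_ratio(isotropic))
print("low rank: ", participation_ratio(low_rank))
```

Calling a function like this on the same probe batch every 5 steps would produce one dimensionality trace per layer. Applying it to a flattened weight matrix instead of activations gives a weight-space counterpart.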

This changes how we should think about DNN training, interpretability, and architecture design.

Exploration vs Expansion

Consider the following two scenarios:

| Scenario A: Fixed Capacity (Exploration) | Scenario B: Expanding Capacity (Innovation) |
| --- | --- |
| Your network starts with a fixed representational capacity. Training explores different regions of this pre-determined space. It’s like navigating a map that exists from the start. Early training just means “haven’t found the good region yet”. | Your network starts with minimal capacity. Training creates representational structures. It’s like building roads while traveling: each road enables new destinations. Early training establishes what becomes learnable later. |

Which is it?

The question matters because if capacity expands, then early training isn’t recoverable. You can’t just “train longer” to fix early mistakes. Interpretability therefore has a timeline in which features form in sequence, and understanding this sequence is key. Furthermore, architecture design appears to be about expansion rate, not just final capacity. Finally, critical periods exist. If we miss the window, we miss the capability.

When We Need to Measure High-Frequency Checkpoints

Expansion vs Exploration

As seen in Figures 2 and 3, high-frequency sampling reveals interesting information. We can identify three distinct phases:

| Phase 1: Collapse (steps 0-300) The network restructures from random initialization. Dimensionality drops sharply as the loss landscape is reshaped around the task. This isn’t learning yet, it’s preparation for learning. |

| Phase 2: Expansion (steps 300-5,000) Dimensionality climbs steadily. This is capacity expansion. The network is building representational structures: simple features that enable complex features that enable higher-order features. |

| Phase 3: Stabilization (steps 5,000-8,000) Growth plateaus. Architectural constraints bind. The network refines what it has rather than building new capacity. |

These plots reveal expansion, not exploration. The network at step 5,000 can represent functions that were impossible at step 300 because they didn’t exist.

Capacity Expands, Parameters Don’t

Weight space dimensionality remains nearly constant (9.72-9.79) with just one detected “jump” across 8000 steps. Image by author.

Comparing the activation and weight spaces shows that the two follow different dynamics under high-frequency sampling. The activation space shows approx. 85 discrete jumps (including Gaussian noise). The weight space shows just one. This is the same network in the same training run. It confirms that the network at step 8000 computes functions inaccessible at step 500 despite an identical parameter count. This is the clearest evidence for expansion.
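Counting jumps needs some definition of what a jump is. As a minimal sketch, assuming a rolling z-score on step-to-step changes (the detector, the window size, and the threshold are my illustrative choices, not a specification of how the counts above were obtained), a jump detector for a dimensionality trace could look like this:

```python
from statistics import mean, pstdev


def detect_jumps(trace, window=20, z_threshold=4.0):
    """Indices where the step-to-step change is an outlier relative to the
    preceding `window` changes (rolling z-score on first differences)."""
    diffs = [b - a for a, b in zip(trace, trace[1:])]
    jumps = []
    for i in range(window, len(diffs)):
        recent = diffs[i - window:i]
        mu, sigma = mean(recent), pstdev(recent)
        scale = max(sigma, 1e-8)  # avoid division by zero on flat stretches
        if abs(diffs[i] - mu) / scale > z_threshold:
            jumps.append(i + 1)  # index in `trace` where the new level starts
    return jumps


# Synthetic trace: small deterministic wiggle plus one step of +0.5 at index 50
trace = [5.0 + 0.001 * ((i * 7) % 5) + (0.5 if i >= 50 else 0.0)
         for i in range(100)]

print(detect_jumps(trace))
```

Running the same detector on an activation trace and a weight trace from one training run is what makes a comparison like the one above possible. The threshold trades false positives from noise against missed small transitions, so any jump count depends on it.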

DNNs innovate by generating new parameter space options during training in order to represent complex tasks.

Transitions Are Fast and Early

We’ve seen how high-frequency sampling reveals many more transitions. Low-frequency checkpointing would miss nearly all of them. These transitions concentrate early. Two thirds of all transitions happen in the first 2,000 steps, just 25% of total training time. This means that if we want to understand what features form and when, we need to look at steps 0-2,000, not at convergence. By step 5,000, the story is over.
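Both claims reduce to simple arithmetic once we have a list of jump locations. The sketch below uses a made-up list of jump steps (not measured data) to compute the share of early transitions and to illustrate one simple reason coarse checkpointing loses transitions: jumps that fall between the same two checkpoints merge into a single observed change. The function names are hypothetical.

```python
def early_fraction(jump_steps, cutoff_step):
    """Share of detected transitions occurring at or before `cutoff_step`."""
    if not jump_steps:
        return 0.0
    return sum(1 for s in jump_steps if s <= cutoff_step) / len(jump_steps)


def distinct_intervals(jump_steps, interval):
    """Number of checkpoint intervals containing at least one jump.
    Jumps sharing an interval cannot be told apart at that resolution."""
    return len({s // interval for s in jump_steps})


# Hypothetical jump locations, chosen only to illustrate the computation
jump_steps = [120, 135, 150, 410, 415, 430, 980, 1500, 3200, 4100, 5200, 6000]

print("share before step 2,000:", early_fraction(jump_steps, 2000))
for interval in (5, 100, 1000):
    print(f"interval {interval:>4}: {distinct_intervals(jump_steps, interval)} "
          f"separable changes out of {len(jump_steps)}")
```

This only gives an upper bound on what a coarse schedule can resolve. Small jumps can also vanish into what looks like smooth growth, which this toy count does not model.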

Expansion Couples to Optimization

If we look again at Figure 3, we see that as loss decreases, dimensionality expands. The network doesn’t simplify as it learns. It becomes more complex. Dimensionality correlates strongly with loss (ρ = -0.951) and moderately with gradient magnitude (ρ = -0.701). This may seem counterintuitive: improved performance correlates with expanded rather than compressed representations. We’d expect networks to find simpler, more compressed representations as they learn. Instead, they expand into higher-dimensional spaces.

Why?

A possible explanation is that complex tasks require complex representations. The network doesn’t find a simpler explanation; it makes the representational changes needed to separate classes, recognize patterns, and generalize.

Practical Deployment

We’ve seen a different way to understand and debug DNN training across any domain.

If we know when features form during training, we can analyze them as they crystallize rather than reverse-engineering a black box afterward.

In real deployment scenarios, we can monitor representational dimensionality in real time, detect when expansion phases occur, and run interpretability analyses at each transition point. This tells us precisely when our network is building new representational structures, and when it’s finished. The measurement approach is architecture-agnostic: it works whether you’re training CNNs for vision, transformers for language, RL agents for control, or multimodal models for cross-domain tasks.
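As a rough sketch of what such a real-time monitor could look like, the class below labels the current regime from the net change in dimensionality over a sliding window. The class name, the window size, the tolerance, and the three labels are all assumptions made for this example. This is not the API of any released tool.

```python
from collections import deque


class ExpansionMonitor:
    """Labels the training regime from recent dimensionality measurements."""

    def __init__(self, window=50, tol=0.01):
        self.window = window
        self.tol = tol  # minimum net change over the window that counts
        self.history = deque(maxlen=window)

    def update(self, dimensionality: float) -> str:
        """Record one measurement and return the current regime label."""
        self.history.append(dimensionality)
        if len(self.history) < self.window:
            return "warming_up"
        change = self.history[-1] - self.history[0]
        if change > self.tol:
            return "expanding"
        if change < -self.tol:
            return "collapsing"
        return "stable"


monitor = ExpansionMonitor(window=3, tol=0.1)
for value in [4.0, 4.0, 4.0, 4.5, 4.5, 4.5, 3.5]:
    print(value, monitor.update(value))
```

A change of label, for example from "expanding" to "stable", is a natural trigger for saving a checkpoint and running an interpretability analysis at that point.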

| Example 1: Intervention experiments that map causal dependencies. Disrupt training during specific windows and measure which downstream capabilities are lost. If corrupting data during steps 2,000-5,000 permanently damages texture recognition but the same corruption at step 6,000 has no effect, you’ve found when texture features crystallize and what they depend on. This works identically for object recognition in vision models, syntactic structure in language models, or state discrimination in RL agents. |

| Example 2: For production deployment, continuous dimensionality monitoring catches representational problems during training, when you can still fix them. If layers stop expanding, you have architectural bottlenecks. If expansion becomes erratic, you have instability. If early layers saturate while late layers fail to expand, you have information flow problems. Standard loss curves won’t show these issues until it’s too late; dimensionality monitoring surfaces them immediately. |

| Example 3: The architecture design implications are equally practical. Measure expansion dynamics during the first 5-10% of training across candidate architectures. Select for clean phase transitions and structured bottom-up expansion. These networks aren’t just more performant; they’re fundamentally more interpretable because features form in clean sequential layers rather than tangled simultaneity. |
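The windowed intervention from Example 1 can be sketched as a small helper that corrupts labels only while the step counter is inside a chosen window. Label noise is just one possible form of corruption, and the function name, the `fraction` parameter, and the seeding scheme are assumptions for illustration.

```python
import random


def corrupt_labels(labels, step, window, num_classes, fraction=0.5, seed=0):
    """Return a copy of `labels`. Inside `window` (start inclusive, end
    exclusive), each label is replaced with probability `fraction` by a
    uniformly random class, which may happen to equal the original."""
    start, end = window
    if not (start <= step < end):
        return list(labels)  # outside the window: data passes through untouched
    rng = random.Random(seed * 1_000_003 + step)  # reproducible per step
    corrupted = list(labels)
    for i in range(len(corrupted)):
        if rng.random() < fraction:
            corrupted[i] = rng.randrange(num_classes)
    return corrupted


batch_labels = [0, 1, 2, 3, 4, 5, 6, 7]
print(corrupt_labels(batch_labels, step=2500, window=(2000, 5000), num_classes=10))
print(corrupt_labels(batch_labels, step=6000, window=(2000, 5000), num_classes=10))
```

Running otherwise identical training jobs with different windows, then comparing downstream capabilities, is what turns this helper into the experiment described in Example 1.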

What’s Next

So we’ve established that networks expand their representational space during training, that we can measure these transitions at high resolution, and that this opens new approaches to interpretability and intervention. The natural question: can you apply this to your own work?

I’m releasing the complete measurement infrastructure as open source. I included validated implementations for MLPs, CNNs, ResNets, Transformers, and Vision Transformers, with hooks for custom architectures.

Everything runs with three lines added to your training loop.

The GitHub repository provides templates for the experiments discussed above: feature formation mapping, intervention protocols, cross-architecture transfer prediction, and production monitoring setups. The measurement methodology is validated. What matters now is what you discover when you apply it to your domain.

Try it:

pip install ndtracker

Quickstart, instructions, and examples in the repository: Neural Dimensionality Tracker (NDT)

The code is production-ready. The protocols are documented. The questions are open. I’d like to see what you find when you measure your training dynamics at high resolution, whatever the context and the architecture.

You can share your results, open issues with your findings, or just ⭐️ the repo if this changes how you think about training. Remember, the interpretability timeline exists across all neural architectures.

Javier Marín | LinkedIn | Twitter

References & Further Reading

- Achille, A., Rovere, M., & Soatto, S. (2019). Critical learning periods in deep networks. In International Conference on Learning Representations (ICLR). https://openreview.net/forum?id=BkeStsCcKQ

- Frankle, J., Dziugaite, G. K., Roy, D. M., & Carbin, M. (2020). Linear mode connectivity and the lottery ticket hypothesis. In Proceedings of the 37th International Conference on Machine Learning (pp. 3259-3269). PMLR. https://proceedings.mlr.press/v119/frankle20a.html

- Ansuini, A., Laio, A., Macke, J. H., & Zoccolan, D. (2019). Intrinsic dimension of data representations in deep neural networks. In Advances in Neural Information Processing Systems (Vol. 32, pp. 6109-6119). https://proceedings.neurips.cc/paper/2019/hash/cfcce0621b49c983991ead4c3d4d3b6b-Abstract.html

- Yang, J., Zhao, Y., & Zhu, Q. (2024). ε-rank and the staircase phenomenon: New insights into neural network training dynamics. arXiv preprint arXiv:2412.05144. https://arxiv.org/abs/2412.05144

- Olah, C., Mordvintsev, A., & Schubert, L. (2017). Feature visualization. Distill, 2(11), e7. https://doi.org/10.23915/distill.00007

- Elhage, N., Nanda, N., Olsson, C., Henighan, T., Joseph, N., Mann, B., Askell, A., Bai, Y., Chen, A., Conerly, T., DasSarma, N., Drain, D., Ganguli, D., Hatfield-Dodds, Z., Hernandez, D., Jones, A., Kernion, J., Lovitt, L., Ndousse, K., Amodei, D., Brown, T., Clark, J., Kaplan, J., McCandlish, S., & Olah, C. (2021). A mathematical framework for transformer circuits. Transformer Circuits Thread. https://transformer-circuits.pub/2021/framework/index.html