GRASP is a new gradient-based planner for learned dynamics (a “world model”) that makes long-horizon planning practical by (1) lifting the trajectory into virtual states so optimization is parallel across time, (2) adding stochasticity directly to the state iterates for exploration, and (3) reshaping gradients so actions get clean signals while we avoid brittle “state-input” gradients through high-dimensional vision models.

Large, learned world models are becoming increasingly capable. They can predict long sequences of future observations in high-dimensional visual spaces and generalize across tasks in ways that were difficult to imagine a few years ago. As these models scale, they start to look less like task-specific predictors and more like general-purpose simulators.

But having a powerful predictive model is not the same as being able to use it effectively for control, learning, or planning. In practice, long-horizon planning with modern world models remains fragile: optimization becomes ill-conditioned, non-greedy structure creates bad local minima, and high-dimensional latent spaces introduce subtle failure modes.

In this blog post, I describe the problems that motivated this project and our approach to addressing them: why planning with modern world models can be surprisingly fragile, why long horizons are the real stress test, and what we changed to make gradient-based planning much more robust.

This blog post discusses work done with Mike Rabbat, Aditi Krishnapriyan, Yann LeCun, and Amir Bar (* denotes equal advisorship), where we propose GRASP.

What is a world model?

These days, the term “world model” is quite overloaded, and depending on the context can either mean an explicit dynamics model or some implicit, reliable internal state that a generative model relies on (e.g. when an LLM generates chess moves, whether there is some internal representation of the board). We give our loose working definition below.

Suppose you take actions $a_t \in \mathcal{A}$ and observe states $s_t \in \mathcal{S}$ (images, latent vectors, proprioception). A world model is a learned model that, given the current state and a sequence of future actions, predicts what will happen next. Formally, it defines a predictive distribution over the next state, conditioned on a history of observed states $s_{t-h:t}$ and the current action $a_t$:

\[P_\theta(s_{t+1} \mid s_{t-h:t},\; a_t)\]

that approximates the environment’s true conditional $P(s_{t+1} \mid s_{t-h:t},\; a_t)$. For this blog post, we’ll assume a Markovian model $P_\theta(s_{t+1} \mid s_t,\; a_t)$ for simplicity (all results here can be extended to the more general case), and when the model is deterministic it reduces to a map over states:

\[s_{t+1} = F_\theta(s_t, a_t).\]

In practice the state $s_t$ is often a learned latent representation (e.g., encoded from pixels), so the model operates in a (theoretically) compact, differentiable space. The key point is that a world model gives you a differentiable simulator; you can roll it forward under hypothetical action sequences and backpropagate through the predictions.

Planning: choosing actions by optimizing through the model

Given a start $s_0$ and a goal $g$, the simplest planner chooses an action sequence $\mathbf{a}=(a_0,\dots,a_{T-1})$ by rolling out the model and minimizing terminal error:

\[\min_{\mathbf{a}} \; \| s_T(\mathbf{a}) - g \|_2^2, \quad \text{where } s_T(\mathbf{a}) = \mathcal{F}_{\theta}^{T}(s_0,\mathbf{a}).\]

Here we use $\mathcal{F}^T$ as shorthand for the full rollout through the world model (dependence on model parameters $\theta$ is implicit):

\[\mathcal{F}_{\theta}^{T}(s_0, \mathbf{a}) = F_\theta(F_\theta(\cdots F_\theta(s_0, a_0), \cdots, a_{T-2}), a_{T-1}).\]
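A minimal sketch of this rollout planner, assuming a toy one-dimensional dynamics function in place of a learned $F_\theta$. The function names, the step constant, and the hand-derived gradient are illustrative assumptions, not taken from the paper; with a real world model the gradient would come from automatic differentiation through the rollout.

```python
STEP = 0.1  # hypothetical constant: how far one unit of action moves the state


def F(s, a):
    """Toy stand-in for a learned world model F_theta (1-D state, 1-D action)."""
    return s + STEP * a


def rollout(s0, actions):
    """Apply F repeatedly: s_T = F(F(... F(s0, a_0) ..., a_{T-2}), a_{T-1})."""
    s = s0
    for a in actions:
        s = F(s, a)
    return s


def terminal_loss(s0, actions, g):
    """Squared distance between the final rolled-out state and the goal."""
    return (rollout(s0, actions) - g) ** 2


def plan_by_gradient_descent(s0, g, T=10, lr=1.0, steps=200):
    """Plain gradient descent on the terminal loss over the action sequence."""
    actions = [0.0] * T
    for _ in range(steps):
        s_T = rollout(s0, actions)
        # For this toy F, d s_T / d a_t = STEP for every t (hand-derived).
        grad = 2.0 * (s_T - g) * STEP
        actions = [a - lr * grad for a in actions]
    return actions


if __name__ == "__main__":
    plan = plan_by_gradient_descent(s0=0.0, g=1.0)
    print("terminal loss:", terminal_loss(0.0, plan, 1.0))
```

The toy problem is convex, so this converges easily; the difficulties discussed next only show up with nonlinear dynamics and long horizons.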

Over short horizons and in low-dimensional systems, this can work reasonably well. But as horizons grow and models become larger and more expressive, its weaknesses become amplified.

So why doesn’t this just work at scale?

Why long-horizon planning is hard (even when everything is differentiable)

There are two separate pain points for general world models, plus a third that is specific to learned, deep learning-based models.

1) Long-horizon rollouts create deep, ill-conditioned computation graphs

Those familiar with backprop through time (BPTT) may notice that we’re differentiating through a model applied to itself repeatedly, which leads to the exploding/vanishing gradients problem. In particular, take derivatives with respect to earlier actions, e.g. $a_0$ (note we’re differentiating vector-valued functions, resulting in Jacobians that we denote with $D_x (\cdots)$):

\[D_{a_0} \mathcal{F}_{\theta}^{T}(s_0, \mathbf{a}) = \Bigl(\prod_{t=1}^{T-1} D_s F_\theta(s_t, a_t)\Bigr) D_{a_0}F_\theta(s_0, a_0).\]

We see that the Jacobian’s conditioning scales exponentially with the horizon $T$:

\[\sigma_{\text{max/min}}(D_{a_0}\mathcal{F}_{\theta}^{T}) \sim \sigma_{\text{max/min}}(D_s F_\theta)^{T-1},\]

leading to exploding or vanishing gradients.
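To see the $T-1$ exponent numerically, here is a small sketch assuming scalar linear dynamics $F(s, a) = \lambda s + a$, which is an illustrative assumption rather than a learned model. In that case the state Jacobian is just $\lambda$ and the action Jacobian is 1, so the sensitivity of $s_T$ to $a_0$ is $\lambda^{T-1}$.

```python
def grad_sT_wrt_a0(lam, T):
    """Chain rule for the toy dynamics F(s, a) = lam * s + a.

    d s_T / d a_0 = (product of T-1 state Jacobians) * (action Jacobian),
    where each state Jacobian is lam and the action Jacobian is 1.
    """
    jac = 1.0  # D_{a_0} F(s_0, a_0) for the toy dynamics
    for _ in range(1, T):  # T-1 factors of D_s F
        jac *= lam
    return jac


if __name__ == "__main__":
    for lam in (0.9, 1.1):
        sensitivities = [grad_sT_wrt_a0(lam, T) for T in (10, 50, 100)]
        print(f"lam={lam}: {sensitivities}")
```

Even a contraction or expansion rate close to 1 per step shrinks or grows the gradient by orders of magnitude over a hundred steps.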

2) The landscape is non-greedy and full of traps

At short horizons, the greedy solution, where we move straight toward the goal at every step, is often good enough. If you only need to plan a few steps ahead, the optimal trajectory usually doesn’t deviate much from “head toward $g$” at each step.

As horizons grow, two things happen. First, longer tasks are more likely to require non-greedy behavior: going around a wall, repositioning before pushing, backing up to take a better path. And as horizons grow, more of these non-greedy steps are typically needed. Second, the optimization space itself scales with horizon: $\mathrm{dim}(\mathcal{A} \times \cdots \times \mathcal{A}) = T\,\mathrm{dim}(\mathcal{A})$, further expanding the space of local minima for the optimization problem.

A long-horizon fix: lifting the dynamics constraint

Suppose we treat the dynamics constraint $s_{t+1} = F_{\theta}(s_t, a_t)$ as a soft constraint, and we instead optimize the following penalty function over both actions $(a_0,\ldots,a_{T-1})$ and states $(s_0,\ldots,s_T)$:

\[\min_{\mathbf{s},\mathbf{a}} \mathcal{L}(\mathbf{s}, \mathbf{a}) = \sum_{t=0}^{T-1} \big\|F_\theta(s_t,a_t) - s_{t+1}\big\|_2^2, \quad \text{with } s_0 \text{ fixed and } s_T=g.\]

This is also sometimes called collocation in the planning/robotics literature. Note the lifted formulation shares the same global minimizers as the original rollout objective (both are zero exactly when the trajectory is dynamically feasible). But the optimization landscapes are very different, and we get two immediate benefits:

- Each world model evaluation $F_{\theta}(s_t,a_t)$ depends only on local variables, so all $T$ terms can be computed in parallel across time, resulting in a big speed-up for longer horizons, and

- You no longer backpropagate through a single deep $T$-step composition to get a learning signal, since the previous product of Jacobians now splits into a sum of local terms, e.g.:

\[D_{a_0} \mathcal{L} = 2\bigl(F_\theta(s_0, a_0) - s_1\bigr)^{\top} D_{a_0} F_\theta(s_0, a_0).\]
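The two benefits can be sketched in a few lines, again assuming toy scalar dynamics $F(s, a) = 0.9\,s + a$ (an illustrative assumption; the helper names are hypothetical). Each residual reads only $(s_t, a_t, s_{t+1})$, so the list of residuals could be evaluated as one batched model call, and the gradient with respect to each action involves only its own residual.

```python
def F(s, a):
    """Toy stand-in for a learned world model; here dF/da = 1."""
    return 0.9 * s + a


def residuals(states, actions):
    """Per-step dynamics violations F(s_t, a_t) - s_{t+1}.

    Every entry depends only on local variables, so with a real model these
    T evaluations can be batched instead of being computed sequentially.
    """
    return [F(states[t], actions[t]) - states[t + 1] for t in range(len(actions))]


def lifted_loss(states, actions):
    """Sum of squared dynamics violations over the whole trajectory."""
    return sum(r * r for r in residuals(states, actions))


def grad_actions(states, actions):
    """Gradient w.r.t. each a_t: 2 * residual_t * dF/da, with dF/da = 1 here."""
    return [2.0 * r * 1.0 for r in residuals(states, actions)]


if __name__ == "__main__":
    states = [0.0, 0.5, 1.0, 2.0]   # s_0 .. s_T (virtual states)
    actions = [0.3, -0.2, 0.1]      # a_0 .. a_{T-1}
    print("loss:", lifted_loss(states, actions))
    print("action gradients:", grad_actions(states, actions))
```

No product over time steps appears anywhere in the gradient, which is what removes the exponential conditioning of the serial rollout.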

Being able to optimize states directly also helps with exploration, as we can temporarily navigate through unphysical domains to find the optimal plan.

However, lunch is never free. And indeed, especially for deep learning-based world models, there is a critical issue that makes the above optimization quite difficult in practice.

An issue for deep learning-based world models: sensitivity of state-input gradients

The tl;dr of this section is: directly optimizing states through a deep learning-based $F_{\theta}$ is incredibly brittle, à la adversarial robustness. Even if you train your world model in a lower-dimensional state space, the training process for the world model makes unseen state landscapes very sharp, whether it’s an unseen state itself or simply a normal/orthogonal direction to the data manifold.

Adversarial robustness and the “dimpled manifold” model

Adversarial robustness originally looked at classification models $f_\theta : \mathbb{R}^{w\times h \times c} \to \mathbb{R}^K$, and showed that by following the gradient of a particular logit $\nabla f_\theta^k$ from a base image $x$ (not of class $k$), you did not have to move far along $x' = x + \epsilon\nabla f_\theta^k$ to make $f_\theta$ classify $x'$ as $k$ (Szegedy et al., 2014; Goodfellow et al., 2015).

Later work has painted a geometric picture of what’s going on: for data near a low-dimensional manifold $\mathcal{M}$, the training process controls behavior in tangential directions, but does not regularize behavior in orthogonal directions, thus leading to sensitive behavior (Stutz et al., 2019). Stated another way: $f_\theta$ has a reasonable Lipschitz constant when considering only tangential directions to the data manifold $\mathcal{M}$, but can have very high Lipschitz constants in normal directions. In fact, it often benefits the model to be sharper in these normal directions, so it can fit more complicated functions more precisely.

As a result, such adversarial examples are incredibly common even for a single given model. Further, this is not just a computer vision phenomenon; adversarial examples also appear in LLMs (Wallace et al., 2019) and in RL (Gleave et al., 2019).

While there are methods to train for more adversarially robust models, there is a known trade-off between model performance and adversarial robustness (Tsipras et al., 2019): especially in the presence of many weakly-correlated variables, the model must be sharper to achieve higher performance. Indeed, most modern training algorithms, whether in computer vision or LLMs, do not train adversarial robustness out. Thus, at least until deep learning sees a major regime change, this is a problem we’re stuck with.

Why is adversarial robustness an issue for world model planning?

Consider a single component of the dynamics loss we’re optimizing in the lifted state approach:

\[\min_{s_t, a_t, s_{t+1}} \|F_\theta(s_t, a_t) - s_{t+1}\|_2^2\]

Let’s further focus on just the base state:

\[\min_{s_t} \|F_\theta(s_t, a_t) - s_{t+1}\|_2^2.\]

Since world models are typically trained on state/action trajectories $(s_1, a_1, s_2, a_2, \ldots)$, the state-data manifold for $F_{\theta}$ has dimensionality bounded by the action space:

\[\mathrm{dim}(\mathcal{M}_s) \le \mathrm{dim}(\mathcal{A}) + 1 + \mathrm{dim}(\mathcal{R}),\]

where $\mathcal{R}$ is some optional space of augmentations (e.g. translations/rotations). Thus, we can typically expect $\mathrm{dim}(\mathcal{M}_s)$ to be much lower than $\mathrm{dim}(\mathcal{S})$, and so it is very easy to find adversarial examples that hack any state to any other desired state.

As a result, the dynamics optimization

\[\sum_{t=0}^{T-1} \big\|F_\theta(s_t,a_t) - s_{t+1}\big\|_2^2\]

feels incredibly “sticky,” as the base points $s_t$ can easily trick $F_{\theta}$ into thinking it has already reached its local goal.¹

1. This adversarial robustness issue, while particularly bad for lifted-state approaches, is not unique to them. Even for serial optimization methods that optimize through the full rollout map $\mathcal{F}^T$, it is possible to get into unseen states, where it is very easy to have a normal component fed into the sensitive normal components of $D_s F_{\theta}$. The action Jacobian’s chain rule expansion is

\[\Bigl(\prod_{t=1}^{T-1} D_s F_\theta(s_t, a_t)\Bigr) D_{a_0}F_\theta(s_0, a_0).\]

See what happens if any stage of the product has any component normal to the data manifold.

Our fix

This is where our new planner GRASP comes in. The main observation: while $D_s F_{\theta}$ is untrustworthy and adversarial, the action space is usually low-dimensional and exhaustively trained, so $D_a F_{\theta}$ is actually reasonable to optimize through and doesn’t suffer from the adversarial robustness issue!

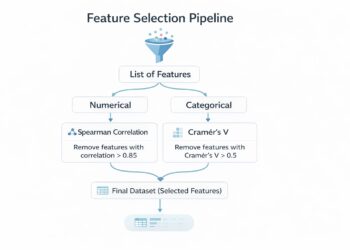

At its core, GRASP builds a first-order lifted state / collocation-based planner that is only dependent on action Jacobians through the world model. We thus exploit the differentiability of learned world models $F_{\theta}$, while not falling victim to the inherent sensitivity of the state Jacobians $D_s F_{\theta}$.

GRASP: Gradient RelAxed Stochastic Planner

As noted before, we start with the collocation planning objective, where we lift the states and relax dynamics into a penalty:

\[\min_{\mathbf{s},\mathbf{a}} \mathcal{L}(\mathbf{s}, \mathbf{a}) = \sum_{t=0}^{T-1} \big\|F_\theta(s_t,a_t) - s_{t+1}\big\|_2^2, \quad \text{with } s_0 \text{ fixed and } s_T=g.\]

We then make two key additions.

Ingredient 1: Exploration by noising the state iterates

Even with a smoother objective, planning is nonconvex. We introduce exploration by injecting Gaussian noise into the virtual state updates during optimization.

A simple version:

\[s_t \leftarrow s_t - \eta_s \nabla_{s_t}\mathcal{L} + \sigma_{\text{state}} \xi, \qquad \xi\sim\mathcal{N}(0,I).\]

Actions are still updated by non-stochastic descent:

\[a_t \leftarrow a_t - \eta_a \nabla_{a_t}\mathcal{L}.\]

The state noise helps you “hop” between basins in the lifted space, while the actions remain guided by gradients. We found that specifically noising states here (as opposed to actions) strikes a good balance between exploration and the ability to find sharper minima.²

2. Because we only noise the states (and not the actions), the corresponding dynamics are not truly Langevin dynamics.
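The two update rules above can be sketched as a single step function. This is a minimal illustration with scalar states and actions; the function name, default step sizes, and the choice to leave the endpoints $s_0$ and $s_T$ untouched (since they are pinned to the start and goal) are assumptions made for the example.

```python
import random


def noisy_lifted_step(states, actions, grad_states, grad_actions,
                      eta_s=0.1, eta_a=0.1, sigma_state=0.05, rng=random):
    """One optimization step: noisy descent on states, plain descent on actions.

    states:       [s_0, ..., s_T], with s_0 (start) and s_T (goal) held fixed
    actions:      [a_0, ..., a_{T-1}]
    grad_states:  gradient of the loss w.r.t. each state (same length as states)
    grad_actions: gradient of the loss w.r.t. each action
    """
    new_states = list(states)
    # Only interior virtual states are free variables, so only they move.
    for t in range(1, len(states) - 1):
        noise = sigma_state * rng.gauss(0.0, 1.0)  # xi ~ N(0, 1)
        new_states[t] = states[t] - eta_s * grad_states[t] + noise
    # Actions follow the gradient with no injected noise.
    new_actions = [a - eta_a * g for a, g in zip(actions, grad_actions)]
    return new_states, new_actions


if __name__ == "__main__":
    rng = random.Random(0)  # seeded generator for reproducible noise
    s, a = noisy_lifted_step([0.0, 1.0, 2.0], [0.5, 0.5],
                             [0.0, 1.0, 0.0], [1.0, -1.0], rng=rng)
    print(s, a)
```

Passing a seeded `random.Random` instance keeps runs reproducible, and setting `sigma_state=0.0` recovers ordinary gradient descent on both states and actions.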

Ingredient 2: Reshape gradients: stop brittle state-input gradients, keep action gradients

As discussed, the sensitive pathway is the gradient that flows into the state input of the world model, $D_s F_{\theta}$. The most straightforward way to handle this is to simply stop state gradients into $F_{\theta}$ directly:

- Let $\bar{s}_t$ be the same value as $s_t$, but with gradients stopped.

Define the stop-gradient dynamics loss:

\[\mathcal{L}_{\text{dyn}}^{\text{sg}}(\mathbf{s},\mathbf{a}) = \sum_{t=0}^{T-1} \big\|F_\theta(\bar{s}_t, a_t) - s_{t+1}\big\|_2^2.\]

This alone does not work. Notice that states now only follow the previous state’s step, without anything forcing the base states to chase the next ones. As a result, there are trivial minima where the trajectory simply stays at the origin, leaving only the final action to try to reach the goal in a single step.
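To make the failure concrete, the gradients of the stop-gradient dynamics loss can be written out directly. This is a sketch that treats $\bar{s}_t$ as a constant when differentiating, for an interior state $s_{t+1}$ (with $1 \le t+1 \le T-1$) and an action $a_t$:

\[\nabla_{s_{t+1}} \mathcal{L}_{\text{dyn}}^{\text{sg}} = -2\bigl(F_\theta(\bar{s}_t, a_t) - s_{t+1}\bigr), \qquad \nabla_{a_t} \mathcal{L}_{\text{dyn}}^{\text{sg}} = 2\, D_a F_\theta(\bar{s}_t, a_t)^{\top} \bigl(F_\theta(\bar{s}_t, a_t) - s_{t+1}\bigr).\]

Each state is only pulled toward the prediction made from the previous state, and no term pushes $s_t$ toward $s_{t+1}$. The goal enters only through the last residual, $F_\theta(\bar{s}_{T-1}, a_{T-1}) - g$, so only $a_{T-1}$ ever receives a goal signal.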

Dense goal shaping

We can view the above issue as the goal’s signal being cut off entirely from earlier states. One way to fix this is to simply add a dense goal term throughout prediction:

\[\mathcal{L}_{\text{goal}}^{\text{sg}}(\mathbf{s},\mathbf{a}) = \sum_{t=0}^{T-1} \big\|F_\theta(\bar{s}_t, a_t) - g\big\|_2^2.\]

In normal settings this would over-bias towards the greedy solution of straight chasing the goal, but this is balanced in our setting by the stop-gradient dynamics loss’s bias towards feasible dynamics. The final objective is then as follows:

\[\mathcal{L}(\mathbf{s},\mathbf{a}) = \mathcal{L}_{\text{dyn}}^{\text{sg}}(\mathbf{s},\mathbf{a}) + \gamma \, \mathcal{L}_{\text{goal}}^{\text{sg}}(\mathbf{s},\mathbf{a}).\]

The result is a planning optimization objective that does not depend on state gradients.
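The gradients of this combined objective can be sketched by hand for a toy model, assuming scalar dynamics $F(s, a) = s + 0.1\,a$ (an illustrative assumption; the names `grasp_gradients` and `DF_DA` are hypothetical). In an autodiff framework the same effect would come from wrapping the state input in a stop-gradient, for example `jax.lax.stop_gradient` in JAX or `.detach()` in PyTorch, rather than from hand-written derivatives.

```python
DF_DA = 0.1  # action Jacobian of the toy dynamics below


def F(s, a):
    """Toy stand-in for a learned world model."""
    return s + DF_DA * a


def grasp_gradients(states, actions, g, gamma=0.1):
    """Hand-derived gradients of L_dyn^sg + gamma * L_goal^sg.

    The state fed into F is treated as a constant (stop-gradient), so no
    derivative of F with respect to its state input is ever used.
    """
    T = len(actions)
    preds = [F(states[t], actions[t]) for t in range(T)]
    # Actions see both the dynamics residual and the dense goal residual,
    # each through the action Jacobian only.
    grad_a = [
        2.0 * DF_DA * ((preds[t] - states[t + 1]) + gamma * (preds[t] - g))
        for t in range(T)
    ]
    # Interior states only appear as regression targets s_{t+1}; the
    # endpoints s_0 and s_T = g are fixed and get zero gradient.
    grad_s = [0.0] * (T + 1)
    for t in range(T - 1):
        grad_s[t + 1] = -2.0 * (preds[t] - states[t + 1])
    return grad_s, grad_a


if __name__ == "__main__":
    states = [0.0, 0.2, 0.5, 1.0]  # s_0 .. s_T with s_T = g
    actions = [1.0, 2.0, 3.0]
    print(grasp_gradients(states, actions, g=1.0, gamma=0.5))
```

Every action now receives a goal signal through the dense term, while the dynamics term keeps consecutive virtual states consistent with the model.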

Periodic “sync”: briefly return to true rollout gradients

The lifted stop-gradient objective is great for fast, guided exploration, but it’s still an approximation of the original serial rollout objective.

So every $K_{\text{sync}}$ iterations, GRASP does a short refinement phase:

- Roll out from $s_0$ using current actions $\mathbf{a}$, and take a few small gradient steps on the original serial loss:

\[\mathbf{a} \leftarrow \mathbf{a} - \eta_{\text{sync}}\,\nabla_{\mathbf{a}}\,\|s_T(\mathbf{a})-g\|_2^2.\]

The lifted-state optimization still provides the core of the optimization, while this refinement step adds some assistance to keep states and actions grounded in real trajectories. This refinement step can of course be replaced with a serial planner of your choice (e.g. CEM); the core idea is to still get some of the benefit of the full-path synchronization of serial planners, while still mostly using the benefits of lifted-state planning.
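A sketch of the sync phase, assuming toy linear dynamics $F(s, a) = \lambda s + b\,a$ so that the rollout gradient can be written by hand. The constants, function names, and the scheduling helper `maybe_sync` are illustrative assumptions; a real implementation would backpropagate through the learned model's rollout.

```python
LAM, B = 0.95, 0.1  # hypothetical toy dynamics coefficients


def F(s, a):
    """Toy stand-in for a learned world model."""
    return LAM * s + B * a


def rollout(s0, actions):
    """Serial rollout from s0 under the given actions; returns s_T."""
    s = s0
    for a in actions:
        s = F(s, a)
    return s


def sync_refine(s0, actions, g, eta_sync=0.5, n_steps=3):
    """A few gradient steps on the original serial loss |s_T(a) - g|^2."""
    T = len(actions)
    actions = list(actions)
    for _ in range(n_steps):
        err = rollout(s0, actions) - g
        # Chain rule for the toy dynamics: d s_T / d a_t = B * LAM**(T-1-t).
        grads = [2.0 * err * B * LAM ** (T - 1 - t) for t in range(T)]
        actions = [a - eta_sync * gr for a, gr in zip(actions, grads)]
    return actions


def maybe_sync(iteration, s0, actions, g, k_sync=10):
    """Run the refinement phase every k_sync iterations, otherwise no-op."""
    if iteration > 0 and iteration % k_sync == 0:
        return sync_refine(s0, actions, g)
    return actions


if __name__ == "__main__":
    acts = [0.0] * 5
    print("before:", (rollout(0.0, acts) - 1.0) ** 2)
    acts = maybe_sync(10, 0.0, acts, 1.0)
    print("after: ", (rollout(0.0, acts) - 1.0) ** 2)
```

In an outer loop, `maybe_sync` would be called once per lifted-state iteration, so most iterations stay parallel across time and only the occasional sync pays for a serial rollout.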

How GRASP addresses long-range planning

Collocation-based planners offer a natural fix for long-horizon planning, but this optimization is quite difficult through modern world models due to adversarial robustness issues. GRASP proposes a simple solution for a smoother collocation-based planner, alongside stable stochasticity for exploration. As a result, longer-horizon planning ends up not only succeeding more, but also finding such successes faster:

| Horizon | CEM | GD | LatCo | GRASP |
|---|---|---|---|---|
| H=40 | 61.4% / 35.3s | 51.0% / 18.0s | 15.0% / 598.0s | 59.0% / 8.5s |
| H=50 | 30.2% / 96.2s | 37.6% / 76.3s | 4.2% / 1114.7s | 43.4% / 15.2s |
| H=60 | 7.2% / 83.1s | 16.4% / 146.5s | 2.0% / 231.5s | 26.2% / 49.1s |
| H=70 | 7.8% / 156.1s | 12.0% / 103.1s | 0.0% / — | 16.0% / 79.9s |
| H=80 | 2.8% / 132.2s | 6.4% / 161.3s | 0.0% / — | 10.4% / 58.9s |

Push-T results. Success rate (%) / median time to success. Bold = best in row. Note the median success time will bias higher with higher success rate; GRASP manages to be faster despite higher success rate.

What’s next?

There is still plenty of work to be done for modern world model planners. We want to exploit the gradient structure of learned world models, and collocation (lifted-state optimization) is a natural approach for long-horizon planning, but it’s crucial to understand the typical gradient structure here: smooth and informative action gradients and brittle state gradients. We view GRASP as an initial iteration for such planners.

Extension to diffusion-based world models (deeper latent timesteps can be viewed as smoothed versions of the world model itself), more sophisticated optimizers and noising strategies, and integrating GRASP into either a closed-loop system or RL policy learning for adaptive long-horizon planning are all natural and interesting next steps.

I do genuinely think it’s an exciting time to be working on world model planners. It’s a funny sweet spot where the background literature (planning and control overall) is incredibly mature and well-developed, but the current setting (pure planning optimization over modern, large-scale world models) is still heavily underexplored. But, once we figure out all the right ideas, world model planners will likely become as commonplace as RL.

For more details, read the full paper or visit the project website.

Citation

@article{psenka2026grasp,
  title={Parallel Stochastic Gradient-Based Planning for World Models},
  author={Michael Psenka and Michael Rabbat and Aditi Krishnapriyan and Yann LeCun and Amir Bar},
  year={2026},
  eprint={2602.00475},
  archivePrefix={arXiv},
  primaryClass={cs.LG},
  url={https://arxiv.org/abs/2602.00475}
}