In my earlier article, I introduced Proxy-Pointer RAG, a retrieval architecture that embeds document structure directly into a vector index, achieving the surgical precision of “Vectorless RAG” systems like PageIndex without their scalability and cost penalties. That article laid the foundation: the why, the how, and a promising 10-query comparison on a single World Bank report.

Although a useful proof of concept, that did not demonstrate production readiness. This article aims to address that.

In an enterprise, the vast majority of documents to which RAG is applied (technical manuals, research papers, legal contracts, policy reports, annual filings, compliance documents) have section headings. That makes them structured. And that structure encapsulates meaning: it mirrors how a human comprehends and searches a complex document, by organizing the section flow in the mind. Standard vector RAG throws that structure away when it shreds a document into a flat bag of chunks, which leads to sub-optimal responses. Proxy-Pointer instead exploits that ubiquitous structure to dramatically improve retrieval accuracy at minimal additional cost.

To stress-test the architecture, we needed the most demanding structured documents we could find: the kind where a single misplaced decimal point or a missed footnote can invalidate an entire analysis. That means financial filings. 10-K annual reports are deeply nested, cross-referenced across multiple financial statements, and demand precise numerical reasoning. If Proxy-Pointer can handle these, it can handle anything with headings.

This article provides evidence to support Proxy-Pointer’s capability. I took four publicly available FY2022 10-K filings, AMD (121 pages), American Express (260 pages), Boeing (190 pages), and PepsiCo (500 pages), and tested Proxy-Pointer on 66 questions across two distinct benchmarks, including adversarial queries specifically designed to break naive retrieval systems. The results were decisive and are summarized below.

Also in this article, I am open-sourcing the complete pipeline so that you can run it on your own documents, reproduce the results, and push it further.

Quick Recap: What Is Proxy-Pointer?

Standard vector RAG splits documents into blind chunks, embeds them, and retrieves the top-K by cosine similarity. The synthesizer LLM sees fragmented, context-less text, and frequently hallucinates or misses the answer entirely.

In the previous article, Proxy-Pointer fixed this with five zero-cost engineering techniques:

- Skeleton Tree: Parse Markdown headings into a hierarchical tree (pure Python, no LLM needed)

- Breadcrumb Injection: Prepend the full structural path (AMD > Financial Statements > Cash Flows) to every chunk before embedding

- Structure-Guided Chunking: Split text within section boundaries, never across them

- Noise Filtering: Remove distracting sections (TOC, glossary, executive summaries) from the index

- Pointer-Based Context: Use retrieved chunks as pointers to load the full, unbroken document section for the synthesizer

The result: every chunk knows where it lives in the document, and the synthesizer sees full sections, not fragments.
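To make the skeleton tree, breadcrumb, and pointer ideas concrete, here is a minimal sketch. It is not the repository's tree builder: the function names `build_skeleton` and `load_section`, the four-digit `node_id` format, and the node fields are assumptions made for illustration. It also ignores edge cases such as `#` characters inside fenced code.

```python
import re

# ATX-style Markdown heading: one to six '#' characters, then the title.
HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def build_skeleton(markdown: str, doc_title: str) -> list[dict]:
    """Return one node per heading, with its breadcrumb and line range."""
    lines = markdown.splitlines()
    nodes = []
    stack = []  # (level, title) of the headings currently open above us
    for i, line in enumerate(lines):
        m = HEADING.match(line)
        if not m:
            continue
        level, title = len(m.group(1)), m.group(2)
        # Close any heading at the same or a deeper level.
        while stack and stack[-1][0] >= level:
            stack.pop()
        stack.append((level, title))
        nodes.append({
            "node_id": f"{len(nodes):04d}",  # hypothetical ID scheme
            "level": level,
            "breadcrumb": " > ".join([doc_title] + [t for _, t in stack]),
            "start": i,
            "end": len(lines),  # provisional; tightened below
        })
    # A section ends where the next heading of equal or higher rank starts.
    for idx, node in enumerate(nodes):
        for later in nodes[idx + 1:]:
            if later["level"] <= node["level"]:
                node["end"] = later["start"]
                break
    return nodes


def load_section(markdown: str, node: dict) -> str:
    """Pointer step: a retrieved node loads its full, unbroken section."""
    return "\n".join(markdown.splitlines()[node["start"]:node["end"]])


SAMPLE = "\n".join([
    "# Financial Statements",
    "Intro text.",
    "## Balance Sheet",
    "Assets and liabilities.",
    "## Cash Flows",
    "Operating, investing, financing.",
    "# Risk Factors",
    "Risks.",
])

nodes = build_skeleton(SAMPLE, "AMD")
print(nodes[2]["breadcrumb"])  # AMD > Financial Statements > Cash Flows
print(load_section(SAMPLE, nodes[2]))
```

In this sketch the breadcrumb string is what would be prepended to each chunk before embedding, and the `start`/`end` line range is what lets a retrieved chunk act as a pointer back to its whole section.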

Refinements Since the First Article

Several significant improvements were made to the pipeline before benchmarking. They are outlined below.

Indexing Pipeline Changes

Standalone Architecture. The original implementation relied on PageIndex as a dependency for skeleton tree generation. This has been completely removed. Proxy-Pointer now ships a self-contained, ~150-line pure-Python tree builder that parses Markdown headings into a hierarchical JSON structure: zero external dependencies, zero LLM calls, runs in milliseconds.

LLM-Powered Noise Filter. The first version used a hardcoded list of noise titles (NOISE_TITLES = {"contents", "foreword", ...}). This broke on variations like “Note of Thanks” vs. “Acknowledgments” or “TOC” vs. “TABLE OF CONTENTS.” The new pipeline sends the lightweight skeleton tree to gemini-flash-lite and asks it to identify noise nodes across six categories. This catches semantic equivalents that regex could not.
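The brittleness of the hardcoded list is easy to reproduce. The sketch below is illustrative only: `NOISE_TITLES` contains just the two entries quoted above (the elided ones are left out), `is_noise_exact` and `prune` are hypothetical helper names, and the set of flagged IDs is written by hand as a stand-in for the model's reply, since the actual LLM call and prompt are not shown here.

```python
NOISE_TITLES = {"contents", "foreword"}  # only the two entries quoted above


def is_noise_exact(title: str) -> bool:
    """First-version behaviour: exact match against a fixed set."""
    return title.strip().lower() in NOISE_TITLES


def prune(nodes: list[dict], noise_ids: set[str]) -> list[dict]:
    """Second-version behaviour: drop whichever node IDs were flagged."""
    return [n for n in nodes if n["node_id"] not in noise_ids]


skeleton = [
    {"node_id": "0000", "title": "TABLE OF CONTENTS"},
    {"node_id": "0001", "title": "Note of Thanks"},
    {"node_id": "0002", "title": "Cash Flows"},
]

# Exact matching lets both noise titles through alongside the real section.
passed = [n["title"] for n in skeleton if not is_noise_exact(n["title"])]
print(passed)

# Hand-written stand-in for the IDs an LLM would return for this skeleton.
llm_flagged = {"0000", "0001"}
kept = prune(skeleton, llm_flagged)
print([n["title"] for n in kept])  # ['Cash Flows']
```

Because only titles and IDs are sent, the payload stays small regardless of how long the underlying sections are.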

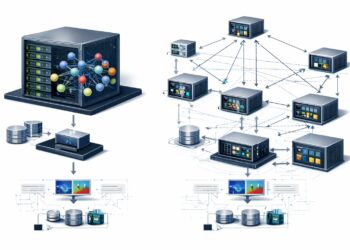

The updated pipeline is as follows:

Retrieval Pipeline Changes

Two-Stage Retrieval: Semantic + LLM Re-Ranker. The first article used a simple top-K retrieval from FAISS. The refined pipeline now operates in two stages:

- Stage 1 (Broad Recall): FAISS returns the top 200 chunks by embedding similarity, which are deduplicated by (doc_id, node_id) and shortlisted to 50 unique candidate nodes.

- Stage 2 (Structural Re-Ranking): The hierarchical breadcrumb paths of all 50 candidates are sent to a Gemini LLM, which re-ranks them by structural relevance, not embedding similarity, and returns the top 5. This is the key differentiator: a query about “AMD’s cash flow” now correctly prioritizes AMD > Financial Statements > Cash Flows over a paragraph that merely mentions cash flow.
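The deduplication in Stage 1 can be sketched in a few lines. This is an assumed shape, not the repository's code: the `shortlist` function and the `(doc_id, node_id, score)` tuple layout are hypothetical, and the FAISS search and the Stage 2 LLM call are omitted.

```python
def shortlist(hits, max_nodes=50):
    """Collapse chunk-level hits into unique nodes, keeping rank order.

    `hits` is an iterable of (doc_id, node_id, score) tuples ordered
    best-first, e.g. the top 200 chunks returned by the vector index.
    """
    best = {}  # dicts preserve insertion order, so rank order survives
    for doc_id, node_id, score in hits:
        key = (doc_id, node_id)
        if key not in best:
            best[key] = score  # first hit for a node is its best score
            if len(best) == max_nodes:
                break
    return list(best.items())


hits = [
    ("amd", "0080", 0.91),
    ("amd", "0080", 0.90),  # second chunk from the same node: dropped
    ("amd", "0012", 0.85),
    ("boeing", "0107", 0.80),
]
candidates = shortlist(hits, max_nodes=2)
print(candidates)  # [(('amd', '0080'), 0.91), (('amd', '0012'), 0.85)]
```

Deduplicating by node rather than by chunk matters because several chunks from one long section would otherwise crowd out other sections before the re-ranker ever sees them.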

Here is the updated pipeline:

These refinements transformed Proxy-Pointer from a promising prototype into a production-grade retrieval engine.

Benchmarking: Two Tests, 66 Questions, Four Companies

To rigorously evaluate the pipeline, I downloaded the FY2022 10-K annual filings of AMD, American Express (AMEX), Boeing, and PepsiCo. These were extracted to Markdown using LlamaParse and indexed through the Proxy-Pointer pipeline.

I then tested against two distinct benchmarks:

Benchmark 1: FinanceBench (26 Questions)

FinanceBench is an established benchmark of qualitative and quantitative questions across financial filings. I selected all 26 questions spanning the four companies in the dataset, covering numerical reasoning, information extraction, and logical reasoning. Due to dataset licensing restrictions, the FinanceBench questions and ground truth answers are not included in the GitHub repository. However, the scorecards with Proxy-Pointer responses are provided for reference.

To ensure reproducibility, the repository includes a benchmark.py script that lets you run the full evaluation yourself on FinanceBench (or any custom dataset), producing both detailed logs and scorecards using the same pipeline.

Benchmark 2: Comprehensive Stress Test (40 Questions)

FinanceBench, while useful, primarily tests factual recall. I needed something harder: queries that would break a system relying on surface-level chunk matching. So I created 40 custom questions, 10 per company, specifically designed to stress-test numerical reasoning, multi-hop retrieval, adversarial robustness, and cross-statement reconciliation.

The full Q&A logs from the bot, and the scorecards comparing its answers with the ground truth, are included in the GitHub repository.

Here are five examples that illustrate the complexity:

Multi-hop Numerical (AMEX): “Calculate the percentage of net interest income to total revenues net of interest expense for 2022 and compare it to 2021. Did dependence increase?”

This requires locating two different line items across two fiscal years, computing ratios for each, and then comparing them: a three-step reasoning chain that demands precise retrieval of the income statement.

Adversarial Numerical (AMD): “Estimate whether inventory buildup contributed significantly to cash flow decline in FY2022.”

This is deliberately adversarial: it presupposes that cash flow declined (which it did, marginally) and requires the model to quantify a balance sheet item’s impact on a cash flow statement metric. A naive retriever would fetch balance sheet data but miss the cash flow context.

Reinvestment Rate (PepsiCo): “Calculate the reinvestment rate defined as Capex divided by (Operating Cash Flow minus Dividends).”

This requires pulling three distinct figures from the cash flow statement, performing a non-standard calculation that is not reported anywhere in the 10-K, and arriving at a precise ratio (1.123).

Cash Flow Quality (Boeing): “What percentage of operating cash flow in FY2022 was consumed by changes in working capital?”

The answer here is counterintuitive: 0%, because working capital was actually a source of cash (+$4,139M vs. OCF of $3,512M), contributing 118% of operating cash flow. Any system that retrieves the wrong section or misinterprets the sign will fail.

Attribution (AMEX): “Estimate how much of total revenue growth is attributable to discount revenue increase.”

This requires computing two deltas (discount revenue change and total revenue change), then expressing one as a percentage of the other, a calculation that appears nowhere in the filing itself.

Every question has a pre-computed ground truth answer with specific numerical values, making evaluation unambiguous.

Results

k=5 Configuration (Primary)

In this configuration, the retriever selects a set of 5 nodes (k_final = 5), and the corresponding sections are sent to the synthesizer LLM for a response.

| Benchmark | Score | Accuracy |
|---|---|---|
| FinanceBench (26 questions) | 26 / 26 | 100% |
| Comprehensive (40 questions) | 40 / 40 | 100% |
| Total | 66 / 66 | 100% |

A perfect score across all 66 questions. Every numerical value matched the ground truth. Every qualitative assessment aligned with the filing data.

To illustrate, here are two actual bot responses from the benchmark run, showing the retrieval path and the synthesized answer for queries cited in the previous section:

PepsiCo: Reinvestment Rate. “Calculate the reinvestment rate defined as Capex divided by (Operating Cash Flow minus Dividends).”

The bot retrieved Consolidated Statement of Cash Flows (ID: 0080) and Consolidated Statement of Cash Flows (continued) (ID: 0081), then computed:

Capital spending: $5,207M / (Operating Cash Flow: $10,811M − Dividends: $6,172M) = 5,207 / 4,639 = 112.24% (i.e., 1.1224)

Ground truth: 1.123. ✅ Match. But the bot went further: unprompted, it computed the same ratio for FY2021 (79.73%) and FY2020 (83.07%), surfacing a clear trend of increasing reinvestment that was not even asked for.
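The arithmetic in the bot's response is simple to re-derive from the three figures it quotes. The snippet below is only a check of that calculation, with variable names chosen for illustration.

```python
# Figures quoted in the bot's response, in $ millions.
capex = 5_207
operating_cash_flow = 10_811
dividends = 6_172

cash_after_dividends = operating_cash_flow - dividends
reinvestment_rate = capex / cash_after_dividends

print(cash_after_dividends)        # 4639
print(f"{reinvestment_rate:.4f}")  # 1.1224
print(f"{reinvestment_rate:.2%}")  # 112.24%
```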

Boeing: Cash Flow Quality. “What percentage of operating cash flow in FY2022 was consumed by changes in working capital?”

The bot retrieved Boeing > Liquidity and Capital Resources > Cash Flow Summary (ID: 0082) and Consolidated Statements of Cash Flows (ID: 0107), then responded:

Changes in working capital provided $4,139M in cash, while net operating cash flow was $3,512M. Because the change in working capital was a source of cash rather than a consumption, it did not consume any percentage of OCF.

Ground truth: 0% consumed; working capital was a source of cash. ✅ Match. This is a deliberately counterintuitive question: most systems would force a percentage rather than recognizing the logical inversion. The structural retrieval ensured the bot had the full cash flow statement, and the synthesizer correctly interpreted the sign.
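The sign logic behind this answer can be written down explicitly. The sketch below is an illustration of the reasoning, not code from the pipeline: `pct_ocf_consumed` is a hypothetical helper, and it assumes operating cash flow is positive and that a positive working capital change means cash was provided.

```python
def pct_ocf_consumed(working_capital_change: float, ocf: float) -> float:
    """Percentage of operating cash flow consumed by working capital.

    A positive change means working capital provided cash, so nothing
    was consumed. Assumes ocf > 0.
    """
    if working_capital_change >= 0:
        return 0.0
    return -working_capital_change / ocf * 100


# Boeing FY2022 figures quoted above, in $ millions.
wc_change, ocf = 4_139, 3_512

print(pct_ocf_consumed(wc_change, ocf))  # 0.0
print(round(wc_change / ocf * 100))      # 118 (contribution, not consumption)
```

A system that skips the sign check and divides directly would report 118% "consumed", which is exactly the inversion the question is designed to expose.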

k=3 Configuration (Stress Test)

To understand the system’s failure boundaries, I re-ran both benchmarks with k_final=3, retrieving only 3 document sections instead of 5. This deliberately constrains the context window to test whether the retrieval precision is robust enough to work with fewer nodes.

| Benchmark | Score | Accuracy |
|---|---|---|
| FinanceBench (26 questions) | 25 / 26 | 96.2% |
| Comprehensive (40 questions) | 37 / 40 | 92.5% |
| Total | 62 / 66 | 93.9% |
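For readers reproducing the scorecard, the percentages follow directly from the counts in the table. Note that the total is pooled over all 66 questions rather than being an average of the two benchmark percentages. The dictionary layout below is just an illustration.

```python
# (correct, total) per benchmark at k_final = 3, taken from the table.
results = {"FinanceBench": (25, 26), "Comprehensive": (37, 40)}

correct = sum(c for c, _ in results.values())
total = sum(t for _, t in results.values())

for name, (c, t) in results.items():
    print(f"{name}: {c}/{t} = {c / t:.1%}")
print(f"Total: {correct}/{total} = {correct / total:.1%}")
```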

The failures in the k=3 run were insightful and offer more perspective than the perfect k=5 scores:

- FinanceBench: One question, for AMD, suffered a calculation hallucination: the model retrieved the correct inputs ($9,981M / $6,369M) but computed the division incorrectly (outputting 1.78 instead of 1.57). The retrieval was correct; the LLM’s arithmetic was not. This is not a retrieval failure but a synthesis failure, and a larger LLM (than the flash-lite used here) likely would not have made the error.

- Comprehensive (AMEX Q7): The query “Did provisions for credit losses increase faster than total revenue?” requires both the provisions line and the total revenue line from the income statement. With k=3, the ranker prioritized the credit loss notes over the revenue summary, leaving the synthesizer without the denominator for its comparison.

- Comprehensive (AMEX Q10): Operating leverage analysis requires comparing revenue growth and expense growth side by side. At k=3, the expense breakdowns were excluded.

- Comprehensive (PepsiCo Q9): “What percentage of Russia-Ukraine charges were attributable to intangible asset impairments?” requires a specific footnote that, at k=3, was displaced by higher-ranking cash flow nodes.
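The AMD failure in the first bullet is the kind of error that can be caught by recomputing the ratio outside the LLM. The sketch below is one possible safeguard, not something the pipeline is stated to do; `ratio_matches` and its tolerance are assumptions for illustration.

```python
def ratio_matches(numerator: float, denominator: float,
                  reported: float, tol: float = 0.01) -> bool:
    """Recompute a ratio and compare it with the value the model reported."""
    return abs(numerator / denominator - reported) <= tol


# Inputs the model retrieved for the AMD question, in $ millions.
num, den = 9_981, 6_369

print(round(num / den, 2))            # 1.57
print(ratio_matches(num, den, 1.78))  # False: the hallucinated value
print(ratio_matches(num, den, 1.57))  # True
```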

The pattern is consistent: apart from the AMD arithmetic slip, every k=3 failure was caused by insufficient context coverage, not incorrect retrieval. The ranker chose the right primary sections; it simply did not have room for the secondary ones that complex reconciliation queries demand.

This confirms an important architectural insight: when questions require cross-referencing multiple parts of financial statements, k=5 provides the necessary coverage, while k=3 introduces retrieval gaps for the most complex reconciliations. For most practical applications, where the majority of queries target a single section or statement, k=3 would be perfectly adequate and faster.

What the Scorecards Don’t Show

Beyond the raw numbers, the benchmark revealed qualitative strengths worth highlighting:

Source Grounding. Every response cited specific sources using their structural breadcrumbs (e.g., AMD > Financial Condition > Liquidity and Capital Resources). An analyst receiving these answers can trace them directly to the filing section, creating an audit trail.

Adversarial Robustness. When asked about crypto revenue at AMEX (which does not exist), the system correctly returned “No evidence” rather than hallucinating a figure. When asked about Boeing’s Debt/Equity ratio (which is mathematically undefined due to negative equity), it explained why the metric is not meaningful rather than forcing a number. These are the queries that trip up systems with poor retrieval: they surface plausible-looking but irrelevant context, and the LLM invents an answer.

Outperforming Ground Truth. In several cases, the bot’s answer was arguably better than our pre-computed ground truth. Boeing’s backlog change was estimated as “mid-single digit %” in the ground truth, but the bot computed the precise figure: +7.12%. AMD’s inventory impact was ground-truthed as “$1B+ drag,” but the bot identified the specific $1.4B buildup. These are not errors; they are improvements made possible because the synthesizer saw the full, unedited section text, not a truncated chunk.

For detailed question-by-question results, comparison logs, and traffic-light scorecards, refer to the open-source repo mentioned below.

Open-Source Repository

Proxy-Pointer is now fully open-source (MIT License) and can be accessed at the Proxy-Pointer GitHub repository.

It’s designed for a 5-minute quickstart:

Proxy-Pointer/
├── src/
│   ├── config.py                    # Centralized configuration
│   ├── extraction/                  # PDF → Markdown (LlamaParse)
│   ├── indexing/
│   │   ├── build_skeleton_trees.py  # Pure-Python tree builder
│   │   └── build_pp_index.py        # Noise filter + chunking + FAISS
│   └── agent/
│       ├── pp_rag_bot.py            # Interactive RAG bot
│       └── benchmark.py             # Automated benchmarking with LLM-as-a-judge
├── data/
│   ├── pdf/                         # 4 FinanceBench 10-Ks included
│   ├── documents/                   # Pre-extracted AMD Markdown (ready to index)
│   └── Benchmark/                   # Full scorecards and comparison logs

What’s included out of the box

- A pre-extracted Markdown file for AMD’s FY2022 10-K: just configure your Gemini API key, build the index, and start querying in under 5 minutes.

- Three additional 10-K PDFs (AMEX, Boeing, PepsiCo) for users who want to expand their corpus.

- Scorecards for both benchmarks and full question-answer logs for the 40 questions of the Comprehensive benchmark.

- An automated benchmarking script with LLM-as-a-judge evaluation: bring your own Excel file with questions and ground truths, and the system generates timestamped scorecards automatically.

The entire pipeline runs on a single Gemini API key using gemini-flash-lite, the most cost-effective model in Google’s lineup. No GPU required. No complex infrastructure, and no token-hungry, expensive indexing tree to build.

Just clone, configure, index, query.

Conclusion

When I published the first article on Proxy-Pointer, it was an interesting hypothesis: you don’t need expensive LLM-navigated trees to make retrieval structurally aware; you just need to be clever about what you embed. The evidence was a 10-query comparison on a single document.

This article moves beyond hypothesis to proof.

66 questions. Four Fortune 500 companies. 100% accuracy at k=5. Multi-hop numerical reasoning, cross-statement reconciliation, adversarial edge cases, and counterintuitive financial metrics: Proxy-Pointer handled all of them. And when we deliberately starved it of context at k=3, it still delivered 93.9% accuracy, failing almost exclusively when complex queries genuinely required more than three document sections.

Here is what we achieved, and why it matters for production RAG systems:

- One architecture, all document types. Enterprises no longer need to maintain two separate retrieval pathways: Vectorless for complex, structured, high-value documents (financial filings, legal contracts, research reports) and the proven Vector RAG for routine knowledge bases. Proxy-Pointer handles both within a single, unified vector RAG pipeline. If a document has structural headings, the system exploits them. If it doesn’t, it degrades gracefully to standard chunking. No routing logic, no special cases.

- Full vector RAG scalability at the same price point. Tree-based “Vectorless” approaches require expensive LLM calls during indexing (one summary per node for all nodes in a document) and during retrieval (LLM tree navigation per query). Proxy-Pointer eliminates both. The skeleton tree is an extremely lightweight structure built with pure Python in milliseconds. Indexing uses only an embedding model, identical to standard vector RAG. The only LLM calls are the noise filter (once per document at indexing) and, at query time, the re-ranker and the synthesizer. The re-ranker takes only 50 breadcrumbs (tree paths) and can work well with even half that number.

- Budget-friendly models, premium results. The entire benchmark was run using gemini-embedding-001 at half its default dimensionality (1536 instead of 3072) to optimize vector database storage, and gemini-flash-lite, the most cost-efficient model in Google’s lineup, for noise filtering, re-ranking, and synthesis. No GPT-5, no Claude Opus, no fine-tuned models. The architecture compensates for model simplicity by delivering better context to the synthesizer.

- Transparent, auditable, explainable. Every response comes with a structural trace: which nodes were retrieved, their hierarchical breadcrumbs, and the exact line ranges in the source document. An analyst can verify any answer by opening the Markdown file and reading the cited section. This is not a black-box system; it is a glass-box retrieval engine.

- Open-source and immediately usable. The complete pipeline, from PDF extraction to benchmarking, is available as a single repository with a 5-minute quickstart. Clone, configure an LLM API key, build the index, and start querying. No GPU, no Docker, no complex infrastructure.

If your retrieval system is struggling with complex, structured documents, the problem is probably not your embedding model. It is that your index has no idea where anything lives in the document. Give it structure, and the accuracy follows.

Clone the repo. Try your own documents. Let me know your thoughts.

Connect with me and share your comments at www.linkedin.com/in/partha-sarkar-lets-talk-AI

All documents used in this benchmark are publicly available FY2022 10-K filings at SEC.gov. Code and benchmark results are open-source under the MIT License. Images used in this article were generated using Google Gemini.