This article is the first of three parts. Each part stands on its own, so you don't need to read the others to understand it.

The dot product is one of the most fundamental operations in machine learning, but it's hard to understand without the right geometric foundations. In this first part, we build those foundations:

· Unit vectors

· Scalar projection

· Vector projection

Whether you're a student learning Linear Algebra for the first time or want to refresh these concepts, I recommend you read this article.

In fact, we'll introduce and explain the dot product in this article, and in the next article, we'll explore it in greater depth.

The vector projection section is included as an optional bonus: helpful, but not essential for understanding the dot product.

The next part explores the dot product in greater depth: its geometric meaning, its relationship to cosine similarity, and why the difference matters.

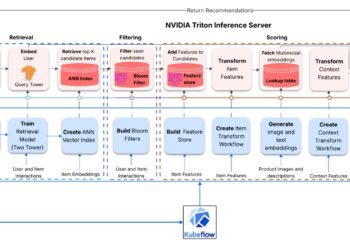

The final part connects these ideas to two major applications: recommendation systems and NLP.

A vector $\mathbf{u}$ is called a unit vector if its magnitude is 1:

$$\|\mathbf{u}\| = 1$$

To remove the magnitude of a non-zero vector $\mathbf{a}$ while keeping its direction, we can normalize it. Normalization scales the vector by the factor:

$$\frac{1}{\|\mathbf{a}\|}$$

The normalized vector is the unit vector in the direction of $\mathbf{a}$:

$$\hat{\mathbf{a}} = \frac{\mathbf{a}}{\|\mathbf{a}\|}$$

Notation 1. From now on, whenever we normalize a vector $\mathbf{a}$, or write $\hat{\mathbf{a}}$, we assume that $\mathbf{a} \neq \mathbf{0}$. This notation, along with the ones that follow, will also be relevant to the next articles.

This operation naturally separates a vector into its magnitude and its direction:

$$\mathbf{a} = \|\mathbf{a}\|\,\hat{\mathbf{a}}$$

Figure 1 illustrates this idea: $\mathbf{a}$ and $\hat{\mathbf{a}}$ point in the same direction but have different magnitudes.
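As a minimal sketch in Python (plain lists, standard library only; the helper names `norm` and `normalize` are our own, not from any library), normalization divides each component by the magnitude, and multiplying back by the magnitude rebuilds the original vector:

```python
import math

def norm(v):
    """Magnitude ||v||: square root of the sum of squared components."""
    return math.sqrt(sum(x * x for x in v))

def normalize(v):
    """Unit vector v / ||v||. Per Notation 1, v must be non-zero."""
    length = norm(v)
    if length == 0:
        raise ValueError("cannot normalize the zero vector")
    return [x / length for x in v]

a = [3.0, 4.0]
a_hat = normalize(a)

print(norm(a))      # magnitude of a
print(a_hat)        # same direction as a
print(norm(a_hat))  # 1, up to floating-point rounding

# Magnitude times direction rebuilds the original vector.
rebuilt = [norm(a) * x for x in a_hat]
print(rebuilt)
```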

Similarity of unit vectors

In two dimensions, all unit vectors lie on the unit circle (radius 1, centered at the origin). A unit vector that forms an angle θ with the x-axis has coordinates (cos θ, sin θ).

This means the angle between two unit vectors encodes a natural similarity score. As we'll show shortly, this score is exactly cos θ: equal to 1 when they point the same way, 0 when they are perpendicular, and −1 when they point in opposite directions.

Notation 2. Throughout this article, θ denotes the smallest angle between the two vectors, so $0^\circ \le \theta \le 180^\circ$.

In practice, we don't know θ directly; we know the vectors' coordinates.

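We can show why the dot product of two unit vectors $\hat{\mathbf{a}}$ and $\hat{\mathbf{b}}$ equals cos θ using a geometric argument in three steps:

1. Rotate the coordinate system until $\hat{\mathbf{b}}$ lies along the x-axis. Rotation doesn't change angles or magnitudes.

2. Read off the new coordinates. After rotation, $\hat{\mathbf{b}}$ has coordinates (1, 0). Since $\hat{\mathbf{a}}$ is a unit vector at angle θ from the x-axis, the unit circle definition gives its coordinates as (cos θ, sin θ).

3. Multiply corresponding components and sum:

$$\cos\theta \cdot 1 + \sin\theta \cdot 0 = \cos\theta$$

This sum of component-wise products is called the dot product, so $\hat{\mathbf{a}}\cdot\hat{\mathbf{b}} = \cos\theta$. For any two n-dimensional vectors, it is defined as:

$$\mathbf{a}\cdot\mathbf{b} = a_1 b_1 + a_2 b_2 + \dots + a_n b_n = \sum_{i=1}^{n} a_i b_i$$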
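The three-step argument can be checked numerically. This sketch defines a hypothetical `dot` helper as the sum of component-wise products and compares it with cos θ for unit vectors built from the unit-circle coordinates (cos θ, sin θ), both with $\hat{\mathbf{b}}$ on the x-axis and without rotating first:

```python
import math

def dot(u, v):
    """Sum of component-wise products u_1*v_1 + ... + u_n*v_n."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same dimension")
    return sum(x * y for x, y in zip(u, v))

def unit_at(angle):
    """Unit vector on the unit circle at `angle` radians from the x-axis."""
    return [math.cos(angle), math.sin(angle)]

# Steps 1 and 2: b_hat on the x-axis, a_hat at 60 degrees from it.
theta = math.radians(60)
b_hat = unit_at(0.0)    # (1, 0)
a_hat = unit_at(theta)  # (cos θ, sin θ)
print(dot(a_hat, b_hat), math.cos(theta))

# Without rotating first: unit vectors at 20° and 80° are still 60° apart.
u = unit_at(math.radians(20))
v = unit_at(math.radians(80))
print(dot(u, v))
```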

See the illustration of these three steps in Figure 2 below:

Everything above was shown in 2D, but the same result holds in any number of dimensions. Any two vectors, no matter how many dimensions they live in, always lie in a single flat plane through the origin. We can rotate that plane to align with the xy-plane, and from there, the 2D proof applies exactly.

Notation 3. In the diagrams that follow, we often draw one of the vectors (typically $\mathbf{b}$) along the horizontal axis. When $\mathbf{b}$ is not already aligned with the x-axis, we can always rotate our coordinate system as we did above (the “rotation trick”). Since rotation preserves all lengths, angles, and dot products, every formula derived in this orientation holds for any direction of $\mathbf{b}$.
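To see why the rotation trick is safe, here is a small sketch that applies the same 3D rotation to two vectors and confirms that their lengths and their dot product do not change. The helper names, the example vectors, and the rotation angles (0.7 and −1.2 radians) are arbitrary choices for illustration:

```python
import math

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def rotate_z(v, angle):
    """Rotate a 3D vector about the z-axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return [c * x - s * y, s * x + c * y, z]

def rotate_x(v, angle):
    """Rotate a 3D vector about the x-axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return [x, c * y - s * z, s * y + c * z]

def rotate(v):
    """One fixed rotation: first about z, then about x."""
    return rotate_x(rotate_z(v, 0.7), -1.2)

a = [1.0, 2.0, 2.0]
b = [3.0, 0.0, 4.0]
ra, rb = rotate(a), rotate(b)

print(dot(a, b), dot(ra, rb))  # dot product before and after rotation
print(dot(a, a), dot(ra, ra))  # squared length of a before and after
print(dot(b, b), dot(rb, rb))  # squared length of b before and after
```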

A vector can contribute in many directions at once, but often we care about only one direction.

Scalar projection answers the question: how much of $\mathbf{a}$ lies along the direction of $\mathbf{b}$?

This value is negative if the projection points in the opposite direction of $\mathbf{b}$.

The Shadow Analogy

The most intuitive way to think about scalar projection is as the length of a shadow. Imagine you hold a stick (vector $\mathbf{a}$) at an angle above the ground (the direction of $\mathbf{b}$), and a light source shines straight down from above.

The shadow that the stick casts on the ground is the scalar projection.

The animated figure below illustrates this idea:

The scalar projection measures how much of vector a lies in the direction of b.

It equals the length of the shadow that a casts onto b (Woo, 2023). The GIF was created using Claude.

Calculation

Imagine a light source shining straight down onto the line PS (the direction of $\mathbf{b}$). The “shadow” that $\mathbf{a}$ (the arrow from P to Q) casts onto that line is exactly the segment PR. You can see this in Figure 4.

Deriving the formula

Now look at the triangle PQR: the perpendicular drop from Q to the line PS creates a right triangle (with the right angle at R), and its sides are:

- $PQ = \|\mathbf{a}\|$ (the hypotenuse).

- $PR$ (the adjacent side: the shadow).

- $QR$ (the opposite side: the perpendicular component).

From this triangle:

- The angle between $PQ$ and $PR$ (that is, between $\mathbf{a}$ and $\mathbf{b}$) is θ.

- $\cos\theta = \dfrac{PR}{PQ}$ (the most basic definition of cosine).

- Multiply both sides by $PQ = \|\mathbf{a}\|$: $PR = \|\mathbf{a}\|\cos\theta$.

The segment PR is the shadow length: the scalar projection of $\mathbf{a}$ onto $\mathbf{b}$.

When θ > 90°, cos θ is negative, so the scalar projection becomes negative too. Think of the shadow as flipping to the opposite side.
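Here is the formula $PR = \|\mathbf{a}\|\cos\theta$ as a tiny Python function (the name `shadow_length` is ours, chosen for this illustration), evaluated for a stick of length 2 at a few angles so you can watch the sign flip once θ passes 90°:

```python
import math

def shadow_length(length_a, theta_deg):
    """Signed shadow length PR = ||a|| * cos(theta), with theta in degrees."""
    return length_a * math.cos(math.radians(theta_deg))

# Past 90 degrees, cos(theta) < 0, so the shadow length comes out negative.
for theta in (0, 60, 90, 120, 180):
    print(theta, round(shadow_length(2.0, theta), 6))
```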

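How is the unit vector related?

The shadow's length (PR) doesn't depend on how long $\mathbf{b}$ is. It depends only on $\|\mathbf{a}\|$ and on θ.

When you compute $\mathbf{a}\cdot\hat{\mathbf{b}}$, you are asking: how much of $\mathbf{a}$ lies along $\mathbf{b}$'s direction? That is the shadow length.

The unit vector $\hat{\mathbf{b}}$ acts like a direction filter: taking the dot product with it extracts the component of $\mathbf{a}$ along that direction.

Let's see this using the rotation trick. We place $\hat{\mathbf{b}}$ along the x-axis:

$$\hat{\mathbf{b}} = (1,\ 0)$$

and:

$$\mathbf{a} = \|\mathbf{a}\|\,\hat{\mathbf{a}} = (\|\mathbf{a}\|\cos\theta,\ \|\mathbf{a}\|\sin\theta)$$

Then:

$$\mathbf{a}\cdot\hat{\mathbf{b}} = \|\mathbf{a}\|\cos\theta \cdot 1 + \|\mathbf{a}\|\sin\theta \cdot 0 = \|\mathbf{a}\|\cos\theta$$

The scalar projection of $\mathbf{a}$ in the direction of $\mathbf{b}$ is:

$$\mathbf{a}\cdot\hat{\mathbf{b}} = \|\mathbf{a}\|\cos\theta$$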
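A minimal sketch of $\mathbf{a}\cdot\hat{\mathbf{b}}$ computed from coordinates (the helper names are hypothetical). It also checks the two claims above: stretching $\mathbf{b}$ does not change the result, while reversing $\mathbf{b}$ flips the sign:

```python
import math

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def norm(v):
    return math.sqrt(dot(v, v))

def scalar_projection(a, b):
    """How much of a lies along b's direction: a · b_hat, with b_hat = b / ||b||."""
    nb = norm(b)
    if nb == 0:
        raise ValueError("b must be non-zero")
    b_hat = [x / nb for x in b]
    return dot(a, b_hat)

a = [2.0, 3.0]
b = [4.0, 0.0]
print(scalar_projection(a, b))                    # a reaches 2 units along the x-axis
print(scalar_projection(a, [x * 10 for x in b]))  # a longer b gives the same shadow
print(scalar_projection(a, [-4.0, 0.0]))          # opposite direction: negative

# Agreement with ||a|| cos(theta): a vector of length 5 at 30° from b_hat = (1, 0).
theta = math.radians(30)
v = [5 * math.cos(theta), 5 * math.sin(theta)]
print(scalar_projection(v, [1.0, 0.0]), 5 * math.cos(theta))
```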

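We apply the same rotation trick one more time, now with two general vectors $\mathbf{a}$ and $\mathbf{b}$.

After rotation:

$$\mathbf{a} = (\|\mathbf{a}\|\cos\theta,\ \|\mathbf{a}\|\sin\theta), \qquad \mathbf{b} = (\|\mathbf{b}\|,\ 0),$$

so:

$$\mathbf{a}\cdot\mathbf{b} = \|\mathbf{a}\|\cos\theta \cdot \|\mathbf{b}\| + \|\mathbf{a}\|\sin\theta \cdot 0$$

The dot product of $\mathbf{a}$ and $\mathbf{b}$ is:

$$\mathbf{a}\cdot\mathbf{b} = \|\mathbf{a}\|\,\|\mathbf{b}\|\cos\theta$$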
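Since we usually know coordinates rather than θ, this identity can be run backwards to recover the angle: $\cos\theta = \frac{\mathbf{a}\cdot\mathbf{b}}{\|\mathbf{a}\|\,\|\mathbf{b}\|}$. A minimal sketch; the `angle_between` name and the clamping step are our own choices, not part of the derivation above:

```python
import math

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def norm(v):
    return math.sqrt(dot(v, v))

def angle_between(a, b):
    """Smallest angle (radians, in [0, pi]) between non-zero a and b."""
    cos_theta = dot(a, b) / (norm(a) * norm(b))
    # Clamp to [-1, 1] so floating-point overshoot never breaks math.acos.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.acos(cos_theta)

a = [1.0, 1.0]
b = [1.0, 0.0]
theta = angle_between(a, b)
print(math.degrees(theta))                                # angle in degrees
print(dot(a, b), norm(a) * norm(b) * math.cos(theta))     # both sides of the identity
```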

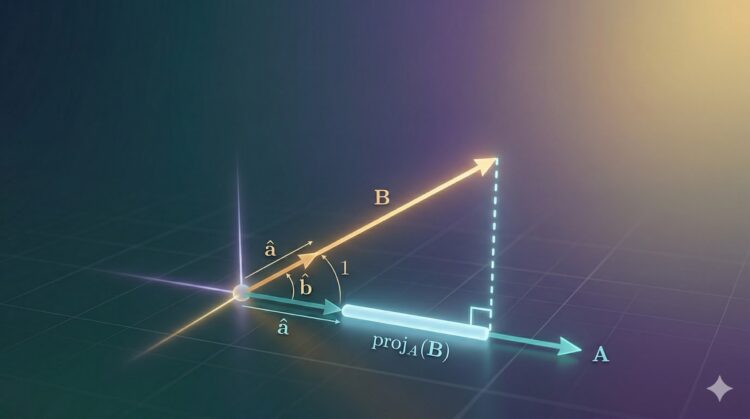

Vector projection extracts the portion of vector $\mathbf{a}$ that points along the direction of vector $\mathbf{b}$.

The Path Analogy

Imagine two paths starting from the same point (the origin):

- Path A leads to a whale-watching spot.

- Path B leads along the coast in a different direction.

Here's the question projection answers:

You're only allowed to walk along Path B. How far should you walk so that you end up as close as possible to the endpoint of Path A?

You walk along B, and at some point you stop. From where you stopped, you look toward the end of Path A, and the line connecting you to it forms a perfect 90° angle with Path B. That's the key geometric fact: the closest point is always where you'd make a right-angle turn.

The spot where you stop on Path B is the projection of A onto B. It represents “the part of A that goes in B's direction.”

The remaining gap, from your stopping point to the actual end of Path A, is everything about A that has nothing to do with B's direction. This example is illustrated in Figure 5 below: the vector that starts at the origin, points along Path B, and ends at the closest point is the vector projection of $\mathbf{a}$ onto $\mathbf{b}$.

Walking along path B, the closest point to the endpoint of A occurs where the connecting segment forms a right angle with B. This point is the projection of A onto B. Image by Author (created using Claude).
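The right-angle claim can be checked by brute force. This sketch (the path coordinates are made up for illustration) walks along Path B in steps of 0.001, keeps the stopping point closest to the end of Path A, compares it with the scalar projection $\mathbf{a}\cdot\hat{\mathbf{b}}$, and checks that the remaining gap is perpendicular to B:

```python
import math

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def dist(u, v):
    """Euclidean distance between two points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(u, v)))

a = [1.0, 3.0]  # endpoint of Path A (made-up coordinates)
b = [2.0, 1.0]  # direction of Path B (made-up coordinates)
norm_b = math.sqrt(dot(b, b))
b_hat = [x / norm_b for x in b]

# Try stopping points t * b_hat for t from -10 to 10 in steps of 0.001,
# and keep the one closest to the end of Path A.
candidates = [i / 1000 for i in range(-10_000, 10_001)]
best_t = min(candidates, key=lambda t: dist([t * x for x in b_hat], a))

stop = [best_t * x for x in b_hat]
gap = [x - y for x, y in zip(a, stop)]  # from the stopping point to the end of Path A

print(best_t)           # how far we walked, found by search
print(dot(a, b_hat))    # scalar projection, found by formula
print(dot(gap, b_hat))  # close to 0: the gap is (nearly) perpendicular to Path B
```

The search only agrees with the formula to within the step size; the formula gives the exact stopping point directly.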

Scalar projection answers: “How far did you walk?”

That's just a distance: a single number.

Vector projection answers: “Where exactly are you?”

More precisely: “What's the exact movement along Path B that gets you to that closest point?”

Now “1.5 kilometers” isn't enough; you need to say “1.5 kilometers east along the coast.” That's a distance plus a direction: an arrow, not just a number. The arrow starts at the origin, points along Path B, and ends at the closest point.

The distance you walked is the scalar projection value. The magnitude of the vector projection equals the absolute value of the scalar projection, because the scalar projection is negative when the closest point lies in the direction opposite to B.

The unit vector $\hat{\mathbf{b}}$ answers: “Which direction does Path B go?”

That's exactly what $\hat{\mathbf{b}}$ represents: Path B stripped of any length information, just the pure direction of the coast.

I know the whale analogy is very specific; it was inspired by this great explanation (Michael.P, 2014).

Figure 6 below shows the same shadow diagram as Figure 4, with PR drawn as an arrow, because the vector projection is a vector (with both length and direction), not just a number.

Unlike the scalar projection (a length), the vector projection is an arrow along vector b. Image by Author (created using Claude).

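Since the projection must lie along $\mathbf{b}$, we need two things for $\operatorname{proj}_{\mathbf{b}}\mathbf{a}$:

- Its (signed) length is the scalar projection: $\mathbf{a}\cdot\hat{\mathbf{b}}$.

- Its direction is $\hat{\mathbf{b}}$ (the direction of $\mathbf{b}$).

Any vector equals its magnitude times its direction (as we saw in the unit vector section), so:

$$\operatorname{proj}_{\mathbf{b}}\mathbf{a} = (\mathbf{a}\cdot\hat{\mathbf{b}})\,\hat{\mathbf{b}}$$

This is already the vector projection formula. We can rewrite it by substituting $\hat{\mathbf{b}} = \dfrac{\mathbf{b}}{\|\mathbf{b}\|}$ and recognizing that $\|\mathbf{b}\|^2 = \mathbf{b}\cdot\mathbf{b}$:

$$\operatorname{proj}_{\mathbf{b}}\mathbf{a} = \left(\mathbf{a}\cdot\frac{\mathbf{b}}{\|\mathbf{b}\|}\right)\frac{\mathbf{b}}{\|\mathbf{b}\|} = \frac{\mathbf{a}\cdot\mathbf{b}}{\|\mathbf{b}\|^2}\,\mathbf{b}$$

The vector projection of $\mathbf{a}$ in the direction of $\mathbf{b}$ is:

$$\operatorname{proj}_{\mathbf{b}}\mathbf{a} = \frac{\mathbf{a}\cdot\mathbf{b}}{\mathbf{b}\cdot\mathbf{b}}\,\mathbf{b}$$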
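Both forms of the formula translate directly into code. A minimal sketch with hypothetical helper names: it computes the projection both ways, checks that the leftover gap $\mathbf{a} - \operatorname{proj}_{\mathbf{b}}\mathbf{a}$ is perpendicular to $\mathbf{b}$ (the right-angle fact from the path analogy), and checks that the projection's length equals the absolute value of the scalar projection:

```python
import math

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def norm(v):
    return math.sqrt(dot(v, v))

def vector_projection(a, b):
    """proj_b(a) = (a·b / b·b) * b; b must be non-zero."""
    bb = dot(b, b)
    if bb == 0:
        raise ValueError("b must be non-zero")
    scale = dot(a, b) / bb
    return [scale * x for x in b]

def vector_projection_via_unit(a, b):
    """The same quantity written as (a · b_hat) * b_hat."""
    nb = norm(b)
    b_hat = [x / nb for x in b]
    s = dot(a, b_hat)  # scalar projection (signed length)
    return [s * x for x in b_hat]

a = [1.0, 3.0]
b = [2.0, 1.0]
p = vector_projection(a, b)
residual = [x - y for x, y in zip(a, p)]  # the part of a unrelated to b's direction

print(p)
print(vector_projection_via_unit(a, b))  # same arrow, up to rounding
print(dot(residual, b))                  # 0: the gap meets b at a right angle
```

The `b·b` form avoids a square root, which is one reason it is often preferred in code; the unit-vector form mirrors the "length times direction" reasoning above.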

- A unit vector isolates a vector's direction by stripping away its magnitude.

- The dot product multiplies corresponding components and sums them. It also equals the product of the two vectors' magnitudes multiplied by the cosine of the angle between them.

- Scalar projection uses the dot product to measure how far one vector reaches along another's direction: a single number, like the length of a shadow.

- Vector projection goes one step further, returning an actual arrow along that direction: the scalar projection times the unit vector.

In the next part, we'll use the tools from this article to truly understand the dot product.