This text is the third a part of a sequence I made a decision to jot down on methods to construct a sturdy and steady credit score scoring mannequin over time.

The primary article centered on methods to assemble a credit score scoring dataset, whereas the second explored exploratory knowledge evaluation (EDA) and methods to higher perceive borrower and mortgage traits earlier than modeling.

, my last yr at an engineering college. As a part of a credit score scoring undertaking, a financial institution supplied us with knowledge about particular person clients. In a earlier article, I defined how this kind of dataset is often constructed.

The purpose of the undertaking was to develop a scoring mannequin that might predict a borrower’s credit score danger over a one-month horizon. As quickly as we acquired the info, step one was to carry out an exploratory knowledge evaluation. In my earlier article, I briefly defined why exploratory knowledge evaluation is crucial for understanding the construction and high quality of a dataset.

The dataset supplied by the financial institution contained greater than 300 variables and over a million observations, overlaying two years of historic knowledge. The variables had been each steady and categorical. As is frequent with real-world datasets, some variables contained lacking values, some had outliers, and others confirmed strongly imbalanced distributions.

Since we had little expertise with modeling on the time, a number of methodological questions shortly got here up.

The primary query was in regards to the knowledge preparation course of. Ought to we apply preprocessing steps to the complete dataset first after which cut up it into coaching, check, and OOT (out-of-time) units? Or ought to we cut up the info first after which apply all preprocessing steps individually?

This query is essential. A scoring mannequin is constructed for prediction, which suggests it should be capable of generalize to new observations, similar to new financial institution clients. Because of this, each step within the knowledge preparation pipeline, together with variable preselection, have to be designed with this goal in thoughts.

One other query was in regards to the position of area consultants. At what stage ought to they be concerned within the course of? Ought to they take part early throughout knowledge preparation, or solely later when decoding the outcomes? We additionally confronted extra technical questions. For instance, ought to lacking values be imputed earlier than treating outliers, or the opposite manner round?

On this article, we deal with a key step within the modeling course of: dealing with excessive values (outliers) and lacking values. This step can generally additionally contribute to lowering the dimensionality of the issue, particularly when variables with poor knowledge high quality are eliminated or simplified throughout preprocessing.

I beforehand described a associated course of in one other article on variable preprocessing for linear regression. In follow, the way in which variables are processed typically will depend on the kind of mannequin used for coaching. Some strategies, similar to regression fashions, are delicate to outliers and customarily require express therapy of lacking values. Different approaches can deal with these points extra naturally.

As an example the steps offered right here, we use the identical dataset launched within the earlier article on exploratory knowledge evaluation. This dataset is an open-source dataset accessible on Kaggle: the Credit score Scoring Dataset. It accommodates 32,581 observations and 12 variables describing loans issued by a financial institution to particular person debtors.

Though this instance includes a comparatively small variety of variables, the preprocessing strategy described right here can simply be utilized to a lot bigger datasets, together with these with a number of hundred variables.

Lastly, you will need to keep in mind that this kind of evaluation solely is sensible if the dataset is top quality and consultant of the issue being studied. In follow, knowledge high quality is without doubt one of the most important elements for constructing sturdy and dependable credit score scoring fashions.

This publish is a part of a sequence devoted to understanding methods to construct sturdy and steady credit score scoring fashions. The primary article centered on how credit score scoring datasets are constructed. The second article explored exploratory knowledge evaluation for credit score knowledge. Within the following part, we flip to a sensible and important step: dealing with outliers and lacking values utilizing an actual credit score scoring dataset.

Making a Time Variable

Our dataset doesn’t include a variable that straight captures the time dimension of the observations. That is problematic as a result of the purpose is to construct a prediction mannequin that may estimate whether or not new debtors will default. And not using a time variable, it turns into tough to obviously illustrate methods to cut up the info into coaching, check, and out-of-time (OOT) samples. As well as, we can not simply assess the soundness or monotonic habits of variables over time.

To handle this limitation, we create a man-made time variable, which we name yr.

We assemble this variable utilizing cb_person_cred_hist_length, which represents the size of a borrower’s credit score historical past. This variable has 29 distinct values, starting from 2 to 30 years. Within the earlier article, once we discretized it into quartiles, we noticed that the default fee remained comparatively steady throughout intervals, round 21%.

That is precisely the habits we wish for our yr variable: a comparatively stationary default fee, which means that the default fee stays steady throughout totally different time durations.

To assemble this variable, we make the next assumption. We arbitrarily suppose that debtors with a 2-year credit score historical past entered the portfolio in 2022, these with 3 years of historical past in 2021, and so forth. For instance, a worth of 10 years corresponds to an entry in 2014. Lastly, all debtors with a credit score historical past better than or equal to 11 years are grouped right into a single class similar to an entry in 2013.

This strategy offers us a dataset overlaying an approximate historic interval from 2013 to 2022, offering about ten years of historic knowledge. This reconstructed timeline allows extra significant practice, check, and out-of-time splits when creating the scoring mannequin. And likewise to check the soundness of the chance driver distribution over time.

Coaching and Validation Datasets

This part addresses an essential methodological query: ought to we cut up the info earlier than performing knowledge therapy and variable preselection, or after?

In follow, machine studying strategies are generally used to develop credit score scoring fashions, particularly when a sufficiently massive dataset is accessible and covers the complete scope of the portfolio. The methodology used to estimate mannequin parameters have to be statistically justified and primarily based on sound analysis standards. Particularly, we should account for potential estimation biases attributable to overfitting or underfitting, and choose an applicable stage of mannequin complexity.

Mannequin estimation ought to finally depend on its capacity to generalize, which means its capability to accurately rating new debtors who weren’t a part of the coaching knowledge. To correctly consider this capacity, the dataset used to measure mannequin efficiency have to be unbiased from the dataset used to coach the mannequin.

In statistical modeling, three forms of datasets are usually used to realize this goal:

- Coaching (or growth) dataset used to estimate and match the parameters of the mannequin.

- Validation / Check dataset (in-time) used to judge the standard of the mannequin match on knowledge that weren’t used throughout coaching.

- Out-of-time (OOT) validation dataset used to evaluate the mannequin’s efficiency on knowledge from a totally different time interval, which helps consider whether or not the mannequin stays steady over time.

Different validation methods are additionally generally utilized in follow, similar to k-fold cross-validation or leave-one-out validation.

Dataset Definition

On this part, we current an instance of methods to create the datasets utilized in our evaluation: practice, check, and OOT.

The event dataset (practice + check) covers the interval from 2013 to 2021. Inside this dataset:

- 70% of the observations are assigned to the coaching set

- 30% are assigned to the check set

The OOT dataset corresponds to 2022.

train_test_df = df[df["year"] <= 2021].copy()

oot_df = df[df["year"] == 2022].copy()

train_test_df.to_csv("train_test_data.csv", index=False)

oot_df.to_csv("oot_data.csv", index=False)Preserving Mannequin Generalization

To protect the mannequin’s capacity to generalize, as soon as the dataset has been cut up into practice, check, and OOT, the check and OOT datasets should stay fully untouched throughout mannequin growth.

In follow, they need to be handled as in the event that they had been locked away and solely used after the modeling technique has been outlined and the candidate fashions have been skilled. These datasets will later enable us to match mannequin efficiency and choose the ultimate mannequin.

One essential level to remember is that every one preprocessing steps utilized to the coaching dataset have to be replicated precisely on the check and OOT datasets. This consists of:

- dealing with outliers

- imputing lacking values

- discretizing variables

- and making use of another preprocessing transformations.

Splitting the Improvement Dataset into Prepare and Check

To coach and consider the totally different fashions, we cut up the event dataset (2013–2021) into two components:

- a coaching set (70%)

- a check set (30%)

To make sure that the distributions stay comparable throughout these two datasets, we carry out a stratified cut up. The stratification variable combines the default indicator and the yr variable:

def_year = def + yr

This variable permits us to protect each the default fee and the temporal construction of the info when splitting the dataset.

Earlier than performing the stratified cut up, you will need to first look at the distribution of the brand new variable def_year to confirm that stratification is possible. If some teams include too few observations, stratification will not be potential or might require changes.

In our case, the smallest group outlined by def_year accommodates greater than 300 observations, which implies that stratification is completely possible. We will subsequently cut up the dataset into practice and check units, save them, and proceed the preprocessing steps utilizing solely the coaching dataset. The identical transformations will later be replicated on the check and OOT datasets.

from sklearn.model_selection import train_test_split

train_test_df["def_year"] = train_test_df["def"].astype(str) + "_" + train_test_df["year"].astype(str)

train_df, test_df = train_test_split(train_test_df, test_size=0.2, random_state=42, stratify=train_test_df["def_year"])

# sauvegarde des bases

train_df.to_csv("train_data.csv", index=False)

test_df.to_csv("test_data.csv", index=False)

oot_df.to_csv("oot_data.csv", index=False)

Within the following sections, all analyses are carried out utilizing the coaching knowledge.

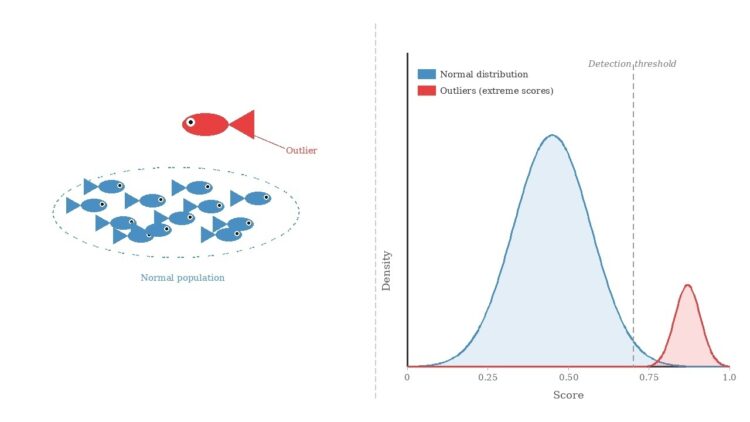

Outlier Remedy

We start by figuring out and treating outliers, and we validate these therapies with area consultants. In follow, this step is simpler for consultants to evaluate than lacking worth imputation. Specialists typically know the believable ranges of variables, however they might not all the time know why a worth is lacking. Performing this step first additionally helps scale back the bias that excessive values might introduce through the imputation course of.

To deal with excessive values, we use the IQR technique (Interquartile Vary technique). This technique is usually used for variables that roughly observe a standard distribution. Earlier than making use of any therapy, you will need to visualize the distributions utilizing boxplots and density plots.

In our dataset, we’ve got six steady variables. Their boxplots and density plots are proven beneath.

The desk beneath presents, for every variable, the decrease sure and higher sure, outlined as:

Decrease Sure = Q1 – 1.5 x IQR

Higher Sure = Q3 + 1.5 x IQR

the place IQR = Q3 – Q1 and (Q1) and (Q3) correspond to the first and third quartiles, respectively.

On this research, this therapy technique is cheap as a result of it doesn’t considerably alter the central tendency of the variables. To additional validate this strategy, we are able to confer with the earlier article and look at which quantile ranges the decrease and higher bounds fall into, and analyze the default fee of debtors inside these intervals.

When treating outliers, you will need to proceed rigorously. The target is to cut back the affect of maximum values with out altering the scope of the research.

From the desk above, we observe that the IQR technique would cap the age of debtors at 51 years. This result’s acceptable provided that the research inhabitants was initially outlined with a most age of 51. If this restriction was not a part of the preliminary scope, the edge needs to be mentioned with area consultants to find out an affordable higher sure for the variable.

Suppose, for instance, that debtors as much as 60 years outdated are thought-about a part of the portfolio. In that case, the IQR technique would not be applicable for treating outliers within the person_age variable, as a result of it could artificially truncate legitimate observations.

Two options can then be thought-about. First, area consultants might specify a most believable age, similar to 100 years, which might outline the suitable vary of the variable. One other strategy is to make use of a way referred to as winsorization.

Winsorization follows an identical thought to the IQR technique: it limits the vary of a steady variable, however the bounds are usually outlined utilizing excessive quantiles or expert-defined thresholds. A standard strategy is to limit the variable to a variety similar to:

Observations falling outdoors this restricted vary are then changed by the closest boundary worth (the corresponding quantile or a worth decided by consultants).

This strategy might be utilized in two methods:

- Unilateral winsorization, the place just one aspect of the distribution is capped.

- Bilateral winsorization, the place each the decrease and higher tails are truncated.

On this instance, all observations with values beneath €6 are changed with €6 for the variable of curiosity. Equally, all observations with values above €950 are changed with €950.

We compute the ninetieth, ninety fifth, and 99th percentiles of the person_age variable to substantiate whether or not the IQR technique is acceptable. If not, we might use the 99th percentile because the higher sure for a winsorization strategy.

On this case, the 99th percentile is the same as the IQR higher sure (51). This confirms that the IQR technique is acceptable for treating outliers on this variable.

def apply_iqr_bounds(practice, check, oot, variables):

practice = practice.copy()

check = check.copy()

oot = oot.copy()

bounds = []

for var in variables:

Q1 = practice[var].quantile(0.25)

Q3 = practice[var].quantile(0.75)

IQR = Q3 - Q1

decrease = Q1 - 1.5 * IQR

higher = Q3 + 1.5 * IQR

bounds.append({

"Variable": var,

"Decrease Sure": decrease,

"Higher Sure": higher

})

for df in [train, test, oot]:

df[var] = df[var].clip(decrease, higher)

bounds_table = pd.DataFrame(bounds)

return bounds_table, practice, check, oot

bounds_table, train_clean_outlier, test_clean_outlier, oot_clean_outlier = apply_iqr_bounds(

train_df,

test_df,

oot_df,

variables

)One other strategy that may typically be helpful when coping with outliers in steady variables is discretization, which I’ll focus on in a future article.

Imputing Lacking Values

The dataset accommodates two variables with lacking values: loan_int_rate and person_emp_length. Within the coaching dataset, the distribution of lacking values is summarized within the desk beneath.

The truth that solely two variables include lacking values permits us to investigate them extra rigorously. As a substitute of instantly imputing them with a easy statistic such because the imply or the median, we first attempt to perceive whether or not there’s a sample behind the lacking observations.

In follow, when coping with lacking knowledge, step one is usually to seek the advice of area consultants. They might present insights into why sure values are lacking and counsel cheap methods to impute them. This helps us higher perceive the mechanism producing the lacking values earlier than making use of statistical instruments.

A easy approach to discover this mechanism is to create indicator variables that take the worth 1 when a variable is lacking and 0 in any other case. The thought is to examine whether or not the likelihood {that a} worth is lacking will depend on the opposite noticed variables.

Case of the Variable person_emp_length

The determine beneath exhibits the boxplots of the continual variables relying on whether or not person_emp_length is lacking or not.

A number of variations might be noticed. For instance, observations with lacking values are likely to have:

- decrease earnings in contrast with observations the place the variable is noticed,

- smaller mortgage quantities,

- decrease rates of interest,

- and greater loan-to-income ratios.

These patterns counsel that the lacking observations usually are not randomly distributed throughout the dataset. To substantiate this instinct, we are able to complement the graphical evaluation with statistical exams, similar to:

- Kolmogorov–Smirnov or Kruskal–Wallis exams for steady variables,

- Cramér’s V check for categorical variables.

These analyses would usually present that the likelihood of a lacking worth will depend on the noticed variables. This mechanism is named MAR (Lacking At Random).

Underneath MAR, a number of imputation strategies might be thought-about, together with machine studying approaches similar to k-nearest neighbors (KNN).

Nevertheless, on this article, we undertake a conservative imputation technique, which is usually utilized in credit score scoring. The thought is to assign lacking values to a class related to the next likelihood of default.

In our earlier evaluation, we noticed that debtors with the best default fee belong to the primary quartile of employment size, similar to clients with lower than two years of employment historical past. To stay conservative, we subsequently assign lacking values for person_emp_length to 0, which means no employment historical past.

Case of the Variable loan_int_rate

After we analyze the connection between loan_int_rate and the opposite steady variables, the graphical evaluation suggests no clear variations between observations with lacking values and people with out.

In different phrases, debtors with lacking rates of interest seem to behave equally to the remainder of the inhabitants when it comes to the opposite variables. This commentary may also be confirmed utilizing statistical exams.

Such a mechanism is often known as MCAR (Lacking Fully At Random). On this case, the missingness is unbiased of each the noticed and unobserved variables.

When the lacking knowledge mechanism is MCAR, a easy imputation technique is mostly enough. On this research, we select to impute the lacking values of loan_int_rate utilizing the median, which is strong to excessive values.

If you need to discover lacking worth imputation methods in additional depth, I like to recommend studying this article.

The code beneath exhibits methods to impute the practice, check, and OOT datasets whereas preserving the independence between them. This strategy ensures that every one imputation parameters are computed utilizing the coaching dataset solely after which utilized to the opposite datasets. By doing so, we restrict potential biases that might in any other case have an effect on the mannequin’s capacity to generalize to new knowledge.

def impute_missing_values(practice, check, oot,

emp_var="person_emp_length",

rate_var="loan_int_rate",

emp_value=0):

"""

Impute lacking values utilizing statistics computed on the coaching dataset.

Parameters

----------

practice, check, oot : pandas.DataFrame

Datasets to course of.

emp_var : str

Variable representing employment size.

rate_var : str

Variable representing rate of interest.

emp_value : int or float

Worth used to impute employment size (conservative technique).

Returns

-------

train_imp, test_imp, oot_imp : pandas.DataFrame

Imputed datasets.

"""

# Copy datasets to keep away from modifying originals

train_imp = practice.copy()

test_imp = check.copy()

oot_imp = oot.copy()

# ----------------------------

# Compute statistics on TRAIN

# ----------------------------

rate_median = train_imp[rate_var].median()

# ----------------------------

# Create lacking indicators

# ----------------------------

for df in [train_imp, test_imp, oot_imp]:

df[f"{emp_var}_missing"] = df[emp_var].isnull().astype(int)

df[f"{rate_var}_missing"] = df[rate_var].isnull().astype(int)

# ----------------------------

# Apply imputations

# ----------------------------

for df in [train_imp, test_imp, oot_imp]:

df[emp_var] = df[emp_var].fillna(emp_value)

df[rate_var] = df[rate_var].fillna(rate_median)

return train_imp, test_imp, oot_imp

## Utility de l'imputation

train_imputed, test_imputed, oot_imputed = impute_missing_values(

practice=train_clean_outlier,

check=test_clean_outlier,

oot=oot_clean_outlier,

emp_var="person_emp_length",

rate_var="loan_int_rate",

emp_value=0

)Now we have now handled each outliers and lacking values. To maintain the article centered and keep away from making it lengthy, we’ll cease right here and transfer on to the conclusion. At this stage, the practice, check, and OOT datasets might be safely saved.

train_imputed.to_csv("train_imputed.csv", index=False)

test_imputed.to_csv("test_imputed.csv", index=False)

oot_imputed.to_csv("oot_imputed.csv", index=False)Within the subsequent article, we’ll analyze correlations amongst variables to carry out sturdy variable choice. We may even introduce the discretization of steady variables and research two essential properties for credit score scoring fashions: monotonicity and stability over time.

Conclusion

This text is a part of a sequence devoted to constructing credit score scoring fashions which are each sturdy and steady over time.

On this article, we highlighted the significance of dealing with outliers and lacking values through the preprocessing stage. Correctly treating these points helps forestall biases that might in any other case distort the mannequin and scale back its capacity to generalize to new debtors.

To protect this generalization functionality, all preprocessing steps have to be calibrated utilizing solely the coaching dataset, whereas sustaining strict independence from the check and out-of-time (OOT) datasets. As soon as the transformations are outlined on the coaching knowledge, they need to then be replicated precisely on the check and OOT datasets.

Within the subsequent article, we’ll analyze the relationships between the goal variable and the explanatory variables, following the identical methodological precept, that’s, preserving the independence between the practice, check, and OOT datasets.

Picture Credit

All pictures and visualizations on this article had been created by the creator utilizing Python (pandas, matplotlib, seaborn, and plotly) and excel, until in any other case acknowledged.

References

[1] Lorenzo Beretta and Alessandro Santaniello.

Nearest Neighbor Imputation Algorithms: A Crucial Analysis.

Nationwide Library of Medication, 2016.

[2] Nexialog Consulting.

Traitement des données manquantes dans le milieu bancaire.

Working paper, 2022.

[3] John T. Hancock and Taghi M. Khoshgoftaar.

Survey on Categorical Knowledge for Neural Networks.

Journal of Large Knowledge, 7(28), 2020.

[4] Melissa J. Azur, Elizabeth A. Stuart, Constantine Frangakis, and Philip J. Leaf.

A number of Imputation by Chained Equations: What Is It and How Does It Work?

Worldwide Journal of Strategies in Psychiatric Analysis, 2011.

[5] Majid Sarmad.

Strong Knowledge Evaluation for Factorial Experimental Designs: Improved Strategies and Software program.

Division of Mathematical Sciences, College of Durham, England, 2006.

[6] Daniel J. Stekhoven and Peter Bühlmann.

MissForest—Non-Parametric Lacking Worth Imputation for Combined-Kind Knowledge.Bioinformatics, 2011.

[7] Supriyanto Wibisono, Anwar, and Amin.

Multivariate Climate Anomaly Detection Utilizing the DBSCAN Clustering Algorithm.

Journal of Physics: Convention Collection, 2021.

Knowledge & Licensing

The dataset used on this article is licensed underneath the Artistic Commons Attribution 4.0 Worldwide (CC BY 4.0) license.

This license permits anybody to share and adapt the dataset for any goal, together with business use, supplied that correct attribution is given to the supply.

For extra particulars, see the official license textual content: CC0: Public Area.

Disclaimer

Any remaining errors or inaccuracies are the creator’s accountability. Suggestions and corrections are welcome.