Retrieval-Augmented Generation (RAG) has moved out of the experimental phase and firmly into enterprise production. We are not just building chatbots to test LLM capabilities; we are building complex, agentic systems that interface directly with internal structured databases (SQL), unstructured data lakes (vector DBs), third-party APIs, and MCP tools. However, as RAG adoption scales within an organization, a glaring and expensive problem becomes apparent: redundancy.

In many enterprise RAG deployments, teams observe that over 30% of user queries are repetitive or semantically similar. Employees across different departments ask for the same Q4 sales numbers, the same onboarding procedures, and the same summaries of standard vendor contracts. External users asking about health insurance premiums for their age often receive responses that are identical across similar profiles.

In a naive RAG architecture, every one of these repeated questions triggers the same expensive chain of events. The system generates embeddings, executes vector similarity searches, scans SQL tables, and retrieves large context windows. It then forces a Large Language Model (LLM) to reason over the exact same tokens to produce an answer it already generated an hour ago.

This redundancy inflates cloud infrastructure costs and adds unnecessary multi-second latency to user responses. We need an intelligent caching strategy to control costs and keep RAG viable as user and query volume grows.

However, caching for agentic RAG is not a simple `key: value` store. Language is nuanced, data is highly dynamic, and serving a stale or hallucinated cached answer is a real risk. In this article, I’ll demonstrate a caching architecture, with real-world scenarios, that can bring tangible benefits.

The Setup: A Dual-Source Agentic System

Let us consider a simulated enterprise environment using a dataset of Amazon Product Reviews (CC0).

Our agentic RAG system acts as an intelligent router with access to two data stores:

1. A Structured SQL Database (SQLite): Contains tabular review data (Id, ProfileName, Score, Time, Summary, Review Text).

2. An Unstructured Vector Database (FAISS): Contains the embedded text of customer product reviews. This simulates internal knowledge bases, wikis, and policy documents.

The Two-Tier Cache Structure

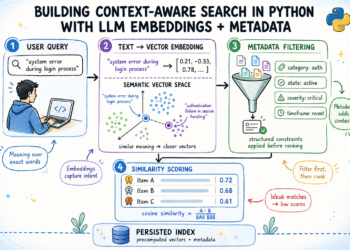

We make the most of a Two-Tier Cache structure as a result of customers hardly ever ask precisely the identical query verbatim, however they continuously ask questions with the similar that means, and subsequently, requiring the identical underlying context.

Tier 1: The Semantic Cache (At question degree)

The Semantic Cache acts as the first line of defense, intercepting the user query. A traditional cache requires an exact string match (e.g., caching `SELECT * FROM table`). A Semantic Cache uses embeddings instead.

When a user asks a question, we embed the query and compare it against previously cached queries using cosine similarity. If the new query is semantically identical (say, a similarity score above 95%), we immediately return the previously generated LLM answer. For instance:

Query A: “What is the company leave policy?”

Query B: “Can you tell me the policy for taking time off?”

The Semantic Cache recognizes these as the same intent. It intercepts the request before the agent is even invoked, so the answer is delivered in milliseconds with zero LLM token costs.
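Below is a minimal sketch of the Tier 1 lookup. It assumes the query embeddings are already computed by whatever embedding model you use; here they are plain Python lists. The class and method names are illustrative and do not come from the article's code. The sketch keeps the 0.95 threshold from above and returns the stored answer only when the closest cached query clears it:

```python
import math
from typing import List, Optional, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


class SemanticCache:
    """Tier 1 sketch: maps query embeddings to final LLM answers."""

    def __init__(self, threshold: float = 0.95):
        self.threshold = threshold
        self.entries: List[Tuple[List[float], str]] = []

    def lookup(self, query_vec: List[float]) -> Optional[str]:
        # Linear scan for the most similar cached query.
        best_score, best_answer = -1.0, None
        for vec, answer in self.entries:
            score = cosine(query_vec, vec)
            if score > best_score:
                best_score, best_answer = score, answer
        # Only a near-identical intent counts as a hit.
        return best_answer if best_score >= self.threshold else None

    def store(self, query_vec: List[float], answer: str) -> None:
        self.entries.append((query_vec, answer))
```

A linear scan is fine for a demo. With many entries, you would typically keep the cached query embeddings in a nearest-neighbor index such as FAISS and apply the same threshold check to the top result.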

Tier 2: The Retrieval Cache (Context Level)

Now suppose the user phrases the query as follows:

Query C: “Summarize the leave policy specifically for remote workers.”

This is not a 95% match, so it misses Tier 1. However, the documents needed to answer Query C are exactly the same documents retrieved for Query A. This is where Tier 2, the Retrieval Cache, comes in.

The Retrieval Cache stores the raw data blocks (SQL rows or FAISS text chunks) against a broader “topic match” threshold (e.g., above 70%). When the Semantic Cache misses, the agent checks Tier 2. If it finds relevant pre-fetched context, it skips the expensive database lookups. It then feeds the cached context directly into the LLM to generate a fresh answer. In effect, it acts as a high-speed notepad.
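The two tiers can be chained as below. This is a sketch under simplifying assumptions:

- Embeddings are L2-normalized, so cosine similarity reduces to a dot product.
- `fetch_context` and `generate` are hypothetical callables standing in for the FAISS/SQL retrieval and the LLM call.

Note that a Tier 2 hit still pays for generation; it only skips retrieval.

```python
from typing import Callable, List, Optional, Tuple

Vector = List[float]


class ThresholdCache:
    """Nearest-neighbor cache over L2-normalized vectors with a hit threshold."""

    def __init__(self, threshold: float):
        self.threshold = threshold
        self.entries: List[Tuple[Vector, object]] = []

    def get(self, vec: Vector) -> Optional[object]:
        # Dot product equals cosine similarity because vectors are normalized.
        scored = [(sum(a * b for a, b in zip(vec, key)), value)
                  for key, value in self.entries]
        if not scored:
            return None
        score, value = max(scored, key=lambda s: s[0])
        return value if score >= self.threshold else None

    def put(self, vec: Vector, value: object) -> None:
        self.entries.append((vec, value))


def answer_query(vec: Vector,
                 semantic: ThresholdCache,
                 retrieval: ThresholdCache,
                 fetch_context: Callable[[Vector], object],
                 generate: Callable[[Vector, object], str]) -> Tuple[str, str]:
    """Tier 1 -> Tier 2 -> backend; returns (answer, path taken)."""
    answer = semantic.get(vec)              # Tier 1: reuse a final answer
    if answer is not None:
        return answer, "semantic_hit"
    context = retrieval.get(vec)            # Tier 2: reuse raw context
    path = "retrieval_hit"
    if context is None:
        context = fetch_context(vec)        # full miss: FAISS / SQL lookup
        retrieval.put(vec, context)
        path = "miss"
    answer = generate(vec, context)         # LLM runs on Tier 2 hits and misses
    semantic.put(vec, answer)
    return answer, path
```

Typical use is `ThresholdCache(0.95)` for the semantic tier and `ThresholdCache(0.70)` for the retrieval tier, matching the thresholds described above.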

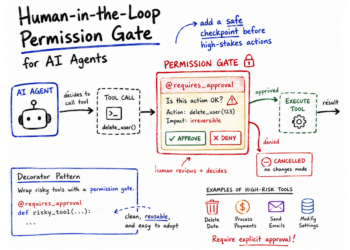

The Intelligent Router: Agent Construction & Tooling

Fetching from the caches is not enough. We also need mechanisms that detect stale cache content, so users do not receive outdated answers. To orchestrate retrieval and validation across the two-tier cache and the dual-source backends, the system relies on an LLM agent. This is not a RAG agent that merely synthesizes a response from the given context. Instead, it is equipped with a rigorous system prompt and a specific set of tools that let it act as an intelligent query router and data validator.

The agent toolkit consists of several custom functions it can invoke autonomously based on the user’s intent:

- search_vector_database: Queries the vector DB (FAISS) for unstructured text.

- query_sql_database: Executes dynamic SQL queries against the local SQLite database to fetch exact numbers or filtered data.

- check_retrieval_cache: Pulls pre-fetched context for topics with >70% similarity, skipping vector/SQL lookups.

- check_source_last_updated: Quickly queries the live SQL database for the exact `MAX(Time)` timestamp. This detects whether the source `reviews` table has been updated, which matters for global aggregation queries (e.g., “What is the average score across all reviews?”).

- check_row_timestamp: Validates the date-time value of a specific row ID.

- check_data_fingerprint: Calculates a hash of a document’s content to detect changes. Useful when there is no date-time column, or for a distributed database.

- check_predicate_staleness: Checks whether a specific “slice” of data (e.g., a specific year) has changed.
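The sketch below is a hypothetical illustration of how two of these tools might be implemented against SQLite and exposed to the agent through a name-to-function registry. These pieces are assumptions for illustration:

- the in-memory table and its sample rows;
- the whitelist;
- the `dispatch` helper.

Several LLM tool-calling APIs return a tool name plus JSON-encoded arguments, which is the shape `dispatch` accepts. Errors are returned to the model rather than raised, so the agent can correct its own call.

```python
import json
import sqlite3

# Toy in-memory stand-in for the reviews table (sample rows are made up).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE reviews (Id INTEGER PRIMARY KEY, Score INTEGER, Time INTEGER)")
conn.executemany("INSERT INTO reviews VALUES (?, ?, ?)",
                 [(1, 5, 1293840000), (2, 1, 1325375999)])


def query_sql_database(sql: str):
    """Run a read-only query; the prefix check is a coarse guard, not a sandbox."""
    if not sql.lstrip().upper().startswith("SELECT"):
        raise ValueError("only SELECT statements are allowed")
    return conn.execute(sql).fetchall()


def check_source_last_updated(table: str = "reviews"):
    """Return MAX(Time) for a whitelisted table name."""
    if table not in {"reviews"}:
        raise ValueError(f"unknown table: {table}")
    return conn.execute(f"SELECT MAX(Time) FROM {table}").fetchone()[0]


TOOLS = {
    "query_sql_database": query_sql_database,
    "check_source_last_updated": check_source_last_updated,
}


def dispatch(name: str, arguments_json: str) -> dict:
    """Execute one tool call (name + JSON-encoded arguments)."""
    tool = TOOLS.get(name)
    if tool is None:
        return {"error": f"unknown tool: {name}"}
    try:
        return {"result": tool(**json.loads(arguments_json or "{}"))}
    except (ValueError, TypeError, sqlite3.Error) as exc:
        # Hand the error back to the agent instead of crashing the loop.
        return {"error": str(exc)}
```

For a real database file, opening the connection read-only (SQLite URI with `mode=ro`) is a stronger guard than the `SELECT` prefix check.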

This tool-calling architecture transforms the LLM from a passive text generator into an active, self-correcting data manager. The following scenarios show how these tools handle specific types of queries to manage the cost and accuracy of responses. The figure depicts the query flow across all the scenarios covered here.

Real-World Scenarios

Scenario 1: The Semantic Cache Hit (Speed & Cost)

This is the ideal scenario: a question from one user is repeated almost identically by another user (>95% similarity). For example, a user asks the system: “What are the common opinions about coffee taste?” Since it is the first time the system has seen this question, it results in a cache MISS. The agent methodically queries the vector search and retrieves three documents. The LLM then reasons over the text to generate a comprehensive summary of bitter versus delicious coffee profiles. The whole request takes about 36 seconds.

A moment later, a second user asks the same question. The system generates an embedding, looks it up in the Semantic Cache, and registers a hit. The exact answer is returned instantly.

The net impact is a response time drop from ~36.0 seconds to 0.02 seconds. Total LLM token cost for the second query: $0.00.

Here is the query flow.

============================================================

==== Scenario 1: The Semantic Cache Hit (Speed & Cost) =====

============================================================

-> Asking it the FIRST time (expect Cache MISS, slow LLM + DB lookups)

[USER]: What are the common opinions about coffee taste?

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[TOOL: RetrievalCache]: Checking cache for topic: 'common opinions about coffee taste'

[TOOL: RetrievalCache]: MISS. Topic not found in cache.

[TOOL: VectorSearch]: Searching for 'common opinions about coffee taste'...

[TOOL: VectorSearch]: Found 3 documents. Saving to Retrieval Cache.

[AGENT]: Based on the reviews, common opinions about coffee taste vary. Some find it to have a bitter taste, while others describe it as great tasting and delicious. There are also opinions that the coffee can be stale and lacking in flavor. Some consumers are also concerned about achieving the full flavor potential of their coffee.

[TIME TAKEN]: 36.13 seconds

-> Asking it the SECOND time (expect Semantic Cache HIT, instant)

[USER]: What are the common opinions about coffee taste?

[SYSTEM]: Semantic Cache HIT -> Based on the reviews, common opinions about coffee taste vary. Some find it to have a bitter taste, while others describe it as great tasting and delicious. There are also opinions that the coffee can be stale and lacking in flavor. Some consumers are also concerned about achieving the full flavor potential of their coffee.

[TIME TAKEN]: 0.02 seconds

Scenario 2: Retrieval Cache (Shared Context)

Next, the user asks a follow-up: “Summarize these opinions into 3 bullet points.”

The Semantic Cache registers a MISS because the intent (a summarization format) is fundamentally different. However, the topic is highly similar (>70%). The system hits the Tier 2 Retrieval Cache, pulls the exact same 3 documents fetched in Scenario 1, and passes them to the LLM to format into bullets.

The net impact is that we eliminate the latency and cost of the vector database nearest-neighbor search, keeping data retrieval strictly in-memory. The LLM still generates a fresh answer, so end-to-end latency remains dominated by generation (34.24 seconds in the run below).

Here is the query flow.

============================================================

===== Scenario 2: Retrieval Cache Hit (Shared Context) =====

============================================================

-> Ensuring Retrieval Cache is seeded (silent check)...

[USER]: What are the common opinions about coffee taste?

[SYSTEM]: Semantic Cache HIT -> Based on the reviews, common opinions about coffee taste vary. Some find it to have a bitter taste, while others describe it as great tasting and delicious. There are also opinions that the coffee can be stale and lacking in flavor. Some consumers are also concerned about achieving the full flavor potential of their coffee.

-> Asking a DIFFERENT question on the SAME TOPIC.

-> Semantic query is slightly different, so the Semantic Cache misses.

-> Agent should hit the Retrieval Cache to avoid a FAISS lookup and answer it.

[USER]: Summarize these coffee taste opinions in a bulleted list.

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[TOOL: RetrievalCache]: Checking cache for topic: 'coffee taste opinions'

[TOOL: RetrievalCache]: HIT! Found cached context (Doc ID: 481389

[AGENT]: Here's a summary of the coffee taste opinions:

* One user found the coffee to have a "weird whang" and a bitter taste, expressing disappointment.

* Another user enjoyed the coffee, describing it as "great tasting" and "delicious" when made in a drip coffee maker, though they were unsure whether they were achieving its full flavor potential due to a lack of brewing instructions.

* A third user was particularly disappointed, finding the coffee stale and lacking in flavor.

[TIME TAKEN]: 34.24 seconds

Scenario 3: Agentic Cache Bypass

If the user query is about the latest analytics, such as current trends or the latest sales figures, it is advisable to bypass the cache entirely. In this scenario, the user asks: “What are the LATEST negative reviews?”

Here, the agentic router inspects the user query and recognizes its temporal intent. Guided by the system prompt, it explicitly decides to bypass the cache. It routes the query straight to the source SQL database, so the response is built from up-to-date context.
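The article leaves this decision to the LLM via its system prompt. As an optional, cheaper complement, a deterministic pre-filter can catch the obvious cases before any cache lookup or model call. The keyword list below is a hypothetical example, not the article’s logic. It will miss paraphrases such as “what came in yesterday”, which is why the LLM judgment remains the main mechanism.

```python
import re

# Hypothetical temporal cues; extend or replace with your own list.
TEMPORAL_CUES = re.compile(
    r"\b(latest|newest|most recent|current(ly)?|today|right now|"
    r"this (week|month|quarter|year))\b",
    re.IGNORECASE,
)


def should_bypass_cache(query: str) -> bool:
    """True when the query asks for fresh data, so both cache tiers are skipped."""
    return TEMPORAL_CUES.search(query) is not None
```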

Here is the query flow.

============================================================

======= Scenario 3: Agentic Bypass for 'Latest' Data =======

============================================================

-> Asking for 'latest' data.

-> Agent prompt logic should explicitly bypass the cache and go to SQL.

[USER]: What are the latest 5 star reviews?

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[AGENT]: Here are the latest 5-star reviews:

* **Score:** 5, **Summary:** YUM, **Text:** Skinny sticks go a little too fast in my household!.. continued

Scenario 4: Row-Level Staleness Detection

Data is not static, so cache contents must be validated before use.

Let’s say a user asks: “What is the summary of the review with ID 120698?” The system caches the answer.

Subsequently, an administrator updates the database, changing the summary text for the same ID. When the user asks the exact same question again, the Semantic Cache identifies a 100% match. However, it does not blindly serve the answer.

Every cache entry is stored with a Validation Strategy Tag. Before returning the hit, the system triggers the check_row_timestamp agent tool, which quickly checks the Time column for ID 120698 in the live database. The live timestamp is newer than the cache entry’s creation timestamp, so the system triggers an invalidation. It drops the stale entry, forces an agentic query to the database, and retrieves the corrected summary.
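Below is a sketch of the row-level check. It rests on three assumptions:

- Each cache entry carries its validation strategy tag.
- The `reviews` table’s `Time` column is bumped on every update, as in the demo.
- `Time` uses the same epoch-seconds clock as the cache.

The `CacheEntry` fields and the dict keyed by query string are illustrative stand-ins for the semantic cache lookup:

```python
import sqlite3
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class CacheEntry:
    answer: str
    cached_at: int                  # epoch seconds when the answer was cached
    strategy: str                   # validation strategy tag, e.g. "row_timestamp"
    row_id: Optional[int] = None


def check_row_timestamp(conn: sqlite3.Connection, row_id: int) -> Optional[int]:
    """Live Time value for one review row, or None if the row is gone."""
    row = conn.execute("SELECT Time FROM reviews WHERE Id = ?", (row_id,)).fetchone()
    return None if row is None else row[0]


def get_if_fresh(cache: Dict[str, CacheEntry], key: str,
                 conn: sqlite3.Connection) -> Optional[str]:
    """Return the cached answer only if its validation strategy passes."""
    entry = cache.get(key)
    if entry is None:
        return None
    if entry.strategy == "row_timestamp":
        live_time = check_row_timestamp(conn, entry.row_id)
        if live_time is None or live_time > entry.cached_at:
            del cache[key]          # invalidate; caller re-routes to the agent
            return None
    # Other strategy tags (table, hash, predicate) would be dispatched here.
    return entry.answer
```

Comparing against the cache’s wall-clock time assumes the application and database clocks agree. An alternative is to store the row’s own `Time` value at caching time and treat any change as stale, which avoids that assumption.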

Here is the query flow. I’ve added an extra check to show that updating an unrelated row does not invalidate the cache.

============================================================

== Scenario 4: Staleness Detection (Row-Level Timestamp) ===

============================================================

-> Step 1: Initial Ask (Expect MISS, Agent fetches from SQL)

[USER]: Provide a detailed summary of review ID 120698.

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[TOOL: RetrievalCache]: Checking cache for topic: 'review ID 120698'

[TOOL: RetrievalCache]: MISS. Topic not found in cache.

[AGENT]: The review for ID 120698 is summarized as "Burnt tasting rubbish"..contd.

-> Step 2: Asking again (Expect HIT - Data is Fresh)

[USER]: Provide a detailed summary of review ID 120698.

[SYSTEM]: Semantic Cache HIT (Fresh Row Timestamp) -> The review for ID 120698 is summarized as "Burnt tasting rubbish"..contd..

-> Step 3: Simulating Background Update (Unrelated ID 99999)...

-> Testing retrieval AFTER unrelated change (Expect HIT - Row is still fresh):

[USER]: Provide a detailed summary of review ID 120698.

[SYSTEM]: Semantic Cache HIT (Fresh Row Timestamp) -> The review for ID 120698 is summarized as "Burnt tasting rubbish"..contd..

-> Now updating the target review (Row 120698) itself...

[REAL-TIME UPDATE]: New Timestamp in DB: 27-02-2026 03:53:00

-> Testing Semantic Cache retrieval for Row 120698 AFTER its own update:

-> EXPECTATION: Stale cache detected (Row-Level). Invalidating.

[USER]: Provide a detailed summary of review ID 120698.

[SYSTEM]: Stale cache detected (Row 120698 updated at 27-02-2026 03:53:00). Invalidating.

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[TOOL: RetrievalCache]: Checking cache for topic: 'review ID 120698'

[TOOL: RetrievalCache]: MISS. Topic not found in cache.

[AGENT]: The UPDATED review for ID 120698 is summarized as "Burnt tasting rubbish"..contd..

Scenario 5: Table-Level Staleness (Aggregations)

Row-level validation works well for single lookups, but not for queries that aggregate over a large number of rows. For example, a user asks: “How many total reviews are in the database?” or “What is the average score for all reviews?”, and later another user asks the same question. Checking the timestamp of thousands of rows would be highly inefficient here. Instead, the Semantic Cache tags aggregation queries with a Table MAX Time validation strategy. When the same question is asked again, the agent uses the check_source_last_updated tool to run `SELECT MAX(Time) FROM reviews`. If it sees a newer source table timestamp, it invalidates the cache entry and recalculates the total count accurately.
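The table-level variant needs only a single aggregate query per validation. In this sketch (names are hypothetical), the cache stores the `MAX(Time)` observed when the answer was produced and treats any change as staleness. One caveat: `MAX(Time)` does not move on deletes or on inserts with older timestamps. Adding `COUNT(*)` to the marker is a cheap way to catch those cases too.

```python
import sqlite3
from typing import Dict, Optional, Tuple


def check_source_last_updated(conn: sqlite3.Connection) -> Optional[int]:
    """Latest Time value in the whole reviews table (None if empty)."""
    return conn.execute("SELECT MAX(Time) FROM reviews").fetchone()[0]


class TableLevelCache:
    """Answers to aggregate queries, validated against the table's MAX(Time)."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.entries: Dict[str, Tuple[str, Optional[int]]] = {}

    def put(self, query: str, answer: str) -> None:
        # Snapshot the table marker at the moment the answer is cached.
        self.entries[query] = (answer, check_source_last_updated(self.conn))

    def get(self, query: str) -> Optional[str]:
        hit = self.entries.get(query)
        if hit is None:
            return None
        answer, marker = hit
        if check_source_last_updated(self.conn) != marker:
            del self.entries[query]     # table changed since caching: invalidate
            return None
        return answer
```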

Here is the query flow.

============================================================

====== Scenario 5: Staleness Detection (Table-Level) =======

============================================================

-> Step 1: Initial Ask (Expect MISS, Agent performs global count)

[USER]: How many total reviews are in the database?

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[TOOL: RetrievalCache]: Checking cache for topic: 'total number of reviews'

[TOOL: RetrievalCache]: MISS. Topic not found in cache.

[AGENT]: There are 205 total reviews in the database.

-> Step 2: Asking again (Expect HIT - Table is Fresh)

[USER]: How many total reviews are in the database?

[SYSTEM]: Semantic Cache HIT (Fresh Source Timestamp) -> There are 205 total reviews in the database.

-> Adding a brand new review record (id 11111) with a FRESH timestamp...

-> Testing Global Cache retrieval AFTER table change:

-> EXPECTATION: Stale cache detected (Source-Level). Invalidating.

[USER]: How many total reviews are in the database?

[SYSTEM]: Stale cache detected (Source 'reviews' updated at 27-02-2026 08:03:26). Invalidating.

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[TOOL: RetrievalCache]: Checking cache for topic: 'total number of reviews'

[TOOL: RetrievalCache]: MISS. Topic not found in cache.

[AGENT]: There are 206 total reviews in the database.

Scenario 6: Staleness Detection via Data Fingerprinting

Sometimes databases don’t have reliable updated_at timestamps, or we are dealing with unstructured text data or a distributed database. In this scenario, we rely on cryptographic hashing. A user queries: “What does review ID 120698 say?” The system caches the response alongside a SHA-256 hash of the underlying source text.

Suppose the text is then altered without updating any timestamp. The Semantic Cache still registers a hit. Using the check_data_fingerprint tool, the system validates the hit by comparing the cached SHA-256 hash against a fresh hash of the live source text. The hash mismatch raises a red flag, and the entry made stale by the silent edit is safely invalidated.
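Here is a minimal fingerprinting sketch using Python’s standard `hashlib`, assuming the review body is stored in a `Text` column. Validating a hash still means reading the live text. What the cache saves is the retrieval pipeline and the LLM call, not the read itself. The class name is illustrative.

```python
import hashlib
import sqlite3
from typing import Dict, Optional, Tuple


def fingerprint(text: str) -> str:
    """SHA-256 hex digest of the source text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def check_data_fingerprint(conn: sqlite3.Connection, row_id: int) -> Optional[str]:
    """Hash of the live review text, or None if the row no longer exists."""
    row = conn.execute("SELECT Text FROM reviews WHERE Id = ?", (row_id,)).fetchone()
    return None if row is None else fingerprint(row[0])


class FingerprintCache:
    """Cached answers validated by comparing content hashes, not timestamps."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.entries: Dict[str, Tuple[str, int, Optional[str]]] = {}

    def put(self, query: str, answer: str, row_id: int) -> None:
        self.entries[query] = (answer, row_id, check_data_fingerprint(self.conn, row_id))

    def get(self, query: str) -> Optional[str]:
        hit = self.entries.get(query)
        if hit is None:
            return None
        answer, row_id, cached_hash = hit
        if check_data_fingerprint(self.conn, row_id) != cached_hash:
            del self.entries[query]     # silent edit (or deletion) detected
            return None
        return answer
```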

Here is the query flow.

============================================================

== Scenario 6: Staleness Detection (Data Fingerprinting) ===

============================================================

-> Step 1: Initial Ask (Expect MISS, Agent fetches text)

[USER]: What is the exact text of review ID 120698?

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[AGENT]: The exact text of review ID 120698 is: 'The worst coffee beverage I've..contd.'

-> Step 2: Asking again (Expect HIT - Hash is Valid)

[USER]: What is the exact text of review ID 120698?

[SYSTEM]: Semantic Cache HIT (Valid Hash) -> The exact text of review ID 120698 is: 'The worst coffee beverage I've ..contd.

-> Modifying the underlying source text in SQL DB without updating the timestamp...

-> Testing Semantic Cache retrieval AFTER content change:

-> EXPECTATION: Stale cache detected (Hash mismatch). Invalidating.

[USER]: What is the exact text of review ID 120698?

[SYSTEM]: Stale cache detected (Hash mismatch). Invalidating cache and re-running.

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[AGENT]: The exact text of review ID 120698 is: 'The worst coffee beverage I've ..contd.

Scenario 7: Retrieval Cache Fallback (Context Sufficiency)

While the Tier 2 context cache is a powerful tool, sometimes the cached context contains only half the answer to the user’s question.

For example, a user asks: “What is the sentiment about the packaging of the coffee?” The system searches, and the vector database returns documents exclusively about the packaging of the coffee. This context is cached.

Next, the user asks: “What do people think about the packaging and the taste of the coffee?”

The system hits the Retrieval Cache based on topic similarity and passes the documents to the LLM. However, the agent is instructed to evaluate sufficiency through the check_retrieval_cache tool. It analyzes the cached context and realizes that the context covers only packaging, not the taste of the coffee.

Instead of hallucinating an answer about taste, the agent triggers a Context Fallback. It discards the cached context and generates new queries specifically targeting “coffee taste” and “coffee packaging”. It then queries the live vector DB and merges the results into a complete, fact-based answer.
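In the article, the sufficiency judgment is made by the LLM. The sketch below replaces that judgment with a deliberately crude keyword check, so the control flow is visible. If any aspect of the question is missing from the cached context, the cache is discarded and one targeted search is issued per aspect, with the results merged and de-duplicated. `vector_search` is a hypothetical callable. In practice the aspect list would come from the LLM rather than being passed in by hand.

```python
from typing import Callable, List, Tuple


def missing_aspects(aspects: List[str], context: List[str]) -> List[str]:
    """Aspects of the question that no cached chunk mentions (naive keyword match)."""
    text = " ".join(context).lower()
    return [a for a in aspects if a.lower() not in text]


def resolve_context(aspects: List[str], cached: List[str],
                    vector_search: Callable[[str], List[str]]) -> Tuple[List[str], str]:
    """Use cached context if it covers every aspect; otherwise fall back to live search."""
    if cached and not missing_aspects(aspects, cached):
        return cached, "cache_sufficient"
    merged: List[str] = []
    for aspect in aspects:                  # one targeted query per aspect
        for doc in vector_search(aspect):
            if doc not in merged:           # de-duplicate across queries
                merged.append(doc)
    return merged, "fallback"
```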

Here is the query flow.

============================================================

Scenario 7: Retrieval Cache Fallback (Context Sufficiency)

============================================================

-> Step 1: Seeding Retrieval Cache with NARROW context (Packaging only) for a BROAD topic...

-> Step 2: Asking a BROAD question ('packaging' AND 'taste').

-> EXPECTATION:

[USER]: What do people think about the packaging and the actual taste of the coffee?

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[TOOL: RetrievalCache]: Checking cache for topic: 'packaging and taste of coffee'

[TOOL: RetrievalCache]: HIT! Found cached context (Review 1: The box arrived slightly dented but the inner wrap was secure.

[TOOL: VectorSearch]: Searching for 'packaging of the coffee'...

[TOOL: VectorSearch]: Found 3 documents. Saving to Retrieval Cache.

[TOOL: VectorSearch]: Searching for 'taste of the coffee'...

[TOOL: VectorSearch]: Found 3 documents. Saving to Retrieval Cache.

[AGENT]: People have mixed opinions on the packaging and taste of the coffee.

Regarding **packaging**:

* Some customers have received products with damaged packaging, such as a "crushed box" and "coffee dust all over the K-cups."

* Others have noted issues with the clarity of information on the packaging.

Regarding the **actual taste of the coffee**:

* Several reviews describe the taste negatively, with comments like "very bitter,"

* One reviewer simply stated it "tastes like instant coffee."

[TIME TAKEN]: 7.34 seconds

Scenario 8: Predicate Caching (Time-Bounded Validation)

Finally, we can apply more advanced invalidation logic to make cache retrievals more efficient. Here is an example.

A user asks: “How many reviews were written in 2011?”

Since this is a global query involving a large number of rows, the table-level staleness check (Scenario 5) applies. However, if someone adds a review for the year 2026, the entire table’s MAX(Time) changes. The 2011 cache entry would then be invalidated and cleared, even though the 2011 answer is still correct. That is not efficient.

Instead, we employ Predicate Caching. The cache entry records the exact SQL WHERE clause constraint (e.g., Time BETWEEN start_of_2011 AND end_of_2011).

When a new 2026 review is added, the system uses the check_predicate_staleness tool to check MAX(Time) only within the 2011 slice. Seeing that the 2011 slice is undisturbed, it safely returns a cache HIT. Only when a review dated in 2011 is inserted does the predicate validation flag the entry as stale. This keeps invalidation highly targeted and efficient.
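Below is a predicate-level sketch, assuming `Time` holds Unix epoch seconds. The log’s predicate `Time >= 1293840000 AND Time <= 1325375999` is exactly calendar year 2011 in UTC. Function and class names are hypothetical. Beyond the article’s `MAX(Time)` check, the marker here also includes `COUNT(*)`. That way, a back-dated 2011 insert that does not raise the slice’s maximum is still detected.

```python
import sqlite3
from datetime import datetime, timezone
from typing import Dict, Optional, Tuple


def year_bounds(year: int) -> Tuple[int, int]:
    """Inclusive [start, end] epoch-second range for a calendar year in UTC."""
    start = int(datetime(year, 1, 1, tzinfo=timezone.utc).timestamp())
    end = int(datetime(year + 1, 1, 1, tzinfo=timezone.utc).timestamp()) - 1
    return start, end


def check_predicate_staleness(conn: sqlite3.Connection, start: int, end: int) -> Tuple:
    """Marker for one slice: (row count, latest Time) inside the predicate."""
    return conn.execute(
        "SELECT COUNT(*), MAX(Time) FROM reviews WHERE Time BETWEEN ? AND ?",
        (start, end),
    ).fetchone()


class PredicateCache:
    """Answers validated only against the slice their WHERE clause covers."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.entries: Dict[str, Tuple[str, Tuple[int, int], Tuple]] = {}

    def put(self, query: str, answer: str, start: int, end: int) -> None:
        marker = check_predicate_staleness(self.conn, start, end)
        self.entries[query] = (answer, (start, end), marker)

    def get(self, query: str) -> Optional[str]:
        hit = self.entries.get(query)
        if hit is None:
            return None
        answer, (start, end), marker = hit
        if check_predicate_staleness(self.conn, start, end) != marker:
            del self.entries[query]     # something changed inside this slice
            return None
        return answer
```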

Here is the query flow.

============================================================

= Scenario 8: Predicate Caching (Time-Bounded Validation) ==

============================================================

-> Step 1: Initial Ask (Expect MISS, Agent executes filtered SQL)

[USER]: How many reviews were written in 2011?

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[AGENT]: There were 59 reviews written in 2011.

-> Step 2: Asking again (Expect HIT - Predicate slice is fresh)

[USER]: How many reviews were written in 2011?

[SYSTEM]: Semantic Cache HIT (Fresh Predicate Marker) -> There were 59 reviews written in 2011.

-> Step 3: Adding a NEW review for a DIFFERENT year (2026)...

-> Testing Semantic Cache for 2011 AFTER an unrelated 2026 update:

-> EXPECTATION: Semantic Cache HIT (The 2011 slice is unchanged!)

[USER]: How many reviews were written in 2011?

[SYSTEM]: Semantic Cache HIT (Fresh Predicate Marker) -> There were 59 reviews written in 2011.

-> Step 4: Adding a NEW review WITHIN the 2011 time slice...

-> Testing Semantic Cache for 2011 AFTER a related 2011 update:

-> EXPECTATION: Stale cache detected (Predicate marker changed). Invalidating.

[USER]: How many reviews were written in 2011?

[SYSTEM]: Stale cache detected (Predicate 'Time >= 1293840000 AND Time <= 1325375999' marker changed). Invalidating.

[SYSTEM]: Semantic Cache MISS / BYPASSED. Routing to Agent...

[AGENT]: There were 60 reviews written in 2011.

Conclusion

In this article, we demonstrated how redundancy silently inflates latency and token spend in production RAG systems. We walked through a dual-source agentic setup combining structured SQL data and unstructured vector search. We also showed how repeated queries unnecessarily trigger identical retrieval and generation pipelines.

To solve this, we introduced a validation-aware, two-tier caching architecture:

- Tier 1 (Semantic Cache) eliminates repeated LLM reasoning by serving semantically identical answers instantly.

- Tier 2 (Retrieval Cache) avoids redundant database and vector searches by reusing previously fetched context.

- Agentic validation layers (temporal bypass, row-level and table-level checks, cryptographic hashing, predicate-aware invalidation, and context sufficiency evaluation) ensure that efficiency does not come at the cost of correctness.

The result is a system that is not only faster and cheaper, but also smarter and safer.

As enterprises scale their RAG systems, the difference between a prototype and a production-grade system will not be model size. It will be architectural discipline and efficiency. Intelligent caching transforms agentic RAG from a reactive pipeline into a self-optimizing knowledge engine.

Connect with me and share your comments at www.linkedin.com/in/partha-sarkar-lets-talk-AI

Reference

Amazon Product Reviews, dataset by Arham Rumi (Owner) (CC0: Public Domain)

Images used in this article were generated using Google Gemini. Figures and underlying code were created by me.