This is part of a series of posts on the topic of analyzing and optimizing PyTorch models. Throughout the series, we have advocated for using the PyTorch Profiler in AI model development and demonstrated the potential impact of performance optimization on the speed and cost of running AI/ML workloads. One common phenomenon we have seen is how seemingly innocent code can hamper runtime performance. In this post, we explore some of the penalties associated with the naive use of variable-shaped tensors: tensors whose shape depends on preceding computations and/or inputs. While not applicable to all situations, there are times when the use of variable-shaped tensors can be avoided, although this may come at the expense of additional compute and/or memory. We will demonstrate the tradeoffs of these alternatives on a toy implementation of data sampling in PyTorch.

Three Downsides of Variable-Shaped Tensors

We motivate the discussion by presenting three disadvantages of using variable-shaped tensors:

Host-Device Sync Events

In an ideal scenario, the CPU and GPU run in parallel in an asynchronous manner: the CPU continuously feeds the GPU with input samples, allocates the required GPU memory, and loads GPU compute kernels, while the GPU executes the loaded kernels on the provided inputs using the allocated memory. The presence of dynamic-shaped tensors throws a wrench into this parallelism. In order to allocate the appropriate amount of memory, the CPU must wait for the GPU to report the tensor's shape, and then the GPU must wait for the CPU to allocate the memory and proceed with the kernel loading. The overhead of this sync event can cause a drop in GPU utilization and slow runtime performance.

We saw an example of this in part three of this series, when we studied a naive implementation of the common cross-entropy loss that included calls to torch.nonzero and torch.unique. Both APIs return tensors with shapes that are dynamic and dependent on the contents of the input. When these functions are run on the GPU, a host-device synchronization event occurs. In the case of the cross-entropy loss, we discovered the inefficiency through the use of PyTorch Profiler and were able to easily overcome it with an alternative implementation that avoided the use of variable-shaped tensors and demonstrated much better runtime performance.
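To make the distinction concrete, here is a minimal illustrative sketch (not taken from the earlier post) that computes the sum of the positive entries of a tensor in two ways. The function names are hypothetical. The first version uses boolean indexing, whose output length depends on the data; the second uses torch.where, whose output always has the shape of its input.

```python
import torch

def positive_sum_dynamic(x):
    # boolean indexing returns a tensor whose length depends on the
    # contents of x, so its shape is only known after the mask is computed
    return x[x > 0].sum()

def positive_sum_static(x):
    # torch.where preserves the input shape: non-positive entries are
    # replaced with zeros so they do not contribute to the sum
    return torch.where(x > 0, x, torch.zeros_like(x)).sum()

# fall back to the CPU when no GPU is present (the results are identical)
device = 'cuda' if torch.cuda.is_available() else 'cpu'
x = torch.tensor([1.0, -2.0, 3.0, -4.0], device=device)
print(positive_sum_dynamic(x).item(), positive_sum_static(x).item())
```

Both calls return the same value; only the first creates an intermediate tensor with a data-dependent shape.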

Graph Compilation

In a recent post, we explored the performance benefits of applying just-in-time (JIT) compilation using the torch.compile operator. One of our observations was that graph compilation provided much better results when the graph was static. The presence of dynamic shapes in the graph limits the extent of the optimization via compilation: in some cases it fails entirely; in others it results in lower performance gains. The same implications also apply to other forms of graph compilation, such as XLA, ONNX, OpenVINO, and TensorRT.

Data Batching

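Another optimization we have encountered in several of our posts (e.g., here) is sample batching. Because batching stacks samples into a single tensor, it requires that they share a common shape, which variable-shaped tensors do not guarantee. Batching improves performance in two primary ways: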

- Reducing overhead of kernel loading: Rather than loading the GPU kernels required for the computation pipeline once per input sample, the CPU can load the kernels once per batch.

- Maximizing parallelization across compute units: GPUs are highly parallel compute engines. The more we are able to parallelize computation, the more we can saturate the GPU and increase its utilization. By batching, we can potentially increase the degree of parallelization by a factor of the batch size.
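The following short sketch illustrates why variable shapes get in the way of batching. It is an illustration under simple assumptions (a tiny two-sample batch of binary labels), not code from the posts referenced above: the per-sample outputs of torch.nonzero have different lengths and cannot be stacked, whereas a fixed-size torch.topk call handles the whole batch at once.

```python
import torch

labels = torch.tensor([[1, 0, 0, 1],
                       [1, 1, 1, 0]])

# variable-shaped: one index tensor per sample, with differing lengths
per_sample = [torch.nonzero(row, as_tuple=True)[0] for row in labels]
print([t.shape[0] for t in per_sample])  # lengths differ: 2 and 3

try:
    torch.stack(per_sample)
    stacked_ok = True
except RuntimeError:
    # torch.stack requires all tensors to have the same shape
    stacked_ok = False
print(stacked_ok)

# fixed-shaped: a single batched call that always returns k indices per row
k = 2
_, topk_idx = torch.topk(labels.float(), k=k, dim=-1)
print(topk_idx.shape)
```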

Despite their downsides, the use of variable-shaped tensors is often unavoidable. But sometimes we can modify our model implementation to circumvent them. Sometimes these changes will be straightforward (as in the cross-entropy loss example). Other times they may require some creativity in coming up with a different sequence of fixed-shape PyTorch APIs that provide the same numerical result. Often, this effort can deliver meaningful rewards in runtime and costs.

In the following sections, we will study the use of variable-shaped tensors in the context of the data sampling operation. We will start with a trivial implementation and analyze its performance. We will then propose a GPU-friendly alternative that avoids the use of variable-shaped tensors.

To compare our implementations, we will use an Amazon EC2 g6e.xlarge with an NVIDIA L40S running an AWS Deep Learning AMI (DLAMI) with PyTorch (2.8). The code we will share is intended for demonstration purposes. Please do not rely on it for accuracy or optimality. Please do not interpret our mention of any framework, library, or platform as an endorsement of its use.

Sampling in AI Model Workloads

In the context of this post, sampling refers to the selection of a subset of items from a large set of candidates for the purposes of computational efficiency, balancing of data types, or regularization. Sampling is common in many AI/ML models, such as detection, ranking, and contrastive learning systems.

We define a simple variation of the sampling problem: Given a list of N tensors, each with a binary label, we are asked to return a subset of K tensors containing both positive and negative examples, in random order. If the input list contains enough samples of each label (K/2), the returned subset should be evenly split. If it is lacking samples of one type, the remainder should be filled with random samples of the other type.
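The splitting rule can be worked through with plain Python before any tensors are involved. The helper below is a hypothetical illustration of the rule as stated above; it assumes that K is even and that the input contains at least K samples in total.

```python
def split_counts(n_pos, n_neg, k):
    """Return (num_pos, num_neg) to draw, given the available counts."""
    half = k // 2
    num_pos = min(n_pos, half)
    num_neg = min(n_neg, half)
    if num_neg < half:
        # too few negatives: fill the remainder with positives
        num_pos = k - num_neg
    elif num_pos < half:
        # too few positives: fill the remainder with negatives
        num_neg = k - num_pos
    return num_pos, num_neg

print(split_counts(600, 400, 100))  # (50, 50)
print(split_counts(970, 30, 100))   # (70, 30)
print(split_counts(20, 980, 100))   # (20, 80)
```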

The code block below contains a PyTorch implementation of our sampling function. The implementation is inspired by the popular Detectron2 library (e.g., see here and here). For the experiments in this post, we will fix the sampling ratio to 1:10.

import torch

INPUT_SAMPLES = 10000
SUB_SAMPLE = INPUT_SAMPLES // 10
FEATURE_DIM = 16

def sample_data(input_array, labels):
    device = labels.device
    # indices of the positive and negative entries (variable-shaped outputs)
    positive = torch.nonzero(labels == 1, as_tuple=True)[0]
    negative = torch.nonzero(labels == 0, as_tuple=True)[0]
    num_pos = min(positive.numel(), SUB_SAMPLE//2)
    num_neg = min(negative.numel(), SUB_SAMPLE//2)
    if num_neg < SUB_SAMPLE//2:
        num_pos = SUB_SAMPLE - num_neg
    elif num_pos < SUB_SAMPLE//2:
        num_neg = SUB_SAMPLE - num_pos

    # randomly select positive and negative examples
    perm1 = torch.randperm(positive.numel(), device=device)[:num_pos]
    perm2 = torch.randperm(negative.numel(), device=device)[:num_neg]
    pos_idxs = positive[perm1]
    neg_idxs = negative[perm2]

    # shuffle the combined indices
    sampled_idxs = torch.cat([pos_idxs, neg_idxs], dim=0)
    rand_perm = torch.randperm(SUB_SAMPLE, device=labels.device)
    sampled_idxs = sampled_idxs[rand_perm]
    return input_array[sampled_idxs], labels[sampled_idxs]

Performance Analysis With PyTorch Profiler

Even when not immediately obvious, the use of dynamic shapes is easily identifiable in the PyTorch Profiler Trace view. We use the following function to enable PyTorch Profiler:

def profile(fn, enter, labels):

def export_trace(p):

p.export_chrome_trace(f"{fn.__name__}.json")

with torch.profiler.profile(

actions=[torch.profiler.ProfilerActivity.CPU,

torch.profiler.ProfilerActivity.CUDA],

with_stack=True,

schedule=torch.profiler.schedule(wait=0, warmup=10, lively=5),

on_trace_ready=export_trace

) as prof:

for _ in vary(20):

fn(enter, labels)

torch.cuda.synchronize() # express sync for hint readability

prof.step()

# create random enter

input_samples = torch.randn((INPUT_SAMPLES, FEATURE_DIM), gadget='cuda')

labels = torch.randint(0, 2, (INPUT_SAMPLES,),

gadget='cuda', dtype=torch.int64)

# run with profiler

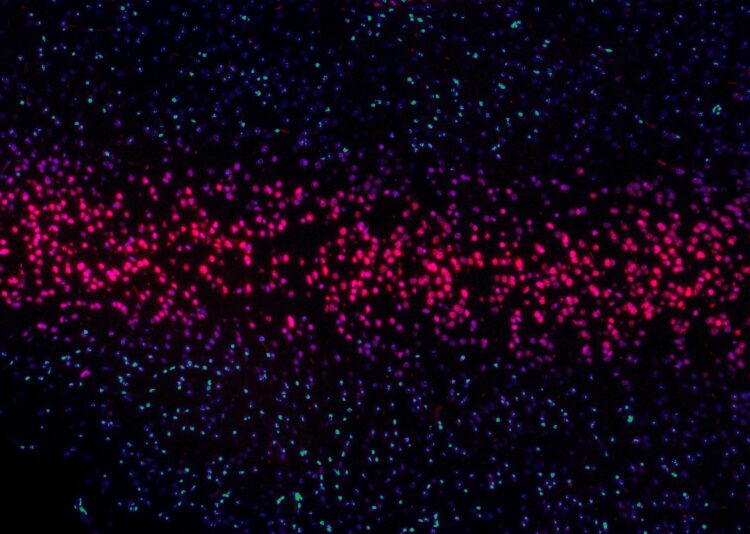

profile(sample_data, input_samples, labels)The picture beneath was captured for the worth of ten million enter samples. It clearly reveals the presence of sync occasions coming from the torch.nonzero name, in addition to the corresponding drops in GPU utilization:

The use of torch.nonzero in our implementation is not ideal, but can it be avoided?

A GPU-Friendly Data Sampler

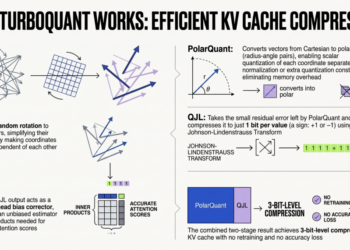

We propose an alternative implementation of our sampling function that replaces the dynamic torch.nonzero function with a creative combination of the static torch.count_nonzero, torch.topk, and other APIs:

def opt_sample_data(input, labels):
    pos_mask = labels == 1
    neg_mask = labels == 0
    num_pos_idxs = torch.count_nonzero(pos_mask, dim=-1)
    num_neg_idxs = torch.count_nonzero(neg_mask, dim=-1)
    half_samples = labels.new_full((), SUB_SAMPLE // 2)
    num_pos = torch.minimum(num_pos_idxs, half_samples)
    num_neg = torch.minimum(num_neg_idxs, half_samples)
    num_pos = torch.where(
        num_neg < SUB_SAMPLE // 2,
        SUB_SAMPLE - num_neg,
        num_pos
    )
    num_neg = SUB_SAMPLE - num_pos

    # create random ordering on pos and neg entries
    rand = torch.rand_like(labels, dtype=torch.float32)
    pos_rand = torch.where(pos_mask, rand, -1)
    neg_rand = torch.where(neg_mask, rand, -1)

    # select top pos entries and invalidate others
    # since CPU doesn't know num_pos, we assume maximum to avoid sync
    top_pos_rand, top_pos_idx = torch.topk(pos_rand, k=SUB_SAMPLE)
    arange = torch.arange(SUB_SAMPLE, device=labels.device)
    if num_pos.numel() > 1:
        # unsqueeze to support batched input
        arange = arange.unsqueeze(0)
        num_pos = num_pos.unsqueeze(-1)
        num_neg = num_neg.unsqueeze(-1)
    top_pos_rand = torch.where(arange >= num_pos, -1, top_pos_rand)

    # repeat for neg entries
    top_neg_rand, top_neg_idx = torch.topk(neg_rand, k=SUB_SAMPLE)
    top_neg_rand = torch.where(arange >= num_neg, -1, top_neg_rand)

    # combine and mix together positive and negative idxs
    cat_rand = torch.cat([top_pos_rand, top_neg_rand], dim=-1)
    cat_idx = torch.cat([top_pos_idx, top_neg_idx], dim=-1)
    topk_rand_idx = torch.topk(cat_rand, k=SUB_SAMPLE)[1]
    sampled_idxs = torch.gather(cat_idx, dim=-1, index=topk_rand_idx)

    # torch.gather returns a tensor with the shape of its index, so the
    # index is expanded across the feature dimension to keep all features
    gather_idx = sampled_idxs.unsqueeze(-1).expand(
        *sampled_idxs.shape, input.shape[-1])
    sampled_input = torch.gather(input, dim=-2, index=gather_idx)
    sampled_labels = torch.gather(labels, dim=-1, index=sampled_idxs)
    return sampled_input, sampled_labels

Clearly, this function requires more memory and more operations than our first implementation. The question is: Do the performance benefits of a static, synchronization-free implementation outweigh the extra cost in memory and compute?
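The central trick of the static sampler, random keys combined with a fixed-size torch.topk, may be easier to follow in isolation. The sketch below is a simplified illustration with a hypothetical helper name, not a replacement for the function above. It picks a given number of random positions from a boolean mask and reports which of the k returned slots are valid, without creating any tensor whose shape depends on the data. Here count is passed as a Python integer for brevity; in a sync-free pipeline it would be a tensor, as in the sampler.

```python
import torch

def pick_random_masked(mask, k, count):
    """Pick `count` random positions where mask is True using only
    fixed-shape operations. Returns k indices and a validity flag per slot."""
    rand = torch.rand(mask.shape, device=mask.device)
    # masked-out entries get key -1 so they rank below every valid entry
    keys = torch.where(mask, rand, torch.full_like(rand, -1.0))
    top_keys, top_idx = torch.topk(keys, k=k)
    # keep only the first `count` slots, and only slots that hit the mask
    slots = torch.arange(k, device=mask.device)
    valid = (slots < count) & (top_keys >= 0)
    return top_idx, valid

torch.manual_seed(0)
mask = torch.tensor([True, False, True, True, False, False, True, False])
idx, valid = pick_random_masked(mask, k=4, count=3)
print(idx.shape, int(valid.sum()))
```

The output always contains k indices regardless of how many entries the mask selects; the validity flags carry the information that a variable-length result would otherwise encode in its shape.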

To assess the tradeoffs between the two implementations, we introduce the following benchmarking utility:

def benchmark(fn, input, labels):
    # warm-up
    for _ in range(20):
        _ = fn(input, labels)

    iters = 100
    start = torch.cuda.Event(enable_timing=True)
    end = torch.cuda.Event(enable_timing=True)
    torch.cuda.synchronize()
    start.record()
    for _ in range(iters):
        _ = fn(input, labels)
    end.record()
    torch.cuda.synchronize()
    # elapsed_time reports milliseconds between the two recorded events
    avg_time = start.elapsed_time(end) / iters
    print(f"{fn.__name__} average step time: {(avg_time):.4f} ms")

benchmark(sample_data, input_samples, labels)
benchmark(opt_sample_data, input_samples, labels)

The following table compares the average runtime of each of the implementations for a variety of input sample sizes:

For most of the input sample sizes, the overhead of the host-device sync event is either comparable to or lower than the additional compute of the static implementation. Disappointingly, we only see a major benefit from the sync-free alternative when the input sample size reaches ten million. Sample sizes that large are uncommon in AI/ML settings. But it is not our tendency to give up so easily. As noted above, the static implementation enables other optimizations like graph compilation and input batching.

Graph Compilation

Contrary to the original function, which fails to compile, our static implementation is fully compatible with torch.compile:

benchmark(torch.compile(opt_sample_data), input_samples, labels)

The following table includes the runtimes of our compiled function:

The results are significantly better, providing a 70–75 percent boost over the original sampler implementation in the 1–10 thousand range. But we still have one more optimization up our sleeve.

Maximizing Performance with Batched Input

Because the original implementation contains variable-shaped operations, it cannot handle batched input directly. To process a batch, we have no choice but to apply it to each input individually, in a Python loop:

BATCH_SIZE = 32

def batched_sample_data(inputs, labels):
    sampled_inputs = []
    sampled_labels = []
    # apply the variable-shaped sampler to one batch element at a time
    for i in range(inputs.size(0)):
        inp, lab = sample_data(inputs[i], labels[i])
        sampled_inputs.append(inp)
        sampled_labels.append(lab)
    return torch.stack(sampled_inputs), torch.stack(sampled_labels)

In contrast, our optimized function supports batched inputs as is, with no modifications necessary.

input_batch = torch.randn((BATCH_SIZE, INPUT_SAMPLES, FEATURE_DIM),
                          device='cuda')
labels = torch.randint(0, 2, (BATCH_SIZE, INPUT_SAMPLES),
                       device='cuda', dtype=torch.int64)

benchmark(batched_sample_data, input_batch, labels)
benchmark(opt_sample_data, input_batch, labels)
benchmark(torch.compile(opt_sample_data), input_batch, labels)

The table below compares the step times of our sampling functions on a batch size of 32:

Now the results are definitive: By using a static implementation of the data sampler, we are able to boost performance by 2X–52X(!!) over the variable-shaped option, depending on the input sample size.

Note that although our experiments were run on a GPU device, the model compilation and input batching optimizations also apply to a CPU environment. Thus, avoiding variable shapes could have implications for AI/ML model performance on CPU, as well.

Summary
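For readers who wish to repeat the comparison on a CPU, the CUDA event timers used in the benchmarking utility measure GPU time, so a host-side timer is needed instead. The following is a minimal sketch of an analogous helper based on time.perf_counter. The name benchmark_cpu and the small workload are hypothetical and were not part of the experiments reported in this post.

```python
import time
import torch

def benchmark_cpu(fn, *args, warmup=5, iters=20):
    # warm-up iterations are excluded from the measurement
    for _ in range(warmup):
        fn(*args)
    start = time.perf_counter()
    for _ in range(iters):
        fn(*args)
    elapsed = time.perf_counter() - start
    return elapsed / iters * 1000  # average step time in milliseconds

# a small fixed-shape workload used only to exercise the timer
x = torch.randn(1000, 16)
avg_ms = benchmark_cpu(lambda t: torch.topk(t.sum(dim=-1), k=100), x)
print(f"average step time: {avg_ms:.4f} ms")
```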

The optimization process we demonstrated in this post generalizes beyond the specific case of data sampling:

- Discovery via Performance Profiling: Using the PyTorch Profiler, we were able to identify drops in GPU utilization and discover their source: the presence of variable-shaped tensors resulting from the torch.nonzero operation.

- An Alternative Implementation: Our profiling findings allowed us to develop an alternative implementation that accomplished the same goal while avoiding the use of variable-shaped tensors. However, this step came at the cost of additional compute and memory overhead. As seen in our initial benchmarks, the sync-free alternative demonstrated worse performance on common input sizes.

- Unlocking Further Potential for Optimization: The real breakthrough came because the static-shaped implementation was compilation-friendly and supported batching. These optimizations provided performance gains that dwarfed the initial overhead, leading to a 2x to 52x speedup over the original implementation.

Naturally, not all stories will end as happily as ours. In many cases, we may come across PyTorch code that performs poorly on the GPU but does not have an alternative implementation, or it may have one that requires significantly more compute resources. However, given the potential for meaningful gains in performance and reductions in cost, the process of identifying runtime inefficiencies and exploring alternative implementations is an essential part of AI/ML development.