A learner messaged me about a wrong answer.

She had asked the tutor about a concept from one of my Generative AI tutorials. The response looked fine. But it wasn’t. I had already rewritten that content two months earlier. My RAG system pulled a version from six months ago: not obviously wrong, just wrong enough to mislead.

She thought she had misunderstood. She hadn’t. My own system was teaching her from lessons I had already replaced.

I’m building a RAG-powered assistant for EmiTechLogic, my tech education platform, turning a content library into a system that generates answers directly from my own articles. I covered the initial architecture in an earlier article. That part was manageable. The real challenge begins when real learners hit a live system.

When I pulled the retrieval logs, I saw exactly what happened. Both versions were in the vector store. The old one ranked first because it had more matching tokens and a higher cosine similarity score. The updated version came in second. Sometimes third.

I expected the newer document to win automatically. That’s not how cosine similarity works.

The system was doing exactly what it was designed to do, which turned out to be the problem.

The pattern held across other queries too: Python tutorials I had updated, model comparison guides I had revised. Old versions kept surfacing first. The AI tool I was building was quietly teaching people from lessons I had already replaced.

Here’s what that looked like in practice: same query, same corpus, naive RAG.

    QUERY: What are the API rate limits? Will I get a 429 error?

    NAIVE RAG
    1. [policy_v1] age=540d | EXPIRED | sim=0.447
       "API rate limits are set to 100 requests per minute..."
    2. [announcement_today] age=0d | valid | sim=0.329
    3. [tutorial_old] age=600d | EXPIRED | sim=0.303

A 540-day-old expired document was sitting at the top. The live announcement, published that same day, was ranked second. The retriever didn’t care about freshness. It only matched words.

I assumed freshness would be handled somewhere in the pipeline. It wasn’t. Nobody had thought to add it.

This article is about how I fixed that. I built a temporal layer that sits between the vector search results and the LLM and makes the system care about time.

TL;DR

If you’re short on time: vector search has no concept of when something was true. I fixed this by adding a reranking step between the retriever and the LLM, one that hard-removes expired facts, boosts active time-bounded signals, and uses exponential decay to prefer newer documents. The tricky part was making sure “fresh” didn’t override “relevant.”

The one-line version: naive RAG finds what’s similar, temporal RAG finds what’s still true.

Full code: https://github.com/Emmimal/temporal-rag/

Who this is for

Any RAG system where the knowledge base changes over time. If your system has ever given a confident answer from a document you had already updated, deprecated, or replaced, this is for you.

It matters most for API and product documentation, incident and outage management, customer support knowledge bases, internal wikis and policy systems, and education platforms where content evolves.

Skip it if your knowledge base is static and never changes. Skip it if your content has no concept of expiry, versions, or time-bounded signals. Skip it if a stale answer carries no real consequence.

Why Vector Search Has No Sense of Time

The standard RAG pipeline embeds documents, embeds the query, finds the nearest matches, and sends them to the model [1, 2]. That works fine if your information never changes. But if you are constantly publishing new guides and rewriting old ones, this fails silently. You might not even notice until a user complains.

The vector store only knows the angle between the vectors [10]. It has no idea which document is six months old and which one I published last week.
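To make this concrete, here is a minimal sketch with toy bag-of-words vectors. The vocabulary, the vectors, and the ages are invented for illustration. The ranking is computed from the vectors alone, so the `age_days` field never influences the result.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical term counts over the vocabulary ["rate", "limit", "api", "requests"].
query = [1, 1, 1, 0]
docs = {
    "old_version": {"vector": [2, 2, 1, 1], "age_days": 180},
    "new_version": {"vector": [1, 1, 0, 1], "age_days": 7},
}

# Rank purely by similarity: age_days is stored but never consulted.
scores = {name: cosine(query, d["vector"]) for name, d in docs.items()}
ranked = sorted(scores, key=scores.get, reverse=True)
print(ranked)  # ['old_version', 'new_version']
```

The older document wins because it shares more terms with the query, which is the same failure seen in the retrieval logs.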

The usual fixes are deleting old documents or adding metadata filters. I tried both. They helped for about two weeks, and then I updated my content again and the same problem returned. A document with a 20% penalty can still rank first if its word overlap is strong enough.

When I looked closer, I realized this wasn’t one big problem. It was actually three separate problems, and each needs a different fix.

I had been collapsing all three into one bucket called “stale content” and applying the same fix to all of them. That’s why nothing was sticking.

Three Time Problems, Three Different Fixes

1. Expiration: a fact that is now false

Some documents have an expiry date. Showing them after that date isn’t a freshness issue. It’s a lie. You can’t just down-rank these. You have to remove them completely before the model ever sees them.

2. Temporality: facts that are only true right now

Some information matters intensely for a short window. A live notice about a site outage or a 48-hour policy change isn’t just extra context. It’s the most important document in your knowledge base while its window is open. An hour after it closes, it’s false.

3. Versioning: a fact that has been replaced

This was my biggest problem. When I updated a document, both versions stayed in the vector store. The old one kept winning because it had more matching words. The fix here is neither removal nor boosting. Let time decay handle it. The newer document should naturally outscore the older one when recency is part of the ranking signal.

| Problem | Nature | Wrong fix | Right fix |
|---|---|---|---|
| Expiration | Fact is now false | Down-weight | Hard remove before ranking |
| Temporality | Fact is active and urgent | Treat as normal | Boost while window is open |
| Versioning | Fact is superseded | Hard remove | Time decay ranks newer higher |

I kept seeing the same pattern: old documents, expired documents, and temporary alerts were all treated like the same problem. In practice, it behaved more like a set of rules than an actual temporal retrieval model.

How This Relates to Existing Research

I looked at existing approaches: graph-based retrieval, timestamped embeddings, recency priors baked into the retriever itself. Time-aware language models bake temporal signals directly into the model weights [5], while internet-augmented approaches fetch live documents at query time [3]. RealTime QA [4] frames the problem as one of answer currency rather than retrieval ranking. All of them required rebuilding infrastructure I didn’t have. I needed something I could drop into the system I already had running.

So I built a post-retrieval layer instead: a reranking step [6] applied downstream of dense passage retrieval. No retriever changes. No new embedding model. No new infrastructure. All it requires is a timestamp on each document and one reranking step at query time.

I needed something running within days on a live platform, not a rebuild. This was that.

What I Built: A Temporal Layer

What I ended up building was a temporal layer that sits between the retriever and the LLM. The retriever stays unchanged. It still pulls the top 20 candidates by cosine similarity. The temporal layer receives those candidates, reclassifies them, and reranks them before any reach the model.

That gap between retriever and LLM is where all the real work happens.

The Core Design: Two Orthogonal Axes

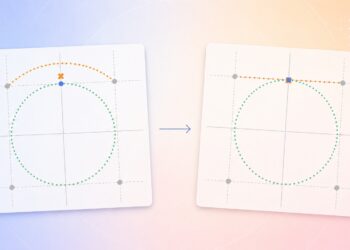

The key design decision is two independent classification axes, not one.

Axis 1: Validity State (3 States)

    EXPIRED  -> was true, is no longer true. Hard remove before ranking.
    VALID    -> true with no active time constraint. Normal scoring.
    TEMPORAL -> true within a currently active time window. Boost.

Most systems run on two states: valid and expired. What I was missing was a separate TEMPORAL state for active time-bound signals. A maintenance notice isn’t the same as a permanent rule. It’s urgent and needs to surface first. Once maintenance is over, the notice moves to EXPIRED and is removed.

You can find the full code for how this works in my project folder. Here is a simplified version of the main logic:

    # TEMPORAL state is gated on document kind.
    # Only EVENT documents reach TEMPORAL, not VERSIONED, not STATIC.
    if self.kind == DocumentKind.EVENT:
        return ValidityState.TEMPORAL
    return ValidityState.VALID  # VERSIONED docs with valid_from are still just VALID

The complete implementation with all edge cases is linked in the “Run It Yourself” section.

Axis 2: Document Kind (3 Kinds)

    STATIC    -> timeless fact (definitions, math, reference material)
    VERSIONED -> replaced by newer information (policies, tutorials, specs)
    EVENT     -> true only within a time window (announcements, outages)

This distinction matters a lot. Without it, my first version classified a new company policy as a temporary event and boosted it to the top of every search. A policy update behaves differently from a live outage notice, even if both are recent. It should be ranked normally and lose points slowly over time.

The fix: only EVENT documents (such as news or alerts) can receive the TEMPORAL boost. Other documents are never treated that way.

    policy_v2:          kind=VERSIONED  state=valid     window=supersedes policy_v1
    announcement_today: kind=EVENT      state=temporal  window=42h remaining

Even with identical timestamps, these documents behave differently because they represent different kinds of information.

Validity state decides what to do with a document; document kind decides why. Image by Author.
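The two axes can be combined in a single classification function. The sketch below is one possible reading of the rules described above, not the repository’s implementation: the `Doc` container, the field names, and the rule that a window is “active” once it has started and has an end date are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class DocumentKind(Enum):
    STATIC = "static"
    VERSIONED = "versioned"
    EVENT = "event"


class ValidityState(Enum):
    EXPIRED = "expired"
    VALID = "valid"
    TEMPORAL = "temporal"


@dataclass
class Doc:  # hypothetical container, not the repository's class
    doc_id: str
    kind: DocumentKind
    valid_from: Optional[datetime] = None
    valid_until: Optional[datetime] = None


def validity_state(doc: Doc, now: datetime) -> ValidityState:
    # Axis 1: anything past its end date is EXPIRED, whatever its kind.
    if doc.valid_until is not None and now > doc.valid_until:
        return ValidityState.EXPIRED
    # Assumption: a window is "active" when it has an end date and has started.
    started = doc.valid_from is None or doc.valid_from <= now
    window_active = doc.valid_until is not None and started
    # Axis 2 gates the boost: only EVENT documents may become TEMPORAL.
    if window_active and doc.kind == DocumentKind.EVENT:
        return ValidityState.TEMPORAL
    return ValidityState.VALID


now = datetime(2025, 1, 10, 12, 0)
docs = [
    Doc("policy_v1", DocumentKind.VERSIONED, valid_until=now - timedelta(days=30)),
    Doc("policy_v2", DocumentKind.VERSIONED, valid_from=now - timedelta(days=175)),
    Doc("announcement_today", DocumentKind.EVENT,
        valid_from=now - timedelta(hours=6), valid_until=now + timedelta(hours=42)),
]
states = {d.doc_id: validity_state(d, now).name for d in docs}
print(states)
```

Here `policy_v2` stays VALID even though it carries a `valid_from` date, because its kind is VERSIONED; only the EVENT document reaches TEMPORAL.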

The Scoring Formula

The final score for each document combines vector similarity with temporal signals:

    final_score = semantic_penalty
                  × [(1 − w) × vector_score
                     + w × (decay_score × recency_score
                            × validity_multiplier × event_relevance_multiplier)]

Where:

vector_score: cosine similarity, normalized to fall between 0 and 1 relative to the candidate pool.

decay_score: exponential decay based on document age, a technique applied to document freshness ranking in information retrieval [10].

    decay = 0.5 ^ (age_in_days / half_life_days)

You can also change how fast the score drops based on what the document is. For example, news uses a 7-day half-life, while legal documents use 365 days.

recency_score: a relative comparison within the current pool. The newest document gets the top score, the oldest gets the bottom. This ensures the system always prefers the freshest option available in the pool, even when every candidate is old in absolute terms.

validity_multiplier: applied based on validity state.

    EXPIRED  -> 0.0 (safety net; should already be filtered)
    VALID    -> 1.0 (normal)
    TEMPORAL -> 1.2 (boost for active EVENT signals)

event_relevance_multiplier: applied to EVENT documents only.

    EVENT + TEMPORAL + raw_cosine >= floor -> 1.0 (full boost)
    EVENT + TEMPORAL + raw_cosine <  floor -> 0.5 (boost halved)

semantic_penalty: applied to all document types.

    normalized_score >= min_threshold -> 1.0 (no penalty)
    normalized_score <  min_threshold -> 0.3 (relevance penalty)

w is temporal_weight, the balance between semantic relevance and temporal signals. I run it at 0.40 on my platform’s tutor, meaning 60% of the score still comes from meaning and 40% from time.

60% semantic match, 40% time, with freshness never allowed to override relevance. Image by Author.
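The formula above translates almost directly into code. The following is a minimal sketch, not the repository’s implementation: the function names are hypothetical, and the linear rescaling used for `recency_scores` is an assumption, since the article only says the newest document gets the top score and the oldest the bottom.

```python
from typing import List


def decay_score(age_days: float, half_life_days: float) -> float:
    """Exponential decay: the score halves every half_life_days."""
    return 0.5 ** (age_days / half_life_days)


def recency_scores(ages_days: List[float]) -> List[float]:
    """Assumed linear rescaling within the pool: newest -> 1.0, oldest -> 0.0."""
    newest, oldest = min(ages_days), max(ages_days)
    if newest == oldest:
        return [1.0] * len(ages_days)
    return [(oldest - age) / (oldest - newest) for age in ages_days]


def final_score(vector_score, decay, recency, validity_multiplier,
                event_relevance_multiplier, semantic_penalty, w=0.40):
    """Blend semantic and temporal signals following the scoring formula."""
    temporal = decay * recency * validity_multiplier * event_relevance_multiplier
    return semantic_penalty * ((1 - w) * vector_score + w * temporal)


# A fresh, fully relevant, active EVENT: every factor at its best value.
best_case = final_score(vector_score=1.0, decay=1.0, recency=1.0,
                        validity_multiplier=1.2, event_relevance_multiplier=1.0,
                        semantic_penalty=1.0)
print(round(best_case, 3))   # 1.08
print(decay_score(90, 90))   # 0.5
```

With w = 0.40 the ceiling for an active, relevant EVENT is 0.6 + 0.4 × 1.2 = 1.08, which is consistent with the 1.079 reported for announcement_today in Scenario 1.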

The Failure That Revealed the EVENT Relevance Gate

After the first version was running, I noticed a new problem. A user asked about “engineering team health,” but the top result was a notice about website maintenance.

The notice was new. But it had nothing to do with the question. It won simply because it was the freshest thing in the system. Being new isn’t enough. The document also needs to be relevant.

Without some relevance gating, fresh alerts started showing up in unrelated queries.

So I added a hard requirement: an event only gets its boost if its raw cosine score clears a minimum floor. If the content doesn’t talk about the right topic, the recency advantage disappears.

    def _event_relevance_multiplier(self, doc, state, raw_vector_score) -> float:
        # Only EVENT documents are subject to the relevance gate.
        if doc.kind != DocumentKind.EVENT:
            return 1.0
        # The gate only matters while the event window is active.
        if state != ValidityState.TEMPORAL:
            return 1.0
        # Compare the raw (un-normalized) cosine score against an absolute floor.
        floor = self.config.event_min_raw_vector_score
        return 1.0 if raw_vector_score >= floor else 0.5

Why raw cosine and not normalized? Because it acts as an absolute ruler.

Normalized scores are relative. If all your results are weak, the “least bad” one might still score 80%. That’s dangerous. Raw cosine doesn’t care about the other documents. If a query about “team health” has almost nothing in common with a “technical update,” the score stays near zero regardless.

    reason: EVENT signal present but low query relevance
            (raw sim 0.101 < 0.2) — temporal boost halved

Threshold calibration note: the right value for this floor depends on the embedding model you use.

- TF-IDF / sparse embeddings: use a floor around 0.20. Word-match scores are naturally lower.

- Dense models like text-embedding-3-small or all-MiniLM-L6-v2 [7]: use 0.35 to 0.50. These models score higher by default, so the floor needs to move up.

Four Scenarios: Before and After

These are the exact outputs from running demo.py on the same queries, two ways: naive RAG and temporal RAG.

Scenario 1: API Rate Limits (expired answer is dangerous)

    QUERY: What are the API rate limits? Will I get a 429 error?

    NAIVE RAG
    1. [policy_v1] age=540d | EXPIRED | sim=0.447
    2. [announcement_today] age=0d | valid | sim=0.329
    3. [tutorial_old] age=600d | EXPIRED | sim=0.303

    TEMPORAL RAG
    [announcement_today]
      age    : 0.3 days | kind: EVENT | state: temporal (active)
      window : 42h remaining
      reason : Active EVENT signal (42h remaining) — overrides static sources
      FINAL SCORE : 1.079
    [policy_v2]
      age    : 175.0 days | kind: VERSIONED | state: ✓ valid
      reason : Latest version — supersedes policy_v1
      FINAL SCORE : 0.573
    [news_recent]
      age    : 30.0 days | kind: STATIC | state: ✓ valid
      reason : Fresh, open-ended fact — high confidence
      FINAL SCORE : 0.509

    removed  : ['policy_v1', 'tutorial_old']
    surfaced : ['policy_v2', 'news_recent']

Naive RAG tells the user they’ll hit 429 errors at 100 requests per minute. The actual limit is 1,000. Temporal RAG leads with the live maintenance announcement (rate limiting is currently suspended) and follows with the current policy.

Scenario 2: LLM Scaling Research

    QUERY: Do larger language models keep improving with scale?

    NAIVE RAG
    1. [tutorial_old] age=600d | EXPIRED | sim=0.226
    2. [research_2022] age=730d | valid | sim=0.141
    3. [research_2026] age=120d | valid | sim=0.136

    TEMPORAL RAG
    [research_2026] STATIC ✓ valid score=0.662
      reason: Stale — semantically relevant but low freshness weight
    [research_2022] STATIC ✓ valid score=0.600
      reason: Stale — semantically relevant but low freshness weight
    [news_old] STATIC ✓ valid score=0.476
      reason: Stale — semantically relevant but low freshness weight

    removed : tutorial_old
    surfaced: news_old

Naive RAG ranks a dead document first by word overlap. Temporal RAG removes it and puts the 2026 research at the top, where it belongs. The corpus documents in this scenario mirror the real shift in scaling research: the earlier plateau finding [8] was later revised by compute-optimal scaling studies [9].

Scenario 3: Company Health (one story vs the full picture)

    QUERY: What is the current state of the engineering team and company health?

    NAIVE RAG
    1. [news_old] age=400d | valid | sim=0.600
    2. [tutorial_new] age=85d | valid | sim=0.385
    3. [tutorial_old] age=600d | EXPIRED | sim=0.304

    TEMPORAL RAG
    [news_old] STATIC ✓ valid score=0.602
      reason: Stale — semantically relevant but low freshness weight
    [news_recent] STATIC ✓ valid score=0.543
      reason: Fresh, open-ended fact — high confidence
    [tutorial_new] VERSIONED ✓ valid score=0.519
      reason: Latest version — supersedes tutorial_old

    removed  : tutorial_old
    surfaced : news_recent

The live announcement didn’t appear here because it failed the relevance gate. Its raw cosine was 0.165, below the 0.20 floor. But both news articles showed up, which is exactly right. The LLM can now read both and understand how things have changed over time. Naive RAG only surfaced the old story and two unrelated guides.

Scenario 4: Live Outages (urgent signal buried)

    QUERY: Are there any current API outages or limit suspensions I should know about?

    NAIVE RAG
    1. [policy_v1] age=540d | EXPIRED | sim=0.390
    2. [policy_v2] age=175d | valid | sim=0.267
    3. [announcement_today] age=0d | valid | sim=0.101

    TEMPORAL RAG
    [policy_v2] VERSIONED ✓ valid score=0.641
      reason: Latest version — supersedes policy_v1
    [announcement_today] EVENT temporal score=0.465
      reason: EVENT signal present but low query relevance
              (raw sim 0.101 < 0.2) — temporal boost halved
    [news_recent] STATIC ✓ valid score=0.082
      reason: Penalized: normalized vector score 0.000 below relevance threshold

    removed : policy_v1

Naive RAG buries the live update at position 3 behind an expired policy. Temporal RAG moves it to position 2. It didn’t reach first because the word overlap between “outages” and “upgrades” was low. With dense embeddings instead of TF-IDF, it would likely have taken the top spot.

What broke next, and how I fixed it

Once the core temporal layer was working, real queries surfaced more surprises. Here’s what broke next.

When a document is too old to stand alone but too useful to drop

Some documents weren’t wrong, just old enough that I didn’t want them answering alone. So I added a third action between SOLO and DROP: weak documents get retrieved only if a fresher source comes with them. Invalid ones never reach the model.
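The three actions can be sketched as a small planning function over decay scores. This is an illustrative sketch, not the repository’s code: the function name, the two thresholds (0.20 and 0.50), and the definition of “gain” as the partner’s decay minus the weak document’s decay are assumptions.

```python
from typing import Dict, Optional, Tuple

Plan = Tuple[str, Optional[str], float]  # (action, partner_id, gain)


def plan_retrieval(decays: Dict[str, float],
                   invalid_below: float = 0.20,
                   weak_below: float = 0.50) -> Dict[str, Plan]:
    """Assign SOLO, PAIR, or DROP to each document from its decay score."""
    # Documents fresh enough to stand alone; the freshest one is the pairing partner.
    fresh = {doc_id: s for doc_id, s in decays.items() if s >= weak_below}
    partner = max(fresh, key=fresh.get) if fresh else None
    plan: Dict[str, Plan] = {}
    for doc_id, score in decays.items():
        if score < invalid_below:
            plan[doc_id] = ("DROP", None, 0.0)       # too stale to retrieve at all
        elif score < weak_below:
            if partner is None:
                plan[doc_id] = ("DROP", None, 0.0)   # weak and nothing fresher to pair with
            else:
                plan[doc_id] = ("PAIR", partner, round(fresh[partner] - score, 3))
        else:
            plan[doc_id] = ("SOLO", None, 0.0)       # fresh enough to answer alone
    return plan


plan = plan_retrieval({"research_old": 0.100, "research_weak": 0.351, "research_fresh": 0.891})
for doc_id, action in plan.items():
    print(doc_id, action)
```

A weak document with no fresher partner is dropped rather than served alone, which matches the intent of never letting an old source answer by itself.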

    [Invalid] research_old    decay=0.100 → DO NOT RETRIEVE
    [Weak]    research_weak   decay=0.351 → PAIR WITH research_fresh (gain=+0.540)
    [Good]    research_fresh  decay=0.891 → RETRIEVE

When the score looks good but the answer isn’t certain

A high score doesn’t mean high confidence. When two documents score 0.73 and 0.72 but contradict each other, the system shouldn’t act certain. I added confidence tiers that check the margin and flag conflicts: a close race or contradiction drops the result to LOW regardless of the raw score.

    policy_v3 — clear winner:            confidence 0.7485 → HIGH
    policy_v3 — conflict, narrow margin: confidence 0.4727 → LOW
    math_theorem:                        confidence 0.6992 → MEDIUM

The second policy_v3 row is the one that matters: the score went up from the conflict boost, while confidence went down because the conflict is a warning signal.
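A tiering rule of this kind can be sketched in a few lines. The thresholds below (a 0.05 minimum margin, HIGH at 0.70, MEDIUM at 0.50) are hypothetical values chosen only so that the example is self-consistent; the repository may compute confidence and tiers differently.

```python
def confidence_tier(confidence: float, margin: float, has_conflict: bool,
                    min_margin: float = 0.05, high_at: float = 0.70,
                    medium_at: float = 0.50) -> str:
    """Map a confidence value to a tier; conflicts and close races force LOW."""
    # A contradiction or a near-tie overrides the raw confidence value.
    if has_conflict or margin < min_margin:
        return "LOW"
    if confidence >= high_at:
        return "HIGH"
    if confidence >= medium_at:
        return "MEDIUM"
    return "LOW"


print(confidence_tier(0.75, margin=0.20, has_conflict=False))  # HIGH
print(confidence_tier(0.73, margin=0.01, has_conflict=True))   # LOW
print(confidence_tier(0.65, margin=0.20, has_conflict=False))  # MEDIUM
```

Checking the conflict and margin first is the design choice that matters: a high number can never talk the system out of a warning signal.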

Knowing why something was rejected

When the system rejects a document, I want to know exactly which rule fired and on which query. I added a failure log keyed by query_id.

    Failure summary (3 rejections — query_id=d211ffdc)
    EXPIRED_VERSIONED_DOC × 1 doc=expired_policy
    STALE_STATIC_DOC × 1 doc=stale_reference
    BELOW_RELEVANCE_GATE × 1 doc=fresh_irrelevant

Codes in use: EXPIRED_VERSIONED_DOC, STALE_STATIC_DOC, HARD_EXPIRED_EVENT, BELOW_RELEVANCE_GATE, OUT_OF_TIME_RANGE, PAIR_PARTNER_NOT_FOUND. This is what I open first when something surfaces the wrong document.
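A failure log like this needs very little machinery. The class below is a minimal sketch with a hypothetical name and interface; only the rejection codes and the idea of keying by query_id come from the article.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple


class FailureLog:
    """Hypothetical in-memory log of rejected documents, keyed by query_id."""

    def __init__(self) -> None:
        self._by_query: Dict[str, List[Tuple[str, str]]] = defaultdict(list)

    def record(self, query_id: str, code: str, doc_id: str) -> None:
        # Store which rule fired and for which document.
        self._by_query[query_id].append((code, doc_id))

    def summary(self, query_id: str) -> Counter:
        # Count rejections per code without creating entries for unknown queries.
        return Counter(code for code, _ in self._by_query.get(query_id, []))


log = FailureLog()
log.record("d211ffdc", "EXPIRED_VERSIONED_DOC", "expired_policy")
log.record("d211ffdc", "STALE_STATIC_DOC", "stale_reference")
log.record("d211ffdc", "BELOW_RELEVANCE_GATE", "fresh_irrelevant")
summary = log.summary("d211ffdc")
print(sum(summary.values()), dict(summary))
```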

When the fact changed significantly between versions

Replacing “100 requests per minute” with “1,000 requests per minute” is not a wording change. I added conflict severity detection that boosts the winner’s score and simultaneously lowers its confidence, so the right answer surfaces but the model stays cautious.
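One way to quantify such a change is by the ratio between the old and new values. The sketch below assumes severity = 1 − min/max, a score boost of 0.2 × severity, and a confidence penalty of 0.1 × severity. These relationships are inferred from the sample output printed for this feature rather than taken from the repository, and the function name is hypothetical.

```python
def conflict_adjustment(old_value: float, new_value: float,
                        max_boost: float = 0.20,
                        max_conf_penalty: float = 0.10):
    """Return (severity, score_boost, confidence_penalty) for a numeric change."""
    larger = max(abs(old_value), abs(new_value))
    smaller = min(abs(old_value), abs(new_value))
    # Severity is 0 when the values match and approaches 1 as the ratio grows.
    severity = 0.0 if larger == 0 else 1.0 - smaller / larger
    return (round(severity, 3),
            round(max_boost * severity, 3),
            round(max_conf_penalty * severity, 3))


print(conflict_adjustment(100, 5000))   # (0.98, 0.196, 0.098)
print(conflict_adjustment(1000, 500))   # (0.5, 0.1, 0.05)
print(conflict_adjustment(1000, 1000))  # (0.0, 0.0, 0.0)
```

The penalty is returned as a positive number to subtract from confidence, so a larger change both lifts the winner’s score and makes the system less sure of it.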

    '100'  → '5000'  severity=0.980  boost=+0.196  conf_pen=-0.098  (50× — severe)
    '1000' → '500'   severity=0.500  boost=+0.100  conf_pen=-0.050
    '1000' → '1000'  severity=0.000  boost=0       conf_pen=0

When the user specifies a time range

A learner typed “show me research from 2021 to 2023.” The system returned the three most recent documents, none from that range. Temporal decay made it worse, ranking newer documents higher when older ones were exactly what was asked for.

I added a time-range parser that applies a strict filter when the query signals a date window, and steps aside entirely when it doesn’t. I didn’t want it to guess.
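A conservative parser of this kind can be built from two regular expressions. This sketch only recognizes four-digit years from 1900 to 2099, either as a range (“2021-2023”, “2021 to 2023”) or as a single year; the function names and the `year` field on each document are assumptions for illustration.

```python
import re
from typing import List, Optional, Tuple

_YEAR = r"(?:19|20)\d{2}"
_RANGE = re.compile(rf"\b({_YEAR})\s*(?:-|to)\s*({_YEAR})\b")
_SINGLE = re.compile(rf"\b({_YEAR})\b")


def parse_year_range(query: str) -> Optional[Tuple[int, int]]:
    """Return (start_year, end_year) if the query names a year or range, else None."""
    match = _RANGE.search(query)
    if match:
        start, end = int(match.group(1)), int(match.group(2))
        return (min(start, end), max(start, end))
    match = _SINGLE.search(query)
    if match:
        year = int(match.group(1))
        return (year, year)
    return None  # no explicit date signal: do not guess


def filter_by_range(docs: List[dict], query: str) -> List[dict]:
    """Apply a strict year filter only when the query asks for one."""
    year_range = parse_year_range(query)
    if year_range is None:
        return docs
    start, end = year_range
    return [d for d in docs if start <= d["year"] <= end]


docs = [{"id": "research_2019", "year": 2019},
        {"id": "research_2022", "year": 2022},
        {"id": "research_2026", "year": 2026}]
kept = [d["id"] for d in filter_by_range(docs, "Show me research from 2021-2023")]
print(kept)  # ['research_2022']
```

Returning `None` when no year appears is what lets the filter step aside entirely for queries such as “Latest embeddings research”.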

    'Show me research from 2021-2023'  → kept: research_2022
    'What were the findings in 2019?'  → kept: research_2019
    'Latest embeddings research'       → no filter, all docs pass

When the query tells you how much recency should matter

“What’s the current rate limit?” needs the freshest answer available. “How does cosine similarity work?” doesn’t care if I wrote it three years ago. I was applying the same temporal weight to both. The weight now adjusts based on signal words in the query.
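A simple version of this adjustment is a lookup of signal words. The word lists below are hypothetical; the three weights (0.70, 0.55, and a 0.20 baseline) are the values shown in the sample output for this feature.

```python
import re

# Hypothetical signal-word tiers, checked from strongest to weakest.
SIGNAL_WEIGHTS = [
    ({"current", "currently", "latest", "now", "today"}, 0.70),
    ({"recent", "recently", "changed", "updated"}, 0.55),
]
BASELINE_WEIGHT = 0.20


def temporal_weight_for(query: str) -> float:
    """Pick a temporal weight from recency signal words in the query."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    for signals, weight in SIGNAL_WEIGHTS:
        if words & signals:
            return weight
    return BASELINE_WEIGHT


for q in ("What's the current rate limit?",
          "Has the rate limit changed recently?",
          "How does cosine similarity work?"):
    print(q, temporal_weight_for(q))
```

Matching on whole words rather than substrings avoids false hits such as “now” inside “know”.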

    'What's the current rate limit?'        → temporal_weight: 0.70
    'Has the rate limit changed recently?'  → temporal_weight: 0.55
    'How does cosine similarity work?'      → temporal_weight: 0.20 (baseline)

Seeing inside the system, and keeping version conflicts out of context

I wanted to know not just where each document ranked, but what to do with it. The freshness report gives kind-aware advice per document:

    fresh_event     [EVENT]      grade: A → Verify before serving, window closes soon
    current_policy  [VERSIONED]  grade: D → Check for a newer version
    math_theorem    [STATIC]     grade: F → May have been superseded

The final problem was subtler. Even with good reranking, the LLM produced hedged or averaged answers when v1 and v3 of the same policy both ended up in context. It doesn’t know which version to trust, so it tries to reconcile everything it sees. What solved it was deduplicating by version chain before documents reached the temporal layer at all.

    Input: policy_v1 (v1), policy_v2 (v2), policy_v3 (v3)
    policy_v1 — EXPIRED → removed
    policy_v2 — superseded by v3 → removed
    policy_v3 — kept ✓
    Result: ['policy_v3']

Policy v3 goes in. The conflict never comes up.
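Deduplicating by version chain amounts to keeping the highest non-expired version per chain. The sketch below assumes each document is a dict with hypothetical `chain`, `version`, and `expired` fields; documents without a chain are treated as their own chain.

```python
from typing import Dict, List


def latest_per_chain(docs: List[dict]) -> List[str]:
    """Keep only the newest non-expired document from each version chain."""
    latest: Dict[str, dict] = {}
    for doc in docs:
        if doc.get("expired", False):
            continue  # expired versions never reach the context
        chain = doc.get("chain", doc["id"])  # standalone docs form their own chain
        current = latest.get(chain)
        if current is None or doc.get("version", 0) > current.get("version", 0):
            latest[chain] = doc
    return [doc["id"] for doc in latest.values()]


docs = [
    {"id": "policy_v1", "chain": "policy", "version": 1, "expired": True},
    {"id": "policy_v2", "chain": "policy", "version": 2},
    {"id": "policy_v3", "chain": "policy", "version": 3},
    {"id": "math_theorem"},
]
kept = latest_per_chain(docs)
print(kept)  # ['policy_v3', 'math_theorem']
```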

Not All Content Decays at the Same Rate

One thing became clear quickly when I applied this to the platform: a single half-life value doesn’t work for all content types. A breaking update and a mathematical definition age very differently, and treating them the same way was quietly sabotaging the rankings.

    breaking_news: half_life=1d,     temporal_weight=0.70
    news:          half_life=7d,     temporal_weight=0.55
    policy:        half_life=90d,    temporal_weight=0.45
    research:      half_life=180d,   temporal_weight=0.35
    legal:         half_life=365d,   temporal_weight=0.25
    reference:     half_life=1825d,  temporal_weight=0.10
    mathematics:   half_life=36500d, temporal_weight=0.01

Two documents can both be “old” after a year, but only one of them is wrong. Image by Author.

For breaking news, being new is basically the whole point. For a math proof, age doesn’t matter: a theorem from 70 years ago is just as valid as one from last week. On EmiTechLogic I group my content into bands: tutorials use the “policy” setting since newer is usually better, and reference material uses the “reference” setting since the facts don’t expire. Getting this distinction right is what actually made the whole thing work.

There is one more constraint layered on top of half-life: a decay floor. Without it, a math theorem from 1954 gets a low decay score, not because it’s wrong, but simply because it’s old. The temporal component then drags its final score down even when the semantic match is strong. The floor prevents that. In the implementation, DECAY_FLOORS maps a (doc_type, kind) pair to a minimum decay value: mathematics/STATIC floors at 0.95, reference/STATIC at 0.70, research/STATIC at 0.10. Documents without a floor entry decay freely; documents with one never drop below their minimum. A cosine-similarity winner that happens to be old still competes on meaning rather than losing automatically on age.
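Half-life profiles and floors combine into one small function. The half-life values and floors below are the ones listed in this section; the function name and the dictionary layout are assumptions, and the repository’s DECAY_FLOORS structure may differ in detail.

```python
HALF_LIFE_DAYS = {
    "breaking_news": 1, "news": 7, "policy": 90, "research": 180,
    "legal": 365, "reference": 1825, "mathematics": 36500,
}

# (doc_type, kind) -> minimum decay value; pairs not listed decay freely.
DECAY_FLOORS = {
    ("mathematics", "STATIC"): 0.95,
    ("reference", "STATIC"): 0.70,
    ("research", "STATIC"): 0.10,
}


def floored_decay(age_days: float, doc_type: str, kind: str) -> float:
    """Exponential decay with a per-type half-life and an optional floor."""
    raw = 0.5 ** (age_days / HALF_LIFE_DAYS[doc_type])
    return max(raw, DECAY_FLOORS.get((doc_type, kind), 0.0))


print(floored_decay(90, "policy", "VERSIONED"))        # 0.5
print(floored_decay(72 * 365, "mathematics", "STATIC"))  # 0.95
print(floored_decay(3650, "research", "STATIC"))       # 0.1
```

A 72-year-old theorem would decay to roughly 0.61 on half-life alone; the floor lifts it back to 0.95 so that age barely affects its final score.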

The implementation cost is lower than you’d expect. The temporal reranking step adds roughly 15 to 30 milliseconds per search, negligible next to the 1 to 4 seconds LLM inference typically takes. You don’t need to change your search engine, your data, or your embedding model. The entire temporal layer is a pure Python post-processing step that runs downstream of whatever vector search you’re already using.

The only real upfront requirement is metadata on your documents. At minimum, every document needs a created_at timestamp. valid_from, valid_until, and kind give you the best results, but they’re optional: documents without any metadata fall back to STATIC/VALID with standard time-decay scoring, which is already better than nothing. On my platform I automated the tagging entirely. The system now distinguishes between an update, an alert, and a permanent fact without me labeling anything manually.

What This Does Not Solve

A few honest caveats before you build this.

Implicit expiration is the one I still haven’t fully solved. Most documents don’t announce when they go stale: a tutorial for a deprecated endpoint has no expiry date, so the system can’t know it’s rotting. My heuristic rules catch the obvious cases, but edge cases slip through, and I find them the same way I found the original problem: a learner gets an answer that’s quietly wrong.

Conflicting sources are outside the temporal layer’s scope entirely. It surfaces the most recent and relevant documents; resolving disagreements between them is the LLM’s problem, not the retriever’s.

Calibration is model-specific in ways that will bite you. The 0.20 raw cosine floor is tuned for TF-IDF. Dense models like text-embedding-3-small score higher in absolute terms, so that floor needs to move to 0.35–0.50. Test against your own queries before you trust any threshold I’ve listed.

The half-life profiles are starting points, not constants. What “stale” means for a legal team is not what it means for a news site. Run the system on real queries from your domain and tune from there.

The Takeaway

The problem isn’t that RAG systems retrieve wrong documents. It’s that they have no concept of when a document was true, only how similar it is to the query.

Two axes drove the whole design, and the kind axis was the one I almost missed entirely. Validity state: whether a document is expired (remove it), valid (score normally), or temporal (boost it while its window is active). Document kind: whether it’s a timeless fact (STATIC), something that has been replaced (VERSIONED), or something that’s only true within a time window (EVENT).

Without the kind axis, a versioned policy with an effective date looks identical to a time-bounded event and gets mislabeled. The system produces the wrong result for a right-sounding reason. That’s the hardest class of bug to catch in production, because nothing looks broken.

The semantic threshold closes the last gap. Fresh-but-irrelevant documents can take over ranking when temporal scores are high. A minimum raw cosine floor for EVENT documents makes sure freshness never fully overrides relevance.

Similarity alone wasn’t enough anymore. I needed the retriever to care about whether the information was still valid.

Run It Yourself

The full implementation (temporal_rag.py, demo.py, and advanced.py) is available at: https://github.com/Emmimal/temporal-rag/

The repository includes the complete validity_state implementation, all decay profiles, the SequenceAwareRetriever, and the freshness report API. The demo runs without any API key using a deterministic TF-IDF embedder, so you can reproduce the exact output shown above on any machine.

    git clone https://github.com/Emmimal/temporal-rag/
    cd temporal-rag
    pip install numpy
    python demo.py

References

Foundational RAG

[1] Lewis, P., Perez, E., Piktus, A., Petroni, F., Karpukhin, V., Goyal, N., Küttler, H., Lewis, M., Yih, W.-T., Rocktäschel, T., Riedel, S., & Kiela, D. (2020). Retrieval-augmented generation for knowledge-intensive NLP tasks. Advances in Neural Information Processing Systems, 33, 9459–9474. https://arxiv.org/abs/2005.11401

[2] Gao, Y., Xiong, Y., Gao, X., Jia, K., Pan, J., Bi, Y., Dai, Y., Sun, J., Wang, M., & Wang, H. (2024). Retrieval-augmented generation for large language models: A survey. arXiv preprint arXiv:2312.10997. https://doi.org/10.48550/arXiv.2312.10997

Temporal Reasoning in Language Models

[3] Lazaridou, A., Gribovskaya, E., Stokowiec, W., & Grigorev, N. (2022). Internet-augmented language models through few-shot prompting for open-domain question answering. arXiv preprint arXiv:2203.05115. https://doi.org/10.48550/arXiv.2203.05115

[4] Kasai, J., Sakaguchi, K., Takahashi, Y., Le Bras, R., Asai, A., Yu, X., Radev, D., Smith, N. A., Choi, Y., & Inui, K. (2022). RealTime QA: What’s the answer right now? arXiv preprint arXiv:2207.13332. https://doi.org/10.48550/arXiv.2207.13332

[5] Dhingra, B., Cole, J. R., Eisenschlos, J. M., Gillick, D., Eisenstein, J., & Cohen, W. W. (2022). Time-aware language models as temporal knowledge bases. Transactions of the Association for Computational Linguistics, 10, 257–273. https://doi.org/10.1162/tacl_a_00459

Dense Retrieval and Reranking

[6] Nogueira, R., & Cho, K. (2019). Passage re-ranking with BERT. arXiv preprint arXiv:1901.04085. https://doi.org/10.48550/arXiv.1901.04085

[7] Reimers, N., & Gurevych, I. (2019). Sentence-BERT: Sentence embeddings using Siamese BERT-networks. Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), 3982–3992. https://doi.org/10.18653/v1/D19-1410

Scaling Laws (referenced in Scenario 2)

[8] Kaplan, J., McCandlish, S., Henighan, T., Brown, T. B., Chess, B., Child, R., Gray, S., Radford, A., Wu, J., & Amodei, D. (2020). Scaling laws for neural language models. arXiv preprint arXiv:2001.08361. https://doi.org/10.48550/arXiv.2001.08361

[9] Hoffmann, J., Borgeaud, S., Mensch, A., Buchatskaya, E., Cai, T., Rutherford, E., de las Casas, D., Hendricks, L. A., Welbl, J., Clark, A., Hennigan, T., Noland, E., Millican, K., van den Driessche, G., Damoc, B., Guy, A., Osindero, S., Simonyan, K., Elsen, E., Rae, J. W., Vinyals, O., & Sifre, L. (2022). Training compute-optimal large language models. arXiv preprint arXiv:2203.15556. https://doi.org/10.48550/arXiv.2203.15556

Information Retrieval Fundamentals

[10] Manning, C. D., Raghavan, P., & Schütze, H. (2008). Introduction to information retrieval. Cambridge University Press. https://nlp.stanford.edu/IR-book/

Disclosure

All code in this article was written by me and is original work, developed and tested on Python 3.12.6. Benchmark numbers and retrieval outputs are from actual demo runs on my local machine (Windows 11, CPU only) and are reproducible by cloning the repository and running demo.py and advanced.py. The temporal layer, scoring formulas, document classification system, and all design decisions are independent implementations not derived from any cited codebase. The demo runs without any API key using a deterministic TF-IDF embedder; numpy is the only external dependency required to reproduce all outputs shown. I have no financial relationship with any tool, library, or company mentioned in this article.