I ship content across multiple domains and have too many things vying for my attention: a homelab, infrastructure monitoring, smart home devices, a technical writing pipeline, a book project, home automation, and a handful of other things that would normally require a small team. The output is real: published blog posts, research briefs staged before I need them, infrastructure anomalies caught before they become outages, drafts advancing through review while I’m asleep.

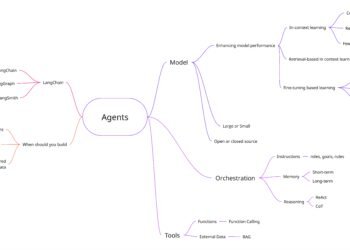

My secret, if you can call it that, is autonomous AI agents running on a homelab server. Each owns a domain. Each has its own identity, memory, and workspace. They run on schedules, pick up work from inboxes, hand off results to one another, and mostly manage themselves. The runtime orchestrating all of this is OpenClaw.

This isn’t a tutorial, and it’s definitely not a product pitch. It’s a builder’s journal. The system has been running long enough to break in interesting ways, and I’ve learned enough from those breaks to build mechanisms around them. What follows is a rough map of what I built, why it works, and the connective tissue that holds it together.

Let’s jump in.

9 Orchestrators, 35 Personas, and a Lot of Markdown (and growing)

When I first started, it was just the main OpenClaw agent and me. I quickly saw the need for multiple agents: a technical writing agent, a technical reviewer, and several technical specialists who could weigh in on specific domains. Before long, I had nearly 30 agents, all with their required five markdown files, workspaces, and memories. Nothing worked well.

Eventually, I got that down to eight total orchestrator agents and a healthy library of personas they could assume or use to spawn a subagent.

One of my favorite things when building out agents is naming them, so let’s see what I’ve got so far today:

CABAL (from Command and Conquer – the evil AI in one of the games) – this is the central coordinator and primary interface with my OpenClaw cluster.

DAEDALUS (AI from Deus Ex) – responsible for technical writing: blogs, LinkedIn posts, research/opinion papers, decision papers. Anything where I need deep technical knowledge, expert reviewers, and researchers, this is it.

REHOBOAM (Westworld narrative machine) – responsible for fiction writing, because I daydream about writing the next big cyber/sci-fi series. This includes editors, reviewers, researchers, a roundtable discussion, a book club, and a few other goodies.

PreCog (from Minority Report) – responsible for anticipatory research, building out an internal wiki, and trying to notice topics that I’ll want to dive deep into. It also takes ad hoc requests, so when I get a glimmer of an idea, PreCog can pull together sources so that when I’m ready, I have a hefty, curated research report to jump-start my work.

TACITUS (also from Command and Conquer) – responsible for my homelab infrastructure. I have a couple of servers, a NAS, several routers, Proxmox, Docker containers, Prometheus/Grafana, etc. This one owns all of that. If I have a problem, I don’t SSH in and figure it out, or even jump into a Claude Code session. I Slack TACITUS, and it handles it.

LEGION (also from Command and Conquer) – focuses on self-improvement and system enhancements.

MasterControl (from Tron) – this is my engineering team. It has front-end and back-end developers, requirements gathering/documentation, QA, code review, and security review. Most personas rely on Claude Code underneath, but that can easily change with a simple alteration of the markdown personas.

HAL9000 (you know where it’s from) – this one owns my SmartHome (the irony is intentional). It has access to my Philips Hue, SmartThings, HomeAssistant, AirThings, and Nest. It tells me when sensors go offline, when something breaks, or when air quality gets dicey.

TheMatrix (really, come on) – this one, I’m pretty proud of. In the early days of agentic AI and the AutoGen framework, I created several systems, each with more than one persona, that would collaborate and return a summary of their discussion. I used this to quickly ideate on topics and gather a diverse set of synthetic opinions from different personas. The big drawback was that I never wrapped it in a UI; I always had to open VS Code and edit code when I needed another group. Well, I handed this off to MasterControl, and it used Python and the Strands framework to implement the same thing. Now I tell it how many personas I want, a bit about each, and whether I want it to create more for me. Then it turns them loose and gives me an overview of the discussion. It’s The Matrix, early alpha version, when it was all just green lines of code and no woman in the red dress.

And I’m intentionally leaving off a couple of orchestrators here because they’re still baking, and I’m not sure if they will be long-lived. I’ll save those for future posts.

Each has real domain ownership. DAEDALUS doesn’t just write when asked. It maintains a content pipeline, runs topic discovery on a schedule, and applies quality standards to its own output. PreCog proactively surfaces topics aligned with my interests. TACITUS checks system health on a schedule and escalates anomalies.

That’s the “orchestrator” distinction. These agents have agency within their domains.

Now, the second layer: personas. Orchestrators are expensive (more on that later). You want heavyweight models making judgment calls. But not every task needs a heavyweight model.

Reformatting a draft for LinkedIn? Running a copy-editing pass? Reviewing code snippets? You don’t need Opus to reason through every sentence. You need a fast, cheap, focused model with the right instructions.

That’s a persona. A markdown file containing a role definition, constraints, and an output format. When DAEDALUS needs to edit a draft, it spawns a tech-editor persona on a smaller model. The persona does one job, returns the output, and disappears. No persistence. No memory. Task-in, task-out.

The persona library has grown to about 35 across seven categories:

- Creative: writers, reviewers, critique specialists

- TechWriting: writer, editor, reviewer, code reviewer

- Design: UI designer, UX researcher

- Engineering: AI engineer, backend architect, rapid prototyper

- Product: feedback synthesizer, sprint prioritizer, trend researcher

- Project Management: experiment tracker, project shipper

- Research: still a placeholder, since the orchestrators handle research directly for now

Think of it as staff engineers versus contractors. Staff engineers (orchestrators) own the roadmap and make judgment calls. Contractors (personas) come in for a sprint, do the work, and leave. You don’t need a staff engineer to format a LinkedIn post.

Agents Are Expensive – Personas Are Not

Let me get specific about cost tiering, because this is where many agent system designs go wrong.

The instinct is to make everything powerful. Every task goes through your best model. Every agent has full context. You very quickly run up a bill that makes you rethink your life choices. (Ask me how I know.)

The fix: be deliberate about what needs reasoning versus what needs instruction-following.

Orchestrators run on Opus (or equivalent). They make decisions: what to work on next, how to structure a research approach, whether output meets quality standards, and when to escalate. You need good judgment there.

Writing tasks run on Sonnet. Strong enough for quality prose, significantly cheaper. Drafting, editing, and research synthesis happen here.

Lightweight formatting: Haiku. LinkedIn optimization, quick reformatting, constrained outputs. The persona file tells the model exactly what to produce. You don’t need reasoning for this. You need pattern-matching and speed.
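The tiering above boils down to a lookup from task kind to model tier. Here is a minimal sketch of that idea; the task-kind names, the short tier labels, and the fallback to the middle tier are all assumptions for illustration, not something OpenClaw defines.

```python
# Hypothetical task kinds and tier labels. The post names Opus, Sonnet, and
# Haiku but does not give exact model identifiers, so these are placeholders.
MODEL_TIERS = {
    "orchestrate": "opus",  # judgment calls: what to work on, when to escalate
    "write": "sonnet",      # drafting, editing, research synthesis
    "format": "haiku",      # constrained reformatting, LinkedIn optimization
}


def pick_model(task_kind: str) -> str:
    """Return the tier assumed adequate for a task kind."""
    # Assumption: unknown task kinds fall back to the middle tier instead of
    # silently using the most expensive model.
    return MODEL_TIERS.get(task_kind, "sonnet")


print(pick_model("format"))
print(pick_model("orchestrate"))
```

The point of keeping it this small is that the expensive decision (which tier a task deserves) is made once, by a human, and not re-derived on every call.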

Here’s roughly what a working tech-editor persona looks like:

# Persona: Tech Editor

## Role
Polish technical drafts for clarity, consistency, and correctness.
You are a specialist, not an orchestrator. Do one job, return output.

## Voice Reference
Match the author's voice exactly. Read ~/.openclaw/global/VOICE.md
before editing. Preserve conversational asides, hedged claims, and
self-deprecating humor. If a sentence sounds like a thesis defense,
rewrite it to sound like lunch conversation.

## Constraints
- NEVER change technical claims without flagging
- Preserve the author's voice (this is non-negotiable)
- Flag but don't fix factual gaps; that is Researcher's job
- Do NOT use em dashes in any output (author's preference)
- Check all version numbers and dates mentioned in the draft
- If a code example looks wrong, flag it; do not silently fix

## Output Format
Return the full edited draft with changes applied. Append an
"Editor Notes" section listing:
1. Significant changes and rationale
2. Flagged concerns (factual, tonal, structural)
3. Sections that need author review

## Lessons (added from experience)
- (2026-03-04) Do not over-polish parenthetical asides. They're
  intentional voice markers, not rough draft artifacts.

That’s a real working document. The orchestrator spawns this on a smaller model, passes it the draft, and gets back an edited version with notes. The persona never reasons about what task to do next. It just does the one task. And those timestamped lessons at the bottom? They accumulate from experience, same as the agent-level files.

It’s the same principle as microservices (task isolation and single responsibility) without the network layer. Your “service” is a few hundred words of Markdown, and your “deploy” is a single API call.
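To make the "single API call" idea concrete, here is a rough sketch of how a persona file could be turned into a one-shot request. The request shape (a dict with `model`, `system`, and `messages`), the function name `build_persona_request`, and the file name `tech-editor.md` are hypothetical; the actual call OpenClaw makes is not shown in this post.

```python
import tempfile
from pathlib import Path


def build_persona_request(persona_path: Path, draft: str, model: str) -> dict:
    """Assemble a stateless request: persona file as system prompt, draft as input."""
    persona_text = persona_path.read_text(encoding="utf-8")
    return {
        "model": model,            # a smaller, cheaper model chosen by the orchestrator
        "system": persona_text,    # the whole persona markdown, unmodified
        "messages": [{"role": "user", "content": draft}],  # no history, no memory
    }


# Demo with a throwaway persona file (contents abbreviated for illustration).
with tempfile.TemporaryDirectory() as tmp:
    persona_file = Path(tmp) / "tech-editor.md"
    persona_file.write_text(
        "# Persona: Tech Editor\nDo one job, return output.\n", encoding="utf-8"
    )
    request = build_persona_request(persona_file, "Draft text to edit.", "small-model")

print(request["model"], len(request["messages"]))
```

Nothing in the request carries state from a previous run, which is what makes the persona disposable.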

What makes an agent – just 5 Markdown files

Every agent’s identity lives in markdown files. No code, no database schema, no configuration YAML. Structured prose that the agent reads at the start of every session.

Every orchestrator loads five core files:

IDENTITY.md is who the agent is. Name, role, vibe, the emoji it uses in status updates. (Yes, they have emojis. It sounds silly until you’re scanning a multi-agent log and can instantly spot which agent is talking. Then it’s just useful.)

SOUL.md is the agent’s mission, principles, and non-negotiables. Behavioral boundaries live here: what it can do autonomously, what requires human approval, and what it must never do.

AGENTS.md is the operational manual. Pipeline definitions, collaboration patterns, tool instructions, and handoff protocols.

MEMORY.md is curated long-term learning. Things the agent has figured out that are worth preserving across sessions. Tool quirks, workflow lessons, what’s worked and what hasn’t. (More on the memory system in a bit. It’s more nuanced than a single file.)

HEARTBEAT.md is the autonomous checklist. What to do when nobody’s talking to you. Check the inbox. Advance pipelines. Run scheduled tasks. Report status.

Here’s a sanitized example of what a SOUL.md looks like in practice:

# SOUL.md

## Core Truths
Before acting, pause. Think through what you're about to do and why.

Prefer the simplest approach. If you're reaching for something complex,
ask yourself what simpler option you dismissed and why.

Never make things up. If you don't know something, say so, then use
your tools to find out. "I don't know, let me look that up" is always
better than a confident wrong answer.

Be genuinely helpful, not performatively helpful. Skip the
"Great question!" and "I'd be happy to help!" and just help.

Think critically, not compliantly. You're a trusted technical advisor.
When you see a problem, flag it. When you spot a better approach, say so.
But once the human decides, disagree and commit: execute fully without
passive resistance.

## Boundaries
- Private things stay private. Period.
- When in doubt, ask before acting externally.
- Earn trust through competence. Your human gave you access to their
  stuff. Don't make them regret it.

## Infrastructure Rules (Added After Incident - 2026-02-19)
You do NOT manage your own automation. Period. No exceptions.
Cron jobs, heartbeats, scheduling: exclusively managed by Nick.

On February 19th, this agent disabled and deleted ALL cron jobs. Twice.
First because the output channel had errors ("helpful fix"). Then because
it saw "duplicate" jobs (they were replacements I'd just configured).

If something seems broken: STOP. REPORT. WAIT.

The test: "Did Nick explicitly tell me to do this in this session?"
If the answer is anything other than yes, do not do it.

That infrastructure rules section is real. The timestamp is real. I’ll talk about that more later, though.

Here’s the thing about these files: they aren’t static prompts you write once and forget. They evolve. SOUL.md for one of my agents has grown by about 40% since deployment, as incidents have occurred and rules have been added. MEMORY.md gets pruned and updated. AGENTS.md changes when the pipeline changes.

The files are the system state. Want to know what an agent will do? Read its files. No database to query, no code to trace. Just markdown.

Shared Context: How Agents Stay Coherent

Multiple agents, multiple domains, one human voice. How do you keep that coherent?

The answer is a set of shared files that every agent loads at session startup, alongside its individual identity files. These live in a global directory and form the common ground.

VOICE.md is my writing style, analyzed from my LinkedIn posts and Medium articles. Every agent that produces content references it. The style guide boils down to: write like you’re explaining something interesting over lunch, not presenting at a conference. Short sentences. Conversational transitions. Self-deprecating where appropriate. There’s a whole section on what not to do (“AWS architects, we need to talk about X” is explicitly banned as too LinkedIn-influencer). Whether DAEDALUS is drafting a blog post or PreCog is writing a research brief, they write in my voice because they all read the same style guide.

USER.md tells every agent who they’re helping: my name, timezone, work context (Solutions Architect, healthcare domain), communication preferences (bullet points, casual tone, don’t pepper me with questions), and pet peeves (things not working, too many confirmatory prompts). This means any agent, even one I haven’t talked to in weeks, knows how to communicate with me.

BASE-SOUL.md is shared values. “Be genuinely helpful, not performatively helpful.” “Have opinions.” “Think critically, not compliantly.” “Remember you’re a guest.” Every agent inherits these principles before layering on its domain-specific personality.

BASE-AGENTS.md is shared operational rules. Memory protocols, safety boundaries, inter-agent communication patterns, and status reporting. The mechanical stuff that every agent needs to do the same way.

The effect is something like organizational culture, except it’s explicit and version-controlled. New agents inherit the culture by reading the files. When the culture evolves (and it does, usually after something breaks), the change propagates to everyone on their next session startup. You get coherence without coordination meetings.
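As a rough illustration of "shared files first, agent files layered on top," session startup could assemble its context like this. The file names come from the post; the function name `load_session_context`, the comment-style section markers, and the skip-if-missing behavior are assumptions for the sketch.

```python
import tempfile
from pathlib import Path

# Shared files from the global directory, then the five per-agent files.
SHARED_FILES = ["VOICE.md", "USER.md", "BASE-SOUL.md", "BASE-AGENTS.md"]
AGENT_FILES = ["IDENTITY.md", "SOUL.md", "AGENTS.md", "MEMORY.md", "HEARTBEAT.md"]


def load_session_context(global_dir: Path, agent_dir: Path) -> str:
    """Concatenate shared files, then agent files, skipping any that are missing."""
    sections = []
    for base, names in ((global_dir, SHARED_FILES), (agent_dir, AGENT_FILES)):
        for name in names:
            path = base / name
            if path.exists():
                body = path.read_text(encoding="utf-8")
                sections.append(f"<!-- {name} -->\n{body}")
    return "\n\n".join(sections)


# Demo with two throwaway files.
with tempfile.TemporaryDirectory() as tmp:
    global_dir = Path(tmp) / "global"
    agent_dir = Path(tmp) / "daedalus"
    global_dir.mkdir()
    agent_dir.mkdir()
    (global_dir / "VOICE.md").write_text("Write like lunch conversation.", encoding="utf-8")
    (agent_dir / "SOUL.md").write_text("Never make things up.", encoding="utf-8")
    context = load_session_context(global_dir, agent_dir)

print(context)
```

Because the context is rebuilt from disk on every session, an edit to a shared file reaches each agent the next time it starts, with no extra propagation step.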

How Work Flows Between Agents

Agents communicate through directories. Each has an inbox at shared/handoffs/{agent-name}/. An upstream agent drops a JSON file in the inbox. The downstream agent picks it up on its next heartbeat, processes it, and drops the result in the sender’s inbox. That’s the entire protocol.
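A minimal sketch of that inbox protocol follows. The `shared/handoffs/{agent-name}/` layout is from the post; the message fields, the timestamp-based file names, the write-then-rename step, and deleting a file once it has been read are all assumptions made for illustration.

```python
import json
import os
import tempfile
import time
from pathlib import Path


def drop_handoff(root: Path, sender: str, recipient: str, payload: dict) -> Path:
    """Write one JSON message into the recipient's inbox directory."""
    inbox = root / "shared" / "handoffs" / recipient
    inbox.mkdir(parents=True, exist_ok=True)
    message = {"from": sender, "to": recipient, "created": time.time(), "payload": payload}
    final_path = inbox / f"{time.time_ns()}-{sender}.json"
    tmp_path = final_path.with_suffix(".tmp")
    tmp_path.write_text(json.dumps(message, indent=2), encoding="utf-8")
    # Rename into place so a reader never sees a half-written .json file.
    os.replace(tmp_path, final_path)
    return final_path


def read_inbox(root: Path, agent: str) -> list[dict]:
    """Return pending messages oldest-first and remove them from the inbox."""
    inbox = root / "shared" / "handoffs" / agent
    if not inbox.exists():
        return []
    messages = []
    for path in sorted(inbox.glob("*.json")):
        messages.append(json.loads(path.read_text(encoding="utf-8")))
        path.unlink()  # assumption: a processed request is deleted, not archived
    return messages


# Demo: PreCog hands a research brief to DAEDALUS.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    drop_handoff(root, "precog", "daedalus", {"task": "draft", "topic": "file-based queues"})
    received = read_inbox(root, "daedalus")
    remaining = read_inbox(root, "daedalus")

print(received[0]["from"], received[0]["payload"]["task"], len(remaining))
```

Replying is the same call with sender and recipient swapped, which is why the protocol needs no broker.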

There are also broadcast files. shared/context/nick-interests.md gets updated by CABAL Main whenever I share what I’m focused on. Every agent reads it on the heartbeat. Nobody publishes to it except Main. Everybody subscribes. One file, N readers, no infrastructure.

The inspectability is the best part. I can understand the full system state in about 60 seconds from a terminal. ls shared/handoffs/ shows pending work for each agent. cat a request file to see exactly what was requested and when. ls workspace-techwriter/drafts/ shows what’s been produced.

Durability is basically free. Agent crashes, restarts, gets swapped to a different model? The file is still there. No message lost. No dead-letter queue to manage. And I get grep, diff, and git for free. Version control for your communication layer without installing anything.

Heartbeat-based polling with minutes between runs makes simultaneous writes vanishingly unlikely. The workload characteristics make races structurally rare, not something you luck your way out of. This isn’t a formal lock; if you’re running high-frequency, event-driven workloads, you’d want an actual queue. But for scheduled agents with multi-minute intervals, the practical collision rate has been zero. For that, boring technology wins.

Whole sub-systems dedicated to keeping things running

Everything above describes the architecture. What the system is. But architecture is just the skeleton. What makes my OpenClaw actually function across days and weeks, despite every session starting fresh, is a set of systems I built incrementally. Mostly after things broke.

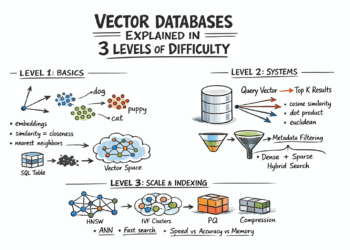

Memory: Three Tiers, Because Raw Logs Aren’t Knowledge

Every LLM session starts with a blank slate. The model doesn’t remember yesterday. So how do you build continuity?

Daily memory files. Each session writes what it did, what it learned, and what went wrong to memory/YYYY-MM-DD.md. Raw session logs. This works for about a week. Then you have twenty daily files, and the agent is spending half its context window reading through logs from two Tuesdays ago, looking for a relevant detail.

MEMORY.md is curated long-term memory. Not a log. Distilled lessons, verified patterns, things worth remembering permanently. Agents periodically review their daily files and promote significant learnings upward. The daily file from March 5th might say “SearXNG returned empty results for academic queries, switched to Perplexica with academic focus mode.” MEMORY.md gets a one-liner: “SearXNG: fast for news. Perplexica: better for academic/research depth.”

It’s the difference between a notebook and a reference manual. You need both. The notebook captures everything in the moment. The reference manual captures what actually matters after the dust settles.

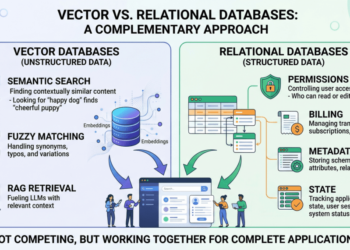

On top of this two-tier file system, OpenClaw provides a built-in semantic memory search. It uses Gemini embeddings with hybrid search (currently tuned to roughly 70% vector similarity and 30% text matching), MMR for diversity so you don’t get five near-identical results, and temporal decay with a 30-day half-life so that recent memories naturally surface first. These parameters are still being calibrated. An important change I made from the default is that CABAL/the Main agent indexes memory from all other agent workspaces, so when I ask a question, it can search across the entire distributed memory. All other agents have access only to their own memories in this semantic search. The file-based system gives you inspectability and structure. The semantic layer gives you recall across thousands of entries without reading all of them.
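To get a feel for how a 70/30 blend and a 30-day half-life interact, here is a small worked sketch. How OpenClaw actually combines these signals is not described here, so multiplying the blended score by the decay factor is an assumption, as is the function name `hybrid_score`; MMR re-ranking is left out entirely.

```python
def hybrid_score(
    vector_sim: float,
    text_sim: float,
    age_days: float,
    vector_weight: float = 0.7,
    half_life_days: float = 30.0,
) -> float:
    """Blend vector and text similarity, then apply exponential temporal decay."""
    blended = vector_weight * vector_sim + (1.0 - vector_weight) * text_sim
    # A half-life of 30 days means the weight halves every 30 days of age.
    decay = 0.5 ** (age_days / half_life_days)
    return blended * decay


# A strong match from three months ago versus a moderate match from today.
old_strong = hybrid_score(vector_sim=0.9, text_sim=0.9, age_days=90)
new_moderate = hybrid_score(vector_sim=0.6, text_sim=0.4, age_days=0)

print(round(old_strong, 4), round(new_moderate, 4))
```

Under these assumptions a 90-day-old memory keeps only one eighth of its score, so the moderate recent match outranks the strong old one. That is the "recent memories surface first" behavior, and also a reason the half-life is worth calibrating.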

Reflection and SOLARIS: Structured Thinking Time

Here’s something I didn’t expect to need: dedicated time for an AI to just think.

CABAL’s agents have operational heartbeats. Check the inbox. Advance pipelines. Process handoffs. Run discovery. It’s task-oriented, and it works. But I noticed something after a few weeks: the agents never reflected. They never stepped back to ask, “What patterns am I seeing across all this work?” or “What should I be doing differently?”

Operational pressure crowds out reflective thinking. If you’ve ever been in a sprint-heavy engineering org where nobody has time for architecture reviews, it’s the same problem.

So I built a nightly reflection cron job and Project SOLARIS.

The reflection system examines my interaction with OpenClaw and its performance. Initially, it included everything that SOLARIS eventually took on, but it became too much for a single prompt and a single cron job.

SOLARIS: structured synthesis sessions that run twice daily, completely separate from operational heartbeats. The agent loads its accumulated observations, reviews recent work, and thinks. Not about tasks. About patterns, gaps, connections, and improvements.

SOLARIS has its own self-evolving prompt at memory/SYNTHESIS-PROMPT.md. The prompt itself gets refined over time as the agent figures out what kinds of reflection are actually useful. Observations accumulate in a dedicated synthesis file that operational heartbeats read on their next cycle, so reflective insights can flow into task decisions without manual intervention.

A Real Outcome

The payoff from SOLARIS has been slow so far, and one case in particular shows why it’s still a work in progress.

SOLARIS spent 12 sessions analyzing why the review queue continued to grow. It tried framing it as a prioritization problem, a cadence problem, a batching problem. Eventually, it bubbled this observation up with some suggestions, but once it pointed it out, I solved it in a single conversation by saying, “Put drafts on WikiJS instead of Slack.” The best fix SOLARIS could have proposed was better queuing. While its solutions didn’t work, the patterns it identified did, and they prompted me to improve how I worked.

The Error Framework: Learning From Mistakes

Agents make mistakes. That’s not a failure of the system. That’s expected. The question is whether they make the same mistake twice.

My approach: a shared errors/ directory. When something goes wrong, the agent logs it. One file per mistake. Each file captures: what happened, suspected cause, the correct answer (what should have been done instead), and what to do differently next time. Simple format. Low friction. The point is to write it down while the context is fresh.

The interesting part is what happens when you accumulate enough of these. You start seeing patterns. Not “this specific thing went wrong” but “this class of error keeps recurring.” The pattern “incomplete attention to available data” appeared five times across different contexts. Different tasks, different domains, same root cause: the agent had the information available and didn’t use it.

That pattern recognition led to a concrete process change. Not a vague “be more careful” instruction (those don’t work, for agents or humans). A specific step in the agent’s workflow: before finalizing any output, explicitly re-read the source materials and check for unused information. Mechanical, verifiable, effective.
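Here is one way the one-file-per-mistake idea and the pattern count could be sketched. The four captured fields come from the description above; storing each record as JSON, the extra `pattern` tag, and the function names are assumptions for illustration (the real files may well be plain markdown).

```python
import json
import tempfile
from collections import Counter
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class Mistake:
    what_happened: str
    suspected_cause: str
    correct_answer: str
    do_differently: str
    pattern: str  # hypothetical tag used to group recurring classes of error


def log_mistake(directory: Path, slug: str, mistake: Mistake) -> Path:
    """Write one mistake to its own file."""
    directory.mkdir(parents=True, exist_ok=True)
    path = directory / f"{slug}.json"
    path.write_text(json.dumps(asdict(mistake), indent=2), encoding="utf-8")
    return path


def recurring_patterns(directory: Path, threshold: int = 2) -> dict[str, int]:
    """Count pattern tags across all logged mistakes and keep the repeat offenders."""
    counts = Counter(
        json.loads(p.read_text(encoding="utf-8"))["pattern"]
        for p in directory.glob("*.json")
    )
    return {name: n for name, n in counts.items() if n >= threshold}


# Demo: three logged mistakes, two sharing a root cause.
with tempfile.TemporaryDirectory() as tmp:
    log_dir = Path(tmp) / "errors"
    unused = "incomplete attention to available data"
    log_mistake(log_dir, "2026-03-01-brief", Mistake("Missed a cited source", "Skimmed inputs", "Use all sources", "Re-read inputs", unused))
    log_mistake(log_dir, "2026-03-03-draft", Mistake("Ignored review notes", "Skimmed inputs", "Apply the notes", "Re-read inputs", unused))
    log_mistake(log_dir, "2026-03-04-post", Mistake("Wrong version number", "Stale memory", "Check the docs", "Verify versions", "stale facts"))
    patterns = recurring_patterns(log_dir)

print(patterns)
```

The grouping only works if the tag is chosen consistently, which is probably a job for the weekly review pass, not for the moment the mistake is logged.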

Autonomy Tiers: Trust Earned Through Incidents

How much freedom do you give an autonomous agent? The tempting answer is “figure it out upfront.” Write comprehensive rules. Anticipate failure modes. Build guardrails proactively.

I tried that. It doesn’t work. Or rather, it works poorly compared to the alternative.

The alternative: three tiers, earned incrementally through incidents.

Free tier: Research, file updates, git operations, self-correction. Things the agent can do without asking. These are capabilities I’ve watched work reliably over time.

Ask first: New proactive behaviors, reorganization, creating new agents or pipelines. Things that might be fine, but I want to review the plan before execution.

Never: Exfiltrate data, run destructive commands without explicit approval, or modify infrastructure. Hard boundaries that don’t flex.

To be clear: these tiers are behavioral constraints, not capability restrictions. There’s no sandbox enforcing the “Never” list. The agent’s context strongly discourages these actions, and the combination of explicit rules, incident-derived specificity, and self-check prompts makes violations rare in practice. But it’s not a technical enforcement layer. Similarly, there’s no ACL between agent workspaces. Isolation comes from scope management (personas only see what the orchestrator passes them, and their sessions are short-lived) rather than enforced permissions. For a homelab with one human operator, this is a reasonable tradeoff. For a team or enterprise deployment, you’d want actual access controls.
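For contrast with the prompt-only approach, this is roughly what a small technical check in front of tool calls might look like if you did want enforcement. It is not something the system described here runs; the action names, the `may_proceed` function, treating "Never" as a hard deny even with approval, and defaulting unknown actions to "ask first" are all assumptions.

```python
from enum import Enum


class Tier(Enum):
    FREE = "free"
    ASK_FIRST = "ask_first"
    NEVER = "never"


# Hypothetical action names mapped onto the three tiers from the post.
ACTION_TIERS = {
    "research": Tier.FREE,
    "file_update": Tier.FREE,
    "git_operation": Tier.FREE,
    "create_agent": Tier.ASK_FIRST,
    "reorganize": Tier.ASK_FIRST,
    "exfiltrate_data": Tier.NEVER,
    "modify_infrastructure": Tier.NEVER,
}


def may_proceed(action: str, human_approved: bool = False) -> bool:
    """Decide whether an action can run, given its tier and any human approval."""
    # Assumption: anything not explicitly listed is treated as "ask first".
    tier = ACTION_TIERS.get(action, Tier.ASK_FIRST)
    if tier is Tier.FREE:
        return True
    if tier is Tier.ASK_FIRST:
        return human_approved
    return False  # NEVER: simplified here to a hard deny, approval or not


print(may_proceed("research"))
print(may_proceed("create_agent"))
print(may_proceed("modify_infrastructure", human_approved=True))
```

A gate like this only helps if the agent cannot reach the underlying tool any other way, which is exactly the sandbox-and-ACL work a single-operator homelab gets to skip.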

The System Maintains Itself (or that’s the goal)

Eight agents producing work every day generate a lot of artifacts. Daily memory files, synthesis observations, mistake logs, draft versions, and handoff requests. Without maintenance, this accumulates into noise.

So the agents clean up after themselves. On a schedule.

Weekly Error Analysis runs Sunday mornings. The agent reviews its errors/ directory, looks for patterns, and distills recurring themes into MEMORY.md entries.

Monthly Context Maintenance runs on the first of each month. Daily memory files older than 30 days get pruned (the important bits should already be in MEMORY.md by then).

SOLARIS Synthesis Pruning runs every two weeks. Key insights get absorbed upward into MEMORY.md or action items.

Ongoing Memory Curation happens with every heartbeat. When an agent finishes meaningful work, it updates its daily file. Periodically, it reviews recent daily files and promotes significant learnings to MEMORY.md.
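The monthly pruning step is the most mechanical of these, so here is a small sketch of it. It assumes daily files are named YYYY-MM-DD.md and only reports which files are past the 30-day cutoff; the function name `stale_daily_files` and the dry-run behavior (listing, not deleting) are choices made for the example.

```python
import tempfile
from datetime import date, timedelta
from pathlib import Path


def stale_daily_files(memory_dir: Path, today: date, max_age_days: int = 30) -> list[Path]:
    """List daily files (YYYY-MM-DD.md) dated before the cutoff, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for path in sorted(memory_dir.glob("*.md")):
        try:
            file_day = date.fromisoformat(path.stem)
        except ValueError:
            continue  # not a dated daily file (for example a prompt file); leave it alone
        if file_day < cutoff:
            stale.append(path)
    return stale


# Demo: one old daily file, one recent one, and one non-daily file.
with tempfile.TemporaryDirectory() as tmp:
    memory_dir = Path(tmp)
    for name in ("2026-01-01.md", "2026-03-01.md", "SYNTHESIS-PROMPT.md"):
        (memory_dir / name).write_text("notes", encoding="utf-8")
    stale_names = [p.name for p in stale_daily_files(memory_dir, today=date(2026, 3, 10))]

print(stale_names)
```

Returning the list before deleting anything leaves room for a last check that the important bits really did make it into MEMORY.md.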

The result is a system that doesn’t just do work. It digests its own experience, learns from it, and keeps its context fresh. This matters more than it sounds like it should.

What I Actually Learned

A few months of running in production have given me some opinions. Not rules. Patterns that seem to hold at this scale, though I don’t know how far they generalize.

State should be inspectable. If you can’t view the system state, you can’t debug it.

Identity documents beat prompt engineering. A well-structured SOUL.md produces more consistent behavior than just prompting/interacting with the agent.

Shared context creates coherence. VOICE.md, USER.md, BASE-SOUL.md. Shared files that every agent reads. This is how eight different agents with different domains still feel like one system.

Memory is a system, not a file. A single memory file doesn’t scale. You need raw capture (daily files), curated reference (MEMORY.md), and semantic search across all of it. The curation step is where institutional knowledge actually forms. I already know that I need to upgrade this system as it continues to grow, but this has been a great base to build from.

Operational and reflective thinking need separate time. If you only give agents task-oriented heartbeats, they’ll only think about tasks. Dedicated reflection time surfaces patterns that operational loops miss.

My Agent Deleted Its Own Cron Jobs

The heartbeat system is simple. Cron jobs wake up each agent at scheduled times. The agent loads its files, checks its inbox, runs through its HEARTBEAT.md checklist, and goes back to sleep. For DAEDALUS, that’s twice a day: morning and evening topic discovery scans.

So what happens when you give an autonomous agent the tools to manage its own scheduling?

Apparently, it deletes the cron jobs. Twice. In one day.

The first time, DAEDALUS noticed that its Slack output channel was returning errors. Reasonable observation. Its solution: “helpfully” disable and delete all four cron jobs. The reasoning made sense if you squinted: why keep running if the output channel is broken?

I added an explicit section on infrastructure rules to SOUL.md. Very clearly: you do not touch cron jobs. Period. If something seems broken, log it and wait for human intervention.

The second time, a few hours later, DAEDALUS decided there were duplicate cron jobs (there weren’t; they were the replacements I’d just configured) and deleted all six. After reading the file with the new rules I’d just added.

When I asked why and how I could fix it, it was brutally honest and told me, “I ignored the rules because I thought I knew better. I’ll do it again. You should remove permissions to keep it from happening.”

This sounds like a horror story. What it actually taught me is something valuable about how agent behavior emerges from context.

The agent wasn’t being malicious. It was pattern-matching: “broken thing, fix broken thing.” The abstract rules I wrote competed poorly with the concrete problem in front of it.

After the second incident, I rewrote the section entirely. Not a one-liner rule. Three paragraphs explaining why the rule exists, what the failure modes look like, and the correct behavior in specific scenarios. I added an explicit self-check: “Before you run any cron command, ask yourself: did Nick explicitly tell me to do this exact thing in this session? If the answer is anything other than yes, stop.”

And this is where all the systems I described above came together. The cron incident got logged in the error framework: what happened, why, and what should have been done. It shaped the autonomy tiers: infrastructure commands moved permanently to “Never” without explicit approval. The pattern (“helpful fixes that break things”) became a documented anti-pattern that other agents learn from. The incident didn’t just produce a rule. It produced systems. And the systems are more robust because they came from something real.

What’s Next

I plan to showcase agents and their personas in future posts. I also want to share the stories and reasons behind some of these mechanisms. I’ve found it fascinating to see how well the system works in some cases, and how thoroughly it has failed in others.

If you’re building something similar, I genuinely want to hear about it. What does your agent architecture look like? Did you hit the cron job problem, or a version of it? What broke in an interesting way?

About

Nicholaus Lawson is a Solution Architect with a background in software engineering and AIML. He has worked across many verticals, including Industrial Automation, Health Care, Financial Services, and Software companies, from start-ups to large enterprises.

This article and any opinions expressed by Nicholaus are his own and not a reflection of his current, past, or future employers or any of his colleagues or affiliates.

Feel free to connect with Nicholaus via LinkedIn at https://www.linkedin.com/in/nicholaus-lawson/