to start studying LLMs with all this content on the internet, and new things are coming up every day. I’ve read some guides from Google, OpenAI, and Anthropic and noticed how each focuses on different aspects of Agents and LLMs. So, I decided to consolidate these concepts here and add other important ideas that I think are essential if you’re starting to study this field.

This post covers key concepts with code examples to make things concrete. I’ve prepared a Google Colab notebook with all the examples so you can practice the code while reading the article. To use it, you’ll need an API key; check section 5 of my previous article if you don’t know how to get one.

While this guide gives you the essentials, I recommend reading the full articles from these companies to deepen your understanding.

I hope this helps you build a solid foundation as you start your journey with LLMs!

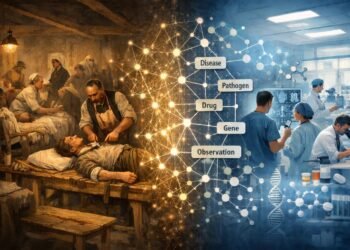

In this mind map, you can see a summary of this article’s content.

What’s an agent?

“Agent” can be defined in several ways. Each company whose guide I’ve read defines agents differently. Let’s examine these definitions and compare them:

“Agents are systems that independently accomplish tasks on your behalf.” (Open AI)

“In its most fundamental form, a Generative AI agent can be defined as an application that attempts to achieve a goal by observing the world and acting upon it using the tools that it has at its disposal. Agents are autonomous and can act independently of human intervention, especially when provided with proper goals or objectives they are meant to achieve. Agents can also be proactive in their approach to reaching their goals. Even in the absence of explicit instruction sets from a human, an agent can reason about what it should do next to achieve its ultimate goal.” (Google)

“Some customers define agents as fully autonomous systems that operate independently over extended periods, using various tools to accomplish complex tasks. Others use the term to describe more prescriptive implementations that follow predefined workflows. At Anthropic, we categorize all these variations as agentic systems, but draw an important architectural distinction between workflows and agents:

– Workflows are systems where LLMs and tools are orchestrated through predefined code paths.

– Agents, on the other hand, are systems where LLMs dynamically direct their own processes and tool usage, maintaining control over how they accomplish tasks.” (Anthropic)

The three definitions emphasize different aspects of an agent. However, they all agree that agents:

- Operate autonomously to perform tasks

- Make decisions about what to do next

- Use tools to achieve goals

An agent consists of three main components:

- Model

- Instructions/Orchestration

- Tools

First, I’ll define each component in a simple sentence so you have an overview. Then, in the following sections, we’ll dive into each component.

- Model: a language model that generates the output.

- Instructions/Orchestration: explicit guidelines defining how the agent behaves.

- Tools: allow the agent to interact with external data and services.

Model

Model refers to the language model (LM). In simple terms, it predicts the next word or sequence of words based on the words it has already seen.
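To make “predicting the next word” concrete, here is a toy sketch of my own. It is not how real LLMs work internally (they use neural networks over tokens), and the names train_bigram and predict_next are hypothetical. It only counts which word most often follows another in a tiny corpus:

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram(text: str) -> Dict[str, Counter]:
    """Count, for every word, which words follow it in the text."""
    words = text.lower().split()
    follow_counts: Dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        follow_counts[current][following] += 1
    return follow_counts


def predict_next(follow_counts: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    followers = follow_counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Tiny made-up corpus, for illustration only
corpus = "the cat sat on the mat . the cat ate the fish ."
toy_model = train_bigram(corpus)

print(predict_next(toy_model, "the"))      # "cat" follows "the" most often
print(predict_next(toy_model, "unicorn"))  # unseen word, so None
```

The idea is the same at a very small scale: the prediction depends only on what the model has seen before.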

If you want to understand how these models work inside the black box, here is a video from 3Blue1Brown that explains it.

Agents vs models

Agents and models are not the same. The model is a component of an agent, which uses it. While models are limited to predicting a response based on their training data, agents extend this functionality by acting independently to achieve specific goals.

Here is a summary of the main differences between Models and Agents from Google’s paper.

Large Language Models

The other L in LLM stands for “Large”, which mainly refers to the number of parameters the model has. These models can have hundreds of billions or even trillions of parameters. They’re trained on massive amounts of data and need heavy computing power to train.

Examples of LLMs are GPT 4o, Gemini Flash 2.0, Gemini Pro 2.5, Claude 3.7 Sonnet.

Small Language Models

We also have Small Language Models (SLMs). They’re used for simpler tasks where you need less data and fewer parameters; they are lighter to run and easier to control.

SLMs have fewer parameters (typically under 10 billion), dramatically reducing computational costs and energy usage. They focus on specific tasks and are trained on smaller datasets. This maintains a balance between performance and resource efficiency.

Examples of SLMs are Llama 3.1 8B (Meta), Gemma2 9B (Google), Mistral 7B (Mistral AI).

Open Source vs Closed Source

These models can be open source or closed. Being open source means that the code (sometimes the model weights and training data, too) is publicly available for anyone to use freely, study how it works internally, and adapt for specific tasks.

A closed model means that the code isn’t publicly available. Only the company that developed it can control its use, and users can only access it through APIs or paid services. Sometimes they have a free tier, as Gemini does.

Here, you can check some open source models on Hugging Face.

Those with * in size mean this information is not publicly available, but there are rumors of hundreds of billions or even trillions of parameters.

Instructions/Orchestration

Instructions are explicit guidelines and guardrails defining how the agent behaves. In its most fundamental form, an agent would consist of just “Instructions” for this component, as defined in Open AI’s guide. However, the agent may need more than just “Instructions” to handle more complex scenarios. In Google’s paper, they call this component “Orchestration” instead, and it involves three layers:

- Instructions

- Memory

- Model-based Reasoning/Planning

Orchestration follows a cyclical pattern. The agent gathers information, processes it internally, and then uses those insights to determine its next move.

Instructions

The instructions can include the model’s goals, profile, roles, rules, and any information you think is important to improve its behavior.

Here is an example:

system_prompt = """

You are a friendly programming tutor.

Always explain concepts in a simple and clear way, using examples when possible.

If the user asks something unrelated to programming, politely bring the conversation back to programming topics.

"""

In this example, we told the LLM its role, the expected behavior, how we wanted the output (simple and with examples when possible), and set limits on what it’s allowed to talk about.

Model-based Reasoning/Planning

Some reasoning techniques, such as ReAct and Chain-of-Thought, give the orchestration layer a structured way to take in information, perform internal reasoning, and produce informed decisions.

Chain-of-Thought (CoT) is a prompt engineering technique that enables reasoning capabilities through intermediate steps. It’s a way of prompting a language model to generate a step-by-step explanation or reasoning process before arriving at a final answer. This method helps the model break down the problem and not skip any intermediate steps, which helps avoid reasoning failures.

Prompting example:

system_prompt = """

You are the assistant for a tiny candle shop.

Step 1: Check whether the user mentions either of our candles:

• Forest Breeze (woodsy scent, 40 h burn, $18)

• Vanilla Glow (warm vanilla, 35 h burn, $16)

Step 2: List any assumptions the user makes

(e.g. "Vanilla Glow lasts 50 h" or "Forest Breeze is unscented").

Step 3: If an assumption is wrong, correct it politely.

Then answer the question in a friendly tone.

Mention only the two candles above; we don't sell anything else.

Use exactly this output format:

Step 1:

Step 2:

Step 3:

Response to user:

"""

Here is an example of the model output for the user query: “Hi! I’d like to buy the Vanilla Glow. Is it $10?”. You can see the model following our guidelines from each step to build the final answer.

ReAct is another prompt engineering technique that combines reasoning and acting. It gives language models a thought-process strategy to reason and take action on a user query. The agent continues in a loop until it accomplishes the task. This technique mitigates weaknesses of reasoning-only methods like CoT, such as hallucination, because it grounds its reasoning in external information obtained through actions.

Prompting example:

system_prompt = """You are an agent that can call two tools:

1. CurrencyAPI:

• input: {base_currency (3-letter code), quote_currency (3-letter code)}

• returns: exchange rate (float)

2. Calculator:

• input: {arithmetic_expression}

• returns: result (float)

Follow **strictly** this response format:

Thought:

Action: []

Observation:

… (repeat Thought/Action/Observation as needed)

Answer:

Never output anything else. If no tool is required, skip directly to Answer.

"""

Here, I haven’t implemented the functions (the model is hallucinating the exchange rate), so it’s just an example of the reasoning trace:

These techniques are good to use when you need transparency and control over what answer the agent gives or what action it takes, and why. They help you debug your system, and analyzing the traces can reveal ways to improve your prompts.
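To show what the ReAct loop looks like once tools are actually executed, here is a minimal sketch under my own assumptions. The model is replaced by a scripted stand-in (scripted_model), the exchange rate is made up, and the Action syntax ToolName[arguments] is just one possible convention, so treat every name here as hypothetical:

```python
import re

# Made-up exchange rate, for illustration only
FAKE_RATES = {("USD", "BRL"): 5.0}


def currency_api(args: str) -> str:
    """Hypothetical tool: look up a fixed rate for 'BASE, QUOTE'."""
    base, quote = [part.strip() for part in args.split(",")]
    return str(FAKE_RATES[(base, quote)])


def calculator(expression: str) -> str:
    """Hypothetical tool: only supports 'a * b', to avoid using eval."""
    left, right = expression.split("*")
    return str(float(left) * float(right))


TOOLS = {"CurrencyAPI": currency_api, "Calculator": calculator}

SCRIPTED_REPLIES = [
    "Thought: I need the USD to BRL rate.\nAction: CurrencyAPI[USD, BRL]",
    "Thought: Now I multiply the amount by the rate.\nAction: Calculator[100 * 5.0]",
    "Thought: I have the result.\nAnswer: 100 USD is about 500.0 BRL",
]


def scripted_model(transcript: str) -> str:
    """Stand-in for an LLM call: picks a reply based on how many observations exist."""
    return SCRIPTED_REPLIES[transcript.count("Observation:")]


ACTION_PATTERN = re.compile(r"Action: (\w+)\[(.*)\]")


def react_loop(question: str, max_steps: int = 5) -> str:
    transcript = f"Question: {question}"
    for _ in range(max_steps):
        reply = scripted_model(transcript)
        transcript += "\n" + reply
        if "Answer:" in reply:
            return reply.split("Answer:", 1)[1].strip()
        match = ACTION_PATTERN.search(reply)
        if match is None:
            break  # neither an action nor an answer: stop instead of looping forever
        tool_name, tool_args = match.groups()
        # Run the tool and feed the real result back as the next observation
        transcript += f"\nObservation: {TOOLS[tool_name](tool_args)}"
    return "No answer within the step limit."


print(react_loop("How much is 100 USD in BRL?"))
```

The important part is the loop: the observation comes from running the tool, not from the model, which is what keeps the reasoning grounded.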

If you want to read more, these techniques were proposed by researchers at Google and their collaborators in the papers Chain of Thought Prompting Elicits Reasoning in Large Language Models and REACT: SYNERGIZING REASONING AND ACTING IN LANGUAGE MODELS.

Memory

LLMs don’t have memory built in. This “Memory” is content you pass inside your prompt to give the model context. We can refer to two types of memory: short-term and long-term.

- Short-term memory refers to the immediate context the model has access to during an interaction. This could be the latest message, the last N messages, or a summary of previous messages. The amount may vary based on the model’s context limitations: once you hit that limit, you can drop older messages to make room for new ones.

- Long-term memory involves storing important information beyond the model’s context window for future use. To do this, you can summarize past conversations or extract key information and save it externally, typically in a vector database. When needed, the relevant information is retrieved using Retrieval-Augmented Generation (RAG) techniques to refresh the model’s understanding. We’ll talk about RAG in a following section.
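Dropping older messages to make room for new ones can be sketched like this. It is a minimal illustration under my own assumptions: the helper names trim_history and render_history are hypothetical, and it counts messages rather than tokens, which a real application would probably want to count instead:

```python
from typing import Dict, List

Message = Dict[str, str]


def trim_history(history: List[Message], max_messages: int) -> List[Message]:
    """Keep only the most recent `max_messages` entries (short-term memory)."""
    if max_messages <= 0:
        return []
    return history[-max_messages:]


def render_history(history: List[Message]) -> str:
    """Turn the kept messages into plain text that can be placed in a prompt."""
    return "\n".join(f"{m['role']}: {m['content']}" for m in history)


chat_history = [
    {"role": "user", "content": "Do you sell candles?"},
    {"role": "assistant", "content": "Yes, Forest Breeze and Vanilla Glow."},
    {"role": "user", "content": "I want 1 Forest Breeze."},
    {"role": "assistant", "content": "Great choice, it costs $18."},
]

recent = trim_history(chat_history, max_messages=2)
print(render_history(recent))
```

With max_messages=2, only the last user message and the last assistant reply reach the prompt; the earlier exchange is forgotten unless you summarize or store it elsewhere.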

Here is just a simple example of managing short-term memory manually. You can check the Google Colab notebook for this code execution and a more detailed explanation.

# Assumes the google-genai SDK is installed and GEMINI_API_KEY is set

import os

from google import genai

client = genai.Client(api_key=os.getenv("GEMINI_API_KEY"))

# System prompt

system_prompt = """

You are the assistant for a tiny candle shop.

Step 1: Check whether the user mentions either of our candles:

• Forest Breeze (woodsy scent, 40 h burn, $18)

• Vanilla Glow (warm vanilla, 35 h burn, $16)

Step 2: List any assumptions the user makes

(e.g. "Vanilla Glow lasts 50 h" or "Forest Breeze is unscented").

Step 3: If an assumption is wrong, correct it politely.

Then answer the question in a friendly tone.

Mention only the two candles above; we don't sell anything else.

Use exactly this output format:

Step 1:

Step 2:

Step 3:

Response to user:

"""

# Start a chat_history

chat_history = []

# First message

user_input = "I want to buy 1 Forest Breeze. Can I pay $10?"

full_content = f"System instructions: {system_prompt}\n\n Chat History: {chat_history} \n\n User message: {user_input}"

response = client.models.generate_content(

    model="gemini-2.0-flash",

    contents=full_content

)

# Append to chat history

chat_history.append({"role": "user", "content": user_input})

chat_history.append({"role": "assistant", "content": response.text})

# Second message

user_input = "What did I say I wanted to buy?"

full_content = f"System instructions: {system_prompt}\n\n Chat History: {chat_history} \n\n User message: {user_input}"

response = client.models.generate_content(

    model="gemini-2.0-flash",

    contents=full_content

)

# Append to chat history

chat_history.append({"role": "user", "content": user_input})

chat_history.append({"role": "assistant", "content": response.text})

print(response.text)

What we actually pass to the model is the variable full_content, composed of system_prompt (containing instructions and reasoning guidelines), the memory (chat_history), and the new user_input.

In summary, you can combine instructions, reasoning guidelines, and memory in your prompt to get better results. All of this combined forms one of an agent’s components: Orchestration.

Tools

Models are really good at processing information; however, they’re limited by what they learned from their training data. With access to tools, models can interact with external systems and access knowledge beyond their training data.

Functions and Function Calling

Functions are self-contained modules of code that accomplish a specific task. They’re reusable code that you can use over and over.

When implementing function calling, you connect a model with functions. You provide a set of predefined functions, and the model determines when to use each function and which arguments are required based on the function’s specifications.

The model doesn’t execute the function itself. It tells you which functions should be called and passes the parameters (inputs) for them based on the user query, and you have to write the code that executes the function. However, if we build an agent, we can program its workflow to execute the function and respond based on the result, or we can use LangChain, which abstracts this code so that you just pass the functions to a pre-built agent. Remember that an agent is a composition of (model + instructions + tools).

In this way, you extend your agent’s capabilities to use external tools, such as calculators, and take actions, such as interacting with external systems using APIs.

Here, I’ll first show you an LLM and a basic function call so you can understand what is happening. It’s great to use LangChain because it simplifies your code, but you should understand what is happening beneath the abstraction. At the end of the post, we’ll build an agent using LangChain.

The process of creating a function call:

- Define the function and a function declaration, which describes the function’s name, parameters, and purpose to the model.

- Call the LLM with the function declarations. In addition, you can pass multiple functions and define whether the model can choose any function you specified, is forced to call exactly one specific function, or can’t use them at all.

- Execute the function code.

- Answer the user.

import os

from typing import List

from google import genai

from google.genai import types

# Shopping list

shopping_list: List[str] = []

# Functions

def add_shopping_items(items: List[str]):

    """Add one or more items to the shopping list."""

    for item in items:

        shopping_list.append(item)

    return {"status": "ok", "added": items}

def list_shopping_items():

    """Return all items currently in the shopping list."""

    return {"shopping_list": shopping_list}

# Function declarations

add_shopping_items_declaration = {

    "name": "add_shopping_items",

    "description": "Add one or more items to the shopping list",

    "parameters": {

        "type": "object",

        "properties": {

            "items": {

                "type": "array",

                "items": {"type": "string"},

                "description": "A list of shopping items to add"

            }

        },

        "required": ["items"]

    }

}

list_shopping_items_declaration = {

    "name": "list_shopping_items",

    "description": "List all current items in the shopping list",

    "parameters": {

        "type": "object",

        "properties": {},

        "required": []

    }

}

# Gemini configuration

client = genai.Client(api_key=os.getenv("GEMINI_API_KEY"))

tools = types.Tool(function_declarations=[

    add_shopping_items_declaration,

    list_shopping_items_declaration

])

config = types.GenerateContentConfig(tools=[tools])

# User input

user_input = (

    "Hey there! I'm planning to bake a chocolate cake later today, "

    "but I realized I'm out of flour and chocolate chips. "

    "Could you please add these items to my shopping list?"

)

# Send the user input to Gemini

response = client.models.generate_content(

    model="gemini-2.0-flash",

    contents=user_input,

    config=config,

)

print("Model Output Function Call")

print(response.candidates[0].content.parts[0].function_call)

print("\n")

# Execute the function (function_call is None when the model answers with plain text)

part = response.candidates[0].content.parts[0]

tool_call = part.function_call

if tool_call is None:

    print(part.text)

elif tool_call.name == "add_shopping_items":

    result = add_shopping_items(**tool_call.args)

    print(f"Function execution result: {result}")

elif tool_call.name == "list_shopping_items":

    result = list_shopping_items()

    print(f"Function execution result: {result}")

In this code, we create two functions: add_shopping_items and list_shopping_items. We defined the functions and their function declarations, configured Gemini, and created a user input. The model had two functions available, but as you can see, it chose add_shopping_items and produced args={‘items’: [‘flour’, ‘chocolate chips’]}, which was exactly what we were expecting. Finally, we executed the function based on the model output, and those items were added to the shopping_list.
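As the number of functions grows, a chain of conditionals gets hard to maintain. One possible alternative, sketched here under my own assumptions, is a small registry that maps function names to Python callables. TOOL_REGISTRY and execute_tool_call are hypothetical names, and fake_call is a stand-in object with the same name and args attributes that the code above reads from the model’s function call:

```python
from types import SimpleNamespace
from typing import Callable, Dict, List

shopping_list: List[str] = []


def add_shopping_items(items: List[str]) -> dict:
    """Add one or more items to the shopping list."""
    shopping_list.extend(items)
    return {"status": "ok", "added": items}


def list_shopping_items() -> dict:
    """Return all items currently in the shopping list."""
    return {"shopping_list": shopping_list}


# Hypothetical registry: function name -> Python callable
TOOL_REGISTRY: Dict[str, Callable[..., dict]] = {
    "add_shopping_items": add_shopping_items,
    "list_shopping_items": list_shopping_items,
}


def execute_tool_call(tool_call) -> dict:
    """Look up the requested function by name and call it with the given arguments."""
    handler = TOOL_REGISTRY.get(tool_call.name)
    if handler is None:
        return {"status": "error", "message": f"Unknown tool: {tool_call.name}"}
    return handler(**(tool_call.args or {}))


# Stand-in for the function call returned by the model
fake_call = SimpleNamespace(
    name="add_shopping_items",
    args={"items": ["flour", "chocolate chips"]},
)
result = execute_tool_call(fake_call)
print(result)
print(shopping_list)
```

Adding a new function then only requires a new entry in the registry, and an unknown function name returns an error instead of raising an exception.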

External data

Sometimes, your model doesn’t have the right information to answer properly or do a task. Access to external data allows us to provide additional data to the model, beyond the foundational training data, eliminating the need to train the model or fine-tune it on this additional data.

Examples of the data:

- Website content

- Structured Data in formats like PDF, Word Docs, CSV, Spreadsheets, etc.

- Unstructured Data in formats like HTML, PDF, TXT, etc.

One of the most common uses of a data store is the implementation of RAG.

Retrieval Augmented Generation (RAG)

Retrieval Augmented Generation (RAG) means:

- Retrieval -> When the user asks the LLM a question, the RAG system searches an external source to retrieve relevant information for the query.

- Augmented -> The relevant information is incorporated into the prompt.

- Generation -> The LLM then generates a response based on both the original prompt and the additional context retrieved.

Here, I’ll show you the steps of a standard RAG. We have two pipelines, one for storing and the other for retrieving.

First, we have to load the documents, split them into smaller chunks of text, embed each chunk, and store them in a vector database.

Important:

- Breaking down large documents into smaller chunks is important because it makes retrieval more focused, and LLMs also have context window limits.

- Embeddings create numerical representations for pieces of text. The embedding vector tries to capture the meaning, so texts with similar content will have similar vectors.
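The chunking step of the storing pipeline can be sketched as follows. This is a simplified word-based splitter with overlap, written under my own assumptions (the name split_into_chunks and the sizes are hypothetical); real text splitters usually also respect sentence or paragraph boundaries:

```python
from typing import List


def split_into_chunks(text: str, chunk_size: int = 50, overlap: int = 10) -> List[str]:
    """Split text into chunks of `chunk_size` words; consecutive chunks share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("chunk_size must be positive and overlap must be in [0, chunk_size)")
    words = text.split()
    step = chunk_size - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


document = " ".join(f"w{i}" for i in range(10))  # "w0 w1 ... w9"
for chunk in split_into_chunks(document, chunk_size=4, overlap=1):
    print(chunk)
```

The overlap means a sentence that falls on a chunk boundary still appears whole in at least one chunk, at the cost of storing some words twice.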

The second pipeline retrieves the relevant information based on a user query. First, embed the user query and retrieve relevant chunks from the vector store using some calculation between the embedded chunks and the embedded user query, such as basic semantic similarity or maximum marginal relevance (MMR). Afterward, you can combine the most relevant chunks before passing them into the final LLM prompt. Finally, add this combination of chunks to the LLM instructions, and the model can generate an answer based on this new context and the original prompt.
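Here is a minimal sketch of the retrieving pipeline, under loud assumptions: embed is a toy bag-of-words counter standing in for a real embedding model, a plain Python list stands in for the vector database, and all function names are hypothetical. It ranks chunks by cosine similarity (not MMR) and builds the augmented prompt:

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: List[str], top_k: int = 2) -> List[str]:
    """Return the `top_k` chunks most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(
        chunks,
        key=lambda chunk: cosine_similarity(query_vector, embed(chunk)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, context_chunks: List[str]) -> str:
    """Augment the prompt with the retrieved context."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


chunks = [
    "Forest Breeze is a woodsy candle with a 40 hour burn time.",
    "Vanilla Glow is a warm vanilla candle that costs 16 dollars.",
    "Our shop is closed on Sundays.",
]
query = "How much does Vanilla Glow cost?"
best = retrieve(query, chunks, top_k=1)
print(build_prompt(query, best))
```

Word counts only match exact words, so this toy version misses synonyms; capturing meaning beyond exact wording is the reason real pipelines use learned embeddings.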

In summary, you can give your agent more knowledge and the ability to take action with tools.

Enhancing model performance

Now that we have seen each component of an agent, let’s talk about how we could enhance the model’s performance.

There are some strategies for enhancing model performance:

- In-context learning

- Retrieval-based in-context learning

- Fine-tuning based learning

In-context learning

In-context learning means you “teach” the model how to perform a task by giving examples directly in the prompt, without changing the model’s underlying weights.

This method provides a generalized approach with a prompt, tools, and few-shot examples at inference time, allowing the model to learn “on the fly” how and when to use those tools for a specific task.

There are some types of in-context learning:

We already saw examples of Zero-shot, CoT, and ReAct in the previous sections, so now here is an example of one-shot learning:

user_query = "Carlos to set up the server by Tuesday, Maria will finalize the design specs by Thursday, and let's schedule the demo for the following Monday."

system_prompt = f""" You are a helpful assistant that reads a block of meeting transcript and extracts clear action items.

For each item, list the person responsible, the task, and its due date or timeframe in bullet-point form.

Example 1

Transcript:

'John will draft the budget by Friday. Sarah volunteers to review the marketing deck next week. We need to send invites for the kickoff.'

Actions:

- John: Draft budget (due Friday)

- Sarah: Review marketing deck (next week)

- Team: Send kickoff invites

Now you

Transcript: {user_query}

Actions:

"""

# Send the prompt (instructions, example, and user query) to Gemini

response = client.models.generate_content(

    model="gemini-2.0-flash",

    contents=system_prompt,

)

print(response.text)

Here is the output based on your query and the example:

Retrieval-based in-context learning

Retrieval-based in-context learning means external context (like documents) is retrieved and added to the model’s prompt at inference time to enhance its response.

RAG is important because it reduces hallucinations and enables LLMs to answer questions about specific domains or private data (like a company’s internal documents) without needing to be retrained.

If you missed it, go back to the previous section, where I explained RAG in detail.

Fine-tuning-based learning

Fine-tuning-based learning means you train the model further on a specific dataset to “internalize” new behaviors or knowledge. The model’s weights are updated to reflect this training. This method helps the model understand when and how to apply certain tools before receiving user queries.

There are some common techniques for fine-tuning. Here are a few examples so you can search and study further.

Analogy to compare the three strategies

Imagine you’re training a tour guide to receive a group of people in Iceland.

- In-Context Learning: you give the tour guide a few handwritten notes with some examples like “If someone asks about Blue Lagoon, say this. If they ask about local food, say that”. The guide doesn’t know the city deeply, but he can follow your examples as long as the tourists stay within those topics.

- Retrieval-Based Learning: you equip the guide with a phone + map + access to Google search. The guide doesn’t need to memorize everything but knows how to look up information immediately when asked.

- Fine-Tuning: you give the guide months of immersive training in the city. The knowledge is already in their head when they start giving tours.

Where does LangChain come in?

LangChain is a framework designed to simplify the development of applications powered by large language models (LLMs).

Within the LangChain ecosystem, we have:

- LangChain: The basic framework for working with LLMs. It allows you to switch between providers or combine components when building applications without changing the underlying code. For example, you can switch between Gemini and GPT models easily. It also makes the code simpler. In the next section, I’ll compare the code we built in the section on function calling with how we could do that with LangChain.

- LangGraph: For building, deploying, and managing agent workflows.

- LangSmith: For debugging, testing, and monitoring your LLM applications.

While these abstractions simplify development, understanding their underlying mechanics by checking the documentation is essential: the convenience these frameworks provide comes with hidden implementation details that can impact performance, debugging, and customization options if not properly understood.

Beyond LangChain, you might also consider OpenAI’s Agents SDK or Google’s Agent Development Kit (ADK), which offer different approaches to building agent systems.

Let’s build an agent using LangChain

Here, unlike the code in the “Function Calling” section, we don’t have to create function declarations manually like we did before. Using the @tool decorator above our functions, LangChain automatically converts them into structured descriptions that are passed to the model behind the scenes.

ChatPromptTemplate organizes information in your prompt, creating consistency in how information is presented to the model. It combines system instructions + the user’s query + the agent’s working memory. This way, the LLM always gets information in a format it can easily work with.

The MessagesPlaceholder component reserves a spot in the prompt template, and agent_scratchpad is the agent’s working memory. It contains the history of the agent’s thoughts, tool calls, and the results of those calls. This allows the model to see its previous reasoning steps and tool outputs, enabling it to build on past actions and make informed decisions.

Another key difference is that we don’t have to implement the logic with conditional statements to execute the functions. The create_openai_tools_agent function creates an agent that can reason about which tools to use and when. In addition, the AgentExecutor orchestrates the process, managing the conversation between the user, agent, and tools. The agent determines which tool to use through its reasoning process, and the executor takes care of executing the function and handling the result.

from typing import List

from langchain.agents import AgentExecutor, create_openai_tools_agent

from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder

from langchain_core.tools import tool

from langchain_google_genai import ChatGoogleGenerativeAI

# Shopping list

shopping_list = []

# Functions

@tool

def add_shopping_items(items: List[str]):

    """Add one or more items to the shopping list."""

    for item in items:

        shopping_list.append(item)

    return {"status": "ok", "added": items}

@tool

def list_shopping_items():

    """Return all items currently in the shopping list."""

    return {"shopping_list": shopping_list}

# Configuration

llm = ChatGoogleGenerativeAI(

    model="gemini-2.0-flash",

    temperature=0

)

tools = [add_shopping_items, list_shopping_items]

prompt = ChatPromptTemplate.from_messages([

    ("system", "You are a helpful assistant that helps manage shopping lists. "

    "Use the available tools to add items to the shopping list "

    "or list the current items when requested by the user."),

    ("human", "{input}"),

    MessagesPlaceholder(variable_name="agent_scratchpad")

])

# Create the Agent

agent = create_openai_tools_agent(llm, tools, prompt)

agent_executor = AgentExecutor(agent=agent, tools=tools, verbose=True)

# User input

user_input = (

    "Hey there! I'm planning to bake a chocolate cake later today, "

    "but I realized I'm out of flour and chocolate chips. "

    "Could you please add these items to my shopping list?"

)

# Send the user input to the agent

response = agent_executor.invoke({"input": user_input})

When we use verbose=True, we can see the reasoning and actions while the code is being executed.

And the final result:

When should you build an agent?

Remember that we discussed agents’ definitions in the first section and saw that they operate autonomously to perform tasks. It’s cool to create agents, even more so because of the hype. However, building an agent is not always the most efficient solution, and a deterministic solution may suffice.

A deterministic solution means that the system follows clear, predefined rules with no room for interpretation. This approach is better when the task is well-defined and stable and benefits from clarity. It is also easier to test and debug, and it’s good when you need to know exactly what happens for a given input, with no “black box”. Anthropic’s guide shows many different LLM workflows where LLMs and tools are orchestrated through predefined code paths.

The best practices guides for building agents from Open AI and Anthropic recommend first finding the simplest solution possible and only increasing the complexity if needed.

When you are evaluating whether you should build an agent, consider the following:

- Complex decisions: when dealing with processes that require nuanced judgment, handling exceptions, or making decisions that depend heavily on context, such as determining whether a customer is eligible for a refund.

- Difficult-to-maintain rules: when you have workflows built on complicated sets of rules that are constantly changing and are difficult to update or maintain without risk of making errors.

- Dependence on unstructured data: when you have tasks that require understanding written or spoken language, getting insights from documents (PDFs, emails, images, audio, HTML pages…), or chatting with users naturally.

Conclusion

We saw that agents are systems designed to accomplish tasks on a human’s behalf independently. These agents are composed of instructions, the model, and tools to access external data and take actions. There are some ways we could enhance our model: improving the prompt with examples, using RAG to give more context, or fine-tuning it. When building an agent or LLM workflow, LangChain can help simplify the code, but you should understand what the abstractions are doing. Always keep in mind that simplicity is the best way to build agentic systems, and only follow a more complex approach if needed.

Next Steps

If you are new to this content, I recommend that you digest all of this first, read it a few times, and also read the full articles I recommended so you have a solid foundation. Then, try to start building something, like a simple application, to start practicing and creating the bridge between this theoretical content and practice. Beginning to build is the best way to learn these concepts.

As I told you before, I have a simple step-by-step guide for creating a chat in Streamlit and deploying it. There’s also a video on YouTube explaining this guide in Portuguese. It’s a good starting point if you haven’t done anything before.

I hope you enjoyed this tutorial.

You can find all the code for this project on my GitHub or Google Colab.

Resources

Building effective agents – Anthropic

Agents – Google

A practical guide to building agents – OpenAI

Chain of Thought Prompting Elicits Reasoning in Large Language Models – Google Research

REACT: SYNERGIZING REASONING AND ACTING IN LANGUAGE MODELS – Google Research

Small Language Models: A Guide With Examples – DataCamp