: Why the Threat Model Changes

Most AI security work focuses on the model: what it says, what it refuses, and how it handles malicious prompts. This framing made sense when AI was a text interface. The user sends a message, and it responds. The attack surface was narrow and well-defined.

Agents change the shape of the problem entirely.

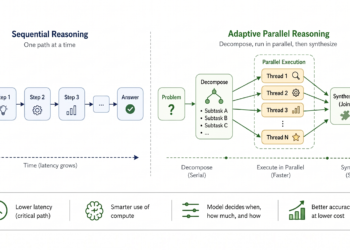

An AI agent does much more than generate text. It plans, uses tools, stores memory across sessions, and often coordinates with other agents to complete multi-step tasks. Consider the difference between a navigation app suggesting a route and an autopilot system wired directly into the vehicle’s steering and throttle. One provides information. The other executes control. The risk model is no longer comparable.

The numbers confirm this is no longer a theoretical concern. According to Gravitee’s 2026 State of AI Agent Security report, based on a survey of more than 900 executives and practitioners:

- 88 percent of organizations reported confirmed or suspected AI agent security incidents in the past year

- Only 14.4 percent of agentic systems went live with full security and IT approval

This pattern extends across the industry. A 2026 report from Apono found that 98 percent of cybersecurity leaders report friction between accelerating agentic AI adoption and meeting security requirements, resulting in slowed or constrained deployments.

That gap between deployment speed and security readiness is where incidents happen.

A standalone LLM has one attack surface: the prompt. An agent exposes four:

- The Prompt Surface: Reading external inputs.

- The Tool Surface: Executing backend actions.

- The Memory Surface: Remembering past sessions.

- The Planning Loop Surface: Deciding next steps.

Each surface has its own attack patterns. Defenses built for one don’t transfer to the others.

The Four-Surface Attack Taxonomy

In mid-2025, Pomerium reported an AI support agent that blindly executed a hidden SQL payload, leaking database secrets into a public ticket. Traditional security fails here. Adding tools, memory, and autonomous planning to an LLM creates four distinct attack surfaces, each requiring an entirely new threat model.

The prompt surface: when the agent reads the wrong thing

The user input is perfectly clean. The vulnerability lies in everything else the agent consumes.

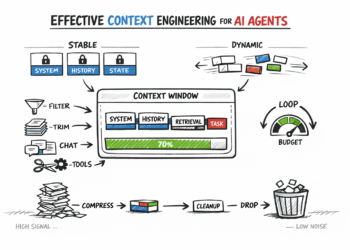

When an agent fetches a webpage, a RAG document, or a backend response, those inputs arrive with no trust boundary. Attackers don’t compromise the user interface; they plant payloads where the agent will eventually look. This is indirect prompt injection.

Because models flatten all text into a single context window, they cannot distinguish your system instructions from a hidden command inside a retrieved PDF. They treat the malicious text as trusted context. Even tool docstrings and parameter names can invisibly hijack the agent’s behavior, leading to silent data exfiltration upstream while the user sees a normal response.

What Defense Looks Like Here:

- Boundary sanitization: Treat all external data as untrusted at every retrieval point.

- Instruction separation: Use structured formats to isolate system prompts from fetched content.

- Pre-execution filtering: Scan for exfiltration patterns before any tool fires.
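
The last two controls in the list above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a complete defense: the function names, the message layout, and the two regular expressions are all hypothetical, and wrapping content as labeled data lowers the odds of injection without eliminating them.

```python
import json
import re

# Hypothetical patterns for illustration only; a real deployment would
# maintain and tune its own list.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"https?://\S+\?\S*(token|secret|key)=", re.IGNORECASE),
]


def wrap_untrusted(source: str, text: str) -> str:
    """Package fetched content as labeled data, never as instructions."""
    return json.dumps({"role": "untrusted_data", "source": source, "content": text})


def build_context(system_prompt: str, fetched: list[tuple[str, str]]) -> list[dict]:
    """Keep system instructions and retrieved content in separate messages."""
    messages = [{"role": "system", "content": system_prompt}]
    for source, text in fetched:
        messages.append({"role": "user", "content": wrap_untrusted(source, text)})
    return messages


def flag_suspicious(text: str) -> list[str]:
    """Return the patterns that match, so a caller can block the tool call."""
    return [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(text)]
```

A caller would run `flag_suspicious` on retrieved text and on outgoing tool arguments, and refuse to fire the tool when the returned list is non-empty. Pattern matching of this kind catches only known phrasings, so it belongs alongside the permission controls discussed for the other surfaces rather than in place of them.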

These controls secure what the agent ingests. But once it takes action, the attack moves to the Tool Surface.

The tool surface: when reading becomes doing

Every tool an agent can call is a permission boundary, making it a prime target for exploitation. The core attack is parameter injection: manipulating the agent into passing attacker-controlled values into tools that trigger real-world consequences, like database writes or signed API requests.

The Pomerium incident mentioned earlier illustrates exactly how this fails in practice. The attack succeeded because three architectural flaws converged: excessive privileges granted to the agent, unvalidated user inputs reaching the SQL tool, and an open outbound data channel. Unfortunately, this describes the default setup of most agents today.

What Defense Looks Like Here:

- Least Privilege: Scope permissions strictly to the specific task.

- Parameter Validation: Verify all inputs against strict schemas before execution.

- Human Checkpoints: Require manual approval for any irreversible action.
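
One way to picture these three controls together is a small gate that sits between the planner and the tools. The sketch below is an assumption-laden illustration: `ToolSpec`, `ToolGate`, the ticket functions, and the `approve` callback are hypothetical names, and the type check stands in for a fuller schema validator.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ToolSpec:
    """One tool the agent may call. All names here are hypothetical."""
    handler: Callable[..., str]
    params: dict[str, type]     # strict schema: exact parameter names and types
    irreversible: bool = False  # True means a human must approve each call


class ToolGate:
    """Sits between the planner and the tools and enforces three checks."""

    def __init__(self, allowed: dict[str, ToolSpec],
                 approve: Callable[[str, dict], bool]):
        self.allowed = allowed  # least privilege: only tools for this task
        self.approve = approve  # human checkpoint callback

    def call(self, name: str, args: dict) -> str:
        spec = self.allowed.get(name)
        if spec is None:
            raise PermissionError(f"tool not in scope: {name}")
        if set(args) != set(spec.params):
            raise ValueError("unexpected or missing parameters")
        for key, expected in spec.params.items():
            if type(args[key]) is not expected:
                raise ValueError(f"wrong type for parameter: {key}")
        if spec.irreversible and not self.approve(name, args):
            raise PermissionError(f"approval denied for: {name}")
        return spec.handler(**args)


def lookup_ticket(ticket_id: int) -> str:
    return f"ticket {ticket_id}"


def close_ticket(ticket_id: int) -> str:
    return f"closed {ticket_id}"


gate = ToolGate(
    allowed={
        "lookup_ticket": ToolSpec(lookup_ticket, {"ticket_id": int}),
        "close_ticket": ToolSpec(close_ticket, {"ticket_id": int}, irreversible=True),
    },
    approve=lambda name, args: False,  # stand-in for a real review step
)
```

With this gate, a call to a tool that was never granted, a string smuggled into `ticket_id`, or an unapproved `close_ticket` each fail before any handler runs. The checks are enforced outside the model, so a successful injection into the prompt still has to get past them.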

Securing these tools locks down the present. But once an agent adds persistent memory, the vulnerability shifts to what it remembers for later.

The memory surface: when the whiteboard lies

Imagine a shared office whiteboard relied upon for daily decisions. If an outsider quietly rewrites one entry overnight, the team’s entire output shifts based on corrupted data. Persistent memory in an autonomous agent works exactly the same way. Control what the agent remembers, and you dictate its future actions across sessions and users.

The data on this vulnerability is highly concerning:

- The MINJA Framework: Security testing across major models achieved a 95% success rate in silently injecting false memories, requiring absolutely no elevated privileges or API access.

- Microsoft Defender Intel: In just 60 days, researchers intercepted over 50 attacks across 14 industries. Adversaries used hidden URL parameters to secretly instruct agents to favor specific companies in future responses.

- Zero-Cost Deployment: These attacks weren’t launched by advanced threat groups. They were executed by everyday marketing teams using free software packages, showing that this exploit takes minutes to deploy and costs nothing.

What Defense Looks Like Here:

- Provenance Tracking: Securely log the source, context, and timestamp of every memory write.

- Trust-Weighted Retrieval: Authenticated user entries must strictly outrank unverified external content.

- Temporal Decay (TTL): Enforce age thresholds where memory entries decay or are explicitly purged.

- Periodic Auditing: Run automated audits to detect anomalous clusters of malicious instructions.
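
The first three controls above map naturally onto a small data structure. The following sketch assumes an in-memory store with keyword lookup; `MemoryStore`, `MemoryEntry`, and the two trust tiers are hypothetical, and a production system would add tamper-resistant logging and a real retrieval backend.

```python
import time
from dataclasses import dataclass
from typing import Optional

# Hypothetical trust tiers; higher numbers outrank lower ones at retrieval.
TRUST = {"authenticated_user": 2, "external_content": 1}


@dataclass(frozen=True)
class MemoryEntry:
    text: str
    source: str        # provenance: which trust tier wrote the entry
    context: str       # provenance: session id, URL, or document name
    written_at: float  # provenance: timestamp of the write


class MemoryStore:
    def __init__(self, ttl_seconds: float):
        self.ttl_seconds = ttl_seconds
        self.entries: list[MemoryEntry] = []

    def write(self, text: str, source: str, context: str,
              now: Optional[float] = None) -> None:
        if source not in TRUST:
            raise ValueError(f"unknown source: {source}")
        stamp = time.time() if now is None else now
        self.entries.append(MemoryEntry(text, source, context, stamp))

    def purge_expired(self, now: Optional[float] = None) -> int:
        """Drop entries older than the TTL and report how many were removed."""
        current = time.time() if now is None else now
        kept = [e for e in self.entries
                if current - e.written_at <= self.ttl_seconds]
        removed = len(self.entries) - len(kept)
        self.entries = kept
        return removed

    def retrieve(self, keyword: str,
                 now: Optional[float] = None) -> list[MemoryEntry]:
        """Return matches with higher-trust sources first, then newest first."""
        self.purge_expired(now)
        matches = [e for e in self.entries if keyword.lower() in e.text.lower()]
        return sorted(matches, key=lambda e: (-TRUST[e.source], -e.written_at))
```

Because every entry carries its source, context, and timestamp, a periodic audit can group entries by `context` and look for one external origin writing many instruction-like memories. The optional `now` argument exists only to make the expiry behavior easy to test.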

Memory poisoning is dangerous on its own, but it sets the stage for the final attack surface.

The planning loop: when the destination is wrong

A GPS fed false map data still gives confident turn-by-turn directions. The routing logic works perfectly, but the destination is wrong. The driver has no idea until they arrive somewhere they never intended to go.

The planning loop is an agent’s reasoning engine. If an attacker shifts where the agent thinks it’s going, they don’t need to inject specific commands. The agent will autonomously navigate to the malicious target.

This shift can originate from any surface we just covered: a poisoned memory entry, a manipulated tool return, or a malicious external document. But the real danger is contagion speed. In a December 2025 simulation by Galileo AI, a single compromised orchestrator poisoned 87% of downstream decision-making across a multi-agent architecture within four hours. It corrupted every agent that trusted its output.

What Defense Looks Like Here:

- Reasoning Logging: Log intermediate reasoning steps, not just final outputs.

- Checkpoint Validation: Validate the goal state at defined checkpoints during task execution.

- Hard Boundaries: Define strict stop conditions at deployment that retrieved content cannot override.

- Agent Isolation: Isolate agent instances so a single compromise cannot propagate freely across the system.
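
A rough sketch of the first three controls follows. It assumes a hypothetical planner that emits each step as a dictionary with `goal`, `thought`, and `tool` keys; `Limits`, `PlanViolation`, and `run_plan` are illustrative names, and the loop only checks steps rather than executing real tools.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("agent.reasoning")


class PlanViolation(RuntimeError):
    """Raised when a plan step crosses a limit fixed at deployment."""


@dataclass(frozen=True)
class Limits:
    """Set once at deployment; nothing in a step can modify it."""
    goal: str
    max_steps: int
    allowed_tools: frozenset


def run_plan(limits: Limits, steps: list[dict], checkpoint_every: int = 2) -> int:
    """Check each planned step and return how many steps passed."""
    executed = 0
    for index, step in enumerate(steps, start=1):
        if index > limits.max_steps:
            raise PlanViolation("step budget exhausted")
        # Log the intermediate reasoning, not just the final output.
        logger.info("step=%d goal=%r thought=%r tool=%r",
                    index, step["goal"], step["thought"], step["tool"])
        if step["tool"] not in limits.allowed_tools:
            raise PlanViolation(f"tool outside boundary: {step['tool']}")
        # At every checkpoint, compare the working goal with the deployed goal.
        if index % checkpoint_every == 0 and step["goal"] != limits.goal:
            raise PlanViolation("goal drifted from the deployed goal")
        executed += 1
    return executed
```

The key design choice is that `Limits` is a frozen object created outside the loop, so text that arrives from memory, tools, or documents can change what a step says but not the boundary it is checked against. Goal drift is only detected at checkpoint steps here, so a smaller `checkpoint_every` trades overhead for earlier detection.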

| Surface | Attack | Example | Mitigation |
| --- | --- | --- | --- |
| Prompt | Indirect injection via RAG or tools | A summarized email silently exfiltrated data from OneDrive/Teams. | Sanitize boundaries, isolate system prompts, filter outputs |
| Tool | Parameter injection, privilege escalation | A support ticket used hidden SQL to leak tokens via an agent. | Enforce least privilege, validate parameters, require human approval |
| Memory | Persistent injection, recommendation poisoning | Fake task data inserted into memory caused future unsafe behavior. | Track provenance, weight retrieval by trust, audit periodically |
| Planning Loop | Goal hijacking, multi-agent cascade | One compromised agent poisons the entire multi-agent pipeline through cascading reasoning corruption. | Log reasoning, validate checkpoints, isolate instances |

Security vs. Agent Autonomy: The Tradeoff Space

Every mitigation across the Prompt, Tool, Memory, and Planning Loop surfaces carries an inherent cost, and ignoring these trade-offs produces security theater rather than actual security. Sandboxing a tool environment limits what an agent can reach, which is precisely the point, yet it also functions as a direct reduction in the agent’s overall capability. Similarly, enforcing human-in-the-loop gates on irreversible actions prevents unauthorized writes but introduces latency that can erode the business case for automation. Other essential controls, such as periodic memory audits, strict parameter validation, and retrieval filtering, further slow down processing or break unanticipated edge cases.

Security and autonomy exist on a dial, not a binary switch. The optimal setting for any deployment is determined by three specific factors:

- Capability Profile: Controls must be proportional to what the agent is empowered to do, as a read-only agent carries a fraction of the risk compared to a multi-agent orchestrator.

- Task Environment: An agent summarizing internal documents operates in a fundamentally different threat environment than one managing critical infrastructure.

- Blast Radius: Decisions should be based on the worst-case outcome of an exploit rather than its perceived likelihood.

The necessity of this approach is underscored by the fact that model-level safety fails under pressure. Stanford research demonstrated that fine-tuning attacks bypassed safety filters in 72% of Claude Haiku cases and 57% of GPT-4o cases, with the attack acknowledged as a vulnerability by both Anthropic and OpenAI. Because model-layer training is not a reliable substitute for execution-layer security, robust system-level controls are mandatory for any production-grade deployment.

Implementation: Moving from Taxonomy to Architecture

The taxonomy of attack surfaces only matters if it directly influences how a system is built. The active threat landscape depends entirely on an agent’s capabilities.

Matching Controls to Architecture

- Single-Tool Agents: For agents with no persistent memory and no outbound actions, the primary vulnerability is the Prompt surface. Minimal viable security includes input sanitization at retrieval boundaries, tightly scoped permissions, and full audit logging of tool calls.

- Multi-Agent Orchestrators: Systems with persistent memory and the ability to spawn downstream agents expose all four surfaces simultaneously.
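
The mapping from capabilities to exposed surfaces can be written down as a simple lookup, which is useful as a pre-deployment checklist. The sketch below is a deliberate simplification: the four boolean fields and the one-to-one mapping are assumptions made for illustration, and real agents rarely split this cleanly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CapabilityProfile:
    """Hypothetical description of what a deployed agent can do."""
    reads_external_content: bool
    outbound_actions: bool       # writes, signed API calls, and similar
    persistent_memory: bool
    spawns_or_plans: bool        # multi-step planning or downstream agents


def exposed_surfaces(profile: CapabilityProfile) -> list[str]:
    """List the attack surfaces a profile exposes, in the article's order."""
    surfaces = []
    if profile.reads_external_content:
        surfaces.append("prompt")
    if profile.outbound_actions:
        surfaces.append("tool")
    if profile.persistent_memory:
        surfaces.append("memory")
    if profile.spawns_or_plans:
        surfaces.append("planning_loop")
    return surfaces


single_tool_agent = CapabilityProfile(True, False, False, False)
orchestrator = CapabilityProfile(True, True, True, True)
```

Under these assumptions, `exposed_surfaces(single_tool_agent)` yields only the prompt surface, while the orchestrator profile yields all four, matching the two cases described above.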

Prioritizing by Blast Radius

Effective security prioritizes the potential impact of an exploit over its perceived likelihood:

- Permissions First: Most incidents, such as the Supabase leak, stem from excessive privileges; enforcing least privilege is the highest-leverage, lowest-cost control.

- Separate Instruction Sources: System instructions and retrieved content must never share a trust context; separating them closes the majority of the Prompt surface.

- Memory Provenance: Research like MemoryGraft shows how poisoned memory compounds; tracking the source of every memory write must be in place before scaling.

- Monitor Reasoning: Output filtering cannot detect goal hijacking; systems must log intermediate reasoning steps rather than just final outputs.

Out-of-process frameworks like Microsoft’s Agent Governance Toolkit enforce policies independently, maintaining control even when the agent is compromised. Ultimately, you either map these attack surfaces deliberately before deployment or discover them during post-incident forensics.

Conclusion

The shift from LLM to agent is a structural change in what the system can do and, therefore, in what can go wrong. The four surfaces covered in this article compound across each other: a poisoned memory entry enables goal hijacking, an overprivileged tool turns an injection into exfiltration, and a compromised orchestrator corrupts every agent downstream. The organizations managing these risks effectively are those that mapped the problem before deployment, matched controls to actual capability profiles, and built monitoring into the reasoning layer rather than just the output layer. This taxonomy doesn’t eliminate the threat, but it provides an accurate map of the terrain before you build on it. What gets mapped can be defended, and what gets skipped will be discovered through an incident.

Thanks for reading. I’m Mostafa Ibrahim, founder of Codecontent, a developer-first technical content agency. I write about agentic systems, RAG, and production AI. If you’d like to stay in touch or discuss the ideas in this article, you can find me on LinkedIn.