Neural networks and clustering algorithms can appear worlds apart. Neural networks are typically used in supervised learning, where the goal is to label new data based on patterns learned from a labeled dataset. Clustering, in contrast, is usually an unsupervised task: we try to uncover relationships in data without access to ground truth labels.

As it turns out, deep learning can be extremely useful for clustering problems. Here’s the key idea: suppose we train a neural network using a loss function that reflects something we care about, say, how well we can classify or separate examples. If the network achieves low loss, we can infer that the representations it learns (particularly in the second-to-last layer) capture meaningful structure in the data. In other words, these intermediate representations encode what the network has learned about the task.

So, what happens if we run a clustering algorithm (like KMeans) on these representations? Ideally, we end up with clusters that reflect the same underlying structure the network was trained to capture.

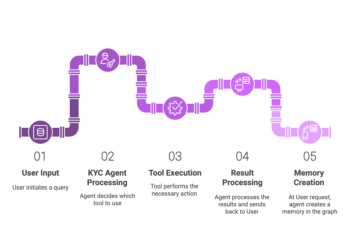

Ahh, that’s a lot! Here’s a picture:

As seen in the image, when we run our inputs through the network up to the second-to-last layer, we get a vector with Kₘ values, which is presumably much smaller than the number of inputs we started with if we did everything right. Because the output layer only looks at this vector when making predictions, good predictions let us conclude that this vector encapsulates important information about our data. Clustering in this space is more meaningful than clustering raw data, since we’ve filtered for the features that actually matter.
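To make the idea concrete, here is a minimal NumPy sketch of "cut the network at the second-to-last layer, then cluster". Everything in it is an illustrative assumption: the layer sizes, the random (untrained) weights standing in for a trained network, and the small hand-written k-means standing in for KMeans.

```python
import numpy as np

def hidden_representation(x, weights, biases):
    """Run x through every layer except the last one, using ReLU activations."""
    h = x
    for W, b in zip(weights[:-1], biases[:-1]):
        h = np.maximum(h @ W + b, 0.0)
    return h

def kmeans(points, k, n_iter=20, seed=0):
    """Plain Lloyd's algorithm, standing in for any clustering routine."""
    rng = np.random.default_rng(seed)
    centers = points[rng.choice(len(points), size=k, replace=False)]
    for _ in range(n_iter):
        # Squared distance from every point to every center
        sq_dists = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = sq_dists.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = points[labels == j].mean(axis=0)
    return centers, labels

rng = np.random.default_rng(0)
layer_sizes = [20, 16, 8, 4]  # 20 inputs -> 16 -> 8 (second-to-last) -> 4 outputs
weights = [rng.normal(size=(m, n)) for m, n in zip(layer_sizes[:-1], layer_sizes[1:])]
biases = [np.zeros(n) for n in layer_sizes[1:]]

X = rng.normal(size=(100, 20))
hidden = hidden_representation(X, weights, biases)  # 8 values per sample instead of 20
centers, labels = kmeans(hidden, k=3)
```

With a trained network in place of the random weights, `labels` would group samples by what the network learned rather than by raw input similarity.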

This is the fundamental idea behind DeepType, a neural network approach to clustering. Rather than clustering raw data directly, DeepType first learns a task-relevant representation through supervised training and then performs clustering in that learned space.

This does raise a question, however: if we already have ground-truth labels, why would we need to run clustering? After all, if we just clustered using our labels, wouldn’t that create a perfect clustering? Then, for new data points, we could simply run our neural net, predict the label, and cluster the point accordingly.

As it turns out, in some contexts, we care more about the relationships between our data points than the labels themselves. In the paper that introduced DeepType, for instance, the authors used this idea to find different groupings of patients with breast cancer based on genetic data, which is very useful in a biological context. They then found that these groups correlated very highly with survival rates, which makes sense given that the representations they clustered on were ingrained with biological knowledge¹.

Refining the Idea: DeepType’s Loss Function

At this point, we understand the core idea: train a neural network to learn a task-relevant representation, then cluster in that space. However, we can make some slight modifications to improve this process.

For starters, we’d like the clusters that we produce to be compact if possible. In other words, we’d much rather have the situation in the picture on the left than on the right:

To achieve this, we want to push the representations of data points in the same cluster to be as close together as possible. We do so by adding a term to our loss function that penalizes the distance between an input’s representation and the center of the cluster it’s been assigned to. Thus, our loss function becomes

Where d is a distance function between vectors, e.g. the square of the norm of the difference between the vectors (as is used in the original paper).
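Written out, the loss described above might look like the following. The notation is an assumption made for illustration: f(xᵢ) is the second-to-last-layer representation of input xᵢ, c with subscript kᵢ is the center of the cluster that xᵢ is assigned to, and β weights the new term.

$$
\mathcal{L} \;=\; \mathcal{L}_{\text{primary}} \;+\; \beta \sum_{i=1}^{N} d\big(f(x_i),\, c_{k_i}\big),
\qquad d(u, v) = \lVert u - v \rVert_2^2
$$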

But wait, how do we get the cluster centers if we haven’t trained the network yet? To get around that, DeepType uses the following procedure:

1. Train a model on just the primary loss

2. Create clusters in the representation space (using e.g. KMeans or your favorite algorithm)

3. Train the model using the modified loss

4. Return to step 2 and repeat until we converge

Eventually, this procedure produces compact clusters that hopefully correspond to the loss we care about.
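The four steps above can be sketched in PyTorch roughly as follows. This is a toy illustration under stated assumptions, not the paper’s or the package’s implementation: the data, layer sizes, and β are made up, the small `kmeans` helper stands in for any clustering routine, the distance term is averaged over samples, and a fixed number of rounds replaces a real convergence check.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Toy data (hypothetical): 200 samples, 10 features, 3 classes
X = torch.randn(200, 10)
y = torch.randint(0, 3, (200,))

encoder = nn.Sequential(nn.Linear(10, 16), nn.ReLU(), nn.Linear(16, 4), nn.ReLU())
head = nn.Linear(4, 3)
params = list(encoder.parameters()) + list(head.parameters())
opt = torch.optim.Adam(params, lr=1e-2)
primary_loss = nn.CrossEntropyLoss()
beta, k = 0.5, 3

def kmeans(points, k, n_iter=10):
    """Tiny Lloyd's algorithm; a stand-in for any clustering routine."""
    centers = points[torch.randperm(len(points))[:k]].clone()
    for _ in range(n_iter):
        labels = torch.cdist(points, centers).argmin(dim=1)
        for j in range(k):
            if (labels == j).any():
                centers[j] = points[labels == j].mean(dim=0)
    return centers, labels

def train(epochs, centers=None, labels=None):
    for _ in range(epochs):
        opt.zero_grad()
        hidden = encoder(X)
        loss = primary_loss(head(hidden), y)
        if centers is not None:
            # Squared distance between each representation and its assigned center
            loss = loss + beta * ((hidden - centers[labels]) ** 2).sum(dim=1).mean()
        loss.backward()
        opt.step()
    return loss.item()

train(epochs=20)                                # step 1: primary loss only
for _ in range(3):                              # step 4: repeat (fixed count here)
    with torch.no_grad():
        centers, labels = kmeans(encoder(X), k) # step 2: cluster the representations
    final_loss = train(epochs=10, centers=centers, labels=labels)  # step 3: modified loss
```

Re-clustering between training rounds matters because the representations move as the network trains, so the centers from an earlier round gradually go stale.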

Finding Important Inputs

In contexts where DeepType is useful, in addition to caring about clusters, we also care about which inputs are the most informative. The paper that introduced DeepType, for instance, was interested in determining which genes were most important in determining someone’s cancer subtype; such information is certainly useful for a biologist. Plenty of other contexts would also find such information interesting. In fact, it’s hard to dream up one that wouldn’t.

In a deep learning context, we can consider an input to be important if the weights assigned to it by the nodes in the first layer have a high magnitude. In contrast, if most of our nodes have a weight close to 0 for the input, it won’t contribute much to our final prediction, and hence likely isn’t all that important.
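One way to turn this into a score is to take the norm of each input’s column in the first-layer weight matrix. The sketch below uses a small made-up weight matrix and assumes the `(hidden_units, n_inputs)` layout that `torch.nn.Linear` uses for its `weight`; whether the package computes importance exactly this way is not something this example claims.

```python
import numpy as np

# Hypothetical first-layer weights with shape (hidden_units, n_inputs):
# each column holds all the weights attached to one input feature.
W = np.array([
    [0.9, 0.0, -0.4],
    [-1.2, 0.1, 0.3],
])

# Importance of input j = Euclidean norm of column j
importance = np.linalg.norm(W, axis=0)   # approximately [1.5, 0.1, 0.5]

# Indices of the inputs, most important first
ranking = np.argsort(-importance)        # [0, 2, 1]

print(importance.round(2))
print(ranking)
```

Input 1 has near-zero weights at every hidden node, so it ends up last in the ranking, which is exactly the "won’t contribute much" case described above.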

We thus introduce one final loss term, a sparsity loss, that encourages our neural net to push as many input weights to 0 as possible. With that, our final modified DeepType loss becomes

Where the beta term is the distance term we had before, and the alpha term effectively penalizes a high “magnitude” of the first-layer weight matrix².
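Putting the pieces together, the full loss described here can be written roughly as below. The symbols are assumptions chosen to match the text: w is the first-layer weight matrix, f(xᵢ) is the second-to-last-layer representation of xᵢ, c with subscript kᵢ is its assigned cluster center, and α and β weight the sparsity and distance terms.

$$
\mathcal{L} \;=\; \mathcal{L}_{\text{primary}}
\;+\; \alpha \,\lVert w^{\top} \rVert_{2,1}
\;+\; \beta \sum_{i=1}^{N} d\big(f(x_i),\, c_{k_i}\big)
$$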

We also modify the four-step procedure from the previous section slightly. Instead of training on just the primary loss in step one, we train on both the primary loss and the sparsity loss in the pretraining step. Per the authors, our final DeepType structure looks like this:

Playing with DeepType

As part of my research, I’ve posted an open-source implementation of DeepType here. You can also install it from pip by running `pip install torch-deeptype`.

The DeepType package uses a fairly simple setup to get everything running. As an example, we’ll create a synthetic dataset with 4 clusters and 20 inputs, only 5 of which actually contribute to the output:

    import numpy as np
    import torch
    from torch.utils.data import TensorDataset, DataLoader

    # 1) Configuration
    n_samples = 1000
    n_features = 20
    n_informative = 5   # number of "important" features
    n_clusters = 4      # number of ground-truth clusters
    noise_features = n_features - n_informative

    # 2) Create distinct cluster centers in the informative subspace
    #    (spread out so clusters are well separated)
    informative_centers = np.random.randn(n_clusters, n_informative) * 5

    # 3) Assign each sample to a cluster, then sample around that center
    X_informative = np.zeros((n_samples, n_informative))
    y_clusters = np.random.randint(0, n_clusters, size=n_samples)
    for i, c in enumerate(y_clusters):
        center = informative_centers[c]
        X_informative[i] = center + np.random.randn(n_informative)

    # 4) Generate pure noise for the remaining features
    X_noise = np.random.randn(n_samples, noise_features)

    # 5) Concatenate informative + noise features
    X = np.hstack([X_informative, X_noise])  # shape (1000, 20)
    y = y_clusters                           # shape (1000,)

    # 6) Convert to torch tensors and build DataLoader
    X_tensor = torch.from_numpy(X).float()
    y_tensor = torch.from_numpy(y).long()
    dataset = TensorDataset(X_tensor, y_tensor)
    train_loader = DataLoader(dataset, batch_size=64, shuffle=True)

Here’s what our data looks like when we plot a PCA:

We’ll then define a `DeeptypeModel`. It can be any architecture as long as it implements the `forward`, `get_input_layer_weights`, and `get_hidden_representations` functions:

    import torch
    import torch.nn as nn
    from torch_deeptype import DeeptypeModel

    class MyNet(DeeptypeModel):
        def __init__(self, input_dim: int, hidden_dim: int, output_dim: int):
            super().__init__()
            self.input_layer = nn.Linear(input_dim, hidden_dim)
            self.h1 = nn.Linear(hidden_dim, hidden_dim)
            self.cluster_layer = nn.Linear(hidden_dim, hidden_dim // 2)
            self.output_layer = nn.Linear(hidden_dim // 2, output_dim)

        def forward(self, x: torch.Tensor) -> torch.Tensor:
            # Notice how forward() reuses the hidden representations
            hidden = self.get_hidden_representations(x)
            return self.output_layer(hidden)

        def get_input_layer_weights(self) -> torch.Tensor:
            # First-layer weights, used for the sparsity loss
            return self.input_layer.weight

        def get_hidden_representations(self, x: torch.Tensor) -> torch.Tensor:
            # Output of the second-to-last layer, used for clustering
            x = torch.relu(self.input_layer(x))
            x = torch.relu(self.h1(x))
            x = torch.relu(self.cluster_layer(x))
            return x

Then, we create a `DeeptypeTrainer` and train:

    from torch_deeptype import DeeptypeTrainer

    trainer = DeeptypeTrainer(
        model = MyNet(input_dim=20, hidden_dim=64, output_dim=5),
        train_loader = train_loader,
        primary_loss_fn = nn.CrossEntropyLoss(),
        num_clusters = 4,        # K in KMeans
        sparsity_weight = 0.01,  # α for L₂ sparsity on input weights
        cluster_weight = 0.5,    # β for cluster-rep loss
        verbose = True           # print per-epoch loss summaries
    )

    trainer.train(
        main_epochs = 15,           # epochs for joint phase
        main_lr = 1e-4,             # LR for joint phase
        pretrain_epochs = 10,       # epochs for pretrain phase
        pretrain_lr = 1e-3,         # LR for pretrain (defaults to main_lr if None)
        train_steps_per_batch = 8,  # inner updates per batch in joint phase
    )

After training, we can then easily extract the important inputs:

    sorted_idx = trainer.model.get_sorted_input_indices()
    print("Top 5 features by importance:", sorted_idx[:5].tolist())
    print(trainer.model.get_input_importance())

    >> Top 5 features by importance: [3, 1, 4, 2, 0]
    >> tensor([0.7594, 0.8327, 0.8003, 0.9258, 0.8141, 0.0107, 0.0199, 0.0329, 0.0043,
               0.0025, 0.0448, 0.0054, 0.0119, 0.0021, 0.0190, 0.0055, 0.0063, 0.0073,
               0.0059, 0.0189], grad_fn=<...>)

Which is awesome: we got back the 5 important inputs as expected!

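We can also easily extract the clusters using the representation layer and plot them:

    import matplotlib.pyplot as plt
    from sklearn.decomposition import PCA

    centroids, labels = trainer.get_clusters(dataset)

    # Assumption: `components` is a 2-D PCA projection of the raw inputs X;
    # the original snippet does not show how it was computed.
    components = PCA(n_components=2).fit_transform(X)

    plt.figure(figsize=(8, 6))
    plt.scatter(
        components[:, 0],
        components[:, 1],
        c=labels,
        cmap='tab10',
        s=20,
        alpha=0.7
    )
    plt.xlabel('Principal Component 1')
    plt.ylabel('Principal Component 2')
    plt.title('PCA of Synthetic Dataset')
    plt.colorbar(label='Predicted Cluster')  # colors come from get_clusters, not ground truth
    plt.tight_layout()
    plt.show()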

And boom, that’s all!

Conclusion

Though DeepType won’t be the right tool for every problem, it offers a powerful way to integrate domain knowledge into the clustering process. So if you find yourself with a meaningful loss function and a desire to uncover structure in your data, give DeepType a shot!

Please contact [email protected] for any inquiries. All images by author unless stated otherwise.

¹ Biologists have determined a set of cancer subtypes for the broader category of breast cancer. Though I’m no expert, it’s safe to assume that these subtypes were identified by biologists for a reason. The authors trained their model to predict the subtype for a patient, which provided the biological context necessary to produce novel, interesting clusters. Given the goal, though, I’m not sure why the authors chose to predict subtypes instead of patient outcomes directly. In fact, I bet the results from such an experiment would be interesting.

² The norm introduced is defined as

We transpose w since we want to penalize the columns of the weight matrix rather than the rows. This is important because in a fully connected neural network layer, each column of the weight matrix corresponds to an input feature. By applying the ℓ2,1 norm to the transposed matrix, we encourage entire input features to be zeroed out, promoting feature-level sparsity.
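For reference, a common row-wise definition of the ℓ2,1 norm, which is consistent with the transposition argument above, is sketched below. The indexing is an assumption: conventions differ between sources on whether the inner norm runs over rows or columns, and here w has one column per input feature.

$$
\lVert A \rVert_{2,1} \;=\; \sum_{i} \sqrt{\sum_{j} A_{ij}^{2}}
\qquad\Longrightarrow\qquad
\lVert w^{\top} \rVert_{2,1} \;=\; \sum_{j} \sqrt{\sum_{k} w_{kj}^{2}}
$$

Each term of the outer sum is the Euclidean norm of all the weights attached to one input feature, so driving a term to zero removes that feature entirely.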

Cover image source: here