Introduction

What if we could make language models think more like humans? Instead of writing one word at a time, what if they could sketch out their thoughts first, and gradually refine them?

This is exactly what Large Language Diffusion Models (LLaDA) introduces: a different approach to the text generation currently used in Large Language Models (LLMs). Unlike traditional autoregressive models (ARMs), which predict text sequentially, left to right, LLaDA leverages a diffusion-like process to generate text. Instead of generating tokens sequentially, it progressively refines masked text until it forms a coherent response.

In this article, we’ll dive into how LLaDA works, why it matters, and how it could shape the next generation of LLMs.

I hope you enjoy the article!

The current state of LLMs

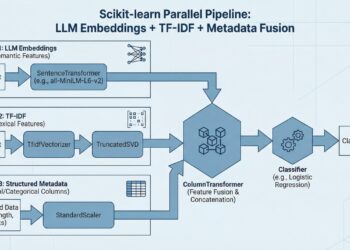

To appreciate the innovation that LLaDA represents, we first need to understand how current large language models (LLMs) operate. Modern LLMs follow a two-step training process that has become an industry standard:

- Pre-training: The model learns general language patterns and knowledge by predicting the next token in massive text datasets through self-supervised learning.

- Supervised Fine-Tuning (SFT): The model is refined on carefully curated data to improve its ability to follow instructions and generate useful outputs.

Note that current LLMs often use RLHF as well to further refine the weights of the model, but this is not used by LLaDA so we’ll skip this step here.

These models, based on the Transformer architecture, generate text one token at a time using next-token prediction.

Here is a simplified illustration of how data passes through such a model. Each token is embedded into a vector and is transformed through successive transformer layers. In current LLMs (LLaMA, ChatGPT, DeepSeek, etc.), a classification head is used only on the last token embedding to predict the next token in the sequence.

This works thanks to the concept of masked self-attention: each token attends to all the tokens that come before it. We will see later how LLaDA can get rid of the mask in its attention layers.
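To make the attention mask concrete, here is a minimal sketch in plain Python. The function names `causal_mask` and `full_mask` are my own, purely for illustration: entry `[i][j]` says whether token `i` is allowed to attend to token `j`. In this sketch a token can also attend to itself, which is an assumption of the illustration.

```python
def causal_mask(seq_len):
    """Masked self-attention: token i may attend to token j only if j <= i."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def full_mask(seq_len):
    """No mask: every token may attend to every other token."""
    return [[True] * seq_len for _ in range(seq_len)]


# Print both patterns for a 4-token sequence (1 = allowed, 0 = blocked).
for name, mask in (("causal", causal_mask(4)), ("full", full_mask(4))):
    print(name)
    for row in mask:
        print(" ".join("1" if allowed else "0" for allowed in row))
```

The causal pattern is lower-triangular, while the full pattern is what a model without the attention mask would use.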

If you want to learn more about Transformers, check out my article here.

While this approach has led to impressive results, it also comes with significant limitations, some of which have motivated the development of LLaDA.

Current limitations of LLMs

Current LLMs face several critical challenges:

Computational Inefficiency

Imagine having to write a novel where you can only think about one word at a time, and for each word, you need to reread everything you’ve written so far. This is essentially how current LLMs operate: they predict one token at a time, requiring a complete processing of the previous sequence for each new token. Even with optimization techniques like KV caching, this process is quite computationally expensive and time-consuming.

Limited Bidirectional Reasoning

Traditional autoregressive models (ARMs) are like writers who could never look ahead or revise what they’ve written so far. They can only predict future tokens based on past ones, which limits their ability to reason about relationships between different parts of the text. As humans, we often have a general idea of what we want to say before writing it down; current LLMs lack this capability in some sense.

Amount of data

Existing models require enormous amounts of training data to achieve good performance, making them resource-intensive to develop and potentially limiting their applicability in specialized domains with limited data availability.

What is LLaDA

LLaDA introduces a fundamentally different approach to language generation by replacing traditional autoregression with a “diffusion-based” process (we’ll dive later into why this is called “diffusion”).

Let’s understand how this works, step by step, starting with pre-training.

LLaDA pre-training

Remember that we don’t need any “labeled” data during the pre-training phase. The objective is to feed a very large amount of raw text data into the model. For each text sequence, we do the following:

- We fix a maximum length (similar to ARMs). Typically, this could be 4096 tokens. 1% of the time, the lengths of sequences are randomly sampled between 1 and 4096 and padded so that the model is also exposed to shorter sequences.

- We randomly choose a “masking rate”. For example, one could pick 40%.

- We mask each token with a probability of 0.4. What does “masking” mean exactly? Well, we simply replace the token with a special token: `<MASK>`. As with any other token, this token is associated with a particular index and embedding vector that the model can process and interpret during training.

- We then feed our entire sequence into our transformer-based model. This process transforms all the input embedding vectors into new embeddings. We apply the classification head to each of the masked tokens to get a prediction for each. Mathematically, our loss function averages cross-entropy losses over all the masked tokens in the sequence.

- And… we repeat this procedure for billions or trillions of text sequences.
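The masking and loss steps above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: `mask_sequence`, `masked_cross_entropy`, and `uniform_predictor` are hypothetical names, the "model" is a stand-in that predicts a uniform distribution over a tiny vocabulary, and the loss is the simple average over masked positions described above (the paper's exact normalization is not reproduced here).

```python
import math
import random

MASK = "<MASK>"  # placeholder string standing in for the special mask token


def mask_sequence(tokens, masking_rate, rng):
    """Independently replace each token with MASK with probability masking_rate."""
    return [MASK if rng.random() < masking_rate else tok for tok in tokens]


def masked_cross_entropy(tokens, masked, predict_proba):
    """Average -log p(true token) over the masked positions only."""
    losses = [
        -math.log(predict_proba(masked, i)[tokens[i]])
        for i, tok in enumerate(masked)
        if tok == MASK
    ]
    return sum(losses) / len(losses) if losses else 0.0


tokens = ["the", "cat", "sat", "on", "the", "mat"]
VOCAB = sorted(set(tokens))


def uniform_predictor(masked, position):
    """Toy stand-in for the model: same probability for every vocabulary entry."""
    return {tok: 1.0 / len(VOCAB) for tok in VOCAB}


rng = random.Random(0)
masked = mask_sequence(tokens, masking_rate=0.4, rng=rng)
loss = masked_cross_entropy(tokens, masked, uniform_predictor)
print(masked, loss)
```

A real model would replace `uniform_predictor` with the classification head applied to the embedding at each masked position.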

Note that, unlike ARMs, LLaDA can fully utilize bidirectional dependencies in the text: it doesn’t require masking in attention layers anymore. However, this can come at an increased computational cost.

Hopefully, you can see how the training phase itself (the flow of the data into the model) is very similar to that of any other LLM. We simply predict randomly masked tokens instead of predicting what comes next.

LLaDA SFT

For auto-regressive models, SFT is very similar to pre-training, except that we have pairs of (prompt, response) and want to generate the response when giving the prompt as input.

This is exactly the same concept for LLaDA! Mimicking the pre-training process: we simply pass the prompt and the response, mask random tokens from the response only, and feed the full sequence into the model, which will predict missing tokens from the response.
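As a small sketch of the only difference with pre-training, the snippet below masks tokens from the response and leaves the prompt untouched. The function name `mask_response_only` and the example tokens are hypothetical, chosen for illustration.

```python
import random

MASK = "<MASK>"  # placeholder string standing in for the special mask token


def mask_response_only(prompt, response, masking_rate, rng):
    """Return prompt + response where only response tokens may be masked."""
    masked_response = [
        MASK if rng.random() < masking_rate else tok for tok in response
    ]
    return list(prompt) + masked_response


prompt = ["What", "is", "2", "+", "2", "?"]
response = ["The", "answer", "is", "4"]
model_input = mask_response_only(prompt, response, masking_rate=0.5, rng=random.Random(0))
print(model_input)
```

The loss would then be computed on the masked response positions only, exactly as in pre-training.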

The innovation in inference

Inference is where LLaDA gets more interesting, and truly makes use of the “diffusion” paradigm.

Until now, we always randomly masked some text as input and asked the model to predict these tokens. But during inference, we only have access to the prompt and we need to generate the entire response. You might think (and it’s not wrong) that the model has seen examples where the masking rate was very high (potentially 1) during SFT, and it had to learn, somehow, how to generate a full response from a prompt.

However, generating the full response at once during inference will likely produce very poor results because the model lacks information. Instead, we need a method to progressively refine predictions, and that’s where the key idea of “remasking” comes in.

Here is how it works, at each step of the text generation process:

- Feed the current input to the model (this is the prompt, followed by `<MASK>` tokens).

- The model generates one embedding for each input token. We get predictions for the `<MASK>` tokens only. And here is the important step: we remask a portion of them. In particular, we only keep the “best” tokens, i.e. the ones with the best predictions, with the highest confidence.

- We can use this partially unmasked sequence as input in the next generation step and repeat until all tokens are unmasked.

You can see that, interestingly, we have much more control over the generation process compared to ARMs: we could choose to remask 0 tokens (only one generation step), or we could decide to keep only the best token every time (as many steps as tokens in the response). Obviously, there is a trade-off here between the quality of the predictions and inference time.

Let’s illustrate that with a simple example (in that case, I choose to keep the best 2 tokens at every step).
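A toy version of this loop, keeping the best 2 predictions at every step, might look like the sketch below. Everything here is hypothetical and for illustration only: `generate` is my own helper, and `toy_predict` stands in for the model by returning a fixed token and a fixed confidence for each masked position.

```python
MASK = "<MASK>"  # placeholder string standing in for the special mask token


def generate(prompt, response_len, predict, keep_per_step):
    """Predict every masked slot, keep the most confident ones, remask the rest."""
    if keep_per_step < 1:
        raise ValueError("keep_per_step must be at least 1")
    seq = list(prompt) + [MASK] * response_len
    steps = 0
    while MASK in seq:
        # predict returns {position: (token, confidence)} for every masked position
        preds = predict(seq)
        best = sorted(preds, key=lambda pos: preds[pos][1], reverse=True)[:keep_per_step]
        for pos in best:
            seq[pos] = preds[pos][0]  # every other position stays masked
        steps += 1
    return seq, steps


TARGET = ["the", "cat", "sat", "down"]
CONFIDENCE = [0.9, 0.4, 0.7, 0.2]


def toy_predict(seq):
    """Toy stand-in for the model: fixed token and confidence per response slot."""
    offset = len(seq) - len(TARGET)
    return {
        i: (TARGET[i - offset], CONFIDENCE[i - offset])
        for i, tok in enumerate(seq)
        if tok == MASK
    }


seq, steps = generate(["Q:"], response_len=4, predict=toy_predict, keep_per_step=2)
print(seq, steps)
```

With these toy confidences, the first step keeps “the” and “sat”, and the second step fills in “cat” and “down”. Setting `keep_per_step=4` finishes in one step, and `keep_per_step=1` takes four, which is the trade-off described above.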

Note that, in practice, the remasking step would work as follows. Instead of remasking a fixed number of tokens, we would remask a proportion of s/t tokens over time, from t=1 down to 0, where s is in [0, t]. In particular, this means we remask fewer and fewer tokens as the number of generation steps increases.

Example: if we want N sampling steps (so N discrete steps from t=1 down to t=1/N with steps of 1/N), taking s = (t-1/N) is a good choice, and ensures that s=0 at the end of the process.
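To see what this schedule does in numbers, here is a small sketch that lists t, s, and the remasked proportion s/t for each step. The function name `remasking_schedule` is hypothetical, and I use exact fractions to avoid floating-point noise.

```python
from fractions import Fraction


def remasking_schedule(num_steps):
    """Return (t, s, s/t) for each of the num_steps steps, with s = t - 1/N."""
    rows = []
    for k in range(num_steps):
        t = Fraction(num_steps - k, num_steps)  # t goes 1, 1 - 1/N, ..., 1/N
        s = t - Fraction(1, num_steps)
        rows.append((t, s, s / t))
    return rows


for t, s, ratio in remasking_schedule(4):
    print(f"t={t}  s={s}  remasked proportion s/t={ratio}")
```

With N = 4, the remasked proportion goes 3/4, 2/3, 1/2, and finally 0, so nothing is remasked at the last step.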

The image below summarizes the three steps described above. “Mask predictor” simply denotes the LLM (LLaDA), predicting masked tokens.

Can autoregression and diffusion be combined?

Another clever idea developed in LLaDA is to combine diffusion with traditional autoregressive generation to use the best of both worlds! This is called semi-autoregressive diffusion.

- Divide the generation process into blocks (for instance, 32 tokens in each block).

- The objective is to generate one block at a time (like we would generate one token at a time in ARMs).

- For each block, we apply the diffusion logic by progressively unmasking tokens to reveal the entire block. Then move on to predicting the next block.
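The block-by-block procedure above can be sketched as follows. This is an illustration under assumptions, not the paper's implementation: `generate_in_blocks` and `toy_predict` are hypothetical names, the toy predictor returns fixed tokens and confidences, and the function also records the order in which positions get unmasked so we can inspect it.

```python
MASK = "<MASK>"  # placeholder string standing in for the special mask token


def generate_in_blocks(prompt, response_len, block_len, predict, keep_per_step=1):
    """Unmask the response block by block, most confident predictions first."""
    if block_len < 1 or keep_per_step < 1:
        raise ValueError("block_len and keep_per_step must be at least 1")
    seq = list(prompt) + [MASK] * response_len
    order = []  # positions in the order they were unmasked
    for block_start in range(len(prompt), len(seq), block_len):
        block = range(block_start, min(block_start + block_len, len(seq)))
        while any(seq[i] == MASK for i in block):
            preds = predict(seq)  # {position: (token, confidence)} for masked slots
            masked_in_block = [i for i in block if seq[i] == MASK]
            best = sorted(masked_in_block, key=lambda i: preds[i][1], reverse=True)
            for i in best[:keep_per_step]:
                seq[i] = preds[i][0]
                order.append(i)
    return seq, order


TARGET = ["the", "cat", "sat", "down"]
CONFIDENCE = [0.2, 0.4, 0.9, 0.7]


def toy_predict(seq):
    """Toy stand-in for the model: fixed token and confidence per response slot."""
    offset = len(seq) - len(TARGET)
    return {
        i: (TARGET[i - offset], CONFIDENCE[i - offset])
        for i, tok in enumerate(seq)
        if tok == MASK
    }


seq, order = generate_in_blocks(["Q:"], response_len=4, block_len=2, predict=toy_predict)
print(seq, order)
```

With blocks of 2 tokens, the first block is completed before the second one starts, even though the toy model is more confident about later positions. With a single block of 4 tokens, the most confident positions anywhere in the response would be filled first.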

This is a hybrid approach: we probably lose some of the “backward” generation and parallelization capabilities of the model, but we better “guide” the model towards the final output.

I think this is a very interesting idea because it depends a lot on a hyperparameter (the number of blocks) that can be tuned. I imagine different tasks might benefit more from the backward generation process, while others might benefit more from the more “guided” generation from left to right (more on that in the last paragraph).

Why “Diffusion”?

I think it’s important to briefly explain where this term actually comes from. It reflects a similarity with image diffusion models (like DALL-E), which have been very popular for image generation tasks.

In image diffusion, a model first adds noise to an image until it’s unrecognizable, then learns to reconstruct it step by step. LLaDA applies this idea to text by masking tokens instead of adding noise, and then progressively unmasking them to generate coherent language. In the context of image generation, the masking step is often called “noise scheduling”, and the reverse (remasking) is the “denoising” step.

You can also see LLaDA as some type of discrete (non-continuous) diffusion model: we don’t add noise to tokens, but we “deactivate” some tokens by masking them, and the model learns how to unmask a portion of them.

Results

Let’s go through a few of the interesting results of LLaDA.

You can find all the results in the paper. I chose to focus on what I find the most interesting here.

- Training efficiency: LLaDA shows similar performance to ARMs with the same number of parameters, but uses far fewer tokens during training (and no RLHF)! For example, the 8B version uses around 2.3T tokens, compared to 15T for LLaMA 3.

- Using different block and answer lengths for different tasks: for example, the block length is particularly large for the Math dataset, and the model demonstrates strong performance for this domain. This could suggest that mathematical reasoning may benefit more from the diffusion-based and backward process.

- Interestingly, LLaDA does better on the “Reversal poem completion task”. This task requires the model to complete a poem in reverse order, starting from the last lines and working backward. As expected, ARMs struggle due to their strict left-to-right generation process.

LLaDA is not just an experimental alternative to ARMs: it shows real advantages in efficiency, structured reasoning, and bidirectional text generation.

Conclusion

I think LLaDA is a promising approach to language generation. Its ability to generate multiple tokens in parallel while maintaining global coherence could definitely lead to more efficient training, better reasoning, and improved context understanding with fewer computational resources.

Beyond efficiency, I think LLaDA also brings a lot of flexibility. By adjusting parameters like the number of blocks generated and the number of generation steps, it can better adapt to different tasks and constraints, making it a versatile tool for various language modeling needs, and allowing more human control. Diffusion models could also play an important role in proactive AI and agentic systems by being able to reason more holistically.

As research into diffusion-based language models advances, LLaDA could become a useful step toward more natural and efficient language models. While it’s still early, I believe this shift from sequential to parallel generation is an interesting direction for AI development.

Thanks for reading!


References:

- [1] Liu, C., Wu, J., Xu, Y., Zhang, Y., Zhu, X., & Song, D. (2024). Large Language Diffusion Models. arXiv preprint arXiv:2502.09992. https://arxiv.org/pdf/2502.09992

- [2] Yang, Ling, et al. “Diffusion models: A comprehensive survey of methods and applications.” ACM Computing Surveys 56.4 (2023): 1–39.

- [3] Alammar, J. (2018, June 27). The Illustrated Transformer. Jay Alammar’s Blog. https://jalammar.github.io/illustrated-transformer/