In my previous post, I covered Prompt Caching: what it is, how it works, and how it can save you a lot of money and time when running AI-powered apps with high traffic. In today's post, I walk you through implementing Prompt Caching specifically using OpenAI's API, and we discuss some common pitfalls.

A brief reminder on Prompt Caching

Before getting our hands dirty, let's briefly revisit what exactly Prompt Caching is. Prompt Caching is a feature offered by frontier model API services, like the OpenAI API or Claude's API, that allows caching and reusing the parts of the LLM's input that are repeated frequently. Such repeated parts may be system prompts or instructions that are passed to the model on every call of an AI app, alongside variable content like the user's query or information retrieved from a knowledge base. To hit the cache, the repeated parts of the prompt must sit at the very beginning of it, forming a prompt prefix. In addition, for prompt caching to be activated, the prompt must reach a certain size threshold (e.g., for OpenAI the prompt should be at least 1,024 tokens long, while Claude has different minimum cache lengths for different models). As long as these two conditions are satisfied (repeated tokens placed as a prefix, and a size above the threshold defined by the API service and model), caching can be activated to achieve economies of scale when running AI apps.

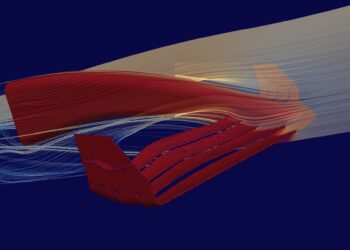

Unlike caching in other components of a RAG or other AI app, prompt caching operates at the token level, inside the internal procedures of the LLM. In particular, LLM inference takes place in two steps:

- Pre-fill, that is, the LLM processes the entire input prompt in order to generate the first output token, and

- Decoding, that is, the LLM autoregressively generates the remaining output tokens one by one

In short, prompt caching stores the computations that take place in the pre-fill stage, so the model doesn't need to recompute them when the same prefix reappears. Any computations taking place in the decoding phase, even if repeated, aren't going to be cached.
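To make the pre-fill idea more concrete, here is a toy sketch. It is not how a real LLM cache is implemented: the class `ToyPrefillCache` is hypothetical, "tokens" are just whitespace-separated words, and "processing" a token only means remembering that its prefix was seen. It only illustrates that a repeated prefix leaves just the new suffix to be processed.

```python
from typing import List, Set, Tuple


class ToyPrefillCache:
    """Toy stand-in for a prefill cache: remembers which token prefixes were processed."""

    def __init__(self) -> None:
        self._seen: Set[Tuple[str, ...]] = set()

    def prefill(self, tokens: List[str]) -> int:
        """Return how many tokens had to be processed from scratch."""
        # Find the longest prefix of this prompt that was already processed.
        cached_len = 0
        for n in range(len(tokens), 0, -1):
            if tuple(tokens[:n]) in self._seen:
                cached_len = n
                break
        # "Process" the remaining tokens and remember every new prefix.
        for n in range(cached_len + 1, len(tokens) + 1):
            self._seen.add(tuple(tokens[:n]))
        return len(tokens) - cached_len


cache = ToyPrefillCache()
prefix = "You are a helpful assistant".split()

# First request: nothing is cached, so all 8 tokens are processed.
print(cache.prefill(prefix + ["What", "is", "overfitting?"]))
# Second request: only the last token differs, so a single token is processed.
print(cache.prefill(prefix + ["What", "is", "regularization?"]))
```

Note that nothing about the generated output appears in this sketch: only the input side is ever reused.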

For the rest of the post, I will be focusing solely on the use of prompt caching in the OpenAI API.

What about the OpenAI API?

In OpenAI's API, prompt caching was first introduced on October 1, 2024. Initially, it offered a 50% discount on cached tokens, but nowadays this discount goes up to 90%. On top of this, hitting the prompt cache can reduce latency by up to 80%.

When prompt caching is activated, the API service attempts to hit the cache for a submitted request by routing the prompt to an appropriate machine, where the respective cache is expected to exist. This is called cache routing, and to do this, the API service typically uses a hash of the first 256 tokens of the prompt.
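As a rough illustration of this routing idea, the sketch below hashes the first 256 "tokens" of a prompt and maps the digest to a machine index. Everything here is an assumption made for illustration: the helpers `routing_key` and `pick_machine` are hypothetical, tokens are plain strings rather than real model tokens, and OpenAI's actual routing logic is internal and not public.

```python
import hashlib
from typing import List

ROUTING_PREFIX_TOKENS = 256  # figure quoted in the text above


def routing_key(tokens: List[str], n: int = ROUTING_PREFIX_TOKENS) -> str:
    """Hash the first n tokens of a prompt into a hex digest."""
    head = "\x1f".join(tokens[:n])  # unit separator keeps token boundaries unambiguous
    return hashlib.sha256(head.encode("utf-8")).hexdigest()


def pick_machine(tokens: List[str], machines: int = 8) -> int:
    """Map a prompt to one of `machines` hypothetical servers."""
    return int(routing_key(tokens), 16) % machines


shared = ["tok"] * 300                  # long static prefix
request_a = shared + ["question-1"]
request_b = shared + ["question-2"]
request_c = ["user-42"] + shared        # dynamic content placed first

# Same first 256 tokens, so both requests land on the same machine.
print(pick_machine(request_a) == pick_machine(request_b))
# A different first token changes the routing key entirely.
print(routing_key(request_a) == routing_key(request_c))
```

The takeaway is that two requests can only reach the same cache if their opening tokens are identical, which is why the very start of the prompt matters so much.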

Beyond this, the API also allows explicitly setting the prompt_cache_key parameter in the request to the model. This is a single key identifying which cache we are referring to, aiming to further increase the chances of our prompt being routed to the right machine and hitting the cache.

In addition, the OpenAI API provides two distinct types of caching with regard to duration, defined through the prompt_cache_retention parameter. These are:

- In-memory prompt cache retention: This is essentially the default type of caching, available for all models that support prompt caching. With the in-memory cache, cached data remains active for about 5-10 minutes of inactivity between requests.

- Extended prompt cache retention: This is available for specific models. The extended cache keeps data cached for longer, up to a maximum of 24 hours.

Now, with regard to how much all this costs, OpenAI charges the same price per (non-cached) input token, whether prompt caching is activated or not. If we manage to hit the cache successfully, we are billed for the cached tokens at a considerably discounted price, with a discount of up to 90%. Moreover, the price per input token remains the same for both in-memory and extended cache retention.
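The billing logic above boils down to simple arithmetic, sketched below. The helper `input_cost_usd` is hypothetical, and the price of 1.00 USD per million input tokens is a made-up round number used only for illustration; real prices and discounts depend on the model, so check the current pricing page.

```python
def input_cost_usd(
    input_tokens: int,
    cached_tokens: int,
    price_per_million: float,
    cached_discount: float = 0.90,  # "up to 90%" per the text; varies by model
) -> float:
    """Input-side cost of one request when part of the input was served from cache."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached_tokens = input_tokens - cached_tokens
    cached_price = price_per_million * (1 - cached_discount)
    total = uncached_tokens * price_per_million + cached_tokens * cached_price
    return total / 1_000_000


PRICE = 1.00  # hypothetical USD per 1M input tokens

miss = input_cost_usd(5_000, 0, PRICE)      # cache miss: everything at full price
hit = input_cost_usd(5_000, 4_000, PRICE)   # cache hit on 4,000 of 5,000 tokens

print(f"miss: ${miss:.4f}, hit: ${hit:.4f}")
```

With these illustrative numbers, the input cost drops from $0.0050 to $0.0014 per request; output tokens are billed separately and are unaffected.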

Prompt Caching in Practice

So, let's see how prompt caching actually works with a simple Python example using OpenAI's API service. More specifically, we are going to run a realistic scenario where a long system prompt (prefix) is reused across multiple requests. If you are here, I guess you already have your OpenAI API key in place and have installed the required libraries. So, the first thing to do is import the OpenAI library, as well as time for measuring latency, and initialize an instance of the OpenAI client:

from openai import OpenAI
import time

# Create the API client (replace the placeholder with your own key)
client = OpenAI(api_key="your_api_key_here")

Then we can define our prefix (the tokens that are going to be repeated and that we are aiming to cache):

long_prefix = """
You are a highly knowledgeable assistant specialized in machine learning.
Answer questions with detailed, structured explanations, including examples when relevant.
""" * 200

Notice how we artificially increase the length (multiplying by 200) to make sure the 1,024-token caching threshold is met. Then we also set up a timer to measure our latency savings, and we are finally ready to make our call:

# First request: the prefix has not been seen before, so this is a cache miss
start = time.time()
response1 = client.responses.create(
    model="gpt-4.1-mini",
    input=long_prefix + "What is overfitting in machine learning?"
)
end = time.time()

print("First response time:", round(end - start, 2), "seconds")
print(response1.output[0].content[0].text)

So, what do we expect to happen from here? For models from gpt-4o onward, prompt caching is activated by default, and since our 4,616 input tokens are well above the 1,024-token threshold, we are good to go. What this request does is first check whether the input is a cache hit (it is not, since this is the first time we send a request with this prefix), and since it is not, it processes the entire input and then caches it. The next time we send an input whose initial tokens match the cached input, we are going to get a cache hit. Let's check this in practice by making a second request with the same prefix:

# Second request: same prefix, different question, so the prefix can be served from cache
start = time.time()
response2 = client.responses.create(
    model="gpt-4.1-mini",
    input=long_prefix + "What is regularization?"
)
end = time.time()

print("Second response time:", round(end - start, 2), "seconds")
print(response2.output[0].content[0].text)

Indeed! The second request runs significantly faster (15.37 seconds, versus 23.31 seconds for the first one). This is because the model has already done the calculations for the cached prefix and only needs to process from scratch the new part, "What is regularization?". As a result, by using prompt caching, we get significantly lower latency and reduced cost, since cached tokens are discounted.
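Total response time also includes decoding, which varies with the length of the generated answer, so timing alone is only an indirect signal. A more direct check is the usage block returned with the response. The sketch below assumes the Responses API exposes the cached count as `usage.input_tokens_details.cached_tokens`; double-check the field names against your SDK version. The helper `cached_token_stats` is hypothetical, and the stub values are invented so the snippet runs without an API call. They are not measurements.

```python
from types import SimpleNamespace


def cached_token_stats(usage) -> dict:
    """Summarize how much of a request's input was served from the prompt cache."""
    details = getattr(usage, "input_tokens_details", None)
    cached = getattr(details, "cached_tokens", 0) or 0
    total = getattr(usage, "input_tokens", 0) or 0
    return {
        "input_tokens": total,
        "cached_tokens": cached,
        "cached_fraction": cached / total if total else 0.0,
    }


# Offline stand-in for `response2.usage`; the numbers are illustrative only.
fake_usage = SimpleNamespace(
    input_tokens=5000,
    input_tokens_details=SimpleNamespace(cached_tokens=4000),
)

stats = cached_token_stats(fake_usage)
print(stats)
```

In a real run, you would call `cached_token_stats(response1.usage)` and `cached_token_stats(response2.usage)`: a cache miss should report zero cached tokens, while a hit should report a large share of the input as cached.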

Another thing mentioned in the OpenAI documentation, which we have already talked about, is the prompt_cache_key parameter. In particular, according to the documentation, we can explicitly define a prompt cache key when making a request, and in this way mark the requests that should use the same cache. However, I tried to include it in my example by adjusting the request parameters accordingly, but didn't have much luck:

response1 = client.responses.create(
    prompt_cache_key="prompt_cache_test1",
    model="gpt-5.1",
    input=long_prefix + "What is overfitting in machine learning?"
)

🤔

It seems that while prompt_cache_key exists in the API capabilities, it was not exposed in the version of the Python SDK I was using. In other words, I could not explicitly control cache reuse in this setup, and caching remained automatic and best-effort. If you run into the same error, it may be worth checking whether a newer SDK release accepts the parameter.

So, what can go wrong?

Activating prompt caching and actually hitting the cache seems fairly straightforward from what we have said so far. So, what may go wrong and result in a cache miss? Unfortunately, a lot of things. As simple as it is, prompt caching relies on several assumptions being in place. Missing even one of those prerequisites is going to result in a cache miss. But let's take a closer look!

One obvious miss is having a prefix that falls below the threshold for activating prompt caching, namely, fewer than 1,024 tokens. However, this is easily solvable: we can always artificially increase the prefix token count by multiplying it by an appropriate value, as shown in the example above.

Another pitfall is silently breaking the prefix. In particular, even when we use persistent instructions and system prompts of appropriate size across all requests, we must be exceptionally careful not to break the prefix by adding any variable content at the beginning of the model's input, before the prefix. That is a guaranteed way to break the cache, no matter how long and repeated the following prefix is. The usual suspects for this pitfall are dynamic data, for instance, a user ID or a timestamp prepended to the prompt. Thus, a best practice to follow across all AI app development is that any dynamic content should always be appended at the end of the prompt, never at the beginning.
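Here is a small sketch of the difference. The helpers `cache_friendly`, `cache_breaking`, and `shared_prefix_chars` are hypothetical and written only for this illustration. They compare prompts by characters rather than tokens, which is enough to show where two requests start to diverge.

```python
from os.path import commonprefix

STATIC_PREFIX = (
    "You are a highly knowledgeable assistant specialized in machine learning.\n" * 3
)


def cache_friendly(user_id: str, question: str) -> str:
    """Static instructions first, dynamic content last."""
    return f"{STATIC_PREFIX}\n[user: {user_id}]\n{question}"


def cache_breaking(user_id: str, question: str) -> str:
    """Dynamic content first: every request starts differently."""
    return f"[user: {user_id}]\n{STATIC_PREFIX}\n{question}"


def shared_prefix_chars(a: str, b: str) -> int:
    """Length of the longest common leading substring of two prompts."""
    return len(commonprefix([a, b]))


good = shared_prefix_chars(
    cache_friendly("alice", "What is overfitting?"),
    cache_friendly("bob", "What is regularization?"),
)
bad = shared_prefix_chars(
    cache_breaking("alice", "What is overfitting?"),
    cache_breaking("bob", "What is regularization?"),
)

print("shared prefix, dynamic content last:", good, "characters")
print("shared prefix, dynamic content first:", bad, "characters")
```

With the dynamic content last, the two prompts share the whole static prefix; with the user ID first, they diverge after only a handful of characters, so there is nothing meaningful left to reuse.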

Finally, it is worth highlighting that prompt caching only concerns the pre-fill phase; decoding is never cached. This means that even if we force the model to generate responses following a specific template that begins with certain fixed tokens, those tokens aren't going to be cached, and we are going to be billed for their processing as usual.

Conversely, for certain use cases, it doesn't really make sense to use prompt caching. Such cases are highly dynamic prompts, like chatbots with little repetition, one-off requests, or real-time personalized systems.

. . .

On my mind

Prompt caching can significantly improve the performance of AI applications in terms of both cost and time. In particular, when looking to scale AI apps, prompt caching comes in extremely handy for keeping cost and latency at acceptable levels.

For OpenAI's API, prompt caching is activated by default, and the cost of non-cached input tokens is the same whether prompt caching is active or not. Thus, one can only win by using prompt caching and aiming to hit the cache on every request, even if some requests miss.

Claude also provides extensive prompt caching functionality through its API, which we are going to explore in detail in a future post.

Thanks for reading! 🙂

. . .


All images by the author, unless mentioned otherwise.