Open Food Facts has tried to solve this issue for years using Regular Expressions and existing solutions such as Elasticsearch's corrector, without success. Until recently.

Thanks to the latest advancements in artificial intelligence, we now have access to powerful Large Language Models, also called LLMs.

By training our own model, we created the Ingredients Spellcheck and managed to not only outperform proprietary LLMs such as GPT-4o or Claude 3.5 Sonnet on this task, but also to reduce the number of unrecognized ingredients in the database by 11%.

This article walks you through the different stages of the project and shows you how we managed to improve the quality of the database using Machine Learning.

Enjoy the reading!

When a product is added by a contributor, its pictures go through a series of processes to extract all relevant information. One crucial step is the extraction of the list of ingredients.

When a word is identified as an ingredient, it is cross-referenced with a taxonomy that contains a predefined list of recognized ingredients. If the word matches an entry in the taxonomy, it is tagged as an ingredient and added to the product's information.

This tagging process ensures that ingredients are standardized and easily searchable, providing accurate data for consumers and analysis tools.

But if an ingredient is not recognized, the process fails.

For this reason, we introduced an additional layer to the process: the Ingredients Spellcheck, designed to correct ingredient lists before they are processed by the ingredient parser.

A simpler approach would be the Peter Norvig algorithm, which processes each word by applying a series of character deletions, additions, and replacements to identify potential corrections.
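To make the idea concrete, here is a minimal Python sketch of that candidate-generation step. It covers only the three edit types named in the paragraph above (Norvig's original also includes transpositions and ranks candidates by word frequency). The `vocabulary` set and the `correct` helper are illustrative assumptions, not the project's code.

```python
import string


def edits1(word: str, alphabet: str = string.ascii_lowercase) -> set[str]:
    """Return every string one deletion, replacement, or insertion away from `word`."""
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [left + right[1:] for left, right in splits if right]
    replaces = [left + c + right[1:] for left, right in splits if right for c in alphabet]
    inserts = [left + c + right for left, right in splits for c in alphabet]
    return set(deletes + replaces + inserts)


def correct(word: str, vocabulary: set[str]) -> str:
    """Return a known word at most one edit away, or the input unchanged."""
    if word in vocabulary:
        return word
    candidates = edits1(word) & vocabulary
    # Hypothetical tie-break: alphabetical order (Norvig uses word frequencies).
    return min(candidates) if candidates else word


vocabulary = {"salt", "flour", "sugar"}  # toy vocabulary, for illustration only
print(correct("slt", vocabulary))     # a missing letter is restored
print(correct("(1!2%)", vocabulary))  # no known word within one edit: returned as-is
```

Because the function works one word at a time against a fixed alphabet and vocabulary, it has no notion of punctuation, percentages, or the language of the list.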

However, this method proved to be insufficient for our use case, for several reasons:

- Special Characters and Formatting: Elements like commas, brackets, and percentage signs hold critical importance in ingredient lists, influencing product composition and allergen labeling (e.g., "salt (1.2%)").

- Multilingual Challenges: the database contains products from all over the world with a wide variety of languages. This further complicates a basic character-based approach like Norvig's, which is language-agnostic.

Instead, we turned to the latest advancements in Machine Learning, particularly Large Language Models (LLMs), which excel in a wide variety of Natural Language Processing (NLP) tasks, including spelling correction.

This is the path we decided to take.

You can't improve what you don't measure.

What is a good correction? And how do we measure the performance of the corrector, LLM or non-LLM?

Our first step is to understand and catalog the diversity of errors the Ingredient Parser encounters.

Additionally, it is essential to assess whether an error should even be corrected in the first place. Sometimes, trying to correct mistakes could do more harm than good:

flour, salt (1!2%)

# Is it 1.2% or 12%?...

For these reasons, we created the Spellcheck Guidelines, a set of rules that limits the corrections. These guidelines will serve us in many ways throughout the project, from the dataset generation to the model evaluation.

The guidelines were notably used to create the Spellcheck Benchmark, a curated dataset containing approximately 300 manually corrected lists of ingredients.

This benchmark is the cornerstone of the project. It enables us to evaluate any solution, Machine Learning or simple heuristic, on our use case.

It goes alongside the Evaluation algorithm, a custom solution we developed that transforms a set of corrections into measurable metrics.

The Evaluation Algorithm

Most of the existing metrics and evaluation algorithms for text-related tasks compute the similarity between a reference and a prediction, such as BLEU or ROUGE scores for language translation or summarization.

However, in our case, these metrics fall short.

We want to evaluate how well the Spellcheck algorithm recognizes and fixes the right words in a list of ingredients. Therefore, we adapt the Precision and Recall metrics for our task:

Precision = Right corrections by the model / Total corrections made by the model

Recall = Right corrections by the model / Total number of errors

However, we don't have a fine-grained view of which words were supposed to be corrected. We only have access to:

- The original: the list of ingredients as present in the database;

- The reference: how we expect this list to be corrected;

- The prediction: the correction from the model.

Is there any way to calculate the number of errors that were correctly corrected, the ones that were missed by the Spellcheck, and finally the errors that were wrongly corrected?

The answer is yes!

Original: "Th cat si on the fride,"

Reference: "The cat is on the fridge."

Prediction: "Th huge cat is in the fridge."

With the example above, we can easily spot which words were supposed to be corrected: The, is and fridge; and which words were wrongly corrected: on into in. Finally, we see that an additional word was added: huge.

If we align these 3 sequences in pairs, original-reference and original-prediction, we can detect which words were supposed to be corrected, and those that weren't. This alignment problem is typical in bioinformatics, where it is called Sequence Alignment and its purpose is to identify regions of similarity.

This is a perfect analogy for our spellcheck evaluation task.

Original: "Th - cat si on the fride,"

Reference: "The - cat is on the fridge."

1 0 0 1 0 0 1

Original: "Th - cat si on the fride,"

Prediction: "Th huge cat is in the fridge."

0 1 0 1 1 0 1

FN FP TP FP TP

By labeling each pair with a 0 or 1 depending on whether the word changed or not, we can calculate how often the model correctly fixes mistakes (True Positives, TP), incorrectly changes correct words (False Positives, FP), and misses errors that should have been corrected (False Negatives, FN).

In other words, we can calculate the Precision and Recall of the Spellcheck!
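The counting step can be sketched in a few lines of Python. This is a simplified illustration, not the project's implementation: it assumes the three sequences are already tokenized and aligned (with `-` marking a gap), and it only checks whether a token changed, not whether the predicted token matches the reference. The function names are hypothetical.

```python
def change_labels(original: list[str], other: list[str]) -> list[int]:
    """Label each aligned position 1 if the token changed, else 0."""
    return [int(o != t) for o, t in zip(original, other)]


def precision_recall(original: list[str], reference: list[str], prediction: list[str]) -> dict:
    """Count TP/FP/FN over aligned tokens and derive Precision and Recall."""
    expected = change_labels(original, reference)   # positions that should change
    actual = change_labels(original, prediction)    # positions the model changed
    pairs = list(zip(expected, actual))
    tp = sum(1 for e, a in pairs if e == 1 and a == 1)
    fp = sum(1 for e, a in pairs if e == 0 and a == 1)
    fn = sum(1 for e, a in pairs if e == 1 and a == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "precision": precision, "recall": recall}


# Pre-aligned tokens from the example, "-" being the alignment gap.
original = ["Th", "-", "cat", "si", "on", "the", "fride,"]
reference = ["The", "-", "cat", "is", "on", "the", "fridge."]
prediction = ["Th", "huge", "cat", "is", "in", "the", "fridge."]

result = precision_recall(original, reference, prediction)
print(result)
```

On this example the sketch counts 2 true positives, 2 false positives and 1 false negative, which gives a Precision of 0.5 and a Recall of about 0.67.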

We now have a robust algorithm that is capable of evaluating any Spellcheck solution!

You can find the algorithm in the project repository.

Large Language Models (LLMs) have proved to be of great help in tackling Natural Language tasks in various industries.

They constitute a path we have to explore for our use case.

Many LLM providers brag about the performance of their models on leaderboards, but how do they perform at correcting errors in lists of ingredients? So we evaluated them!

We evaluated GPT-3.5 and GPT-4o from OpenAI, Claude-Sonnet-3.5 from Anthropic, and Gemini-1.5-Flash from Google using our custom benchmark and evaluation algorithm.

We wrote detailed prompt instructions to orient the corrections towards our custom guidelines.

GPT-3.5-Turbo delivered the best performance compared to the other models, both in terms of metrics and manual review. Special mention goes to Claude-Sonnet-3.5, which showed impressive error corrections (high Recall), but often provided additional irrelevant explanations, lowering its Precision.

Great! We have an LLM that works! Time to create the feature in the app!

Well, not so fast…

Using private LLMs reveals many challenges:

- Lack of Ownership: We become dependent on the providers and their models. New model versions are released frequently, altering the model's behavior. This instability, primarily because the model is designed for general purposes rather than our specific task, complicates long-term maintenance.

- Model Deletion Risk: We have no safeguards against providers removing older models. For instance, GPT-3.5 is slowly being replaced by more performant models, despite being the best model for this task!

- Performance Limitations: The performance of a private LLM is constrained by its prompts. In other words, our only way of improving outputs is through better prompts, since we cannot modify the core weights of the model by training it on our own data.

For these reasons, we chose to focus our efforts on open-source solutions that would provide us with full control and outperform general LLMs.

Any machine learning solution starts with data. In our case, the data is the corrected lists of ingredients.

However, not all lists of ingredients are equal. Some are free of unrecognized ingredients, while others are so unreadable that there would be no point in correcting them.

Therefore, we found a good balance by choosing lists of ingredients having between 10 and 40 percent of unrecognized ingredients. We also ensured there are no duplicates within the dataset, nor with the benchmark, to prevent any data leakage during the evaluation stage.
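As an illustration, the selection rule could look like the following Python sketch. The field names (`ingredients_text`, `ingredients_n`, `unknown_ingredients_n`) and the case/whitespace normalization used to detect duplicates are assumptions made for this example, not necessarily what the project uses.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so near-identical lists compare equal."""
    return " ".join(text.lower().split())


def select_candidates(products, benchmark_texts, low=0.10, high=0.40):
    """Keep lists with 10-40% unrecognized ingredients, dropping duplicates
    and anything already present in the benchmark (to avoid data leakage)."""
    seen = {normalize(text) for text in benchmark_texts}
    selected = []
    for product in products:
        total = product["ingredients_n"]
        if total == 0:
            continue  # nothing parsed, the ratio is undefined
        ratio = product["unknown_ingredients_n"] / total
        key = normalize(product["ingredients_text"])
        if low <= ratio <= high and key not in seen:
            seen.add(key)
            selected.append(product)
    return selected


# Toy records, for illustration only.
products = [
    {"ingredients_text": "flour, slt, sugar, water, yeast", "ingredients_n": 5, "unknown_ingredients_n": 1},
    {"ingredients_text": "Flour,  slt, sugar, water, yeast", "ingredients_n": 5, "unknown_ingredients_n": 1},
    {"ingredients_text": "milk", "ingredients_n": 1, "unknown_ingredients_n": 0},
    {"ingredients_text": "xx yy zz", "ingredients_n": 3, "unknown_ingredients_n": 3},
    {"ingredients_text": "eggs, suggar, butter, milk, salt", "ingredients_n": 5, "unknown_ingredients_n": 1},
]
benchmark_texts = ["eggs, suggar, butter, milk, salt"]

selected = select_candidates(products, benchmark_texts)
print(len(selected))
```

Seeding the `seen` set with the benchmark texts lets a single membership check handle both the in-dataset duplicates and the overlap with the benchmark.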

We extracted 6000 uncorrected lists from the Open Food Facts database using DuckDB, a fast in-process SQL tool capable of processing millions of rows in under a second.

However, these extracted lists are not corrected yet, and manually annotating them would take too much time and resources…

But we have access to LLMs we already evaluated on this exact task. Therefore, we prompted GPT-3.5-Turbo, the best model on our benchmark, to correct every list in accordance with our guidelines.

The process took less than an hour and cost nearly $2.

We then manually reviewed the dataset using Argilla, an open-source annotation tool specialized in Natural Language Processing tasks. This process ensures the dataset is of sufficient quality to train a reliable model.

We now have at our disposal a training dataset and an evaluation benchmark to train our own model on the Spellcheck task.

Training

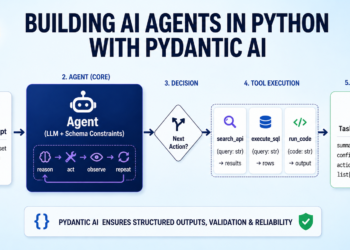

For this stage, we decided to go with Sequence-to-Sequence Language Models. In other words, these models take a text as input and return a text as output, which suits the spellcheck process.

Several models fit this role, such as the T5 family developed by Google in 2020, or the current open-source LLMs such as Llama or Mistral, which are designed for text generation and following instructions.

The model training consists of a succession of steps, each one requiring different resource allocations, such as cloud GPUs, data validation and logging. For this reason, we decided to orchestrate the training using Metaflow, a pipeline orchestrator designed for Data Science and Machine Learning projects.

The training pipeline is composed as follows:

- Configurations and hyperparameters are imported to the pipeline from config YAML files;

- The training job is launched in the cloud using AWS SageMaker, along with the set of model hyperparameters and the custom modules such as the evaluation algorithm. Once the job is done, the model artifact is stored in an AWS S3 bucket. All training details are tracked using Comet ML;

- The fine-tuned model is then evaluated on the benchmark using the evaluation algorithm. Depending on the model size, this process can be extremely long. Therefore, we used vLLM, a Python library designed to accelerate LLM inference;

- The predictions against the benchmark, also stored in AWS S3, are sent to Argilla for human evaluation.

After iterating over and over between refining the data and the model training, we achieved performance comparable to proprietary LLMs on the Spellcheck task, scoring an F1-Score of 0.65.
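For reference, the F1-Score is the harmonic mean of Precision and Recall. A minimal Python helper is shown below; the input values are purely illustrative, since the individual Precision and Recall behind the 0.65 score are not given here.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of Precision and Recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Illustrative values only, not the project's results.
print(round(f1_score(0.5, 0.8), 3))
```

The harmonic mean penalizes imbalance: a model with high Recall but low Precision (or the reverse) scores lower than one that is moderately good at both.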