In this position paper, I discuss the premise that a lot of potential performance enhancement is left on the table because we don’t often address the potential of dynamic execution.

I guess I need to first define what dynamic execution is in this context. As many of you are no doubt aware, we often address performance optimizations by taking a good look at the model itself and what can be done to make processing of this model more efficient (which can be measured in terms of lower latency, higher throughput and/or energy savings).

These methods often address the size of the model, so we look for ways to compress the model. If the model is smaller, then memory footprint and bandwidth requirements are improved. Some methods also address sparsity within the model, thus avoiding inconsequential calculations.

Still… we are only looking at the model itself.

This is definitely something we want to do, but are there additional opportunities we can leverage to boost performance even more? Often, we overlook the most human-intuitive methods that don’t focus on the model size.

Hard vs Easy

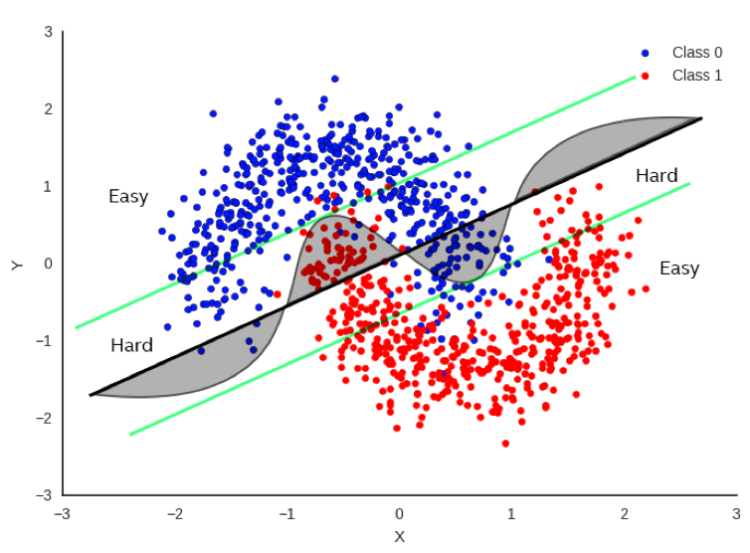

In Figure 1, there’s a simple example (perhaps a bit simplistic) of how to classify between red and blue data points. It would be really useful to be able to draw a decision boundary so that the red and blue points are on opposite sides of the boundary as much as possible. One method is to do a linear regression whereby we fit a straight line as best as we can to separate the data points as much as possible. The bold black line in Figure 1 represents one potential boundary. Focusing only on the bold black line, you can see that a substantial number of points fall on the wrong side of the boundary, but it does a decent job most of the time.

If we focus on the curved line, this does a much better job, but it’s also more difficult to compute as it’s no longer a simple, linear equation. If we want more accuracy, clearly the curve is a much better decision boundary than the black line.

But let’s not throw out the black line just yet. Now let’s look at the green parallel lines on each side of the black boundary. Note that the linear decision boundary is very accurate for points outside of the green lines. Let’s call these points “Easy”.

In fact, it is 100% as accurate as the curved boundary for Easy points. Points that lie inside the green lines are “Hard” and there is a clear advantage to using the more complex decision boundary for these points.

So… if we can tell whether the input data is hard or easy, we can apply different methods to solving the problem with no loss of accuracy and a clear savings of computations for the easy points.
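The Easy/Hard split can be sketched as a two-stage cascade. The snippet below is only an illustration under stated assumptions: the linear boundary `y = 0`, the curved boundary `y = 0.3 * sin(3x)`, and the margin `MARGIN = 0.35` are made-up stand-ins for the black line, the curve, and the green lines in Figure 1. The margin is chosen so that the two boundaries agree on every point outside the band.

```python
import numpy as np

MARGIN = 0.35  # assumed half-width of the band between the "green lines"


def linear_score(points):
    """Cheap linear boundary y = 0: the sign gives the class, the magnitude the margin."""
    return points[:, 1]


def curved_predict(points):
    """Costlier curved boundary y = 0.3 * sin(3x), a stand-in for the complex model."""
    return (points[:, 1] > 0.3 * np.sin(3.0 * points[:, 0])).astype(int)


def cascade_predict(points, margin=MARGIN):
    """Use the linear boundary for Easy points and the curve only for Hard ones."""
    scores = linear_score(points)
    easy = np.abs(scores) >= margin      # outside the band: trust the cheap answer
    preds = (scores > 0).astype(int)     # cheap prediction for every point
    hard = ~easy
    if hard.any():
        preds[hard] = curved_predict(points[hard])  # only Hard points pay extra
    return preds, easy


rng = np.random.default_rng(0)
points = rng.uniform(-1.0, 1.0, size=(1000, 2))
preds, easy = cascade_predict(points)
print(f"fraction handled by the cheap boundary: {easy.mean():.2f}")
print("same labels as the curved boundary:", np.array_equal(preds, curved_predict(points)))
```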

This is very intuitive as this is exactly how humans address problems. If we perceive a problem as easy, we often don’t think too hard about it and give an answer quickly. If we perceive a problem as being hard, we think more about it and often it takes more time to get to the answer.

So, can we apply a similar approach to AI?

Dynamic Execution Methods

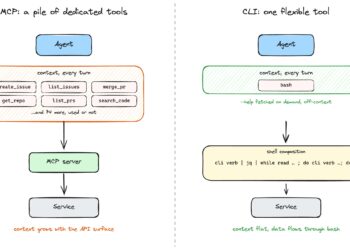

In the dynamic execution scenario, we employ a set of specialized techniques designed to scrutinize the specific query at hand. These techniques involve a thorough examination of the query’s structure, content, and context with the aim of discerning whether the problem it represents can be addressed in a more straightforward manner.

This approach mirrors the way humans tackle problem-solving. Just as we, as humans, are often able to identify problems that are ‘easy’ or ‘simple’ and solve them with less effort compared to ‘hard’ or ‘complex’ problems, these techniques strive to do the same. They are designed to recognize simpler problems and solve them more efficiently, thereby saving computational resources and time.

This is why we refer to these techniques as Dynamic Execution. The term ‘dynamic’ signifies the adaptability and flexibility of this approach. Unlike static methods that rigidly adhere to a predetermined path regardless of the problem’s nature, Dynamic Execution adjusts its strategy based on the specific problem it encounters; that is, the opportunity is data dependent.

The goal of Dynamic Execution is not to optimize the model itself, but to optimize the compute flow. In other words, it seeks to streamline the process through which the model interacts with the data. By tailoring the compute flow to the data presented to the model, Dynamic Execution ensures that the model’s computational resources are utilized in the most efficient manner possible.

In essence, Dynamic Execution is about making the problem-solving process as efficient and effective as possible by adapting the strategy to the problem at hand, much like how humans approach problem-solving. It is about working smarter, not harder. This approach saves computational resources and speeds up the problem-solving process while aiming to preserve its accuracy.

Early Exit

This technique involves adding exits at various stages in a deep neural network (DNN). The idea is to allow the network to terminate the inference process earlier for simpler tasks, thus saving computational resources. It takes advantage of the observation that some test examples can be easier to predict than others [1], [2].

Below is an example of the Early Exit strategy in several encoder models, including BERT, RoBERTa, and ALBERT.

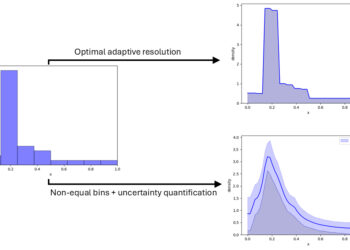

We measured the speed-ups on GLUE scores for various entropy thresholds. Figure 2 shows a plot of these scores and how they drop with respect to the entropy threshold. The scores show the percentage of the baseline score (that is, without Early Exit). Note that we can get a 2x to 4x speed-up without sacrificing much quality.
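To make the role of the entropy threshold concrete, here is a minimal sketch of an entropy-based exit rule. It is not the BERT/RoBERTa/ALBERT setup measured above. The tiny network, its random weights, and the names `early_exit_forward`, `layers`, and `heads` are assumptions made for illustration. Each layer has its own classifier head, and inference stops at the first layer whose prediction entropy falls below the threshold.

```python
import numpy as np


def softmax(logits):
    """Numerically stable softmax over a 1-D array of logits."""
    shifted = logits - logits.max()
    exps = np.exp(shifted)
    return exps / exps.sum()


def entropy(probs):
    """Shannon entropy in nats; low entropy means a confident prediction."""
    return float(-np.sum(probs * np.log(probs + 1e-12)))


def early_exit_forward(x, layers, heads, threshold):
    """Run the layers in order and stop once the exit head is confident enough.

    Returns the class probabilities and the number of layers actually executed.
    """
    hidden = x
    for depth, (layer, head) in enumerate(zip(layers, heads), start=1):
        hidden = np.tanh(layer @ hidden)          # toy stand-in for an encoder layer
        probs = softmax(head @ hidden)            # exit classifier attached to this layer
        if entropy(probs) < threshold or depth == len(layers):
            return probs, depth


rng = np.random.default_rng(0)
layers = [rng.normal(size=(8, 8)) for _ in range(4)]   # hypothetical weights
heads = [rng.normal(size=(3, 8)) for _ in range(4)]    # one 3-class head per layer
x = rng.normal(size=8)

for threshold in (0.1, 0.5, 1.0):
    _, used = early_exit_forward(x, layers, heads, threshold)
    print(f"threshold={threshold}: exited after {used} of {len(layers)} layers")
```

A higher threshold lets more inputs leave early (more speed-up, more risk to quality), which is the trade-off plotted in Figure 2.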

Speculative Sampling

This method aims to speed up the inference process by computing several candidate tokens from a smaller draft model. These candidate tokens are then evaluated in parallel in the full target model [3], [4].

Speculative sampling is a technique designed to accelerate the decoding process of large language models [5], [6]. The concept behind speculative sampling is based on the observation that the latency of parallel scoring of short continuations, generated by a faster but less powerful draft model, is comparable to that of sampling a single token from the larger target model. This approach allows multiple tokens to be generated from each transformer call, increasing the speed of the decoding process.

The process of speculative sampling involves two models: a smaller, faster draft model and a larger, slower target model. The draft model speculates what the output is several steps into the future, while the target model determines how many of those tokens we should accept. The draft model decodes several tokens in a regular autoregressive fashion, and the probability outputs of the target and the draft models on the new predicted sequence are compared. Based on some rejection criteria, it is determined how many of the speculated tokens we want to keep. If a token is rejected, it is resampled using a combination of the two distributions, and no more tokens are accepted. If all speculated tokens are accepted, an additional final token can be sampled from the target model probability output.
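The accept/reject step described above can be sketched as follows. The text only says “some rejection criteria” and “a combination of the two distributions”; the snippet assumes the commonly published rule, in which a draft token is accepted with probability min(1, q/p) (q from the target, p from the draft) and a rejected token is resampled from the normalized positive part of q − p. The function name `speculative_step` and the toy distributions are hypothetical, and the model calls themselves are left out.

```python
import numpy as np


def speculative_step(draft_tokens, draft_probs, target_probs, rng):
    """Decide which speculated tokens to keep for one round of speculation.

    draft_tokens: K token ids proposed by the draft model
    draft_probs:  K arrays, the draft distribution at each proposed position
    target_probs: K + 1 arrays, the target distribution at each position
                  (the extra one is used for the bonus token)
    Returns the list of token ids emitted in this round.
    """
    emitted = []
    for k, token in enumerate(draft_tokens):
        p, q = draft_probs[k], target_probs[k]
        # Accept with probability min(1, q[token] / p[token]).
        if rng.random() < min(1.0, q[token] / p[token]):
            emitted.append(token)
            continue
        # Rejected: resample from the normalized positive part of (q - p), then stop.
        residual = np.maximum(q - p, 0.0)
        residual = residual / residual.sum()
        emitted.append(int(rng.choice(len(residual), p=residual)))
        return emitted
    # Every draft token was accepted: sample one bonus token from the target.
    final = target_probs[-1]
    emitted.append(int(rng.choice(len(final), p=final)))
    return emitted


rng = np.random.default_rng(0)
draft = np.array([0.6, 0.3, 0.1])    # toy draft distribution over 3 tokens
target = np.array([0.5, 0.4, 0.1])   # toy target distribution over 3 tokens
print(speculative_step([0, 1], [draft, draft], [target, target, target], rng))
```

Each round emits between one and K + 1 tokens for a single parallel call to the target model, which is where the speed-up comes from.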

In terms of performance boost, speculative sampling has shown significant improvements. For instance, it was benchmarked with Chinchilla, a 70 billion parameter language model, achieving a 2–2.5x decoding speedup in a distributed setup, without compromising the sample quality or making modifications to the model itself. Another example is the application of speculative decoding to Whisper, a general purpose speech transcription model, which resulted in a 2x speed-up in inference throughput [7], [8]. Note that speculative sampling can be used to boost CPU inference performance, but the boost will likely be less (typically around 1.5x).

In conclusion, speculative sampling is a promising technique that leverages the strengths of both a draft and a target model to accelerate the decoding process of large language models. It offers a significant performance boost, making it a valuable tool in the field of natural language processing. However, it is important to note that the actual performance boost can vary depending on the specific models and setup used.

StepSaver

This is a method that could also be called Early Stopping for Diffusion Generation. It uses an innovative NLP model specifically fine-tuned to determine the minimal number of denoising steps required for any given text prompt. This advanced model serves as a real-time tool that recommends the ideal number of denoising steps for generating high-quality images efficiently. It is designed to work seamlessly with the Diffusion model, ensuring that images are produced with superior quality in the shortest possible time. [9]

Diffusion models iteratively enhance a random noise signal until it closely resembles the target data distribution [10]. When generating visual content such as images or videos, diffusion models have demonstrated significant realism [11]. For example, video diffusion models and SinFusion represent instances of diffusion models utilized in video synthesis [12][13]. More recently, there has been growing attention towards models like OpenAI’s Sora; however, this model is currently not publicly available due to its proprietary nature.

Performance in diffusion models involves a large number of iterations to recover images or videos from Gaussian noise [14]. This process is called denoising, and the model is trained on a specific number of denoising iterations. The number of iterations in this sampling procedure is a key factor in the quality of the generated data, as measured by metrics such as FID.

Latent space diffusion inference uses iterations in feature space, and performance suffers from the expense of the many iterations required for quality output. Various techniques, such as patching transformation and transformer-based diffusion models [15], improve the efficiency of each iteration.

StepSaver dynamically recommends significantly fewer denoising steps, which is critical to address the slow sampling issue of stable diffusion models during image generation [9]. The recommended steps also ensure better image quality. Figure 3 shows that images generated using dynamic steps result in a 3x throughput improvement and a similar image quality compared to a static 100 steps.

LLM Routing

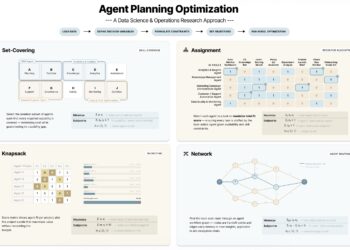

Dynamic Execution isn’t limited to just optimizing a specific task (e.g. generating a sequence of text). We can take a step above the LLM and look at the entire pipeline. Suppose we are running a huge LLM in our data center (or we’re being billed by OpenAI for token generation via their API). Can we optimize the calls to the LLM so that we select the best LLM for the job (where “best” could be a function of token generation cost)? Complicated prompts might require a more expensive LLM, but many prompts can be handled at much lower cost on a simpler LLM (or even locally on your notebook). So if we can route our prompt to the appropriate destination, then we can optimize our tasks based on several criteria.

Routing is a form of classification in which the prompt is used to determine the best model. The prompt is then routed to this model. By best, we can use different criteria to determine the most effective model in terms of cost and accuracy. In many ways, routing is a form of dynamic execution done at the pipeline level, whereas many of the other optimizations we are focusing on in this paper are done to make each LLM more efficient. For example, RouteLLM is an open-source framework for serving LLM routers and provides several mechanisms for reference, such as matrix factorization. [16] In this study, the researchers at LMSys were able to save 85% of costs while still keeping 95% accuracy.
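As a rough illustration of routing as classification, the sketch below sends a prompt to a cheap or an expensive model based on a difficulty score. This is not RouteLLM’s API. The `ModelEndpoint` type, the keyword-and-length heuristic in `difficulty_score`, the threshold, and the prices are all hypothetical; in practice a learned router (for example, one based on matrix factorization) would take the place of the heuristic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEndpoint:
    name: str
    cost_per_1k_tokens: float  # hypothetical price, for illustration only


def difficulty_score(prompt: str) -> float:
    """Hypothetical stand-in for a learned router; returns a value in [0, 1]."""
    hard_markers = ("prove", "derive", "step by step", "debug", "optimize")
    text = prompt.lower()
    hits = sum(marker in text for marker in hard_markers)
    length_term = min(len(prompt.split()) / 200.0, 1.0)
    return min(1.0, 0.3 * hits + 0.7 * length_term)


def route(prompt: str, weak: ModelEndpoint, strong: ModelEndpoint,
          threshold: float = 0.25, scorer=difficulty_score) -> ModelEndpoint:
    """Send the prompt to the strong model only when it looks hard enough."""
    return strong if scorer(prompt) >= threshold else weak


weak = ModelEndpoint("small-local-model", cost_per_1k_tokens=0.0005)
strong = ModelEndpoint("large-hosted-model", cost_per_1k_tokens=0.03)

for prompt in (
    "What is the capital of France?",
    "Prove that the algorithm terminates and derive its complexity step by step.",
):
    chosen = route(prompt, weak, strong)
    print(f"{chosen.name}: {prompt}")
```

The threshold is the knob that trades cost against quality: raising it sends more prompts to the cheaper model.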

Conclusion

This certainly was not meant to be an exhaustive study of all dynamic execution methods, but it should provide data scientists and engineers with the motivation to find additional performance boosts and cost savings from the characteristics of the data and not solely focus on model-based methods. Dynamic Execution provides this opportunity and does not interfere with or hamper traditional model-based optimization efforts.

Unless otherwise noted, all images are by the author.