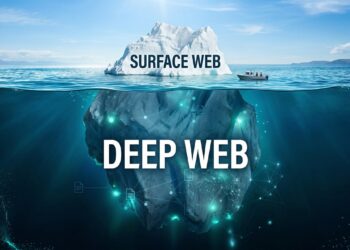

Until recently, AI models were narrow in scope and limited to understanding either language or specific images, but rarely both.

In this respect, general language models like GPTs were a HUGE leap, since we went from specialized models to general yet much more powerful models.

But even as language models progressed, they remained separate from computer vision, with each domain advancing in its own silo without bridging the gap. Imagine what would happen if you could only hear but not see, or vice versa.

My name is Roman Isachenko, and I’m part of the Computer Vision team at Yandex.

In this article, I’ll discuss visual language models (VLMs), which I believe are the future of compound AI systems.

I’ll explain the basics and the training process for developing a multimodal neural network for image search, and explore the design principles, challenges, and architecture that make it all possible.

Towards the end, I’ll also show you how we used an AI-powered search product to handle images and text, and what changed with the introduction of a VLM.

Let’s start!

What Are VLMs?

LLMs with billions or even hundreds of billions of parameters are no longer a novelty.

We see them everywhere!

The next key focus in LLM research has shifted towards developing multimodal models (omni-models): models that can understand and process multiple data types.

As the name suggests, these models can handle more than just text. They can also analyze images, video, and audio.

But why are we doing this?

Jack of all trades, master of none, oftentimes better than master of one.

First, in recent years, we’ve seen a trend where general approaches dominate narrow ones.

Think about it.

Today’s language-driven ML models have become relatively advanced and general-purpose. One model can translate, summarize, identify part-of-speech tags, and much more.

But earlier, these models used to be task-specific (we have them now as well, but fewer than before).

- A dedicated model for translating.

- A dedicated model for summarizing, and so on.

In other words, today’s NLP models (LLMs, specifically) can serve multiple purposes that previously required developing highly specific solutions.

Second, this approach allows us to exponentially scale the data available for model training, which is crucial given the finite amount of text data. Earlier, however, one would need task-specific data:

- A dedicated labeled translation dataset.

- A dedicated summarization dataset, and so on.

Third, we believe that training a multimodal model can enhance the performance on each data type, just like it does for humans.

For this article, we’ll simplify the “black box” concept to a scenario where the model receives an image and some text (which we call the “instruct”) as input and outputs only text (the response).

As a result, we end up with a much simpler process, as shown below:

We’ll discuss image-discriminative models that analyze and interpret what an image depicts.

Before delving into the technical details, consider the problems these models can solve.

A few examples are shown below:

- Top left image: We ask the model to describe the image. This is specified with text.

- Top middle image: We ask the model to interpret the image.

- Top right image: We ask the model to interpret the image and tell us what would happen if we followed the sign.

- Bottom image: This is the most complicated example. We give the model some math problems.

From these examples, you can see that the range of tasks is vast and diverse.

VLMs are a new frontier in computer vision that can solve various fundamental CV-related tasks (classification, detection, description) in zero-shot and one-shot modes.

While VLMs may not excel in every standard task yet, they are advancing quickly.

Now, let’s understand how they work.

VLM Architecture

These models typically have three main components:

- LLM: a text model (YandexGPT, in our case) that doesn’t understand images.

- Image encoder: an image model (CNN or Vision Transformer) that doesn’t understand text.

- Adapter: a model that acts as a mediator to ensure that the LLM and the image encoder get along well.

The pipeline is pretty straightforward:

- Feed an image into the image encoder.

- Transform the output of the image encoder into some representation using the adapter.

- Integrate the adapter’s output into the LLM (more on that below).

- While the image is processed, convert the text instruct into a sequence of tokens and feed them into the LLM.
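The four steps above can be sketched in a few lines. This is a toy illustration rather than our production code: `encode_image`, `adapter`, `embed_text`, and `build_llm_input` are hypothetical stand-ins, the dimensions are arbitrary, and the integration step assumes the simplest option of placing the adapted image vectors in front of the text tokens.

```python
import random

IMAGE_DIM = 8  # hypothetical width of image-encoder features
LLM_DIM = 4    # hypothetical width of LLM token embeddings


def encode_image(image_patches):
    """Stand-in image encoder: one feature vector per image patch."""
    rng = random.Random(0)
    return [[rng.random() for _ in range(IMAGE_DIM)] for _ in image_patches]


def adapter(image_features):
    """Stand-in adapter: maps each feature vector to the LLM embedding width.

    It only averages neighbouring features; a real adapter is a trained model.
    """
    group = IMAGE_DIM // LLM_DIM
    return [
        [sum(vec[i * group:(i + 1) * group]) / group for i in range(LLM_DIM)]
        for vec in image_features
    ]


def embed_text(instruct):
    """Stand-in tokenizer plus embedding lookup: one vector per whitespace token."""
    return [[float(len(token))] * LLM_DIM for token in instruct.split()]


def build_llm_input(image_patches, instruct):
    image_tokens = adapter(encode_image(image_patches))  # steps 1 and 2
    text_tokens = embed_text(instruct)                   # step 4
    return image_tokens + text_tokens                    # step 3: one sequence for the LLM


sequence = build_llm_input(["patch0", "patch1", "patch2"], "describe the image")
print(len(sequence), "vectors of width", len(sequence[0]))
```

The only point of the sketch is the data flow: the LLM never sees pixels, only vectors that the adapter has brought into its own embedding space.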

More Information About Adapters

The adapter is the most exciting and important part of the model, as it is precisely what enables the interaction between the LLM and the image encoder.

There are two types of adapters:

- Prompt-based adapters

- Cross-attention-based adapters

Prompt-based adapters were first proposed in the BLIP-2 and LLaVA models.

The idea is simple and intuitive, as evident from the name itself.

We take the output of the image encoder (a vector, a sequence of vectors, or a tensor, depending on the architecture) and transform it into a sequence of vectors (tokens), which we feed into the LLM. You could take a simple MLP model with a couple of layers and use it as an adapter, and the results will likely be pretty good.
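To make the "simple MLP" idea concrete, here is a minimal sketch of a two-layer MLP adapter in plain Python. The class name `MLPAdapter`, the ReLU activation, the layer sizes, and the random initialisation are all assumptions made for illustration; in practice the weights are learned during pre-training and the layer would live in a deep learning framework.

```python
import random


def make_linear(in_dim, out_dim, rng):
    """Randomly initialised weights (out_dim x in_dim) and zero biases."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    return weights, [0.0] * out_dim


def linear(x, layer):
    weights, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    return [max(0.0, v) for v in x]


class MLPAdapter:
    """Two-layer MLP that maps image features to the LLM embedding width."""

    def __init__(self, image_dim, hidden_dim, llm_dim, seed=0):
        rng = random.Random(seed)
        self.fc1 = make_linear(image_dim, hidden_dim, rng)
        self.fc2 = make_linear(hidden_dim, llm_dim, rng)

    def __call__(self, image_features):
        # One output "soft token" per input feature vector.
        return [linear(relu(linear(vec, self.fc1)), self.fc2) for vec in image_features]


adapter = MLPAdapter(image_dim=6, hidden_dim=8, llm_dim=4)
soft_tokens = adapter([[0.1] * 6, [0.2] * 6, [0.3] * 6])
print(len(soft_tokens), "tokens of width", len(soft_tokens[0]))
```

Each image feature vector becomes one extra token in the LLM input, which is why this family of adapters is called prompt-based.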

Cross-attention-based adapters are a bit more sophisticated in this respect.

They were used in recent papers on Llama 3.2 and NVLM.

These adapters aim to transform the image encoder’s output so that it can be used in the LLM’s cross-attention block as key/value matrices. Examples of such adapters include transformer architectures like the Perceiver Resampler or Q-Former.
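The key/value role of the image features can be illustrated with a bare-bones, single-head scaled dot-product cross-attention. This is a sketch under simplifying assumptions: the learned query, key, and value projections are omitted, there is one head only, and the function name `cross_attention` is hypothetical.

```python
import math


def softmax(scores):
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(text_states, image_keys, image_values):
    """Single-head scaled dot-product attention.

    Queries come from the text side; keys and values come from the image side.
    """
    d = len(image_keys[0])
    outputs = []
    for query in text_states:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in image_keys
        ]
        weights = softmax(scores)
        outputs.append([
            sum(w * value[j] for w, value in zip(weights, image_values))
            for j in range(len(image_values[0]))
        ])
    return outputs


text_states = [[1.0, 0.0], [0.0, 1.0]]                              # two text positions
image_keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]                   # three image positions
image_values = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
mixed = cross_attention(text_states, image_keys, image_values)
print(len(mixed), "output rows, one per text position")
```

Note that the output has exactly as many rows as there are text positions: the image information is mixed into the text states instead of being appended to the sequence.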

Prompt-based adapters (left) and cross-attention-based adapters (right)

Both approaches have pros and cons.

Currently, prompt-based adapters deliver better results but take away a large chunk of the LLM’s input context, which matters because LLMs have a limited context length (for now).

Cross-attention-based adapters don’t take away from the LLM’s context but require a large number of parameters to achieve good quality.
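A quick back-of-the-envelope calculation shows how much context a prompt-based adapter can consume. The numbers below (a 336×336 image, 14×14 patches, a 4096-token context) are hypothetical examples and not the settings of our model, and the sketch assumes the adapter forwards every patch token without compressing them.

```python
def image_token_count(image_size, patch_size):
    """Number of patch tokens a ViT-style encoder yields for a square image."""
    patches_per_side = image_size // patch_size
    return patches_per_side * patches_per_side


def remaining_context(context_length, image_size, patch_size, num_images=1):
    """Tokens left for text after the image tokens are placed in the prompt."""
    used = num_images * image_token_count(image_size, patch_size)
    return context_length - used


print(image_token_count(336, 14))        # 24 * 24 = 576 image tokens
print(remaining_context(4096, 336, 14))  # 4096 - 576 = 3520 tokens left for text
```

With several images or higher resolutions the budget shrinks quickly, which is the trade-off described above.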

VLM Training

With the architecture sorted out, let’s dive into training.

Firstly, note that VLMs aren’t trained from scratch (although we think it’s only a matter of time) but are built on pre-trained LLMs and image encoders.

Using these pre-trained models, we fine-tune our VLM on multimodal text and image data.

This process involves two steps:

- Pre-training

- Alignment: SFT + RL (optional)

Training procedure of VLMs (Image by Author)

Notice how these stages resemble LLM training?

This is because the two processes are similar in concept. Let’s take a brief look at these stages.

VLM Pre-training

Here’s what we want to achieve at this stage:

- Link the text and image modalities together (remember that our model includes an adapter we haven’t trained before).

- Load world knowledge into our model (images carry a lot of specifics; OCR skills are one example).

There are three types of data used in pre-training VLMs:

- Interleaved Pre-training: This mirrors the LLM pre-training phase, where we teach the model to perform the next-token prediction task by feeding it web documents. With VLM pre-training, we pick web documents with images and train the model to predict text. The key difference here is that a VLM considers both the text and the images on the page. Such data is easy to come by, so this type of pre-training isn’t hard to scale up. However, the data quality isn’t great, and improving it proves to be a tough job.

- Image-Text Pairs Pre-training: We train the model to perform one specific task: captioning images. You need a large corpus of images with relevant descriptions to do that. This approach is more popular because many such corpora are used to train other models (text-to-image generation, image-to-text retrieval).

- Instruct-Based Pre-training: During inference, we’ll feed the model images and text. Why not train the model this way from the start? This is precisely what instruct-based pre-training does: it trains the model on a massive dataset of image-instruct-answer triplets, even if the data isn’t always perfect.
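One way to picture the three data types is as three record shapes. The sketch below is purely illustrative: the class names, field names, and the `target_text` helper are hypothetical, and it assumes that for instruct samples the answer is the text the model learns to produce.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class ImageRef:
    path: str  # hypothetical pointer to an image file


@dataclass
class InterleavedDocument:
    # Text chunks and images in the order they appear on the web page.
    parts: List[Union[str, ImageRef]]


@dataclass
class ImageTextPair:
    image: ImageRef
    caption: str


@dataclass
class InstructSample:
    image: ImageRef
    instruct: str
    answer: str


def target_text(sample):
    """Text the model is trained to predict for each sample type."""
    if isinstance(sample, InterleavedDocument):
        return " ".join(part for part in sample.parts if isinstance(part, str))
    if isinstance(sample, ImageTextPair):
        return sample.caption
    if isinstance(sample, InstructSample):
        return sample.answer
    raise TypeError(f"unknown sample type: {type(sample).__name__}")


doc = InterleavedDocument(parts=["A recipe.", ImageRef("step1.png"), "Mix well."])
pair = ImageTextPair(image=ImageRef("dog.png"), caption="A dog on a beach")
triplet = InstructSample(image=ImageRef("sign.png"), instruct="What does the sign say?", answer="Stop")
for sample in (doc, pair, triplet):
    print(type(sample).__name__, "->", target_text(sample))
```

In all three cases the images serve only as input and the training signal comes from text; what differs is how much structure surrounds that text.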

How much data is needed to train a VLM properly is a complex question. At this stage, the required dataset size can vary from a few million to several billion (thankfully, not a trillion!) samples.

Our team used instruct-based pre-training with a few million samples. However, we believe interleaved pre-training has great potential, and we’re actively working in that direction.

VLM Alignment

Once pre-training is complete, it’s time to start on alignment.

It comprises SFT training and an optional RL stage. Since we only have the SFT stage, I’ll focus on that.

Still, recent papers (like this and this) often include an RL stage on top of the VLM, which uses the same methods as for LLMs (DPO and various modifications differing by the first letter in the method name).

Anyway, back to SFT.

Strictly speaking, this stage is similar to instruct-based pre-training.

The distinction lies in our focus on high-quality data with proper response structure, formatting, and strong reasoning capabilities.

This means that the model must be able to understand the image and make inferences about it. Ideally, it should respond equally well to text instructs without images, so we’ll also add high-quality text-only data to the mix.

Ultimately, this stage’s data typically ranges from hundreds of thousands to a few million examples. In our case, the number is somewhere in the six digits.

Quality Evaluation

Let’s discuss the methods for evaluating the quality of VLMs. We use two approaches:

- Calculate metrics on open-source benchmarks.

- Compare the models using side-by-side (SBS) evaluations, where an assessor compares two model responses and chooses the better one.

The first method allows us to measure surrogate metrics (like accuracy in classification tasks) on specific subsets of data.

However, since most benchmarks are in English, they can’t be used to compare models trained in other languages, like German, French, Russian, and so on.

While translation can be used, the errors introduced by translation models make the results unreliable.

The second approach allows for a more in-depth analysis of the model but requires meticulous (and expensive) manual data annotation.
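As a rough illustration of how side-by-side votes turn into a number, here is a minimal sketch that aggregates assessor decisions into a win rate. The vote labels, the handling of ties, and the function name `sbs_summary` are assumptions for this example, and the votes are made up.

```python
from collections import Counter


def sbs_summary(votes):
    """Aggregate SBS votes; each vote is 'A', 'B', or 'tie' for one compared pair."""
    counts = Counter(votes)
    decided = counts["A"] + counts["B"]
    # Win rate of model A among the pairs where the assessor picked a winner.
    win_rate_a = counts["A"] / decided if decided else 0.0
    return {
        "A": counts["A"],
        "B": counts["B"],
        "tie": counts["tie"],
        "win_rate_A": win_rate_a,
    }


example_votes = ["A", "A", "B", "tie"]  # invented votes, for illustration only
print(sbs_summary(example_votes))
```

A real evaluation would also track which criterion drove each decision and check whether the difference is statistically meaningful before drawing conclusions.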

Our model is bilingual and can respond in both English and Russian. Thus, we can use English open-source benchmarks and run side-by-side comparisons.

We trust this method and invest a lot in it. Here’s what we ask our assessors to evaluate:

- Grammar

- Readability

- Comprehensiveness

- Relevance to the instruct

- Errors (logical and factual)

- Hallucinations

We strive to evaluate a complete and diverse subset of our model’s skills.

The following pie chart illustrates the distribution of tasks in our SBS evaluation bucket.

This concludes the overview of VLM basics and of how one can train a model and evaluate its quality.

Pipeline Architecture

This spring, we added multimodality to Neuro, an AI-powered search product, allowing users to ask questions using text and images.

Until recently, its underlying technology wasn’t truly multimodal.

Here’s what this pipeline looked like before.

This diagram seems complex, but it’s straightforward once you break it down into steps.

Here’s what the process used to look like:

- The user submits an image and a text query.

- We send the image to our visual search engine, which returns a wealth of information about the image (tags, recognized text, information card).

- We formulate a text query using a rephraser (a fine-tuned LLM) with this information and the original query.

- With the rephrased text query, we use Yandex Search to retrieve relevant documents (or excerpts, which we call infocontext).

- Finally, with all this information (original query, visual search information, rephrased text query, and infocontext), we generate the final response using a generator model (another fine-tuned LLM).
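The five steps can be written down as a short orchestration function. This is a schematic sketch, not Neuro’s actual code: every function name is hypothetical, and the `toy_*` stand-ins exist only so the example runs end to end.

```python
def answer_with_llm_pipeline(image, query, visual_search, rephraser, web_search, generator):
    """Earlier flow: the image never reaches either LLM, only text derived from it."""
    visual_info = visual_search(image)             # tags, recognized text, information card
    search_query = rephraser(query, visual_info)   # fine-tuned LLM, text in and text out
    infocontext = web_search(search_query)         # relevant documents or excerpts
    return generator(query, visual_info, search_query, infocontext)


# Toy stand-ins for the real services.
def toy_visual_search(image):
    return {"tags": ["pug"], "recognized_text": "", "info_card": None}


def toy_rephraser(query, visual_info):
    return f"{query} {' '.join(visual_info['tags'])}"


def toy_web_search(search_query):
    return [f"document about: {search_query}"]


def toy_generator(query, visual_info, search_query, infocontext):
    return f"Answer to '{query}' based on {len(infocontext)} document(s)"


print(answer_with_llm_pipeline(
    b"raw image bytes", "what breed is this?",
    toy_visual_search, toy_rephraser, toy_web_search, toy_generator,
))
```

Written this way, the weak spot is easy to see: both language models work only with whatever text the visual search step extracts from the image.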

Done!

As you can see, we used to rely on two unimodal LLMs and our visual search engine. This solution worked well on a small sample of queries but had limitations.

Below is an example (albeit slightly exaggerated) of how things could go wrong.

Here, the rephraser receives the output of the visual search service and simply doesn’t understand the user’s original intent.

In turn, the LLM, which knows nothing about the image, generates an incorrect search query, getting tags about the pug and the apple simultaneously.

To improve the quality of our multimodal response and allow users to ask more complex questions, we introduced a VLM into our architecture.

More specifically, we made two major modifications:

- We replaced the LLM rephraser with a VLM rephraser. Essentially, we started feeding the original image to the rephraser’s input on top of the text from the visual search engine.

- We added a separate VLM captioner to the pipeline. This model provides an image description, which we use as infocontext for the final generator.
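The two modifications amount to small changes in how the pipeline is wired. The sketch below is schematic and uses hypothetical names throughout; it assumes that the infocontext is a list of text snippets and that the caption is simply appended to it. The `toy_*` functions are placeholders so that the example runs.

```python
def answer_with_vlm_pipeline(image, query, visual_search, vlm_rephraser,
                             vlm_captioner, web_search, generator):
    visual_info = visual_search(image)
    # Change 1: the rephraser now receives the original image as well.
    search_query = vlm_rephraser(image, query, visual_info)
    # Change 2: a separate captioner describes the image in text.
    caption = vlm_captioner(image)
    infocontext = web_search(search_query) + [caption]
    # The generator itself stays a text-only model.
    return generator(query, visual_info, search_query, infocontext)


# Toy stand-ins for the real services.
def toy_visual_search(image):
    return {"tags": ["pug", "apple"]}


def toy_vlm_rephraser(image, query, visual_info):
    return f"{query} ({len(image)} image bytes seen)"


def toy_vlm_captioner(image):
    return "caption: a pug next to an apple"


def toy_web_search(search_query):
    return [f"document about: {search_query}"]


def toy_generator(query, visual_info, search_query, infocontext):
    return f"Answer to '{query}' based on {len(infocontext)} context item(s)"


print(answer_with_vlm_pipeline(
    b"raw image bytes", "can it eat this?",
    toy_visual_search, toy_vlm_rephraser, toy_vlm_captioner,
    toy_web_search, toy_generator,
))
```

Because the image reaches the rephraser directly and reaches the generator as a caption, the generator can keep being updated independently of the vision components.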

You might wonder:

Why not make the generator itself VLM-based?

That’s a good suggestion!

However there’s a catch.

Our generator training inherits from Neuro’s text model, which is frequently updated.

To update the pipeline faster and more conveniently, it was much easier for us to introduce a separate VLM block.

Plus, this setup works just as well, which is shown below:

Training the VLM rephraser and the VLM captioner are two separate tasks.

For this, we took the base VLM mentioned earlier and fine-tuned it for these specific tasks.

Fine-tuning these models required collecting separate training datasets comprising tens of thousands of samples.

We also had to make significant changes to our infrastructure to make the pipeline computationally efficient.

Gauging the Quality

Now for the grand question:

Did introducing a VLM to a fairly complex pipeline improve things?

In short, yes, it did!

We ran side-by-side tests to measure the new pipeline’s performance and compared our previous LLM framework with the new VLM one.

This evaluation is similar to the one discussed earlier for the core technology. However, in this case, we use a different set of images and queries that is more aligned with what users might ask.

Below is the approximate distribution of clusters in this bucket.

Our offline side-by-side evaluation shows that we’ve substantially improved the quality of the final response.

The VLM pipeline noticeably increases the response quality and covers more user scenarios.

We also wanted to test the results on a live audience to see if our users would notice the technical changes that we believe would improve the product experience.

So, we conducted an online split test, comparing our LLM pipeline to the new VLM pipeline. The preliminary results show the following changes:

- The number of instructs that include an image increased by 17%.

- The number of sessions (the user entering multiple queries in a row) saw an uptick of 4.5%.

To reiterate what was said above, we firmly believe that VLMs are the future of computer vision models.

VLMs are already capable of solving many problems out of the box. With a bit of fine-tuning, they can absolutely deliver state-of-the-art quality.

Thanks for reading!