A few months ago, I released the Film Search app, a Retrieval-Augmented Generation (RAG) application designed to recommend films based on user queries. For example, a user might ask: “Find me drama movies in English that are less than 2 hours long and feature dogs.” and receive a recommendation like:

Title of Film: Hachi: A Dog’s Tale

Runtime: 93 minutes

Release Year: 2009

Streaming: Not available for streaming

This film tells the poignant true story of Hachiko, an Akita dog known for his remarkable loyalty to his owner. The emotional depth and the themes of friendship and loyalty resonate strongly, making it a touching drama that showcases the profound bond between humans and dogs. It’s perfect for anyone looking for a heartfelt story that highlights the importance of companionship.…

This was not just a simple RAG app, however. It included what is called self-querying retrieval. This means the bot takes the user’s query and transforms it by adding metadata filters. This ensures that any documents pulled into the chat model’s context respect the constraints set by the user’s query. For more information on this app, I recommend checking out my earlier article.

Unfortunately, there were some issues with the app:

- No offline evaluation was done, beyond passing the ‘eye test’. This test is necessary, but not sufficient.

- Observability was non-existent. If a query went poorly, you had to manually pull up the project and run some ad-hoc scripts in an attempt to see what went wrong.

- The Pinecone vector database had to be updated manually. This meant the documents would quickly go out of date if, say, a film got pulled from a streaming service.

In this article, I will briefly cover some of the improvements made to the Film Search app. This will cover:

- Offline Evaluation using RAGAS and Weave

- Online Evaluation and Observability

- Automated Data Pulling using Prefect

One thing before we jump in: I found the name Film Search to be a bit generic, so I rebranded the app as Rosebud 🌹, hence the image shown above. Real film geeks will understand the reference.

It is important to be able to judge whether a change made to your LLM application improves or degrades its performance. Unfortunately, evaluation of LLM apps is a difficult and novel space. There is simply not much agreement on what constitutes a good evaluation.

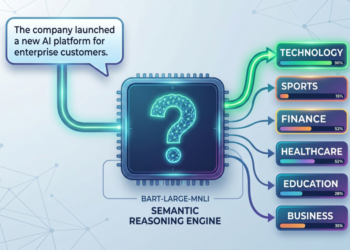

For Rosebud 🌹, I decided to tackle what is known as the RAG triad. This approach is promoted by TruLens, a platform to evaluate and track LLM applications.

The triad covers three aspects of a RAG app:

- Context Relevancy: When a query is made by the user, documents fill the context of the chat model. Is the retrieved context actually useful? If not, you may need to tweak things like document embedding, chunking, or metadata filtering.

- Faithfulness: Is the model’s response actually grounded in the retrieved documents? You don’t want the model making up facts; the whole point of RAG is to help reduce hallucinations by using retrieved documents.

- Answer Relevancy: Does the model’s response actually answer the user’s query? If the user asks for “Comedy films made in the 1990s?”, the model’s answer had better contain only comedy films made in the 1990s.

There are a few ways to attempt to assess these three functions of a RAG app. One way would be to use human expert evaluators. Unfortunately, this would be expensive and wouldn’t scale. For Rosebud 🌹 I decided to use LLMs as judges. This means using a chat model to look at each of the three criteria above and assign a score between 0 and 1 for each. This method has the advantage of being cheap and scaling well. To accomplish this, I used RAGAS, a popular framework that helps you evaluate your RAG applications. The RAGAS framework includes the three metrics mentioned above and makes it fairly easy to use them to evaluate your apps. Below is a code snippet demonstrating how I conducted this offline evaluation:

    import asyncio

    import weave
    from datasets import Dataset
    # Assumed import path; the original snippet omits it
    from langchain_openai import ChatOpenAI, OpenAIEmbeddings
    from ragas import evaluate
    from ragas.metrics import AnswerRelevancy, ContextRelevancy, Faithfulness

    # `config` and `rosebud_chat_model` are defined elsewhere in the project


    @weave.op()
    def evaluate_with_ragas(query, model_output):
        # Put data into a Dataset object
        data = {
            "question": [query],
            "contexts": [[model_output['context']]],
            "answer": [model_output['answer']]
        }
        dataset = Dataset.from_dict(data)

        # Define metrics to judge
        metrics = [
            AnswerRelevancy(),
            ContextRelevancy(),
            Faithfulness(),
        ]

        judge_model = ChatOpenAI(model=config['JUDGE_MODEL_NAME'])
        embeddings_model = OpenAIEmbeddings(model=config['EMBEDDING_MODEL_NAME'])

        evaluation = evaluate(dataset=dataset, metrics=metrics, llm=judge_model, embeddings=embeddings_model)

        return {
            "answer_relevancy": float(evaluation['answer_relevancy']),
            "context_relevancy": float(evaluation['context_relevancy']),
            "faithfulness": float(evaluation['faithfulness']),
        }


    def run_evaluation():
        # Initialize chat model
        model = rosebud_chat_model()

        # Define evaluation questions
        questions = [
            {"query": "Suggest a good movie based on a book."},  # Adaptations
            {"query": "Suggest a film for a cozy night in."},  # Mood-Based
            {"query": "What are some must-watch horror movies?"},  # Genre-Specific
            ...
            # Total of 20 questions
        ]

        # Create Weave Evaluation object
        evaluation = weave.Evaluation(dataset=questions, scorers=[evaluate_with_ragas])

        # Run the evaluation
        asyncio.run(evaluation.evaluate(model))


    if __name__ == "__main__":
        weave.init('film-search')
        run_evaluation()

A few notes:

- With twenty questions and three criteria to judge across, you’re looking at sixty LLM calls for a single evaluation! It gets even worse though; with the `rosebud_chat_model`, there are two calls for every query: one to construct the metadata filter and another to provide the answer, so really this is 120 calls for a single eval! All models used in my evaluation are the new `gpt-4o-mini`, which I strongly recommend. In my experience the calls cost $0.05 per evaluation.

- Note that we’re using `asyncio.run` to run the evals. Asynchronous calls are ideal here because you don’t want to evaluate each question sequentially, one after the other. Instead, with `asyncio` we can begin evaluating other questions while we wait for previous I/O operations to finish.

- There are a total of twenty questions for a single evaluation. These span a variety of typical film queries a user may ask. I mostly came up with these myself, but in practice it would be better to use queries actually asked by users in production.

- Notice the `weave.init` and the `@weave.op` decorator that are being used. These are part of the new Weave library from Weights & Biases (W&B). Weave is a complement to the traditional W&B library, with a focus on LLM applications. It allows you to capture the inputs and outputs of LLMs by using the simple `@weave.op` decorator. It also allows you to capture the results of evaluations using `weave.Evaluation(…)`. By integrating RAGAS to perform evaluations and Weave to capture and log them, we get a powerful duo that helps GenAI developers iteratively improve their applications. You also get to log the model latency, cost, and more.
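To make the point about asynchronous evaluation concrete, here is a minimal sketch that uses only the standard library. The `score_question` coroutine is a hypothetical stand-in for a network-bound judge call (it just sleeps), and the score it returns is a placeholder, not a real metric. It is not how Weave schedules work internally; it only illustrates why overlapping I/O waits is faster than scoring questions one by one.

```python
import asyncio
import time


async def score_question(question: str, delay: float = 0.1) -> dict:
    # Stand-in for a network-bound judge call: asyncio.sleep yields control
    # to the event loop, much as an awaited HTTP request would.
    await asyncio.sleep(delay)
    return {"query": question, "score": 1.0}  # placeholder score


async def score_all(questions: list[str]) -> list[dict]:
    # gather() runs all coroutines concurrently and preserves input order.
    return await asyncio.gather(*(score_question(q) for q in questions))


questions = [f"question {i}" for i in range(5)]
started = time.perf_counter()
results = asyncio.run(score_all(questions))
elapsed = time.perf_counter() - started
print(f"{len(results)} questions scored in {elapsed:.2f}s")
```

Run sequentially, five calls of 0.1 s each would take about 0.5 s; run concurrently, the total stays close to the duration of a single call.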

In theory, one can now tweak a hyperparameter (e.g. temperature), re-run the evaluation, and see if the adjustment has a positive or negative impact. Unfortunately, in practice I found the LLM judging to be finicky, and I am not the only one. LLM judges seem to be fairly bad at using a floating point value to assess these metrics. Instead, they appear to do better at classification, e.g. a thumbs up/thumbs down. RAGAS doesn’t yet support LLM judges performing classification. Writing it by hand doesn’t seem too difficult, and perhaps in a future update I will attempt this myself.
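As a rough illustration of what a hand-written classification judge could look like, the sketch below asks for a PASS/FAIL verdict and reports a pass rate. Everything here is hypothetical: the prompt wording, the `parse_verdict` and `pass_rate` helpers, and the `fake_judge` function, which stands in for a real chat-model call so the example runs offline. It is not part of RAGAS or Weave.

```python
from typing import Callable

JUDGE_PROMPT = (
    "Question: {query}\nAnswer: {answer}\n"
    "Does the answer address the question? Reply with exactly PASS or FAIL."
)


def parse_verdict(raw: str) -> bool:
    # Accept only an unambiguous leading PASS or FAIL; anything else raises
    # instead of silently defaulting to one class.
    words = raw.strip().upper().split()
    token = words[0].strip(".,:!") if words else ""
    if token == "PASS":
        return True
    if token == "FAIL":
        return False
    raise ValueError(f"Unparseable verdict: {raw!r}")


def pass_rate(examples: list[dict], judge: Callable[[str], str]) -> float:
    # `judge` is any callable mapping a prompt to the judge model's raw text.
    verdicts = [parse_verdict(judge(JUDGE_PROMPT.format(**ex))) for ex in examples]
    return sum(verdicts) / len(verdicts)


def fake_judge(prompt: str) -> str:
    # Hypothetical stand-in for a chat-model call.
    return "PASS" if "Hachi" in prompt else "FAIL."


examples = [
    {"query": "Drama films with dogs?", "answer": "Hachi: A Dog's Tale"},
    {"query": "Comedy films from the 1990s?", "answer": "I like turtles."},
]
rate = pass_rate(examples, fake_judge)
print(rate)
```

Raising on an unparseable verdict is a deliberate choice in this sketch: a judge that rambles should show up as an error to investigate, not as a quiet thumbs down.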

Offline evaluation is good for seeing how tweaking hyperparameters affects performance, but in my opinion online evaluation is far more useful. In Rosebud 🌹 I have now incorporated 👍/👎 buttons at the bottom of every response to provide feedback.

When a user clicks on either button they are told that their feedback was logged. Below is a snippet of how this was accomplished in the Streamlit interface:

    import datetime
    import threading

    import streamlit as st
    import wandb


    def start_log_feedback(feedback):
        print("Logging feedback.")
        st.session_state.feedback_given = True
        st.session_state.sentiment = feedback
        # Log on a background thread so the UI is not blocked
        thread = threading.Thread(target=log_feedback, args=(st.session_state.sentiment,
                                                             st.session_state.query,
                                                             st.session_state.query_constructor,
                                                             st.session_state.context,
                                                             st.session_state.response))
        thread.start()


    def log_feedback(sentiment, query, query_constructor, context, response):
        ct = datetime.datetime.now()
        wandb.init(project="film-search",
                   name=f"query: {ct}")
        table = wandb.Table(columns=["sentiment", "query", "query_constructor", "context", "response"])
        table.add_data(sentiment,
                       query,
                       query_constructor,
                       context,
                       response
                       )
        wandb.log({"Query Log": table})
        wandb.finish()

Note that the process of sending the feedback to W&B runs on a separate thread rather than on the main thread. This is to prevent the user from getting stuck for a few seconds waiting for the logging to complete.
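The effect of moving the logging off the main thread can be seen in a small standard-library sketch. Here `slow_log` is a hypothetical stand-in for the W&B call (it sleeps to simulate network latency and appends to an in-memory list), so the numbers only illustrate the pattern, not real logging times.

```python
import threading
import time

logged = []  # stands in for the remote table


def slow_log(record: dict) -> None:
    time.sleep(0.2)  # simulated network latency
    logged.append(record)


def start_background_log(record: dict) -> threading.Thread:
    thread = threading.Thread(target=slow_log, args=(record,))
    thread.start()
    return thread


started = time.perf_counter()
worker = start_background_log({"sentiment": "thumbs_down", "query": "example query"})
returned_after = time.perf_counter() - started  # how long the caller was blocked
worker.join()  # only so this demo can inspect the result
print(f"caller blocked for {returned_after:.3f}s, records logged: {len(logged)}")
```

The caller gets control back almost immediately, while the record still arrives once the background thread finishes.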

A W&B table is used to store the feedback. Five quantities are logged in the table:

- Sentiment: Whether the user clicked thumbs up or thumbs down

- Query: The user’s query, e.g. Find me drama movies in English that are less than 2 hours long and feature dogs.

- Query_Constructor: The results of the query constructor, which rewrites the user’s query and includes metadata filtering if necessary, e.g.

      {
          "query": "drama English dogs",
          "filter": {
              "operator": "and",
              "arguments": [
                  {
                      "comparator": "eq", "attribute": "Genre", "value": "Drama"
                  },
                  {
                      "comparator": "eq", "attribute": "Language", "value": "English"
                  },
                  {
                      "comparator": "lt", "attribute": "Runtime (minutes)", "value": 120
                  }
              ]
          }
      }

- Context: The retrieved context based on the reconstructed query, e.g. Title: Hachi: A Dog’s Tale. Overview: A drama based on the true story of a college professor’s…

- Response: The model’s response
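To show what a filter like the one logged by the query constructor actually constrains, here is a small sketch that evaluates such a filter tree against a film’s metadata in plain Python. In the app this filtering is done by the vector store, not by code like this. The `matches` helper, the sample metadata, and the rule that `eq` on a list-valued field means membership are all assumptions made for illustration.

```python
import operator

# Only the comparators needed for this illustration are handled.
COMPARATORS = {"eq": operator.eq, "lt": operator.lt, "gt": operator.gt}


def matches(node: dict, metadata: dict) -> bool:
    """Return True if `metadata` satisfies the filter tree rooted at `node`."""
    if "operator" in node:
        results = (matches(arg, metadata) for arg in node["arguments"])
        if node["operator"] == "and":
            return all(results)
        if node["operator"] == "or":
            return any(results)
        raise ValueError(f"Unsupported operator: {node['operator']}")
    value = metadata.get(node["attribute"])
    if value is None:
        return False
    if isinstance(value, list):
        # Assumption: "eq" on a list-valued field (e.g. Genre) means membership.
        return node["comparator"] == "eq" and node["value"] in value
    return COMPARATORS[node["comparator"]](value, node["value"])


query_filter = {
    "operator": "and",
    "arguments": [
        {"comparator": "eq", "attribute": "Genre", "value": "Drama"},
        {"comparator": "eq", "attribute": "Language", "value": "English"},
        {"comparator": "lt", "attribute": "Runtime (minutes)", "value": 120},
    ],
}

# Illustrative metadata, not taken from the real index
short_drama = {"Genre": ["Drama", "Family"], "Language": "English", "Runtime (minutes)": 93}
long_drama = {"Genre": ["Drama"], "Language": "English", "Runtime (minutes)": 150}

print(matches(query_filter, short_drama), matches(query_filter, long_drama))
```

Only films that satisfy every clause survive, which is why a 150-minute drama is excluded even though it matches on genre and language.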

All of this is logged conveniently in the same project as the Weave evaluations shown earlier. Now, when a query goes south, it is as simple as hitting the thumbs down button to see exactly what happened. This will allow much faster iteration and improvement of the Rosebud 🌹 recommendation application.

To ensure recommendations from Rosebud 🌹 continue to stay accurate, it was important to automate the process of pulling data and uploading it to Pinecone. For this task, I chose Prefect. Prefect is a popular workflow orchestration tool. I was looking for something lightweight, easy to learn, and Pythonic. I found all of this in Prefect.

Prefect offers a variety of ways to schedule your workflows. I decided to use push work pools with automatic infrastructure provisioning. I found that this setup balances simplicity with configurability. It allows you to task Prefect with automatically provisioning all of the infrastructure needed to run your flow in your cloud provider of choice. I chose to deploy on Azure, but deploying on GCP or AWS only requires changing a few lines of code. Refer to the pinecone_flow.py file for more details. A simplified flow is provided below:

    import csv
    import json
    import os

    from prefect import flow, task
    from prefect.deployments import DeploymentImage


    @task
    def start():
        """
        Start-up: check everything works or fail fast!
        """
        # Print out some debug info
        print("Starting flow!")

        # Ensure user has set the appropriate env variables
        assert os.environ['LANGCHAIN_API_KEY']
        assert os.environ['OPENAI_API_KEY']
        ...

    @task(retries=3, retry_delay_seconds=[1, 10, 100])
    def pull_data_to_csv(config):
        TMBD_API_KEY = os.getenv('TMBD_API_KEY')
        YEARS = range(config["years"][0], config["years"][-1] + 1)
        CSV_HEADER = ['Title', 'Runtime (minutes)', 'Language', 'Overview', ...]

        for year in YEARS:
            # Grab list of ids for all films made in {YEAR}
            movie_list = list(set(get_id_list(TMBD_API_KEY, year)))

            FILE_NAME = f'./data/{year}_movie_collection_data.csv'

            # Creating file
            with open(FILE_NAME, 'w') as f:
                writer = csv.writer(f)
                writer.writerow(CSV_HEADER)

            ...

        print("Successfully pulled data from TMDB and created csv files in data/")

    @task
    def convert_csv_to_docs():
        # Loading in data from all csv files
        loader = DirectoryLoader(
            ...
            show_progress=True)

        docs = loader.load()

        metadata_field_info = [
            AttributeInfo(name="Title",
                          description="The title of the movie", type="string"),
            AttributeInfo(name="Runtime (minutes)",
                          description="The runtime of the movie in minutes", type="integer"),
            ...
        ]

        def convert_to_list(doc, field):
            # Turn a comma-separated metadata string into a list of values
            if field in doc.metadata and doc.metadata[field] is not None:
                doc.metadata[field] = [item.strip()
                                       for item in doc.metadata[field].split(',')]

        ...

        fields_to_convert_list = ['Genre', 'Actors', 'Directors',
                                  'Production Companies', 'Stream', 'Buy', 'Rent']
        ...

        # Set 'overview' and 'keywords' as 'page_content' and other fields as 'metadata'
        for doc in docs:
            # Parse the page_content string into a dictionary
            page_content_dict = dict(line.split(": ", 1)
                                     for line in doc.page_content.split("\n") if ": " in line)

            doc.page_content = (
                'Title: ' + page_content_dict.get('Title') +
                '. Overview: ' + page_content_dict.get('Overview') +
                ...
            )

        ...

        print("Successfully took csv files and created docs")

        return docs

    @task
    def upload_docs_to_pinecone(docs, config):
        # Create empty index
        PINECONE_KEY, PINECONE_INDEX_NAME = os.getenv(
            'PINECONE_API_KEY'), os.getenv('PINECONE_INDEX_NAME')

        pc = Pinecone(api_key=PINECONE_KEY)

        # Target index and check status
        pc_index = pc.Index(PINECONE_INDEX_NAME)
        print(pc_index.describe_index_stats())

        embeddings = OpenAIEmbeddings(model=config['EMBEDDING_MODEL_NAME'])
        namespace = "film_search_prod"

        PineconeVectorStore.from_documents(
            docs,
            ...
        )

        print("Successfully uploaded docs to Pinecone vector store")

    @task
    def publish_dataset_to_weave(docs):
        # Initialize Weave
        weave.init('film-search')

        rows = []
        for doc in docs:
            row = {
                'Title': doc.metadata.get('Title'),
                'Runtime (minutes)': doc.metadata.get('Runtime (minutes)'),
                ...
            }
            rows.append(row)

        dataset = Dataset(name='Movie Collection', rows=rows)
        weave.publish(dataset)
        print("Successfully published dataset to Weave")

    @flow(log_prints=True)
    def pinecone_flow():
        with open('./config.json') as f:
            config = json.load(f)

        start()
        pull_data_to_csv(config)
        docs = convert_csv_to_docs()
        upload_docs_to_pinecone(docs, config)
        publish_dataset_to_weave(docs)


    if __name__ == "__main__":
        pinecone_flow.deploy(
            name="pinecone-flow-deployment",
            work_pool_name="my-aci-pool",
            cron="0 0 * * 0",
            image=DeploymentImage(
                name="prefect-flows:latest",
                platform="linux/amd64",
            )
        )

Notice how simple it is to turn Python functions into a Prefect flow. All you need are some sub-functions decorated with @task and a @flow decorator on the main function. Also note that after uploading the documents to Pinecone, the last step of our flow publishes the dataset to Weave. This is important for reproducibility purposes.
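The `retries=3, retry_delay_seconds=[1, 10, 100]` arguments on the data-pulling task ask Prefect to retry a failed run up to three times, waiting 1, 10, and then 100 seconds between attempts. The sketch below mimics that behaviour in plain Python so it can be run without Prefect. The `with_retries` decorator and `flaky_pull` function are hypothetical illustrations, not Prefect’s implementation, and the delays are recorded rather than actually slept.

```python
import functools
import time


def with_retries(retries, retry_delay_seconds, sleep=time.sleep):
    """Plain-Python stand-in: re-run a function up to `retries` extra times."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(retries + 1):
                try:
                    return func(*args, **kwargs)
                except Exception:
                    if attempt == retries:
                        raise  # out of retries: surface the last error
                    sleep(retry_delay_seconds[attempt])
        return wrapper
    return decorator


waits = []            # delays requested, recorded instead of slept
calls = {"count": 0}  # number of attempts made


@with_retries(retries=3, retry_delay_seconds=[1, 10, 100], sleep=waits.append)
def flaky_pull():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("simulated API hiccup")
    return "ok"


result = flaky_pull()
print(result, calls["count"], waits)
```

The growing delays give a temporarily unavailable API progressively more time to recover before the task gives up.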

At the bottom of the script we see how deployment is done in Prefect.

- We need to provide a `name` for the deployment. This is arbitrary.

- We also need to specify a `work_pool_name`. Push work pools in Prefect automatically send tasks to serverless computers without needing a middleman. This name needs to match the name used to create the pool, which we’ll see below.

- You also need to specify a `cron` schedule (the name derives from chronos, the Greek word for time). This lets you specify how often to repeat a workflow. The value `"0 0 * * 0"` means run this workflow at midnight every Sunday, i.e. once a week. Consult a cron syntax reference for details on how the `cron` syntax works.

- Finally, you need to specify a `DeploymentImage`. Here you specify both a `name` and a `platform`. The name is arbitrary, but the platform is not. Since I want to deploy to Azure compute instances, and these instances run Linux, it’s important that I specify that in the `DeploymentImage`.
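To see why `"0 0 * * 0"` amounts to a weekly schedule, the five fields (minute, hour, day of month, month, day of week) can be checked against a timestamp. The sketch below is a deliberately tiny matcher written for illustration; the `cron_matches` helper is hypothetical, handles only `*` and plain integers, and is not how Prefect parses schedules.

```python
from datetime import datetime


def field_matches(field: str, value: int) -> bool:
    # Only "*" and plain integers are handled; ranges, lists, and steps are not.
    return field == "*" or int(field) == value


def cron_matches(expr: str, moment: datetime) -> bool:
    minute, hour, day, month, weekday = expr.split()
    # cron counts Sunday as 0, while datetime.weekday() counts Monday as 0.
    cron_weekday = (moment.weekday() + 1) % 7
    return (
        field_matches(minute, moment.minute)
        and field_matches(hour, moment.hour)
        and field_matches(day, moment.day)
        and field_matches(month, moment.month)
        and field_matches(weekday, cron_weekday)
    )


WEEKLY = "0 0 * * 0"
sunday_midnight = datetime(2024, 8, 4, 0, 0)
monday_midnight = datetime(2024, 8, 5, 0, 0)
print(cron_matches(WEEKLY, sunday_midnight), cron_matches(WEEKLY, monday_midnight))
```

Minute 0, hour 0, any day of the month, any month, and weekday 0 leaves exactly one matching minute per week: midnight on Sunday.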

To deploy this flow on Azure using the CLI, run the following commands:

    prefect work-pool create --type azure-container-instance:push --provision-infra my-aci-pool

    prefect deployment run 'pinecone-flow/pinecone-flow-deployment'

These commands will automatically provision all of the necessary infrastructure on Azure. This includes an Azure Container Registry (ACR) that will hold a Docker image containing all files in your directory as well as any necessary libraries listed in a requirements.txt. It will also include an Azure Container Instance (ACI) Identity that will have the permissions necessary to deploy a container with the aforementioned Docker image. Finally, the deployment run command will schedule the code to be run every week. You can check the Prefect dashboard to see your flow runs.

By updating my Pinecone vector store weekly, I can ensure that the recommendations from Rosebud 🌹 remain accurate.

In this article, I discussed my experience improving the Rosebud 🌹 app. This included the process of incorporating offline and online evaluation, as well as automating the update of my Pinecone vector store.

Some other improvements not mentioned in this article:

- Including ratings from The Movie Database in the film data. You can now ask for “highly rated films” and the chat model will filter for films above a 7/10.

- Upgraded chat models. Now the query and summary models are using `gpt-4o-mini`. Recall that the LLM judge model is also using `gpt-4o-mini`.

- Embedding model upgraded to `text-embedding-3-small` from `text-embedding-ada-002`.

- Years now span 1950–2023, instead of starting at 1920. Film data from 1920–1950 was not high quality, and only messed up recommendations.

- UI is cleaner, with all details about the project relegated to a sidebar.

- Vastly improved documentation on GitHub.

- Bug fixes.

As mentioned at the top of the article, the app is now 100% free to use! I will foot the bill for queries for the foreseeable future (hence the choice of gpt-4o-mini instead of the more expensive gpt-4o). I really want to get the experience of running an app in production, and having my readers test out Rosebud 🌹 is a great way to do this. In the unlikely event that the app really blows up, I will have to come up with some other model of funding. But that would be a great problem to have.

Enjoy discovering awesome films! 🎥