takes a five-minute exchange and returns eight clean sections. Decisions. Action items. Risks. Open questions. Every section reads like it was written by somebody who was paying attention.

Read the underlying transcript, though, and you discover that two of those sections were inferred from a single ambiguous sentence, one was invented entirely, and three were pattern-matched from the model’s prior on what a meeting summary ought to contain. Confident, formatted, structurally indistinguishable from a summary of a meeting where those things actually happened.

This isn’t a hallucination problem in the classic sense. The model is not making up a fact about the world. It’s making up a fact about the meeting. And the failure mode is not visible in the output. It’s just confident-sounding text that the reader can’t easily verify against the source.

There’s a name for this failure mode in another field, and it’s older than language models. It’s what happens when you do estimation without identification.

This article is not a new summarization benchmark. It’s an argument for a design pattern that I’ve not seen treated as the central design constraint in the AI engineering literature: treat LLM-generated summaries as structured claims over a source, require each claim to declare its support category, and constrain review stages so they can only weaken unsupported claims rather than make the output smoother. I’ll walk through what that looks like in practice, what it produces, and where it breaks.

The missing step

Causal inference is the analytical tradition that formalizes the difference between identifying a quantity and estimating one. Identification is the argument that the data you have can support the claim you want to make. Estimation is the procedure that produces a number once identification is settled. The order is not negotiable. You cannot estimate a treatment effect you haven’t first argued is identifiable from your observational data, because the resulting number is meaningless. It looks like an effect. It’s not an effect.

Practitioners who work in observational settings spend a substantial fraction of their time on identification. They draw causal graphs. They argue about confounders. They distinguish between what the data can support and what the data can’t. The estimation step, when it finally comes, is often the easy part.

Now consider what an LLM summarizer does. It receives a transcript. It produces structured claims about the content of that transcript: decisions made, commitments accepted, risks raised, next steps assigned. Each claim is, in a real sense, an estimate of a latent quantity. The decision was made or it was not. The commitment was accepted or it was not. The summary is asserting a value for each of those quantities.

There is no identification step. The model doesn’t ask whether the transcript contains enough evidence to support the claim. It produces the claim because the format requires one.

LLM summarization behaves like observational analysis, but it’s usually deployed without anything resembling an identification step.

The AI engineering literature has not been silent on the underlying problem. Hallucination detection, calibrated uncertainty, selective prediction and abstention, RAG grounding, citation verification, factual consistency, and claim verification: each of these is a serious line of work, and each addresses a real layer of the failure. What they have in common is that they treat fabrication as a model behavior to be measured, scored, or suppressed after the fact.

Identification is a different layer. It doesn’t score the output for trustworthiness. It changes what the model is allowed to assert in the first place by requiring every claim to declare what it is and where it came from. The two layers are complementary. A pipeline that does identification well still benefits from calibration and grounding work downstream. A pipeline that does only the downstream work is filtering output that should never have been produced in the form it was produced.

What identification looks like for a transcript

Identification in observational data is a question about what the data can support. Identification for a transcript is the same question, narrowed to a specific source. Given this transcript, what can be observed directly, what can be inferred with stated assumptions, and what can’t be supported at all?

That’s the whole move. Every claim a summarizer produces should declare which of three categories it belongs to: observed, inferred, or recommendation. Observed claims point to a specific span of the transcript and assert nothing beyond what that span says. Inferred claims declare the assumption being made and the evidence the inference is bridging. Recommendations declare that they’re the model’s suggestion, not the participants’ decision.

A summarizer that can’t place a claim into one of those categories has no business producing the claim. The right output in that case is not a smoother claim. It’s no claim.
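As a minimal sketch of that rule, a claim can be modeled as a small record that either declares valid support or is dropped. The names here (`Support`, `Claim`, `admit`) and the use of character offsets for spans are illustrative assumptions, not the schema from the repository.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class Support(Enum):
    OBSERVED = "observed"              # points at a transcript span, asserts nothing more
    INFERRED = "inferred"              # bridges evidence with a stated assumption
    RECOMMENDATION = "recommendation"  # the model's suggestion, not the participants' decision


@dataclass(frozen=True)
class Claim:
    text: str
    support: Support
    evidence_span: Optional[Tuple[int, int]] = None  # (start, end) offsets; an assumption
    assumption: Optional[str] = None                 # required for inferred claims


def admit(claim: Claim, transcript: str) -> Optional[Claim]:
    """Return the claim if it declares valid support, otherwise None (no claim)."""
    if claim.support is Support.RECOMMENDATION:
        return claim  # already labeled as the model's own suggestion
    span = claim.evidence_span
    if span is None or not (0 <= span[0] < span[1] <= len(transcript)):
        return None   # observed and inferred claims must point at real text
    if claim.support is Support.INFERRED and not claim.assumption:
        return None   # an inference has to state what it assumes
    return claim
```

The function has no branch that repairs a claim. A claim that cannot be placed comes back as `None`, which is the "no claim" outcome described above.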

This is uncomfortable for the consumer of summaries, because it means many sections will be empty when the underlying conversation was thin. That discomfort is the point. It’s information. It tells the reader that the meeting didn’t, in fact, produce eight sections of substance, regardless of what the summarizer wanted to write.

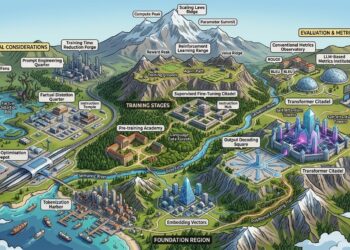

A pipeline that enforces the discipline

The architecture follows from the framing. Three LLM stages and a deterministic renderer.

Image by Author

The first stage extracts structured facts from the transcript. Speaker turns, explicit commitments, explicit decisions, explicit quantities. This stage is deliberately conservative. It’s allowed to miss things. It’s not allowed to invent them.
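The article does not say how the extraction rule is enforced, so the following is only one possible guard, sketched under an assumption: each extracted fact carries a verbatim `quote`, and any fact whose quote cannot be found in the transcript is discarded. `ExtractedFact` and `keep_grounded` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ExtractedFact:
    kind: str     # e.g. "decision", "commitment", "quantity" (hypothetical labels)
    speaker: str
    quote: str    # verbatim text the fact is based on


def _normalize(text: str) -> str:
    # Collapse whitespace and case so line wrapping does not cause false rejections.
    return " ".join(text.split()).lower()


def keep_grounded(facts: List[ExtractedFact], transcript: str) -> List[ExtractedFact]:
    """Keep only facts whose quote appears in the transcript; drop the rest."""
    haystack = _normalize(transcript)
    return [
        fact for fact in facts
        if fact.quote.strip() and _normalize(fact.quote) in haystack
    ]
```

A filter like this can only remove facts, which matches the stated asymmetry: the stage may miss things, but nothing it invents survives the check.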

The second stage synthesizes those facts into claim objects across eight sections. Each claim carries a label: observed, inferred, or recommendation. Each claim carries a pointer to the evidence in the extracted facts. Synthesis is where the analytical work happens, and it’s also where the model is most likely to drift.

The third stage audits. This is the stage that does the identification work, and the constraint on it is the part of the design that matters most.

The audit stage can’t rewrite the analysis into something smoother. It can’t add a better-sounding recommendation. It can’t invent missing context.

It’s given a bounded set of operations and forbidden from doing anything else. It may delete a claim. It may downgrade a claim from observed to inferred, or from inferred to recommendation. It may move a claim to a more appropriate section. It may replace a claim with an explicit insufficient-evidence placeholder. It may collapse an entire section when nothing in it survives review.

Anything not on this list is forbidden, including writing better claims.
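The bounded operation set can be sketched as a closed enum plus a single function that applies one operation at a time. Only `replace_with_insufficient_evidence` is a name the article itself uses; the other identifiers, the `Claim` fields, and the placeholder wording are assumptions made for illustration.

```python
from dataclasses import dataclass, replace
from enum import Enum
from typing import List, Optional

PLACEHOLDER = "Insufficient evidence in the transcript."  # hypothetical wording
# The only label changes permitted: each one weakens the claim by one step.
ALLOWED_DOWNGRADES = {("observed", "inferred"), ("inferred", "recommendation")}


class Op(Enum):
    DELETE = "delete"
    DOWNGRADE = "downgrade"
    MOVE = "move"
    REPLACE_WITH_INSUFFICIENT_EVIDENCE = "replace_with_insufficient_evidence"
    COLLAPSE_SECTION = "collapse_section"


@dataclass(frozen=True)
class Claim:
    section: str
    label: str
    text: str


def apply_op(claims: List[Claim], op: Op, index: Optional[int] = None,
             arg: Optional[str] = None) -> List[Claim]:
    """Apply one audit operation and return a new list of claims."""
    if not isinstance(op, Op):
        raise ValueError(f"operation not on the allowed list: {op!r}")
    if op is Op.COLLAPSE_SECTION:
        return [c for c in claims if c.section != arg]  # arg is the section name
    if index is None:
        raise ValueError("this operation needs a claim index")
    target = claims[index]
    if op is Op.DELETE:
        return claims[:index] + claims[index + 1:]
    if op is Op.DOWNGRADE:
        if (target.label, arg) not in ALLOWED_DOWNGRADES:
            raise ValueError(f"not a weakening: {target.label} -> {arg}")
        updated = replace(target, label=arg)
    elif op is Op.MOVE:
        if arg is None:
            raise ValueError("move needs a destination section")
        updated = replace(target, section=arg)
    else:  # Op.REPLACE_WITH_INSUFFICIENT_EVIDENCE
        updated = replace(target, label="insufficient_evidence", text=PLACEHOLDER)
    return claims[:index] + [updated] + claims[index + 1:]
```

No operation accepts free text from the auditor. The only string the audit can introduce is the fixed placeholder, so in this sketch a "better claim" has no way in.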

Image by Author

The replace_with_insufficient_evidence operation deserves its own line. It’s the system literally typing a placeholder into the output where a confident claim was. That’s identification work made operational. The reader sees, in prose, exactly where the synthesis stage produced a claim that the source couldn’t support.

Why the asymmetry matters. A reviewer that’s allowed to improve the analysis becomes another source of the same problem the system is trying to solve. A reviewer that’s only allowed to weaken or remove can only fail in one direction: by being too cautious. That is a tolerable failure mode. The opposite is not.

What the design produces, and what it refuses to produce

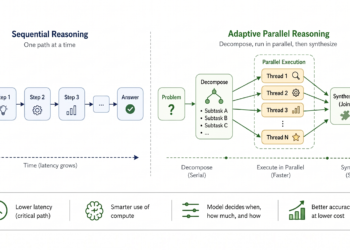

This isn’t a benchmark. It’s a small fixture-based stress test designed to check whether the architecture produces the behavior it was built to produce. Three transcripts are not enough to make general claims about LLM summarization. They’re enough to check whether a specific design choice has the consequences the design predicted.

The fixtures are: a decision meeting in which a pricing model was chosen among three real alternatives, a working session that surfaced a measurement problem without resolving it, and a thin two-person sync that contained almost no decision content.

What didn’t happen. Across the three runs, the pipeline produced zero fabricated commitments and zero ungrounded quantities. Fabrications of that kind are what the architecture is designed to make harder. A claim can’t survive the pipeline if it doesn’t have a pointer to evidence, and the audit stage can’t manufacture evidence to keep a claim alive. The result is not a guarantee. The deterministic renderer is the only stage that offers guarantees. Extraction, synthesis, and audit are still LLM calls and can still fail. The point is that the architecture pushes their failures toward removal rather than toward fabrication, and the fixtures are consistent with that.

What did happen. The result that I find more interesting is the abstention rate.

Across the three fixture transcripts, the share of empty section slots rose from 17% on the richest transcript to 58% on the thinnest.

Across all three fixtures: 0 fabricated commitments, 0 ungrounded quantities.

Image by Author

On the rich decision meeting, the pipeline left 17 percent of section slots empty or replaced with the insufficient-evidence placeholder. On the working session, the figure rose to 25 percent. On the thin sync, it reached 58 percent. The share of empty slots was roughly three and a half times higher when the input signal was thin than when it was rich.
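For readers who want to compute the same kind of figure on their own fixtures, here is a minimal sketch of an abstention rate, assuming a summary is represented as a mapping from slot name to rendered text. The slot representation and the placeholder marker are assumptions; the three reported rates are copied from the paragraph above, not recomputed.

```python
from typing import Dict, Optional

PLACEHOLDER = "insufficient evidence"  # hypothetical marker for the placeholder text


def abstention_rate(slots: Dict[str, Optional[str]]) -> float:
    """Share of section slots that are empty or hold the placeholder."""
    if not slots:
        raise ValueError("a summary needs at least one slot")
    abstained = sum(
        1 for text in slots.values()
        if text is None or not text.strip() or text.strip().lower() == PLACEHOLDER
    )
    return abstained / len(slots)


# Rates reported in the article for the three fixtures.
reported = {"decision_meeting": 0.17, "working_session": 0.25, "thin_sync": 0.58}

# Thin-to-rich ratio behind the "roughly three and a half times" statement: about 3.4.
thin_to_rich = reported["thin_sync"] / reported["decision_meeting"]
```

A single rate tells you little on its own. The design is judged on whether the rate moves with the input: higher on thin transcripts, lower on rich ones.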

That’s the behavior the design is trying to produce. A summarizer that fills the same eight sections regardless of input is not summarizing. It’s producing output that conforms to a template. The template is doing the work, and the model is the cosmetic finish.

A summarizer that abstains in proportion to the thinness of the input is doing something different. It’s treating the transcript as a source whose content varies, and it’s letting that variation show up in the output. The empty sections are not failures of the model. They’re the model declining to assert what the source doesn’t support.

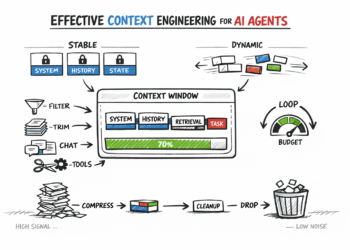

Excerpts from the decision-meeting fixture, with the explicit labels surfaced inline.

Image by Author

Reading the result. The labels are not decoration. They change what the reader does with the output. An observed claim invites verification against the transcript. An inferred claim invites scrutiny of the assumption that produced it. An insufficient-evidence placeholder invites the reader to either look at the source themselves or accept that the meeting didn’t, in fact, produce a claim of that kind.
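A deterministic renderer that surfaces the labels inline can be very small. The sketch below assumes audited claims arrive as plain records and that an empty section is printed with an explicit marker rather than silently omitted; the output format and the marker text are my assumptions, not the format shown in the figure.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Claim:
    section: str
    label: str   # "observed", "inferred", "recommendation", or "insufficient_evidence"
    text: str


def render(claims: Sequence[Claim], sections: Sequence[str]) -> str:
    """Render claims section by section, with each label shown inline."""
    lines: List[str] = []
    for section in sections:
        lines.append(section)
        in_section = [c for c in claims if c.section == section]
        if not in_section:
            lines.append("  (no supported claims)")  # make the gap visible
        for claim in in_section:
            lines.append(f"  [{claim.label}] {claim.text}")
    return "\n".join(lines)
```

Because the renderer is pure string formatting with no model call, the same audited claims always yield the same text, and a label cannot be dropped or softened on the way to the reader.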

The objection from the consumer

There’s an argument that empty sections are a usability problem. The reader expected a summary. The reader got a partial summary with explicit gaps. The reader has to do more work.

That objection deserves a direct answer. The reader who got a fluent eight-section summary of a five-minute exchange was already doing more work, just invisibly. They were going to read the summary, act on it, and at some point discover that two of the action items weren’t actually agreed to and one of the risks was never raised. The cost of that discovery is high. It’s paid in misallocated meetings, missed commitments, and the slow erosion of trust in the tooling.

Honest emptiness pushes the cost forward. The reader sees the gap immediately and can decide how to handle it. Open the transcript. Ask a participant. Treat the meeting as inconclusive. Each of those is a better response than acting on a confident summary whose confidence the source didn’t earn.

This is the same trade observational analysts make when they refuse to report a point estimate without identification. The consumer would prefer a number. The analyst declines. The decision the consumer makes from no number is, on average, better than the decision they would have made from a number the data couldn’t support.

Generalizing the pattern

The architecture transfers. Any LLM workflow that produces structured claims from a source can be reframed as observational analysis and given an identification layer.

Document review for legal discovery. Patient note summarization. Customer call analysis. Code review summaries. Each of these is typically deployed as a one-shot generation problem, with a model producing structured output from a source and the consumer trusting the result. Each of them has a version of the same failure mode the meeting summarizer has, and each can be made more auditable with a similar architecture: an extraction stage that’s conservative about what it pulls from the source, a synthesis stage that produces labeled claims with evidence pointers, and an audit stage that’s forbidden from adding or strengthening anything. The implementation and the risk profile differ across these domains. The pattern transfers. The specifics don’t.

The labels and the evidence pointers are not optional features. They’re the identification step made operational. A claim without a label is not identifiable. A claim without an evidence pointer can’t be audited. The audit stage’s monotonic-weakening constraint is what prevents identification work from being undone by a model that wants to produce smoother output.
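The monotonic-weakening constraint can also be checked after the fact by comparing the claims that went into the audit with the claims that came out. This is a sketch under assumptions: claims have stable ids, labels are ordered by strength as shown, and the placeholder text is passed in by the caller. None of these names come from the repository.

```python
from dataclasses import dataclass
from typing import List

# Higher number = stronger support. The audit must never raise it.
STRENGTH = {"observed": 3, "inferred": 2, "recommendation": 1, "insufficient_evidence": 0}


@dataclass(frozen=True)
class Claim:
    claim_id: str
    label: str
    text: str
    evidence_id: str = ""  # pointer into the extracted facts


def weakening_violations(before: List[Claim], after: List[Claim],
                         placeholder: str) -> List[str]:
    """List every way the audited output is stronger than its input."""
    originals = {c.claim_id: c for c in before}
    problems: List[str] = []
    for claim in after:
        original = originals.get(claim.claim_id)
        if original is None:
            problems.append(f"{claim.claim_id}: claim added by the audit")
            continue
        if STRENGTH[claim.label] > STRENGTH[original.label]:
            problems.append(f"{claim.claim_id}: label strengthened")
        if claim.text != original.text and claim.text != placeholder:
            problems.append(f"{claim.claim_id}: text rewritten")
        if claim.label != "insufficient_evidence" and not claim.evidence_id:
            problems.append(f"{claim.claim_id}: no evidence pointer")
    return problems
```

Deleted claims never appear in `after`, so removal passes without comment. An empty result means the audit only weakened or removed, which is the single direction in which it is allowed to fail.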

What this means for the people building these systems

Calibrated uncertainty estimates are valuable. Hallucination benchmarks are valuable. Grounding and citation work are valuable. None of them substitutes for the discipline of refusing to produce a claim that the source doesn’t support.

That discipline is missing from many LLM systems partly for cultural reasons. The field grew out of machine learning, where the purpose of a model is to produce an output for every input. The notion that the right output is sometimes no output is not foreign to the literature, but it is foreign to the default disposition of a generative model trained to fill in what comes next. It is, however, native to observational analysis, where the right answer to many questions is that the data can’t support an answer.

So the methods for making LLM analytical systems trustworthy may not come primarily from inside the LLM literature. They may come from disciplines that have already worked out what it means to do honest analysis under conditions where the source is the binding constraint. Causal inference is one of those disciplines. Survey methodology is another. Forensic accounting is another.

The people who already know how to refuse to estimate without identification have an unusually good vantage point on what’s wrong with current LLM analytical tooling, and what to do about it.

Causal inference taught a generation of practitioners not to estimate what they haven’t first identified. LLM summarizers make the same mistake, just in prose instead of numbers. The fix is not just a better model. The fix is to put back the step that observational analysis never let go of, and to enforce it with an architecture that can’t be talked out of doing the right thing.

A few closing pitfalls

- Treating the labels as cosmetic. If the labels are not enforced upstream, they’re decoration. They need to be assigned at synthesis with a pointer to evidence and audited downstream against that pointer. A synthesis stage that produces a label without an evidence pointer is not doing identification work. It’s producing a category that looks like identification.

- Letting the audit stage be helpful. This is the easy mistake. A reviewer that can add a recommendation, supply missing context, or rewrite a careless claim feels useful. It’s also exactly the failure mode the synthesis stage already has, just dressed up as quality control. Constrain the audit to a fixed set of weakening operations. Anything else is the system arguing with itself.

- Confusing abstention with low quality. A summarizer that returns mostly empty sections on a thin meeting is not failing. A summarizer that returns confident eight-section output on the same thin meeting is failing, just invisibly. The way to evaluate these systems is not summary completeness. It’s whether the abstention rate rises as the signal in the source thins.

- Reasoning from three fixtures to general claims. Three transcripts are enough to check whether a design choice produces the behavior it was built to produce. They aren’t enough to make claims about LLM summarization in general. If you build a version of this, you will need your own fixture set and your own definition of what counts as the right level of abstention for your use case.

The asymmetry that matters

A pipeline that can only weaken its outputs has a single failure mode: it can be too cautious. A pipeline that can strengthen its outputs has every failure mode the literature has been documenting for the last several years.

Choosing the first kind over the second is not a technical decision. It’s a decision about what the system is for. If the system is for producing fluent text, the second kind wins on every metric. If the system is for producing claims a reader can audit before acting, only the first kind is defensible.

Most current tooling is built for the first goal and deployed as if it had been built for the second. Treating that gap as a methodological problem rather than a model-quality problem is what changes the available remedies.

Repository, evaluation harness, and example outputs are available on GitHub. The full notebook walks one transcript through every stage and runs the eval harness across all three fixtures.

Staff Data Scientist focused on causal inference, experimentation, and decision science. I write about turning ambiguous business questions into decision-ready analysis.
