# Introduction

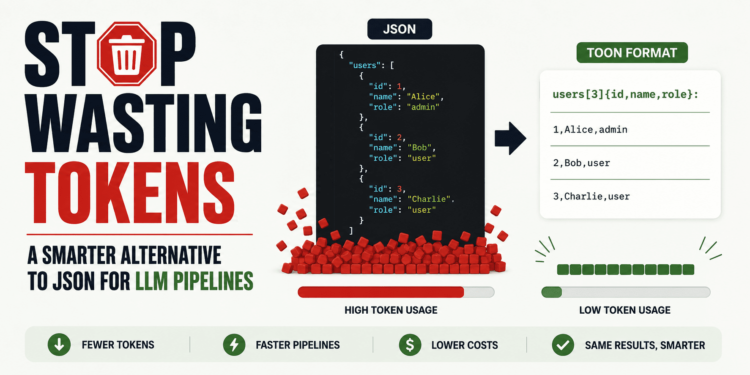

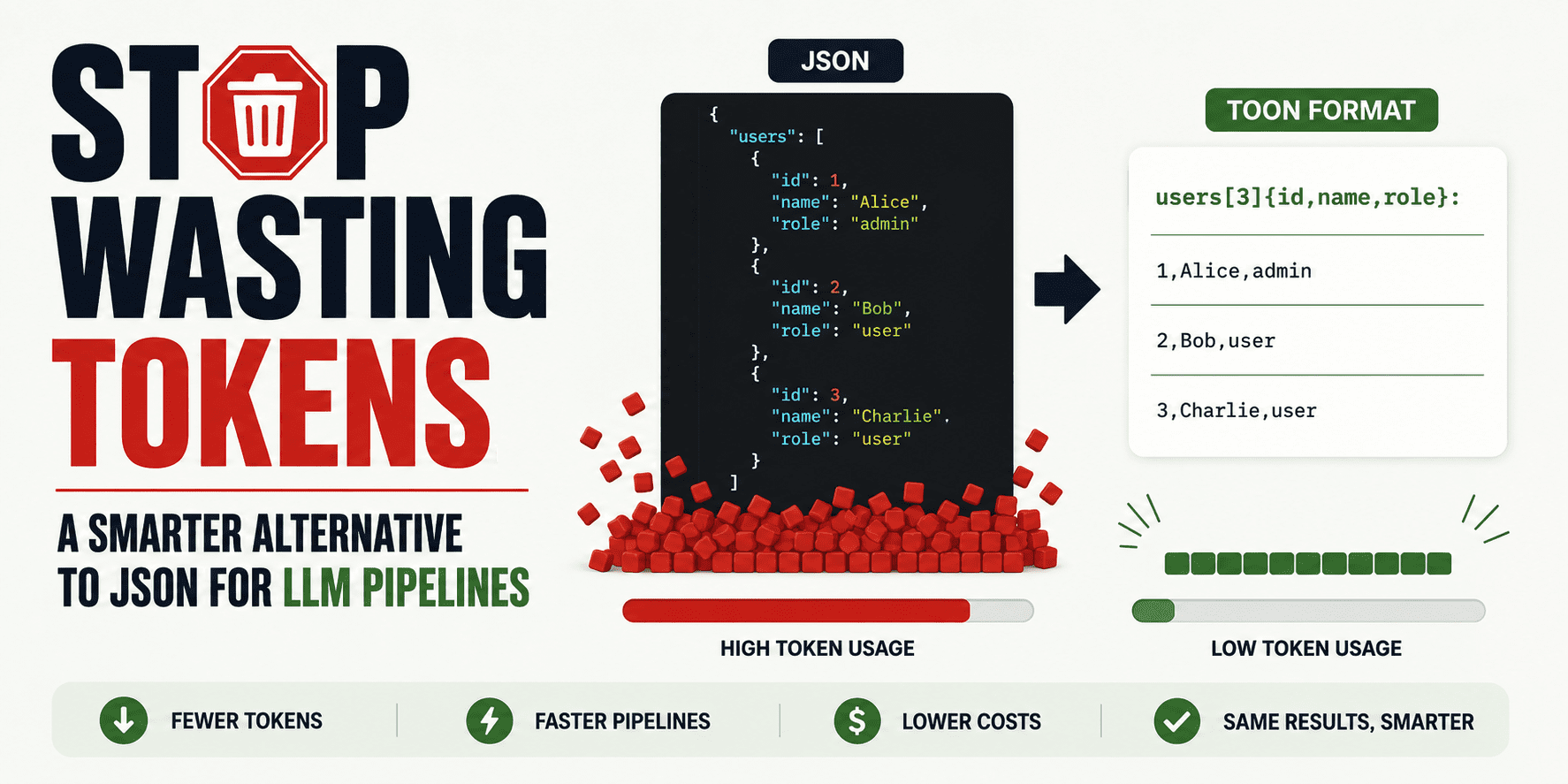

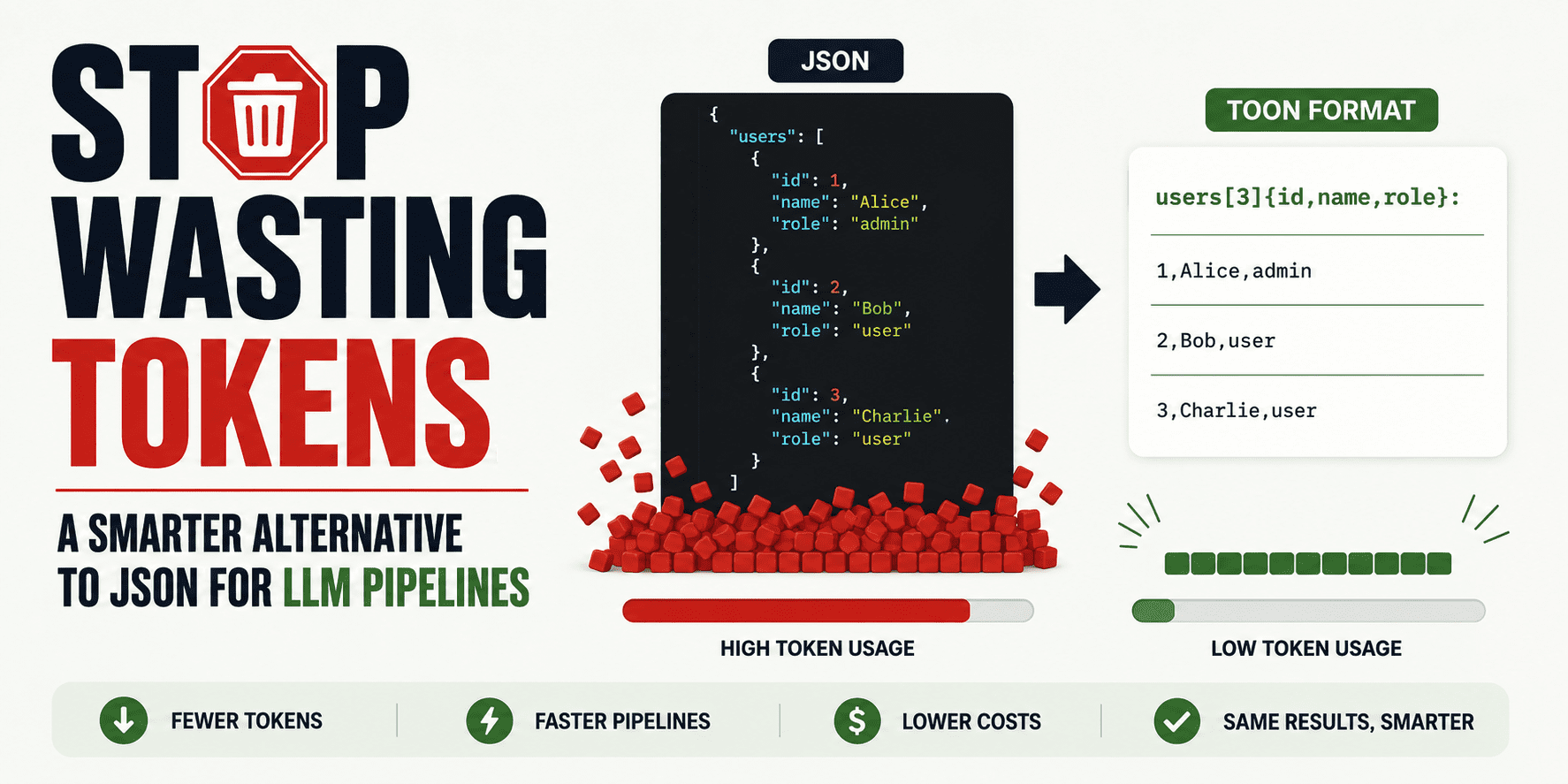

JSON is great for APIs, storage, and application logic. But inside large language model (LLM) pipelines, it often carries a lot of token overhead that doesn’t add much value for the model: braces, quotes, commas, and repeated field names on every row. TOON, short for Token-Oriented Object Notation, is a newer format designed specifically to keep the same JSON data model while using fewer tokens and giving models clearer structural cues. The official TOON docs describe it as a compact, lossless representation of JSON for LLM input, especially strong on uniform arrays of objects.

In this article, you’ll learn what TOON is, when it makes sense to use it, and how to start using it step by step in your own LLM workflow. We will also keep the tradeoffs honest, because TOON is useful in some cases, not all of them.

# Why JSON Wastes Tokens in LLM Pipelines

JSON becomes expensive in prompts because it repeats structure over and over. LLMs don’t care that JSON is a standard. They only see tokens.

If you send 100 support tickets, product rows, or user records to a model, the same field names appear in every object. TOON reduces that repetition by declaring fields once and then streaming row values in a compact tabular form. Here is a simple example.

JSON:

    {
      "users": [
        { "id": 1, "name": "Alice", "role": "admin" },
        { "id": 2, "name": "Bob", "role": "user" },
        { "id": 3, "name": "Charlie", "role": "user" }
      ]
    }

TOON:

    users[3]{id,name,role}:
      1,Alice,admin
      2,Bob,user
      3,Charlie,user

Same data, less clutter.

The structure is still clear, but the repeated keys are gone. That’s where TOON gets most of its value.
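To make the "declare fields once, then stream rows" idea concrete, here is a minimal Python sketch of that transformation. It is not the official encoder: `to_toon_table` is a hypothetical helper that assumes flat rows with identical keys and values that need no quoting or escaping, and it compares character counts only as a rough stand-in for tokens.

```python
import json

def to_toon_table(key, rows):
    """Encode a uniform list of flat dicts as a TOON-style tabular block.

    Hypothetical helper for illustration; it skips quoting and escaping.
    """
    fields = list(rows[0].keys())
    # Declare the array length and the field names once, in the header.
    header = f"{key}[{len(rows)}]{{{','.join(fields)}}}:"
    # Emit one indented, comma-separated line per row.
    lines = ["  " + ",".join(str(row[f]) for f in fields) for row in rows]
    return "\n".join([header, *lines])

users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
    {"id": 3, "name": "Charlie", "role": "user"},
]

toon_text = to_toon_table("users", users)
json_text = json.dumps({"users": users}, indent=2)

print(toon_text)
print(len(json_text), "characters as JSON vs", len(toon_text), "as TOON")
```

The gap grows with the number of rows, because JSON repeats every key per object while the tabular header is written only once.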

# What TOON Actually Is and When It Is Worth Using

TOON is a serialization format for the JSON data model. That means it can represent objects, arrays, strings, numbers, booleans, and null values, but in a way that’s more compact for model input. The TOON project presents it as lossless relative to JSON, which means you can convert JSON to TOON and back without losing information. The important thing to understand is this:

You don’t need to replace JSON in your app.

A better approach is to keep JSON in your backend, APIs, and storage, then convert it to TOON only when you are about to send structured data into an LLM.

TOON is most useful when your prompt contains repeated structured records with the same fields. Good examples include retrieved support tickets, catalog rows, analytics records, tool outputs, CRM entries, or memory snapshots for agent systems. However, if your structure is deeply nested, highly irregular, purely flat, or very small, the benefits can shrink or disappear.
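One way to act on this guidance is to check the shape of a payload before deciding to convert it. The sketch below is a heuristic under stated assumptions, not part of any TOON tooling: `is_tabular` is a hypothetical name, and it treats a payload as a good candidate only when every row is a flat object with the same set of fields.

```python
def is_tabular(rows):
    """Return True if rows is a non-empty list of flat dicts sharing one key set."""
    if not isinstance(rows, list) or not rows:
        return False
    if not all(isinstance(row, dict) for row in rows):
        return False
    fields = set(rows[0].keys())
    primitives = (str, int, float, bool, type(None))
    for row in rows:
        # Every row must expose the same set of fields.
        if set(row.keys()) != fields:
            return False
        # Nested objects or arrays do not fit a single table row.
        if not all(isinstance(value, primitives) for value in row.values()):
            return False
    return True

tickets = [
    {"id": 1, "status": "open"},
    {"id": 2, "status": "closed"},
]
irregular = [
    {"id": 1, "tags": ["billing"]},
    {"id": 2},
]

print(is_tabular(tickets))
print(is_tabular(irregular))
```

A check like this only says whether the data fits the tabular pattern; whether the conversion pays off for a small payload is still something to measure.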

# Getting Started with TOON

## Step 1: Installing the TOON Command-Line Interface

The easiest way to try TOON is with the official command-line interface (CLI) from the TOON project. The TOON site links directly to its CLI, and the main repository presents the format as part of a broader SDK and tooling ecosystem.

Install the package:

    npm install -g @toon-format/cli

## Step 2: Converting a JSON File into TOON

Let’s create a folder first:

    mkdir toon-test
    cd toon-test

Now create a file named `users.json` in that folder and paste this:

    [
      { "id": 1, "name": "Alice", "role": "admin" },
      { "id": 2, "name": "Bob", "role": "user" },
      { "id": 3, "name": "Charlie", "role": "user" }
    ]

Now convert it:

    npx @toon-format/cli users.json -o users.toon

You should get a compact result similar to this:

    [3]{id,name,role}:
      1,Alice,admin
      2,Bob,user
      3,Charlie,user

This is the core TOON pattern: declare the shape once, then list the values row by row. That aligns with the official design goal of tabular arrays for uniform objects.
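Reading the pattern in the other direction shows why the header matters: it carries both the field names and the expected row count. The following Python sketch parses only this simple tabular form. `parse_toon_table` and `parse_scalar` are hypothetical helpers, and the sketch assumes comma-delimited rows with no quoted strings, escapes, or nesting; for real data, the official tooling is the safer choice.

```python
import re

def parse_scalar(token):
    """Convert an unquoted cell into a JSON-like Python value."""
    literals = {"true": True, "false": False, "null": None}
    if token in literals:
        return literals[token]
    if re.fullmatch(r"-?\d+", token):
        return int(token)
    if re.fullmatch(r"-?\d+\.\d+", token):
        return float(token)
    return token

def parse_toon_table(text):
    """Parse a simple TOON-style tabular block into a list of dicts."""
    header, *body = [line for line in text.splitlines() if line.strip()]
    # The header looks like "[3]{id,name,role}:", optionally with a key before "[".
    count = int(header[header.index("[") + 1 : header.index("]")])
    fields = header[header.index("{") + 1 : header.index("}")].split(",")
    rows = [
        dict(zip(fields, map(parse_scalar, line.strip().split(","))))
        for line in body
    ]
    # The declared length makes truncated or extra rows detectable.
    if len(rows) != count:
        raise ValueError(f"expected {count} rows, found {len(rows)}")
    return rows

toon_text = "[3]{id,name,role}:\n  1,Alice,admin\n  2,Bob,user\n  3,Charlie,user"
rows = parse_toon_table(toon_text)
print(rows[0])
```

The explicit row count is one of the structural cues that plain CSV lacks: a reader, human or model, can tell when a table has been cut short.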

## Step 3: Using TOON as Model Input

The best place to use TOON is on the input side of your pipeline. Instead of pasting a large JSON blob into a prompt, pass the TOON version and keep the instruction simple.

For example:

    The following data is in TOON format.

    users[3]{id,name,role}:
      1,Alice,admin
      2,Bob,user
      3,Charlie,user

    Summarize the user roles and point out anything unusual.

This works well because TOON is designed to help the model read repeated structure with less overhead. That is also how the official project frames its benchmarks: as a test of comprehension across different structured input formats.
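In code, assembling such a prompt is just string composition. The sketch below assumes the TOON text has already been produced (for example by the CLI); `build_prompt` is a hypothetical helper, and the wording of the format note is taken from the example prompt rather than from any required convention.

```python
def build_prompt(toon_block, task):
    """Place TOON data between a short format note and the task instruction."""
    return "\n\n".join([
        "The following data is in TOON format.",
        toon_block.strip("\n"),  # keep row indentation, drop stray blank lines
        task.strip(),
    ])

toon_block = "users[3]{id,name,role}:\n  1,Alice,admin\n  2,Bob,user\n  3,Charlie,user"
task = "Summarize the user roles and point out anything unusual."

prompt = build_prompt(toon_block, task)
print(prompt)
```

Keeping the data block and the instruction as separate arguments makes it easy to swap the same records between JSON and TOON when comparing formats.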

## Step 4: Keeping JSON for Outputs

This is one of the most important practical decisions. TOON is very useful for input, but JSON is still usually the better choice for output when another system needs to parse the model response. That’s because JSON has much stronger tooling support, and modern APIs can enforce structured JSON output with schemas.

In practice, the safest pattern is:

- JSON in your app.

- TOON for large structured prompt context.

- JSON again for machine-parseable model responses.

This gives you efficiency on the input side and reliability on the output side.
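On the output side, the reliability comes from being able to parse and check the reply with ordinary tooling. Here is a minimal sketch using only Python's standard `json` module; `parse_model_json`, the field names, and the sample reply are all hypothetical, and a real pipeline might rely on schema-enforced output from the model API instead.

```python
import json

def parse_model_json(response_text, required_fields):
    """Parse a model reply as JSON and check that the expected keys are present."""
    try:
        data = json.loads(response_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    missing = [field for field in required_fields if field not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return data

# Hypothetical model reply to the role-summary prompt.
reply = '{"admins": 1, "users": 2, "unusual": []}'
summary = parse_model_json(reply, ["admins", "users", "unusual"])
print(summary["admins"], summary["users"])
```

Failing loudly on malformed or incomplete replies keeps parsing errors from propagating into downstream systems.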

## Step 5: Benchmarking in Your Own Pipeline

Don’t switch formats based on hype alone.

Run a small benchmark in your own workflow:

- Count input tokens for JSON.

- Count input tokens for TOON.

- Compare latency.

- Compare answer quality.

- Compare total cost.

The official TOON project positions token savings as one of the main benefits, and third-party coverage repeats those claims, but community discussion also shows that results depend heavily on the shape of the data. That is why the best question is not “Is TOON better than JSON?”

The better question is: “Is TOON better for this specific LLM step?”
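The first two items on that checklist can be scripted in a few lines. The sketch below is only a starting point: `rough_token_count` is a crude, hypothetical proxy that counts word runs and punctuation marks, so real measurements should pass in the tokenizer of the model you actually use via the `count_tokens` parameter. Latency, answer quality, and cost need real model calls and are not covered here.

```python
import json
import re

def rough_token_count(text):
    """Crude proxy for tokens: word runs plus individual punctuation marks."""
    return len(re.findall(r"\w+|[^\w\s]", text))

def compare_formats(json_text, toon_text, count_tokens=rough_token_count):
    """Report token counts for both encodings and the relative saving."""
    json_tokens = count_tokens(json_text)
    toon_tokens = count_tokens(toon_text)
    saved_pct = round(100 * (json_tokens - toon_tokens) / json_tokens, 1)
    return {
        "json_tokens": json_tokens,
        "toon_tokens": toon_tokens,
        "saved_pct": saved_pct,
    }

users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
    {"id": 3, "name": "Charlie", "role": "user"},
]
json_text = json.dumps({"users": users})
toon_text = "users[3]{id,name,role}:\n  1,Alice,admin\n  2,Bob,user\n  3,Charlie,user"

result = compare_formats(json_text, toon_text)
print(result)
```

Running the same comparison on a sample of your real payloads, rather than a toy table, is what answers the question for a specific LLM step.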

# Final Thoughts

TOON is not something you need to use everywhere.

It’s a targeted optimization for one specific problem: wasting tokens on repeated JSON structure inside LLM prompts. If your pipeline passes lots of repeated structured records into a model, TOON is worth testing. If your payloads are small, irregular, or heavily nested, JSON may still be the better choice.

The smartest way to adopt it is simple: keep JSON where JSON already works well, use TOON where you are packing large structured inputs into prompts, and benchmark the results on your own tasks before committing to it.

Kanwal Mehreen is a machine learning engineer and a technical writer with a profound passion for data science and the intersection of AI with medicine. She co-authored the ebook “Maximizing Productivity with ChatGPT”. As a Google Generation Scholar 2022 for APAC, she champions diversity and academic excellence. She’s also recognized as a Teradata Diversity in Tech Scholar, Mitacs Globalink Research Scholar, and Harvard WeCode Scholar. Kanwal is an ardent advocate for change, having founded FEMCodes to empower women in STEM fields.
