TL;DR

- RAG retrieved the right document. The LLM still contradicted it. That’s the failure this system catches.

- Five failure patterns: numeric contradictions, fake citations, negation flips, answer drift, confident-but-ungrounded responses.

- Three healing strategies fix bad answers in place before users see them.

- No external APIs, no LLM judge, no embeddings model: pure Python, under 50ms.

- 70 tests: every production failure mode I found has a named assertion.

was lying (why I built this)

I’m building a RAG-powered assistant for EmiTechLogic, my tech education platform. The goal is simple: a learner asks a question, the system pulls from my tutorials and articles, and answers based on that content. The LLM output shouldn’t be generic. It should reflect my content, my explanations, what I’ve actually written.

Before putting that in front of real learners, I needed to test it properly.

What I found was not what I expected. The retrieval was working fine. The right document was coming back. But the LLM was producing answers that directly contradicted what it had just retrieved. No errors, no crashes. Just a confident, fluent answer that was factually wrong.

I started researching how common this failure is in production RAG systems. The more I looked, the more I found. This isn’t a rare edge case or a bug you can patch. It’s a structural property of how RAG works.

The model reads the right document and still generates something different. The reasons are not fully understood: attention drift, training biases, conflicting signals in context. What matters in practice is that it happens regularly, it’s not predictable, and most systems have nothing to catch it before the user sees it.

Here is what makes it more dangerous than standard LLM hallucination. With a plain LLM, a wrong answer is at least plausibly uncertain. The user knows the model is working from training data and could be wrong. With RAG, the model read the correct source and still contradicted it. The user has every reason to trust the answer. The system looks like it’s doing exactly what it was designed to do.

The model isn’t just failing; it’s lying with a straight face. It produces fluent, authoritative responses that look exactly like the truth, right up until the moment they break your system.

I spent months researching documented production failures, reproducing them in code, and building a system to catch them before they reach users. This article is the result of that work.

All results are from real runs of the system on Python 3.12, CPU-only, no GPU, except where explicitly noted as calculated from known inputs.

Full code: https://github.com/Emmimal/hallucination-detector/

Before anything else: here’s what the system produces

    ============================= test session starts =============================
    collected 70 items

    TestConfidenceScorer 5 passed
    TestFaithfulnessScorer 5 passed
    TestContradictionDetector 7 passed
    TestEntityHallucinationDetector 5 passed
    TestAnswerDriftMonitor 6 passed
    TestHallucinationDetector 24 passed
    TestQualityScore 18 passed

    ============================= 70 passed =============================

70 tests. Every named failure mode I’ve encountered has an assertion. That number is the point of this article, not a footnote at the end.

Where most RAG systems fail

Most RAG tutorials stop at: retrieve documents, stuff them into a prompt, call the model.

That works until it doesn’t.

The whole promise of retrieval-augmented generation is grounding. Give the model real documents and it will use them. In practice, RAG creates a failure mode that is more dangerous than vanilla hallucination, not less.

This isn’t about conflicting retrieved documents. That is a separate problem I covered in Your RAG System Retrieves the Right Data — But Still Produces Wrong Answers. This is about a model that retrieved exactly the right document and still answered incorrectly.

This happens for reasons still not fully understood: attention mechanisms drifting to irrelevant tokens, training biases toward certain phrasings, the model averaging across conflicting signals in context. What matters for production is that it happens regularly, it’s not predictable, and the only reliable way to catch it is a check on the final answer before it leaves your system.

When I was going through documented production failures, five patterns kept showing up.

The first is confident wrong answers. The model uses phrases like “definitely” or “clearly stated” while asserting something that has no basis in the retrieved source. The assertive language is the problem: it removes any signal that the answer could be wrong.

The second is factual contradictions. The context says 14 days, the answer says 30. The context says annual billing, the answer says monthly. The source was there. The model just ignored it.

The third is hallucinated entities. Person names, paper citations, organization names that don’t appear anywhere in the retrieved documents. The model invents them and presents them as fact.

The fourth is answer drift. The same question gets a different answer over time. This one is silent: no error, no flag, nothing. It usually gets caught in a financial audit or a user complaint, not by the system itself.

The fifth is what I call confident but unfaithful. The model sounds certain throughout the answer, but most of what it says can’t be traced back to any retrieved source. High confidence, low grounding. That combination is the most dangerous pattern I found.

Most detection frameworks flag these and hand them back to your application code. None of them fix it. That’s the gap this system closes.

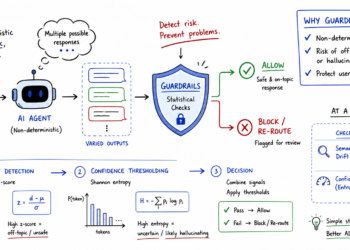

The architecture: detect, score, heal, route

    retrieve(query)
      → generate(query, chunks)
      → detector.inspect(query, chunks, answer)
      → QualityScore.compute(report)
      → healer.heal(...)
      → ACCEPT / HEALED_ACCEPT / FALLBACK / DISCARD

I wanted this to run inside a normal FastAPI request without adding external dependencies or blowing the latency budget. No API calls, no embeddings model, no LLM judge. The whole inspect() call runs under 50ms with spaCy, under 10ms on the regex fallback. That was the constraint I designed around.

Check 1: Confidence scoring

In a perfect world, I’d use logprobs to see how sure the model is about its tokens. But in production, most APIs don’t make these easy to get or aggregate.

I needed a poor man’s logprobs. I built the ConfidenceScorer to look for linguistic overconfidence: assertion markers like “definitely” or “guaranteed” weighed against uncertainty signals like “might” or “I think”. Simple phrase counting, normalized by answer length. It sounds too simple to work, but it is surprisingly effective at catching the model when it’s bluffing.

    def score(self, answer: str) -> float:
        al = answer.lower()
        words = len(answer.split()) or 1
        # Marker hits per 10 words, each side capped at 1.0
        high = sum(len(p.findall(al)) for p in self._HIGH_RE)
        low = sum(len(p.findall(al)) for p in self._LOW_RE)
        return max(0.0, min(1.0,
            0.5 + min(high / (words / 10), 1.0) * 0.5
                - min(low / (words / 10), 1.0) * 0.5
        ))

This check fires when confidence exceeds 0.75 AND faithfulness falls below 0.50. That combination is what I kept seeing in the failures I researched: the model sounding completely certain while most of what it says can’t be traced back to the retrieved source. High confidence masks the problem. That’s what makes it hard to catch.
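To make the formula concrete, here is a minimal self-contained sketch of the same scoring rule. The marker lists and the class name `ConfidenceSketch` are illustrative assumptions, not the lists shipped in the repository.

```python
import re

# Hypothetical marker lists for illustration; the real scorer's lists may differ.
HIGH_MARKERS = ["definitely", "guaranteed", "clearly stated", "without a doubt"]
LOW_MARKERS = ["might", "possibly", "i think", "not sure"]


class ConfidenceSketch:
    """Toy stand-in for ConfidenceScorer using the formula shown above."""

    _HIGH_RE = [re.compile(r"\b" + re.escape(m) + r"\b") for m in HIGH_MARKERS]
    _LOW_RE = [re.compile(r"\b" + re.escape(m) + r"\b") for m in LOW_MARKERS]

    def score(self, answer: str) -> float:
        al = answer.lower()
        words = len(answer.split()) or 1  # avoid division by zero on empty input
        high = sum(len(p.findall(al)) for p in self._HIGH_RE)
        low = sum(len(p.findall(al)) for p in self._LOW_RE)
        return max(0.0, min(1.0,
            0.5 + min(high / (words / 10), 1.0) * 0.5
                - min(low / (words / 10), 1.0) * 0.5))


scorer = ConfidenceSketch()
assertive = scorer.score("The refund window is definitely 30 days, guaranteed.")
hedged = scorer.score("I think the refund window might be 30 days.")
neutral = scorer.score("The refund window is 14 days.")
```

An answer with no markers on either side sits at the 0.5 midpoint, so only clearly assertive wording can cross the 0.75 threshold.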

Check 2: Faithfulness scoring

The FaithfulnessScorer splits the answer into factual claim sentences, then checks what fraction of each claim’s content words appear in the combined context.

    def _claim_grounded(self, claim: str, context_lower: str) -> bool:
        kw = _key_words(claim)
        if not kw:
            return True
        return sum(1 for w in kw if w in context_lower) / len(kw) >= self.overlap_threshold

A claim is grounded if at least 40% of its key words appear in the source. Score = grounded claims / total claims.

I settled on 40% because it gives enough room for natural paraphrasing without letting fabrication through. If you are working in legal or medical contexts, start at 0.70: paraphrasing itself is a risk there. Questions get a free pass at 1.0 since they don’t assert anything.
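The end-to-end calculation can be sketched as follows. The stop-word list, the tokenizer, and the sentence splitter here are simplifying assumptions of mine; the repository’s `_key_words` helper may work differently.

```python
import re

# Hypothetical stop-word list; the real _key_words helper may use another.
STOP_WORDS = {"the", "a", "an", "is", "are", "was", "of", "to", "in", "on",
              "and", "or", "for", "with", "your", "you", "can", "at", "any", "it"}


def key_words(claim: str) -> list[str]:
    """Lowercased alphanumeric tokens with stop words removed."""
    return [w for w in re.findall(r"[a-z0-9]+", claim.lower())
            if w not in STOP_WORDS]


def claim_grounded(claim: str, context_lower: str, overlap_threshold: float = 0.40) -> bool:
    kw = key_words(claim)
    if not kw:
        return True
    # Substring test, as in the snippet above: "day" would also match "days".
    hits = sum(1 for w in kw if w in context_lower)
    return hits / len(kw) >= overlap_threshold


def faithfulness(answer: str, chunks: list[str], overlap_threshold: float = 0.40) -> float:
    context_lower = " ".join(chunks).lower()
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    claims = [s for s in sentences if not s.endswith("?")]  # questions assert nothing
    if not claims:
        return 1.0
    grounded = sum(claim_grounded(c, context_lower, overlap_threshold) for c in claims)
    return grounded / len(claims)


chunks = ["Refunds are accepted within 14 days of purchase."]
fully_grounded = faithfulness("Refunds are accepted within 14 days.", chunks)
half_grounded = faithfulness(
    "Refunds are accepted within 14 days. Shipping takes three weeks via courier.",
    chunks,
)
```

The second answer scores 0.5 because one of its two claims shares no key words with the context.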

Check 3: Contradiction detection

When I was building this check, I needed to decide what actually counts as a contradiction. I narrowed it down to three patterns that showed up most often in the failures I looked at.

The first is numeric contradictions. The answer uses a number that is not in any retrieved chunk, and the same topic in the context has a different number nearby. This is the simplest case and the most common.

The second is negation flips. The context says “does not support X”, the answer says “supports X”. I ended up matching eight negation patterns bidirectionally (does not, cannot, never, no, isn’t, won’t, don’t, didn’t) against their positive equivalents. Getting this right took more iteration than I expected.

The third is temporal contradictions. Same unit, different value, same topic. Context says 14 days, answer says 30 days. That’s it.
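A bidirectional negation check could look roughly like the sketch below. The cue list mirrors the eight patterns named above, but the matching logic (take the word after the cue, then look for an un-negated use of it on the other side) is my own simplification, not the detector’s actual implementation.

```python
import re

# Negation cues from the text; the matching strategy below is an assumption.
NEGATION = r"(?:does not|cannot|never|no|isn't|won't|don't|didn't)"
NEGATED = re.compile(rf"\b{NEGATION}\s+(\w+)")


def _normalize(text: str) -> str:
    # Lowercase and map curly apostrophes to straight ones.
    return text.lower().replace("\u2019", "'")


def negated_terms(text: str) -> set[str]:
    """Words directly following a negation cue: 'does not support' -> 'support'."""
    return set(NEGATED.findall(_normalize(text)))


def asserts_positively(term: str, text: str) -> bool:
    """True if `term` (or a word starting with it) occurs without a cue right before it."""
    text = _normalize(text)
    for m in re.finditer(rf"\b{re.escape(term)}\w*", text):
        before = text[:m.start()].rstrip()
        if not re.search(rf"\b{NEGATION}$", before):
            return True
    return False


def negation_flip(context: str, answer: str) -> bool:
    """Check both directions: one side negates what the other side asserts."""
    for negated_in, other in ((context, answer), (answer, context)):
        for term in negated_terms(negated_in):
            if asserts_positively(term, other):
                return True
    return False


context = "The Basic plan does not support SSO."
flipped = negation_flip(context, "The Basic plan supports SSO.")
consistent = negation_flip(context, "The Basic plan does not support SSO.")
```

Prefix matching (`support` also matches `supports`) is a crude substitute for stemming and will over-match on some words.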

One implementation detail that caused me problems early on: the number extractor has to exclude letter-prefixed identifiers:

    def _extract_numbers(text: str) -> set[str]:
        # SKU-441 → excluded (letter+hyphen prefix means it is a label)
        # 5-7 days → preserved (digit+hyphen is a numeric range)
        # $49.99 → preserved
        return set(re.findall(
            r'(?

Without this rule, every product code containing a number triggers a false positive. SKU-441 contains 441, but that is a label, not a price.
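One regex that satisfies the three cases listed in the comments is sketched below. This pattern is my own reconstruction of the behaviour, not necessarily the one used in the repository, and `unsupported_numbers` is a hypothetical helper showing how the extractor feeds the numeric check.

```python
import re

# Assumed pattern: reject digits preceded by "<letter>-" (labels such as SKU-441)
# and digits that start mid-token, then accept an optional decimal part.
_NUMBER = re.compile(r"(?<![A-Za-z]-)(?<![\w.])\d+(?:\.\d+)?")


def extract_numbers(text: str) -> set[str]:
    return set(_NUMBER.findall(text))


def unsupported_numbers(answer: str, chunks: list[str]) -> set[str]:
    """Numbers that appear in the answer but in none of the retrieved chunks."""
    context_numbers = set()
    for chunk in chunks:
        context_numbers |= extract_numbers(chunk)
    return extract_numbers(answer) - context_numbers


found = extract_numbers("SKU-441 ships in 5-7 days for $49.99")
missing = unsupported_numbers(
    "The Pro plan costs $10 per month.",
    ["The Pro plan costs $120 per year, billed annually."],
)
```

The second lookbehind matters: without it the engine would skip the `4` in `SKU-441` and then happily match `41`.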

Check 4: Entity hallucination detection

This check extracts named entities from the answer (people, organizations, citations) and verifies that each one appears in at least one retrieved context chunk.

The shift from regex to spaCy NER was not optional. Here is what the two approaches produce on the same answer:

    Answer: "The seminal work was published by Dr. James Harrison and
    Dr. Wei Liu in arXiv:2204.09876, at DeepMind Research Institute."
    Context: "Recent studies show transformer models achieve 94% accuracy on NER tasks."

    ───────────────────────────────────────────────────────────────────
    REGEX NER (v1) — false positives on noun phrases
    ───────────────────────────────────────────────────────────────────
    Flagged as hallucinated:
      Dr. James Harrison (person) ✓ correct
      Dr. Wei Liu (person) ✓ correct
      arXiv:2204.09876 (citation) ✓ correct
      Scaling Named (person) ✗ FALSE POSITIVE
      Entity Recognition (person) ✗ FALSE POSITIVE
      NER Tasks (org) ✗ FALSE POSITIVE

    ───────────────────────────────────────────────────────────────────
    spaCy en_core_web_sm (production) — clean output
    ───────────────────────────────────────────────────────────────────
    Flagged as hallucinated:
      Dr. James Harrison (person) ✓ correct
      Dr. Wei Liu (person) ✓ correct
      arXiv:2204.09876 (citation) ✓ correct
      DeepMind Research Institute (org) ✓ correct

In v1, my regex fallback was a bit too aggressive: it flagged phrases like ‘Named Entity Recognition’ as person names just because of the capitalization. Upgrading to spaCy’s statistical model fixed this. It actually understands that ‘Scaling Named’ is a syntactic pair, not a human being. This single change killed the false positives that had been making Check 4 almost unusable in my earlier tests.
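Whichever extractor produces the entities, the verification step itself is a plain containment test. The sketch below shows that step only, with a regex for arXiv-style citation IDs; both function names are hypothetical, and in the spaCy path the `entities` list would come from the model’s `doc.ents` instead.

```python
import re

# arXiv identifiers of the form arXiv:YYMM.NNNNN (4 or 5 digits after the dot).
ARXIV_ID = re.compile(r"arXiv:\d{4}\.\d{4,5}")


def ungrounded_entities(entities: list[str], chunks: list[str]) -> list[str]:
    """Entities that appear in none of the retrieved chunks (case-insensitive)."""
    context_lower = " ".join(chunks).lower()
    return [e for e in entities if e.lower() not in context_lower]


def ungrounded_citations(answer: str, chunks: list[str]) -> list[str]:
    return ungrounded_entities(ARXIV_ID.findall(answer), chunks)


chunks = ["Recent studies show transformer models achieve 94% accuracy on NER tasks."]
answer = ("The seminal work was published by Dr. James Harrison and "
          "Dr. Wei Liu in arXiv:2204.09876, at DeepMind Research Institute.")

fake_citations = ungrounded_citations(answer, chunks)
fake_names = ungrounded_entities(["Dr. James Harrison", "NER"], chunks)
```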

Check 5: The part nobody monitors

This was the last check I built and the one I almost cut.

I was not sure drift detection would be worth the complexity. Three months into testing, it caught a pricing endpoint silently returning a different value after a retrieval index rebuild. None of the other four checks fired. That was enough to keep it.

Drift isn’t about whether a single answer is correct. It’s about whether your system is behaving consistently over time.

The AnswerDriftMonitor stores lightweight fingerprints of every answer per question in SQLite:

    def _fingerprint(self, answer: str) -> dict:
        numbers = sorted(set(re.findall(r'\b\d+(?:\.\d+)?(?:%|k|m)?\b', answer)))
        words = answer.lower().split()
        key_words = [w for w in words if len(w) > 5][:20]
        pos = sum(1 for w in words if w in self._POS)
        neg = sum(1 for w in words if w in self._NEG)
        return {
            "numbers": numbers,
            "key_words": key_words,
            "polarity": "positive" if pos > neg else ("negative" if neg > pos else "neutral"),
            "length_bucket": len(answer) // 100,
        }

I didn’t want to store the full answer text in the database. That gets large fast and creates privacy surface area I didn’t need. So the fingerprint stores only what is necessary to detect meaningful change: the numbers in the answer, the top 20 content words, the overall polarity, and a length bucket. This is enough to catch real drift without the database growing unbounded.

On each new answer, the monitor compares it against the last 10 responses for that question. If the distance goes above 0.35, that’s drift. If average similarity drops below 0.65, that’s drift too. Both thresholds came from testing, not from theory.
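The text does not spell out the similarity measure, so the sketch below is one plausible reading: Jaccard overlap on the set-valued fingerprint fields, exact match on the others, with weights I picked for illustration. It treats "distance" as distance to the most recent answer and "average similarity" as the mean over the window; both interpretations are assumptions.

```python
def jaccard(a, b) -> float:
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # two empty sets count as identical
    return len(a & b) / len(a | b)


def similarity(fp_a: dict, fp_b: dict) -> float:
    """Weighted blend of fingerprint fields; the weights are illustrative guesses."""
    return (
        0.4 * jaccard(fp_a["numbers"], fp_b["numbers"])
        + 0.4 * jaccard(fp_a["key_words"], fp_b["key_words"])
        + 0.1 * (fp_a["polarity"] == fp_b["polarity"])
        + 0.1 * (fp_a["length_bucket"] == fp_b["length_bucket"])
    )


def detect_drift(new_fp, history, threshold=0.35, window=10, min_history=3):
    """Return (drifted, distance_to_latest) for a new fingerprint."""
    recent = history[-window:]
    if len(recent) < min_history:
        return False, 0.0  # not enough history to judge
    latest_distance = 1.0 - similarity(new_fp, recent[-1])
    avg_similarity = sum(similarity(new_fp, old) for old in recent) / len(recent)
    drifted = latest_distance > threshold or avg_similarity < 1.0 - threshold
    return drifted, latest_distance


stable = {"numbers": ["49.99"], "key_words": ["standard", "shipping"],
          "polarity": "neutral", "length_bucket": 0}
changed = dict(stable, numbers=["39.99"])

no_drift, _ = detect_drift(stable, [stable] * 5)
drift, delta = detect_drift(changed, [stable] * 5)
```

With these weights a changed price alone moves the distance to 0.40, just past the 0.35 threshold.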

The critical production detail: this uses SQLite, not an in-memory dictionary. An in-memory structure resets on every process restart. In a real deployment with rolling restarts, drift detection effectively never fires. SQLite persists across deploys. You are watching for degradation over days, not minutes.

    # The test that caught a real mistake during staging
    def test_persistence_across_instances(self, tmp_path):
        db_file = str(tmp_path / "drift.db")
        mon1 = AnswerDriftMonitor(db_path=db_file)
        for _ in range(5):
            mon1.record(question, stable_answer)

        mon2 = AnswerDriftMonitor(db_path=db_file)  # fresh instance, same file
        detected, delta = mon2.record(question, drifted_answer)
        assert detected  # history from mon1 is still there

This test exists because my staging environment was restarting every 30 minutes and the drift monitor had been blind the entire time.
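The persistence property that test relies on can be sketched with the standard-library `sqlite3` module. The class name `FingerprintStore`, the table name, and the column names are all hypothetical; the real monitor’s schema is not shown in this article.

```python
import json
import os
import sqlite3
import tempfile


class FingerprintStore:
    """Hypothetical SQLite-backed history; schema names are illustrative."""

    def __init__(self, db_path: str):
        self._conn = sqlite3.connect(db_path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS fingerprints ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "question TEXT NOT NULL, "
            "fingerprint TEXT NOT NULL)"
        )
        self._conn.commit()

    def append(self, question: str, fingerprint: dict) -> None:
        self._conn.execute(
            "INSERT INTO fingerprints (question, fingerprint) VALUES (?, ?)",
            (question, json.dumps(fingerprint)),
        )
        self._conn.commit()

    def last(self, question: str, limit: int = 10) -> list[dict]:
        """Most recent fingerprints for a question, oldest first."""
        rows = self._conn.execute(
            "SELECT fingerprint FROM fingerprints WHERE question = ? "
            "ORDER BY id DESC LIMIT ?",
            (question, limit),
        ).fetchall()
        return [json.loads(r[0]) for r in reversed(rows)]

    def close(self) -> None:
        self._conn.close()


# A second instance on the same file sees the first instance's history.
db_file = os.path.join(tempfile.mkdtemp(), "drift.db")
first = FingerprintStore(db_file)
first.append("price of SKU-441?", {"numbers": ["49.99"]})
first.close()

second = FingerprintStore(db_file)
history = second.last("price of SKU-441?")
other = second.last("a different question")
second.close()
```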

The self-healing layer

The HallucinationHealer attempts one of three deterministic fix strategies, then re-inspects the result. If the healed answer still fails re-inspection, it serves a safe decline instead of delivering a wrong answer.

Healing priority order:

Strategy A: Contradiction patch

Strategy A is a direct fix: if the wrong number is in the answer, swap it for the right one from the context. It sounds simple, but billing cycle normalization was a nightmare.

The issue was the order of operations. If I ran the patterns in the wrong sequence, I’d get messy output like ‘annually subscription’ because the noun was replaced before the adjective could be adjusted. To fix this, I made the system detect the overall direction first (annual vs. monthly) and then apply a single ordered pass. It handles specific patterns first before falling back to adjectives. This solved the grammar drift that was making the ‘self-healing’ part of the system look broken.
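A single ordered pass of that kind might look like the sketch below. The rule list is a hypothetical subset covering only the monthly-to-annual direction, and the direction detector is deliberately simplistic.

```python
import re

# Ordered most-specific first; a hypothetical subset of the rules.
TO_ANNUAL = [
    (r"\bper month\b", "per year"),
    (r"\bmonthly subscription\b", "annual subscription"),
    (r"\bbilled monthly\b", "billed annually"),
    (r"\bmonthly\b", "annual"),  # generic adjective fallback, applied last
]


def detect_direction(context: str):
    """Decide which billing cycle the context describes, if any."""
    c = context.lower()
    if re.search(r"\b(annual|annually|per year|yearly)\b", c):
        return "annual"
    if re.search(r"\b(monthly|per month)\b", c):
        return "monthly"
    return None


def normalize_billing(answer: str, context: str):
    """Return (normalized_answer, changes); only monthly -> annual is sketched."""
    changes = []
    if detect_direction(context) != "annual":
        return answer, changes
    for pattern, replacement in TO_ANNUAL:
        answer, count = re.subn(pattern, replacement, answer)
        if count:
            changes.append((pattern, replacement))
    return answer, changes


healed, changes = normalize_billing(
    "The Pro plan costs $120 per month, billed monthly. "
    "You can cancel your monthly subscription at any time.",
    "The Pro plan costs $120 per year, billed annually.",
)
```

If the generic `monthly` rule ran first, "billed monthly" would become "billed annual", which is the kind of grammar drift the ordering is meant to prevent.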

    Before: "The Pro plan costs $10 per month, billed monthly.
    You can cancel your monthly subscription at any time."

    Context: "The Pro plan costs $120 per year, billed annually."

    After: "The Pro plan costs $120 per year, billed annually.
    You can cancel your annual subscription at any time."

    Changes logged:
      — Replaced '$10' → '$120'
      — Normalized billing: '\bper month\b' → 'per year'
      — Normalized billing: '\bmonthly subscription\b' → 'annual subscription'
      — Normalized billing: '\bbilled monthly\b' → 'billed annually'
      — Confidence recalibrated: 0.50 → 0.65 (contradiction_patch)

Strategy B: Entity scrub

Strategy B is simpler. If the answer contains hallucinated entities, I remove the sentences that contain them. The part I thought about carefully was what to tell the user. Silently deleting sentences felt wrong: the user would get a shorter answer with no explanation. So I added a transparency note whenever something gets removed, so they know the answer was trimmed and why.

    for sent in sentences:
        if any(name.lower() in sent.lower() for name in fake_names):
            removed.append(sent)
        else:
            clean.append(sent)

    if removed:
        result += (
            " Note: specific names or references could not be verified "
            "in the source documents and were omitted."
        )

If every sentence in the answer contains a hallucinated entity, scrubbing produces nothing. In that case the healer falls through to safe decline rather than returning an empty response.
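Wrapped into a complete function, the scrub and its empty-result fallback could be sketched like this. The sentence splitter is a naive assumption (it will break on abbreviations such as "Dr."), and returning `None` to signal "fall through to safe decline" is my own convention.

```python
import re

NOTE = (" Note: specific names or references could not be verified "
        "in the source documents and were omitted.")


def entity_scrub(answer: str, fake_names: list[str]):
    """Return (healed_answer, removed_sentences); healed_answer is None if nothing survives."""
    # Naive splitter: breaks after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    clean, removed = [], []
    for sent in sentences:
        if any(name.lower() in sent.lower() for name in fake_names):
            removed.append(sent)
        else:
            clean.append(sent)
    if not clean:
        return None, removed  # caller should serve a safe decline
    result = " ".join(clean)
    if removed:
        result += NOTE
    return result, removed


healed, removed = entity_scrub(
    "Transformer models reach 94% accuracy on NER tasks. "
    "This was shown by James Harrison at DeepMind.",
    ["James Harrison"],
)
nothing_left, _ = entity_scrub("James Harrison proved it.", ["James Harrison"])
```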

Strategy C: Grounding rewrite

When faithfulness is below 0.30, I rebuild the answer from scratch using the top-ranked context sentences by keyword overlap with the question. The prefix matters here. I didn’t want to use something like “Based on available information” because that tells the user nothing about where the answer is actually coming from. So the prefix is chosen based on what the context actually contains:

    if re.search(r'\$\d+|\d+%|\d+\s*(day|month|year)', combined_lower):
        prefix = "According to the provided data:"
    elif any(w in combined_lower for w in ("policy", "guideline", "procedure", "rule")):
        prefix = "Per the source documentation:"
    else:
        prefix = "The source indicates that:"

“Based on available information” tells the user nothing about where the information came from. These three prefixes do.

Confidence recalibration by strategy

After healing, confidence is recalibrated based on what was done, not re-run blindly on the healed text:

| Strategy | Recalibration | Rationale |
|---|---|---|
| contradiction_patch | original + 0.15, capped at 0.80 | Deterministic fix from a verified source, so it earns higher confidence |
| entity_scrub | original × 0.85 | Removed bad sentences; remaining text is still the model’s own output |
| grounding_rewrite | Re-run ConfidenceScorer on healed text | Hedging prefix does the work: “According to…” scores lower naturally |
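The three rules in the table reduce to a few lines. In this sketch the function name and the `rescored` parameter (the ConfidenceScorer output on the healed text) are hypothetical.

```python
def recalibrate(strategy: str, original: float, rescored=None) -> float:
    """Apply the per-strategy recalibration rules from the table above."""
    if strategy == "contradiction_patch":
        return min(original + 0.15, 0.80)
    if strategy == "entity_scrub":
        return original * 0.85
    if strategy == "grounding_rewrite":
        if rescored is None:
            raise ValueError("grounding_rewrite needs the re-scored confidence")
        return rescored
    return original  # no healing applied


billing_case = recalibrate("contradiction_patch", 0.50)
confident_lie = recalibrate("contradiction_patch", 1.00)
```

Note that the 0.80 cap can lower confidence as well as raise it: an answer that started at 1.00 ends at 0.80, while one that started at 0.50 ends at 0.65.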

Healing results across all five scenarios

    Scenario 1 — Confident lie (30 days → 14 days)
      Initial: CRITICAL → contradiction_patch → Final: LOW
      Confidence: 1.00 → 0.80

    Scenario 2 — Hallucinated citation: Dr. James Harrison, arXiv:2204.09876
      The model invented two researchers and a paper citation. None of them appear anywhere in the retrieved context.
      Expected outcome: grounding_rewrite rebuilds the answer from context —
      faithfulness of 0.00 fires the first priority check before entities
      are considered.

    Scenario 3 — Billing contradiction ($10/month → $120/year)
      Initial: CRITICAL → contradiction_patch → Final: LOW
      Confidence: 0.50 → 0.65

    Scenario 4 — Answer drift (SKU-441 price diverged)
      Initial: CRITICAL → grounding_rewrite → Final: LOW
      Confidence: 0.50 → 0.50

    Scenario 5 — Clean answer
      Initial: LOW → no_healing_needed → Final: LOW
      Confidence: 0.62 → 0.62 (unchanged)

Two things are worth noting. Scenario 2 triggers grounding_rewrite, not entity_scrub, because faithfulness was 0.00, which fires the first priority check before entities are considered. Scenario 4 is CRITICAL rather than MEDIUM because the drifted answer also contains a numeric contradiction ($39.99 vs $49.99), so drift and contradiction together push it to CRITICAL. These are real outputs from the demo, not illustrative summaries.

Running it yourself

Clone the repo and run all five scenarios:

    git clone https://github.com/Emmimal/hallucination-detector.git
    cd hallucination-detector

Run a single scenario with python demo.py --scenario 3. Here is what Scenario 3 (the billing contradiction) produces end to end:

    ── DETECT ──────────────────────────────────────────────────────
    Question : How much does the Pro plan cost?
    Risk : CRITICAL
    Confidence: 0.50
    Faithful : 0.50
    Contradict: True — Numeric contradiction: answer uses '10' but
    context shows '120' near 'pro'
    Fake names: []
    Drift : False (delta=0.00)
    Triggered : ['contradiction']
    Latency : 11.9ms

    ── SCORE ───────────────────────────────────────────────────────
    Score : 0.40 → HEALED_ACCEPT
    Components:
      faithful 0.20 / 0.40
      consistent 0.00 / 0.30
      confidence 0.10 / 0.20
      latency 0.10 / 0.10

    ── HEAL ────────────────────────────────────────────────────────
    Strategy : contradiction_patch
    Initial risk : CRITICAL → Final risk : LOW
    Before: The Pro plan costs $10 per month, billed monthly.
    You can cancel your monthly subscription at any time.
    After: The Pro plan costs $120 per year, billed annually.
    You can cancel your annual subscription at any time.
    Changes:
      — Replaced '$10' → '$120'
      — Normalized billing: '\bper month\b' → 'per year'
      — Normalized billing: '\bmonthly subscription\b' → 'annual subscription'
      — Normalized billing: '\bbilled monthly\b' → 'billed annually'
      — Confidence recalibrated: 0.50 → 0.65 (contradiction_patch)

CRITICAL risk in, LOW risk out. The wrong answer is fixed in place, the billing cycle language is normalized throughout, and every change is logged. The user gets a corrected answer. You get a full record of exactly what was wrong and what was changed.

Quality scoring and delivery routing

Pass/fail isn’t enough for a real deployment. You need to know not just whether an answer failed, but how badly, and what to do about it.

QualityScore computes a weighted composite that routes every answer to one of four delivery tiers:

    final_score = 0.40 × faithfulness
                + 0.30 × consistency (0.0 if contradiction found)
                + 0.20 × confidence (calibrated against faithfulness level)
                + 0.10 × latency_score (non-linear penalty curve)
                − 0.20 × drift_penalty (explicit deduction, applied last)

| Routing | Condition | What you ship |
|---|---|---|
| ACCEPT | score ≥ 0.75, no healing | Original answer |
| HEALED_ACCEPT | healing applied, re-inspection passed | Healed answer |
| FALLBACK | score < 0.50, not healed | Retry or decline |
| DISCARD | healing served safe decline | Safe decline message |

Log every HEALED_ACCEPT separately from ACCEPT. They are your signal for what the model consistently gets wrong.

Latency penalty: Full marks under 20ms (pure Python, regex NER), linear decay from 0.10 to 0.05 across the 20–50ms band (spaCy running), steep decay toward 0.00 at 200ms. The break at 50ms reflects a real production constraint: that is where spaCy NER starts showing up in a typical FastAPI latency budget.
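As a piecewise function, the latency component could be sketched like this. The first two bands follow the description directly; the shape between 50ms and 200ms is not specified beyond "steep decay toward 0.00", so the linear ramp used there is a placeholder assumption.

```python
def latency_component(ms: float) -> float:
    """Weighted latency score in the range 0.00 to 0.10."""
    if ms < 20:
        return 0.10                              # full marks
    if ms <= 50:
        return 0.10 - 0.05 * (ms - 20) / 30      # linear: 0.10 at 20ms, 0.05 at 50ms
    if ms < 200:
        return 0.05 * (200 - ms) / 150           # assumed ramp down to 0.00 at 200ms
    return 0.0


demo_run = latency_component(11.9)   # the Scenario 3 run shown earlier
mid_band = latency_component(35)
```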

Drift deduction: Applied last. Drift is a sentinel for retrieval pipeline health. A currently grounded answer from a degrading pipeline should still route to fallback, because past inconsistency predicts future unreliability. The −0.20 is applied after all other components so it can push any answer below the threshold regardless of current faithfulness.
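The composite and the routing table can be checked against the Scenario 3 numbers. In this sketch the confidence and latency components are passed in already weighted, because the calibration step is not spelled out here; sending the unspecified 0.50–0.75 band to FALLBACK is also my assumption.

```python
def final_score(faithfulness: float, contradiction: bool,
                confidence_component: float, latency_component: float,
                drift: bool) -> float:
    score = 0.40 * faithfulness
    score += 0.30 * (0.0 if contradiction else 1.0)
    score += confidence_component   # already weighted, 0.00 to 0.20
    score += latency_component      # already weighted, 0.00 to 0.10
    if drift:
        score -= 0.20               # explicit deduction, applied last
    return max(0.0, score)          # floored at zero


def route(score: float, healed: bool = False, safe_decline: bool = False) -> str:
    if safe_decline:
        return "DISCARD"
    if healed:
        return "HEALED_ACCEPT"
    if score >= 0.75:
        return "ACCEPT"
    return "FALLBACK"  # assumption: anything unhealed below 0.75 falls back


# Scenario 3: faithfulness 0.50, contradiction found, no drift.
scenario_3 = final_score(0.50, True, 0.10, 0.10, False)
scenario_3_route = route(scenario_3, healed=True)
```

The components add up to 0.20 + 0.00 + 0.10 + 0.10 = 0.40, matching the SCORE section of the demo output.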

Performance characteristics

Measured on Python 3.12, CPU only, no GPU:

| Operation | Latency | Notes |
|---|---|---|
| Confidence scoring | < 1ms | Regex pattern matching |
| Faithfulness scoring | ~2ms | Keyword overlap calculation |
| Contradiction detection | ~1ms | Regex + number extraction |
| Entity detection (spaCy) | ~45ms | en_core_web_sm NER |
| Entity detection (regex) | < 1ms | Fallback path, no spaCy required |
| Drift record + check | ~3ms | SQLite write + similarity query |
| Full inspect(), regex NER | < 10ms | Pure Python path |
| Full inspect(), spaCy NER | < 50ms | Production path |

If you need sub-10ms end-to-end, the regex NER fallback is a one-line config change. You trade some entity detection precision for latency. For most customer-facing deployments the spaCy path at under 50ms adds no perceptible delay.

The tests: 70 cases, not demos

Every named production failure has an assertion. Here is what those 70 tests cover:

    TestConfidenceScorer (5 tests)
      — assertive answer scores high
      — hedged answer scores low
      — score bounded 0.0–1.0 across all inputs
      — empty string handled
      — unicode answer handled

    TestFaithfulnessScorer (5 tests)
      — grounded answer scores ≥ 0.80
      — fabricated answer scores ≤ 0.50 with ungrounded list
      — empty answer returns perfect score
      — question-only answer excluded from claims
      — strict threshold config produces lower scores

    TestContradictionDetector (7 tests)
      — numeric contradiction detected with reason
      — matching numbers pass cleanly
      — negation flip detected
      — temporal contradiction detected
      — clean answer passes
      — empty context handled
      — empty answer handled

    TestEntityHallucinationDetector (5 tests)
      — fabricated person flagged
      — fabricated citation flagged
      — entity present in context not flagged
      — empty answer returns empty list
      — common discourse phrases not false-positived

    TestAnswerDriftMonitor (6 tests)
      — no drift on first answer
      — no drift on consistent answers
      — drift detected after meaningful change
      — different questions don't interfere
      — persistence across instances (SQLite, new object, same file)
      — clear history resets to zero

    TestHallucinationDetector (24 tests)
      — 5 production scenarios with correct risk levels
      — risk CRITICAL on contradiction
      — risk LOW on clean answer
      — empty answer, empty context, very long answer, unicode, single word
      — HallucinationBlocked exception carries full report
      — strict config triggers confident_but_unfaithful
      — stats tracking and reset
      — 20-thread concurrent inspect() with zero errors
      — ainspect() returns correct report
      — ainspect() detects hallucination correctly
      — asyncio.gather() with 10 concurrent ainspect() calls
      — stats reports NER backend correctly

    TestQualityScore (18 tests)
      — ACCEPT on high score
      — FALLBACK on low score
      — HEALED_ACCEPT when healing applied
      — DISCARD when safe decline served
      — latency full marks under 20ms
      — latency reduced at 35ms, above 50ms floor
      — latency steep penalty at 60ms, floor at zero
      — drift subtracts exactly 0.20, floored at zero
      — contradiction_patch boosts confidence, caps at 0.80
      — entity_scrub reduces confidence by factor 0.85
      — grounding_rewrite: hedged scores lower than assertive
      — to_dict includes drift_penalty field

Two of these tests exist because of real mistakes I made during development. The thread safety test runs 20 concurrent inspect() calls because I initially had a race condition that only showed up under load, not in normal single-call testing. The SQLite persistence test creates a fresh monitor instance pointing at the same database file because my staging environment was restarting every 30 minutes, and I discovered the drift monitor had been completely blind the entire time. An in-memory dictionary resets on restart. SQLite doesn’t. Both tests are there because the bugs already happened once.

    ============================= 70 passed =============================

Honest limits and design decisions

Knowing what a system doesn’t catch is as important as knowing what it does. Every threshold in DetectorConfig is a deliberate starting point, not an arbitrary number.

Why 0.75 confidence threshold? Below this, most answers contain enough natural hedging to avoid false positives. Above it, high assertiveness combined with low faithfulness is the pattern I saw most often in the failures I researched. Tune it down to 0.60 for high-stakes domains where earlier flagging is worth the additional review load.

Why 0.40 faithfulness overlap? This setting tolerates natural paraphrasing without falsely flagging grounded answers that use different wording. Legal and medical deployments should start at 0.70: in those domains, paraphrase is itself a risk, not a tolerance.

Why 0.35 drift threshold? Empirically tuned on a small query set. A tighter threshold (0.20) fires too early during normal prompt variation. A looser threshold (0.50) misses real degradation. Your correct value depends on how much natural variation your LLM produces for the same question.

What this will not catch:

Confident, consistent hallucinations. If the model always says “30 days” and the context also says “30 days,” all checks pass. This system assumes retrieved context is correct. It cannot detect bad retrieval, only answers that deviate from or contradict what was retrieved.

Inventive paraphrase that changes meaning. At 40% keyword overlap, a carefully worded fabrication can technically pass the faithfulness check. The threshold is a dial: tune it on labeled samples from your domain.

Negation with stemming mismatches. The negation detector checks for “can cancelled”, not “can cancel”. A sentence like “you may cancel” technically slips through. Stemming before pattern matching closes this gap and is on the roadmap for v4.

Drift as a trailing indicator. The drift monitor requires at least three prior answers before it fires. Some bad answers will be served before detection. It tells you when to investigate. It does not prevent the first few failures after a pipeline change.

Installation and usage

    pip install spacy
    python -m spacy download en_core_web_sm

No additional pip dependencies beyond spaCy. SQLite ships with Python’s standard library.

Basic usage:

    from hallucination_detector import (
        HallucinationDetector, HallucinationHealer,
        DetectorConfig, QualityScore
    )

    config = DetectorConfig(db_path="drift.db", log_flagged=True)
    detector = HallucinationDetector(config)
    healer = HallucinationHealer(detector)

    # Inspect every LLM answer before delivery
    report = detector.inspect(question, context_chunks, llm_answer)
    score = QualityScore.compute(report)
    if score.routing == "accept":
        return llm_answer

    # Attempt healing
    result = healer.heal(question, context_chunks, llm_answer, report)
    score = QualityScore.compute(report, healing_result=result)
    if score.routing == "healed_accept":
        return result.healed_answer
    return fallback_response

Async (FastAPI):

    report = await detector.ainspect(question, context_chunks, llm_answer)

Structured JSON logging:

    from hallucination_detector import configure_logging
    import logging

    configure_logging(level=logging.WARNING)
    # Every flagged response emits a structured JSON WARNING with the full report

Blocking on critical risk:

    if report.is_hallucinating:
        raise HallucinationBlocked(report)
    # HallucinationBlocked.report carries the full dict for your monitoring layer

Strict mode for legal or medical contexts:

    config = DetectorConfig(
        faithfulness_threshold=0.70,          # up from 0.50
        faithfulness_overlap_threshold=0.70,  # up from 0.40
        confidence_threshold=0.60,            # down from 0.75, flags earlier
        drift_threshold=0.25,                 # down from 0.35, more sensitive
        db_path="drift_production.db",
        log_flagged=True,
    )

What’s next

Three things are on my list for the next version. The first is surfacing healing changes to the user directly; right now corrections happen silently, which feels wrong in domains where users need to know the model was wrong. The second is aggregating drift signals across questions rather than per-question, so I can detect when an entire document store starts degrading rather than catching it one question at a time. The third is a calibration harness that generates precision/recall curves from real traffic, so threshold tuning doesn’t have to be done by hand.
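As a rough idea of what the calibration harness would compute, the sketch below sweeps a threshold over labelled samples and reports precision and recall at each point. Everything here is an assumption: the function name `precision_recall`, the rule that an answer is flagged when its score falls below the threshold, and the five samples, which are made up purely to show the arithmetic.

```python
def precision_recall(samples, threshold):
    """samples: (faithfulness_score, is_hallucination) pairs.

    Assumption: an answer is flagged when its score is below the threshold.
    """
    tp = sum(1 for score, bad in samples if score < threshold and bad)
    fp = sum(1 for score, bad in samples if score < threshold and not bad)
    fn = sum(1 for score, bad in samples if score >= threshold and bad)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall

# Invented labelled samples, for illustration only.
samples = [(0.2, True), (0.45, True), (0.55, False), (0.65, True), (0.9, False)]

for threshold in (0.50, 0.70):
    precision, recall = precision_recall(samples, threshold)
    print(f"threshold={threshold:.2f} precision={precision:.2f} recall={recall:.2f}")
# threshold=0.50 precision=1.00 recall=0.67
# threshold=0.70 precision=0.75 recall=1.00
```

On this toy data, raising the threshold from 0.50 to 0.70 catches the missed hallucination at the cost of one false alarm. That is the trade-off a harness fed with real traffic would let you read off a curve instead of tuning by hand.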

Closing

I built this because I needed it. When you are building a RAG system that learners will actually rely on, you cannot afford to ship answers you haven’t inspected. The model will retrieve the right document and still generate something wrong. That isn’t a bug you can fix at the model level. It’s a property of how these systems work.

The 70 tests are not proof this system is perfect. They’re proof that I understand exactly what it catches and what it doesn’t, and that every failure pattern I found during research now has a named assertion.

retrieve() → generate() → inspect() → score() → heal() → ship

The model will hallucinate. The retrieval will fail.

The question is whether you catch it before your users do.

The full source code: https://github.com/Emmimal/hallucination-detector/

References

- Lewis, P., Perez, E., Piktus, A., Petroni, F., Karpukhin, V., Goyal, N., … & Kiela, D. (2020). Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. Advances in Neural Information Processing Systems, 33, 9459–9474. https://arxiv.org/abs/2005.11401

- Honovich, O., Aharoni, R., Herzig, J., Taitelbaum, H., Kukliansy, D., Cohen, V., Scialom, T., Szpektor, I., Hassidim, A., & Matias, Y. (2022). TRUE: Re-evaluating Factual Consistency Evaluation. Proceedings of NAACL 2022, 3905–3920. https://arxiv.org/abs/2204.04991

- Min, S., Krishna, K., Lyu, X., Lewis, M., Yih, W., Koh, P. W., … & Hajishirzi, H. (2023). FActScore: Fine-grained Atomic Evaluation of Factual Precision in Long Form Text Generation. Proceedings of EMNLP 2023, 12076–12100. https://arxiv.org/abs/2305.14251

- Manakul, P., Liusie, A., & Gales, M. J. F. (2023). SelfCheckGPT: Zero-Resource Black-Box Hallucination Detection for Generative Large Language Models. Proceedings of EMNLP 2023. https://arxiv.org/abs/2303.08896

- Honnibal, M., Montani, I., Van Landeghem, S., & Boyd, A. (2020). spaCy: Industrial-strength Natural Language Processing in Python. Zenodo. https://doi.org/10.5281/zenodo.1212303

- Gao, Y., Xiong, Y., Gao, X., Jia, K., Pan, J., Bi, Y., … & Wang, H. (2023). Retrieval-Augmented Generation for Large Language Models: A Survey. arXiv preprint arXiv:2312.10997. https://arxiv.org/abs/2312.10997

- Es, S., James, J., Espinosa-Anke, L., & Schockaert, S. (2023). Ragas: Automated Evaluation of Retrieval Augmented Generation. arXiv preprint arXiv:2309.15217. https://arxiv.org/abs/2309.15217

Disclosure

I’m an independent AI researcher and founder of EmiTechLogic (emitechlogic.com). This project was built as part of my research into RAG system failures while developing a RAG-powered assistant for EmiTechLogic. The failure patterns described in this article were researched from documented production failures in the field and reproduced in code. The code was written and tested locally in Python 3.12 on Windows using PyCharm. All libraries used are open-source with permissive licenses (MIT). The spaCy en_core_web_sm model is distributed under the MIT License by Explosion AI. I have no financial relationship with any library or tool mentioned. GitHub repository: https://github.com/Emmimal/hallucination-detector. I’m sharing this work to document a pattern that costs real teams real time, not to promote a product or service.