Information retrieval is a critical task, given the vast amount of content available today. You perform an information retrieval task, for example, every time you Google something or ask ChatGPT for an answer to a question. The information you're searching through could be a closed dataset of documents or the entire internet.

In this article, I'll discuss agentic information finding, covering how information retrieval has changed with the release of LLMs, and in particular with the rise of AI agents, which are much more capable of finding information than anything we've seen until now. I'll first discuss RAG, since it is a foundational building block of agentic information finding. I'll then continue by discussing, at a high level, how AI agents can be used to find information.

Why do we need agentic information finding

Information retrieval is a relatively old task. TF-IDF is one of the earliest algorithms used to find information in a large corpus of documents. It works by indexing your documents based on how frequently a word occurs within a specific document and how common that word is across all documents.

If a user searches for a word, and that word occurs frequently in a few documents but rarely across the corpus as a whole, it indicates strong relevance for those few documents.
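To make the scoring idea concrete, here is a minimal sketch of TF-IDF in plain Python. The three toy documents and the helper names (`tf_idf`, `search`) are made up for illustration, and this is only one of several common TF-IDF variants, so treat it as a sketch under those assumptions rather than a reference implementation.

```python
import math
from collections import Counter

# Hypothetical toy corpus, used only for illustration
documents = {
    "pasta": "boil the pasta then add the tomato sauce",
    "sorting": "quicksort is a sorting algorithm that sorts the list in place",
    "route": "take the train then walk to the station",
}

tokenized = {name: text.split() for name, text in documents.items()}


def tf_idf(term, doc_name):
    """Score one term for one document: term frequency times inverse document frequency."""
    tokens = tokenized[doc_name]
    tf = Counter(tokens)[term] / len(tokens)
    containing = sum(1 for other in tokenized.values() if term in other)
    if containing == 0:
        return 0.0
    idf = math.log(len(tokenized) / containing)
    return tf * idf


def search(term):
    """Rank all documents for a single search term, best match first."""
    scores = {name: tf_idf(term, name) for name in tokenized}
    return sorted(scores.items(), key=lambda pair: pair[1], reverse=True)


print(search("pasta"))
print(tf_idf("the", "pasta"))
```

A word like "the" appears in every document, so its inverse document frequency is zero and it contributes nothing to the ranking, while "pasta" appears in only one document and ranks that document first.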

Information retrieval is such a critical task because, as humans, we rely on quickly finding information to solve different problems. These problems could be:

- How to cook a specific meal

- How to implement a certain algorithm

- How to get from location A to location B

TF-IDF still works surprisingly well, though we've now discovered even more powerful approaches to finding information. Retrieval-augmented generation (RAG) is one strong technique, relying on semantic similarity to find useful documents.

Agentic information finding utilises different techniques, such as keyword search (TF-IDF, for example, but typically modernized versions of the algorithm, such as BM25) and RAG, to find relevant documents, search through them, and return results to the user.
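As an illustration of the keyword search side, below is a minimal sketch of BM25 scoring in plain Python. The function name `bm25_scores`, the whitespace tokenization, and the default values for `k1` and `b` are assumptions chosen for this example; production systems usually rely on a search engine or a dedicated library instead.

```python
import math
from collections import Counter


def bm25_scores(query, documents, k1=1.5, b=0.75):
    """Return one BM25 score per document for a whitespace-tokenized query.

    Assumes the corpus contains at least one non-empty document.
    """
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized) / n_docs
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            n_containing = sum(1 for other in tokenized if term in other)
            # Rare terms get a higher weight than common ones
            idf = math.log((n_docs - n_containing + 0.5) / (n_containing + 0.5) + 1)
            tf = counts[term]
            # Term frequency saturates, and long documents are penalized
            denom = tf + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf * (k1 + 1) / denom
        scores.append(score)
    return scores


docs = ["boil the pasta", "sort the list", "walk to the station"]
print(bm25_scores("pasta", docs))
```

Compared with plain TF-IDF, the main differences are the saturating term-frequency component and the document-length normalization controlled by `b`.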

Build your own RAG

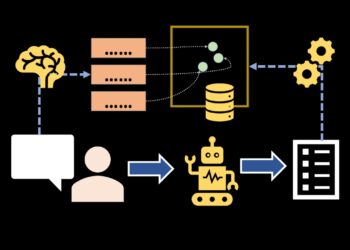

Building your own RAG is surprisingly simple with all the technology and tools available today. There are numerous packages out there that help you implement RAG. All of them, however, rely on the same, relatively basic underlying technique:

- Embed your document corpus (you typically also chunk the documents)

- Store the embeddings in a vector database

- The user inputs a search query

- Embed the search query

- Compute the embedding similarity between the document corpus and the user query, and return the most similar documents

This can be implemented in just a few hours if you know what you're doing. To embed your data and user queries, you can, for example, use:

- Managed services such as

  - OpenAI's text-embedding-3-large

  - Google's gemini-embedding-001

- Open-source options like

  - Alibaba's Qwen3-Embedding-8B

  - Linq-Embed-Mistral (a Mistral-based model)

After you've embedded your documents, you can store them in a vector database.

After that, you're basically ready to perform RAG. In the next section, I'll also cover fully managed RAG solutions, where you just upload a document, and all chunking, embedding, and searching is handled for you.
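The five steps listed earlier in this section can be sketched end to end in plain Python. To keep the example self-contained, `embed` is a toy bag-of-words stand-in for a real embedding model, and `InMemoryVectorStore` is a hypothetical stand-in for a vector database. A bag-of-words vector only captures word overlap, not semantic similarity, so this shows the shape of the pipeline rather than the quality you would get from a real embedding model.

```python
import math
import re
from collections import Counter


def embed(text):
    # Toy stand-in for an embedding model: a sparse word-count vector
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def chunk(text, max_words=50):
    # Split a document into chunks of at most max_words words
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database."""

    def __init__(self):
        self.entries = []  # list of (chunk_text, embedding) pairs

    def add_document(self, text):
        # Steps 1 and 2: chunk, embed, and store
        for piece in chunk(text):
            self.entries.append((piece, embed(piece)))

    def search(self, query, top_k=2):
        # Steps 3 to 5: embed the query and return the most similar chunks
        query_embedding = embed(query)
        scored = [
            (cosine_similarity(query_embedding, embedding), piece)
            for piece, embedding in self.entries
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [piece for _, piece in scored[:top_k]]


store = InMemoryVectorStore()
store.add_document("Boil the pasta for ten minutes and add tomato sauce.")
store.add_document("Quicksort is a sorting algorithm that partitions a list.")
top_chunks = store.search("how long do I boil pasta", top_k=1)
print(top_chunks)
```

Swapping `embed` for a call to a real embedding model and `InMemoryVectorStore` for a real vector database keeps the same overall structure.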

Managed RAG services

If you want a simpler approach, you can also use fully managed RAG solutions. Here are a few options:

- Ragie.ai

- Gemini File Search Tool

- OpenAI File Search tool

These services simplify the RAG process significantly. You can upload documents to any of them, and the service automatically handles the chunking, embedding, and retrieval for you. All you have to do is upload your raw documents and provide the search query you want to run. The service will then return the documents relevant to your queries, which you can feed into an LLM to answer user questions.

Although managed RAG simplifies the process significantly, I would also like to highlight some downsides:

If you only have PDFs, you can upload them directly. However, there are currently some file types not supported by the managed RAG services. Some of them don't support PNG/JPG files, for example, which complicates the process. One solution is to perform OCR on the image and upload the resulting txt file (which is supported), but this, of course, complicates your application, which is exactly what you want to avoid when using managed RAG.

Another downside, of course, is that you have to upload raw documents to the services. When doing this, you need to make sure you stay compliant, for example, with GDPR regulations in the EU. This can be a challenge for some managed RAG services, though I know OpenAI at least supports EU data residency.

I'll also provide an example of using OpenAI's File Search Tool, which is naturally very simple to use.

First, you create a vector store and upload documents:

    from openai import OpenAI

    client = OpenAI()

    # Create vector store
    vector_store = client.vector_stores.create(
        name="",  # fill in a name for your vector store
    )

    # Upload a file and add it to the vector store
    client.vector_stores.files.upload_and_poll(
        vector_store_id=vector_store.id,
        file=open("filename.txt", "rb"),
    )

After uploading and processing documents, you can query them with:

    user_query = "What is the meaning of life?"

    results = client.vector_stores.search(
        vector_store_id=vector_store.id,
        query=user_query,
    )

As you may notice, this code is a lot simpler than setting up embedding models and vector databases to build RAG yourself.

Information retrieval tools

Now that we have the information retrieval tools readily available, we can start performing agentic information retrieval. I'll start with the initial approach to using LLMs for information finding, before continuing with the better, more recent approach.

Retrieval, then answering

The first approach is to start by retrieving relevant documents and feeding that information to an LLM before it answers the user's question. This can be done by running both keyword search and RAG search, finding the top X relevant documents, and feeding those documents into an LLM.

First, find some documents with RAG:

    user_query = "What is the meaning of life?"

    results_rag = client.vector_stores.search(
        vector_store_id=vector_store.id,
        query=user_query,
    )

Then, find some documents with a keyword search:

    def keyword_search(query):
        # keyword search logic (for example BM25) goes here
        results = []  # placeholder: fill with the matching documents
        return results

    results_keyword_search = keyword_search(user_query)

Then add these results together, remove duplicate documents, and feed the contents of these documents to an LLM for answering:

    def llm_completion(prompt):
        # LLM completion logic goes here
        response = ""  # placeholder: fill with the model's answer
        return response

    # document_context holds the deduplicated contents of the retrieved documents
    prompt = f"""
    Given the following context {document_context}
    Answer the user query: {user_query}
    """

    response = llm_completion(prompt)

In a lot of cases, this works very well and will provide high-quality responses. However, there is a better way to perform agentic information finding.
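Before moving on, the merge step of the retrieve-then-answer approach can be sketched as follows. The result format (dictionaries with `id` and `text` keys) and the helper names `merge_results` and `build_prompt` are assumptions made for this example; real search results will have whatever shape your RAG and keyword search backends return.

```python
def merge_results(*result_lists):
    """Combine several result lists, keeping the first occurrence of each document ID."""
    seen_ids = set()
    merged = []
    for results in result_lists:
        for doc in results:
            if doc["id"] not in seen_ids:
                seen_ids.add(doc["id"])
                merged.append(doc)
    return merged


def build_prompt(documents, user_query):
    """Join the document texts into one context block and append the user query."""
    document_context = "\n\n".join(doc["text"] for doc in documents)
    return (
        f"Given the following context:\n{document_context}\n\n"
        f"Answer the user query: {user_query}"
    )


# Hypothetical results from the two search methods
results_rag = [
    {"id": "doc-1", "text": "Text of document one."},
    {"id": "doc-2", "text": "Text of document two."},
]
results_keyword_search = [
    {"id": "doc-2", "text": "Text of document two."},
    {"id": "doc-3", "text": "Text of document three."},
]

merged = merge_results(results_rag, results_keyword_search)
prompt = build_prompt(merged, "What is the meaning of life?")
print(prompt)
```

Deduplicating by document ID keeps the context short when both search methods return the same document, which leaves more of the context window for distinct sources.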

Information retrieval functions as a tool

The latest frontier LLMs are all trained with agentic behaviour in mind. This means the LLMs are very good at using tools to answer queries. You can provide an LLM with a list of tools, and it decides for itself when to use them to answer user queries.

The better approach is thus to provide RAG and keyword search as tools to your LLM. For GPT-5, you can, for example, do it as shown below:

    # Define a custom keyword search function, and provide GPT-5 with both
    # keyword search and RAG (the file search tool)
    def keyword_search(keywords):
        # perform keyword search here
        results = []  # placeholder: fill with the matching documents
        return results

    user_input = "What is the meaning of life?"

    tools = [
        {
            # The Responses API takes function tools in a flat format,
            # without a nested "function" object
            "type": "function",
            "name": "keyword_search",
            "description": "Search for keywords and return relevant results",
            "parameters": {
                "type": "object",
                "properties": {
                    "keywords": {
                        "type": "array",
                        "items": {"type": "string"},
                        "description": "Keywords to search for",
                    }
                },
                "required": ["keywords"],
            },
        },
        {
            "type": "file_search",
            "vector_store_ids": [""],  # fill in your vector store ID
        },
    ]

    response = client.responses.create(
        model="gpt-5",
        input=user_input,
        tools=tools,
    )

This works much better than running a one-time information-finding step with RAG/keyword search and then answering the user question. It works well because:

- The agent can decide for itself when to use the tools. Some queries, for example, don't require vector search

- OpenAI automatically does query rewriting, meaning it runs parallel RAG queries with different versions of the user query (which it writes itself, based on the user query)

- The agent can decide to run more RAG queries/keyword searches if it believes it doesn't have enough information

The last point in the list above is the most important point for agentic information finding. Sometimes, you don't find the information you're looking for with the initial query. The agent (GPT-5) can determine that this is the case and choose to fire more RAG/keyword search queries if it thinks they are needed. This often leads to much better results and makes the agent more likely to find the information you're looking for.
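One practical detail: the file search tool is run by OpenAI on its side, while a custom function tool such as `keyword_search` has to be executed by your own code and its output sent back to the model. Below is a minimal sketch of such a loop. The function name `run_agent`, the `max_turns` limit, and the placeholder `keyword_search` body are assumptions for illustration; the loop expects a client object that exposes `responses.create` the way the OpenAI Python SDK does.

```python
import json


def keyword_search(keywords):
    # Hypothetical stand-in: replace with a real keyword search (for example BM25)
    return [f"placeholder result for '{keyword}'" for keyword in keywords]


def run_agent(client, user_input, tools, model="gpt-5", max_turns=5):
    """Call the model repeatedly, running keyword_search whenever it asks for it."""
    conversation = [{"role": "user", "content": user_input}]
    for _ in range(max_turns):
        response = client.responses.create(
            model=model,
            input=conversation,
            tools=tools,
        )
        calls = [item for item in response.output if item.type == "function_call"]
        if not calls:
            # No further tool requests: the model has produced its answer
            return response.output_text
        # Keep the model's own output items so the next turn has full context
        conversation += response.output
        for call in calls:
            arguments = json.loads(call.arguments)
            results = keyword_search(arguments["keywords"])
            conversation.append({
                "type": "function_call_output",
                "call_id": call.call_id,
                "output": json.dumps(results),
            })
    return None  # no final answer within max_turns
```

With the OpenAI SDK installed, this could be called as `run_agent(OpenAI(), user_input, tools)`. The `max_turns` cap is a design choice that bounds cost and latency if the agent keeps requesting more searches.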

Conclusion

In this article, I covered the basics of agentic information retrieval. I started by discussing why agentic information retrieval is so important, highlighting how we're highly dependent on quick access to information. Furthermore, I covered the tools you can use for information retrieval: keyword search and RAG. I then highlighted that you can run these tools statically before feeding the results to an LLM, but the better approach is to provide these tools to an LLM, making it an agent capable of finding information. I think agentic information finding will become more and more important in the future, and understanding how to use AI agents will be an important skill for creating powerful AI applications in the coming years.
