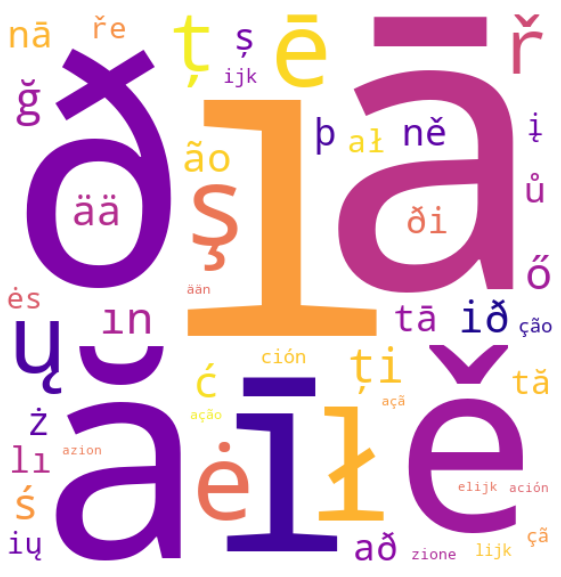

Even if you don’t speak a word of a language, you can often guess what language you’re looking at simply by recognizing certain character patterns. I don’t speak Icelandic, for example, but I instantly recognize Icelandic text when I see letters like “ð” or “þ”, as these characters are extremely rare elsewhere. Likewise, I’ve noticed that when I see a lot of “ijk” in a text, it’s probably Dutch.

This article explores how we can use simple statistics to learn these visual fingerprints, the character sequences that most strongly signal which language you’re looking at, across 20 different European languages.

Learning Linguistic Fingerprints With Statistics

To learn the visual “fingerprints” of a language, we first need a way to measure how distinctive a given character pattern is. A natural starting point might be to look at the most common character patterns within each language. However, this approach quickly falls short, because a character pattern might be very common in one language and also appear very frequently in others. Frequency alone doesn’t capture uniqueness. Instead, we want to ask:

“How much more likely is this pattern to appear in one language compared to all others?”

This is where statistics comes in! Formally, let:

- L be the set of all 20 studied languages

- S be the set of all observed character patterns across these languages

To determine how strongly a given character pattern s∈S identifies a language l∈L, we compute the likelihood ratio:

\[ LR_{s,l} = \frac{P(s \mid l)}{P(s \mid \neg l)} \]

This compares the probability of seeing character pattern s in language l with the probability of seeing it in any other language. The higher the ratio, the more uniquely tied that pattern is to that language.

Calculating the Likelihood Ratio in Practice

To compute the likelihood ratio for each character pattern in practice, we need to translate the conditional probabilities into quantities we can actually measure. Here’s how we define the relevant counts:

- c_l(s): the number of times character pattern s appears in language l

- c_¬l(s): the number of times character pattern s appears in all other languages

- N_l: the total number of character pattern occurrences in language l

- N_¬l: the total number of character pattern occurrences in all other languages

Using these, the conditional probabilities become:

\[ P(s \mid l) = \frac{c_l(s)}{N_l}, \quad P(s \mid \neg l) = \frac{c_{\neg l}(s)}{N_{\neg l}} \]

and the likelihood ratio simplifies to:

\[ LR_{s,l} = \frac{P(s \mid l)}{P(s \mid \neg l)} = \frac{c_l(s) \cdot N_{\neg l}}{c_{\neg l}(s) \cdot N_l} \]

This gives us a numeric score that quantifies how much more likely a character pattern s is to appear in language l than in all others.
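As a rough illustration of the count-based formula, here is a minimal Python sketch. The function name `raw_likelihood_ratio`, the `counts` layout (language code mapped to a `Counter` of pattern occurrences), and the toy numbers are all assumptions made for this example, not the article's actual implementation. It assumes the pattern occurs at least once in the target language.

```python
import math
from collections import Counter


def raw_likelihood_ratio(pattern, lang, counts):
    """Unsmoothed likelihood ratio of `pattern` for `lang`.

    `counts` maps a language code to a Counter of pattern occurrences
    (a hypothetical layout chosen for this sketch).
    """
    c_l = counts[lang][pattern]        # occurrences of the pattern in lang
    n_l = sum(counts[lang].values())   # all pattern occurrences in lang
    # Pool every other language to get the "not l" counts.
    c_not_l = sum(c[pattern] for code, c in counts.items() if code != lang)
    n_not_l = sum(sum(c.values()) for code, c in counts.items() if code != lang)
    if c_not_l == 0:
        # The pattern never occurs elsewhere, so the ratio is unbounded.
        return math.inf
    return (c_l * n_not_l) / (c_not_l * n_l)


# Made-up toy counts, purely for illustration.
toy_counts = {
    "nl": Counter({"ijk": 30, "en": 70}),
    "en": Counter({"ijk": 1, "en": 99}),
    "de": Counter({"ijk": 1, "en": 99}),
}

ratio = raw_likelihood_ratio("ijk", "nl", toy_counts)
```

With these toy counts, “ijk” makes up 30 of 100 occurrences in the first language and 2 of 200 in the others, so the ratio is (30 · 200) / (2 · 100) = 30.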

Handling Zero Counts

Unfortunately, there is a problem with our likelihood ratio formula: what happens when c_¬l(s) = 0?

In other words, what if a certain character pattern s appears only in language l and in no others? This leads to a divide-by-zero in the denominator and a likelihood ratio of infinity.

Technically, this means we’ve found a perfectly unique pattern for that language. But in practice, it’s not very helpful. A character pattern might appear only once in one language and never anywhere else, and it would still be given an infinite score. That is not very useful as a strong “fingerprint” of the language.

To avoid this issue, we apply a technique called additive smoothing. This method adjusts the raw counts slightly to eliminate zeros and reduce the impact of rare events.

Specifically, we add a small constant α to each count in the numerator, and α|S| to the denominator, with |S| being the total number of observed character patterns. This has the effect of assuming that every character pattern has a tiny chance of occurring in every language, even if it hasn’t been seen yet.

With smoothing, the adjusted probabilities become:

\[ P'(s \mid l) = \frac{c_l(s) + \alpha}{N_l + \alpha|S|}, \quad P'(s \mid \neg l) = \frac{c_{\neg l}(s) + \alpha}{N_{\neg l} + \alpha|S|} \]

And the final likelihood ratio to be maximized is:

\[ LR_{s,l} = \frac{P'(s \mid l)}{P'(s \mid \neg l)} = \frac{(c_l(s) + \alpha) \cdot (N_{\neg l} + \alpha|S|)}{(N_l + \alpha|S|) \cdot (c_{\neg l}(s) + \alpha)} \]

This keeps things stable and ensures that a rare pattern doesn’t automatically dominate just because it’s exclusive.
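The smoothed ratio can be sketched in a few lines of Python. The function name, the argument names, and the toy numbers below are hypothetical and only meant to show the effect of smoothing. The default α = 0.5 matches the value used for the results later in the article.

```python
import math


def smoothed_likelihood_ratio(c_l, c_not_l, n_l, n_not_l, vocab_size, alpha=0.5):
    """Additively smoothed likelihood ratio.

    c_l, c_not_l -- pattern counts in the language and in all other languages
    n_l, n_not_l -- total pattern occurrences in the language and in all others
    vocab_size   -- |S|, the number of distinct observed patterns
    """
    p_l = (c_l + alpha) / (n_l + alpha * vocab_size)
    p_not_l = (c_not_l + alpha) / (n_not_l + alpha * vocab_size)
    return p_l / p_not_l


# Two patterns that never occur outside the language (toy numbers):
# one seen a single time, one seen 100 times.
rare = smoothed_likelihood_ratio(1, 0, 1_000, 19_000, vocab_size=100)
common = smoothed_likelihood_ratio(100, 0, 1_000, 19_000, vocab_size=100)
```

Both toy patterns would score infinity without smoothing. With smoothing, both scores are finite, and the pattern seen 100 times scores far higher than the pattern seen once.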

The Dataset

Now that we’ve defined a metric to identify the most distinctive character patterns (our linguistic “fingerprints”), it’s time to gather actual language data to analyze.

For this, I used the Python library wordfreq, which compiles word frequency lists for dozens of languages based on large-scale sources like Wikipedia, books, subtitles, and web text.

One particularly useful function for this analysis is top_n_list(), which returns a list of the n highest-frequency words in a given language, sorted by frequency. For example, to get the 40 most common words in Icelandic, we’d call:

    wordfreq.top_n_list("is", 40, ascii_only=False)

The argument ascii_only=False ensures that non-ASCII characters, like Icelandic’s “ð” and “þ”, are preserved in the output. That’s essential for this analysis, since we’re specifically looking for language-unique character patterns, which include single characters.

To build the dataset, I pulled the 5,000 most frequent words in each of the following 20 European languages:

Catalan, Czech, Danish, Dutch, English, Finnish, French, German, Hungarian, Icelandic, Italian, Latvian, Lithuanian, Norwegian, Polish, Portuguese, Romanian, Spanish, Swedish, and Turkish.

This yields a large multilingual vocabulary of 100,000 words in total, rich enough to extract meaningful statistical patterns across languages.
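Collecting the word lists might look something like the sketch below. The language codes are my assumption (in particular, “nb” for Norwegian is a guess at how it was requested), and `build_word_lists` with its injectable `fetch` argument is a hypothetical helper, not part of wordfreq. Only `top_n_list` itself comes from the library.

```python
# Language names from the article mapped to two-letter codes.
# These codes are assumptions; "nb" (Norwegian Bokmal) in particular is a guess.
LANGUAGE_CODES = {
    "Catalan": "ca", "Czech": "cs", "Danish": "da", "Dutch": "nl",
    "English": "en", "Finnish": "fi", "French": "fr", "German": "de",
    "Hungarian": "hu", "Icelandic": "is", "Italian": "it", "Latvian": "lv",
    "Lithuanian": "lt", "Norwegian": "nb", "Polish": "pl", "Portuguese": "pt",
    "Romanian": "ro", "Spanish": "es", "Swedish": "sv", "Turkish": "tr",
}


def build_word_lists(codes, n=5000, fetch=None):
    """Return {language code: list of its n most frequent words}.

    `fetch(code, n)` can be supplied for testing; by default the words
    come from wordfreq.top_n_list with non-ASCII characters preserved.
    """
    if fetch is None:
        # Imported lazily so the helper can be defined without wordfreq installed.
        from wordfreq import top_n_list

        def fetch(code, k):
            return top_n_list(code, k, ascii_only=False)

    return {code: fetch(code, n) for code in codes}
```

With wordfreq installed, `build_word_lists(LANGUAGE_CODES.values())` would request 5,000 words for each of the 20 codes.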

To extract the character patterns used in the analysis, all possible substrings of length 1 to 5 were generated from each word in the dataset. For example, the word “language” would contain patterns such as l, la, lan, lang, langu, a, an, ang, and so on. The result is a comprehensive set S of over 180,000 unique character patterns observed across the 20 studied languages.
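The substring extraction can be sketched as follows. The helper names are hypothetical, and counting every occurrence of a pattern (including repeats within the same word) is an assumption on my part; the original implementation may count differently.

```python
from collections import Counter


def char_patterns(word, min_len=1, max_len=5):
    """Yield every contiguous substring of `word` with length min_len..max_len."""
    for start in range(len(word)):
        for length in range(min_len, max_len + 1):
            if start + length > len(word):
                break  # longer substrings would run past the end of the word
            yield word[start:start + length]


def count_patterns(words, min_len=1, max_len=5):
    """Count pattern occurrences over a list of words (repeats included)."""
    counts = Counter()
    for word in words:
        counts.update(char_patterns(word, min_len, max_len))
    return counts


first_five = list(char_patterns("language"))[:5]
pattern_counts = count_patterns(["language"])
```

For the eight-letter word “language”, this yields 8 + 7 + 6 + 5 + 4 = 30 pattern occurrences, starting with l, la, lan, lang, langu.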

Results

For each language, the top 5 most distinctive character patterns, ranked by likelihood ratio, are shown. The smoothing constant was chosen to be α=0.5.

Because the raw likelihood ratios can be quite large, I’ve reported the base-10 logarithm of the likelihood ratio (log10(LR)) instead. For example, a log likelihood ratio of 3 means that the character pattern is 10^3 = 1,000 times more likely to appear in that language than in any other. Note that because of smoothing, these likelihood ratios are approximate rather than exact, and the most extreme scores may be dampened.

Each cell shows a top-ranked character pattern and its log likelihood ratio.

| Language | #1 | #2 | #3 | #4 | #5 |
|---|---|---|---|---|---|
| Catalan | ènc 3.03 | ènci 3.01 | cions 2.95 | ència 2.92 | atge 2.77 |
| Czech | ě 4.14 | ř 3.94 | ně 3.65 | ů 3.59 | ře 3.55 |
| Danish | øj 2.82 | æng 2.77 | søg 2.73 | skab 2.67 | øge 2.67 |
| Dutch | ijk 3.51 | lijk 3.45 | elijk 3.29 | ijke 3.04 | voor 3.04 |
| English | ally 2.79 | tly 2.64 | ough 2.54 | ying 2.54 | cted 2.52 |
| Finnish | ää 3.74 | ään 3.33 | tää 3.27 | llä 3.13 | ssä 3.13 |
| French | êt 2.83 | eux 2.78 | rése 2.73 | dép 2.68 | prése 2.64 |
| German | eich 3.03 | tlic 2.98 | tlich 2.98 | schl 2.98 | ichen 2.90 |
| Hungarian | ő 3.80 | ű 3.17 | gye 3.16 | szá 3.14 | ész 3.09 |
| Icelandic | ð 4.32 | ið 3.74 | að 3.64 | þ 3.63 | ði 3.60 |
| Italian | zione 3.41 | azion 3.29 | zion 3.07 | aggi 2.90 | zioni 2.87 |
| Latvian | ā 4.50 | ī 4.20 | ē 4.10 | tā 3.66 | nā 3.64 |
| Lithuanian | ė 4.11 | ų 4.03 | ių 3.58 | į 3.57 | ės 3.56 |
| Norwegian | sjon 3.17 | asj 2.93 | øy 2.88 | asjon 2.88 | asjo 2.88 |
| Polish | ł 4.13 | ś 3.79 | ć 3.77 | ż 3.69 | ał 3.59 |
| Portuguese | ão 3.73 | çã 3.53 | ção 3.53 | ação 3.32 | açã 3.32 |
| Romanian | ă 4.31 | ț 4.01 | ți 3.86 | ș 3.64 | tă 3.60 |
| Spanish | ción 3.51 | ación 3.29 | ión 3.14 | sión 2.86 | iento 2.85 |
| Swedish | förs 2.89 | ställ 2.72 | stäl 2.72 | ång 2.68 | öra 2.68 |
| Turkish | ı 4.52 | ş 4.10 | ğ 3.83 | ın 3.80 | lı 3.60 |
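A ranking like the one in the table could be produced along the lines of the sketch below. The function name `top_patterns`, the `counts` layout, and the toy counts are assumptions for illustration, and only patterns that actually occur in the target language are scored, which is a simplification of my own.

```python
import math
from collections import Counter


def top_patterns(counts, lang, k=5, alpha=0.5):
    """Return the k patterns with the highest smoothed log10 likelihood ratio.

    `counts` maps a language code to a Counter of pattern occurrences
    (a hypothetical layout chosen for this sketch).
    """
    vocab_size = len(set().union(*counts.values()))  # |S| over all languages
    n_l = sum(counts[lang].values())
    # Pool the counts of every other language.
    others = Counter()
    for code, c in counts.items():
        if code != lang:
            others.update(c)
    n_not_l = sum(others.values())

    scores = {}
    for pattern, c_l in counts[lang].items():
        p_l = (c_l + alpha) / (n_l + alpha * vocab_size)
        p_not_l = (others[pattern] + alpha) / (n_not_l + alpha * vocab_size)
        scores[pattern] = math.log10(p_l / p_not_l)
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:k]


# Made-up toy counts, purely for illustration.
toy_counts = {
    "nl": Counter({"ijk": 30, "en": 70}),
    "en": Counter({"ijk": 1, "en": 99, "th": 50}),
}
ranking = top_patterns(toy_counts, "nl", k=2)
```

In this toy example, “ijk” ranks first for the first language because it is common there and nearly absent elsewhere, while “en” scores close to zero because it is about equally common in both.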

Discussion

Below are some interesting interpretations of the results. This is not meant to be a comprehensive analysis, just a few observations I found noteworthy:

- Many of the character patterns with the highest likelihood ratios are single characters unique to their language, such as the previously mentioned Icelandic “ð” and “þ”, Romanian’s “ă”, “ț”, and “ș”, or Turkish’s “ı”, “ş”, and “ğ”. Because these characters are essentially absent from all other languages in the dataset, they would have produced infinite likelihood ratios if not for the additive smoothing.

- In some languages, most notably Dutch, many of the top results are substrings of one another. For example, the top pattern “ijk” also appears within the next highest-ranking patterns: “lijk”, “elijk”, and “ijke”. This shows how certain combinations of letters are reused frequently in longer words, making them even more distinctive for that language.

- English has some of the least distinctive character patterns of all the languages analyzed, with a maximum log likelihood ratio of only 2.79. This may be due to the presence of English loanwords in many other languages’ top 5,000 word lists, which dilutes the uniqueness of English-specific patterns.

- There are several cases where the top character patterns reflect shared grammatical structures across languages. For example, the Spanish “-ción”, Italian “-zione”, and Norwegian “-sjon” all function as nominalization suffixes, similar to the English “-tion”, turning verbs or adjectives into nouns. These endings stand out strongly in each language and highlight how different languages can follow similar patterns using different spellings.

Conclusion

This project started with a simple question: What makes a language look like itself? By analyzing the 5,000 most common words in 20 European languages and comparing the character patterns they use, we uncovered unique “fingerprints” for each language, from accented letters like “ş” and “ø” to recurring letter combinations like “ijk” or “ción”. While the results aren’t meant to be definitive, they offer a fun and statistically grounded way to explore what sets languages apart visually, even without understanding a single word.

See my GitHub repository for the full code implementation of this technique.

Thanks for reading!

References

wordfreq Python library:

- Robyn Speer. (2022). rspeer/wordfreq: v3.0 (v3.0.2). Zenodo. https://doi.org/10.5281/zenodo.7199437