Accuracy is often critical for LLM applications, especially in cases such as API calling or summarisation of financial reports. Fortunately, there are ways to enhance precision. The best practices to improve accuracy include the following steps:

- You can start simply with prompt engineering techniques: adding more detailed instructions, using few-shot prompting, or asking the model to think step-by-step.

- If accuracy is still insufficient, you can incorporate a self-reflection step, for example, returning errors from the API calls and asking the LLM to correct them.

- The next option is to provide the most relevant context to the LLM using RAG (Retrieval-Augmented Generation) to boost precision further.
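The self-reflection step from the list above can be sketched as a small retry loop. This is a minimal illustration rather than the implementation from the earlier article: `generate` and `execute` are hypothetical callables standing in for an LLM call and an API or database call, and `execute` is assumed to raise an exception on failure.

```python
def answer_with_self_reflection(question, generate, execute, max_retries=2):
    """Ask for a query, run it, and feed any error back to the model.

    `generate(prompt)` and `execute(query)` are hypothetical stand-ins for
    an LLM call and an API/database call. `execute` is assumed to raise
    an exception when the call fails.
    """
    prompt = question
    query = generate(prompt)
    for _ in range(max_retries):
        try:
            return query, execute(query)
        except Exception as err:
            # self-reflection: show the model its own output and the error
            prompt = (
                f"{question}\n\nYour previous attempt:\n{query}\n\n"
                f"It failed with the error:\n{err}\n\nPlease fix it."
            )
            query = generate(prompt)
    # last attempt: let any remaining error propagate to the caller
    return query, execute(query)
```

Capping the number of retries keeps the cost predictable, since every retry is an extra LLM call.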

We’ve explored this approach in my previous TDS article, “From Prototype to Production: Enhancing LLM Accuracy”. In that project, we built an SQL Agent and went from 0% valid SQL queries to 70% accuracy. However, there are limits to what we can achieve with prompts alone. To break through this barrier and reach the next frontier of accuracy, we need to adopt more advanced techniques.

The most promising option is fine-tuning. With fine-tuning, we can move from relying solely on information in prompts to embedding additional information directly into the model’s weights.

Let’s start by understanding what fine-tuning is. Fine-tuning is the process of refining pre-trained models by training them on smaller, task-specific datasets to enhance their performance in particular applications. Base models are initially trained on vast amounts of data, which allows them to develop a broad understanding of language. Fine-tuning, however, tailors these models to specialised tasks, transforming them from general-purpose systems into highly targeted tools. For example, instruction fine-tuning taught GPT-3.5 to chat and follow instructions, and that’s how ChatGPT emerged.

Base LLMs are initially trained to predict the next token based on vast text corpora. Fine-tuning typically adopts a supervised approach, where the model is presented with specific questions and corresponding answers, allowing it to adjust its weights to improve accuracy.

Historically, fine-tuning required updating all model weights, a method known as full fine-tuning. This process was computationally expensive since it required storing all the model weights, optimizer states, gradients and forward activations in memory. To address these challenges, parameter-efficient fine-tuning (PEFT) techniques were introduced. PEFT methods update only a small subset of the model parameters while keeping the rest frozen. Among these methods, one of the most widely adopted is LoRA (Low-Rank Adaptation), which significantly reduces the computational cost without compromising performance.
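To see why LoRA is cheap, it helps to count parameters. LoRA keeps a weight matrix W of shape d × k frozen and learns a low-rank update B·A, where B is d × r and A is r × k, with r much smaller than d and k. The sketch below is plain arithmetic; the dimensions (4096 × 4096, rank 8) are illustrative assumptions, not values taken from this project.

```python
def lora_trainable_params(d, k, r):
    """Parameters in the low-rank update B (d x r) @ A (r x k)."""
    return d * r + r * k

d, k, r = 4096, 4096, 8          # illustrative sizes (assumed)
full = d * k                     # parameters updated by full fine-tuning
lora = lora_trainable_params(d, k, r)

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {r}):  {lora:,} trainable parameters")
print(f"share of the full matrix: {lora / full:.2%}")
```

For these sizes the low-rank update has 256 times fewer trainable parameters than the full matrix, which is where the memory savings for gradients and optimizer states come from.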

Pros & cons

Before considering fine-tuning, it’s essential to weigh its advantages and limitations.

Advantages:

- Fine-tuning enables the model to learn and retain significantly more information than can be provided through prompts alone.

- It usually gives higher accuracy, often exceeding 90%.

- During inference, it can reduce costs by enabling the use of smaller, task-specific models instead of larger, general-purpose ones.

- Fine-tuned small models can often be deployed on-premises, eliminating reliance on cloud providers such as OpenAI or Anthropic. This approach reduces costs, enhances privacy, and minimizes dependency on external infrastructure.

Disadvantages:

- Fine-tuning requires upfront investments for model training and data preparation.

- It requires specific technical knowledge and may involve a steep learning curve.

- The quality of results depends heavily on the availability of high-quality training data.

Since this project is focused on gaining knowledge, we will proceed with fine-tuning. However, in real-world scenarios, it’s important to evaluate whether the benefits of fine-tuning justify all the associated costs and efforts.

Execution

The next step is to plan how we will approach fine-tuning. After taking the “Improving Accuracy of LLM Applications” course, I’ve decided to try the Lamini platform for the following reasons:

- It offers a simple one-line API call to fine-tune the model. It’s especially convenient since we’re just starting to learn a new technique.

- Although it’s not free and can be quite expensive for toy projects (at $1 per tuning step), they offer free credits upon registration, which are sufficient for initial testing.

- Lamini has implemented a new approach, Lamini Memory Tuning, which promises zero loss of factual accuracy while preserving general capabilities. This is a significant claim, and it’s worth testing out. We will discuss this approach in more detail shortly.

Of course, there are lots of other fine-tuning options you can consider:

- The Llama documentation provides numerous recipes for fine-tuning, which can be executed on a cloud server or even locally for smaller models.

- There are many step-by-step guides available online, including the tutorial on how to fine-tune Llama on Kaggle from DataCamp.

- Fine-tuning is not limited to open-source models. OpenAI also offers the capability to fine-tune their models.

Lamini Memory Tuning

As I mentioned earlier, Lamini introduced a new approach to fine-tuning, and I believe it’s worth discussing it in more detail.

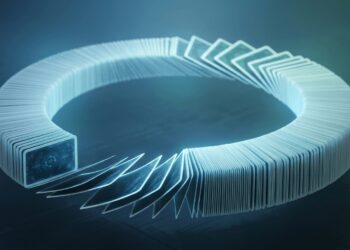

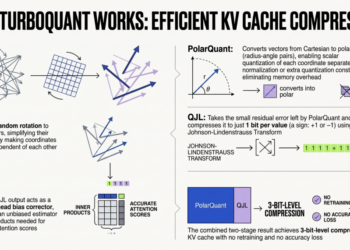

Lamini introduced the Mixture of Memory Experts (MoME) approach, which enables LLMs to learn a vast amount of factual information with almost zero loss, all while maintaining generalization capabilities and requiring a feasible amount of computational resources.

To achieve this, Lamini extended a pre-trained LLM by adding a large number (on the order of 1 million) of LoRA adapters along with a cross-attention layer. Each LoRA adapter is a memory expert, functioning as a type of memory for the model. These memory experts specialize in different aspects, ensuring that the model retains faithful and accurate information from the data it was tuned on. Inspired by information retrieval, these million memory experts are equivalent to indices from which the model intelligently retrieves and routes.

At inference time, the model retrieves a subset of the most relevant experts at each layer and merges them back into the base model to generate a response to the user query.
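The retrieval step can be pictured as a top-k selection over expert keys. The snippet below is a toy illustration only: it assumes each expert has a key vector and that relevance is a dot product with a query vector. The function name, the vectors and the scoring rule are all hypothetical, and this is not Lamini's implementation.

```python
def select_experts(query_vec, expert_keys, top_k=2):
    """Return indices of the top_k experts with the highest dot-product score."""
    scores = [
        sum(q * e for q, e in zip(query_vec, key))
        for key in expert_keys
    ]
    ranked = sorted(range(len(expert_keys)), key=lambda i: scores[i], reverse=True)
    return ranked[:top_k]

# four hypothetical memory experts with 3-dimensional keys
expert_keys = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.7, 0.7, 0.0],
    [0.0, 0.0, 1.0],
]
selected = select_experts([0.9, 0.1, 0.0], expert_keys, top_k=2)
print(selected)
```

In this picture, only the selected adapters take part in generating the response, so the work per query depends on `top_k` rather than on the total number of experts.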

Lamini Memory Tuning is said to be capable of achieving 95% accuracy. The key difference from traditional instruction fine-tuning is that instead of optimizing for average error across all tasks, this approach focuses on achieving zero error for the facts the model is specifically trained to remember.

So, this approach allows an LLM to preserve its ability to generalize with average error on everything else while recalling the important facts nearly perfectly.

For further details, you can refer to the research paper “Banishing LLM Hallucinations Requires Rethinking Generalization” by Li et al. (2024).

Lamini Memory Tuning holds great promise. Let’s see if it delivers on its potential in practice.

As always, let’s begin by setting everything up. As we discussed, we’ll be using Lamini to fine-tune Llama, so the first step is to install the Lamini package.

pip install lamini

Additionally, we need to set up the Lamini API Key on their website and specify it as an environment variable.

export LAMINI_API_KEY=""

As I mentioned above, we will be improving the SQL Agent, so we need a database. For this example, we’ll continue using ClickHouse, but feel free to choose any database that suits your needs. You can find more details on the ClickHouse setup and the database schema in the previous article.

To fine-tune an LLM, we first need a dataset: in our case, a set of pairs of questions and answers (SQL queries). The task of putting together a dataset might seem daunting, but luckily, we can leverage LLMs to do it.

The key factors to consider while preparing the dataset:

- The quality of the data is crucial, as we will ask the model to remember these facts.

- Diversity in the examples is important so that a model can learn how to handle different cases.

- It’s preferable to use real data rather than synthetically generated data since it better represents real-life questions.

- The usual minimum size for a fine-tuning dataset is around 1,000 examples, but the more high-quality data, the better.

Generating examples

All the information required to create question-and-answer pairs is present in the database schema, so it will be a feasible task for an LLM to generate examples. Additionally, I have a representative set of Q&A pairs that I used for the RAG approach, which we can present to the LLM as examples of valid queries (using the few-shot prompting technique). Let’s load the RAG dataset.

import json
import pandas as pd

# loading a set of examples
with open('rag_set.json', 'r') as f:
    rag_set = json.loads(f.read())

rag_set_df = pd.DataFrame(rag_set)

# formatting each pair as a single few-shot example string
rag_set_df['qa_fmt'] = list(map(
    lambda x, y: "question: %s, sql_query: %s" % (x, y),
    rag_set_df.question,
    rag_set_df.sql_query
))

The idea is to iteratively provide the LLM with the schema information and a set of random examples (to ensure diversity in the questions) and ask it to generate a new, similar, but different Q&A pair.

Let’s create a system prompt that includes all the necessary details about the database schema.

generate_dataset_system_prompt = '''
You are a senior data analyst with more than 10 years of experience writing complex SQL queries.
There are two tables in the database you're working with with the following schemas.

Table: ecommerce.users
Description: customers of the online shop
Fields:
- user_id (integer) - unique identifier of customer, for example, 1000004 or 3000004
- country (string) - country of residence, for example, "Netherlands" or "United Kingdom"
- is_active (integer) - 1 if customer is still active and 0 otherwise
- age (integer) - customer age in full years, for example, 31 or 72

Table: ecommerce.sessions
Description: sessions for online shop
Fields:
- user_id (integer) - unique identifier of customer, for example, 1000004 or 3000004
- session_id (integer) - unique identifier of session, for example, 106 or 1023
- action_date (date) - session start date, for example, "2021-01-03" or "2024-12-02"
- session_duration (integer) - duration of session in seconds, for example, 125 or 49
- os (string) - operation system that customer used, for example, "Windows" or "Android"
- browser (string) - browser that customer used, for example, "Chrome" or "Safari"
- is_fraud (integer) - 1 if session is marked as fraud and 0 otherwise
- revenue (float) - income in USD (the sum of purchased items), for example, 0.0 or 1506.7

Write a query in ClickHouse SQL to answer the following question.
Add "format TabSeparatedWithNames" at the end of the query to get data from ClickHouse database in the right format.
'''

The next step is to create a template for the user query.

generate_dataset_qa_tmpl = '''
Considering the following examples, please, write question
and SQL query to answer it, that is similar but different to provided below.

Examples of questions and SQL queries to answer them:
{examples}
'''

Since we need a high-quality dataset, I prefer using a more advanced model, GPT-4o, rather than Llama. As usual, I’ll initialize the model and create a dummy tool for structured output.

from langchain_core.tools import tool

@tool
def generate_question_and_answer(comments: str, question: str, sql_query: str) -> str:
    """Returns the new question and SQL query

    Args:
        comments (str): 1-2 sentences about the new question and answer pair,
        question (str): new question
        sql_query (str): SQL query in ClickHouse syntax to answer the question
    """
    pass

from langchain_openai import ChatOpenAI

# binding the dummy tool so that the model replies with structured arguments
generate_qa_llm = (
    ChatOpenAI(model="gpt-4o", temperature=0.5)
    .bind_tools([generate_question_and_answer])
)

Now, let’s combine everything into a function that will generate a Q&A pair and create a set of examples.

import tqdm

# helper function to combine system + user prompts
def get_openai_prompt(question, system):
    messages = [
        ("system", system),
        ("human", question)
    ]
    return messages

def generate_qa():
    # selecting 3 random examples
    sample_set_df = rag_set_df.sample(3)
    examples = '\n\n'.join(sample_set_df.qa_fmt.values)

    # constructing prompt
    prompt = get_openai_prompt(
        generate_dataset_qa_tmpl.format(examples=examples),
        generate_dataset_system_prompt)

    # calling LLM
    qa_res = generate_qa_llm.invoke(prompt)

    try:
        rec = qa_res.tool_calls[0]['args']
        rec['examples'] = examples
        return rec
    except Exception:
        # no usable tool call in the response: return None so the caller can skip it
        return None

# executing function
qa_tmp = []
for i in tqdm.tqdm(range(2000)):
    rec = generate_qa()
    if rec is not None:
        qa_tmp.append(rec)

new_qa_df = pd.DataFrame(qa_tmp)

I generated 2,000 examples, but in reality, I used a much smaller dataset for this toy project. Therefore, I recommend limiting the number of examples to 200–300.

Cleaning the dataset

As we know, “garbage in, garbage out”, so an essential step before fine-tuning is cleaning the data generated by the LLM.

The first, and most obvious, check is to ensure that each SQL query is valid.

def is_valid_output(s):
    if s.startswith('Database returned the following error:'):
        return 'error'
    if len(s.strip().split('\n')) >= 1000:
        return 'too many rows'
    return 'ok'

# get_clickhouse_data (not defined in this section) executes a query
# and returns the raw output as text
new_qa_df['output'] = new_qa_df.sql_query.map(get_clickhouse_data)
new_qa_df['is_valid_output'] = new_qa_df.output.map(is_valid_output)
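The `get_clickhouse_data` helper is not shown in this section. The sketch below is a possible stand-in, not the helper from the earlier article: it assumes a local ClickHouse server with the HTTP interface on the default port 8123, uses only the standard library, and returns errors as text with the prefix that `is_valid_output` checks for. The host and timeout values are assumptions.

```python
import urllib.error
import urllib.request

CH_HOST = "http://localhost:8123/"  # assumed default ClickHouse HTTP address

def get_clickhouse_data(query, host=CH_HOST, timeout=30):
    """Run a query over the ClickHouse HTTP interface and return the raw text.

    On failure the returned string starts with the prefix that
    is_valid_output() looks for.
    """
    request = urllib.request.Request(
        host, data=query.encode("utf-8"), method="POST")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.read().decode("utf-8")
    except urllib.error.HTTPError as err:
        # query errors come back with a non-200 status and a text body
        return ("Database returned the following error:\n"
                + err.read().decode("utf-8"))
```

Returning the error as a string instead of raising keeps the function usable inside `Series.map`, so one bad query does not stop the whole validation pass.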

There are no invalid SQL queries, but some questions return over 1,000 rows.

Although these cases are valid, we’re focusing on an OLAP scenario with aggregated stats, so I’ve retained only queries that return 100 or fewer rows.

new_qa_df['output_rows'] = new_qa_df.output.map(
    lambda x: len(x.strip().split('\n')))

filt_new_qa_df = new_qa_df[new_qa_df.output_rows <= 100]

I also eliminated cases with empty output: queries that return no rows or only the header.

filt_new_qa_df = filt_new_qa_df[filt_new_qa_df.output_rows > 1]
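The row-count logic is easier to follow on concrete strings. In the small example below, the header line of the `TabSeparatedWithNames` format counts as a row, which is why a header-only result and a completely empty result both end up with a count of 1 and are dropped. The sample outputs are made up for illustration.

```python
def count_output_rows(output):
    """Count lines in a tab-separated result, header line included."""
    return len(output.strip().split('\n'))

aggregated = "country\tcustomers\nNetherlands\t10\nUnited Kingdom\t25\n"
header_only = "country\tcustomers\n"
empty = ""

samples = [("aggregated", aggregated), ("header_only", header_only), ("empty", empty)]
for name, output in samples:
    rows = count_output_rows(output)
    # same thresholds as in the filters above: more than 1 and at most 100
    print(name, rows, "kept" if 1 < rows <= 100 else "dropped")
```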

Another important check is for duplicate questions. The same question with different answers might confuse the model, as it won’t be able to tune to both solutions simultaneously. And in fact, we have such cases.

filt_new_qa_df = filt_new_qa_df[['question', 'sql_query']].drop_duplicates()

filt_new_qa_df['question'].value_counts().head(10)

To resolve these duplicates, I’ve kept only one answer for each question.

filt_new_qa_df = filt_new_qa_df.drop_duplicates('question')

Although I generated around 2,000 examples, I’ve decided to use a smaller dataset of 200 question-and-answer pairs. Fine-tuning with a larger dataset would require more tuning steps and be more expensive.

sample_dataset_df = pd.read_csv('small_sample_for_finetuning.csv', sep = '\t')

You can find the final training dataset on GitHub.

Now that our training dataset is ready, we can move on to the most exciting part: fine-tuning.

The primary iteration

The next step is to generate the sets of requests and responses for the LLM that we will use to fine-tune the model.

Since we’ll be working with the Llama model, let’s create a helper function to construct a prompt for it.

def get_llama_prompt(user_message, system_message=""):
    # the system block is optional and is skipped when no system message is given
    system_prompt = ""
    if system_message != "":
        system_prompt = (
            f"<|start_header_id|>system<|end_header_id|>\n\n{system_message}"
            f"<|eot_id|>"
        )
    prompt = (f"<|begin_of_text|>{system_prompt}"
              f"<|start_header_id|>user<|end_header_id|>\n\n"
              f"{user_message}"
              f"<|eot_id|>"
              f"<|start_header_id|>assistant<|end_header_id|>\n\n"
              )
    return prompt

For requests, we will use the following system prompt, which includes all the necessary information about the data schema.

generate_query_system_prompt = '''
You are a senior data analyst with more than 10 years of experience writing complex SQL queries.
There are two tables in the database you're working with with the following schemas.

Table: ecommerce.users
Description: customers of the online shop
Fields:
- user_id (integer) - unique identifier of customer, for example, 1000004 or 3000004
- country (string) - country of residence, for example, "Netherlands" or "United Kingdom"
- is_active (integer) - 1 if customer is still active and 0 otherwise
- age (integer) - customer age in full years, for example, 31 or 72

Table: ecommerce.sessions
Description: sessions of usage the online shop
Fields:
- user_id (integer) - unique identifier of customer, for example, 1000004 or 3000004
- session_id (integer) - unique identifier of session, for example, 106 or 1023
- action_date (date) - session start date, for example, "2021-01-03" or "2024-12-02"
- session_duration (integer) - duration of session in seconds, for example, 125 or 49
- os (string) - operation system that customer used, for example, "Windows" or "Android"
- browser (string) - browser that customer used, for example, "Chrome" or "Safari"
- is_fraud (integer) - 1 if session is marked as fraud and 0 otherwise
- revenue (float) - income in USD (the sum of purchased items), for example, 0.0 or 1506.7

Write a query in ClickHouse SQL to answer the following question.
Add "format TabSeparatedWithNames" at the end of the query to get data from ClickHouse database in the right format.
Answer questions following the instructions and providing all the needed information and sharing your reasoning.
'''

Let’s create the responses in the format suitable for Lamini fine-tuning. We need to prepare a list of dictionaries with input and output keys.

formatted_responses = []

for rec in sample_dataset_df.to_dict('records'):
    formatted_responses.append(
        {
            'input': get_llama_prompt(rec['question'],
                generate_query_system_prompt),
            'output': rec['sql_query']
        }
    )

Now, we are fully prepared for fine-tuning. We just need to select a model and initiate the process. We will be fine-tuning the Llama 3.1 8B model.

from lamini import Lamini

llm = Lamini(model_name="meta-llama/Meta-Llama-3.1-8B-Instruct")

finetune_args = {
    "max_steps": 50,
    "learning_rate": 0.0001
}

llm.train(
    data_or_dataset_id=formatted_responses,
    finetune_args=finetune_args,
)

We can specify several hyperparameters, and you can find all the details in the Lamini documentation. For now, I’ve passed only the most essential ones to the function:

- max_steps: This determines the number of tuning steps. The documentation recommends using 50 steps for experimentation to get initial results without spending too much money.

- learning_rate: This parameter determines the step size of each iteration while moving toward a minimum of a loss function (Wikipedia). The default is 0.0009, but based on the guidance, I’ve decided to use a smaller value.
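The effect of the learning rate can be seen on a toy problem. The sketch below runs plain gradient descent on a one-dimensional loss (w - 3)^2 with two step sizes; the loss, the starting point and both learning rates are illustrative assumptions and have nothing to do with the actual values used by Lamini.

```python
def gradient_step(w, grad, learning_rate):
    """One gradient-descent update: move against the gradient."""
    return w - learning_rate * grad

def loss_grad(w):
    """Gradient of the toy loss (w - 3)^2, whose minimum is at w = 3."""
    return 2 * (w - 3)

for lr in (0.1, 1.1):
    w = 0.0
    for _ in range(20):
        w = gradient_step(w, loss_grad(w), lr)
    print(f"learning_rate={lr}: w after 20 steps = {w:.4f}")
```

With the smaller step size, w moves steadily towards the minimum at 3, while the larger one overshoots on every step and drifts further away. This is the usual reason for lowering the learning rate when tuning looks unstable.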

Now, we just need to wait for 10–15 minutes while the model trains, and then we can test it.

finetuned_llm = Lamini(model_name='')
# you can find Model ID in the Lamini interface

question = '''How many customers made purchase in December 2024?'''
prompt = get_llama_prompt(question, generate_query_system_prompt)
finetuned_llm.generate(prompt, max_new_tokens=200)
# select uniqExact(s.user_id) as customers
# from ecommerce.sessions s join ecommerce.users u
# on s.user_id = u.user_id
# where (toStartOfMonth(action_date) = '2024-12-01') and (revenue > 0)
# format TabSeparatedWithNames

It’s worth noting that we’re using Lamini for inference as well and will have to pay for it. You can find up-to-date information about the costs here.

At first glance, the result looks promising, but we need a more robust accuracy evaluation to confirm it.

Additionally, it’s worth noting that since we’ve fine-tuned the model for our specific task, it now consistently returns SQL queries, meaning we may no longer need to use tool calls for structured output.

Evaluating the quality

We’ve discussed LLM accuracy evaluation in detail in my previous article, so here I’ll provide a brief recap.

We use a golden set of question-and-answer pairs to evaluate the model’s quality. Since this is a toy example, I’ve limited the set to just 10 pairs, which you can review on GitHub.

The evaluation process consists of two parts:

- SQL Query Validity: First, we check that the SQL query is valid, meaning ClickHouse doesn’t return errors during execution.

- Query Correctness: Next, we ensure that the generated query is correct. We compare the outputs of the generated and true queries using LLMs to verify that they provide semantically identical results.
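The two-part evaluation can be sketched as a single loop. This is a rough outline under stated assumptions, not the evaluation code from the earlier article: `generate_sql`, `run_query` and `same_result` are hypothetical callables, the golden set is assumed to be a list of dictionaries with `question` and `sql_query` keys, and database errors are assumed to come back as text starting with the same prefix used during dataset cleaning.

```python
def evaluate(golden_set, generate_sql, run_query, same_result):
    """Classify each golden example as 'error', 'incorrect' or 'correct'.

    All three callables are hypothetical stand-ins:
      generate_sql(question) -> SQL string from the model under test
      run_query(sql)         -> raw database output as text
      same_result(a, b)      -> True if two outputs are semantically identical
                                (an LLM judge in the article, any comparison here)
    """
    error_prefix = 'Database returned the following error:'
    results = []
    for rec in golden_set:
        generated = run_query(generate_sql(rec['question']))
        if generated.startswith(error_prefix):
            results.append('error')        # part 1: validity
        elif same_result(generated, run_query(rec['sql_query'])):
            results.append('correct')      # part 2: correctness
        else:
            results.append('incorrect')
    return results
```

Passing the comparison in as a function makes it easy to start with an exact string match and switch to an LLM-based judge later.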

The initial results are far from ideal, but they are significantly better than the base Llama model (which produced zero valid SQL queries). Here’s what we found:

- ClickHouse returned errors for 2 queries.

- Three queries were executed, but the results were incorrect.

- Five queries were correct.
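Turning these counts into the two metrics is simple arithmetic. The snippet below only restates the numbers reported above; the label names are arbitrary.

```python
from collections import Counter

# outcome per golden-set question, matching the counts reported above
results = ['error'] * 2 + ['incorrect'] * 3 + ['correct'] * 5
counts = Counter(results)
total = len(results)

# a query is valid if it executed, whether or not the answer was right
valid_share = (counts['incorrect'] + counts['correct']) / total
correct_share = counts['correct'] / total
print(f"valid SQL: {valid_share:.0%}, correct answers: {correct_share:.0%}")
```

So the first iteration gives 80% valid queries and 50% correct answers on the 10-question golden set.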

No surprises: there’s no silver bullet, and it’s always an iterative process. Let’s investigate what went wrong.

Diving into the errors

The approach is straightforward. Let’s examine the errors one by one to understand why we got these results and how we can fix them. We’ll start with the first unsuccessful example.