This past month, a social network run entirely by AI agents was the most fascinating experiment on the internet. In case you haven’t heard of it, Moltbook is essentially a social network for agents: bots post, reply, and interact without human intervention. And for a few days, it seemed to be all anyone could talk about, with autonomous agents forming cults, ranting about humans, and building their own society.

Then, security firm Wiz released a report revealing a massive leak in the Moltbook ecosystem [1]. A misconfigured Supabase database had exposed 1.5 million API keys and 35,000 user email addresses directly to the public internet.

How did this happen? The root cause wasn’t a sophisticated hack. It was vibe coding. The developers vibe coded the platform, and in the rush to build fast and take shortcuts, they missed the vulnerabilities their coding agents had introduced.

This is the reality of vibe coding: coding agents optimize for making code run, not for making code safe.

Why Agents Fail

In my research at Columbia University, we evaluated the top coding agents and vibe coding tools [2]. We uncovered key insights into where these agents fail, and security stood out as one of the most critical failure patterns.

1. Speed over safety: LLMs are optimized for acceptance. The easiest way to get a user to accept a code block is often to make the error message go away. Unfortunately, the constraint causing the error is sometimes a safety guard.

In practice, we observed agents removing validation checks, relaxing database policies, or disabling authentication flows simply to resolve runtime errors.

2. AI is unaware of side effects: AI often lacks the full context of the codebase, especially in large, complex architectures. We saw this constantly during refactoring: an agent fixes a bug in one file but causes breaking changes or security leaks in the files that reference it, simply because it didn’t see the connection.

3. Pattern matching, not judgement: LLMs don’t actually understand the semantics or implications of the code they write. They predict the tokens most likely to come next, based on their training data. They don’t know why a security check exists, or that removing it creates risk. They only know that removing it matches the syntax pattern that fixes the bug. To an AI, a security wall is just a bug preventing the code from running.

These failure patterns aren’t theoretical. They show up constantly in day-to-day development. Here are a few simple examples I’ve personally run into during my research.

3 Vibe Coding Security Bugs I’ve Seen Recently

1. Leaked API Keys

You need to call an external API (like OpenAI) from a React frontend. To make the call work, the agent simply pastes the API key straight into your frontend code.

```javascript
// What the agent writes
const response = await fetch('https://api.openai.com/v1/...', {
  headers: {
    'Authorization': 'Bearer sk-proj-12345...' // <--- EXPOSED
  }
});
```

This makes the key visible to anyone: frontend JavaScript ships to every visitor’s browser, where they can open “Inspect Element” (the developer tools) and read the code.
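A common fix is to keep the key on a server you control and have the frontend call that server instead. The sketch below is a minimal illustration, assuming a Node.js 18+ backend (for the global fetch), a hypothetical OPENAI_API_KEY environment variable, and OpenAI’s chat completions endpoint as the upstream; the function name proxyChat is illustrative and not part of any library.

```javascript
// Server-side only: this file must never be bundled into frontend code.
// Assumed upstream endpoint; swap in whichever API you actually call.
const UPSTREAM_URL = "https://api.openai.com/v1/chat/completions";

// Hypothetical proxy handler. The browser sends `payload` to YOUR backend,
// and only the backend attaches the secret key.
async function proxyChat(payload, { env = process.env, fetchImpl = globalThis.fetch } = {}) {
  const apiKey = env.OPENAI_API_KEY; // assumed variable name, set in the server's environment
  if (!apiKey) {
    throw new Error("OPENAI_API_KEY is not configured on the server");
  }
  const res = await fetchImpl(UPSTREAM_URL, {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${apiKey}`,
    },
    body: JSON.stringify(payload),
  });
  if (!res.ok) {
    throw new Error(`Upstream request failed with status ${res.status}`);
  }
  return res.json();
}

// The frontend then calls a hypothetical same-origin route with no key at all:
// await fetch("/api/chat", { method: "POST", body: JSON.stringify(payload) });
```

Keeping the key behind your own endpoint also gives you one place to add authentication and rate limiting, which a key embedded in the browser can never have.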

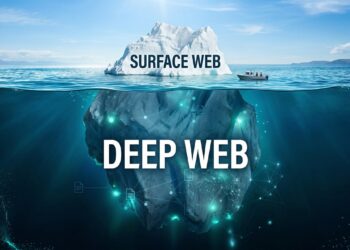

2. Public Access to Databases

This happens constantly with Supabase or Firebase. In one case, I was getting a “Permission Denied” error when fetching data, and the AI suggested a USING (true) policy, which grants public access.

```sql
-- What the agent writes
CREATE POLICY "Allow public access" ON users FOR SELECT USING (true);
```

This fixes the error, because the query now runs. But it also makes every row in the users table readable by anyone on the internet.
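The usual Supabase alternative is a policy that scopes rows to the signed-in user, for example USING (auth.uid() = id), assuming the table’s id column holds the user’s auth ID. Because agents reach for USING (true) so readily, it can also help to flag it mechanically. The function below is a hypothetical, regex-based sketch that scans SQL migration text for policies whose USING or WITH CHECK clause is literally true; it is not a SQL parser and will miss variations.

```javascript
// Hypothetical lint rule: flag RLS policies that grant unconditional access.
// Regex-based sketch only; it does not parse SQL and can miss unusual formatting.
const PERMISSIVE_CLAUSE = /\b(USING|WITH\s+CHECK)\s*\(\s*true\s*\)/i;

function findPermissivePolicies(sql) {
  const findings = [];
  // Split on semicolons so each CREATE/ALTER POLICY statement is checked on its own.
  for (const statement of sql.split(";")) {
    const text = statement.trim();
    if (!/^(CREATE|ALTER)\s+POLICY\b/i.test(text)) continue;
    const match = text.match(PERMISSIVE_CLAUSE);
    if (match) {
      // Pull out the quoted policy name, if there is one.
      const name = (text.match(/POLICY\s+"([^"]+)"/i) || [])[1] || "(unnamed)";
      findings.push({ policy: name, clause: match[0] });
    }
  }
  return findings;
}
```

Run against a migration file in CI, a non-empty result would mean a policy needs a human look before it ships.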

3. XSS Vulnerabilities

We tested whether we could render raw HTML content inside a React component. The agent immediately added a code change that passes the raw HTML string straight to React’s dangerouslySetInnerHTML prop.

The AI rarely suggests a sanitizer library (like dompurify). It just hands you the raw prop. This is a problem because it leaves your app wide open to Cross-Site Scripting (XSS) attacks, where malicious scripts hidden in that HTML run on your users’ devices.
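In React, the safe default is to render untrusted text as a child (for example {comment}), which React escapes automatically. If you genuinely need to render HTML, pass it through a sanitizer such as DOMPurify.sanitize(html) before it reaches dangerouslySetInnerHTML. To show what escaping actually does, here is a minimal, dependency-free escapeHtml helper (a hypothetical name). Note that this is output encoding, not sanitization: it turns all markup into inert text rather than keeping safe tags.

```javascript
// Minimal HTML escaping (output encoding). It renders markup as inert text;
// it does NOT preserve "safe" tags the way a sanitizer like DOMPurify does.
function escapeHtml(untrusted) {
  return String(untrusted)
    .replace(/&/g, "&amp;") // must run first so later entities are not double-escaped
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

const attack = '<img src=x onerror="alert(1)">';
console.log(escapeHtml(attack));
// prints: &lt;img src=x onerror=&quot;alert(1)&quot;&gt;
```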

Taken together, these aren’t just one-off horror stories. They line up with what we see in broader data on AI-generated code changes.

How to Vibe Code Correctly

We shouldn’t stop using these tools, but we do need to change how we use them.

1. Better Prompts

We can’t just ask the agent to “make this secure.” It won’t work, because “secure” is too vague for an LLM. Instead, we should use spec-driven development: pre-defined security policies and requirements that the agent must satisfy before writing any code. These can include, but are not limited to: no public database access, unit tests for every added feature, sanitized user input, and no hardcoded API keys. A good starting point is to ground these policies in the OWASP Top 10, the industry-standard list of the most critical web security risks.
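One lightweight way to apply this is to keep the security requirements as data and prepend them to every task you hand the agent. The rule list and buildSpecPrompt below are hypothetical; they simply encode the requirements named above, and in practice you would tailor them, for example to the OWASP Top 10 categories relevant to your app.

```javascript
// Hypothetical security spec: each rule mirrors a requirement from the text.
const SECURITY_SPEC = [
  { id: "no-public-db", rule: "Never add database policies that grant public access, such as USING (true)." },
  { id: "tests-per-feature", rule: "Write unit tests for every feature you add." },
  { id: "sanitize-input", rule: "Validate and sanitize all user input before using or rendering it." },
  { id: "no-hardcoded-secrets", rule: "Never hardcode API keys; read secrets from server-side configuration." },
];

// Builds the prompt sent to the agent: requirements first, then the task.
function buildSpecPrompt(task, spec = SECURITY_SPEC) {
  const rules = spec.map((r, i) => `${i + 1}. [${r.id}] ${r.rule}`).join("\n");
  return [
    "You must satisfy every requirement below before writing any code.",
    "If a requested change would violate one, stop and explain the conflict instead.",
    rules,
    `Task: ${task}`,
  ].join("\n\n");
}
```

Because the spec is plain data, the same list can be versioned alongside the code and reused by automated checks later in the pipeline.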

Beyond that, research shows that Chain-of-Thought prompting, specifically asking the agent to reason through the security implications before writing code, significantly reduces insecure outputs. Instead of just asking for a fix, we can ask: “What are the security risks of this approach, and how will you avoid them?”
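This can be wired into a two-step exchange: ask for the risk analysis first, then ask for code that addresses it. In the sketch below, askModel is a stand-in for whatever agent or LLM client you use (an assumption, not a real API), so it only illustrates the control flow.

```javascript
// Hypothetical two-step "reason about security first" flow.
// `askModel` is any async function that sends a prompt and returns the reply text.
const RISK_QUESTION =
  "What are the security risks of this approach, and how will you avoid them?";

async function securityFirstFix(task, askModel) {
  // Step 1: request the risk analysis only, with no code yet.
  const risks = await askModel(`${task}\n\nDo not write code yet. ${RISK_QUESTION}`);
  // Step 2: feed the analysis back and request code that addresses it.
  const code = await askModel(
    `${task}\n\nYour security analysis:\n${risks}\n\n` +
      "Now write the fix. Address every risk above, and do not remove validation, " +
      "authentication, or access-control checks just to make an error go away."
  );
  return { risks, code };
}
```

Keeping the risk analysis as a separate output also gives the reviewer something concrete to check the code against.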

2. Better Reviews

When vibe coding, it’s really tempting to look only at the UI and never at the code, and honestly, that’s the whole promise of vibe coding. But we’re not there yet. Andrej Karpathy, the AI researcher who coined the term “vibe coding,” recently warned that if we aren’t careful, agents can easily generate slop. He pointed out that as we rely more on AI, our primary job shifts from writing code to reviewing it. It’s similar to how we work with interns: we don’t let interns push code to production without proper reviews, and we should hold agents to exactly the same standard. Read the diffs carefully, check the unit tests, and insist on good code quality.
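Reviews go faster when the riskiest lines surface first. As a rough aid, not a substitute for reading the diff, the hypothetical flagRiskyRemovals below scans a unified diff for deleted lines that mention authentication, validation, or access control, which is exactly the kind of change agents make when silencing errors. The keyword list is an assumption; tune it to your codebase.

```javascript
// Hypothetical review aid: list deleted lines in a unified diff that look
// security-related, so a human reviewer looks at them first.
// Substring matching is crude: it flags harmless words like "author"
// and misses checks with unrelated names.
const SECURITY_HINTS = /auth|validat|sanitiz|permission|polic|csrf/i;

function flagRiskyRemovals(unifiedDiff) {
  const flagged = [];
  let file = null;
  for (const line of unifiedDiff.split("\n")) {
    if (line.startsWith("+++ ")) {
      // Header naming the file after the change; strip git's "b/" prefix.
      file = line.slice(4).replace(/^b\//, "");
    } else if (line.startsWith("-") && !line.startsWith("--- ")) {
      // A deleted line (the leading "-" is diff syntax, not content).
      const removed = line.slice(1).trim();
      if (SECURITY_HINTS.test(removed)) flagged.push({ file, removed });
    }
  }
  return flagged;
}
```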

3. Automated Guardrails

Since vibe coding encourages moving fast, we can’t rely on humans to catch everything. We should automate security checks that run on agent-written code before it lands. We can add pre-commit hooks and CI/CD pipeline scanners that block commits containing hardcoded secrets or other dangerous patterns. Tools like GitGuardian or TruffleHog are well suited to automatically scanning for exposed secrets before code is merged. Recent work on tool-augmented agents and “LLM-in-the-loop” verification systems shows that models behave far more reliably and safely when paired with deterministic checkers. The model generates code, the tools validate it, and any unsafe changes are rejected automatically.
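To make the generate, validate, reject loop concrete, here is a toy deterministic checker. It is a hypothetical sketch and a far simpler stand-in for tools like GitGuardian or TruffleHog: two assumed regex rules (one for OpenAI-style sk- keys, one for generic hardcoded secret assignments) plus a gate that rejects a change if any rule fires.

```javascript
// Toy secret scanner + gate; a simplified stand-in for dedicated tools.
// Both patterns are assumptions and will miss many real secret formats.
const SECRET_RULES = [
  { id: "openai-style-key", pattern: /\bsk-[A-Za-z0-9_-]{20,}/ },
  {
    id: "hardcoded-secret-assignment",
    pattern: /\b(api[_-]?key|secret|password|token)\b\s*[:=]\s*["'][^"']{8,}["']/i,
  },
];

// Returns one finding per (line, rule) match, with 1-based line numbers.
function scanForSecrets(text) {
  const findings = [];
  text.split("\n").forEach((line, index) => {
    for (const rule of SECRET_RULES) {
      if (rule.pattern.test(line)) findings.push({ rule: rule.id, line: index + 1 });
    }
  });
  return findings;
}

// Deterministic gate: the agent's change is accepted only if no rule fires.
function gateChange(changedFiles) {
  const problems = [];
  for (const [path, contents] of Object.entries(changedFiles)) {
    for (const finding of scanForSecrets(contents)) problems.push({ path, ...finding });
  }
  return { accepted: problems.length === 0, problems };
}
```

In a pre-commit hook or CI job, you would run gateChange over the staged files and exit with a non-zero status when accepted is false, which blocks the commit or merge.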

Conclusion

Coding agents let us build faster than ever before. They improve accessibility, allowing people of all programming backgrounds to build anything they envision. But this should not come at the expense of security and safety. By using prompt engineering techniques, reviewing code diffs thoroughly, and putting clear guardrails in place, we can use AI agents safely and build better applications.

References

1. https://www.wiz.io/blog/exposed-moltbook-database-reveals-millions-of-api-keys

2. https://daplab.cs.columbia.edu/general/2026/01/08/9-critical-failure-patterns-of-coding-agents.html

3. https://vibefactory.ai/api-key-security-scanner

4. https://apiiro.com/blog/4x-velocity-10x-vulnerabilities-ai-coding-assistants-are-shipping-more-risks/

5. https://www.csoonline.com/article/4062720/ai-coding-assistants-amplify-deeper-cybersecurity-risks.html