project involving the build of propensity models to predict customers’ potential purchases, I encountered feature engineering issues that I had seen numerous times before.

These challenges can be broadly grouped into two categories:

1) Inadequate Feature Management

- Definitions, lineage, and versions of features generated by the team were not systematically tracked, which limited feature reuse and the reproducibility of model runs.

- Feature logic was manually maintained across separate training and inference scripts, creating a risk of inconsistent features between training and inference (i.e., training-serving skew).

- Features were stored as flat files (e.g., CSV), which lack schema enforcement and support for low-latency or scalable access.

2) High Feature Engineering Latency

- Heavy feature engineering workloads often arise when dealing with time-series data, where multiple window-based transformations must be computed.

- When these computations are executed sequentially rather than optimized for parallel execution, the latency of feature engineering can increase significantly.

In this article, I explain the concepts and implementation of feature stores (Feast) and distributed compute frameworks (Ray) for feature engineering in production machine learning (ML) pipelines.

Contents

(1) Example Use Case

(2) Understanding Feast and Ray

(3) Roles of Feast and Ray in Feature Engineering

(4) Code Walkthrough

You can find the accompanying GitHub repo here.

(i) Objective

To illustrate the capabilities of Feast and Ray, our example scenario involves building an ML pipeline to train and serve a 30-day customer purchase propensity model.

(ii) Dataset

We will use the UCI Online Retail dataset (CC BY 4.0), which comprises purchase transactions for a UK online retailer between December 2010 and December 2011.

(iii) Feature Engineering Approach

We will keep the feature engineering scope simple by limiting it to the following features (based on a 90-day lookback window unless otherwise stated):

Recency, Frequency, Monetary Value (RFM) features

- recency_days: Days since last purchase

- frequency: Number of distinct orders

- monetary: Total monetary spend

- tenure_days: Days since first-ever purchase (all-time)

Customer behavioral features

- avg_order_value: Mean spend per order

- avg_basket_size: Mean number of items per order

- n_unique_products: Product variety

- return_rate: Share of cancelled orders

- avg_days_between_purchases: Mean days between purchases

(iv) Rolling Window Design

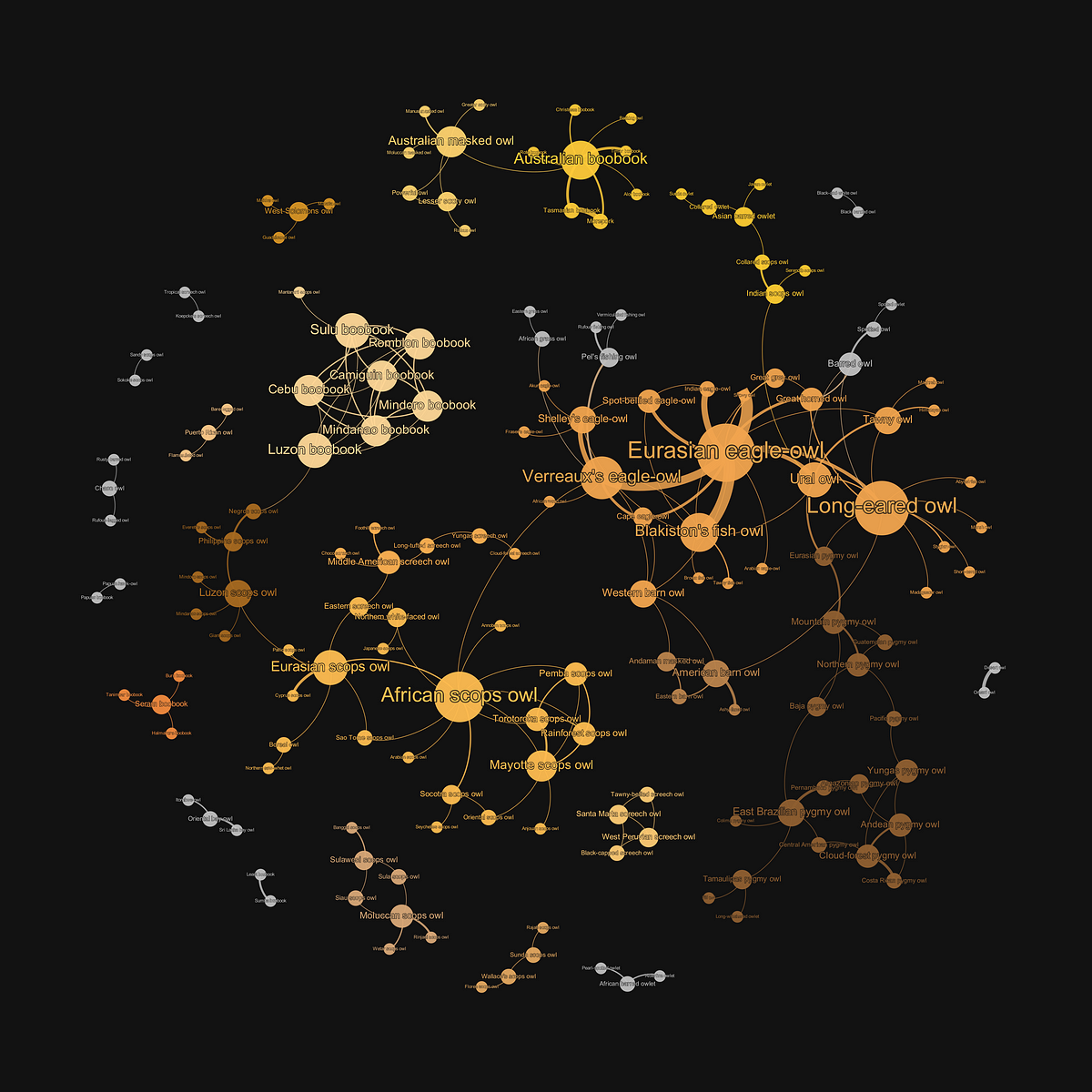

The features are computed from a 90-day window before each cutoff date, and purchase labels (1 = at least one purchase, 0 = no purchase) are computed from a 30-day window after each cutoff.

Given that the cutoff dates are spaced 30 days apart, this produces nine snapshots from the dataset:
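The rolling design can be sketched in a few lines of standard-library Python. The first cutoff date used here (1 March 2011) is a hypothetical choice that leaves room for a 90-day lookback inside the dataset; the helper names `rolling_cutoffs` and `windows_for` are also assumptions made for illustration.

```python
from datetime import date, timedelta


def rolling_cutoffs(first_cutoff, n_snapshots=9, step_days=30):
    """Return cutoff dates spaced `step_days` apart, starting at `first_cutoff`."""
    return [first_cutoff + timedelta(days=step_days * i) for i in range(n_snapshots)]


def windows_for(cutoff, lookback_days=90, label_days=30):
    """Return the feature window start and label window end around one cutoff."""
    return {
        "feature_start": cutoff - timedelta(days=lookback_days),
        "cutoff": cutoff,
        "label_end": cutoff + timedelta(days=label_days),
    }


# Hypothetical first cutoff; the repo may use different dates
cutoffs = rolling_cutoffs(date(2011, 3, 1))
first_window = windows_for(cutoffs[0])
```

With this starting point, the last label window ends in late November 2011, which stays inside the date range covered by the dataset.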

(i) About Feast

First, let’s understand what a feature store is.

A feature store is a centralized data repository that manages, stores, and serves machine learning features, acting as a single source of truth for both training and serving.

Feature stores offer key benefits in managing feature pipelines:

- Enforce consistency between training and serving data

- Prevent data leakage by ensuring features use only data available at the time of prediction (i.e., point-in-time correct data)

- Allow cross-team reuse of features and feature pipelines

- Track feature versions, lineage, and metadata for governance

Feast (short for Feature Store) is an open-source feature store that delivers feature data at scale during training and inference.

It integrates with multiple database backends and ML frameworks that can work across or off cloud platforms.

Feast supports both online (for real-time inference) and offline (for batch predictions) feature stores, though our focus is on offline features, as batch prediction is more relevant for our purchase propensity use case.

(ii) About Ray

Ray is an open-source, general-purpose distributed computing framework designed to scale ML applications from a single machine to large clusters. It can run on any machine, cluster, cloud provider, or Kubernetes.

Ray offers a range of capabilities, and the one we will use is the core distributed runtime called Ray Core.

Ray Core provides low-level primitives for the parallel execution of Python functions as distributed tasks and for managing tasks across available compute resources.

Let’s look at the areas where Feast and Ray help address feature engineering challenges.

(i) Feature Store Setup with Feast

For our case, we will set up an offline feature store using Feast. Our RFM and customer behavior features will be registered in the feature store for centralized access.

In Feast terminology, offline features are also termed ‘historical’ features.

(ii) Feature Retrieval with Feast and Ray

With our Feast feature store ready, we can enable the retrieval of relevant features from it during both model training and inference.

We must first be clear about these three concepts: Entity, Feature, and Feature View.

- An entity is the primary key used to retrieve features. It refers to the identifier “object” for each feature row (e.g., user_id, account_id, etc.)

- A feature is a single typed attribute associated with each entity (e.g., avg_basket_size)

- A feature view defines a group of related features for an entity, sourced from a dataset. Think of it as a table with a primary key (e.g., user_id) coupled with related feature columns.

Event timestamps are an essential component of feature views, as they allow us to generate point-in-time correct feature data for training and inference.

Say we now want to obtain these offline features for training or inference. Here’s how it is done:

- An entity DataFrame is first created, containing the entity keys and an event timestamp for each row. It corresponds to the two left-most columns in Fig. 5 above.

- A point-in-time correct join occurs between the entity DataFrame and the feature tables defined by the different Feature Views

The output is a combined dataset containing all the requested features for the specified set of entities and timestamps.
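The idea behind a point-in-time correct join can be illustrated with a small pure-Python sketch: for each entity row, take the most recent feature value whose timestamp is not later than the entity's event timestamp. This is only a conceptual illustration with made-up data and a hypothetical `point_in_time_join` helper; it is not how Feast implements the join, and it ignores details such as a feature view's time-to-live.

```python
from datetime import date

# Hypothetical feature table: (customer_id, event_timestamp, avg_basket_size)
feature_rows = [
    (1, date(2011, 3, 1), 4.0),
    (1, date(2011, 3, 31), 6.5),
    (2, date(2011, 3, 31), 2.0),
]

# Hypothetical entity rows: (customer_id, event_timestamp)
entity_rows = [
    (1, date(2011, 3, 15)),
    (1, date(2011, 4, 5)),
    (2, date(2011, 3, 15)),
]


def point_in_time_join(entities, features):
    """Attach the latest feature value known at each entity timestamp."""
    joined = []
    for customer_id, as_of in entities:
        # Only feature rows at or before the entity timestamp are eligible,
        # so no information from the future leaks into the row
        candidates = [
            (ts, value)
            for cid, ts, value in features
            if cid == customer_id and ts <= as_of
        ]
        latest = max(candidates, key=lambda c: c[0])[1] if candidates else None
        joined.append((customer_id, as_of, latest))
    return joined


joined_rows = point_in_time_join(entity_rows, feature_rows)
```

Customer 2 gets no value on 15 March because its only feature row is dated later, which is the leakage protection the join is meant to provide.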

So where does Ray come in here?

The Ray Offline Store is a distributed compute engine that enables faster, more scalable feature retrieval, especially for large datasets. It does so by parallelizing data access and join operations:

- Data (I/O) Access: Distributed data reads by splitting Parquet files across multiple workers, where each worker reads a different partition in parallel

- Join Operations: Splits the entity DataFrame so that each partition independently performs temporal joins to retrieve the feature values per entity before a given timestamp. With multiple feature views, Ray parallelizes the computationally intensive joins to scale efficiently.

(iii) Feature Engineering with Ray

The feature engineering function for generating RFM and customer behavior features must be applied to each 90-day window (i.e., nine independent cutoff dates, each requiring the same computation).

Ray Core turns each function call into a remote task, enabling the feature engineering to run in parallel across available cores (or machines in a cluster).

(4.1) Initial Setup

We install the following Python dependencies:

feast[ray]==0.60.0

openpyxl==3.1.5

psycopg2-binary==2.9.11

ray==2.54.0

scikit-learn==1.8.0

xgboost==3.2.0

As we will use PostgreSQL for the feature registry, make sure that Docker is installed and running before running docker compose up -d to start the PostgreSQL container.

(4.2) Prepare Data

Apart from data ingestion and cleaning, there are two preparation steps to execute:

- Rolling Cutoff Generation: Creates nine snapshots spaced 30 days apart. Each cutoff date defines a training/prediction point at which features are computed from the 90 days preceding it, and target labels are computed from the 30 days after it.

- Label Creation: For each cutoff, create a binary target label indicating whether a customer made at least one purchase within the 30-day window after the cutoff.
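As a rough illustration of the label creation step, the sketch below assigns 1 to customers with at least one purchase in the 30 days after a cutoff and 0 otherwise. The `make_labels` helper, the sample purchases, and the choice to treat the label window as starting on the cutoff date itself are assumptions; the repo's implementation may differ.

```python
from datetime import date, timedelta


def make_labels(customer_ids, purchases, cutoff, label_days=30):
    """Return {customer_id: 1 or 0} for purchases in [cutoff, cutoff + label_days)."""
    label_end = cutoff + timedelta(days=label_days)
    buyers = {
        customer_id
        for customer_id, purchase_date in purchases
        if cutoff <= purchase_date < label_end
    }
    return {customer_id: int(customer_id in buyers) for customer_id in customer_ids}


# Hypothetical purchases: (customer_id, purchase_date)
purchases = [
    (1, date(2011, 3, 15)),  # inside the label window
    (2, date(2011, 4, 20)),  # after the label window
    (3, date(2011, 2, 10)),  # before the cutoff
]
labels = make_labels([1, 2, 3], purchases, cutoff=date(2011, 3, 1))
```

Passing the full list of customer IDs explicitly ensures that customers without any purchase in the window still receive a 0 label instead of being dropped.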

(4.3) Run Ray-Based Feature Engineering

After defining the code to generate RFM and customer behavior features, let’s parallelize the execution using Ray for each rolling window.

We start by creating a function (compute_features_for_cutoff) to wrap all the relevant feature engineering steps for each cutoff:

The @ray.remote decorator registers the function as a remote task to be run asynchronously in separate workers.

The data preparation and feature engineering pipeline is then run as follows:

Here’s how Ray is involved in the pipeline:

- ray.init() initiates a Ray cluster and enables distributed execution across all local cores by default.

- ray.put(df) stores the cleaned DataFrame in Ray’s shared memory (aka distributed object store) and returns a reference (ObjectRef) so that all parallel tasks can access the DataFrame without copying it. This helps to improve memory efficiency and task launch performance.

- compute_features_for_cutoff.remote(...) sends our feature computation tasks to Ray’s scheduler, where Ray assigns each task to a worker for parallel execution and returns a reference to each task’s output.

- futures = [...] stores all references returned by each .remote() call. They represent all the in-flight parallel tasks that have been launched.

- ray.get(futures) retrieves all the actual return values from the parallel task executions in one go.

- The script then extracts and concatenates the per-cutoff RFM and behavior features into two DataFrames and saves them locally as Parquet files.

- ray.shutdown() releases the allocated resources by stopping the Ray runtime.

While our features are stored locally in this case, do note that offline feature data is typically stored in data warehouses or data lakes (e.g., S3, BigQuery, etc.) in production settings.

(4.4) Set Up Feast Feature Registry

So far, we have covered the transformation and storage aspects of feature engineering. Let us move on to the Feast feature registry.

A feature registry is the centralized catalog of feature definitions and metadata that serves as a single source of truth for feature information.

There are two key components in the registry setup: Definitions and Configuration.

Definitions

We first define the Python objects to represent the features engineered so far. For example, one of the first objects to determine is the Entity (i.e., the primary key that links the feature rows):

Next, we define the data sources in which our feature data are stored:

Note that the timestamp_field is critical, as it enables correct point-in-time data views and joins when features are retrieved for training or inference.

After defining entities and data sources, we can define the feature views. Given that we have two sets of features (RFM and customer behavior), we expect to have two feature views:

The schema (field names, dtypes) is important for ensuring that feature data is properly validated and registered.

Configuration

The feature registry configuration is defined in a YAML file called feature_store.yaml:

The configuration tells Feast what infrastructure to use and where its metadata and feature data live, and it generally comprises the following:

- Project name: Namespace for the project

- Provider: Execution environment (e.g., local, Kubernetes, cloud)

- Registry location: Location of feature metadata storage (file or databases like PostgreSQL)

- Offline store: Location from which historical feature data is read

- Online store: Location from which low-latency features are served (not relevant in our case)

In our case, we use PostgreSQL (running in a Docker container) for the feature registry and the Ray offline store for optimized feature retrieval.

We use PostgreSQL instead of local SQLite to simulate production-grade infrastructure for the feature registry setup, where multiple services can access the registry concurrently.

Feast Apply

Once the definitions and configuration are set up, we run feast apply to register and synchronize the definitions with the registry and provision the required infrastructure.

The command can be found in the Makefile:

# Step 2: Register Feast feature definitions in PostgreSQL registry

apply:

	cd feature_store && feast apply

(4.5) Retrieve Features for Model Training

Once our feature store is ready, we proceed with training the ML model.

We start by creating the entity spine for retrieval (i.e., the two columns of customer_id and event_timestamp), which Feast uses to retrieve the correct feature snapshot.

We then execute the retrieval of features for model training at runtime:

- FeatureStore is the Feast object that is used to define, create, and retrieve features at runtime

- get_historical_features() is designed for offline feature retrieval (as opposed to get_online_features()), and it expects the entity DataFrame and the list of features to retrieve. The distributed reads and point-in-time joins of feature data occur here.

(4.7) Retrieve Features for Inference

We end by generating predictions from our trained model.

The feature retrieval code for inference is largely similar to that for training, since we are reaping the benefits of a consistent feature store.

The main difference comes from the different cutoff dates used.

Wrapping It Up

Feature engineering is a crucial component of building ML models, but it also introduces data management challenges if not properly handled.

In this article, we demonstrated how to use Feast and Ray to improve the management, reusability, and efficiency of feature engineering.

Understanding and applying these concepts will enable teams to build efficient ML pipelines with scalable feature engineering capabilities.