In this article, I’ll demonstrate how to move from merely forecasting outcomes to actively intervening in systems to steer toward desired targets. With hands-on examples in predictive maintenance, I’ll show how data-driven decisions can optimize operations and reduce downtime.

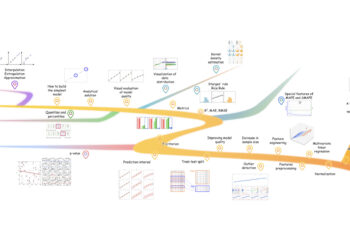

Most analyses start with descriptive analysis to investigate “what has happened”. In predictive analysis, we aim for insights and determine “what is going to happen”. With Bayesian prescriptive modeling, we can go beyond prediction and aim to intervene in the outcome. I’ll demonstrate how you can use data to “make it happen”. To do this, we need to understand the complex relationships between variables in a (closed) system. Modeling causal networks is key, and in addition, we need to make inferences to quantify how an intervention affects the desired outcome. In this article, I’ll start by briefly explaining the theoretical background. In the second part, I’ll demonstrate how to build causal models that guide decision-making for predictive maintenance. Finally, I’ll explain that in real-world scenarios, there is another important factor that needs to be considered: how cost-effective is it to prevent failures? I’ll use bnlearn for Python across all my analyses.

This blog contains hands-on examples! This will help you to learn quicker, understand better, and remember longer. Grab a coffee and try it out! Disclosure: I’m the author of the Python package bnlearn.

What You Need To Know About Prescriptive Analysis: A Brief Introduction.

Prescriptive analysis may be the most powerful way to understand your business performance and trends, and to optimize for efficiency, but it is certainly not the first step you take in your analysis. The first step should be, as always, understanding the data in terms of descriptive analysis with Exploratory Data Analysis (EDA). This is the step where we need to figure out “what has happened”. This is super important because it provides us with deeper insights into the variables and their dependencies in the system, which subsequently helps to clean, normalize, and standardize the variables in our data set. A cleaned data set is the foundation of every analysis.

With the cleaned data set, we can start working on our prescriptive model. In general, these types of analysis need a lot of data. The reason is simple: the better we can learn a model that fits the data accurately, the better we can detect causal relationships. In this article, I’ll use the notion of ‘system’ frequently, so let me first define it. A system, in the context of prescriptive analysis and causal modeling, is a set of measurable variables or processes that influence each other and produce outcomes over time. Some variables will be the key players (the drivers), while others are less relevant (the passengers).

For example, suppose we have a healthcare system that contains information about patients with their symptoms, treatments, genetics, environmental variables, and behavioral information. If we understand the causal process, we can intervene by influencing one or more driver variables. To improve the patient’s outcome, we may only need a relatively small change, such as improving their diet. Importantly, the variable that we aim to influence must be a driver variable for the intervention to be impactful. Generally speaking, changing variables for a desired outcome is something we do in our daily lives, from closing the window to prevent rain from coming in, to following the advice of friends, family, or professionals for a specific outcome. But this can be a trial-and-error procedure. With prescriptive analysis, we aim to determine the driver variables and then quantify what happens on intervention.

With prescriptive analysis, we first need to distinguish the driver variables from the passengers, and then quantify what happens on intervention.

Throughout this article, I’ll focus on applications with systems that include physical components, such as bridges, pumps, and dikes, together with environmental variables such as rainfall, river levels, and soil erosion, and human decisions (e.g., maintenance schedules and costs). The field of water management offers classic cases of complex systems where prescriptive analysis can provide serious value. A great candidate for prescriptive analysis is predictive maintenance, which can increase operational time and decrease costs. Such systems often contain various sensors, making them data-rich. At the same time, the variables in these systems are often interdependent, meaning that actions in one part of the system often ripple through and affect others. For example, opening a floodgate upstream can change water pressure and flow dynamics downstream. This interconnectedness is exactly why understanding causal relationships is important. When we understand the crucial parts of the entire system, we can intervene more accurately. With Bayesian modeling, we aim to uncover and quantify these causal relationships.

Variables in systems are often interdependent, meaning that an intervention in one part of the system often ripples through and affects others.

In the next section, I’ll start with an introduction to Bayesian networks, together with practical examples. This will help you to better understand the real-world use case in the coming sections.

Bayesian Networks and Causal Inference: The Building Blocks.

At its core, a Bayesian network is a graphical model that represents probabilistic relationships between variables. These networks with causal relationships are powerful tools for prescriptive modeling. Let’s break this down using a classic example: the sprinkler system. Suppose you’re trying to figure out why your grass is wet. One possibility is that you turned on the sprinkler; another is that it rained. The weather plays a role too; on cloudy days, it’s more likely to rain, and the sprinkler might behave differently depending on the forecast. These dependencies form a network of causal relationships that we can model. With bnlearn for Python, we can model the relationships as shown in the code block:

# Install the Python bnlearn package (run in a terminal)
pip install bnlearn

# Import library
import bnlearn as bn

# Define the causal relationships
edges = [('Cloudy', 'Sprinkler'),
         ('Cloudy', 'Rain'),
         ('Sprinkler', 'Wet_Grass'),
         ('Rain', 'Wet_Grass')]

# Create the Bayesian network
DAG = bn.make_DAG(edges)

# Visualize the network
bn.plot(DAG)

This creates a Directed Acyclic Graph (DAG) where each node represents a variable, each edge represents a causal relationship, and the direction of the edge shows the direction of causality. So far, we have not modeled any data, but only provided the causal structure based on our own domain knowledge about the weather together with our understanding or hypothesis of the system. It is important to know that such a DAG forms the basis for Bayesian learning! We can thus either create the DAG ourselves or learn the structure from data using structure learning. See the next section on how to learn the DAG from data.
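To make the "acyclic" part of a DAG concrete, here is a minimal sketch in plain Python that checks whether an edge list has a valid topological order (Kahn's algorithm). It is independent of bnlearn; the helper name `topological_order` is my own illustration and not part of any library.

```python
from collections import deque

def topological_order(edges):
    """Return the nodes in topological order, or raise ValueError on a cycle."""
    children, indegree = {}, {}
    for parent, child in edges:
        for node in (parent, child):
            children.setdefault(node, [])
            indegree.setdefault(node, 0)
        children[parent].append(child)
        indegree[child] += 1

    # Start with the nodes that have no parents (the roots).
    queue = deque(node for node, degree in indegree.items() if degree == 0)
    order = []
    while queue:
        node = queue.popleft()
        order.append(node)
        for child in children[node]:
            indegree[child] -= 1
            if indegree[child] == 0:
                queue.append(child)

    # If some nodes were never released, they are part of a cycle.
    if len(order) != len(indegree):
        raise ValueError("The edge list contains a cycle, so it is not a DAG.")
    return order

edges = [('Cloudy', 'Sprinkler'), ('Cloudy', 'Rain'),
         ('Sprinkler', 'Wet_Grass'), ('Rain', 'Wet_Grass')]
order = topological_order(edges)
print(order)
```

For the sprinkler edges, causes always appear before their effects in the returned order. Adding an edge such as ('Wet_Grass', 'Cloudy') would close a loop and raise the error.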

Learning Structure from Data.

On many occasions, we don’t know the causal relationships beforehand, but we have data that we can use to learn the structure. The bnlearn library provides several structure-learning approaches that can be chosen based on the type of input data (discrete, continuous, or mixed data sets): the PC algorithm (named after Peter and Clark), Exhaustive-Search, Hillclimb-Search, Chow-Liu, Naivebayes, TAN, or Ica-lingam. The choice of algorithm also depends on the type of network you aim for. You can, for example, set a root node if you have a good reason for this. The code block below learns the structure of the network from a dataframe in which the variables are categorical. The output is a DAG that is similar to that of Figure 1.

# Import library
import bnlearn as bn

# Load Sprinkler data set
df = bn.import_example(data='sprinkler')

# Show dataframe
print(df)

+--------+------------+------+------------+
| Cloudy | Sprinkler  | Rain | Wet_Grass  |
+--------+------------+------+------------+
|   0    |     0      |  0   |     0      |
|   1    |     0      |  1   |     1      |
|   0    |     1      |  0   |     1      |
|   1    |     1      |  1   |     1      |
|   1    |     1      |  1   |     1      |
|  ...   |    ...     | ...  |    ...     |
|  1000  |     1      |  0   |     0      |
+--------+------------+------+------------+

# Structure learning
model = bn.structure_learning.fit(df)

# Visualize the learned network
bn.plot(model)

DAGs Matter for Causal Inference.

The bottom line is that Directed Acyclic Graphs (DAGs) depict the causal relationships between the variables. This learned model forms the basis for making inferences and answering questions like:

- If we change X, what happens to Y?

- What is the effect of intervening on X while holding the others constant?

Making inferences is crucial for prescriptive modeling because it helps us understand and quantify the impact of the variables on intervention. As mentioned before, not all variables in systems are of interest or subject to intervention. In our simple use case, we can intervene on Wet Grass through the Sprinkler, but we cannot intervene on Wet Grass through Rain or Cloudy conditions because we cannot control the weather. In the next section, I’ll dive into the hands-on use case with a real-world example on predictive maintenance. I’ll demonstrate how to build and visualize causal models, how to learn structure from data, make interventions, and then quantify the intervention using inferences.
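The difference between observing X and intervening on X can be sketched with the sprinkler network in plain Python. The conditional probabilities below are illustrative assumptions that I chose by hand, not values learned from the sprinkler data set, and the function names are hypothetical. An intervention is modeled by cutting the Cloudy → Sprinkler edge and forcing the sprinkler on.

```python
from itertools import product

# Illustrative CPTs (assumed values, not learned from data).
P_CLOUDY = 0.5                      # P(Cloudy=1)
P_SPRINKLER = {0: 0.5, 1: 0.1}      # P(Sprinkler=1 | Cloudy)
P_RAIN = {0: 0.2, 1: 0.8}           # P(Rain=1 | Cloudy)
P_WET = {(0, 0): 0.0, (0, 1): 0.9,  # P(Wet_Grass=1 | Sprinkler, Rain)
         (1, 0): 0.9, (1, 1): 0.99}

def bernoulli(p_one, value):
    """Probability of a binary value given P(value=1)."""
    return p_one if value == 1 else 1.0 - p_one

def joint(c, s, r, w, do_sprinkler=None):
    """Joint probability; with do_sprinkler set, Sprinkler ignores Cloudy."""
    p_s = bernoulli(P_SPRINKLER[c], s)
    if do_sprinkler is not None:
        # Intervention: cut the Cloudy -> Sprinkler edge and force the value.
        p_s = 1.0 if s == do_sprinkler else 0.0
    return (bernoulli(P_CLOUDY, c) * p_s
            * bernoulli(P_RAIN[c], r) * bernoulli(P_WET[(s, r)], w))

def p_wet_given_sprinkler_on(do=False):
    """P(Wet_Grass=1 | Sprinkler=1), observed or under do(Sprinkler=1)."""
    do_value = 1 if do else None
    numerator = sum(joint(c, 1, r, 1, do_value)
                    for c, r in product((0, 1), repeat=2))
    denominator = sum(joint(c, 1, r, w, do_value)
                      for c, r, w in product((0, 1), repeat=3))
    return numerator / denominator

observed = p_wet_given_sprinkler_on(do=False)
intervened = p_wet_given_sprinkler_on(do=True)
print(round(observed, 3), round(intervened, 3))
```

With these assumed numbers, seeing the sprinkler on gives about 0.927, while switching it on ourselves gives about 0.945. Observing the sprinkler on makes a cloudy (and thus rainy) day less likely, whereas forcing it on tells us nothing about the weather.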

Generate Synthetic Data in Case You Only Have Experts’ Knowledge or Few Samples.

In many domains, such as healthcare, finance, cybersecurity, and autonomous systems, real-world data can be sensitive, expensive, imbalanced, or difficult to collect, particularly for rare or edge-case scenarios. This is where synthetic data becomes a powerful alternative. There are, roughly speaking, two main categories of creating synthetic data: probabilistic and generative. In case you need more data, I would recommend reading this blog [3]. It discusses various concepts of synthetic data generation together with hands-on examples. Among the discussed points are:

- Generate synthetic data that mimics existing continuous measurements (expected with independent variables).

- Generate synthetic data that mimics expert knowledge (expected to be continuous and with independent variables).

- Generate synthetic data that mimics an existing categorical data set (expected with dependent variables).

- Generate synthetic data that mimics expert knowledge (expected to be categorical and with dependent variables).

A Real-World Use Case in Predictive Maintenance.

So far, I have briefly described the Bayesian theory and demonstrated how to learn structures using the sprinkler data set. In this section, we will work with a complex real-world data set to determine the causal relationships, perform inferences, and assess whether we can recommend interventions in the system to change the outcome of machine failures. Suppose you’re responsible for the engines that operate a water lock, and you’re trying to understand what factors drive potential machine failures because your goal is to keep the engines running without failures. In the following sections, we will go stepwise through the data modeling parts and try to figure out how we can keep the engines running without failures.

Step 1: Data Understanding.

The data set we will use is a predictive maintenance data set [1] (CC BY 4.0 licence). It captures a simulated but realistic representation of sensor data from machinery over time. In our case, we treat this as if it were collected from a complex infrastructure system, such as the motors controlling a water lock, where equipment reliability is critical. See the code block below to load the data set.

# Import library

import bnlearn as bn

# Load data set
df = bn.import_example('predictive_maintenance')

# Print dataframe
print(df)

+-------+------------+------+------------------+----+-----+-----+-----+-----+
| UDI   | Product ID | Type | Air temperature  | .. | HDF | PWF | OSF | RNF |
+-------+------------+------+------------------+----+-----+-----+-----+-----+
| 1     | M14860     | M    | 298.1            | .. | 0   | 0   | 0   | 0   |
| 2     | L47181     | L    | 298.2            | .. | 0   | 0   | 0   | 0   |
| 3     | L47182     | L    | 298.1            | .. | 0   | 0   | 0   | 0   |
| 4     | L47183     | L    | 298.2            | .. | 0   | 0   | 0   | 0   |
| 5     | L47184     | L    | 298.2            | .. | 0   | 0   | 0   | 0   |
| ...   | ...        | ...  | ...              | .. | ... | ... | ... | ... |
| 9996  | M24855     | M    | 298.8            | .. | 0   | 0   | 0   | 0   |
| 9997  | H39410     | H    | 298.9            | .. | 0   | 0   | 0   | 0   |
| 9998  | M24857     | M    | 299.0            | .. | 0   | 0   | 0   | 0   |
| 9999  | H39412     | H    | 299.0            | .. | 0   | 0   | 0   | 0   |
| 10000 | M24859     | M    | 299.0            | .. | 0   | 0   | 0   | 0   |
+-------+------------+------+------------------+----+-----+-----+-----+-----+

[10000 rows x 14 columns]

The predictive maintenance data set is a so-called mixed-type data set containing a combination of continuous, categorical, and binary variables. It captures operational data from machines, including both sensor readings and failure events. For instance, it includes physical measurements like rotational speed, torque, and tool wear (all continuous variables reflecting how the machine is behaving over time). Alongside these, we have categorical information such as the machine type and environmental data like air temperature. The data set also records whether specific types of failures occurred, such as tool wear failure or heat dissipation failure, represented as binary variables. This combination of variables allows us to not only observe what happens under different conditions but also explore the potential causal relationships that might drive machine failures.

Step 2: Data Cleaning.

Before we can begin learning the causal structure of this system using Bayesian methods, we need to perform some pre-processing steps. The first step is to remove irrelevant columns, such as unique identifiers (UDI and Product ID), which hold no meaningful information for modeling. This data set contains no missing values, so nothing needs to be imputed or removed. If there were missing values, bnlearn provides two imputation methods for handling them, namely the K-Nearest Neighbor imputer (knn_imputer) and the MICE imputation approach (mice_imputer). Both methods follow a two-step approach in which first the numerical values are imputed, then the categorical values. This two-step approach is an enhancement on existing methods for handling missing values in mixed-type data sets.

# Remove IDs from dataframe
del df['UDI']
del df['Product ID']

Step 3: Discretization Using Probability Density Functions.

Most Bayesian models are designed to model categorical variables. Continuous variables can distort computations because they require assumptions about the underlying distributions, which are not always easy to validate. For data sets that contain both continuous and discrete variables, it is best to discretize the continuous variables. There are multiple strategies for discretization, and in bnlearn the following solutions are implemented:

- Discretize using probability density fitting. This approach automatically fits the best distribution for the variable and bins it into 95% confidence intervals (the thresholds can be adjusted). A semi-automatic approach is recommended because the default CII (upper, lower) intervals may not correspond to meaningful domain-specific boundaries.

- Discretize using a principled Bayesian discretization method. This approach requires providing the DAG before applying the discretization method. The underlying idea is that experts’ knowledge is included in the discretization approach, which therefore improves the accuracy of the binning.

- Do not discretize but model continuous and hybrid data sets in a semi-parametric approach. Two approaches implemented in bnlearn can handle mixed data sets: Direct-lingam and Ica-lingam, which both assume linear relationships.

- Manually discretize using the expert’s domain knowledge. Such a solution can be useful, but it requires expert-level mechanical knowledge or access to detailed operational thresholds. A limitation is that it can introduce bias into the variables, as the thresholds reflect subjective assumptions and may not capture the true underlying variability or relationships in the data.

Approaches 2 and 3 may be less suitable for our current use case because Bayesian discretization methods often require strong priors or assumptions about the system (DAG) that I cannot confidently provide. The semi-parametric approach, on the other hand, may introduce unnecessary complexity for this relatively small data set. The discretization approach that I will use is a combination of probability density fitting [3] with the specifications about the operation ranges of the mechanical devices. I don’t have expert-level mechanical knowledge to confidently set the thresholds. However, the specifications for normal mechanical operations are listed in the documentation [1]. Let me elaborate on this. The data set description lists the following specifications: Air temperature is measured in Kelvin and is around 300 K with a standard deviation of 2 K. The Process temperature within the manufacturing process is approximately the Air temperature plus 10 K. The Rotational speed of the machine is in revolutions per minute and is calculated from a power of 2860 W. The Torque is in Newton-meters and is around 40 Nm without negative values. The Tool wear is measured in cumulative minutes. With this information, we can define whether we need to set lower and/or upper boundaries for our probability density fitting approach.

See Table 2, where I defined normal and critical operation ranges, and the code block below to set the threshold values based on the data distributions of the variables.

# Install the distfit package (run in a terminal)
pip install distfit

# Import libraries
import matplotlib.pyplot as plt
import pandas as pd
from distfit import distfit

# Discretize the following columns
colnames = ['Air temperature [K]', 'Process temperature [K]', 'Rotational speed [rpm]', 'Torque [Nm]', 'Tool wear [min]']
colors = ['#87CEEB', '#FFA500', '#800080', '#FF4500', '#A9A9A9']

# Apply distribution fitting to each variable
for colname, color in zip(colnames, colors):
    # Initialize and set 95% confidence interval
    if colname == 'Tool wear [min]' or colname == 'Process temperature [K]':
        # Set model parameters to determine the medium-high ranges
        dist = distfit(alpha=0.05, bound='up', stats='RSS')
        labels = ['medium', 'high']
    else:
        # Set model parameters to determine the low-medium-high ranges
        dist = distfit(alpha=0.05, stats='RSS')
        labels = ['low', 'medium', 'high']

    # Distribution fitting
    dist.fit_transform(df[colname])

    # Plot
    dist.plot(title=colname, bar_properties={'color': color})
    plt.show()

    # Define bins based on distribution
    bins = [df[colname].min(), dist.model['CII_min_alpha'], dist.model['CII_max_alpha'], df[colname].max()]
    # Remove None (a one-sided bound leaves one threshold empty)
    bins = [x for x in bins if x is not None]

    # Discretize using the defined bins and add to dataframe
    df[colname + '_category'] = pd.cut(df[colname], bins=bins, labels=labels, include_lowest=True)
    # Delete the original column
    del df[colname]

This semi-automated approach determines the binning for each variable given the critical operation ranges. We fit a probability density function (PDF) to each continuous variable and use statistical properties, such as the 95% confidence interval, to define categories like low, medium, and high. This approach preserves the underlying distribution of the data and yields bins that are both statistically sound and interpretable, aligned with natural variations in the system. As always, plot the results and make sanity checks, as the resulting intervals may not always align with meaningful, domain-specific thresholds. See Figure 2 for the estimated PDFs and thresholds for the continuous variables. In this scenario, we see nicely that two variables are binned into medium-high, while the remaining ones are binned into low-medium-high.
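The idea of turning a fitted distribution into low/medium/high thresholds can be sketched with the standard library alone. This is a simplification under a stated assumption: it uses a normal distribution with the Air temperature specification from the data set description (around 300 K, standard deviation 2 K), whereas distfit searches for the best-fitting distribution. The helper `interval_labeler` is a hypothetical name of mine.

```python
from bisect import bisect_right
from statistics import NormalDist

def interval_labeler(mean, std, alpha=0.05):
    """Build a low/medium/high labeler from a two-sided (1 - alpha) interval.

    Assumes a normal distribution; distfit would pick the best-fitting one.
    """
    dist = NormalDist(mean, std)
    lower = dist.inv_cdf(alpha / 2)
    upper = dist.inv_cdf(1 - alpha / 2)
    labels = ['low', 'medium', 'high']

    def label(value):
        # bisect_right returns 0 below lower, 1 inside, 2 above upper.
        return labels[bisect_right([lower, upper], value)]

    return lower, upper, label

# Air temperature: around 300 K with a standard deviation of 2 K.
lower, upper, label = interval_labeler(mean=300.0, std=2.0)
print(round(lower, 2), round(upper, 2))
print([label(value) for value in (295.0, 300.0, 305.0)])
```

Values inside the central 95% interval are labeled medium, and only the tails become low or high. For a one-sided bound (as used for Tool wear and Process temperature), only the upper threshold would be kept.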

Step 4: The Final Cleaned Data Set.

At this point, we have a cleaned and discretized data set. The remaining variables in the data set are failure modes (TWF, HDF, PWF, OSF, RNF), which are boolean variables for which no transformation step is required. These variables are kept in the model because of their possible relationships with the other variables. For example, Torque can be linked to OSF (overstrain failure), Air temperature variations to HDF (heat dissipation failure), and Tool wear to TWF (tool wear failure). The data set description states that if at least one failure mode is true, the process fails, and the Machine Failure label is set to 1. It is, however, not clear which of the failure modes has caused the process to fail. In other words, the Machine Failure label is a composite outcome: it only tells you that something went wrong, but not which causal path led to the failure. In the final step, we will learn the structure to discover the causal network.
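The composite nature of the label can be illustrated with a few lines of Python. The records below are hypothetical examples that I made up, not rows from the data set, and `machine_failure` is an illustrative helper that simply applies the "at least one failure mode" rule from the data set description.

```python
FAILURE_MODES = ('TWF', 'HDF', 'PWF', 'OSF', 'RNF')

def machine_failure(record):
    """Composite label: 1 if at least one failure mode is set, else 0."""
    return int(any(record[mode] == 1 for mode in FAILURE_MODES))

# Hypothetical records for illustration only.
records = [
    {'TWF': 0, 'HDF': 0, 'PWF': 0, 'OSF': 0, 'RNF': 0},
    {'TWF': 0, 'HDF': 1, 'PWF': 0, 'OSF': 0, 'RNF': 0},
    {'TWF': 1, 'HDF': 0, 'PWF': 0, 'OSF': 1, 'RNF': 0},
]
labels = [machine_failure(record) for record in records]
print(labels)
```

The second and third records get the same label even though different failure modes are involved, which is why the label alone cannot tell us which causal path was responsible.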

Step 5: Learning the Causal Structure.

In this step, we will determine the causal relationships. In contrast to supervised Machine Learning approaches, we do not need to set a target variable such as Machine Failure. The Bayesian model will learn the causal relationships based on the data using a search strategy and a scoring function. A scoring function quantifies how well a specific DAG explains the observed data, and the search strategy efficiently walks through the search space of DAGs to eventually find a well-scoring DAG without testing all of them. For this use case, we will use HillClimbSearch as a search strategy and the Bayesian Information Criterion (BIC) as a scoring function. See the code block to learn the structure using Python bnlearn.

# Structure learning
model = bn.structure_learning.fit(df, methodtype='hc', scoretype='bic')
# [bnlearn] >Warning: Computing DAG with 12 nodes can take a very long time!
# [bnlearn] >Computing best DAG using [hc]
# [bnlearn] >Set scoring type at [bds]
# [bnlearn] >Compute structure scores for model comparison (higher is better).

print(model['structure_scores'])
# {'k2': -23261.534992034045,
# 'bic': -23296.9910477033,
# 'bdeu': -23325.348497769708,
# 'bds': -23397.741317668322}

# Compute edge weights using ChiSquare independence test.
model = bn.independence_test(model, df, test='chi_square', prune=True)

# Plot the best DAG
bn.plot(model, edge_labels='pvalue', params_static={'maxscale': 4, 'figsize': (15, 15), 'font_size': 14, 'arrowsize': 10})
dotgraph = bn.plot_graphviz(model, edge_labels='pvalue')
dotgraph

# Store to pdf
dotgraph.view(filename='bnlearn_predictive_maintanance')

Each model can be scored based on its structure. The scores do not have a straightforward interpretation, but they can be used to compare different models. A higher score represents a better fit, but remember that scores are usually log-likelihood based, so a less negative score is better. From the results, we can see that K2=-23261 scored the best, meaning that the learned structure had the best fit on the data.

However, the difference in score with BIC=-23296 is very small. I therefore prefer the DAG determined by BIC over K2, as DAGs detected by BIC are generally sparser, and thus cleaner, because BIC adds a penalty for complexity (number of parameters, number of edges). The K2 approach, on the other hand, determines the DAG purely on the likelihood or the fit on the data. Thus, there is no penalty for creating a more complex network (more edges, more parents). The causal DAG is shown in Figure 3, and in the next section I will interpret the results. This is exciting: does the DAG make sense, and can we actively intervene in the system toward our desired outcome? Keep on reading!
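The complexity penalty in BIC can be sketched in plain Python for discrete data. This is a simplified, self-written scorer (log-likelihood minus 0.5 · log(N) · number of free parameters), not the implementation used by bnlearn, and the two toy data sets are constructed by hand for illustration.

```python
from collections import Counter
from math import log

def bic_score(rows, parents):
    """BIC of a discrete DAG. rows: list of dicts; parents: node -> tuple of parents."""
    n = len(rows)
    cardinality = {node: len({row[node] for row in rows}) for node in parents}
    score = 0.0
    for node, node_parents in parents.items():
        # Count (parent configuration, node value) and parent configuration alone.
        joint = Counter((tuple(row[p] for p in node_parents), row[node]) for row in rows)
        config_counts = Counter(tuple(row[p] for p in node_parents) for row in rows)
        log_likelihood = sum(count * log(count / config_counts[config])
                             for (config, _), count in joint.items())
        n_configs = 1
        for p in node_parents:
            n_configs *= cardinality[p]
        n_params = (cardinality[node] - 1) * n_configs
        # Penalize every free parameter by 0.5 * log(N).
        score += log_likelihood - 0.5 * log(n) * n_params
    return score

def make_rows(counts):
    """Expand {(x, y): count} into a list of records."""
    return [{'X': x, 'Y': y} for (x, y), k in counts.items() for _ in range(k)]

# Toy data: one set where Y follows X, one where X and Y are unrelated.
dependent = make_rows({(0, 0): 45, (1, 1): 45, (0, 1): 5, (1, 0): 5})
independent = make_rows({(0, 0): 25, (0, 1): 25, (1, 0): 25, (1, 1): 25})

no_edge = {'X': (), 'Y': ()}
with_edge = {'X': (), 'Y': ('X',)}

for name, rows in (('dependent', dependent), ('independent', independent)):
    print(name, round(bic_score(rows, no_edge), 2), round(bic_score(rows, with_edge), 2))
```

On the dependent data the extra edge improves the fit by more than it costs, so the X → Y structure scores higher. On the independent data the edge adds parameters without improving the likelihood, so the penalty makes the sparser structure win.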

Identify Potential Interventions for Machine Failure.

I introduced the idea that Bayesian analysis enables active intervention in a system. This means that we can steer toward our desired outcomes, also known as prescriptive analysis. To do so, we first need a causal understanding of the system. At this point, we have obtained our DAG (Figure 3) and can start interpreting it to determine the possible driver variables of machine failures.

From Figure 3, it can be observed that the Machine Failure label is a composite outcome; it is influenced by multiple underlying variables. We can use the DAG to systematically identify the variables for intervention on machine failures. Let’s start by examining the root variable, which is PWF (Power Failure). The DAG shows that preventing power failures would directly contribute to preventing machine failures overall. Although this finding is intuitive (power issues lead to system failure), it is important to recognize that this conclusion has now been derived purely from data. If it had been a different variable, we would need to think about what it could mean and whether the DAG is accurate for our data set.

When we continue to examine the DAG, we see that Torque is linked to OSF (Overstrain Failure), Air Temperature is linked to HDF (Heat Dissipation Failure), and Tool Wear is linked to TWF (Tool Wear Failure). Ideally, we expect that failure modes (TWF, HDF, PWF, OSF, RNF) are effects, while physical variables like Torque, Air Temperature, and Tool Wear act as causes. Although structure learning detected these relationships quite well, it does not always capture the correct causal direction purely from observational data. Still, the discovered edges provide actionable starting points that can be used to design our interventions:

- Torque → OSF (Overstrain Failure): Actively monitoring and controlling torque levels can prevent overstrain-related failures.

- Air Temperature → HDF (Heat Dissipation Failure): Managing the ambient environment (e.g., through improved cooling systems) may reduce heat dissipation issues.

- Tool Wear → TWF (Tool Wear Failure): Real-time tool wear monitoring can prevent tool wear failures.

Furthermore, Random Failures (RNF) are not detected with any outgoing or incoming connections, indicating that such failures are truly stochastic within this data set and cannot be mitigated through interventions on observed variables. This is a great sanity check for the model because we would not expect RNF to be important in the DAG!

Quantify with Interventions.

Up to this point, we have learned the structure of the system and identified which variables can be targeted for intervention. However, we are not finished yet. To make these interventions meaningful, we must quantify the expected outcomes.

This is where inference in Bayesian networks comes into play. Let me elaborate a bit more on this: when I describe an intervention, I mean changing a variable in the system, like keeping Torque at a low level, reducing Tool Wear before it hits high values, or making sure Air Temperature stays stable. We need to reason over the learned model because the system is interdependent, and a change in one variable can ripple throughout the entire system.

To make these interventions meaningful, we must quantify the expected outcomes.

The use of inference is thus important, for various reasons: 1. Forward inference, where we aim to predict future outcomes given current evidence. 2. Backward inference, where we diagnose the most likely cause after an event has occurred. 3. Counterfactual inference, to simulate “what-if” scenarios. In the context of our predictive maintenance data set, inference can now help answer specific questions. But first, we need to learn the inference model, which is done easily as shown in the code block below. With the model, we can start asking questions and see how the effects ripple throughout the system.

# Learn inference model
model = bn.parameter_learning.fit(model, df, methodtype="bayes")

What is the probability of a Machine Failure if Torque is high?

q = bn.inference.fit(model, variables=['Machine failure'],
                     evidence={'Torque [Nm]_category': 'high'},
                     plot=True)

+-------------------+----------+
|   Machine failure |        p |
+===================+==========+
|                 0 | 0.584588 |
+-------------------+----------+
|                 1 | 0.415412 |
+-------------------+----------+

Machine failure = 0: No machine failure occurred.

Machine failure = 1: A machine failure occurred.

Given that the Torque is high:

There is about a 58.5% chance the machine will not fail.

There is about a 41.5% chance the machine will fail.

A high Torque value thus significantly increases the risk of machine failure. Think about it: without conditioning, machine failure probably happens at a much lower rate. Thus, controlling the torque and keeping it out of the high range could be an important prescriptive action to prevent failures.

If we manage to keep the Air Temperature in the medium range, how much does the probability of Heat Dissipation Failure decrease?

q = bn.inference.fit(model, variables=['HDF'],
                     evidence={'Air temperature [K]_category': 'medium'},
                     plot=True)

+-------+-----------+
|   HDF |         p |
+=======+===========+
|     0 |  0.972256 |
+-------+-----------+
|     1 | 0.0277441 |
+-------+-----------+

HDF = 0 means "no heat dissipation failure."

HDF = 1 means "there is a heat dissipation failure."

Given that the Air Temperature is kept at a medium level:

There is a 97.22% chance that no failure will happen.

There is only a 2.77% chance that a failure will happen.

Given that a Machine Failure has occurred, which failure mode (TWF, HDF, PWF, OSF, RNF) is the most probable cause?

q = bn.inference.fit(model, variables=['TWF', 'HDF', 'PWF', 'OSF'],
                     evidence={'Machine failure': 1},
                     plot=True)

+----+-------+-------+-------+-------+-------------+
|    |   TWF |   HDF |   PWF |   OSF |           p |
+====+=======+=======+=======+=======+=============+
|  0 |     0 |     0 |     0 |     0 | 0.0240521   |
+----+-------+-------+-------+-------+-------------+
|  1 |     0 |     0 |     0 |     1 | 0.210243    | <- OSF
+----+-------+-------+-------+-------+-------------+
|  2 |     0 |     0 |     1 |     0 | 0.207443    | <- PWF
+----+-------+-------+-------+-------+-------------+
|  3 |     0 |     0 |     1 |     1 | 0.0321357   |
+----+-------+-------+-------+-------+-------------+
|  4 |     0 |     1 |     0 |     0 | 0.245374    | <- HDF
+----+-------+-------+-------+-------+-------------+
|  5 |     0 |     1 |     0 |     1 | 0.0177909   |
+----+-------+-------+-------+-------+-------------+
|  6 |     0 |     1 |     1 |     0 | 0.0185796   |
+----+-------+-------+-------+-------+-------------+
|  7 |     0 |     1 |     1 |     1 | 0.00499062  |
+----+-------+-------+-------+-------+-------------+
|  8 |     1 |     0 |     0 |     0 | 0.21378     | <- TWF
+----+-------+-------+-------+-------+-------------+
|  9 |     1 |     0 |     0 |     1 | 0.00727977  |
+----+-------+-------+-------+-------+-------------+
| 10 |     1 |     0 |     1 |     0 | 0.00693896  |
+----+-------+-------+-------+-------+-------------+
| 11 |     1 |     0 |     1 |     1 | 0.00148291  |
+----+-------+-------+-------+-------+-------------+
| 12 |     1 |     1 |     0 |     0 | 0.00786678  |
+----+-------+-------+-------+-------+-------------+
| 13 |     1 |     1 |     0 |     1 | 0.000854361 |
+----+-------+-------+-------+-------+-------------+
| 14 |     1 |     1 |     1 |     0 | 0.000927891 |
+----+-------+-------+-------+-------+-------------+
| 15 |     1 |     1 |     1 |     1 | 0.000260654 |
+----+-------+-------+-------+-------+-------------+

Each row represents a possible combination of failure modes:

TWF: Tool Wear Failure

HDF: Heat Dissipation Failure

PWF: Power Failure

OSF: Overstrain Failure

Most of the time, when a machine failure occurs, it can be traced back to exactly one dominant failure mode:

HDF (24.5%)

TWF (21.4%)

OSF (21.0%)

PWF (20.7%)

Combined failures (e.g., HDF + PWF active at the same time) are much less frequent: each combination stays below 5%, and together they account for roughly 10% of the cases.
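As a quick sanity check on the split between single and combined failure modes, the joint table can be summed directly. The sketch below is plain Python and copies the probabilities from the table above; the names `joint` and `marginal` are illustrative helpers and not part of bnlearn.

```python
from itertools import product

modes = ["TWF", "HDF", "PWF", "OSF"]

# P(TWF, HDF, PWF, OSF | Machine failure = 1), copied row by row (rows 0..15)
probs = [
    0.0240521, 0.210243, 0.207443, 0.0321357,
    0.245374, 0.0177909, 0.0185796, 0.00499062,
    0.21378, 0.00727977, 0.00693896, 0.00148291,
    0.00786678, 0.000854361, 0.000927891, 0.000260654,
]

# Map each combination of 0/1 flags to its probability. product() yields the
# combinations in the same order as the table (OSF changes fastest).
joint = dict(zip(product([0, 1], repeat=4), probs))


def marginal(mode):
    """P(mode = 1 | Machine failure = 1), summed over the other modes."""
    i = modes.index(mode)
    return sum(p for flags, p in joint.items() if flags[i] == 1)


none_active = joint[(0, 0, 0, 0)]
single = sum(p for flags, p in joint.items() if sum(flags) == 1)
combined = sum(p for flags, p in joint.items() if sum(flags) >= 2)

print(f"none of the four modes: {none_active:.3f}")
print(f"exactly one mode:       {single:.3f}")
print(f"two or more modes:      {combined:.3f}")
for m in modes:
    print(f"P({m}=1 | failure) = {marginal(m):.3f}")
```

With the values from the table, exactly one active mode accounts for roughly 88% of the probability mass and two or more active modes for roughly 10%. The per-mode marginals also rank HDF first.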

When a machine fails, it is usually due to one specific failure mode and not a combination.

Heat Dissipation Failure (HDF) is the most common root cause (24.5%), but the others are very close.

Intervening on these individual failure types could considerably reduce machine failures.

I demonstrated three examples of using inference with interventions at different points. Remember that to make interventions meaningful, we must quantify the expected outcomes. If we don’t quantify how much these actions will change the probability of machine failure, we’re just guessing. The quantification, “If I lower Torque, what happens to the failure probability?”, is exactly what inference in Bayesian networks does: it updates the probabilities based on our intervention (the evidence) and then tells us how much impact our control action will have. I have one last section that I want to share, which is about cost-sensitive modeling. The question you should ask yourself is not just “Can I predict or prevent failures?” but also “How cost-effective is it?” Keep on reading into the next section!

Cost-Sensitive Modeling: Finding the Sweet Spot.

How cost-effective is it to prevent failures? This is the question you should ask yourself before “Can I prevent failures?”. When we build prescriptive maintenance models and recommend interventions based on model outputs, we must also understand the economic returns. This moves the discussion from pure model accuracy to a cost-optimization framework.

One way to do this is by translating the traditional confusion matrix into a cost-optimization matrix, as depicted in Figure 6. The confusion matrix has the four known states (A), but each state can have a different cost implication (B). For illustration, in Figure 6C, a premature replacement (false positive) costs €2000 in unnecessary maintenance. In contrast, missing a true failure (false negative) can cost €8000 (including €6000 damage and €2000 replacement costs). This asymmetry highlights why cost-sensitive modeling is essential: false negatives are 4x more costly than false positives.
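To make the asymmetry concrete, the sketch below turns the two costs from the example into an expected-cost comparison and a break-even failure probability. It is plain Python. The false-positive and false-negative costs come from the text. The costs assigned to a true positive (a planned €2000 replacement) and a true negative (€0), as well as all function names, are my assumptions for illustration.

```python
# Cost per outcome in euros.
COST_FP = 2000  # premature replacement (from the example above)
COST_FN = 8000  # missed failure: 6000 damage + 2000 replacement (from the example above)
COST_TP = 2000  # assumption: a correctly planned replacement
COST_TN = 0     # assumption: no action taken and no failure


def expected_cost(p_fail, intervene):
    """Expected cost of one decision, given the probability of failure."""
    if intervene:
        return p_fail * COST_TP + (1 - p_fail) * COST_FP
    return p_fail * COST_FN + (1 - p_fail) * COST_TN


def break_even_probability():
    """Failure probability above which intervening is cheaper in expectation."""
    extra_cost_if_healthy = COST_FP - COST_TN
    saving_if_failing = COST_FN - COST_TP
    return extra_cost_if_healthy / (extra_cost_if_healthy + saving_if_failing)


def should_intervene(p_fail):
    """True when intervening has the lower expected cost."""
    return expected_cost(p_fail, True) < expected_cost(p_fail, False)


threshold = break_even_probability()
print(f"break-even failure probability: {threshold:.2f}")

# 0.05 is a hypothetical low-risk case; 0.415412 is P(failure | Torque high).
for p in (0.05, 0.415412):
    print(p, "->", "intervene" if should_intervene(p) else "wait")
```

Under these assumptions the break-even point is a failure probability of 0.25. The 41.5% failure probability found for high Torque would therefore justify an intervention, while a 5% probability would not. Different cost assumptions shift the threshold, which is the point of making the costs explicit.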

In practice, we should therefore not only optimize for model performance but also minimize the total expected costs. A model with a higher false positive rate (premature replacement) can be preferable if it sufficiently reduces the number of far more expensive false negatives (failures). That said, this doesn’t mean that we should always opt for premature replacements because, besides the costs, there is also the timing of the replacement. In other words, when should we replace equipment?

The exact moment when equipment should be replaced or serviced is inherently uncertain. Mechanical processes with wear and tear are stochastic. Therefore, we cannot expect to know the precise point of optimal intervention. What we can do is look for the so-called sweet spot for maintenance, where intervention is most cost-effective, as depicted in Figure 7.

This figure shows how the costs of owning (orange) and repairing an asset (blue) evolve over time. At the start of an asset’s life, owning costs are high (but decrease steadily), while repair costs are low (but rise over time). When these two trends are combined, the total cost initially declines but then starts to increase again.

The sweet spot occurs in the period where the total cost of ownership and repair is at its lowest. Although the sweet spot can be estimated, it usually cannot be pinpointed exactly because real-world conditions vary. It is more practical to define a sweet-spot window. Good monitoring and data-driven strategies allow us to stay close to it and avoid the steep costs associated with unexpected failure later in the asset’s life. Acting during this sweet-spot window (e.g., replacing, overhauling, etc.) gives the best expected financial outcome. Intervening too early means missing out on usable life, while waiting too long leads to rising repair costs and an increased risk of failure. The main takeaway is that effective asset management aims to act near the sweet spot, avoiding both unnecessary early replacement and costly reactive maintenance after failure.
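The idea of a sweet-spot window can be sketched numerically. The example below is plain Python with entirely hypothetical cost curves: an owning cost that spreads a purchase price over the months in service, and a repair cost that grows linearly with age. The numbers, the 5% tolerance, and the function names are assumptions chosen for illustration, not values taken from Figure 7.

```python
PURCHASE_PRICE = 10_000  # hypothetical, in euros
REPAIR_GROWTH = 100      # hypothetical, euros of monthly repair cost added per month of age


def owning_cost(month):
    """Purchase price spread over the months in service (falls over time)."""
    return PURCHASE_PRICE / month


def repair_cost(month):
    """Monthly repair cost at a given age (rises over time)."""
    return REPAIR_GROWTH * month


def total_cost(month):
    """Combined monthly cost of owning and repairing the asset."""
    return owning_cost(month) + repair_cost(month)


months = range(1, 37)
best_month = min(months, key=total_cost)

# Sweet-spot window: every month whose total cost is within 5% of the minimum.
tolerance = 0.05
limit = total_cost(best_month) * (1 + tolerance)
window = [m for m in months if total_cost(m) <= limit]

print(f"lowest total cost at month {best_month}")
print(f"sweet-spot window: months {window[0]} to {window[-1]}")
```

With these toy curves the minimum falls at month 10 and the window spans months 8 to 13. In a real setting the curves would be estimated from maintenance records, and the tolerance would reflect how much extra cost is acceptable in exchange for scheduling flexibility.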

Wrapping up.

In this article, we moved from a raw data set to a causal Directed Acyclic Graph (DAG), which enabled us to go beyond descriptive statistics to prescriptive analysis. I demonstrated a data-driven approach to learn the causal structure of a data set and to identify which aspects of the system can be adjusted to reduce failure rates. Before making interventions, we must also perform inference, which gives us the updated probabilities when we fix (or observe) certain variables. Without this step, the intervention is just guessing because actions in one part of the system often ripple through and affect others. This interconnectedness is exactly why understanding causal relationships is so important.

Before moving into prescriptive analytics and taking action based on our analytical interventions, it is highly recommended to research whether the cost of failure outweighs the cost of maintenance. The challenge is to find the sweet spot: the point where the cost of preventive maintenance is balanced against the rising risk and cost of failure. I showed with Bayesian inference how variables like Torque can shift the failure probability. Such insights provide an understanding of the impact of an intervention. The timing of the intervention is crucial to make it cost-effective; being too early wastes resources, and being too late can result in high failure costs.

Just like all other models, Bayesian models are also “just” models, and the causal network needs experimental validation before making any critical decisions.

Be safe. Stay frosty.

Cheers, E.

You’ve come to the end of this article! I hope you enjoyed it and learned a lot! Experiment with the hands-on examples! It will help you to learn quicker, understand better, and remember longer.

References

- AI4I 2020 Predictive Maintenance Dataset. (2020). UCI Machine Learning Repository. Licensed under a Creative Commons Attribution 4.0 International (CC BY 4.0) license.

- E. Taskesen, bnlearn for Python library.

- E. Taskesen, How to Generate Synthetic Data: A Comprehensive Guide Using Bayesian Sampling and Univariate Distributions, Towards Data Science (TDS), May 2026