We were sizing a Kubernetes cluster for a large enterprise’s real-time inference on its customer-facing LLM product. We started with 64 H100 SXM GPUs across 8 nodes, all running vLLM in monolithic mode. The results were not where we wanted them to be. During prefill bursts the tensor cores hit 92% utilization. Ten milliseconds later, during decode, the same GPUs cratered to 28%. We were paying for 64 H100s and getting meaningful work out of maybe 20 of them for 90% of each request’s lifetime. The finance team wanted to know why the GPU bill looked like we were training a foundation model when we were just serving one.

A Llama 70B model running inference on an H100 GPU hits 92% compute utilization during prefill. Thirty milliseconds later, during decode, that same GPU drops to 30%. The hardware hasn’t changed. The model weights are identical. The arithmetic intensity of the workload fell by 5x between one phase and the next.

That mismatch sits underneath every inference cost problem, and most serving architectures pretend it doesn’t exist.

Every time a large language model processes a request, it does two different things. First, it reads your entire prompt in parallel, filling a key-value cache with the attention state of every input token. That’s the prefill phase. Then it generates output tokens one at a time, reading from that cache on each step. That’s decode. Prefill is a matrix multiplication problem. Decode is a memory bandwidth problem. They have opposite hardware requirements, and the standard practice is to run both on the same pool of GPUs.

That standard practice is expensive. The GPU that’s perfectly sized for your prefill burst is massively overprovisioned for decode. The GPU that’s cost-efficient for decode can’t keep up with prefill. You pay for the worst case in both directions.

Disaggregated inference splits these two phases onto separate hardware pools, each sized for what it actually does. The idea surfaced in a 2024 OSDI paper called DistServe, out of UC San Diego’s Hao AI Lab. Eighteen months later, Perplexity runs it in production. Meta, LinkedIn, and Mistral serve traffic through it. NVIDIA built an entire framework (Dynamo) around it. vLLM, SGLang, and TensorRT-LLM all support it natively.

Here’s how it works, where the trade-offs are, and when you shouldn’t bother.

The two phases of inference aren’t the same workload

If you’ve run inference on any transformer model, you’ve already encountered both phases. But most monitoring dashboards flatten them into a single “inference” metric, which hides the problem.

Prefill processes all input tokens simultaneously. For a 4,096-token prompt, the attention and feed-forward layers run large matrix multiplications across the full sequence length. This is compute-bound work. The GPU’s tensor cores are the bottleneck. On an H100 SXM, prefill achieves 200-400 arithmetic operations per byte of memory accessed. Utilization sits between 90% and 95%. The memory bandwidth, at 3.35 TB/s, is barely taxed.

Decode generates one token at a time. Each step reads the model weights and the entire KV-cache from HBM to compute a single new token per sequence. The tensor cores finish in microseconds and then wait for the next memory read. Arithmetic intensity drops to 60-80 ops/byte. GPU utilization falls to 20-40%. The tensor cores sit idle while the memory bus saturates.
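A rough roofline calculation makes the gap concrete. This is a minimal sketch. It assumes about 989 TFLOPS of dense BF16 compute and 3.35 TB/s of HBM bandwidth for an H100 SXM, treating those datasheet figures as assumptions. It models only one weight matrix multiplication: the weights are read once per step, and every token in the batch reuses them. It ignores attention and KV-cache reads, so real decode intensity is lower still.

```python
# Simplified roofline model for one linear layer (weights only, BF16).
# Assumed H100 SXM peak figures; substitute your own hardware numbers.
PEAK_FLOPS = 989e12           # dense BF16 FLOP/s (assumption)
PEAK_BW = 3.35e12             # HBM bytes/s
RIDGE = PEAK_FLOPS / PEAK_BW  # FLOPs/byte needed before compute becomes the limit


def linear_intensity(tokens, d_in, d_out, dtype_bytes=2):
    """FLOPs per byte when `tokens` activations multiply a d_in x d_out weight."""
    flops = 2 * tokens * d_in * d_out                  # one multiply-add per weight per token
    weight_bytes = d_in * d_out * dtype_bytes          # weights read once per step
    act_bytes = tokens * (d_in + d_out) * dtype_bytes  # activations in and out
    return flops / (weight_bytes + act_bytes)


def attainable_fraction(intensity):
    """Upper bound on the fraction of peak compute at this intensity."""
    return min(1.0, intensity / RIDGE)


d_model = 8192  # Llama 70B hidden size
for label, tokens in [("prefill, 4096-token prompt", 4096),
                      ("decode, batch of 1", 1),
                      ("decode, batch of 64", 64)]:
    ai = linear_intensity(tokens, d_model, d_model)
    print(f"{label:28s} {ai:8.1f} FLOPs/byte -> at most {attainable_fraction(ai):.1%} of peak")
```

In this simplified model, a batch of 64 decode sequences lands near 63 FLOPs/byte, which caps the GPU at roughly a fifth of peak. A single decode sequence barely registers.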

These aren’t rough estimates. The InfoQ technical analysis from September 2025 measured prefill at 90-95% utilization and decode at 20-40%, with 3-4x better energy efficiency per operation during prefill. The SPAD paper from UT Austin simulated an H100 and found that reducing memory bandwidth by 40% increased prefill latency by only 17%, because prefill barely uses the bandwidth. Reducing compute capacity by 50% increased decode latency by only 22%, because decode barely uses the compute.

Two workloads. Opposite bottlenecks. Same GPU.

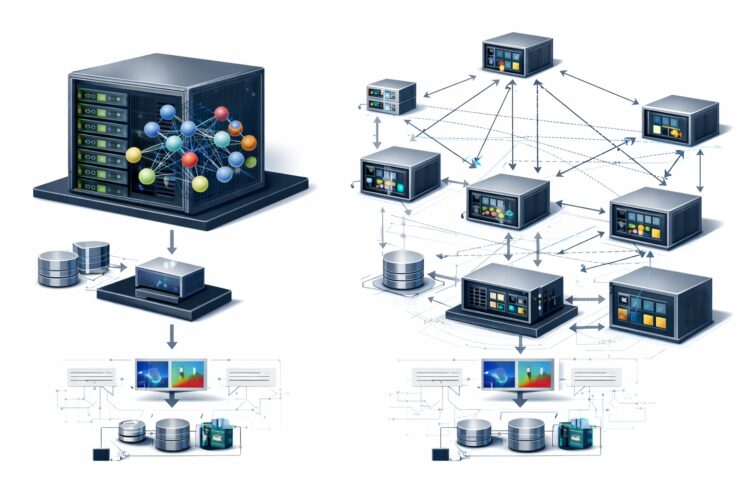

[Source: Author (SVG created using Inkscape)]

What monolithic serving actually costs you

In a standard vLLM or TensorRT-LLM deployment, a single GPU pool handles both phases. The scheduler interleaves prefill and decode requests within the same batch.

The immediate problem is interference. When a new prefill request enters the batch, active decode requests have to wait. Prefill is compute-heavy and takes longer per step. Those decode requests, which should be returning tokens at a steady cadence, experience a latency spike. This is the inter-token latency (ITL) jitter that production systems obsess over. A user watching a streaming response sees the text pause mid-sentence while the GPU processes someone else’s prompt.

The slower-burning problem is utilization waste. The GPU is provisioned to handle prefill peaks, so it’s overprovisioned for the decode phase that dominates the request lifecycle. A typical generation produces 200-500 output tokens. Each decode step takes 10-30ms. A 300-token response spends roughly 3-9 seconds in decode and maybe 200ms in prefill. The GPU runs decode for 90%+ of wall-clock time, and during that 90% it’s using 30% of its compute capacity. You’re paying for an H100 and getting H100-level utilization for at most one-tenth of the request duration.
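Plugging the numbers above into a time-weighted average shows how little of the H100 you actually use. This is a back-of-the-envelope sketch. The 92% and 30% utilization defaults come from the measurements quoted earlier, and the rest is arithmetic.

```python
def decode_share(output_tokens, step_ms, prefill_ms):
    """Fraction of a request's wall-clock time spent in decode."""
    decode_ms = output_tokens * step_ms
    return decode_ms / (decode_ms + prefill_ms)


def blended_utilization(share_decode, util_prefill=0.92, util_decode=0.30):
    """Time-weighted compute utilization across both phases."""
    return (1 - share_decode) * util_prefill + share_decode * util_decode


for step_ms in (10, 30):
    share = decode_share(output_tokens=300, step_ms=step_ms, prefill_ms=200)
    print(f"{step_ms} ms/step: {share:.1%} of wall time in decode, "
          f"~{blended_utilization(share):.0%} average utilization")
```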


The vLLM project addressed part of this with chunked prefill, which breaks a long prefill into smaller pieces and interleaves them with decode steps. This smooths out the worst ITL spikes, but it doesn’t solve the utilization mismatch. The GPU is still doing both jobs.
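A toy scheduler shows why chunking smooths ITL without fixing the mismatch. This is a sketch loosely modeled on a decode-first token budget, not vLLM’s actual scheduler. The per-step `token_budget` plays roughly the role vLLM’s `--max-num-batched-tokens` plays when chunked prefill is on. Each step first reserves one token for every running decode, then fills the rest of the budget with the next slice of the pending prompt.

```python
from dataclasses import dataclass


@dataclass
class Step:
    decode_tokens: int
    prefill_tokens: int


def schedule(prompt_len, n_decodes, token_budget):
    """Toy decode-first scheduler: each running decode gets one token per step,
    and whatever budget is left goes to the next chunk of the pending prompt."""
    steps = []
    remaining = prompt_len
    while remaining > 0:
        chunk = min(remaining, max(0, token_budget - n_decodes))
        if chunk == 0:
            raise ValueError("token budget too small to make prefill progress")
        steps.append(Step(decode_tokens=n_decodes, prefill_tokens=chunk))
        remaining -= chunk
    return steps


# Without chunking, a 4,096-token prompt lands in one giant step that stalls all 32 decodes.
unchunked = schedule(prompt_len=4096, n_decodes=32, token_budget=4096 + 32)
# With a 512-token budget, the same prompt is spread over several bounded steps.
chunked = schedule(prompt_len=4096, n_decodes=32, token_budget=512)
print(len(unchunked), max(s.prefill_tokens for s in unchunked))
print(len(chunked), max(s.prefill_tokens for s in chunked))
```

Every chunked step is bounded, so decode tokens keep flowing. But every step still mixes compute-bound prefill tokens with memory-bound decode tokens on the same GPU, which is the part chunking can’t remove.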

Splitting the inference path in two

Disaggregated inference runs prefill and decode on separate GPU pools connected by a fast network. A request arrives and is routed to a prefill worker, which processes the full prompt and generates the KV-cache. That cache is then transferred over the network to a decode worker, which handles the autoregressive token generation.

Three pieces make it work.

1. A KV-aware router sits in front of both pools. It assigns incoming requests to available prefill workers and, once prefill completes, routes the KV-cache to a decode worker with available capacity. The router tracks which decode workers hold which KV-caches, enabling features like prefix caching across requests that share common prompt prefixes (system prompts, few-shot examples, tool descriptions).

2. A prefill pool contains GPUs optimized for high-throughput matrix multiplication. These workers process prompts, build KV-caches, and hand them off. They don’t generate output tokens. Because prefill is compute-bound, these GPUs can get by with much less HBM bandwidth without penalty.

3. A decode pool contains GPUs optimized for memory bandwidth. These workers receive KV-caches and generate tokens autoregressively. Because decode is memory-bound, these GPUs can get by with much less compute without penalty. With large batch sizes (hundreds of concurrent decode requests), decode utilization rises because the memory reads are amortized across more work.
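The three pieces can be sketched as a toy router. Everything here is hypothetical: the class names, the 16-token block size, and the least-loaded policy. Production routers such as Dynamo’s or llm-d’s gateway use richer signals. The sketch hashes the prompt in fixed-size blocks and sends the request to the decode worker holding the longest cached prefix. It then uses a prefill worker only for the tokens that aren’t already cached.

```python
import hashlib
from dataclasses import dataclass, field

BLOCK = 16  # tokens per KV block (hypothetical)


def block_hashes(tokens):
    """Chained hashes of full blocks: each hash identifies the whole prefix up to that block."""
    hashes, h = [], hashlib.sha256()
    for i in range(0, len(tokens) - len(tokens) % BLOCK, BLOCK):
        h.update(str(tokens[i:i + BLOCK]).encode())
        hashes.append(h.copy().hexdigest())
    return hashes


@dataclass
class DecodeWorker:
    name: str
    capacity: int
    active: int = 0
    cached: set = field(default_factory=set)

    def cached_prefix_blocks(self, hashes):
        n = 0
        for h in hashes:
            if h not in self.cached:
                break
            n += 1
        return n


@dataclass
class PrefillWorker:
    name: str
    queue: int = 0


def route(tokens, prefill_pool, decode_pool):
    """Pick a decode worker (longest cached prefix, then least loaded) and, if any
    tokens are uncached, the least-queued prefill worker to compute them."""
    hashes = block_hashes(tokens)
    candidates = [w for w in decode_pool if w.active < w.capacity]
    if not candidates:
        raise RuntimeError("decode pool is full")
    decode = max(candidates, key=lambda w: (w.cached_prefix_blocks(hashes), -w.active))
    uncached = len(tokens) - decode.cached_prefix_blocks(hashes) * BLOCK
    prefill = None
    if uncached > 0:
        prefill = min(prefill_pool, key=lambda w: w.queue)
        prefill.queue += 1        # toy bookkeeping; a real router decrements on completion
    decode.active += 1
    decode.cached.update(hashes)  # after the transfer, the decode worker holds this prefix
    return (prefill.name if prefill else None), decode.name, uncached


prefill_pool = [PrefillWorker("p0"), PrefillWorker("p1")]
decode_pool = [DecodeWorker("d0", capacity=8), DecodeWorker("d1", capacity=8)]
system_prompt = list(range(64))  # stand-in for a shared 64-token system prompt
print(route(system_prompt + [1000, 1001], prefill_pool, decode_pool))
print(route(system_prompt + [2000, 2001, 2002], prefill_pool, decode_pool))
```

The second request lands on the same decode worker as the first, because that worker already holds the 64-token shared prefix. Only its 3-token suffix needs prefill.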


The two pools scale independently. If your workload is prompt-heavy (long system prompts, agentic workflows with tool descriptions), you add prefill workers. If you’re serving many concurrent users generating long responses, you add decode workers. This decoupling is the primary economic benefit: you stop paying for compute capacity during decode, and you stop paying for memory bandwidth during prefill.
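Independent scaling reduces to a separate sizing calculation per pool. This is a minimal steady-state sketch. The per-GPU throughput figures and the 70% headroom are placeholders you would replace with your own benchmarks, not measurements.

```python
import math


def size_pools(requests_per_s, prompt_tokens, output_tokens,
               prefill_tps_per_gpu, decode_tps_per_gpu, headroom=0.7):
    """GPUs needed per pool so each runs at `headroom` of its measured throughput."""
    prefill_load = requests_per_s * prompt_tokens   # input tokens/s
    decode_load = requests_per_s * output_tokens    # output tokens/s
    prefill_gpus = math.ceil(prefill_load / (prefill_tps_per_gpu * headroom))
    decode_gpus = math.ceil(decode_load / (decode_tps_per_gpu * headroom))
    return prefill_gpus, decode_gpus


# Placeholder per-GPU throughputs; benchmark your own model and hardware.
base = size_pools(20, prompt_tokens=2000, output_tokens=300,
                  prefill_tps_per_gpu=10_000, decode_tps_per_gpu=1_500)
agentic = size_pools(20, prompt_tokens=8000, output_tokens=300,
                     prefill_tps_per_gpu=10_000, decode_tps_per_gpu=1_500)
print(base, agentic)
```

Quadrupling prompt length grows only the prefill pool, from 6 to 23 GPUs in this example. The decode pool stays at 6.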

The tax you pay: moving the KV-cache

Disaggregation isn’t free. The KV-cache produced during prefill has to move from the prefill GPU to the decode GPU over the network, and these caches aren’t small.

For a 70B-parameter model using grouped-query attention with 80 layers, 8 KV heads per layer, and 128 dimensions per head, stored in FP16, each token’s KV state is 327,680 bytes. A 4,096-token prompt produces 1.34 GB of KV-cache. That entire block has to transfer before the decode worker can start generating.

Here’s the calculation you can run for any model:

```python
# KV-cache size calculation for any transformer model

def kv_cache_bytes_per_token(n_layers, n_kv_heads, head_dim, dtype_bytes=2):
    """Returns KV-cache bytes for one token across all layers."""
    return n_layers * n_kv_heads * head_dim * 2 * dtype_bytes  # 2 for K and V


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, dtype_bytes=2):
    """Returns KV-cache size in bytes for a single request."""
    return kv_cache_bytes_per_token(n_layers, n_kv_heads, head_dim, dtype_bytes) * seq_len


# Llama 70B with GQA
cache = kv_cache_bytes(n_layers=80, n_kv_heads=8, head_dim=128, seq_len=4096)
print(f"Per token: {kv_cache_bytes_per_token(80, 8, 128):,} bytes")  # 327,680 bytes
print(f"Full cache: {cache / 1e9:.2f} GB")  # 1.34 GB

# Llama 8B (smaller model, same exercise)
cache_8b = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=4096)
print(f"Llama 8B cache: {cache_8b / 1e9:.2f} GB")  # 0.54 GB
```


At 100 Gbps (a standard RDMA link with EFA or ConnectX NICs), 1.34 GB takes roughly 107 milliseconds. At 400 Gbps, it takes 27ms. These numbers matter because they set the floor on time-to-first-token: the decode worker can’t start until the cache arrives.
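The transfer times follow directly from cache size and link speed. This sketch assumes the link runs at its full nominal line rate and ignores protocol overhead, so real transfers will be somewhat slower.

```python
def transfer_ms(n_bytes, link_gbps):
    """Time to move n_bytes over a link at its nominal line rate (no protocol overhead)."""
    return n_bytes * 8 / (link_gbps * 1e9) * 1e3


kv_bytes = 327_680 * 4096  # Llama 70B, 4,096-token prompt (from the calculation above)
for gbps in (100, 400):
    print(f"{gbps} Gbps: {transfer_ms(kv_bytes, gbps):.1f} ms")
```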

Perplexity’s engineering team built their disaggregated serving stack on top of libfabric and RDMA, using cuMem for cross-process GPU memory sharing. Their KV messenger coordinates transfers layer by layer, so the decode worker can begin processing early layers while later layers are still in transit. This pipelining pushes the effective transfer latency below the raw bandwidth calculation.
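A two-stage pipeline model shows why layer-by-layer transfer helps. This is a simplification, not Perplexity’s implementation. It assumes each layer’s KV is sent as soon as that layer’s prefill finishes, and that compute and transfer overlap perfectly. It reuses the rough 200ms prefill figure from earlier, split evenly across 80 layers.

```python
def handoff_ms(n_layers, compute_ms_per_layer, transfer_ms_per_layer, pipelined):
    """Time from prefill start until the last layer's KV arrives at the decode worker."""
    if not pipelined:
        # Compute every layer, then ship the whole cache.
        return n_layers * (compute_ms_per_layer + transfer_ms_per_layer)
    # Two-stage pipeline: layer i's transfer overlaps layer i+1's compute,
    # so the slower stage sets the pace after the first layer.
    bottleneck = max(compute_ms_per_layer, transfer_ms_per_layer)
    return compute_ms_per_layer + transfer_ms_per_layer + (n_layers - 1) * bottleneck


layers = 80
compute = 200 / layers     # assumed: ~200 ms of prefill spread evenly over 80 layers
transfer = 107.4 / layers  # 1.34 GB at 100 Gbps, split per layer
print(f"sequential: {handoff_ms(layers, compute, transfer, pipelined=False):.1f} ms")
print(f"pipelined:  {handoff_ms(layers, compute, transfer, pipelined=True):.1f} ms")
```

In this model, the exposed network cost shrinks to roughly one layer’s transfer time as long as shipping a layer is faster than computing one. Once the link becomes the slower stage, it sets the pace instead.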

The question is whether this transfer overhead is worth it. In a monolithic system, there’s no KV transfer, but there is queuing delay. When a new prefill burst lands, active decode requests stall. Measurements from the DistServe paper show P99 latency improvements of 2x or more with disaggregation, because eliminating prefill-decode interference removes the tail latency spikes that queuing causes. The 27ms transfer cost replaces 200-500ms of queuing delay.

There’s a crossover point, though. For short prompts with high prefix cache hit rates, local prefill on the decode worker can be faster than transferring the cache over the network. The BentoML inference handbook reports 20-30% performance degradation when disaggregation is applied to workloads that don’t need it. This isn’t an architecture you apply blindly.

What the production stack looks like today

Eighteen months after DistServe, disaggregated inference has gone from a research prototype to the default serving architecture at several companies running LLMs at scale.


NVIDIA’s Dynamo, announced at GTC 2025, is a datacenter-scale orchestration layer that treats prefill and decode workers as first-class entities. It supports vLLM, SGLang, and TensorRT-LLM as inference backends, and includes a KV-aware router that handles cache placement and request scheduling across pools.

On the Kubernetes-native side, llm-d (an open-source project from Red Hat and IBM Research) implements disaggregated serving as a set of Kubernetes custom resources. Prefill and decode pools are separate deployments that autoscale independently based on queue depth and GPU utilization. KV-cache routing is handled through a gateway that tracks cache locations. For teams already running inference on EKS, GKE, or OpenShift, this maps cleanly onto existing cluster management workflows.

If you’re using vLLM directly, a minimal disaggregated setup looks like this:

```shell
# Start the prefill instance
python -m vllm.entrypoints.openai.api_server \
    --model meta-llama/Llama-3.1-70B-Instruct \
    --tensor-parallel-size 4 \
    --kv-transfer-config '{
        "kv_connector": "PyNcclConnector",
        "kv_role": "kv_producer",
        "kv_rank": 0,
        "kv_parallel_size": 2
    }'

# Start the decode instance (separate node or GPU set)
python -m vllm.entrypoints.openai.api_server \
    --model meta-llama/Llama-3.1-70B-Instruct \
    --tensor-parallel-size 4 \
    --kv-transfer-config '{
        "kv_connector": "PyNcclConnector",
        "kv_role": "kv_consumer",
        "kv_rank": 1,
        "kv_parallel_size": 2
    }'
```

The kv_connector specifies the transfer protocol: NCCL in this case, or RDMA via MooncakeConnector for higher throughput. The kv_role tells each instance whether it produces or consumes KV-caches, and kv_rank identifies each instance within the transfer group (0 for prefill, 1 for decode). vLLM handles the cache handoff between the two. Requests still have to reach both instances, which vLLM’s own disaggregated prefill example does with a small proxy server in front.

SGLang tested DeepSeek-R1 with disaggregated serving on 96 H100 GPUs: 3 nodes (24 GPUs) for prefill and 9 nodes (72 GPUs) for decode. They measured 52,300 input tokens/second and 22,300 output tokens/second per node. A follow-up on GB200 NVL72 showed 3.8x prefill and 4.8x decode throughput gains compared to the same workload on H100.

vLLM’s implementation (llm-d 0.3, October 2025) reached 2,200 tokens/second per H200 GPU with 32-way expert parallelism. Perplexity’s production system uses RDMA-based KV transfer with layer-pipelined transmission, supporting speculative decoding and structured output generation across the prefill-decode boundary.

When disaggregation makes things worse

Not every workload benefits. The 20-30% degradation the BentoML inference handbook reports for workloads that don’t need disaggregation is a real number measured on real hardware.

Short prompts hurt the most. If your median prompt is under 512 tokens and generation length stays below 100 tokens, the KV transfer overhead eats into whatever latency savings you’d get from phase separation. Local prefill on the decode worker takes a few milliseconds for a short prompt. Shipping the cache across RDMA takes longer than just computing it again. For chatbots handling quick Q&A, monolithic serving is simpler and faster.

Prefix cache reuse changes the equation too. Multi-turn conversations and agentic workflows tend to share large system prompts across requests. If 80%+ of the KV-cache already lives on the decode worker from a previous turn, the incremental prefill is tiny. Sending a fresh cache from a separate prefill worker wastes network bandwidth on data that’s already local.
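The short-prompt and prefix-reuse cases can be folded into a single per-request check. This is a hypothetical policy, not any framework’s documented behavior. It runs prefill on the decode worker when the stall that imposes on running decodes fits inside an ITL slack budget, and otherwise sends the request to the prefill pool. The throughput and slack values are placeholders.

```python
def prefill_site(prompt_tokens, cached_tokens, local_prefill_tps, itl_slack_ms):
    """Hypothetical policy: prefill locally on the decode worker if the stall it
    causes for running decodes fits inside the ITL slack; otherwise go remote."""
    uncached = max(0, prompt_tokens - cached_tokens)  # prefix-cache hits are skipped
    stall_ms = uncached / local_prefill_tps * 1e3     # how long the decode batch waits
    return ("local" if stall_ms <= itl_slack_ms else "remote"), stall_ms


# Placeholder numbers: 10,000 prefill tokens/s on the decode worker, 50 ms of ITL slack.
for prompt, cached in [(300, 0), (4000, 3800), (4000, 0)]:
    site, stall = prefill_site(prompt, cached, local_prefill_tps=10_000, itl_slack_ms=50)
    print(f"prompt={prompt:5d} cached={cached:5d} -> {site:6s} ({stall:.0f} ms stall)")
```

Both a short prompt and a long prompt with a 95% prefix hit shrink `uncached`, and that is what keeps them local. A long, uncached prompt is the case a separate prefill pool exists for.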

Scale matters. A single node with 2-4 GPUs doesn’t generate enough concurrent requests to keep two separate pools busy. The scheduling overhead alone can tank throughput at that size. Disaggregation starts paying off when you have dozens of GPUs and enough traffic to keep both pools saturated.

And then there’s the network. Without RDMA or at least 100 Gbps links, KV transfer becomes the new bottleneck. TCP works but adds latency. Perplexity built their entire stack on RDMA with libfabric for a reason.

The Hao AI Lab put it bluntly in their November 2025 retrospective: “disaggregated prefill doesn’t improve throughput.” The gains are latency control and cost efficiency through better utilization. If you’re meeting latency targets with monolithic serving, adding a KV transfer hop between phases just gives you more moving parts to debug at 2 AM.

The cost math

The economic case for disaggregation rests on one observation: once the phases are separated, you can use cheaper hardware for each one.

Prefill workers need high FLOPS but don’t need massive HBM capacity. An H100 SXM with 80GB HBM is well-suited. An H200 with 141GB HBM is overkill for prefill; you’re paying for memory bandwidth you won’t use.

Decode workers need high memory bandwidth and large HBM to hold KV-caches for many concurrent requests, but don’t need peak FLOPS. An H200, with its larger HBM3e capacity and higher bandwidth, is actually better suited to decode than an H100. The extra memory lets you batch more decode requests together, amortizing the memory reads and pushing utilization higher.
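The capacity argument is easy to quantify. This sketch counts how many 4,096-token Llama 70B requests fit in a 4-GPU tensor-parallel group after the FP16 weights are loaded. It assumes roughly 140 GB of weights (70B parameters × 2 bytes) and the 1.34 GB per-request KV-cache computed earlier. It ignores activations, CUDA graphs, and allocator fragmentation, so real numbers will be lower.

```python
def max_concurrent_requests(gpus, hbm_gb_per_gpu, weights_gb, kv_gb_per_request):
    """Requests whose full KV-cache fits in the HBM left over after the weights."""
    free_gb = gpus * hbm_gb_per_gpu - weights_gb
    return int(free_gb // kv_gb_per_request)


weights_gb = 70e9 * 2 / 1e9   # ~140 GB of FP16 weights (approximation)
kv_gb = 327_680 * 4096 / 1e9  # 1.34 GB per 4,096-token request
for name, hbm in [("H100 (80 GB)", 80), ("H200 (141 GB)", 141)]:
    print(f"4x {name}: {max_concurrent_requests(4, hbm, weights_gb, kv_gb)} requests")
```

Under these assumptions, the H200 group holds more than twice as many concurrent decode requests as the H100 group (315 versus 134). That larger batch is what amortizes each weight read.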

The SPAD paper takes this logic to its extreme: it proposes “right-sizing” GPU designs into separate prefill and decode chips. Their simulation shows that a prefill chip with 40% less memory bandwidth than an H100 loses only 17% of prefill performance. A decode chip with 50% less compute loses only 22% of decode performance. The cost savings from removing unused silicon in each chip are substantial.

At the cluster level, the InfoQ analysis reports a 15-40% reduction in total infrastructure cost from disaggregation. The savings come from not overprovisioning hardware, from eliminating idle tensor cores during decode, and from being able to add decode workers without buying prefill capacity you don’t need.

Meta, LinkedIn, Mistral, and HuggingFace are all running vLLM with disaggregated serving in production. The reported throughput gains range from 2x to 6.4x depending on the workload and hardware. SGLang’s DeepSeek-R1 deployment on GB200 measured a 4.8x decode throughput improvement over its H100 deployment.

Should you disaggregate? A decision framework

Before you refactor your serving stack, run through these five checks. They take ten minutes and will save you from either over-engineering a simple deployment or under-investing in one that’s bleeding money.

1. Measure your prefill-to-decode time ratio. Run /status or equivalent monitoring on your current deployment and record what percentage of wall-clock GPU time is spent in each phase. If decode accounts for less than 70% of request duration, your workload is prefill-heavy and disaggregation has a smaller utilization payoff. If decode is 85%+ of wall time, you’re paying for idle tensor cores most of the day.

2. Calculate your KV-cache transfer size using the formula above. If it exceeds 500 MB per request and your network is under 100 Gbps, the transfer latency will eat into your TTFT budget. Run the numbers for your actual model and median prompt length, not for a theoretical worst case.

3. Check your prefix cache hit rate. If you’re running agentic or multi-turn workloads with shared system prompts, measure how often the decode worker already holds a usable KV-cache from a previous turn. Hit rates above 80% reduce the value of a separate prefill pool, because most prefill work is already skipped.

4. Count your GPUs. Below ~16 GPUs, the scheduling overhead of maintaining two pools typically exceeds the utilization gain. Above 32 GPUs with sustained traffic, the cost savings from right-sized hardware start to compound.

5. Audit your network. Check whether your nodes have RDMA-capable NICs (EFA on AWS, ConnectX on bare metal) and what bandwidth they support. If you’re limited to TCP, disaggregation can still work for long-context workloads, but the transfer latency will be higher than the numbers in this article assume.
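The five checks can be encoded as a small script for a quick go/no-go pass. The thresholds are the rules of thumb from the list above. The class name, field names, and the treatment of the 70-85% decode-share gray zone as "not yet favorable" are hypothetical choices. The output is a starting point for measurement, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    decode_share: float       # fraction of wall-clock GPU time in decode (check 1)
    kv_mb_per_request: float  # KV-cache size at median prompt length (check 2)
    network_gbps: float       # per-node link bandwidth (check 2)
    prefix_hit_rate: float    # fraction of requests reusing cached KV (check 3)
    gpus: int                 # total GPUs serving this model (check 4)
    has_rdma: bool            # EFA, ConnectX, or similar (check 5)


def disaggregation_checks(d: Deployment) -> dict:
    """Apply the five rule-of-thumb checks; True means the check favors disaggregation."""
    return {
        "1_decode_dominates": d.decode_share >= 0.85,
        "2_transfer_fits": not (d.kv_mb_per_request > 500 and d.network_gbps < 100),
        "3_prefix_reuse_low": d.prefix_hit_rate <= 0.80,
        "4_enough_gpus": d.gpus > 32,
        "5_network_ready": d.has_rdma,
    }


def recommend(d: Deployment) -> str:
    c = disaggregation_checks(d)
    # Checks 1, 4, and 5 gate the decision; 2 and 3 flag risks to watch.
    if not (c["1_decode_dominates"] and c["4_enough_gpus"] and c["5_network_ready"]):
        return "stay monolithic for now"
    if c["2_transfer_fits"] and c["3_prefix_reuse_low"]:
        return "pilot disaggregation"
    return "pilot disaggregation, but watch TTFT and prefix reuse"


big = Deployment(decode_share=0.93, kv_mb_per_request=1340, network_gbps=400,
                 prefix_hit_rate=0.3, gpus=64, has_rdma=True)
small = Deployment(decode_share=0.93, kv_mb_per_request=1340, network_gbps=25,
                   prefix_hit_rate=0.3, gpus=4, has_rdma=False)
print(recommend(big))    # pilot disaggregation
print(recommend(small))  # stay monolithic for now
```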

If checks 1, 4, and 5 all come back favorable, disaggregation will almost certainly reduce your per-token serving cost. Start with vLLM’s built-in disaggregated prefill mode, measure the impact on TTFT and ITL, and scale from there.

What comes next

Most disaggregated deployments today still use the same GPU model for both pools. The SPAD research from UT Austin and NVIDIA’s own roadmap suggest that purpose-built prefill and decode silicon is coming. In the meantime, the practical version is to use different cloud instance types: compute-optimized for prefill, memory-optimized for decode. Moonshot AI’s Mooncake architecture goes further, using CPU DRAM and SSDs as overflow tiers for KV-cache storage and keeping GPU HBM free for active decoding.
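The tiering idea can be sketched as a capacity-bounded LRU store per tier. Blocks evicted from HBM spill to DRAM, then to SSD, and are promoted back to HBM on reuse. This is a generic illustration with assumed tier sizes, not Mooncake’s actual design or API.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy three-tier KV block store (e.g. HBM -> DRAM -> SSD), LRU within each tier."""

    def __init__(self, capacities):
        # capacities: list of (tier_name, max_blocks), fastest tier first
        self.tiers = [(name, cap, OrderedDict()) for name, cap in capacities]

    def put(self, block_id, data, level=0):
        _, cap, store = self.tiers[level]
        store[block_id] = data
        store.move_to_end(block_id)
        if len(store) > cap:
            victim, victim_data = store.popitem(last=False)  # least recently used
            if level + 1 < len(self.tiers):
                self.put(victim, victim_data, level + 1)     # spill to the next tier
            # blocks falling off the last tier are dropped and must be recomputed

    def get(self, block_id):
        """Return (tier_name, data) and promote the block back to the top tier, or None."""
        for name, _, store in self.tiers:
            if block_id in store:
                data = store.pop(block_id)
                self.put(block_id, data, level=0)
                return name, data
        return None


cache = TieredKVCache([("hbm", 2), ("dram", 2), ("ssd", 4)])
for i in range(5):
    cache.put(f"block{i}", i)
print([list(store) for _, _, store in cache.tiers])
print(cache.get("block0"))  # found on SSD, promoted back to HBM
```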

The Hao AI Lab called disaggregation “the defining principle of the modern inference stack” in their November 2025 retrospective. Eighteen months from research paper to production default at Perplexity, Meta, and NVIDIA is fast, even by ML infrastructure standards. The teams that plan for it now, with proper capacity modeling and network design, will spend less when traffic scales than those that adopt it reactively.