In this article, you’ll learn a clear, practical framework to diagnose why a language model underperforms and how to validate likely causes quickly.

Topics we will cover include:

- 5 common failure modes and what they look like

- Concrete diagnostics you can run immediately

- Pragmatic mitigation tips for each failure

Let’s not waste any more time.

How to Diagnose Why Your Language Model Fails

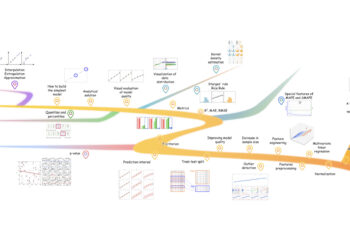

Image by Editor

Introduction

Language models, as incredibly useful as they are, are not perfect, and they may fail or exhibit undesired performance due to a variety of factors, such as data quality, tokenization constraints, or difficulties in correctly interpreting user prompts.

This article adopts a diagnostic standpoint and explores a 5-point framework for understanding why a language model, be it a large, general-purpose large language model (LLM) or a small, domain-specific one, might fail to perform well.

Diagnostic Points for a Language Model

In the following sections, we will uncover common reasons for failure in language models, briefly describing each one and providing practical tips for diagnosing and overcoming it.

1. Poor Quality or Insufficient Training Data

Just like other machine learning models such as classifiers and regressors, a language model’s performance greatly depends on the amount and quality of the data used to train it, with one not-so-subtle nuance: language models are trained on very large datasets or text corpora, often spanning from many thousands to millions or billions of documents.

When the language model generates outputs that are incoherent, factually incorrect, or nonsensical (hallucinations) even for simple prompts, chances are the quality or amount of training data used is not sufficient. Specific causes could include a training corpus that is too small, outdated, or full of noisy, biased, or irrelevant text. In smaller language models, the consequences of this data-related issue also include missing domain vocabulary in generated answers.

To diagnose data issues, inspect a sufficiently representative portion of the training data if possible, analyzing properties such as relevance, coverage, and topic balance. Running targeted prompts about known facts and using rare terms to identify knowledge gaps is also an effective diagnostic strategy. Finally, keep a trusted reference dataset handy to compare generated outputs with the information contained there.
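
The reference-dataset check described above can be sketched in a few lines. This is a minimal illustration rather than a definitive evaluation harness: `ask_model`, `stub_model`, and the two reference entries are hypothetical placeholders, and substring matching is a deliberately crude stand-in for a proper answer-grading step.

```python
from typing import Callable, Dict, List, Tuple


def reference_accuracy(
    ask_model: Callable[[str], str],
    reference: Dict[str, str],
) -> Tuple[float, List[str]]:
    """Score model answers against a trusted reference set.

    `reference` maps each prompt to a string the answer is expected to contain.
    Returns the fraction of hits and the list of prompts that missed.
    """
    if not reference:
        raise ValueError("reference set must not be empty")
    misses = [
        prompt
        for prompt, expected in reference.items()
        if expected.lower() not in ask_model(prompt).lower()
    ]
    return 1 - len(misses) / len(reference), misses


# Hypothetical stand-in for a real model call, used only to show the flow.
def stub_model(prompt: str) -> str:
    canned = {"What is the capital of France?": "The capital of France is Paris."}
    return canned.get(prompt, "I am not sure.")


# Assumed reference set: one known fact and one rare-term probe.
reference_set = {
    "What is the capital of France?": "Paris",
    "What does the rare term 'petrichor' describe?": "rain",
}

score, missed = reference_accuracy(stub_model, reference_set)
print(f"accuracy={score:.2f}, missed={missed}")
```

The list of missed prompts is usually more informative than the score itself, since clusters of misses around one topic or one type of rare term point to a specific gap in the training data.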

2. Tokenization or Vocabulary Limitations

Suppose that an analysis of the inner behavior of a freshly trained language model shows it struggling with certain words or symbols in the vocabulary, breaking them into tokens in an unexpected manner, or failing to represent them properly. This may stem from the tokenizer used in conjunction with the model, which does not align appropriately with the target domain, yielding far-from-ideal treatment of uncommon words, technical jargon, and so on.

Diagnosing tokenization and vocabulary issues involves inspecting the tokenizer, namely by checking how it splits domain-specific terms. Using metrics such as perplexity or log-likelihood on a held-out subset can quantify how well the model represents domain text, and testing edge cases (e.g., non-Latin scripts or words and symbols containing uncommon Unicode characters) helps pinpoint root causes related to token management.
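
Both checks can be prototyped without committing to a particular library. The sketch below assumes the tokenizer is available as a plain callable and that per-token natural-log probabilities have already been obtained from the model; `toy_tokenize` and its tiny vocabulary are hypothetical and exist only to make the example runnable.

```python
import math
from typing import Callable, Dict, Iterable, List, Sequence


def fragmentation(
    terms: Iterable[str],
    tokenize: Callable[[str], List[str]],
) -> Dict[str, int]:
    """Count tokens per term; high counts suggest weak vocabulary coverage."""
    return {term: len(tokenize(term)) for term in terms}


def perplexity(token_logprobs: Iterable[float]) -> float:
    """Perplexity from per-token natural-log probabilities: exp(-mean log p)."""
    logprobs = list(token_logprobs)
    if not logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(logprobs) / len(logprobs))


def toy_tokenize(
    word: str,
    vocab: Sequence[str] = ("cardio", "myo", "pathy", "heart"),
) -> List[str]:
    """Hypothetical greedy longest-match tokenizer with single-character fallback."""
    by_length = sorted(vocab, key=len, reverse=True)
    tokens, i = [], 0
    while i < len(word):
        # Take the longest vocabulary piece that matches here, else one character.
        piece = next((v for v in by_length if word.startswith(v, i)), word[i])
        tokens.append(piece)
        i += len(piece)
    return tokens


counts = fragmentation(["heart", "cardiomyopathy", "nephritis"], toy_tokenize)
print(counts)

# Four tokens, each assigned probability 0.5 by an imagined model.
print(perplexity([math.log(0.5)] * 4))
```

A term that falls apart into many single-character tokens, as `nephritis` does under this toy vocabulary, is a candidate for vocabulary extension or for a tokenizer trained on domain text.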

3. Prompt Instability and Sensitivity

A small change in the wording of a prompt, its punctuation, or the order of multiple nonsequential instructions can lead to significant changes in the quality, accuracy, or relevance of the generated output. That is prompt instability and sensitivity: the language model becomes overly sensitive to how the prompt is articulated, often because it has not been properly fine-tuned for effective, fine-grained instruction following, or because there are inconsistencies in the training data.

The best way to diagnose prompt instability is experimentation: try a battery of paraphrased prompts whose overall meaning is equivalent, and compare how consistent the results are with each other. Likewise, try to identify patterns under which a prompt results in a stable versus an unstable response.

4. Context Windows and Memory Constraints

When a language model fails to use context introduced in earlier interactions as part of a conversation with the user, or misses earlier context in a long document, it can start exhibiting undesired behavior patterns such as repeating itself or contradicting content it “said” before. The amount of context a language model can retain, or context window, is largely determined by memory limitations. Accordingly, context windows that are too short may truncate relevant information and drop earlier cues, whereas overly lengthy contexts can hinder tracking of long-range dependencies.

Diagnosing issues related to context windows and memory limitations entails iteratively evaluating the language model with increasingly longer inputs, carefully measuring how much it can correctly recall from earlier parts. When available, attention visualizations are a powerful resource to check whether relevant tokens are attended across long ranges in the text.
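
The increasing-length evaluation can be sketched as a simple recall probe: place a fact at the start of the input, pad with filler of growing length, and check whether the answer still contains the fact. Everything named here is an assumption for illustration, including the planted fact, the filler word, and `truncating_stub`, which mimics a model that only sees the last `window` words.

```python
from typing import Callable, Dict, Iterable


def build_prompt(fact: str, filler_words: int, question: str) -> str:
    """Place the fact first, then filler, then the question."""
    filler = " ".join(["lorem"] * filler_words)
    return f"{fact} {filler} {question}"


def recall_by_length(
    ask_model: Callable[[str], str],
    lengths: Iterable[int],
    fact: str = "The access code is 7421.",
    question: str = "What is the access code?",
    expected: str = "7421",
) -> Dict[int, bool]:
    """Map each filler length to whether the early fact was recalled."""
    return {
        n: expected in ask_model(build_prompt(fact, n, question))
        for n in lengths
    }


def truncating_stub(window: int) -> Callable[[str], str]:
    """Hypothetical model that only 'sees' the last `window` words and echoes them."""
    def ask(prompt: str) -> str:
        return " ".join(prompt.split()[-window:])
    return ask


results = recall_by_length(truncating_stub(window=50), lengths=[10, 100])
print(results)
```

With a real model, the filler length at which recall first fails gives a practical estimate of the usable context, which can be shorter than the advertised window.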

5. Domain and Temporal Drifts

Once deployed, a language model is still not exempt from providing wrong answers: for example, answers that are outdated, that miss recently coined terms or concepts, or that fail to reflect evolving domain knowledge. This means the training data might have become anchored in the past, still relying on a snapshot of the world that has already changed; consequently, changes in facts inevitably lead to knowledge degradation and performance degradation. This is analogous to data and concept drifts in other types of machine learning systems.

To diagnose temporal or domain-related drifts, continuously compile benchmarks of new events, terms, articles, and other relevant materials in the target domain. Track the accuracy of responses involving these new language items compared to responses related to stable or timeless knowledge, and see if there are significant differences. Additionally, schedule periodic performance-monitoring schemes based on “fresh queries.”
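
A minimal sketch of that comparison is shown below, assuming each benchmark item is tagged with a bucket such as `stable` or `fresh`. The benchmark entries, the made-up term `zorbflux`, and `frozen_model` are hypothetical placeholders; a real benchmark would be compiled from the target domain.

```python
from typing import Callable, Dict, Iterable, Tuple

# (bucket, prompt, substring the answer is expected to contain)
Item = Tuple[str, str, str]


def accuracy_by_bucket(
    ask_model: Callable[[str], str],
    items: Iterable[Item],
) -> Dict[str, float]:
    """Compute accuracy separately for each bucket of benchmark items."""
    hits: Dict[str, int] = {}
    totals: Dict[str, int] = {}
    for bucket, prompt, expected in items:
        totals[bucket] = totals.get(bucket, 0) + 1
        if expected.lower() in ask_model(prompt).lower():
            hits[bucket] = hits.get(bucket, 0) + 1
    return {bucket: hits.get(bucket, 0) / n for bucket, n in totals.items()}


# Hypothetical benchmark: timeless questions versus placeholder "fresh" ones.
benchmark = [
    ("stable", "How many days are in a week?", "seven"),
    ("stable", "How many sides does a triangle have?", "three"),
    ("fresh", "What does the newly coined term 'zorbflux' mean?", "placeholder definition"),
    ("fresh", "What changed in the latest policy update?", "placeholder change"),
]


# Hypothetical model whose knowledge stops before the fresh items appeared.
def frozen_model(prompt: str) -> str:
    known = {
        "How many days are in a week?": "There are seven days in a week.",
        "How many sides does a triangle have?": "A triangle has three sides.",
    }
    return known.get(prompt, "I do not have information about that.")


scores = accuracy_by_bucket(frozen_model, benchmark)
gap = scores["stable"] - scores["fresh"]
print(scores, f"gap={gap:.2f}")
```

Logging the gap on a schedule turns the "fresh queries" idea into a monitoring signal: a gap that widens over time suggests the model's snapshot of the domain is aging.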

Final Thoughts

This article examined several common reasons why language models may fail to perform well, from data quality issues to poor management of context and drifts in production caused by changes in factual knowledge. Language models are inevitably complex; therefore, understanding possible reasons for failure and how to diagnose them is key to making them more robust and effective.
