0.

Least Squares is used almost everywhere when it comes to numerical optimization and regression tasks in machine learning. It aims at minimizing the Mean Squared Error (MSE) of a given model.

Both the L1 (sum of absolute values) and L2 (sum of squares) norms offer an intuitive way to sum signed errors while preventing them from cancelling each other out. Yet the L2 norm results in a much smoother loss function and avoids the kinks of the absolute values.

But why is such a simple loss function so popular? We’ll see that there are pretty solid arguments in favor of Least Squares, beyond being easy to compute.

- Computational Convenience: The square loss function is easy to differentiate and provides a closed-form solution when optimizing a Linear Regression.

- Mean and Median: We’re all familiar with these two quantities, but amusingly not many people know that they naturally stem from L2 and L1 losses.

- OLS is BLUE: Among all linear unbiased estimators, Ordinary Least-Squares (OLS) is the Best Linear Unbiased Estimator (BLUE), i.e. the one with lowest variance.

- LS is MLE with normal errors: Using Least-Squares to fit any model, linear or not, is equivalent to Maximum Likelihood Estimation under normally distributed errors.

In conclusion, the Least Squares approach makes perfect sense from a mathematical perspective. However, keep in mind that it may become unreliable if the theoretical assumptions are no longer fulfilled, e.g. when the data distribution contains outliers.

N.B. I know there’s already a great subreddit, “Why Do We Use Least Squares In Linear Regression?”, about this topic. However, I’d like to focus in this article on presenting both intuitive understanding and rigorous proofs.

1. Computational Convenience

Optimization

Training a model means tweaking its parameters to optimize a given cost function. In some very fortunate cases, differentiating the cost function allows us to directly derive a closed-form solution for the optimal parameters, without having to go through an iterative optimization.

Precisely, the square function is convex, smooth, and easy to differentiate. In contrast, the absolute value function is non-differentiable at 0, making the optimization process less straightforward.

Differentiability

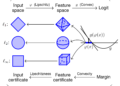

When training a regression model with n input-output pairs (x,y) and a model f parametrized by θ, the Least-Squares loss function is:
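Written out, and assuming the unnormalized sum-of-squares convention (a 1/n factor, as in the MSE, would not change the minimizer), the loss can be sketched as:

$$
\mathcal{L}(\theta) = \sum_{i=1}^{n} \bigl(y_i - f(x_i;\theta)\bigr)^2
$$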

As long as the model f is differentiable with respect to θ, we can easily derive the gradient of the loss function.
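As a sketch, assuming the loss is the unnormalized sum of squared residuals, the sum over i of (y_i - f(x_i;θ))², the chain rule gives:

$$
\nabla_\theta \sum_{i=1}^{n} \bigl(y_i - f(x_i;\theta)\bigr)^2 = -2 \sum_{i=1}^{n} \bigl(y_i - f(x_i;\theta)\bigr)\, \nabla_\theta f(x_i;\theta)
$$

The only model-specific ingredient is the gradient of f itself, which is why the same recipe applies to any differentiable model.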

Linear Regression

Linear Regression estimates the optimal linear coefficients β given a dataset of n input-output pairs (x,y).

The equation below shows on the left the L1 loss and on the right the L2 loss to evaluate the fitness of β on the dataset.
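A plausible way to write the two losses, assuming each input x_i is a column vector (with any intercept absorbed as a constant feature) and using a prime for the transpose:

$$
\mathcal{L}_1(\beta) = \sum_{i=1}^{n} \lvert y_i - x_i'\beta \rvert
\qquad\qquad
\mathcal{L}_2(\beta) = \sum_{i=1}^{n} \bigl(y_i - x_i'\beta\bigr)^2
$$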

We usually drop the index i and switch to a vectorized notation to better leverage linear algebra. This can be done by stacking the input vectors as rows to form the design matrix X. Similarly, the outputs are stacked into a vector Y.
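In this vectorized notation, and assuming X has one row per sample (n rows) and Y has n entries, the L1 and L2 losses can be sketched as norms of the residual vector:

$$
\mathcal{L}_1(\beta) = \lVert Y - X\beta \rVert_1
\qquad\qquad
\mathcal{L}_2(\beta) = \lVert Y - X\beta \rVert_2^2
$$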

Ordinary Least-Squares

The L1 formulation offers very little room for improvement. On the other hand, the L2 formulation is differentiable and, provided X has full column rank, its gradient becomes zero only for a single optimal set of parameters β. This approach is known as Ordinary Least-Squares (OLS).

Zeroing the gradient yields the closed-form solution of the OLS estimator, using the pseudo-inverse matrix. This means we can directly compute the optimal coefficients without the need for a numerical optimization process.
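To make this concrete: the gradient of ‖Y - Xβ‖² with respect to β is -2X'(Y - Xβ), and setting it to zero gives the normal equations X'Xβ = X'Y, hence β = (X'X)⁻¹X'Y when X has full column rank. Here is a minimal NumPy sketch; the synthetic data, the seed, and the helper name `ols_closed_form` are illustrative assumptions, not part of the original derivation.

```python
import numpy as np

def ols_closed_form(X, Y):
    """OLS coefficients via the Moore-Penrose pseudo-inverse of X."""
    return np.linalg.pinv(X) @ Y

# Synthetic data (arbitrary choices, for illustration only)
rng = np.random.default_rng(0)
n, p = 200, 3
X = rng.normal(size=(n, p))
beta_true = np.array([1.5, -2.0, 0.5])
Y = X @ beta_true + 0.1 * rng.normal(size=n)

beta_hat = ols_closed_form(X, Y)

# Same solution obtained by solving the normal equations X'X beta = X'Y
beta_normal_eq = np.linalg.solve(X.T @ X, X.T @ Y)

# The gradient of the L2 loss vanishes at the OLS solution
gradient_at_optimum = -2 * X.T @ (Y - X @ beta_hat)
```

In practice, `np.linalg.lstsq` is often preferred over forming X'X explicitly, since it is better behaved when X is ill-conditioned.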

Remarks

Modern computers are really efficient and the performance drop between analytical and numerical solutions is usually not that significant. Thus, computational convenience is not the main reason why we actually use Least-Squares.

2. Mean and Median

Introduction

You’ve certainly already computed a mean or median, whether with Excel, NumPy, or by hand. They are key concepts in Statistics, and often provide valuable insights for income, grades, test scores or age distributions.

We’re so familiar with these two quantities that we rarely question their origin. Yet, amusingly, they stem naturally from L2 and L1 losses.

Given a set of real values xi, we often try to aggregate them into a single good representative value, e.g. the mean or median. That way, we can more easily compare different sets of values. However, what represents the data “well” is purely subjective and depends on our expectations, i.e. the cost function. For instance, mean and median income are both relevant, but they convey different insights. The mean reflects overall wealth, while the median provides a clearer picture of typical earnings, unaffected by extremely low or high incomes.

Given a cost function ρ, mirroring our expectations, we solve the following optimization problem to find the “best” representative value µ.
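One way to write this problem, assuming ρ is applied to the deviation µ - xi of each value from the representative:

$$
\mu^{*} = \arg\min_{\mu} \sum_{i=1}^{n} \rho(\mu - x_i)
$$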

Imply

Let’s consider that ρ is the L2 loss.

Zeroing the gradient is straightforward and brings out the mean definition.
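Spelled out, with the L2 loss taken as the sum of squared deviations (µ - xi)²:

$$
\frac{d}{d\mu} \sum_{i=1}^{n} (\mu - x_i)^2 = 2 \sum_{i=1}^{n} (\mu - x_i) = 2\Bigl(n\mu - \sum_{i=1}^{n} x_i\Bigr) = 0
\quad\Longleftrightarrow\quad
\mu = \frac{1}{n} \sum_{i=1}^{n} x_i
$$

Since the loss is a convex quadratic in µ, this stationary point is indeed the minimum.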

Thus, we’ve shown that the mean best represents the xi in terms of the L2 loss.

Median

Let’s consider the L1 loss. Being a sum of piecewise linear functions, it is itself piecewise linear, with discontinuities in its gradient at each xi.

The figure below illustrates the L1 loss for each xi. Without loss of generality, I’ve sorted the xi to order the non-differentiable kinks. Each function |µ-xi| is xi-µ below xi and µ-xi above.

The table below clarifies the piecewise expressions of each individual L1 term |µ-xi|. We can sum these expressions to get the total L1 loss. With the xi sorted, the leftmost part has a slope of -n and the rightmost a slope of +n.

For better readability, I’ve hidden the constant intercepts as Ci.

Intuitively, the minimum of this piecewise linear function occurs where the slope transitions from negative to positive, which is precisely where the median lies since the points are sorted.
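The slope argument can be made explicit. Assuming the values are sorted and µ lies strictly between the k-th and (k+1)-th of them, k terms contribute a slope of +1 and the remaining n-k contribute -1:

$$
\frac{d}{d\mu} \sum_{i=1}^{n} \lvert \mu - x_i \rvert = k - (n - k) = 2k - n
$$

The slope is negative while k < n/2 and positive once k > n/2, so the sign change happens when half of the points lie on each side of µ.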

Thus, we’ve shown that the median best represents the xi in terms of the L1 loss.

N.B. For an odd number of points, the median is the middle value and the unique minimizer of the L1 loss. For an even number of points, the median is the average of the two middle values, and the L1 loss forms a plateau, with any value between these two minimizing the loss.
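Both results are easy to check numerically. The sketch below is a brute-force grid search, not an efficient method; the sample values and the grid resolution are arbitrary assumptions chosen for illustration.

```python
import numpy as np

def l2_loss(mu, x):
    """Sum of squared deviations from mu."""
    return np.sum((mu - x) ** 2)

def l1_loss(mu, x):
    """Sum of absolute deviations from mu."""
    return np.sum(np.abs(mu - x))

# Odd number of points, with one large value (arbitrary illustrative data)
x = np.array([1.0, 2.0, 4.0, 7.0, 21.0])
grid = np.linspace(x.min(), x.max(), 2001)  # candidate values of mu, step 0.01

best_l2 = grid[np.argmin([l2_loss(m, x) for m in grid])]  # close to np.mean(x)
best_l1 = grid[np.argmin([l1_loss(m, x) for m in grid])]  # close to np.median(x)

# Even number of points: the L1 loss is flat between the two middle values
x_even = np.array([1.0, 2.0, 4.0, 7.0])
plateau = [l1_loss(m, x_even) for m in (2.0, 3.0, 4.0)]
```

On this sample the L2 minimizer is pulled toward the large value 21, while the L1 minimizer stays at the middle value.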

3. OLS is BLUE

Gauss-Markov theorem

The Gauss-Markov theorem states that the Ordinary Least Squares (OLS) estimator is the Best Linear Unbiased Estimator (BLUE). “Best” means that OLS has the lowest variance among all linear unbiased estimators.

This sampling variance represents how much the estimate of the coefficients of β would vary across different samples drawn from the same population.

The theorem assumes Y follows a linear model with true linear coefficients β and random errors ε. That way, we can analyze how the β estimate of an estimator would vary for different values of noise ε.

The assumptions on the random errors ε ensure they are unbiased (zero mean), homoscedastic (constant finite variance), and uncorrelated (diagonal covariance matrix).
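In symbols, and assuming the design matrix X is treated as fixed (non-random), these assumptions can be summarized as:

$$
Y = X\beta + \varepsilon,
\qquad
\mathbb{E}[\varepsilon] = 0,
\qquad
\operatorname{Var}(\varepsilon) = \sigma^2 I_n
$$

Errors with such a covariance matrix, a multiple of the identity, are often called spherical.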

Linearity

Be aware that “linearity” in the Gauss-Markov theorem refers to two different concepts:

- Model Linearity: The regression assumes a linear relationship between Y and X.

- Estimator Linearity: We only consider estimators linear in Y, meaning they must include a linear component represented by a matrix C that depends only on X.

Unbiasedness of OLS

The OLS estimator, denoted with a hat, has already been derived earlier. Substituting the random error model for Y gives an expression that better captures the deviation from the true β.

We introduce the matrix A to represent the OLS-specific linear part C for better readability.

As expected, the OLS estimator is unbiased, as its expectation is centered around the true β for unbiased errors ε.
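A sketch of this computation, assuming X is fixed with full column rank and writing A = (X'X)⁻¹X', so that AX = I:

$$
\hat{\beta} = (X'X)^{-1}X'Y = AY = A(X\beta + \varepsilon) = \beta + A\varepsilon
$$

$$
\mathbb{E}[\hat{\beta}] = \beta + A\,\mathbb{E}[\varepsilon] = \beta
$$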

Theorem’s proof

Let’s consider a linear estimator, denoted by a tilde, with its linear component C = A+D, where D represents a shift from the OLS estimator.

The expected value of this linear estimator turns out to be the true β plus an additional term DXβ. For the estimator to be considered unbiased whatever the true β, this term must be zero, thus DX=0. This orthogonality ensures that the shift D does not introduce any bias.

Note that this also implies that DA'=0, which will be useful later.

Now that we’ve guaranteed the unbiasedness of our linear estimator, we can compare its variance against the OLS estimator.

Since the matrix C is constant and the errors ε are spherical, we obtain the following variance.

After substituting C with A+D, expanding the terms, and using the orthogonality DA'=0, we end up with the variance of our linear estimator being equal to a sum of two terms. The first term is the variance of the OLS estimator, and the second term can only add variance, since DD' is positive semi-definite.
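The steps can be sketched as follows, assuming A = (X'X)⁻¹X', a linear estimator of the form CY with C = A + D, and spherical errors with variance σ²:

$$
\operatorname{Var}(\tilde{\beta}) = \operatorname{Var}(CY) = C \operatorname{Var}(\varepsilon)\, C' = \sigma^2 CC'
$$

$$
CC' = (A + D)(A + D)' = AA' + AD' + DA' + DD' = (X'X)^{-1} + DD'
$$

The cross terms vanish because DA' = DX(X'X)⁻¹ = 0 and AD' = (DA')' = 0, while AA' = (X'X)⁻¹X'X(X'X)⁻¹ = (X'X)⁻¹. Hence:

$$
\operatorname{Var}(\tilde{\beta}) = \sigma^2 (X'X)^{-1} + \sigma^2 DD' = \operatorname{Var}(\hat{\beta}) + \sigma^2 DD'
$$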

Consequently, we have shown that the OLS estimator achieves the lowest variance among all linear unbiased estimators for Linear Regression with unbiased spherical errors.
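The result can be checked numerically. In the sketch below, the dimensions, the seed, and the particular way of building a shift D with DX = 0 (multiplying a random matrix by the projector onto the orthogonal complement of the columns of X) are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
n, p = 50, 3
sigma2 = 0.5                       # error variance (arbitrary)
X = rng.normal(size=(n, p))

# OLS linear part A = (X'X)^{-1} X', shape (p, n)
A = np.linalg.solve(X.T @ X, X.T)

# Projector onto the orthogonal complement of col(X); it annihilates X
residual_maker = np.eye(n) - X @ A

# Any matrix of this form satisfies D X = 0, so C = A + D stays unbiased
D = rng.normal(size=(p, n)) @ residual_maker
C = A + D

var_ols = sigma2 * A @ A.T         # covariance of the OLS estimator
var_alt = sigma2 * C @ C.T         # covariance of the alternative estimator
excess = var_alt - var_ols         # expected to equal sigma2 * D D'

# Eigenvalues of the (symmetrized) excess covariance: all non-negative
eigenvalues = np.linalg.eigvalsh((excess + excess.T) / 2)
```

Non-negative eigenvalues mean that every linear combination of the coefficients has at least as much variance under the alternative estimator as under OLS.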

Remarks

The OLS estimator is considered “best” in terms of minimum variance. However, it’s worth noting that the definition of the variance itself is closely tied to Least Squares, as it reflects the expectation of the squared difference from the expected value.

Thus, the key question would be why variance is typically defined this way.

4. LS is MLE with normal errors

Maximum Likelihood Estimation

Maximum Likelihood Estimation (MLE) is a method for estimating model parameters θ by maximizing the likelihood of observing the given data (x,y) under the model defined by θ.

Assuming the pairs (xi,yi) are independent and identically distributed (i.i.d.), we can express the likelihood as the product of the conditional probabilities.

A common trick consists in applying a logarithm on top of a product to transform it into a more convenient and numerically stable sum of logs. Since the logarithm is monotonically increasing, it’s still equivalent to solving the same optimization problem. That’s how we get the well-known log-likelihood.

In numerical optimization, we usually add a minus sign to minimize quantities instead of maximizing them.
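Put together, and writing p(y|x;θ) for the conditional density of y given x under the model (a notation assumed here), these three steps can be sketched as:

$$
\hat{\theta}
= \arg\max_{\theta} \prod_{i=1}^{n} p(y_i \mid x_i; \theta)
= \arg\max_{\theta} \sum_{i=1}^{n} \log p(y_i \mid x_i; \theta)
= \arg\min_{\theta} \; -\sum_{i=1}^{n} \log p(y_i \mid x_i; \theta)
$$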

MLE Inference

Once the optimal model parameters θ have been estimated, inference is performed by finding the value of y that maximizes the conditional probability given the observed x, i.e. the most-likely y.

Model Parameters

Note that there’s no specific assumption on the model. It can be of any kind and its parameters are simply stacked into a flat vector θ.

For instance, θ can represent the weights of a neural network, the parameters of a random forest, the coefficients of a linear regression model, and so on.

Normal Errors

As for the errors around the true model, let’s assume that they are unbiased and normally distributed.

It’s equivalent to assuming that y follows a normal distribution with mean predicted by the model and fixed variance σ².

Note that the inference step is straightforward, because the peak of the normal distribution is reached at the mean, i.e. the value predicted by the model.

Interestingly, the exponential term in the normal density cancels out with the logarithm of the log-likelihood. It then turns out to be equivalent to a plain Least-Squares minimization problem!
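To see the cancellation, assume y given x is normal with mean f(x;θ) and fixed variance σ²:

$$
p(y_i \mid x_i; \theta) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\!\left(-\frac{\bigl(y_i - f(x_i;\theta)\bigr)^2}{2\sigma^2}\right)
$$

$$
-\sum_{i=1}^{n} \log p(y_i \mid x_i; \theta) = \frac{n}{2}\log(2\pi\sigma^2) + \frac{1}{2\sigma^2} \sum_{i=1}^{n} \bigl(y_i - f(x_i;\theta)\bigr)^2
$$

The first term and the factor 1/(2σ²) do not depend on θ, so minimizing the negative log-likelihood over θ amounts to minimizing the sum of squared residuals.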

Consequently, using Least-Squares to fit any model, linear or not, is equivalent to Maximum Likelihood Estimation under normally distributed errors.
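Here is a small numerical illustration of this equivalence on a non-linear model. The exponential-decay model, the synthetic data, and the random candidate search are hypothetical choices made only for the example; any other model f would do.

```python
import numpy as np

def gaussian_nll(residuals, sigma):
    """Negative log-likelihood of residuals under N(0, sigma^2) i.i.d. errors."""
    n = residuals.size
    return 0.5 * n * np.log(2 * np.pi * sigma**2) + np.sum(residuals**2) / (2 * sigma**2)

def model(x, theta):
    """Hypothetical non-linear model: exponential decay, for illustration only."""
    return theta[0] * np.exp(-theta[1] * x)

rng = np.random.default_rng(2)
x = np.linspace(0.0, 4.0, 60)
sigma = 0.2
y = model(x, np.array([2.0, 0.7])) + sigma * rng.normal(size=x.size)

# Score random candidate parameters with both criteria
candidates = rng.uniform(0.1, 3.0, size=(500, 2))
sse = np.array([np.sum((y - model(x, t)) ** 2) for t in candidates])
nll = np.array([gaussian_nll(y - model(x, t), sigma) for t in candidates])

best_by_sse = candidates[np.argmin(sse)]
best_by_nll = candidates[np.argmin(nll)]
```

Both criteria select the same candidate, since the negative log-likelihood is just the sum of squared errors rescaled and shifted by constants.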

Conclusion

Fundamental Tool

In conclusion, the popularity of Least-Squares comes from its computational simplicity and its deep link to key statistical principles. It provides a closed-form solution for Linear Regression (which is the Best Linear Unbiased Estimator), defines the mean, and is equivalent to Maximum Likelihood Estimation under normal errors.

BLUE or BUE ?

There’s even debate over whether or not the linearity assumption of the Gauss-Markov Theorem can be relaxed, allowing OLS to also be considered the Best Unbiased Estimator (BUE).

We’re still solving Linear Regression, but this time the competing estimators may be linear or non-linear in Y, thus BUE instead of BLUE.

The economist Bruce Hansen thought he had proved it in 2022 [1], but Pötscher and Preinerstorfer quickly invalidated his proof [2].

Outliers

Least-Squares is very likely to become unreliable when errors are not normally distributed, e.g. with outliers.

As we’ve seen previously, the mean defined by L2 is highly affected by extreme values, whereas the median defined by L1 simply ignores them.

Robust loss functions like Huber or Tukey tend to still mimic the quadratic behavior of Least-Squares for small errors, while greatly attenuating the impact of large errors with a near L1 or constant behavior. They are much better choices than L2 to cope with outliers and provide robust estimates.
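As an illustration, here is a minimal sketch of the Huber loss in its common textbook form: quadratic below a threshold δ and linear above it. The threshold value and the sample residuals are arbitrary assumptions.

```python
import numpy as np

def huber_loss(residuals, delta=1.0):
    """Huber loss: 0.5*r^2 for |r| <= delta, delta*(|r| - 0.5*delta) otherwise."""
    r = np.abs(residuals)
    quadratic = 0.5 * r**2
    linear = delta * (r - 0.5 * delta)
    return np.where(r <= delta, quadratic, linear)

residuals = np.array([0.0, 0.5, 1.0, 3.0, 10.0])
huber = huber_loss(residuals, delta=1.0)
squared = 0.5 * residuals**2   # plain (halved) L2 loss for comparison
```

For the residual of 10, the squared loss is 50 while the Huber loss is only 9.5, which shows how the influence of a large error is attenuated.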

Regularization

In some cases, using a biased estimator like Ridge regression, which adds regularization, can improve generalization to unseen data. While introducing bias, it helps prevent overfitting, making the model more robust, especially in noisy or high-dimensional settings.
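Ridge regression keeps a closed-form solution, β = (X'X + λI)⁻¹X'Y, where λ ≥ 0 is the regularization strength. The sketch below assumes no intercept and penalizes all coefficients equally; the data and the value of λ are arbitrary.

```python
import numpy as np

def ridge_closed_form(X, Y, lam):
    """Ridge coefficients: solve (X'X + lam * I) beta = X'Y."""
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(p), X.T @ Y)

# Synthetic data (arbitrary choices, for illustration only)
rng = np.random.default_rng(3)
X = rng.normal(size=(40, 5))
Y = X @ np.array([1.0, -1.0, 0.5, 0.0, 2.0]) + 0.3 * rng.normal(size=40)

beta_ols = ridge_closed_form(X, Y, lam=0.0)     # lam = 0 recovers OLS
beta_ridge = ridge_closed_form(X, Y, lam=10.0)  # coefficients shrunk toward zero
```

With λ = 0 this reduces to OLS, and increasing λ shrinks the coefficient vector toward zero, which is the source of both the bias and the reduced variance.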

[1] Hansen, Bruce E., 2022. “A Modern Gauss–Markov Theorem,” Econometrica, Econometric Society, vol. 90(3), pages 1283–1294, May.

[2] Pötscher, Benedikt M. & Preinerstorfer, David, 2022. “A Modern Gauss-Markov Theorem? Really?,” MPRA Paper 112185, University Library of Munich, Germany.