📕 This is the first in a multi-part series on creating web applications with generative AI integration.

Introduction

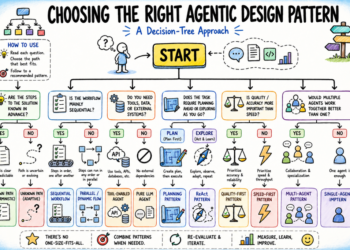

The AI space is a vast and complicated landscape. Matt Turck famously publishes his Machine Learning, AI, and Data (MAD) landscape every year, and it always seems to get crazier and crazier. Check out the latest one, made for 2024.

Overwhelming, to say the least.

However, we can use abstractions to help us make sense of this crazy landscape of ours. The primary one I will be discussing and breaking down in this article is the idea of an AI stack. A stack is just a combination of technologies that are used to build applications. Those of you familiar with web development likely know of the LAMP stack: Linux, Apache, MySQL, PHP. This is the stack that powers WordPress. Using a catchy acronym like LAMP is a good way to help us humans grapple with the complexity of the web application landscape. Those of you in the data field have likely heard of the Modern Data Stack: typically dbt, Snowflake, Fivetran, and Looker (or the Post-Modern Data Stack. IYKYK).

The AI stack is similar, but in this article we will stay a bit more conceptual. I’m not going to specify particular technologies you should be using at each layer of the stack, but instead will simply name the layers, and let you decide where you fit in, as well as what tech you will use to achieve success in that layer.

There are many ways to describe the AI stack. I prefer simplicity, so here is the AI stack in four layers, organized from furthest from the end user (bottom) to closest (top):

- Infrastructure Layer (Bottom): The raw physical hardware necessary to train and do inference with AI. Think GPUs, TPUs, cloud services (AWS/Azure/GCP).

- Data Layer (Bottom): The data needed to train machine learning models, as well as the databases needed to store all of that data. Think ImageNet, TensorFlow Datasets, Postgres, MongoDB, Pinecone, etc.

- Model and Orchestration Layer (Middle): This refers to the actual large language, vision, and reasoning models themselves. Think GPT, Claude, Gemini, or any machine learning model. This also includes the tools developers use to build, deploy, and observe models. Think PyTorch/TensorFlow, Weights & Biases, and LangChain.

- Application Layer (Top): The AI-powered applications that are used by customers. Think ChatGPT, GitHub Copilot, Notion, Grammarly.

Many companies dip their toes in several layers. For example, OpenAI has both trained GPT-4o and created the ChatGPT web application. For help with the infrastructure layer they have partnered with Microsoft to use their Azure cloud for on-demand GPUs. As for the data layer, they built web scrapers to help pull in tons of natural language data to feed to their models during training, not without controversy.

The Virtues of the Application Layer

I agree very much with Andrew Ng and many others in the space who say that the application layer of AI is the place to be.

Why is this? Let’s start with the infrastructure layer. This layer is prohibitively expensive to break into unless you have hundreds of millions of dollars of VC cash to burn. The technical complexity of attempting to create your own cloud service or craft a new type of GPU is very high. There is a reason why tech behemoths like Amazon, Google, Nvidia, and Microsoft dominate this layer. Ditto on the foundation model layer. Companies like OpenAI and Anthropic have armies of PhDs to innovate here. In addition, they had to partner with the tech giants to fund model training and hosting. Both of these layers are also rapidly becoming commoditized. This means that one cloud service or model more or less performs like another. They are interchangeable and can be easily replaced. They mostly compete on price, convenience, and brand name.

The data layer is interesting. The advent of generative AI has led to quite a few companies staking their claim as the most popular vector database, including Pinecone, Weaviate, and Chroma. However, the customer base at this layer is much smaller than at the application layer (there are far fewer developers than there are people who will use AI applications like ChatGPT). This area is also quickly becoming commoditized. Swapping Pinecone for Weaviate is not a difficult thing to do, and if, for example, Weaviate dropped their hosting prices significantly, many developers would likely make the switch from another service.

It’s also important to note innovations happening at the database level. Projects such as pgvector and sqlite-vec are taking tried-and-true databases and making them able to handle vector embeddings. This is an area where I would like to contribute. However, the path to profit is not clear, and thinking about profit here feels a bit icky (I ♥️ open-source!)

That brings us to the application layer. This is where the little guys can notch big wins. The ability to take the latest AI tech innovations and integrate them into web applications is and will continue to be in high demand. The path to profit is clearest when offering products that people love. Applications can either be SaaS offerings or they can be custom-built applications tailored to a company’s particular use case.

Remember that the companies working on the foundation model layer are constantly working to release better, faster, and cheaper models. For example, if you are using the gpt-4o model in your app, and OpenAI updates the model, you don’t have to do a thing to receive the update. Your app gets a nice bump in performance for nothing. It’s similar to how iPhones get regular updates, except even better, because no installation is required. The streamed chunks coming back from your API provider are just magically better.

If you want to switch to a model from a new provider, just change a line or two of code to start getting improved responses (remember, commoditization). Think of the recent DeepSeek moment; what may be frightening for OpenAI is exciting for application builders.
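To make the "line or two" claim concrete, here is a minimal sketch in Python. It assumes both providers expose an OpenAI-style chat completions endpoint, and the names `ModelConfig`, `PROVIDERS`, `ACTIVE_PROVIDER`, and `build_chat_request` are my own inventions for illustration. The URLs and model names are illustrative too, so check each provider's documentation before relying on them. The function only builds the request; it never sends anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    """Everything provider-specific lives here (all values are illustrative)."""
    base_url: str
    model: str


# Hypothetical provider table; verify real values in each provider's docs.
PROVIDERS = {
    "openai": ModelConfig(base_url="https://api.openai.com/v1", model="gpt-4o"),
    "deepseek": ModelConfig(base_url="https://api.deepseek.com", model="deepseek-chat"),
}

# The "line or two" you change to switch providers.
ACTIVE_PROVIDER = "openai"


def build_chat_request(prompt: str, provider: str = ACTIVE_PROVIDER) -> dict:
    """Build the URL and JSON body for a chat request without sending it."""
    config = PROVIDERS[provider]
    return {
        "url": f"{config.base_url}/chat/completions",
        "json": {
            "model": config.model,
            "messages": [{"role": "user", "content": prompt}],
            "stream": True,  # ask for streamed chunks
        },
    }
```

The rest of the app only ever calls `build_chat_request`, so swapping providers never touches application logic. That is the practical upside of commoditization for the app developer.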

It is important to note that the application layer is not without its challenges. I’ve seen quite a bit of hand-wringing on social media about SaaS saturation. It can feel difficult to get users to register for an account, let alone pull out a credit card. It can feel as if you need VC funding for marketing blitzes and yet another in-vogue black-on-black marketing website. The app developer also has to be careful not to build something that will quickly be cannibalized by one of the big model providers. Think about how Perplexity initially built their reputation by combining the power of LLMs with search capabilities. At the time this was novel; nowadays most popular chat applications have this functionality built in.

Another hurdle for the application developer is obtaining domain expertise. Domain expertise is a fancy term for knowing about a niche field like law, medicine, automotive, etc. All of the technical skill in the world doesn’t mean much if the developer doesn’t have access to the necessary domain expertise to ensure their product actually helps someone. As a simple example, one can theorize how a document summarizer may help out a legal company, but without actually working closely with a lawyer, any usability remains theoretical. Use your network to become friends with some domain experts; they can help power your apps to success.

An alternative to partnering with a domain expert is building something specifically for yourself. If you enjoy the product, likely others will as well. You can then proceed to dogfood your app and iteratively improve it.

Thick Wrappers

Early applications with gen AI integration were derided as “thin wrappers” around language models. It’s true that taking an LLM and slapping a simple chat interface on it won’t succeed. You are essentially competing with ChatGPT, Claude, etc. in a race to the bottom.

The canonical thin wrapper looks something like:

- A chat interface

- Basic prompt engineering

- A feature that will likely be cannibalized by one of the big model providers soon, or can already be done using their apps

An example would be an “AI writing assistant” that just relays prompts to ChatGPT or Claude with basic prompt engineering. Another would be an “AI summarizer tool” that passes a text to an LLM to summarize, with no processing or domain-specific knowledge.

With our experience in developing web apps with AI integration, we at Los Angeles AI Apps have come up with the following criterion for how to avoid creating a thin wrapper application:

If the app can’t best ChatGPT with search by a significant factor, then it’s too thin.

A few things to note here, starting with the idea of a “significant factor”. Even if you are able to exceed ChatGPT’s capability in a particular domain by a small factor, it likely won’t be enough to ensure success. You really need to be a lot better than ChatGPT for people to even consider using the app.

Let me motivate this insight with an example. When I was learning data science, I created a movie recommendation project. It was a great experience, and I learned quite a bit about RAG and web applications.

Would it be a good production app? No.

No matter what question you ask it, ChatGPT will likely give you a movie recommendation that is comparable. Even though I was using RAG and pulling in a curated dataset of films, it is unlikely a user will find the responses much more compelling than ChatGPT + search. Since users are familiar with ChatGPT, they would likely stick with it for movie recommendations, even if the responses from my app were 2x or 3x better than ChatGPT (of course, defining “better” is tricky here).

Let me use another example. One app we had considered building out was a web app for city government websites. These sites are notoriously large and hard to navigate. We thought if we could scrape the contents of the website domain and then use RAG, we could craft a chatbot that would effectively answer user queries. It worked fairly well, but ChatGPT with search capabilities is a beast. It oftentimes matched or exceeded the performance of our bot. It would take extensive iteration on the RAG system to get our app to consistently beat ChatGPT + search. Even then, who would want to go to a new domain to get answers to city questions, when ChatGPT + search would yield similar results? Only by selling our services to the city government and having our chatbot integrated into the city website would we get consistent usage.

One way to differentiate yourself is via proprietary data. If there is private data that the model providers are not privy to, then that can be valuable. In this case the value is in the collection of the data, not the innovation of your chat interface or your RAG system. Consider a legal AI startup that provides its models with a large database of legal files that cannot be found on the open web. Perhaps RAG can be done to help the model answer legal questions over those private documents. Can something like this outdo ChatGPT + search? Yes, assuming the legal files cannot be found on Google.
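For readers new to RAG, here is a rough sketch of the retrieval step over private documents. To keep it dependency-free I score documents with plain word-count cosine similarity, which is a stand-in for the embeddings and vector database a real system would use. The documents in `PRIVATE_DOCS` and the function names are made up for illustration.

```python
import math
import re
from collections import Counter

# Made-up private documents that a public chatbot would never have seen.
PRIVATE_DOCS = [
    "Internal memo: the lease dispute with Acme settled for 40000 dollars in March.",
    "Case notes: the patent filing for the widget was rejected on prior art grounds.",
    "Billing policy: invoices are sent on the first business day of each month.",
]


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, documents: list, k: int = 2) -> list:
    """Return the k documents most similar to the question."""
    query = tokenize(question)
    ranked = sorted(documents, key=lambda d: cosine(query, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, documents: list, k: int = 2) -> str:
    """Stuff the retrieved documents into the prompt sent to the model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

Calling `build_prompt("How did the lease dispute settle?", PRIVATE_DOCS, k=1)` puts the Acme memo in the context. Notice that nothing in this code is special; the advantage comes entirely from what is in `PRIVATE_DOCS`.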

Going even further, I believe the best way to have your app stand out is to forgo the chat interface entirely. Let me introduce two ideas:

- Proactive AI

- Overnight AI

The Return of Clippy

I read an excellent article from the Evil Martians that highlights the innovation starting to take place at the application level. They describe how they have forgone a chat interface entirely, and instead are trying something they call proactive AI. Recall Clippy from Microsoft Word. As you were typing out your document, it would butt in with suggestions. These were oftentimes not helpful, and poor Clippy was mocked. With the advent of LLMs, you can imagine making a much more powerful version of Clippy. It wouldn’t wait for a user to ask it a question, but instead could proactively give users suggestions. This is similar to the coding Copilot that comes with VS Code. It doesn’t wait for the programmer to finish typing, but instead offers suggestions as they code. Done with care, this style of AI can reduce friction and improve user satisfaction.

Of course there are important considerations when creating proactive AI. You don’t want your AI pinging the user so often that it becomes irritating. One can also imagine a dystopian future where LLMs are constantly nudging you to buy cheap junk or spend time on some mindless app without your prompting. Of course, machine learning models are already doing this, but putting human language on it can make it even more insidious and annoying. It is imperative that the developer ensures their application is used to benefit the user, not swindle or influence them.
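One way to keep a proactive assistant from becoming irritating is to gate every suggestion behind a confidence threshold and a cooldown. The sketch below is my own illustration, not something from the Evil Martians article; the class name, thresholds, and back-off rule are all assumptions you would tune for your app.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SuggestionThrottle:
    """Decides whether a proactive suggestion is worth interrupting the user."""
    min_confidence: float = 0.8      # assumed threshold; tune per app
    cooldown_seconds: float = 60.0   # assumed minimum gap between suggestions
    last_shown_at: Optional[float] = None

    def should_show(self, confidence: float, now: float) -> bool:
        """Return True and start the cooldown if the suggestion may be shown."""
        if confidence < self.min_confidence:
            return False  # not sure enough to interrupt
        if self.last_shown_at is not None and now - self.last_shown_at < self.cooldown_seconds:
            return False  # pinged the user too recently
        self.last_shown_at = now
        return True

    def record_dismissal(self) -> None:
        """Back off when the user waves a suggestion away."""
        self.cooldown_seconds *= 2
```

Passing `now` in explicitly (for example from `time.monotonic()`) keeps the class easy to test. Doubling the cooldown on dismissal is one simple way to let the user's behavior, rather than the developer's engagement goals, set the pace.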

Getting Stuff Done While You Sleep

Another alternative to the chat interface is to use the LLMs offline rather than online. For example, imagine you wanted to create a newsletter generator. This generator would use an automated scraper to pull in leads from a variety of sources. It would then create articles for leads it deems interesting. Each new issue of your newsletter would be kicked off by a background job, perhaps daily or weekly. The important detail here: there is no chat interface. There is no way for the user to have any input; they just get to enjoy the latest issue of the newsletter. Now we’re really starting to cook!

I call this overnight AI. The key is that the user never interacts with the AI at all. It just produces a summary, an explanation, an analysis, etc. overnight while you are sleeping. In the morning, you wake up and get to enjoy the results. There should be no chat interface or suggestions in overnight AI. Of course, it can be very useful to have a human in the loop. Imagine that the issue of your newsletter comes to you with proposed articles. You can either accept or reject the stories that go into your newsletter. Perhaps you can build in functionality to edit an article’s title, summary, or cover image if you don’t like something the AI generated.
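Here is a small sketch of how the newsletter flow could hang together: a background job turns leads into drafts, a human accepts or rejects them in the morning, and only accepted drafts make the issue. The `Draft` class and function names are hypothetical, and `is_interesting` and `write_summary` are placeholders for whatever scraper filter and LLM call you actually use.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass
class Draft:
    title: str
    summary: str
    status: str = "pending"  # "pending", "accepted", or "rejected"


def run_overnight_job(
    leads: Iterable[str],
    is_interesting: Callable[[str], bool],
    write_summary: Callable[[str], str],
) -> List[Draft]:
    """Background job: turn interesting leads into drafts awaiting review."""
    return [
        Draft(title=lead, summary=write_summary(lead))
        for lead in leads
        if is_interesting(lead)
    ]


def review(draft: Draft, accept: bool, new_title: Optional[str] = None) -> Draft:
    """Human-in-the-loop step: accept or reject, optionally fixing the title."""
    if new_title:
        draft.title = new_title
    draft.status = "accepted" if accept else "rejected"
    return draft


def build_issue(drafts: Iterable[Draft]) -> List[Draft]:
    """Only drafts a human accepted make it into the newsletter."""
    return [draft for draft in drafts if draft.status == "accepted"]
```

In a real app `run_overnight_job` would be triggered by a scheduler such as cron or a job queue, and `review` would sit behind a simple approve/reject screen rather than a chat box.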

Abstract

In this article, I covered the basics behind the AI stack. This covered the infrastructure, data, model/orchestration, and application layers. I discussed why I believe the application layer is the best place to work, primarily due to the lack of commoditization, proximity to the end user, and opportunity to build products that benefit from work done in lower layers. We discussed how to prevent your application from being just another thin wrapper, as well as how to use AI in a way that avoids the chat interface entirely.

In part two, I will discuss why the best language to learn if you want to build web applications with AI integration is not Python, but Ruby. I will also break down why the microservices architecture for AI apps may not be the best way to build your apps, despite it being the default that most go with.

🔥 If you’d like a custom web application with generative AI integration, visit losangelesaiapps.com