Massive language fashions (LLMs) have superior past easy autocompletion, predicting the following phrase or phrase. Current developments enable LLMs to grasp and observe human directions, carry out complicated duties, and even interact in conversations. These developments are pushed by fine-tuning LLMs with specialised datasets and reinforcement studying with human suggestions (RLHF). RLHF is redefining how machines be taught and work together with human inputs.

What’s RLHF?

RLHF is a method that trains a big language mannequin to align its outputs with human preferences and expectations utilizing human suggestions. People consider the mannequin’s responses and supply scores, which the mannequin makes use of to enhance its efficiency. This iterative course of helps LLMs to refine their understanding of human directions and generate extra correct and related output. RLHF has performed a vital function in bettering the efficiency of InstructGPT, Sparrow, Claude, and extra, enabling them to outperform conventional LLMs, resembling GPT-3.

Let’s perceive how RLHF works.

RLHF vs Non-RLHF

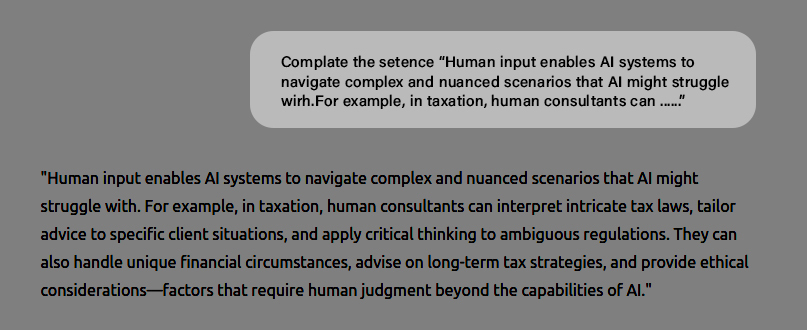

Massive language fashions have been initially designed to foretell the following phrase or token to finish a sentence based mostly on the enter often called ‘immediate’. For instance, to finish a press release, you immediate GPT-3 with the next enter:

Immediate: Full the sentence “Human enter allows AI techniques to navigate complicated and nuanced eventualities that AI would possibly wrestle with. For instance, in taxation, human consultants can …….”

The mannequin then efficiently completes the assertion as follows:

“Human enter allows AI techniques to navigate complicated and nuanced eventualities that AI would possibly wrestle with. For instance, in taxation, human consultants can interpret intricate tax legal guidelines, tailor recommendation to particular shopper conditions, and apply vital considering to ambiguous laws.”

Asking LLM to proceed a immediate

Nonetheless, massive language fashions are anticipated to do greater than full a immediate. LLMs are required to jot down tales, emails, poems, code, and extra.

RLHF vs Non-RLHF Examples

Listed below are just a few examples displaying the distinction between non-RLHF LLM (Subsequent token predictor) and RLHF LLM (skilled on human suggestions) output.

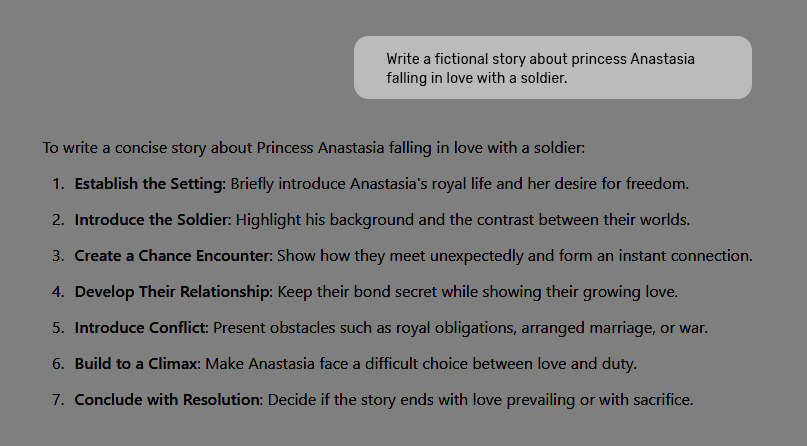

Non-RLHF Output – Story

Whenever you inform GPT-3 to ‘write a fictional story about Princess Anastasia falling in love with a soldier’, a non-RLHF mannequin generates output like:

Immediate: Write a fictional story about Princess Anastasia falling in love with a soldier.

Nonetheless, massive language fashions are anticipated to do greater than full a immediate. LLMs are required to jot down tales, emails, poems, code, and extra.

RLHF vs Non-RLHF Examples

Listed below are just a few examples displaying the distinction between non-RLHF LLM (Subsequent token predictor) and RLHF LLM (skilled on human suggestions) output.

Non-RLHF Output – Story

Whenever you inform GPT-3 to ‘write a fictional story about Princess Anastasia falling in love with a soldier,’ a non-RLHF mannequin generates output like:

Immediate: Write a fictional story about Princess Anastasia falling in love with a soldier.

The mannequin is aware of learn how to write tales, however it may’t perceive the request as a result of LLMs are skilled on web scrapes that are much less conversant in instructions like ‘write a narrative/ electronic mail’, adopted by a narrative or electronic mail itself. Predicting the following phrase is essentially totally different from intelligently following directions.

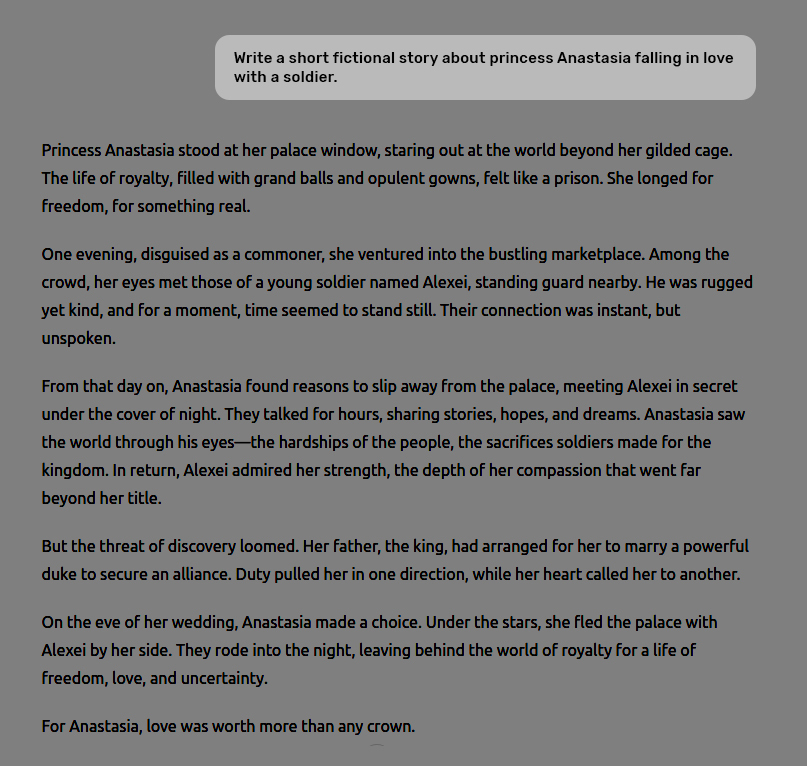

RLHF Output – Story

Here’s what you get when the identical immediate is offered to an RLHF mannequin skilled on human suggestions.

Immediate: Write a fictional story about Princess Anastasia falling in love with a soldier.

Now, the LLM generated the specified reply.

Non-RLHF Output – Arithmetic

Immediate: What’s 4-2 and 3-1?

The non-RLHF mannequin doesn’t reply the query and takes it as a part of a narrative dialogue.

RLHF Output – Arithmetic

Immediate: What’s 4-2 and 3-1?

The RLHF mannequin understands the immediate and generates the reply appropriately.

How does RLHF Work?

Let’s perceive how a big language mannequin is skilled on human suggestions to reply appropriately.

Step 1: Beginning with Pre-trained Fashions

The method of RLHF begins with a pre-trained language mode or a next-token predictor.

Step 2: Supervised Mannequin Tremendous-tuning

A number of enter prompts in regards to the duties you need the mannequin to finish and a human-written ideally suited response to every immediate are created. In different phrases, a coaching dataset consisting of <immediate, corresponding ideally suited output> pairs is created to fine-tune the pre-trained mannequin to generate comparable high-quality responses.

Step 3: Making a Human Suggestions Reward Mannequin

This step includes making a reward mannequin to judge how effectively the LLM output meets high quality expectations. Like an LLM, a reward mannequin is skilled on a dataset of human-rated responses, which function the ‘floor fact’ for assessing response high quality. With sure layers eliminated to optimize it for scoring quite than producing, it turns into a smaller model of the LLM. The reward mannequin takes the enter and LLM-generated response as enter after which assigns a numerical rating (a scalar reward) to the response.

So, human annotators consider the LLM-generated output by rating their high quality based mostly on relevance, accuracy, and readability.

Step 4: Optimizing with a Reward-driven Reinforcement Studying Coverage

The ultimate step within the RLHF course of is to coach an RL coverage (primarily an algorithm that decides which phrase or token to generate subsequent within the textual content sequence) that learns to generate textual content the reward mannequin predicts people would like.

In different phrases, the RL coverage learns to suppose like a human by maximizing suggestions from the reward mannequin.

That is how a complicated massive language mannequin like ChatGPT is created and fine-tuned.

Closing Phrases

Massive language fashions have made appreciable progress over the previous few years and proceed to take action. Strategies like RLHF have led to modern fashions resembling ChaGPT and Gemini, revolutionizing AI responses throughout totally different duties. Notably, by incorporating human suggestions within the fine-tuning course of, LLMs usually are not solely higher at following directions however are additionally extra aligned with human values and preferences, which assist them higher perceive the boundaries and functions for which they’re designed.

RLHF is remodeling massive language fashions (LLMs) by enhancing their output accuracy and talent to observe human directions. In contrast to conventional LLMs, which have been initially designed to foretell the following phrase or token, RLHF-trained fashions use human suggestions to fine-tune responses, aligning responses with person preferences.

Abstract: RLHF is remodeling massive language fashions (LLMs) by enhancing their output accuracy and talent to observe human directions. In contrast to conventional LLMs, which have been initially designed to foretell the following phrase or token, RLHF-trained fashions use human suggestions to fine-tune responses, aligning responses with person preferences.

The submit How RLHF is Reworking LLM Response Accuracy and Effectiveness appeared first on Datafloq.