In recent decades, global climate monitoring has made significant strides, leading to the creation of new, extensive observational datasets (Karpatne et al., 2019). These datasets are essential for improving numerical weather predictions and refining remote sensing retrievals by providing detailed insights into complex physical processes (Alizadeh, 2022). However, as the volume and complexity of the data grow, identifying patterns within the observations becomes increasingly challenging (Zhou et al., 2021). Extracting key features from these datasets could lead to significant advancements in our understanding of phenomena like convection and precipitation, further enhancing our knowledge of the changing global climate.

In this post, we’ll explore some of these complex data patterns through the lens of precipitation, which has been highlighted as a critically important area of study under warming global temperatures (IPCC, 2023). Rather than relying on randomly generated or simulated data for this project, we’ll work with real-world observations from across the globe, which are publicly available for you, my reader, to explore and experiment with as well. Let this post serve as a research guide, starting with the importance of good quality data, and concluding with insights on linear and nonlinear interpretations of said data.

If you’d like to follow along with some code, check out our interactive Google Colab notebook.

This analysis unfolds in three parts, each of which is a separate, published research article:

- Curating a robust, multidimensional dataset

- Analyzing linear embeddings

- Exploring nonlinear features

1. The Microphysical Dataset

https://doi.org/10.1029/2024EA003538

When we talk about understanding the features of precipitation, what are we really asking? How complex can something as common as rain or snow be? It’s easy to look outside on a stormy day and say, “It’s raining” or “It’s snowing”. But what’s actually happening in those moments? Can we be more precise? For example, how intense is the rainfall? Are the raindrops large or small? If it’s snowing, what do the snowflakes look like? Are they fluffy, dendritic crystals, or are they composed of multiple, fused particles in large aggregate clumps (e.g., Fig. 1)? If the temperature hovers near zero degrees Celsius (C), do the snowflakes become dense and slushy? How fast are they falling? These differences can have a big impact on what happens when the particles reach the ground, and categorizing these processes into distinct groups is non-trivial (Pettersen et al., 2021).

Understanding these processes is crucial for better monitoring and mitigating the impacts of flooding, runoff, freezing rain and extreme precipitation, all of which are potentially dangerous events with billions of dollars of associated global damages each year (Sturm et al., 2017). But with thousands of particles falling over just a few square meters in a matter of minutes, how can we quantify this complex process? It’s not just about counting the particles; we also need to capture key characteristics like size and shape. Instead of attempting this manually (an impossible task, go try for yourself), we typically rely on remote sensing instruments to do the heavy lifting. One such tool is the NASA Precipitation Imaging Package (PIP), a video disdrometer that provides detailed observations of falling raindrops and snow particles (Pettersen et al., 2020), as shown below in Fig. 2.

This relatively inexpensive instrument consists of a 150-watt halogen bulb and a high-speed video camera (capturing at 380 frames per second) positioned two meters apart (King et al., 2024). As particles fall between the bulb and the camera, they block the light, creating silhouettes that can be analyzed for variations in size and shape. By tracking the same particle across multiple frames, the PIP software can also determine its fall speed (Fig. 3). With additional assumptions about particle motion in the air, the PIP data also allow us to derive minute-scale particle size distributions (PSDs), fall speeds, and effective particle density distributions (Newman et al., 2009). These microphysical measurements, when combined with nearby meteorological observations of surface variables like temperature, relative humidity, pressure, and wind speed, offer a comprehensive snapshot of the environment at the time of observation.

Over a span of 10 years, we collected more than 1 million minutes of particle microphysical observations, alongside collocated surface meteorological variables, across 10 different sites (Fig. 4). Gathering data from multiple regional climates over such a long period was crucial to building a robust database of precipitation events. To ensure consistency, all microphysical observations were recorded using the same type of instrument with identical calibration settings and software versions. We then performed a thorough quality assurance (QA) process to eliminate erroneous data, correct timing drifts, and remove any unphysical outliers. This curated information was then standardized, packaged into Network Common Data Form (NetCDF) files, and made publicly available through the University of Michigan’s DeepBlue data repository.

You’re welcome to download and explore the dataset yourself! For more details on the sites included, the QA process, and the microphysical differences observed between regions, please refer to our associated data paper published in the journal Earth and Space Science.

To describe the PSD, we calculate a pair of parameters (n0 and λ) representing the intercept and slope of an inverse exponential fit (Eq. 1). This fit was chosen because it has been widely used in previous literature to accurately describe snowfall PSDs (Cooper et al., 2017; Wood and L’Ecuyer, 2021). However, other fits (e.g., a gamma distribution) could be considered in future work to better capture large aggregate particles (Duffy and Posselt, 2022).
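As a rough illustration of how such a fit can be computed, here is a minimal sketch. It assumes the common exponential form N(D) = n0 · exp(-λD) and estimates both parameters with a straight-line fit in log space. The function name `fit_exponential_psd`, the synthetic bin values, and the units are assumptions made for this example; this is not the processing code used to build the dataset.

```python
import numpy as np


def fit_exponential_psd(diameters, concentrations):
    """Fit N(D) = n0 * exp(-lam * D) by least squares on log(N) versus D.

    Hypothetical helper for illustration only. Returns (n0, lam).
    """
    d = np.asarray(diameters, dtype=float)
    n = np.asarray(concentrations, dtype=float)
    valid = n > 0  # empty size bins have no logarithm, so skip them
    slope, intercept = np.polyfit(d[valid], np.log(n[valid]), 1)
    return float(np.exp(intercept)), float(-slope)


# Synthetic size bins (assumed to be in mm) with known parameters
bin_centers = np.linspace(0.5, 10.0, 20)
synthetic_psd = 5000.0 * np.exp(-1.2 * bin_centers)

n0, lam = fit_exponential_psd(bin_centers, synthetic_psd)
print(f"n0 = {n0:.1f}, lambda = {lam:.3f}")
```

A log-space fit is simple and fast, but it weights sparse large-particle bins as heavily as well-sampled small-particle bins, which is one reason alternative fits may be worth exploring.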

n0-λ joint 2D histograms are shown below in Fig. 5 for each site, demonstrating the wide variety of precipitation PSDs occurring across different regional climates. Note how some sites display bimodal distributions (OLY) compared to very narrow distributions at others (NSA). We have also put together a Python API for interacting with and visualizing this data called pipdb. Please see our documentation on readthedocs for more information on how to install and use this package for your own project.

In summary, we’ve compiled a high-quality, multidimensional dataset of precipitation microphysical observations, capturing details such as the particle size distributions, fall speeds, and effective densities. These measurements are complemented by a range of nearby surface meteorological variables, providing crucial context about the specific types of precipitation occurring during each minute (e.g., was it warm out, or cold?). A full list of the variables we’ve collected for this project is shown in Table 1 below.

Now, what can we do with this information?

2. Analyzing Linear Embeddings with PCA

https://doi.org/10.1175/JAS-D-24-0076.1

With our data collected, it’s time to put it to use. We begin by exploring linear embeddings through Principal Component Analysis (PCA), following the methodology of Dolan et al. (2018). Their work focused on uncovering the latent features in rainfall drop size distributions (DSDs), identifying six key modes of variability linked to the physical processes that govern drop formation across a variety of regions. Building on this, we aim to extend the analysis to snowfall events using our custom dataset from Part 1. I won’t delve into the mechanics of PCA here, as there are already many excellent resources on TDS that cover its implementation in detail.

Before applying PCA, we segment the full dataset into discrete 5-minute intervals. This segmentation allows us to calculate the PSD parameters with a sufficiently large sample size. We then filter these intervals, selecting only those with effective density values below 0.4 g/cm³ (i.e., values typically associated with snowfall and characterized by less dense particles). This filtering results in a dataset of 210,830 five-minute periods ready for analysis. For the variables used to fit the PCA, we choose a subset from Table 1 related to snowfall, derived from the PIP. These variables include n0, λ, Fs, Rho, Nt, and Sr (see Table 1 for details). We focused on a smaller subset of observations from the disdrometer alone here, because future sites might not have collocated surface variables and we were interested in what could be extracted from just this six-dimensional dataset.
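The segmentation and filtering step might look something like the sketch below. It uses a tiny synthetic minute-scale table, assumes the 5-minute values are simple means of the 1-minute values (an assumption made for this example), and borrows the column names `Rho` and `Nt` from the variable list above. The actual file layout and aggregation used for the paper may differ.

```python
import pandas as pd

# Ten synthetic 1-minute records: five low-density minutes, then five dense ones
minutes = pd.date_range("2000-01-01 00:00", periods=10, freq="min")
obs = pd.DataFrame(
    {
        "Rho": [0.1] * 5 + [0.8] * 5,     # effective density, g/cm^3 (made up)
        "Nt": [200.0] * 5 + [900.0] * 5,  # particle counts (made up)
    },
    index=minutes,
)

# Aggregate to discrete 5-minute intervals (mean aggregation is assumed here)
five_min = obs.resample("5min").mean()

# Keep only intervals below the 0.4 g/cm^3 effective density threshold
snow_like = five_min[five_min["Rho"] < 0.4]
print(snow_like)
```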

Before diving into the analysis, it’s important to first inspect the data to ensure everything looks as expected. Remember the old GIGO adage: garbage in, garbage out. We want to mitigate the impact of bad data if we can. By examining the value distributions of each variable, we confirmed they fall within the expected ranges. Additionally, we reviewed the covariance matrix of the input variables to gain some preliminary insights into their joint behavior (Fig. 6). For instance, variables like n0 and Nt, both tightly coupled to the number of particles present, show high correlation as expected, while variables like effective density (Rho) and Nt display less of a relationship. After scaling and normalizing the inputs, we proceed by feeding them into scikit-learn’s PCA implementation.
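For readers who want to see the shape of this step, here is a minimal sketch using scikit-learn’s `StandardScaler` and `PCA`. The input matrix is random synthetic data standing in for the six PIP variables, and the choice of three components is made purely for illustration. None of the numbers it prints correspond to the results discussed in this post.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)

# Synthetic stand-in for six input variables (n0, lambda, Fs, Rho, Nt, Sr):
# three hidden factors mixed into six observed columns, plus a little noise
latent = rng.normal(size=(1000, 3))
mixing = rng.normal(size=(3, 6))
X = latent @ mixing + 0.1 * rng.normal(size=(1000, 6))

# Standardize each column to zero mean and unit variance before PCA
X_scaled = StandardScaler().fit_transform(X)

pca = PCA(n_components=3)
scores = pca.fit_transform(X_scaled)      # per-sample values along each EOF
explained = pca.explained_variance_ratio_  # fraction of variance per EOF
loadings = pca.components_                 # weight of each input in each EOF

print("Explained variance per EOF:", np.round(explained, 3))
```

Inspecting `loadings` row by row is one way to see which inputs each EOF is most strongly tied to.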

Applying PCA to the inputs results in three Empirical Orthogonal Functions (EOFs) that collectively account for 95% of the variability in the dataset (Fig. 7). The first EOF is the most significant, capturing roughly 55% of the dataset’s variance, as evidenced by its broad distribution in Fig. 7.a. When examining the standard anomalies of the EOF values for each input variable, EOF1 shows a strong negative relationship with all inputs. The second EOF accounts for about 20% of the variance, with a slightly narrower distribution (Fig. 7.b), and is most strongly associated with the fall speed (Fs) and density (Rho) inputs. Finally, EOF3, which explains around 15% of the variance, is primarily related to the λ and snowfall rate (Sr) variables (Fig. 7.c).

On their own, these EOFs are challenging to interpret in physical terms. What underlying features are they capturing? Are these embeddings physically meaningful? One way to simplify the interpretation is by focusing on the most extreme values in each distribution, as these are most strongly associated with each EOF. While this manual clustering approach leaves much of the distribution near the origin ambiguous, it allows us to separate the data into distinct groups that can be analyzed more closely. By applying a σ > 2 threshold (represented by the thin white dashed lines in Fig. 7.a-c), we can divide this 3D distribution of points into six distinct groups of equal sampling volume. Since visualizing this separation in 2D is particularly challenging, we’ve provided an interactive data viewer (Fig. 8), created with Plotly, to make this distinction clearer. Feel free to click on the figure below to explore the data yourself.
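To make the thresholding idea concrete, here is a small sketch of one way to label the extreme points. It standardizes each EOF score, keeps points whose largest standardized score exceeds 2 in magnitude, and assigns them to the positive or negative group of that EOF. The function name `label_extremes`, the label numbering, and the tie-breaking rule (using the EOF with the largest magnitude) are assumptions made for this example and may not match how the groups were built in the paper.

```python
import numpy as np


def label_extremes(scores, threshold=2.0):
    """Assign each sample to one of six extreme groups, or -1 if ambiguous.

    Labels: 0/1 = +/- EOF1, 2/3 = +/- EOF2, 4/5 = +/- EOF3.
    Hypothetical helper for illustration only.
    """
    scores = np.asarray(scores, dtype=float)
    z = (scores - scores.mean(axis=0)) / scores.std(axis=0)
    dominant = np.abs(z).argmax(axis=1)           # EOF with the largest |z|
    dominant_z = z[np.arange(len(z)), dominant]   # signed z along that EOF
    labels = np.where(dominant_z > 0, 2 * dominant, 2 * dominant + 1)
    labels[np.abs(dominant_z) <= threshold] = -1  # near the origin: ambiguous
    return labels


# Synthetic scores: mostly zeros, with one strong outlier per EOF direction
scores = np.zeros((100, 3))
scores[0, 0], scores[1, 0] = 10.0, -10.0
scores[2, 1], scores[3, 1] = 10.0, -10.0
scores[4, 2], scores[5, 2] = 10.0, -10.0

labels = label_extremes(scores)
print(labels[:8])
```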

With the most extreme EOF clusters selected, we can now plot these in physical variable spaces to begin interpreting them. This is demonstrated in Fig. 9 across different variable spaces: n0-λ (panel a), Fs-Rho (panel b), λ-Dm (panel c), and Sr-Dm (panel d). Starting with the pink and blue clusters in Fig. 9.a (representing the positive and negative EOF1 values), we see a clear separation in n0-λ space. The pink cluster, characterized by a high PSD intercept and slope, indicates a high-intensity grouping, suggestive of many small particles, while the blue cluster shows the opposite behavior. This is indicative of a potential intensity embedding.

In panel b, there’s a distinct separation between the purple and light blue clusters (corresponding to the positive and negative EOF2 values). The purple cluster, associated with high fall speed and density, contrasts with the light blue cluster, which shows the opposite characteristics. This pattern likely represents a particle temperature/wetness embedding, describing the “stickiness” of the snow as it falls. Warmer, denser particles (such as partially melted or frozen particles) tend to fall faster, much like how a slushy pellet falls faster than a dry snowflake.

Finally, in panels c and d, the yellow and magenta clusters are separated based on PSD slope and mass-weighted mean diameter. While less clear, this suggests a potential relationship with particle size and the underlying snowfall regime, such as differences between complex shallow systems and deep systems.

Another way to strengthen our confidence in these attributions is by comparing the groups to independent observations. We can do this by cross-referencing the PCA-based snowfall classifications from the PIP with nearby surface radar observations (i.e., a Micro Rain Radar, MRR) and reanalysis (i.e., ERA5) estimates to evaluate physical consistency. This is one reason we recommend not always using all available data in the dimensionality reduction, as it limits the ability to later assess the robustness of the embeddings. To validate our approach, we examined a series of case studies at Marquette (MQT), Michigan, to see how well these classifications align. For instance, in Fig. 10, we observe a transition from a high-intensity snowstorm (pink) to partially melted mixed-phase snow crystals (sleet) as temperatures briefly rise above zero degrees C (panel h), and then back to high-intensity snow as temperatures drop below zero later in the day. This also aligns with the changes we see in reflectivity (panel a), and we can see this transition in the n0-λ plot in panel i.

Building on our PCA analysis and the consistency observed with collocated observations, we also created Fig. 11, which summarizes how the primary linear embeddings identified through PCA are distributed across different physical variable spaces. These classifications offer critical microphysical insights that can enhance a priori datasets, ultimately improving the accuracy of state-of-the-art models and snowfall retrievals.

However, since PCA is limited to linear embeddings, this raises an important question: are there nonlinear patterns within this dataset that we have yet to explore? Additionally, what new insights might emerge if we extend this analysis beyond snow to include other types of precipitation?

Let’s tackle these questions in the next section!

3. Nonlinear dimensionality reduction using UMAP

https://doi.org/10.1126/sciadv.adu0162

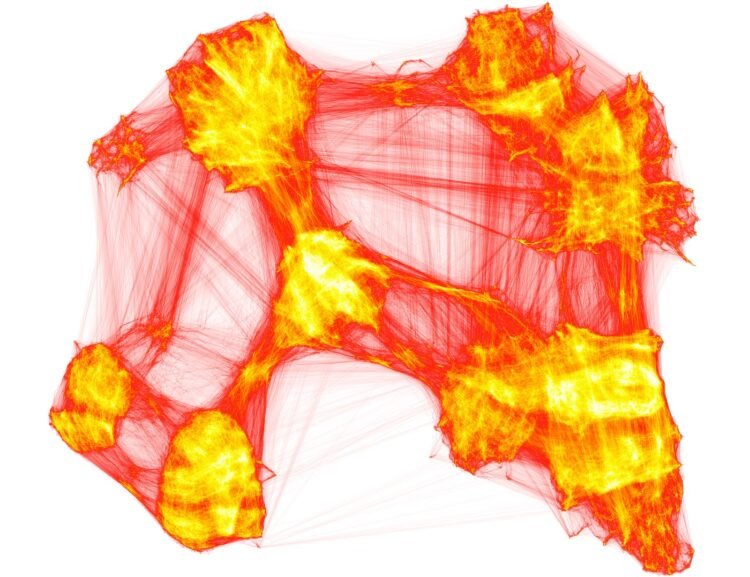

In order to examine more complex, nonlinear embeddings, we need to consider a different type of unsupervised learning that loosens the linearity assumptions of techniques like PCA. This brings us to the concept of manifold learning. The idea behind manifold learning is that high-dimensional data often lie on a lower-dimensional, curved manifold within the original data space (McInnes et al., 2020). By mapping this manifold, we can uncover the underlying structure and relationships that linear methods might miss. Techniques like t-SNE, UMAP, VAEs, or Isomap can reveal these intricate patterns, providing a more nuanced understanding of the dataset’s latent features. Applying manifold learning to our dataset could uncover nonlinear embeddings that further distinguish precipitation types, potentially offering even deeper insights into the microphysical processes at play. As a little hint as to what’s to come, see Fig. 12. As mentioned before, I won’t go into the implementation details of such techniques, as this has been covered many times here on TDS.

Additionally, we want to use our full dataset of both disdrometer observations and collocated surface meteorologic variables this time around to see if the additional dimensions provide useful context for better differentiating between highly complex physical processes. For example, can we detect different types of mixed-phase precipitation if we know more about the temperature and humidity at the time of observation? So, unlike the previous section where we restricted the inputs to just PIP data and just snowfall, we now include all 12 dimensions for the entire dataset. This also significantly reduces our total sample down to 128,233 5-minute periods at 7 locations, since not all sites have working surface meteorologic stations to pull data from. As is always the case with these types of problems, as we add more dimensions, we run up against the dreaded curse of dimensionality.

“As the dimensionality of the feature space increases, the number of configurations can grow exponentially, and thus the number of configurations covered by an observation decreases.” (Richard Bellman)

This tradeoff between the number of inputs and feature sparsity is a challenge we will have to keep in mind moving forward. Luckily for us, we only have 12 dimensions, which may seem like a lot, but is really quite small compared to many other projects in the Natural Sciences with potentially thousands of dimensions (Auton et al., 2015).

As mentioned earlier, we explored a variety of nonlinear models for this phase of the project (see Table 2). In any Machine Learning (ML) project we undertake, we prefer to start with simpler, more interpretable methods and gradually progress to more sophisticated techniques, as less complex approaches are often more efficient and easily understood.

With this strategy in mind, we began by building on the results from Part 2, using PCA once again as a baseline for this larger dataset of rain and snow particles. We then compared PCA to nonlinear techniques such as Isomap, VAEs, t-SNE, and UMAP. After conducting a series of sensitivity analyses, we found that UMAP outperformed the others in producing clear embeddings in a more computationally efficient manner, making it the focus of our discussion here. Additionally, with UMAP’s improved global separation of data across the manifold, we can move beyond manual clustering, employing a more objective method like Hierarchical Density-Based Spatial Clustering of Applications with Noise (HDBSCAN) to group similar cases together (McInnes et al., 2017).

Applying UMAP to this 12-dimensional dataset resulted in the identification of three primary latent embeddings (LEs). We experimented with various hyperparameters, including the number of embeddings, and found that, similar to PCA, the first two embeddings were the most significant. The third embedding also displayed some separation between certain groups, but beyond this third level, additional embeddings provided little separation and were therefore excluded from the analysis (although these might be interesting to look at more in future work). The first two LEs, along with a case study example from Marquette, Michigan, illustrating discrete data points over a 24-hour period, are shown below in Fig. 13.

Immediately, we notice several key differences from the previous snowfall-focused study using PCA. With the addition of rain and mixed-phase data, the first and second empirical orthogonal functions (EOF1 and EOF2) have now swapped places. The primary embedding now encodes information about particle phase rather than intensity. Intensity shifts to the second latent embedding (LE2), remaining important but now secondary. The third LE still appears to relate to particle size and shape, particularly within the snowfall portion of the manifold.

Applying HDBSCAN to the manifold groups generated by UMAP resulted in nine distinct clusters, plus one ambiguous cluster (Fig. 13.a). The separation between clusters is much clearer compared to PCA, and these groups seem to represent distinct physical precipitation processes, ranging from snowfall to mixed-phase to rainfall at various intensity levels. Interestingly, the ambiguous points and the connections between nodes in the graph form distinct pathways of particle evolution. This finding is particularly intriguing as it outlines clear particle evolutionary pathways, showing how a raindrop can transform into a frozen snow crystal under the right atmospheric conditions.

A real-world example of this phenomenon is shown in Fig. 13.b, observed in Marquette on February 15, 2023. Each colored ring represents an individual (5-minute) data point throughout the day, with an arrow indicating the direction of time. In Fig. 13.c, we overlay ancillary radar observations with surface temperatures. Up until around 12:00 UTC, a clear brightband in reflectivity can be seen at approximately 1 km, indicative of a melting layer where temperatures are warm enough for snow to melt into rain. This period was correctly classified as rainfall using our UMAP+HDBSCAN (UH) clustering method. Then, around 17:00 UTC, temperatures rapidly dropped well below freezing, leading to the classification of particles as mixed-phase and eventually as snowfall. These types of tests are critically important for making sure that what your manifold shape suggests makes sense physically.

If you’d like to explore this manifold yourself, examining different sites and seeing how various variables map to the embedding, check out our interactive data analysis tool, or click Fig. 14 below.

When you explore the tool mentioned above, you’ll notice that mapping various input features to the manifold embedding results in smooth gradients. These gradients indicate that the general global structure of the data is likely being captured in a meaningful way, offering valuable insights into what the embeddings are encoding.

Comparing the separation of points using UMAP to that of PCA (where PCA is applied to the exact same dataset as UMAP) shows significantly better separation with UMAP, especially regarding precipitation phase. While PCA can broadly distinguish between “liquid” and “solid” particles, it struggles with the more complex mixed-phase particles. This limitation is evident in the distributions shown in Fig. 15.d-e. PCA often suffers from variance overcrowding near the origin, leading to a tradeoff between the number of clusters we can identify and the size of the ambiguous supercluster. Although HDBSCAN can be applied to PCA in the same manner as UMAP, it only generates two clusters (rain and snow), which isn’t particularly useful on its own and can be achieved with a simple linear threshold. In contrast, UMAP provides much better separation, resulting in 37% fewer ambiguous points and a +0.14 higher silhouette score for the clusters compared to PCA (0.51).
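For anyone wanting to run a similar comparison on their own embeddings, the sketch below shows how these two metrics can be computed with scikit-learn’s `silhouette_score`. It assumes ambiguous points carry the label -1 (the convention HDBSCAN uses for noise) and excludes them from the silhouette calculation, which is an assumption about the scoring rather than a description of the paper’s exact procedure. The two synthetic 2D embeddings are made up and simply contrast tight and loose clusters.

```python
import numpy as np
from sklearn.metrics import silhouette_score


def silhouette_without_noise(embedding, labels):
    """Silhouette score over clustered points only (label -1 is ambiguous)."""
    embedding = np.asarray(embedding, dtype=float)
    labels = np.asarray(labels)
    keep = labels != -1
    return float(silhouette_score(embedding[keep], labels[keep]))


rng = np.random.default_rng(0)
centers = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]])
true_group = np.repeat(np.arange(3), 50)

# Two synthetic embeddings of the same groups: one tight, one loose
tight = centers[true_group] + rng.normal(scale=0.5, size=(150, 2))
loose = centers[true_group] + rng.normal(scale=4.0, size=(150, 2))

# Pretend the clustering flagged every tenth point as ambiguous
labels = true_group.copy()
labels[::10] = -1

ambiguous_fraction = float(np.mean(labels == -1))
score_tight = silhouette_without_noise(tight, labels)
score_loose = silhouette_without_noise(loose, labels)
print(f"ambiguous: {ambiguous_fraction:.0%}")
print(f"silhouette (tight): {score_tight:.2f}, (loose): {score_loose:.2f}")
```

Reporting the ambiguous fraction next to the silhouette score matters, because a method can look well separated simply by pushing difficult points into the ambiguous group.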

As we did previously with PCA, we can conduct a series of case study comparisons when using UMAP to strengthen our physical cluster attributions. By comparing these with collocated MRR observations, we can assess whether the conditions reported in the atmosphere above the PIP align with the attributions produced by the UH clusters, and how these compare to the clusters from PCA. In Fig. 16 below, we examine a few of these cases at Marquette.

In the first column (a), we present an example of a prolonged mixed-phase event, emphasizing LE1, which we know occurred at MQT from recorded weather reports. Along the top panel, both PCA and UMAP identify the period up until 19:00 UTC as rain. However, after this period, the PCA groupings become sparse and mostly ambiguous, while UMAP successfully maps the post-19:00 UTC period as mixed-phase, distinguishing between wet sleet (green) and colder, slushy pellets (purple).

In panel (b), we highlight a case focusing on intensity changes (LE2), where conditions shift from high-intensity mixed-phase to low-intensity mixed-phase, and then back to high-intensity snowfall as temperatures cool. Again, UMAP provides a more detailed and consistent classification compared to the sparser results from PCA.

Finally, in panel (c), we explore an LE3 case involving a shallow system until 15:00 UTC, followed by a deep convective system moving over the site, leading to an increase in the size, shape complexity, and intensity of the snow particles. Here too, UMAP demonstrates a more comprehensive mapping of the event. Note that these are only a few handpicked case studies, however, and we recommend checking out our full paper for multi-year comparisons.

Overall, we found that the nonlinear 3D manifold generated using UMAP provided a smooth and accurate approximation of precipitation phase, intensity, and particle size/shape (Fig. 17). When combined with hierarchical density-based clustering, the resulting groups were distinct and physically consistent with independent observations. While PCA was able to capture the general embedding structure (with EOFs 1-3 largely analogous to LEs 1-3), it struggled to represent the global structure of the data, as many of these processes are inherently nonlinear.

So what does this all imply?

Conclusions

You’ve made it to the end!

I realize this has been a lengthy post, so I’ll keep this section brief. In summary, we’ve developed a high-quality dataset of precipitation observations from multiple sites over several years and used this data to apply both linear and nonlinear dimensionality reduction techniques, aiming to learn more about the structure of the data itself! Across all methods, embeddings related to particle phase, precipitation intensity, and particle size/shape were the most dominant. However, only the nonlinear techniques were able to capture the complex global structure of the data, revealing distinct precipitation groups that aligned well with independent observations.

We believe these groups (and particle transitionary pathways) can be used to improve current satellite precipitation retrievals as well as numerical model microphysical parameterizations. With this in mind, we have built an operational parameter matrix (the lookup space is illustrated in Fig. 18) which produces a simple conditional probability vector for each group based on temperature (T) and particle counts (Nt). Please see the associated manuscript for access/API details to this table.

Nonlinear dimensionality reduction techniques like UMAP are still relatively new and have yet to be widely applied to the large datasets emerging in the Geosciences. It should be noted that these techniques are imperfect, and there are tradeoffs based on your problem context, so keep that in mind. However, our findings here, building first on PCA, suggest that these techniques can be highly effective, emphasizing the value of carefully curated and comprehensive observational databases, which we hope to see more of in the coming years.

Thanks again for reading, and let us know in the comments how you are thinking about learning more from your large observational datasets!

Data and Code

PIP and surface meteorologic observations used as input to the PCA and UMAP are publicly available for download on the University of Michigan’s DeepBlue data repository (https://doi.org/10.7302/37yx-9q53). This dataset is provided as a series of folders containing NetCDF files for each site and year, with standardized CF metadata naming conventions. For more detailed information, please see our data paper (https://doi.org/10.1029/2024EA003538). ERA5 data can be downloaded from the Copernicus Climate Data Store.

PIP data preprocessing code is available on our public GitHub repository (https://github.com/frasertheking/pip_processing), and we have provided a custom API for interacting with the particle microphysics data in Python called pipdb (https://github.com/frasertheking/pipdb). The snowfall PCA project code is available on GitHub (https://github.com/frasertheking/snowfall_pca). Additionally, the code used to fit the DR methods, cluster cases, analyze inputs and generate figures is available for download on a separate, public GitHub repository (https://github.com/frasertheking/umap).

References

Alizadeh, O. (2022). Advances and challenges in climate modeling. Climatic Change, 170(1), 18. https://doi.org/10.1007/s10584-021-03298-4

Auton, A., Abecasis, G. R., Altshuler, D. M., Durbin, R. M., Abecasis, G. R., Bentley, D. R., Chakravarti, A., Clark, A. G., Donnelly, P., Eichler, E. E., Flicek, P., Gabriel, S. B., Gibbs, R. A., Green, E. D., Hurles, M. E., Knoppers, B. M., Korbel, J. O., Lander, E. S., Lee, C., … National Eye Institute, N. (2015). A global reference for human genetic variation. Nature, 526(7571), 68-74. https://doi.org/10.1038/nature15393

Cooper, S. J., Wood, N. B., & L’Ecuyer, T. S. (2017). A variational technique to estimate snowfall rate from coincident radar, snowflake, and fall-speed observations. Atmospheric Measurement Techniques, 10(7), 2557-2571. https://doi.org/10.5194/amt-10-2557-2017

Dolan, B., Fuchs, B., Rutledge, S. A., Barnes, E. A., & Thompson, E. J. (2018). Primary Modes of Global Drop Size Distributions. Journal of the Atmospheric Sciences, 75(5), 1453-1476. https://doi.org/10.1175/JAS-D-17-0242.1

Duffy, G., & Posselt, D. J. (2022). A Gamma Parameterization for Precipitating Particle Size Distributions Containing Snowflake Aggregates Drawn from Five Field Experiments. Journal of Applied Meteorology and Climatology, 61(8), 1077-1085. https://doi.org/10.1175/JAMC-D-21-0131.1

IPCC, 2023: Climate Change 2023: Synthesis Report. Contribution of Working Groups I, II and III to the Sixth Assessment Report of the Intergovernmental Panel on Climate Change [Core Writing Team, H. Lee and J. Romero (eds.)]. IPCC, Geneva, Switzerland, pp. 35-115, doi: 10.59327/IPCC/AR6-9789291691647.

Karpatne, A., Ebert-Uphoff, I., Ravela, S., Babaie, H. A., & Kumar, V. (2019). Machine Learning for the Geosciences: Challenges and Opportunities. IEEE Transactions on Knowledge and Data Engineering, 31(8), 1544-1554. https://doi.org/10.1109/TKDE.2018.2861006

King, F., Pettersen, C., Bliven, L. F., Cerrai, D., Chibisov, A., Cooper, S. J., L’Ecuyer, T., Kulie, M. S., Leskinen, M., Mateling, M., McMurdie, L., Moisseev, D., Nesbitt, S. W., Petersen, W. A., Rodriguez, P., Schirtzinger, C., Stuefer, M., von Lerber, A., Wingo, M. T., … Wood, N. (2024). A Comprehensive Northern Hemisphere Particle Microphysics Data Set From the Precipitation Imaging Package. Earth and Space Science, 11(5), e2024EA003538. https://doi.org/10.1029/2024EA003538

McInnes, L., Healy, J., & Astels, S. (2017). hdbscan: Hierarchical density based clustering. The Journal of Open Source Software, 2(11), 205. https://doi.org/10.21105/joss.00205

McInnes, L., Healy, J., & Melville, J. (2020). UMAP: Uniform Manifold Approximation and Projection for Dimension Reduction (arXiv:1802.03426). arXiv. https://doi.org/10.48550/arXiv.1802.03426

Newman, A. J., Kucera, P. A., & Bliven, L. F. (2009). Presenting the Snowflake Video Imager (SVI). Journal of Atmospheric and Oceanic Technology, 26(2), 167-179. https://doi.org/10.1175/2008JTECHA1148.1

Pettersen, C., Bliven, L. F., von Lerber, A., Wood, N. B., Kulie, M. S., Mateling, M. E., Moisseev, D. N., Munchak, S. J., Petersen, W. A., & Wolff, D. B. (2020). The Precipitation Imaging Package: Assessment of Microphysical and Bulk Characteristics of Snow. Atmosphere, 11(8), Article 8. https://doi.org/10.3390/atmos11080785

Pettersen, C., Bliven, L. F., Kulie, M. S., Wood, N. B., Shates, J. A., Anderson, J., Mateling, M. E., Petersen, W. A., von Lerber, A., & Wolff, D. B. (2021). The Precipitation Imaging Package: Phase Partitioning Capabilities. Remote Sensing, 13(11), Article 11. https://doi.org/10.3390/rs13112183

Sturm, M., Goldstein, M. A., & Parr, C. (2017). Water and life from snow: A trillion dollar science question. Water Resources Research, 53(5), 3534-3544. https://doi.org/10.1002/2017WR020840

Wood, N. B., & L’Ecuyer, T. S. (2021). What millimeter-wavelength radar reflectivity reveals about snowfall: An information-centric analysis. Atmospheric Measurement Techniques, 14(2), 869-888. https://doi.org/10.5194/amt-14-869-2021

Zhou, C., Wang, H., Wang, C., Hou, Z., Zheng, Z., Shen, S., Cheng, Q., Feng, Z., Wang, X., Lv, H., Fan, J., Hu, X., Hou, M., & Zhu, Y. (2021). Geoscience knowledge graph in the big data era. Science China Earth Sciences, 64(7), 1105-1114. https://doi.org/10.1007/s11430-020-9750-4