Exclusive Present-day LLMs are prediction engines and, as such, they’ll only find the most likely solution to problems, which isn’t necessarily the correct one. Although popular models have mostly become better at math, even top performer Gemini 3 Flash would receive a C if assessed with a letter grade.

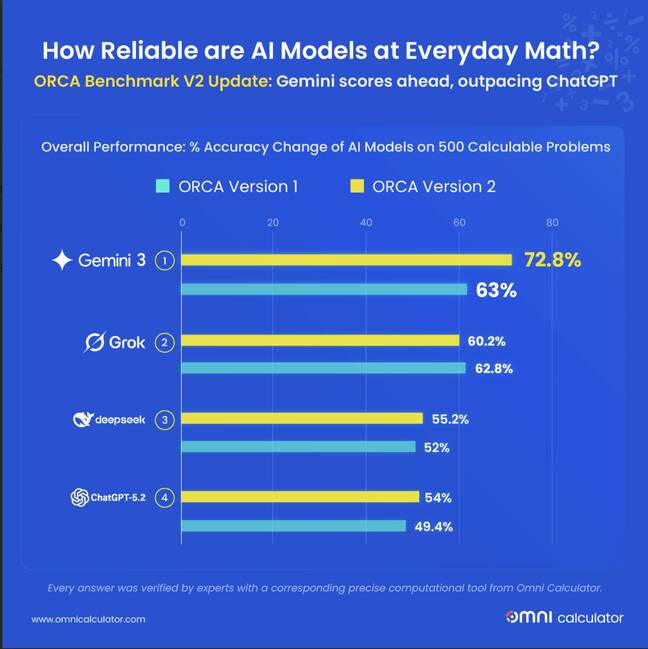

Researchers affiliated with Omni Calculator, a maker of online calculators for specific applications, have subjected a new set of AI models to the company’s ORCA Benchmark, which consists of 500 practical math questions.

In their initial evaluation last November, OpenAI’s ChatGPT-5, Google’s Gemini 2.5 Flash, Anthropic’s Claude Sonnet 4.5, xAI’s Grok 4, and DeepSeek’s DeepSeek V3.2 (alpha) all did poorly, scoring 63 percent or less on math problems.

The latest set of contestants includes ChatGPT-5.2, Gemini 3 Flash, Grok 4.1, and DeepSeek V3.2 (stable release). Sonnet 4.5 wasn’t re-evaluated because it hadn’t changed and its successor had not been released during the testing period.

For this second round of testing – provided to The Register prior to publication – all the models showed improvement except for Grok 4.1, which regressed.

Gemini 3 Flash saw its accuracy hit 72.8 percent, a gain of 9.8 percentage points from its predecessor. DeepSeek V3.2 reached 55.2 percent, a gain of 3.2 percentage points from its alpha version. ChatGPT-5.2 achieved 54.0 percent accuracy, up 4.6 percentage points. And Grok 4.1 slipped to 60.2 percent, a loss of 2.6 percentage points.
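As a quick consistency check, the earlier scores implied by these figures can be recovered by subtracting each change from the current accuracy. The snippet below is only an illustrative sketch: the function name `previous_score` and the dictionary layout are assumptions made for this example, and the derived values are arithmetic on the numbers quoted above, not figures published by the researchers.

```python
# Current accuracy (percent) and change in percentage points, as quoted above.
RESULTS = {
    "Gemini 3 Flash": (72.8, +9.8),
    "DeepSeek V3.2": (55.2, +3.2),
    "ChatGPT-5.2": (54.0, +4.6),
    "Grok 4.1": (60.2, -2.6),
}


def previous_score(current: float, change: float) -> float:
    """Return the earlier score implied by a current score and its change."""
    # Round to one decimal place to hide binary floating-point noise.
    return round(current - change, 1)


for model, (current, change) in RESULTS.items():
    print(f"{model}: {previous_score(current, change)} -> {current}")
```

Every implied earlier score comes out at 63 percent or lower, which matches the description of the first round.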

“A calculator is predictable,” said Dawid Siuda, researcher at ORCA, in a statement. “Ask it the same question today or next year, and the answer stays the same. AI doesn’t work that way. These systems are predicting the next likely word based on patterns. Mathematically, it’s possible for a model to get a question right today and wrong tomorrow.”

The researchers tried to assess the variability of model responses with a metric dubbed “instability” – a measure of how often models changed their answers when asked the same question twice.

Gemini 3 Flash proved the most consistent, changing its answer for only 46.1 percent of incorrect responses. ChatGPT, the researchers report, changed its answer 65.2 percent of the time. And DeepSeek V3.2 changed its answer for 68.8 percent of errors.
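The article does not spell out exactly how ORCA computes instability, so the following is a minimal sketch under a simple assumption: each question is asked twice, and instability is the share of questions whose answer differs between the two runs. The function name `instability` and the sample answers are hypothetical.

```python
from typing import Sequence


def instability(first_run: Sequence[str], second_run: Sequence[str]) -> float:
    """Share of questions whose answer changed between two runs (assumed definition)."""
    if len(first_run) != len(second_run):
        raise ValueError("both runs must cover the same questions")
    if not first_run:
        raise ValueError("need at least one question")
    # Compare answers pairwise, ignoring surrounding whitespace.
    changed = sum(a.strip() != b.strip() for a, b in zip(first_run, second_run))
    return changed / len(first_run)


# Made-up answers to four questions, asked twice.
run_1 = ["12.5", "40", "3.14", "7"]
run_2 = ["12.5", "42", "3.14", "9"]
print(f"{instability(run_1, run_2):.1%}")  # 50.0%
```

To mirror the figures reported for errors, the same function could be applied only to the questions a model answered incorrectly the first time.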

The ORCA researchers note that model performance improvements over time differ across domains. DeepSeek, they say, saw its performance on Biology & Chemistry questions go from 10.5 percent accuracy to 43.9 percent. And Gemini 3 Flash reached Math & Conversions accuracy of 93.2 percent, up from 83 percent. Grok 4.1 meanwhile lost 9 percentage points for its accuracy answering Health & Sports problems and lost 5.3 percentage points for Biology & Chemistry.

The researchers speculate that recent updates to Grok may have prioritized capabilities other than quantitative reasoning.

Noting that calculation errors now account for 39.8 percent of all errors, up from 33.4 percent, and that rounding errors slipped to 25.8 percent, down from 34.7 percent, the ORCA team concludes that AI models are getting better at making the math look right through formatting, while still struggling with arithmetic.

“AI models are fundamentally prediction engines rather than logic engines,” Siuda told The Register in an email. “Because they work on probability, they’re basically guessing the next most likely number or word based on patterns they’ve seen before. It’s like a student who memorizes every answer in a math book but never actually learns how to add.”

Siuda said we knew that about models previously and that hasn’t changed.

“They might get the right answer most of the time, but the moment you give them a unique or tricky problem, or multi-step task, they stumble because they aren’t really calculating anything,” he said. “It’s probably impossible to close this gap completely with the current technology, but if we merge LLMs with function calling well enough, it could be possible to solve.”

Function calling – farming out arithmetic to a deterministic source – is one way around the poor math handling of models.

“Major AI companies like Google and OpenAI are already doing this by having the AI call a function to do the actual calculation,” explained Siuda. “The real headache happens with long, messy problems. The AI has to keep track of every little result at each stage, and it usually gets overwhelmed or confused.”
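To make the idea concrete, here is a rough sketch of the deterministic side of function calling: the model emits a structured request, and ordinary code does the arithmetic. Everything here is hypothetical, including the `calculate` tool name and the shape of the `call` dictionary. It does not reproduce any vendor's actual API, only the general pattern.

```python
import ast
import operator

# Arithmetic operators the hypothetical calculator tool is willing to evaluate.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def calculate(expression: str) -> float:
    """Evaluate a plain arithmetic expression deterministically, without eval()."""

    def _eval(node: ast.AST) -> float:
        # Plain numbers only; booleans are rejected even though they are ints.
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.operand))
        raise ValueError("unsupported expression")

    return _eval(ast.parse(expression, mode="eval").body)


def handle_tool_call(call: dict) -> float:
    """Dispatch a model-issued tool call (hypothetical format) to the calculator."""
    if call.get("name") != "calculate":
        raise ValueError(f"unknown tool: {call.get('name')!r}")
    return calculate(call["arguments"]["expression"])


# The model decides WHAT to compute; the code below decides the result.
request = {"name": "calculate", "arguments": {"expression": "(2 + 3) * 4"}}
print(handle_tool_call(request))  # 20
```

The same expression always yields the same result, which is the predictability Siuda attributes to calculators. The sketch leaves the hard part he describes untouched: the model still has to break a long problem into the right sequence of calls and keep track of the intermediate results.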

Another potential avenue for improvement might be teaching models to verify responses through formal proofs. As noted in Nature last November, Google’s DeepMind has developed an approach that scored a silver medal result on the International Mathematical Olympiad through reinforcement learning based on proofs developed with the Lean programming language and proof assistant.

But for the moment, trust no AI. ®
