1. The issue with LLMs

So you have your favourite chatbot, and you use it in your daily job to boost your productivity. It can translate text, write nice emails, tell jokes, etc. And then comes the day when your colleague comes to you and asks:

“Do you know the current exchange rate between USD and EUR? I wonder if I should sell my EUR…”

You ask your favourite chatbot, and the answer pops up:

I'm sorry, I cannot fulfill this request. I do not have access to real-time information, including financial data like exchange rates.

What is the problem here?

The problem is that you have stumbled upon one of the shortcomings of LLMs. Large Language Models (LLMs) are powerful at solving many types of problems, such as problem solving, text summarization, generation, etc.

However, they are constrained by the following limitations:

- They are frozen after training, leading to stale knowledge.

- They can't query or modify external data.

In the same way that we use search engines every day, read books and documents, or query databases, we would ideally want to provide this knowledge to our LLM to make it more efficient.

Fortunately, there is a way to do that: Tools and Agents.

Foundational models, despite their impressive text and image generation, remain constrained by their inability to interact with the outside world. Tools bridge this gap, empowering agents to interact with external data and services while unlocking a wider range of actions beyond that of the underlying model alone

(source: Google Agents whitepaper)

Using agents and tools, we could then, from our chat interface:

- retrieve data from our own documents

- read / send emails

- interact with internal databases

- perform real-time Google searches

- etc.

2. What are Agents, Tools and Chains?

An agent is an application which attempts to achieve a goal (or a task) by having at its disposal a set of tools and taking decisions based on its observations of the environment.

A good example of an agent could be you: if you need to compute a complex mathematical operation (goal), you could use a calculator (tool #1), or a programming language (tool #2). Maybe you would choose the calculator to do a simple addition, but choose tool #2 for more complex algorithms.

Agents are therefore made of:

- A model: the brain of our agent is the LLM. It will understand the query (the goal), and browse through its available tools to select the best one.

- One or more tools: these are functions, or APIs, that are responsible for performing a specific action (e.g. retrieving the current currency rate for USD vs EUR, adding numbers, etc.)

- An orchestration process: this is how the model will behave when asked to solve a task. It is a cognitive process that defines how the model will analyze the problem, refine inputs, choose a tool, etc. Examples of such processes are ReAct, CoT (Chain-of-Thought), and ToT (Tree-of-Thought).

In short, the model receives a goal, the orchestration process decides which tool (if any) to call, and the tool's result is fed back to the model to produce the answer.

Chains are somewhat different. Whereas agents can 'decide' by themselves what to do and which steps to take, chains are just a sequence of predefined steps. They can still rely on tools though, meaning that they can include a step in which they need to select from available tools. We'll cover that later.
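To make the difference between an agent and a chain concrete, here is a minimal, framework-free sketch. Everything in it is hypothetical: `toy_policy` is a keyword rule standing in for the LLM, and `add` / `multiply` stand in for real tools. It only illustrates that an agent picks its tool at run time, while a chain always runs the same steps.

```python
from typing import Callable, Dict, List


# Hypothetical tools: plain functions standing in for real APIs.
def add(a: float, b: float) -> float:
    return a + b


def multiply(a: float, b: float) -> float:
    return a * b


TOOLS: Dict[str, Callable[[float, float], float]] = {
    "add": add,
    "multiply": multiply,
}


def toy_policy(goal: str) -> str:
    """Stand-in for the LLM: pick a tool name from the wording of the goal."""
    return "multiply" if "product" in goal else "add"


def run_agent(goal: str, a: float, b: float) -> float:
    """Agent: the tool is chosen at run time by the policy."""
    tool_name = toy_policy(goal)
    return TOOLS[tool_name](a, b)


def run_chain(a: float, b: float) -> float:
    """Chain: the steps are fixed in advance (add b, then double)."""
    steps: List[Callable[[float], float]] = [
        lambda x: add(x, b),
        lambda x: multiply(x, 2),
    ]
    result = a
    for step in steps:
        result = step(result)
    return result
```

Calling `run_agent("product of two numbers", 2, 3)` routes to `multiply`, whereas `run_chain(2, 3)` always adds and then doubles, whatever the goal is.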

3. Creating a simple chat without Tools

To illustrate our point, we will first of all see how our LLM performs as-is, without any help.

Let's install the needed libraries:

vertexai==1.65.0

langchain==0.2.16

langchain-community==0.2.16

langchain-core==0.2.38

langchain-google-community==1.0.8

langchain-google-vertexai==1.0.6

And create our very simple chat using Google's Gemini LLM:

from vertexai.generative_models import (
    GenerativeModel,
    GenerationConfig,
    Part,
)

gemini_model = GenerativeModel(
    "gemini-1.5-flash",
    generation_config=GenerationConfig(temperature=0),
)
chat = gemini_model.start_chat()

If you run this simple chat and ask a question about the current exchange rate, you will probably get a similar answer:

response = chat.send_message("What is the current exchange rate for USD vs EUR ?")
answer = response.candidates[0].content.parts[0].text

--- OUTPUT ---
"I'm sorry, I cannot fulfill this request. I do not have access to real-time information, including financial data like exchange rates."

Not surprising, as we know LLMs do not have access to real-time data.

Let's add a tool for that. Our tool will be a little function that calls an API to retrieve exchange rate data in real time.

import requests

def get_exchange_rate_from_api(params):
    # Build the Frankfurter API URL from the two currency codes
    url = f"https://api.frankfurter.app/latest?from={params['currency_from']}&to={params['currency_to']}"
    print(url)
    api_response = requests.get(url)
    return api_response.text

# Try it out!
get_exchange_rate_from_api({'currency_from': 'USD', 'currency_to': 'EUR'})

--- OUTPUT ---
'{"amount":1.0,"base":"USD","date":"2024-11-20","rates":{"EUR":0.94679}}'
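The function returns the raw JSON text. As a small offline sketch of how that text could be turned into a number, the snippet below parses the sample payload shown in this article with the standard `json` module. The helper name `extract_rate` is an assumption made for illustration and is not part of the article's code.

```python
import json

# Sample payload copied from the API response shown in this article.
raw = '{"amount":1.0,"base":"USD","date":"2024-11-20","rates":{"EUR":0.94679}}'


def extract_rate(payload: str, currency_to: str) -> float:
    """Parse the JSON text and return the rate for the target currency."""
    data = json.loads(payload)
    return data["rates"][currency_to]


rate = extract_rate(raw, "EUR")
print(f"1 USD = {rate} EUR")
```

In the rest of the article the raw text is passed to the LLM as-is, which is enough for the model to phrase an answer; parsing only matters if your own code needs the value.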

Now that we know how our tool works, we would like to tell our chat LLM to use this function to answer our question. We will therefore create a mono-tool agent. To do that, we have several options, which I will list here:

- Use Google's Gemini chat API with Function Calling

- Use LangChain's API with Agents and Tools

Both have their advantages and disadvantages. The purpose of this article is also to show you the possibilities and let you decide which one you prefer.

4. Adding Tools to our chat: The Google way with Function Calling

There are basically two ways of creating a tool out of a function.

The first one is a “dictionary” approach where you specify the inputs and description of the function in the Tool. The important parameters are:

- Name of the function (be explicit)

- Description: be verbose here, as a solid and exhaustive description will help the LLM select the right tool

- Parameters: this is where you specify your arguments (type and description). Again, be verbose in the description of your arguments to help the LLM know how to pass values to your function

import requests
from vertexai.generative_models import FunctionDeclaration

get_exchange_rate_func = FunctionDeclaration(
    name="get_exchange_rate",
    description="Get the exchange rate for currencies between countries",
    parameters={
        "type": "object",
        "properties": {
            "currency_from": {
                "type": "string",
                "description": "The currency to convert from in ISO 4217 format"
            },
            "currency_to": {
                "type": "string",
                "description": "The currency to convert to in ISO 4217 format"
            }
        },
        "required": [
            "currency_from",
            "currency_to",
        ]
    },
)
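Because the parameters are declared as a JSON-schema-style dictionary, the same dictionary can also be used on your side to sanity-check the arguments a model proposes. The sketch below is a hypothetical helper (not part of the Vertex AI SDK) that reports missing required arguments and arguments that were never declared.

```python
from typing import Any, Dict, List

# Same JSON-schema-style dictionary as in the declaration above.
PARAMETERS: Dict[str, Any] = {
    "type": "object",
    "properties": {
        "currency_from": {"type": "string"},
        "currency_to": {"type": "string"},
    },
    "required": ["currency_from", "currency_to"],
}


def missing_required(schema: Dict[str, Any], args: Dict[str, Any]) -> List[str]:
    """Return the required argument names that are absent from args."""
    return [name for name in schema.get("required", []) if name not in args]


def undeclared(schema: Dict[str, Any], args: Dict[str, Any]) -> List[str]:
    """Return the argument names that the schema does not declare."""
    return sorted(name for name in args if name not in schema.get("properties", {}))
```

For instance, `undeclared(PARAMETERS, {"currency_from": "USD", "currency_to": "EUR", "currency_date": "latest"})` returns `["currency_date"]`, which is a cheap way to spot an argument the model invented.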

The second way of adding a tool using Google's SDK is with a from_func instantiation. This requires editing our original function to be more explicit, with a docstring, etc. Instead of being verbose in the Tool creation, we are being verbose in the function creation.

# Edit our function
def get_exchange_rate_from_api(currency_from: str, currency_to: str):
    """
    Get the exchange rate for currencies

    Args:
        currency_from (str): The currency to convert from in ISO 4217 format
        currency_to (str): The currency to convert to in ISO 4217 format
    """
    url = f"https://api.frankfurter.app/latest?from={currency_from}&to={currency_to}"
    api_response = requests.get(url)
    return api_response.text

# Create the tool
get_exchange_rate_func = FunctionDeclaration.from_func(
    get_exchange_rate_from_api
)
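To see why the signature and docstring matter in this second approach, here is a rough sketch of the kind of information that can be read from a Python function by introspection. This is not how the SDK implements `from_func`; `describe` and `get_exchange_rate_stub` are hypothetical names used only to illustrate the idea with the standard `inspect` module.

```python
import inspect
from typing import Any, Callable, Dict


def get_exchange_rate_stub(currency_from: str, currency_to: str) -> str:
    """Get the exchange rate for currencies."""
    return ""  # offline stub, no API call


def describe(func: Callable[..., Any]) -> Dict[str, Any]:
    """Collect the name, docstring and typed parameters of a function."""
    signature = inspect.signature(func)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {
            name: getattr(param.annotation, "__name__", str(param.annotation))
            for name, param in signature.parameters.items()
        },
    }


declaration = describe(get_exchange_rate_stub)
```

A function without type hints or docstring would yield an almost empty description, which is why this approach asks you to be verbose in the function itself.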

The next step is really about creating the tool. For that, we will add our FunctionDeclaration to a list to create our Tool object:

from vertexai.generative_models import Tool as VertexTool

tool = VertexTool(
    function_declarations=[
        get_exchange_rate_func,
        # add more functions here !
    ]
)

Let's now pass that to our chat and see if it can now answer our query about exchange rates! Remember, without tools, our chat answered that it does not have access to real-time information.

Let's try Google's Function Calling tool and see if this helps! First, let's send our query to the chat:

from vertexai.generative_models import GenerativeModel, GenerationConfig

gemini_model = GenerativeModel(
    "gemini-1.5-flash",
    generation_config=GenerationConfig(temperature=0),
    tools=[tool],  # We add the tool here!
)
chat = gemini_model.start_chat()

# Same question as in the tool-less chat
prompt = "What is the current exchange rate for USD vs EUR ?"
response = chat.send_message(prompt)

# Extract the function call response
response.candidates[0].content.parts[0].function_call

--- OUTPUT ---
"""
name: "get_exchange_rate"
args {
  fields {
    key: "currency_to"
    value {
      string_value: "EUR"
    }
  }
  fields {
    key: "currency_from"
    value {
      string_value: "USD"
    }
  }
  fields {
    key: "currency_date"
    value {
      string_value: "latest"
    }
  }
}
"""

The LLM correctly guessed it needed to use the get_exchange_rate function, and also correctly guessed the 2 parameters were USD and EUR.

But this is not enough. What we want now is to actually run this function to get our results!

# Mapping dictionary from function names to functions
function_handler = {
    "get_exchange_rate": get_exchange_rate_from_api,
}

# Extract the function call from the model response
function_call = response.candidates[0].content.parts[0].function_call

# Extract the function call name
function_name = function_call.name
print("#### Predicted function name")
print(function_name, "\n")

# Extract the function call parameters
params = {key: value for key, value in function_call.args.items()}
print("#### Predicted function parameters")
print(params, "\n")

# Call the function (this assumes the version that takes a single params dict)
function_api_response = function_handler[function_name](params)
print("#### API response")
print(function_api_response)

# Send the API result back to the chat so the model can phrase the answer
response = chat.send_message(
    Part.from_function_response(
        name=function_name,
        response={"content": function_api_response},
    ),
)
print("\n#### Final Answer")
print(response.candidates[0].content.parts[0].text)

--- OUTPUT ---
"""
#### Predicted function name
get_exchange_rate

#### Predicted function parameters
{'currency_from': 'USD', 'currency_date': 'latest', 'currency_to': 'EUR'}

#### API response
{"amount":1.0,"base":"USD","date":"2024-11-20","rates":{"EUR":0.94679}}

#### Final Answer
The current exchange rate for USD vs EUR is 0.94679. This means that 1 USD is equal to 0.94679 EUR.
"""

We can now see our chat is able to answer our question! It:

- Correctly guessed the function to call, get_exchange_rate

- Correctly assigned the parameters to call the function, {'currency_from': 'USD', 'currency_to': 'EUR'}

- Got results from the API

- And nicely formatted the answer to be human-readable!
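One detail in the output above is worth handling defensively: the model also proposed a `currency_date` argument that the function never declared. The following sketch, under the assumption that the keyword-argument version of the function is used, shows one possible way to dispatch a call while dropping unknown arguments. `fake_exchange_rate` is a hypothetical offline stand-in for the real API call.

```python
import inspect
from typing import Any, Callable, Dict


def fake_exchange_rate(currency_from: str, currency_to: str) -> str:
    """Hypothetical offline stand-in for the real API call."""
    return f"{currency_from}->{currency_to}"


FUNCTION_HANDLER: Dict[str, Callable[..., Any]] = {
    "get_exchange_rate": fake_exchange_rate,
}


def dispatch(name: str, args: Dict[str, Any]) -> Any:
    """Call the mapped function, keeping only arguments it actually accepts."""
    func = FUNCTION_HANDLER[name]
    accepted = inspect.signature(func).parameters
    kwargs = {key: value for key, value in args.items() if key in accepted}
    return func(**kwargs)


result = dispatch(
    "get_exchange_rate",
    {"currency_from": "USD", "currency_date": "latest", "currency_to": "EUR"},
)
```

Silently dropping arguments is a design choice; in a stricter setup you might prefer to raise an error or ask the model to retry.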

Let's now see another way of doing this with LangChain.

5. Adding Tools to our chat: The LangChain way with Agents

LangChain is a composable framework to build with LLMs. It is the orchestration framework for controllable agentic workflows.

Similar to what we did before the “Google” way, we will build tools the LangChain way. Let's begin by defining our functions. Same as for Google, we need to be exhaustive and verbose in the docstrings:

import requests
from langchain_core.tools import tool

@tool
def get_exchange_rate_from_api(currency_from: str, currency_to: str) -> str:
    """
    Return the exchange rate between currencies
    Args:
        currency_from: str
        currency_to: str
    """
    url = f"https://api.frankfurter.app/latest?from={currency_from}&to={currency_to}"
    api_response = requests.get(url)
    return api_response.text

In order to spice things up, I will add another tool which can list tables in a BigQuery dataset. Here is the code:

from google.cloud import bigquery

@tool
def list_tables(project: str, dataset_id: str) -> list:
    """
    Return a list of BigQuery tables
    Args:
        project: GCP project id
        dataset_id: ID of the dataset
    """
    client = bigquery.Client(project=project)
    try:
        response = client.list_tables(dataset_id)
        return [table.table_id for table in response]
    except Exception as e:
        # Return a message the LLM can relay to the user instead of crashing
        return f"The dataset {dataset_id} is not found in the {project} project, please specify the dataset and project"

And once done, we add our functions to our LangChain toolbox!

langchain_tool = [

list_tables,

get_exchange_rate_from_api

]

To build our agent, we will use the AgentExecutor object from LangChain. This object basically takes 3 components: an LLM, a prompt, and tools.

Let's first choose our LLM:

from langchain_google_vertexai import ChatVertexAI

gemini_llm = ChatVertexAI(model="gemini-1.5-flash")

Then we create a prompt to manage the conversation:

from langchain_core.prompts import ChatPromptTemplate

prompt = ChatPromptTemplate.from_messages(
    [
        ("system", "You are a helpful assistant"),
        ("human", "{input}"),
        # Placeholders fill up a **list** of messages
        ("placeholder", "{agent_scratchpad}"),
    ]
)

And finally, we create the AgentExecutor and run a query:

from langchain.agents import AgentExecutor, create_tool_calling_agent

agent = create_tool_calling_agent(gemini_llm, langchain_tool, prompt)
agent_executor = AgentExecutor(agent=agent, tools=langchain_tool)
agent_executor.invoke({
    "input": "Which tables are available in the thelook_ecommerce dataset ?"
})

--- OUTPUT ---
"""
{'input': 'Which tables are available in the thelook_ecommerce dataset ?',
 'output': 'The dataset `thelook_ecommerce` is not found in the `gcp-project-id` project.
 Please specify the correct dataset and project. \n'}
"""

Hmmm. Seems like the agent is missing one argument, or at least asking for more information… Let's reply by giving this information:

agent_executor.invoke({"input": "Project id is bigquery-public-data"})

--- OUTPUT ---
"""
{'input': 'Project id is bigquery-public-data',
 'output': 'OK. What else can I do for you? \n'}
"""

Well, it seems we are back to square one. The LLM has been told the project id but forgot about the question. Our agent seems to be lacking memory to remember previous questions and answers. Maybe we should think of…

6. Adding Memory to our Agent

Memory is another concept in Agents, which basically helps the system remember the conversation history and avoid endless loops like the one above. Think of memory as a notepad where the LLM keeps track of previous questions and answers to build context around the conversation.
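Stripped of any framework, the notepad idea can be sketched in a few lines: store every message, and prepend the stored messages to each new input before it is sent to the model. The names `Notepad` and `build_model_input` are hypothetical and only illustrate the mechanism; they are not LangChain classes.

```python
from typing import Dict, List

Message = Dict[str, str]


class Notepad:
    """Minimal conversation memory: an ordered list of messages."""

    def __init__(self) -> None:
        self.messages: List[Message] = []

    def add(self, role: str, content: str) -> None:
        self.messages.append({"role": role, "content": content})


def build_model_input(history: Notepad, new_input: str) -> List[Message]:
    """Prepend the stored history to the new user message."""
    return history.messages + [{"role": "human", "content": new_input}]


notepad = Notepad()

# First turn: the history is empty, the model only sees the question.
first = build_model_input(notepad, "Which tables are available in the thelook_ecommerce dataset ?")
notepad.add("human", first[-1]["content"])
notepad.add("ai", "Please specify the project.")

# Second turn: the model now sees the question, its own reply and the new input.
second = build_model_input(notepad, "Project id is bigquery-public-data")
```

With the history attached, the second turn carries the original question along with the project id, which is exactly what the memoryless agent was missing.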

We will modify our prompt (instructions) to the model to include memory:

from langchain_core.chat_history import InMemoryChatMessageHistory
from langchain_core.runnables.history import RunnableWithMessageHistory

# Different types of memory can be found in LangChain
memory = InMemoryChatMessageHistory(session_id="foo")

prompt = ChatPromptTemplate.from_messages(
    [
        ("system", "You are a helpful assistant."),
        # First put the history
        ("placeholder", "{chat_history}"),
        # Then the new input
        ("human", "{input}"),
        # Finally the scratchpad
        ("placeholder", "{agent_scratchpad}"),
    ]
)

# Remains unchanged
agent = create_tool_calling_agent(gemini_llm, langchain_tool, prompt)
agent_executor = AgentExecutor(agent=agent, tools=langchain_tool)

# We add the memory part and the chat history
agent_with_chat_history = RunnableWithMessageHistory(
    agent_executor,
    lambda session_id: memory,  # <-- NEW
    input_messages_key="input",
    history_messages_key="chat_history",  # <-- NEW
)

config = {"configurable": {"session_id": "foo"}}

We will now rerun our query from the beginning:

agent_with_chat_history.invoke({
    "input": "Which tables are available in the thelook_ecommerce dataset ?"
    },
    config
)

--- OUTPUT ---
"""
{'input': 'Which tables are available in the thelook_ecommerce dataset ?',
 'chat_history': [],
 'output': 'The dataset `thelook_ecommerce` is not found in the `gcp-project-id` project. Please specify the correct dataset and project. \n'}
"""

With an empty chat history, the model still asks for the project id. Pretty consistent with what we had before with a memoryless agent. Let's reply to the agent and add the missing information:

reply = "Project id is bigquery-public-data"
agent_with_chat_history.invoke({"input": reply}, config)

--- OUTPUT ---
"""
{'input': 'Project id is bigquery-public-data',
 'chat_history': [HumanMessage(content='Which tables are available in the thelook_ecommerce dataset ?'),
                  AIMessage(content='The dataset `thelook_ecommerce` is not found in the `gcp-project-id` project. Please specify the correct dataset and project. \n')],
 'output': 'The following tables are available in the `thelook_ecommerce` dataset:\n- distribution_centers\n- events\n- inventory_items\n- order_items\n- orders\n- products\n- users \n'}
"""

Notice how, in the output:

- The `chat_history` keeps track of the previous Q&A

- The output now returns the list of the tables!

'output': 'The following tables are available in the `thelook_ecommerce` dataset:\n- distribution_centers\n- events\n- inventory_items\n- order_items\n- orders\n- products\n- users \n'}

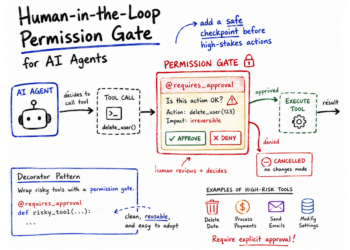

In some use cases, however, certain actions might require special attention because of their nature (e.g. deleting an entry in a database, editing information, sending an email, etc.). Full automation without control might lead to situations where the agent takes wrong decisions and causes damage.

One way to secure our workflows is to add a human-in-the-loop step.

7. Creating a Chain with a Human Validation step

A chain is somewhat different from an agent. Whereas the agent can decide to use or not to use tools, a chain is more static. It is a sequence of steps, in which we can still include a step where the LLM will choose from a set of tools.

To build chains in LangChain, we use LCEL.

LangChain Expression Language, or LCEL, is a declarative way to easily compose chains together. Chains in LangChain use the pipe `|` operator to indicate the order in which steps have to be executed, such as step 1 | step 2 | step 3, etc. The difference with Agents is that Chains will always follow these steps, whereas Agents can “decide” by themselves and are autonomous in their decision-making process.
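If the pipe syntax looks like magic, the toy class below shows one way such an operator can be built in plain Python by overloading `__or__`. It is a simplified illustration of the composition idea, not LangChain's implementation, and `Step` is a hypothetical name.

```python
from typing import Any, Callable


class Step:
    """Wrap a function so that steps can be composed with the | operator."""

    def __init__(self, func: Callable[[Any], Any]) -> None:
        self.func = func

    def __or__(self, other: "Step") -> "Step":
        # The output of this step becomes the input of the next one.
        return Step(lambda value: other.func(self.func(value)))

    def invoke(self, value: Any) -> Any:
        return self.func(value)


strip = Step(str.strip)
upper = Step(str.upper)
exclaim = Step(lambda text: text + "!")

# Steps always run in the declared order: strip, then upper, then exclaim.
pipeline = strip | upper | exclaim
result = pipeline.invoke("  hello ")
print(result)
```

The order of execution is fixed when the pipeline is declared, which is the static behaviour that distinguishes a chain from an agent.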

In our case, we will proceed as follows to build a simple prompt | llm chain.

# Define the prompt with memory
prompt = ChatPromptTemplate.from_messages(
    [
        ("system", "You are a helpful assistant."),
        # First put the history
        ("placeholder", "{chat_history}"),
        # Then the new input
        ("human", "{input}"),
        # Finally the scratchpad
        ("placeholder", "{agent_scratchpad}"),
    ]
)

# Bind the tools to the LLM
gemini_with_tools = gemini_llm.bind_tools(langchain_tool)

# Build the chain
chain = prompt | gemini_with_tools

Remember how in the previous step we passed an agent to our `RunnableWithMessageHistory`? Well, we will do the same here, but…

# With AgentExecutor
# agent = create_tool_calling_agent(gemini_llm, langchain_tool, prompt)
# agent_executor = AgentExecutor(agent=agent, tools=langchain_tool)
# agent_with_chat_history = RunnableWithMessageHistory(
#     agent_executor,
#     lambda session_id: memory,
#     input_messages_key="input",
#     history_messages_key="chat_history",
# )

config = {"configurable": {"session_id": "foo"}}

# With Chains
memory = InMemoryChatMessageHistory(session_id="foo")
chain_with_history = RunnableWithMessageHistory(
    chain,
    lambda session_id: memory,
    input_messages_key="input",
    history_messages_key="chat_history",
)

response = chain_with_history.invoke(
    {"input": "What is the current CHF EUR exchange rate ?"}, config)

--- OUTPUT ---
"""
content='',
additional_kwargs={
    'function_call': {
        'name': 'get_exchange_rate_from_api',
        'arguments': '{"currency_from": "CHF", "currency_to": "EUR"}'
    }
}
"""

Unlike the agent, a chain does not provide the answer unless we tell it to. In our case, it stopped at the step where the LLM returns the function that needs to be called.

We need to add an extra step to actually call the tool. Let's add another function to call the tools:

from langchain_core.messages import AIMessage

def call_tools(msg: AIMessage) -> list[dict]:
    """Simple sequential tool calling helper."""
    tool_map = {tool.name: tool for tool in langchain_tool}
    tool_calls = msg.tool_calls.copy()
    for tool_call in tool_calls:
        # Run the tool and attach its result to the call description
        tool_call["output"] = tool_map[tool_call["name"]].invoke(tool_call["args"])
    return tool_calls

chain = prompt | gemini_with_tools | call_tools  # <-- Extra step

chain_with_history = RunnableWithMessageHistory(
    chain,
    lambda session_id: memory,
    input_messages_key="input",
    history_messages_key="chat_history",
)

# Rerun the chain
chain_with_history.invoke({"input": "What is the current CHF EUR exchange rate ?"}, config)

We now get the following output, which shows the API has been successfully called:

[{'name': 'get_exchange_rate_from_api',

'args': {'currency_from': 'CHF', 'currency_to': 'EUR'},

'id': '81bc85ea-dfd4-4c01-85e8-f3ca592fff5b',

'type': 'tool_call',

'output': '{"amount":1.0,"base":"USD","date":"2024-11-20","rates":{"EUR":0.94679}}'

}]

Now that we understand how to chain steps, let's add our human-in-the-loop step! We want this step to check that the LLM has understood our request and will make the right call to an API. If the LLM has misunderstood the request or will use the function incorrectly, we can decide to interrupt the process.

import json

class NotApproved(Exception):
    """Raised when the user rejects the proposed tool calls."""

def human_approval(msg: AIMessage) -> AIMessage:
    """Responsible for passing through its input or raising an exception.

    Args:
        msg: output from the chat model

    Returns:
        msg: original output from the msg
    """
    for tool_call in msg.tool_calls:
        print(f"I want to use function [{tool_call.get('name')}] with the following parameters :")
        for k, v in tool_call.get('args').items():
            print(" {} = {}".format(k, v))

    print("")
    input_msg = (
        "Do you approve (Y|y)?\n\n"
        ">>>"
    )
    resp = input(input_msg)
    if resp.lower() not in ("yes", "y"):
        # Summarise the rejected calls for the error message
        tool_strs = "\n\n".join(json.dumps(tc, indent=2) for tc in msg.tool_calls)
        raise NotApproved(f"Tool invocations not approved:\n\n{tool_strs}")
    return msg

Next, add this step to the chain before the function call:

chain = prompt | gemini_with_tools | human_approval | call_tools

memory = InMemoryChatMessageHistory(session_id="foo")
chain_with_history = RunnableWithMessageHistory(
    chain,
    lambda session_id: memory,
    input_messages_key="input",
    history_messages_key="chat_history",
)

chain_with_history.invoke({"input": "What is the current CHF EUR exchange rate ?"}, config)

You will then be asked to confirm that the LLM understood correctly before the tool is called.

This human-in-the-loop step can be very helpful for critical workflows where a misinterpretation from the LLM could have dramatic consequences.
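An approval step that reads from the keyboard is hard to test automatically. One possible variation, sketched below with hypothetical names (`approval_gate`, `ask`), is to inject the function that asks the question, so that a test can simulate the human's answer.

```python
from typing import Any, Callable, Dict, List


class NotApproved(Exception):
    """Raised when a proposed tool call is rejected."""


def approval_gate(
    tool_calls: List[Dict[str, Any]],
    ask: Callable[[str], str] = input,
) -> List[Dict[str, Any]]:
    """Return the tool calls unchanged if every one of them is approved."""
    for call in tool_calls:
        answer = ask(f"Run {call['name']} with {call['args']}? (y/n) ")
        if answer.strip().lower() not in ("y", "yes"):
            raise NotApproved(f"Rejected: {call['name']}")
    return tool_calls


calls = [
    {
        "name": "get_exchange_rate_from_api",
        "args": {"currency_from": "CHF", "currency_to": "EUR"},
    }
]

# Simulate a user who approves, without touching the keyboard.
approved = approval_gate(calls, ask=lambda _: "y")
```

By default `ask` is the built-in `input`, so interactive behaviour is unchanged; passing `ask=lambda _: "n"` raises `NotApproved` and stops the workflow before any tool runs.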

8. Using search tools

Search engines are among the most convenient tools for retrieving information in real time. One way to use them is through GoogleSerperAPIWrapper (you will need to register to get an API key in order to use it), which provides a nice interface to query Google Search and get results quickly.

Luckily, LangChain already provides a tool for you, so we won't have to write the function ourselves.

Let's therefore try to ask a question about yesterday's event (Nov 20th) and see if our agent can answer. Our question is about Rafael Nadal's last official game (which he lost to van de Zandschulp).

agent_with_chat_history.invoke(
    {"input": "What was the result of Rafael Nadal's latest game ?"}, config)

--- OUTPUT ---
"""
{'input': "What was the result of Rafael Nadal's latest game ?",
 'chat_history': [],
 'output': "I do not have access to real-time information, including sports results. To get the latest information on Rafael Nadal's game, I recommend checking a reliable sports website or news source. \n"}
"""

Without being able to access Google Search, our model is unable to answer because this information was not available at the time it was trained.

Let's now add our Serper tool to our toolbox and see if our model can use Google Search to find the information:

from langchain_community.utilities import GoogleSerperAPIWrapper

# Create our new search tool here
search = GoogleSerperAPIWrapper(serper_api_key="...")

@tool
def google_search(query: str):
    """
    Perform a search on Google
    Args:
        query: the information to be retrieved with google search
    """
    return search.run(query)

# Add it to our existing tools
langchain_tool = [
    list_datasets,  # not defined in this article; assumed to be a tool similar to list_tables
    list_tables,
    get_exchange_rate_from_api,
    google_search
]

# Create agent
agent = create_tool_calling_agent(gemini_llm, langchain_tool, prompt)
agent_executor = AgentExecutor(agent=agent, tools=langchain_tool)

# Add memory
memory = InMemoryChatMessageHistory()
agent_with_chat_history = RunnableWithMessageHistory(
    agent_executor,
    lambda session_id: memory,
    input_messages_key="input",
    history_messages_key="chat_history",
)

And rerun our query:

agent_with_chat_history.invoke({"input": "What was the result of Rafael Nadal's latest game ?"}, config)

--- OUTPUT ---
"""
{'input': "What was the result of Rafael Nadal's latest game ?",
 'chat_history': [],
 'output': "Rafael Nadal's last match was a loss to Botic van de Zandschulp in the Davis Cup. Spain was eliminated by the Netherlands. \n"}
"""

Conclusion

LLMs alone often hit a blocker when it comes to using personal, corporate, private or real-time data. Indeed, such information is generally not available at training time. Agents and tools are a powerful way to augment these models by allowing them to interact with systems and APIs, and orchestrate workflows to boost productivity.