Introduction

a continuous variable for four different products. The machine learning pipeline was built in Databricks and has two major components.

- Feature preparation in SQL with serverless compute.

- Inference on an ensemble of several hundred models, using job clusters to keep control over compute power.

In our first attempt, a 420-core cluster spent nearly 10 hours processing just 18 partitions.

The objective is to tune the data flow to maximize cluster utilization and ensure scalability. Inference is done on four sets of ML models, one set per product. However, we will focus on how the data is stored, because that determines how much parallelism we can leverage for inference. We will not focus on the inner workings of the inference itself.

If there are too few file partitions, the cluster will take a long time scanning large files. At that point, unless the data is repartitioned (which means added network latency and data shuffling), you may also be running inference on a large set of rows in every partition, which again results in long run times.

However, the business has limited patience when it comes to shipping ML pipelines with a direct impact on the org, so tests are limited.

In this article, we will review our feature data landscape, then provide an overview of the ML inference, and present the results and discussion of the inference performance based on four dataset treatment scenarios:

- Partitioned table, no salt, no row limit in partitions (non-salted and Partitioned)

- Partitioned table, salted, with 1M row limit (salty and Partitioned)

- Liquid-clustered table, no salt, no row limit in partitions (non-salted and Liquid)

- Liquid-clustered table, salted, with 1M row limit (salty and liquid)

Data Landscape

The dataset contains the features that the set of ML models uses for inference. It has ~550M rows and contains four products identified by the attribute ProductLine:

- Product A: ~10.45M (1.9%)

- Product B: ~4.4M (0.8%)

- Product C: ~100M (17.6%)

- Product D: ~354M (79.7%)

It also has another low-cardinality attribute, AttrB, which contains only two distinct values and is used as a filter to extract subsets of the dataset for each part of the ML system.

Moreover, RunDate logs the date when the features were generated. The features are append-only. Finally, the dataset is read using the following query:

    SELECT
        Id,
        ProductLine,
        AttrB,
        AttrC,
        RunDate,
        {model_features}
    FROM
        catalog.schema.FeatureStore
    WHERE
        ProductLine = :product AND
        AttrB = :attributeB AND
        RunDate = :RunDate

Salt Implementation

The salt here is generated dynamically. Its purpose is to distribute the data according to the volumes, which means large products receive more buckets and smaller products receive fewer. For instance, Product D should receive around 80% of the buckets, given the proportions in the data landscape.

We do this so we can have predictable inference run times and maximize cluster utilization.
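Before the full PySpark version, the bucket arithmetic can be sketched in plain Python. This is only an illustration under stated assumptions: it uses the per-product shares and row counts from the data landscape (the pipeline itself computes shares per (ProductLine, AttrB) pair), and the names `bucket_count` and `products` are hypothetical.

```python
import random
from collections import Counter

MIN_BUCKETS = 10
MAX_BUCKETS = 1160


def bucket_count(share, row_count, min_buckets=MIN_BUCKETS, max_buckets=MAX_BUCKETS):
    """Scale the number of salt buckets with the share of rows.

    One extra bucket is added when the row count is not divisible by the base,
    mirroring the rule used in the pipeline code.
    """
    base = int(min_buckets + share * (max_buckets - min_buckets))
    return base + 1 if row_count % base != 0 else base


# Assumption: approximate shares and row counts taken from the data landscape.
products = {
    "A": (0.019, 10_450_000),
    "B": (0.008, 4_400_000),
    "C": (0.176, 100_000_000),
    "D": (0.797, 354_000_000),
}

buckets = {name: bucket_count(share, rows) for name, (share, rows) in products.items()}
total_buckets = sum(buckets.values())
for name, n in buckets.items():
    print(f"Product {name}: {n} buckets ({n / total_buckets:.1%} of all buckets)")

# Draw a uniform salt for a sample of Product D rows, as rand() * buckets does.
rng = random.Random(42)
sample_rows = 100_000
salts = Counter(int(rng.random() * buckets["D"]) for _ in range(sample_rows))
print("rows per bucket in the sample: min", min(salts.values()), "max", max(salts.values()))
```

The share of buckets that Product D receives lands close to its share of the rows, and a uniform salt keeps the bucket sizes in the sample within a narrow band, which is what makes the run times predictable.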

    from pyspark.sql import functions as F

    # Calculate the proportion of each (ProductLine, AttrB) pair based on row counts
    brand_cat_counts = df_demand_price_grid_load.groupBy(
        "ProductLine", "AttrB"
    ).count()
    total_count = df_demand_price_grid_load.count()
    brand_cat_percents = brand_cat_counts.withColumn(
        "percent", F.col("count") / F.lit(total_count)
    )

    # Collect percentages as a dict with string keys (this later determines
    # the number of salt buckets each product receives)
    brand_cat_percent_dict = {
        f"{row['ProductLine']}|{row['AttrB']}": row['percent']
        for row in brand_cat_percents.collect()
    }

    # Collect counts as a dict with string keys (this helps to add an extra
    # bucket if the count is not divisible by the number of buckets for the product)
    brand_cat_count_dict = {
        f"{row['ProductLine']}|{row['AttrB']}": row['count']
        for row in brand_cat_percents.collect()
    }

    # Helper to flatten key-value pairs for create_map
    def dict_to_map_expr(d):
        expr = []
        for k, v in d.items():
            expr.append(F.lit(k))
            expr.append(F.lit(v))
        return expr

    percent_case = F.create_map(*dict_to_map_expr(brand_cat_percent_dict))
    count_case = F.create_map(*dict_to_map_expr(brand_cat_count_dict))

    # Add the string key column
    df_demand_price_grid_load = df_demand_price_grid_load.withColumn(
        "product_cat_key",
        F.concat_ws("|", F.col("ProductLine"), F.col("AttrB"))
    )
    df_demand_price_grid_load = df_demand_price_grid_load.withColumn(
        "percent", percent_case.getItem(F.col("product_cat_key"))
    ).withColumn(
        "product_count", count_case.getItem(F.col("product_cat_key"))
    )

    # Set min/max buckets
    min_buckets = 10
    max_buckets = 1160

    # Calculate buckets per row based on the (ProductLine, AttrB) percentage
    df_demand_price_grid_load = df_demand_price_grid_load.withColumn(
        "buckets_base",
        (F.lit(min_buckets) + (F.col("percent") * (max_buckets - min_buckets))).cast("int")
    )

    # Add an extra bucket if product_count is not divisible by buckets_base
    df_demand_price_grid_load = df_demand_price_grid_load.withColumn(
        "buckets",
        F.when(
            (F.col("product_count") % F.col("buckets_base")) != 0,
            F.col("buckets_base") + 1
        ).otherwise(F.col("buckets_base"))
    )

    # Generate a salt per row based on the (ProductLine, AttrB) bucket count
    df_demand_price_grid_load = df_demand_price_grid_load.withColumn(
        "salt",
        (F.rand(seed=42) * F.col("buckets")).cast("int")
    )

    # Repartition using the core attributes and the salt column,
    # then drop the helper columns (including product_count)
    df_demand_price_grid_load = df_demand_price_grid_load.repartition(
        1200, "AttrB", "ProductLine", "salt"
    ).drop("product_cat_key", "percent", "product_count", "buckets_base", "buckets", "salt")

Finally, we save our dataset to the feature table and add a maximum number of rows per partition. This prevents Spark from producing partitions with too many rows, which it can do even when we have already computed the salt.

Why do we enforce 1M rows? The primary focus is on model inference time, not so much on file size. After a few tests with 1M, 1.5M, and 2M, the first yielded the best performance in our case. Again, this project is very budget- and time-constrained, so we have to make the most of our resources.
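As a rough illustration of what the row limit does, the sketch below estimates how many files a single write task produces for one partition directory once a cap on records per file is in place. The helper name `files_written` is hypothetical, and the estimate assumes the cap is the only thing that splits a task's output.

```python
import math


def files_written(rows_in_task: int, max_records_per_file: int = 1_000_000) -> int:
    """Number of files needed so that no file exceeds the record cap."""
    return math.ceil(rows_in_task / max_records_per_file)


# A ~40M-row partition (the unsalted average for Product D) is split into 40 files.
print(files_written(40_000_000))  # 40
# A 2.5M-row task is split into 3 files, the last one only partly filled.
print(files_written(2_500_000))   # 3
```

Each resulting file can then be read as its own unit of work at inference time, which is why the cap matters more for run time here than for file size.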

    (
        df_demand_price_grid_load.write
        .mode("overwrite")
        .option("replaceWhere", f"RunDate = '{params['RunDate']}'")
        .option("maxRecordsPerFile", 1_000_000)
        .partitionBy("RunDate", "AttrB", "ProductLine")
        .saveAsTable(f"{params['catalog_revauto']}.{params['schema_revenueautomation']}.demand_features_price_grid")
    )

Why not just rely on Spark’s Adaptive Query Execution (AQE)?

Recall that the primary focus is on inference times, not on metrics tuned for standard Spark SQL queries, such as file size. Using only AQE was actually our initial attempt. As you will see in the results, the run times were very undesirable and did not maximize cluster utilization given our data proportions.

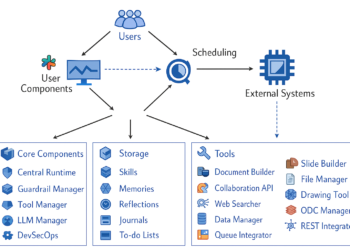

Machine Learning inference

There is a pipeline with four tasks, one per product. Every task performs the following general steps:

- Loads the features for the corresponding product

- Loads the subset of ML models for the corresponding product

- Performs inference on one half of the subset, sliced by AttrB

- Performs inference on the other half, sliced by AttrB

- Saves data to the results table
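The control flow of one such task can be sketched as follows. This is a minimal outline only, not the actual pipeline code: `run_product_task` and the injected callables (`load_features`, `load_models`, `predict`, `save_results`) are hypothetical names standing in for steps the article does not show.

```python
from typing import Any, Callable, List, Sequence


def run_product_task(
    product: str,
    attr_b_values: Sequence[Any],
    load_features: Callable[[str, Any], Any],
    load_models: Callable[[str], Any],
    predict: Callable[[Any, Any], Any],
    save_results: Callable[[str, List[Any]], None],
) -> None:
    """Run inference for one product, one AttrB slice at a time, then save."""
    models = load_models(product)
    results = []
    for attr_b in attr_b_values:  # AttrB has two distinct values, so two stages
        features = load_features(product, attr_b)
        results.append(predict(models, features))
    save_results(product, results)
```

Passing the steps in as callables keeps the outline independent of how features are read or how the models are stored.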

We will focus on one of the inference stages so as not to overwhelm this article with numbers, although the other stage is very similar in structure and results. Moreover, you can see the DAG for the inference stage under evaluation in Fig. 2.

It looks very straightforward, but the run times can vary depending on how your data is stored and the size of your cluster.

Cluster configuration

For the inference stage we are analyzing, there is one cluster per product, tuned for the infrastructure limitations of the project and for the distribution of the data:

- Product A: 35 workers (Standard_DS14v2, 420 cores)

- Product B: 5 workers (Standard_DS14v2, 70 cores)

- Product C: 1 worker (Standard_DS14v2, 14 cores)

- Product D: 1 worker (Standard_DS14v2, 14 cores)

In addition, Adaptive Query Execution (AQE) is enabled by default, which lets Spark decide how best to save the data given the context you provide.

Results and discussion

For each scenario, you will see an overview of the number of file partitions per product and the average number of rows per partition, which indicates how many rows the ML system will run inference on per Spark task. Furthermore, we present Spark UI metrics to examine run-time performance and the distribution of data at inference time. We will show the Spark UI portion only for Product D, the largest product, to avoid an excess of information. In addition, depending on the scenario, inference on Product D becomes a run-time bottleneck, which is another reason why it is the primary focus of the results.

Non-Salted and Partitioned

You can see in Fig. 3 that the average file partition has tens of millions of rows, which means considerable run time for a single executor. The largest on average is Product C, with more than 45M rows in a single partition. The smallest is Product B, with roughly 12M rows on average.

Fig. 4 depicts the number of partitions per product, with a total of 26 across all products. Looking at Product D, 18 partitions fall far short of the 420 cores we have available and, on average, every partition will perform inference on ~40M rows.
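To put the gap between partitions and cores into numbers, here is a small back-of-the-envelope sketch. It assumes one task per partition, one core per task, and equal task durations, so it gives an upper bound on slot usage rather than a measurement; the helper name `slot_utilization` is hypothetical.

```python
import math


def slot_utilization(num_tasks: int, num_cores: int) -> float:
    """Fraction of core slots kept busy across all scheduling waves."""
    waves = math.ceil(num_tasks / num_cores)
    return num_tasks / (waves * num_cores)


# 18 partitions on a 420-core cluster: almost every core sits idle.
print(f"{slot_utilization(18, 420):.1%}")   # 4.3%
# 360 tasks (the salted run reported later in this article) on the same cluster.
print(f"{slot_utilization(360, 420):.1%}")  # 85.7%
```

With 18 tasks, adding cores cannot help: the run time is bounded by the slowest ~40M-row partition.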

Take a look at Fig. 5. In total, the cluster spent 9.9 hours and the job still was not complete; we had to kill it because it was becoming expensive and blocking other people’s tests.

From the summary statistics in Fig. 6 for the tasks that did finish, we can see that there was heavy skew in the partitions for Product D. The maximum input size was ~56M and the runtime was 7.8h.

Non-salted and Liquid

In this scenario, we observe very similar results in terms of the average number of rows per file partition and the number of partitions per product, as seen in Fig. 7 and Fig. 8, respectively.

Product D has 19 file partitions, still far short of 420 cores.

We could already anticipate that this experiment was going to be very expensive, so I decided to skip the inference test for this scenario. Again, in an ideal situation we would carry it through, but there is a backlog of tickets on my board.

Salty and Partitioned

After applying the salting and repartition process, we end up with ~2.5M average records per partition for products A and B, and ~1M for products C and D, as depicted in Fig. 9.

Moreover, we can see in Fig. 10 that the number of file partitions increased to roughly 860 for Product D, which gives 430 for each inference stage.

This results in a run time of 3h for inference on Product D with 360 tasks, as seen in Fig. 11.

Looking at the summary statistics in Fig. 12, the distribution looks balanced, with run times around 1.7 but a maximum task taking 3h, which is worth investigating further in the future.

One great benefit is that the salt distributes the data according to the proportions of the products. If we had more resources available, we could increase the number of shuffle partitions in repartition() and add workers according to the proportions of the data. This ensures that our process scales predictably.
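One way to reason about adding workers in proportion to the data is sketched below. It is a hypothetical allocation rule, not something the pipeline currently does: every product gets one worker, and the remaining workers are split by data share using largest-remainder rounding. The 42 workers in the example are simply the sum of the workers listed in the cluster configuration, and the shares come from the data landscape.

```python
import math
from typing import Dict


def allocate_workers(shares: Dict[str, float], total_workers: int) -> Dict[str, int]:
    """Give each product one worker, then split the rest by data share."""
    if total_workers < len(shares):
        raise ValueError("need at least one worker per product")
    spare = total_workers - len(shares)
    total_share = sum(shares.values())
    raw = {p: spare * s / total_share for p, s in shares.items()}
    alloc = {p: 1 + math.floor(r) for p, r in raw.items()}
    # Hand out workers lost to rounding, largest fractional part first.
    leftover = total_workers - sum(alloc.values())
    by_fraction = sorted(raw, key=lambda p: raw[p] - math.floor(raw[p]), reverse=True)
    for p in by_fraction[:leftover]:
        alloc[p] += 1
    return alloc


shares = {"A": 0.019, "B": 0.008, "C": 0.176, "D": 0.797}
print(allocate_workers(shares, 42))
```

Because the salt buckets follow the same proportions, scaling the worker count this way keeps the number of rows per core roughly constant across products.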

Salty and Liquid

This scenario combines the two strongest levers we have explored so far: salting to control file size and parallelism, and liquid clustering to keep related data colocated without rigid partition boundaries.

After applying the same salting strategy and a 1M row limit per partition, the liquid-clustered table shows a very similar average partition size to the salted and partitioned case, as shown in Fig. 13. Products C and D remain close to the 1M-row target, while products A and B settle slightly above that threshold.

However, the main difference appears in how these partitions are distributed and consumed by Spark. As shown in Fig. 14, Product D again reaches a high number of file partitions, providing enough parallelism to saturate the available cores during inference.

Unlike the partitioned counterpart, liquid clustering allows Spark to adapt the file layout over time while still benefiting from the salt. This results in a more even distribution of work across executors, with fewer extreme outliers in both input size and task duration.

From the summary statistics in Fig. 15, we observe that the majority of tasks complete within a tight runtime window, and the maximum task duration is lower than in the salty and partitioned scenario. This indicates reduced skew and better load balancing across the cluster.

An important side effect is that liquid clustering preserves data locality for the filtered columns without imposing strict partition boundaries. This allows Spark to still benefit from data skipping, while the salt ensures that no single executor is overwhelmed with tens of millions of rows.

Overall, salty and liquid emerges as the most robust setup: it maximizes parallelism, minimizes skew, and reduces operational risk when inference workloads grow or cluster configurations change.

Key Takeaways

- Inference scalability is often limited by data layout, not model complexity. Poorly sized file partitions can leave hundreds of cores idle while a few executors process tens of millions of rows.

- Partitioning alone is not enough for large-scale inference. Without controlling file size, partitioned tables can still produce huge partitions that lead to long-running, skewed tasks.

- Salting is an effective tool to unlock parallelism. Introducing a salt key and enforcing a row limit per partition dramatically increases the number of runnable tasks and stabilizes run times.

- Liquid clustering complements salting by reducing skew without rigid boundaries. It allows Spark to adapt the file layout over time, making the system more resilient as data grows.