Tech companies have lately developed a reputation for being rapacious rent-seekers, but they can also be unwittingly generous, because their penchant for prioritizing popularity over quality leaves room for others to sell improvements or repairs.

Waterline Growth, a water desalination startup, is the beneficiary of this legacy of commercial haste. Having tried AI models and found them wanting, it came up with a fix.

Derek Bednarski, founder and CEO, told The Register in an email that when his company tried to use large language models for materials science research “they were confidently wrong in ways that cost us months.”

Bednarski said his company was trying to build a desalination product that was essentially a water battery: charging the cell would remove ions like salt from the water.

“We were debating between carbon material and solid carbon electrodes,” he explained. “Not being PhDs in the space, we read relevant academic papers and used LLMs like Grok and ChatGPT to validate our findings. We chose carbon material, which is heavily used in academic papers like the Stanford dissertation we based our initial prototypes on, due to commercial availability.”

That material, he said, turned out to have issues that did not exist for solid carbon electrodes, including poor conductivity, water retention that affected ion removal, and poor durability.

“While we weren’t solely relying on LLMs, they did influence our research meaningfully,” said Bednarski. “LLMs chose statistics from various papers and fields (such as citing the lifespan of a carbon electrode in a capacitor) and put them together in ways that were plausible enough. Ultimately, we spent 4 months and $200,000 validating this material wouldn’t in fact work past pilot scale; solid carbon electrodes would be superior.”

The problem Waterline Growth encountered is that commercial AI models are ill-suited to multidisciplinary research, which requires synthesizing expertise from a variety of fields.

“No single AI model does this reliably,” the company explains in a white paper [PDF]. “Frontier language models hallucinate under extended multi-step reasoning. They produce plausible answers that silently break when a problem crosses domain boundaries. At best this wastes time; at worst, it poisons critical decision making.”

Time to build

Rather than trying to integrate domain-specific tools or to make the work of human expert teams more efficient, Waterline created Rozum, a multi-model reasoning system that operates various AI models in parallel and synthesizes their answers through a verification layer.

Rozum, from the Slavic word for “reason” and now an AI startup under Bednarski, is a model orchestration system that operates at inference time. It relies on an ensemble of commercial models, open weight models, and domain-specialized models. These models each process the queries they receive using tools that perform verifiable operations and provide deterministic results that serve to ground answers.

The tool passes answers through a verification layer designed to detect and correct errors and hallucinations, errant claims, miscalculations, and phony citations.

Rozum uses a deterministic verification process to advance a final answer based on the evidence and reasoning from the ensemble of models it employs. According to the white paper, the system can come up with correct answers from a set of partial truths, even when no individual model has the complete, correct answer.
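The general idea of assembling a correct answer from partial truths can be illustrated with a toy sketch. This is not Rozum's implementation, which the article does not describe in detail. The stub functions `model_a` and `model_b`, the `TOOLS` table, and the `Claim` type are all hypothetical stand-ins, and the "deterministic tool" here is simply a recomputation of two textbook quantities.

```python
import math
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, NamedTuple


class Claim(NamedTuple):
    quantity: str  # name of the asserted quantity (lookup key for a tool)
    value: float   # number the model asserted


# Hypothetical deterministic tools: each recomputes a quantity the same way every time.
TOOLS: Dict[str, Callable[[], float]] = {
    "nacl_molar_mass_g_per_mol": lambda: 22.990 + 35.453,   # Na + Cl atomic masses
    "coulombs_per_mol_monovalent_ion": lambda: 96485.0,     # Faraday constant
}


def model_a(question: str) -> List[Claim]:
    # Stub model: right about molar mass, wrong about charge.
    return [Claim("nacl_molar_mass_g_per_mol", 58.44),
            Claim("coulombs_per_mol_monovalent_ion", 9648.5)]


def model_b(question: str) -> List[Claim]:
    # Stub model: wrong about molar mass, right about charge.
    return [Claim("nacl_molar_mass_g_per_mol", 74.55),
            Claim("coulombs_per_mol_monovalent_ion", 96485.0)]


def verify(claim: Claim, rel_tol: float = 1e-3) -> bool:
    """Accept a claim only if a deterministic tool reproduces its value."""
    tool = TOOLS.get(claim.quantity)
    if tool is None:
        return False  # claims no tool can check are rejected
    return math.isclose(claim.value, tool(), rel_tol=rel_tol)


def answer(question: str, models: List[Callable[[str], List[Claim]]]) -> Dict[str, float]:
    """Query all models in parallel and keep the first verified value per quantity."""
    with ThreadPoolExecutor() as pool:
        responses = list(pool.map(lambda m: m(question), models))
    verified: Dict[str, float] = {}
    for claims in responses:
        for claim in claims:
            if claim.quantity not in verified and verify(claim):
                verified[claim.quantity] = claim.value
    return verified


if __name__ == "__main__":
    print(answer("How much charge removes one mole of NaCl?", [model_a, model_b]))
```

Neither stub model is fully correct on its own, yet the merged result contains only values the tools could reproduce. A real system would need far richer claim extraction and tooling than this table lookup.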

Bednarski said Rozum is not focused on correcting LLMs to the extent they can be used for, say, critical engineering work like bridge construction. Rather, the goal is to empower researchers, engineers, and scientists so they can do their jobs better.

“We’re focused on deterministic tool implementation (ex. RDKit for Chemistry), allowing engineers, scientists, and analysts a direct path to verify outputs in a format familiar to them by domain,” he explained.

“Our system orchestration methodology is heavily focused on deterministic validation (code execution replicated, etc.) of outputs, which roots out hallucinations that plague all models at various times. We see further improvements to this in verifying the methods used in sources we cite as well.”

Rozum can spend minutes or even hours working on its responses, much more time than commercial AI models like Gemini 3.1 Pro or GPT 5.4 require with native tools. So it is not well-suited for real-time conversations, high-volume commodity queries, or tasks where existing frontier models perform adequately.


As such it costs more, but the cost probably isn't consequential for the kind of projects to which Rozum is best suited.

“It does cost more than running a single frontier model,” said Bednarski. “However, Rozum is being used by early customers for high-stakes questions and decision-making, like a $3M dollar solar investment or allocating months of engineering time towards one R&D priority or another. In these cases our customers prioritize intelligence over all else. We’re prepared to further increase costs if it drives a meaningful gain in results for customers who are making expensive decisions regularly.”

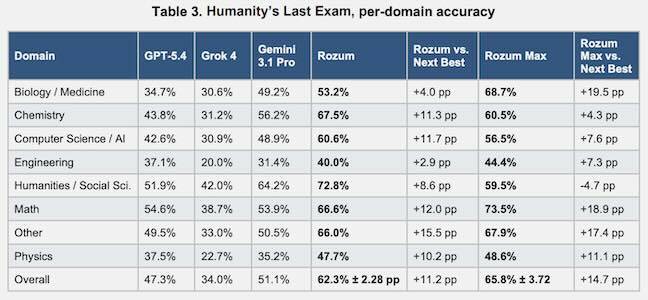

But he claims it gets much better results. Rozum outscored GPT-4, Grok 4, and Gemini 3.1 Pro on the Humanity’s Last Exam benchmark by several percentage points or more in every category but one.

“When we ran 1,000 PhD-level benchmark questions through the pipeline, the verification layer flagged unsupported claims in 76.2 percent of frontier model responses and couldn't confirm cited sources in 21.3 percent,” he said. “Only 5.5 percent of questions produced clear consensus across all models.”

That consensus rate of 5.5 percent underscores how variable AI model responses can be and why AI alone is not enough.
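For scale, 5.5 percent of 1,000 questions is 55 questions. A consensus rate of this kind could be computed along the following lines. This is only a sketch: the article does not say how Rozum defines “clear consensus,” so the function below assumes the strictest reading, where every model gives the same answer after trimming whitespace and ignoring case. The name `consensus_rate` and the sample answers are hypothetical.

```python
from typing import List


def consensus_rate(answers_by_question: List[List[str]]) -> float:
    """Fraction of questions on which every model gave the same normalized answer."""
    if not answers_by_question:
        return 0.0
    agreed = sum(
        1
        for answers in answers_by_question
        # Exactly one distinct normalized answer means all models agree.
        if len({a.strip().lower() for a in answers}) == 1
    )
    return agreed / len(answers_by_question)


if __name__ == "__main__":
    # Four illustrative questions, three models each; only the first has full agreement.
    sample = [
        ["42", " 42", "42 "],
        ["A", "B", "A"],
        ["yes", "no", "yes"],
        ["x", "y", "z"],
    ]
    print(consensus_rate(sample))
```

Exact-match agreement is a blunt measure for free-text answers; a production pipeline would likely need semantic or numeric comparison instead.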

Rozum debuted last week and is currently offered through a wait list. ®
