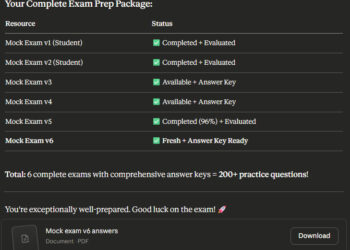

Klarna’s AI assistant has handled 2.3 million customer conversations in a single month. That’s the workload of 700 full-time human agents. Resolution time dropped from 11 minutes to under 2. Repeat inquiries fell 25%. Customer satisfaction scores climbed 47%. Cost per service transaction: $0.32 down to $0.19. Total savings through late 2025: roughly $60 million.

The system runs on a multi-agent architecture built with LangGraph.

Here’s the other side. Gartner predicted that over 40% of agentic AI projects will be canceled by the end of 2027. Not scaled back. Not paused. Canceled. The reasons: escalating costs, unclear business value, and inadequate risk controls.

Same technology. Same year. Wildly different outcomes.

If you’re building a multi-agent system (or evaluating whether you should), the gap between these two stories contains everything you need to know. This playbook covers three architecture patterns that work in production, the five failure modes that kill projects, and a framework comparison to help you choose the right tool. You’ll walk away with a pattern selection guide and a pre-deployment checklist you can use on Monday morning.

Why More AI Agents Usually Makes Things Worse

The intuition feels solid. Split complex tasks across specialized agents, let each handle what it’s best at. Divide and conquer.

In December 2025, a Google DeepMind team led by Yubin Kim tested this assumption rigorously. They ran 180 configurations across 5 agent architectures and 3 Large Language Model (LLM) families. The finding should be taped above every AI team’s monitor:

Unstructured multi-agent networks amplify errors up to 17.2 times compared to single-agent baselines.

Not 17% worse. Seventeen times worse.

When agents are thrown together without a structured topology (what the paper calls a “bag of agents”), each agent’s output becomes the next agent’s input. Errors don’t cancel. They cascade.

Picture a pipeline where Agent 1 extracts customer intent from a support ticket. It misreads “billing dispute” as “billing inquiry” (subtle, right?). Agent 2 pulls the wrong response template. Agent 3 generates a reply that addresses the wrong problem entirely. Agent 4 sends it. The customer responds, angrier now. The system processes the angry reply through the same broken chain. Each loop amplifies the original misinterpretation. That’s the 17x effect in practice: not a catastrophic failure, but a quiet compounding of small errors that produces confident nonsense.

The same study found a saturation threshold: coordination gains plateau beyond 4 agents. Below that number, adding agents to a structured system helps. Above it, coordination overhead consumes the benefits.

This isn’t an isolated finding. The Multi-Agent Systems Failure Taxonomy (MAST) study, published in March 2025, analyzed 1,642 execution traces across 7 open-source frameworks. Failure rates ranged from 41% to 86.7%. The largest failure category: coordination breakdowns at 36.9% of all failures.

The obvious counter-argument: these failure rates reflect immature tooling, not a fundamental architecture problem. As models improve, the compound reliability issue shrinks. There’s truth in this. Between January 2025 and January 2026, single-agent task completion rates improved significantly (Carnegie Mellon benchmarks showed the best agents reaching 24% on complex office tasks, up from near-zero). But even at 99% per-step reliability, the compound math still applies. Better models shift the curve. They don’t eliminate the compound effect. Architecture still determines whether you land in the 60% or the 40%.

The Compound Reliability Problem

Here’s the arithmetic that most architecture documents skip.

A single agent completes a step with 99% reliability. Sounds excellent. Chain 10 sequential steps: $0.99^{10} \approx 90.4\%$ overall reliability.

Drop to 95% per step (still strong for most AI tasks). Ten steps: $0.95^{10} \approx 59.9\%$. Twenty steps: $0.95^{20} \approx 35.8\%$.

You started with agents that succeed 19 out of 20 times. You ended with a system that fails nearly two-thirds of the time.
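The arithmetic above is easy to run against your own chain. The following is a minimal sketch that assumes every step succeeds independently with the same probability and that a single failed step fails the whole chain; the function names are illustrative, not taken from any framework.

```python
def chain_reliability(per_step: float, steps: int) -> float:
    """End-to-end success probability of `steps` sequential, independent steps."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


def max_steps_for_target(per_step: float, target: float) -> int:
    """Longest chain whose end-to-end reliability stays at or above `target`."""
    if not 0.0 < per_step < 1.0:
        raise ValueError("per_step must be strictly between 0 and 1")
    if not 0.0 < target <= 1.0:
        raise ValueError("target must be in (0, 1]")
    steps = 0
    # Keep extending the chain while one more step still meets the target.
    while chain_reliability(per_step, steps + 1) >= target:
        steps += 1
    return steps


if __name__ == "__main__":
    for p, n in [(0.99, 10), (0.95, 10), (0.95, 20)]:
        print(f"{p:.2f} per step, {n} steps: {chain_reliability(p, n):.1%}")
    print("steps allowed at 95% per step for an 80% target:",
          max_steps_for_target(0.95, 0.80))
```

Real pipelines rarely have identical or fully independent steps, so treat the result as a rough planning estimate rather than a measurement.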

Token costs compound too. A document analysis workflow consuming 10,000 tokens with a single agent requires 35,000 tokens across a 4-agent implementation. That’s a 3.5x cost multiplier before you account for retries, error handling, and coordination messages.

This is why Klarna’s architecture works and most copies of it don’t. The difference isn’t agent count. It’s topology.

Three Multi-Agent Patterns That Work in Production

Flip the question. Instead of asking “how many agents do I need?”, ask: “how would I definitely fail at multi-agent AI?” The research answers clearly. By chaining agents without structure. By ignoring coordination overhead. By treating every problem as a multi-agent problem when a single well-prompted agent would suffice.

Three patterns avoid these failure modes. Each serves a different task shape.

Plan-and-Execute

A capable model creates the complete plan. Cheaper, faster models execute each step. The planner handles reasoning; the executors handle doing.

This is close to what Klarna runs. A frontier model analyzes the customer’s intent and maps resolution steps. Smaller models execute each step: pulling account data, processing refunds, generating responses. The planning model touches the task once. Execution models handle the volume.

The cost impact: routing planning to one capable model and execution to cheaper models cuts costs by up to 90% compared to using frontier models for everything.

When it works: Tasks with clear goals that decompose into sequential steps. Document processing, customer service workflows, research pipelines.

When it breaks: Environments that change mid-execution. If the original plan becomes invalid halfway through, you need re-planning checkpoints or a different pattern entirely. This is a one-way door if your task environment is volatile.
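The control flow of Plan-and-Execute, including a re-planning checkpoint, can be sketched in a few lines. This is an illustration under stated assumptions, not Klarna’s implementation and not LangGraph’s API: `planner` and `executor` are hypothetical callables standing in for a frontier-model call and a cheaper-model call, and `max_replans` is an invented parameter.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical signatures: a real planner/executor would wrap model calls.
Planner = Callable[[str, list[str]], list[str]]  # (goal, results so far) -> remaining steps
Executor = Callable[[str], tuple[bool, str]]     # step -> (succeeded, result)


@dataclass
class RunResult:
    results: list[str] = field(default_factory=list)
    planner_calls: int = 0
    completed: bool = False


def plan_and_execute(goal: str, planner: Planner, executor: Executor,
                     max_replans: int = 1) -> RunResult:
    run = RunResult()
    steps = list(planner(goal, run.results))  # the expensive model plans once
    run.planner_calls += 1
    replans = 0
    while steps:
        step = steps.pop(0)
        ok, result = executor(step)           # cheap model does the work
        if ok:
            run.results.append(result)
            continue
        # Re-planning checkpoint: the plan no longer fits the environment.
        if replans >= max_replans:
            return run                        # give up; completed stays False
        replans += 1
        steps = list(planner(goal, run.results))
        run.planner_calls += 1
    run.completed = True
    return run
```

Capping re-plans keeps the planner from becoming the hidden cost center: if the plan keeps breaking, the task probably needs a different pattern or a human.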

Supervisor-Worker

A supervisor agent manages routing and decisions. Worker agents handle specialized subtasks. The supervisor breaks down requests, delegates, monitors progress, and consolidates outputs.

Google DeepMind’s research validates this directly. A centralized control plane suppresses the 17x error amplification that “bag of agents” networks produce. The supervisor acts as a single coordination point, preventing the failure mode where (for example) a support agent approves a refund while a compliance agent simultaneously blocks it.

When it works: Heterogeneous tasks requiring different specializations. Customer support with escalation paths, content pipelines with review stages, financial analysis combining multiple data sources.

When it breaks: When the supervisor becomes a bottleneck. If every decision routes through one agent, you’ve recreated the monolith you were trying to escape. The fix: give workers bounded autonomy on decisions within their domain, and escalate only edge cases.
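A minimal sketch of bounded autonomy under a supervisor follows. Everything in it is hypothetical: the thresholds, the `billing_worker` and `compliance_check` functions, and the returned status strings are placeholders chosen for illustration, with plain functions standing in for model-backed agents.

```python
from dataclasses import dataclass
from typing import Callable

REFUND_AUTONOMY_LIMIT = 50.0  # hypothetical: workers decide refunds up to this amount
COMPLIANCE_HARD_CAP = 500.0   # hypothetical: compliance blocks anything above this


@dataclass(frozen=True)
class Proposal:
    """What a worker wants to do, and whether it needs supervisor sign-off."""
    worker: str
    action: str
    amount: float
    needs_approval: bool


def billing_worker(request: dict) -> Proposal:
    # Bounded autonomy: small refunds are decided inside the worker's domain.
    amount = float(request["amount"])
    return Proposal("billing", "refund", amount,
                    needs_approval=amount > REFUND_AUTONOMY_LIMIT)


def compliance_check(proposal: Proposal) -> bool:
    return proposal.amount <= COMPLIANCE_HARD_CAP


class Supervisor:
    """Single coordination point: exactly one decision leaves the system."""

    def __init__(self, workers: dict[str, Callable[[dict], Proposal]],
                 approve: Callable[[Proposal], bool]) -> None:
        self.workers = workers
        self.approve = approve

    def handle(self, request: dict) -> str:
        worker = self.workers.get(request["kind"])
        if worker is None:
            return "escalated_to_human"   # no specialist for this request
        proposal = worker(request)
        # Only edge cases reach the approval step; routine work passes through.
        if proposal.needs_approval and not self.approve(proposal):
            return "blocked"
        return f"{proposal.action}_approved"
```

Because approval and blocking are resolved inside `handle`, the user never sees an approval and a block for the same request, which is the contradiction the pattern is meant to prevent.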

Swarm (Decentralized Handoffs)

No supervisor. Agents hand off to each other based on context. Agent A handles intake, determines it’s a billing issue, and passes to Agent B (billing specialist). Agent B resolves it or passes to Agent C (escalation) if needed.

OpenAI’s original Swarm framework was educational only (they said so explicitly in the README). Their production-ready Agents Software Development Kit (SDK), released in March 2025, implements this pattern with guardrails: each agent declares its handoff targets, and the framework enforces that handoffs follow declared paths.

When it works: High-volume, well-defined workflows where routing logic is embedded in the task itself. Chat-based customer support, multi-step onboarding, triage systems.

When it breaks: Complex handoff graphs. Without a supervisor, debugging “why did the user end up at Agent F instead of Agent D?” requires production-grade observability tools. If you don’t have distributed tracing, don’t use this pattern.
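The idea of declared handoff targets can be shown without any framework. The sketch below is plain Python and deliberately does not use the OpenAI Agents SDK; the `Agent` dataclass, `run_handoffs`, and `max_hops` are hypothetical names. It records a trace of every hop, which is the minimum you need to answer the “why Agent F?” question.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Agent:
    name: str
    # Returns the name of the next agent, or None when the request is resolved.
    handle: Callable[[str], Optional[str]]
    handoff_targets: frozenset[str] = frozenset()


def run_handoffs(agents: dict[str, Agent], start: str, message: str,
                 max_hops: int = 5) -> list[str]:
    """Follow handoffs from `start`, returning the ordered list of agents visited."""
    trace = [start]
    current = agents[start]
    for _ in range(max_hops):
        next_name = current.handle(message)
        if next_name is None:
            return trace
        # Enforce the declared path: undeclared handoffs are a bug, not a feature.
        if next_name not in current.handoff_targets:
            raise ValueError(f"{current.name} may not hand off to {next_name}")
        current = agents[next_name]
        trace.append(next_name)
    raise RuntimeError(f"handoff limit exceeded: {' -> '.join(trace)}")


# Usage: intake -> billing -> escalation, with routing embedded in each agent.
AGENTS = {
    "intake": Agent("intake", lambda m: "billing" if "invoice" in m else None,
                    frozenset({"billing"})),
    "billing": Agent("billing", lambda m: "escalation" if "dispute" in m else None,
                     frozenset({"escalation"})),
    "escalation": Agent("escalation", lambda m: None),
}
```

The hop limit doubles as a cheap loop guard: two agents that keep passing a request back and forth fail loudly instead of running until the budget is gone.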

Which Multi-Agent Framework to Use

Three frameworks dominate production multi-agent deployments right now. Each reflects a different philosophy about how agents should be organized.

LangGraph uses graph-based state machines. 34.5 million monthly downloads. Typed state schemas enable precise checkpointing and inspection. This is what Klarna runs in production. Best for stateful workflows where you need human-in-the-loop intervention, branching logic, and durable execution. The trade-off: steeper learning curve than alternatives.

CrewAI organizes agents as role-based teams. 44,300 GitHub stars and growing. Lowest barrier to entry: define agent roles, assign tasks, and the framework handles coordination. Deploys teams roughly 40% faster than LangGraph for straightforward use cases. The trade-off: limited support for cycles and complex state management.

OpenAI Agents SDK provides lightweight primitives (Agents, Handoffs, Guardrails). The only major framework with equal Python and TypeScript/JavaScript support. Clean abstraction for the Swarm pattern. The trade-off: tighter coupling to OpenAI’s models.

One protocol worth knowing: Model Context Protocol (MCP) has become the de facto interoperability standard for agent tooling. Anthropic donated it to the Linux Foundation in December 2025, under the Agentic AI Foundation co-founded by Anthropic, Block, and OpenAI. Over 10,000 active public MCP servers exist. All three frameworks above support it. If you’re evaluating tools, MCP compatibility is table stakes.

A starting point: If you’re unsure, start with Plan-and-Execute on LangGraph. It’s the most battle-tested combination. It handles the widest range of use cases. And switching patterns later is a reversible decision (a two-way door, in decision theory terms). Don’t over-architect on day one.

Five Ways Multi-Agent Systems Fail

The MAST study identified 14 failure modes across 3 categories. The five below account for the majority of production failures. Each includes a specific prevention measure you can implement before your next deployment.

Pre-Deployment Checklist: The Five Failure Modes

- Compound Reliability Decay

Calculate your end-to-end reliability before you ship. Multiply per-step success rates across your full chain. If the number drops below 80%, reduce the chain length or add verification checkpoints.

Prevention: Keep chains under 5 sequential steps. Insert a verification agent at step 3 and step 5 that checks output quality before passing downstream. If verification fails, route to a human or a fallback path (not a retry of the same chain).

- Coordination Tax (36.9% of all MAS failures)

When two agents receive ambiguous instructions, they interpret them differently. A support agent approves a refund; a compliance agent blocks it. The user receives contradictory signals.

Prevention: Explicit input/output contracts between every agent pair. Define the data schema at every boundary and validate it. No implicit shared state. If Agent A’s output feeds Agent B, both agents must agree on the format before deployment, not at runtime.

- Cost Explosion

Token costs multiply across agents (3.5x in documented cases). Retry loops can burn through $40 or more in Application Programming Interface (API) fees within minutes, with no useful output to show for it.

Prevention: Set hard per-agent and per-workflow token budgets. Implement circuit breakers: if an agent exceeds its budget, halt the workflow and surface an error rather than retrying. Log cost per completed workflow to catch regressions early.

- Security Gaps

The Open Worldwide Application Security Project (OWASP) Top 10 for LLM Applications found prompt injection vulnerabilities in 73% of assessed production deployments. In multi-agent systems, a compromised agent can propagate malicious instructions to every downstream agent.

Prevention: Input sanitization at every agent boundary, not just the entry point. Treat inter-agent messages with the same suspicion you’d apply to external user input. Run a red-team exercise against your agent chain before production launch.

- Infinite Retry Loops

Agent A fails. It retries. Fails again. In multi-agent systems, Agent A’s failure triggers Agent B’s error handler, which calls Agent A again. The loop runs until your budget runs out.

Prevention: Maximum 3 retries per agent per workflow execution. Exponential backoff between retries. Dead-letter queues for tasks that fail past the retry limit. And one absolute rule: never let one agent trigger another without a cycle check in the orchestration layer.
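The budget, retry, and cycle-check items in the checklist fit in one small orchestration helper. This is a sketch under assumptions: the agent is a hypothetical callable returning `(ok, output, tokens_used)`, the default delays are arbitrary, and the dead-letter queue is just an in-memory list.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional

AgentCall = Callable[[str], tuple[bool, str, int]]  # task -> (ok, output, tokens_used)


class BudgetExceeded(RuntimeError):
    """Circuit breaker: raised to halt the workflow instead of retrying."""


@dataclass
class TokenBudget:
    limit: int
    used: int = 0

    def charge(self, tokens: int) -> None:
        self.used += tokens
        if self.used > self.limit:
            raise BudgetExceeded(f"used {self.used} of {self.limit} tokens")


@dataclass
class GuardedRunner:
    budget: TokenBudget
    max_retries: int = 3                       # retries after the first attempt
    base_delay: float = 0.5                    # seconds; doubles on each retry
    sleep: Callable[[float], None] = time.sleep
    dead_letters: list[str] = field(default_factory=list)

    def run(self, task: str, agent: AgentCall) -> Optional[str]:
        for attempt in range(1 + self.max_retries):
            ok, output, tokens = agent(task)
            self.budget.charge(tokens)         # raises BudgetExceeded when over limit
            if ok:
                return output
            if attempt < self.max_retries:
                self.sleep(self.base_delay * 2 ** attempt)  # exponential backoff
        self.dead_letters.append(task)         # park the task for human review
        return None


def assert_no_cycle(call_path: list[str], callee: str) -> None:
    """Refuse to let an agent trigger one that is already on the call path."""
    if callee in call_path:
        raise RuntimeError(f"cycle detected: {' -> '.join(call_path + [callee])}")
```

Letting `BudgetExceeded` propagate, rather than catching it inside the retry loop, is the design choice that makes this a circuit breaker: overspending stops the workflow instead of triggering another attempt.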

Prompt injection was found in 73% of production LLM deployments assessed during security audits. In multi-agent systems, one compromised agent can propagate the attack downstream.
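One way to combine explicit contracts with boundary-level sanitization is to have each receiving agent parse incoming messages into a strict type and reject anything else. The sketch below is illustrative only: the `IntentMessage` schema, its field names, and the allowed intents are assumptions made for the example, and schema validation narrows the attack surface without replacing red-team testing.

```python
from dataclasses import dataclass

# Hypothetical contract between an intent-extraction agent and its consumer.
ALLOWED_INTENTS = frozenset({"billing_dispute", "billing_inquiry", "refund_request"})
REQUIRED_FIELDS = frozenset({"ticket_id", "intent", "confidence"})


@dataclass(frozen=True)
class IntentMessage:
    ticket_id: str
    intent: str
    confidence: float


def parse_intent_message(raw: object) -> IntentMessage:
    """Validate an upstream agent's output as if it were external user input."""
    if not isinstance(raw, dict):
        raise ValueError("message must be a mapping")
    if set(raw) != REQUIRED_FIELDS:
        odd = sorted(map(str, set(raw) ^ REQUIRED_FIELDS))
        raise ValueError(f"missing or unexpected fields: {odd}")
    ticket_id, intent, confidence = raw["ticket_id"], raw["intent"], raw["confidence"]
    if not isinstance(ticket_id, str) or not ticket_id.strip():
        raise ValueError("ticket_id must be a non-empty string")
    # A closed vocabulary means free text (and injected instructions) cannot pass.
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        raise ValueError("intent is not in the agreed vocabulary")
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return IntentMessage(ticket_id, intent, float(confidence))
```

A rejected message should go to a fallback path or a human, in line with the checklist above, rather than back through the agent that produced it.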

Tool vs. Worker: The $60 Million Architecture Gap

In February 2026, the National Bureau of Economic Research (NBER) published a study surveying nearly 6,000 executives across the US, UK, Germany, and Australia. The finding: 89% of firms reported zero change in productivity from AI. Ninety percent of managers said AI had no impact on employment. These firms averaged 1.5 hours per week of AI use per executive.

Fortune called it a resurrection of Robert Solow’s 1987 paradox: “You can see the computer age everywhere but in the productivity statistics.” History is repeating, forty years later, with a different technology and the same pattern.

The 90% seeing zero impact deployed AI as a tool. The companies saving millions deployed AI as workers.

The contrast with Klarna isn’t about better models or bigger compute budgets. It’s a structural choice. The 90% treated AI as a copilot: a tool that assists a human in a loop, used 1.5 hours per week. The companies seeing real returns (Klarna, Ramp, Reddit via Salesforce Agentforce) treated AI as a workforce: autonomous agents executing structured workflows with human oversight at decision boundaries, not at every step.

That’s not a technology gap. It’s an architecture gap. The opportunity cost is staggering: the same engineering budget producing zero Return on Investment (ROI) versus $60 million in savings. The variable isn’t spend. It’s structure.

Forty percent of agentic AI projects will be canceled by 2027. The other sixty percent will ship. The difference won’t be which LLM they chose or how much they spent on compute. It will be whether they understood three patterns, ran the compound reliability math, and built their system to survive the five failure modes that kill everything else.

Klarna didn’t deploy 700 agents to replace 700 humans. They built a structured multi-agent system where a smart planner routes work to cheap executors, where every handoff has an explicit contract, and where the architecture was designed to fail gracefully rather than cascade.

You have the same patterns, the same frameworks, and the same failure data. The playbook is open. What you build with it is the only remaining variable.

References

- Kim, Y. et al. “Towards a Science of Scaling Agent Systems.” Google DeepMind, December 2025.

- Cemri, M., Pan, M.Z., Yang, S. et al. “MAST: Multi-Agent Systems Failure Taxonomy.” March 2025.

- Coshow, T. and Zamanian, K. “Multiagent Systems in Enterprise AI.” Gartner, December 2025.

- Gartner. “Over 40 Percent of Agentic AI Projects Will Be Canceled by End of 2027.” June 2025.

- LangChain. “Klarna: AI-Powered Customer Service at Scale.” 2025.

- Klarna. “AI Assistant Handles Two-Thirds of Customer Service Chats in Its First Month.” 2024.

- Bloom, N. et al. “Firm Data on AI.” National Bureau of Economic Research, Working Paper #34836, February 2026.

- Fortune. “Thousands of CEOs Just Admitted AI Had No Impact on Employment or Productivity.” February 2026.

- Moran, S. “Why Your Multi-Agent System Is Failing: Escaping the 17x Error Trap.” Towards Data Science, January 2026.

- Carnegie Mellon University. “AI Agents Fail at Office Tasks.” 2025.

- Redis. “AI Agent Architecture: Patterns and Best Practices.” 2025.

- DataCamp. “CrewAI vs LangGraph vs AutoGen: Comparison Guide.” 2025.