Although Facebook can limit untrustworthy content, new research suggests it often chooses not to

An interdisciplinary team of researchers led by the University of Massachusetts Amherst recently published work in the journal Science calling into question the conclusions of a widely reported study, published in Science in 2023 and funded by Meta, which found that the social platform’s algorithms successfully filtered out untrustworthy news surrounding the 2020 election and were not major drivers of misinformation.

The UMass Amherst-led team’s work shows that the Meta-funded research was conducted during a short period when Meta had temporarily introduced a new, more rigorous news algorithm in place of its standard one, and that the previous researchers did not account for the algorithmic change. This helped to create the misperception, widely reported by the media, that Facebook and Instagram’s news feeds are largely reliable sources of trustworthy news.

“The first thing that rang alarm bells for us,” says lead author Chhandak Bagchi, a graduate student in the Manning College of Information and Computer Sciences at UMass Amherst, “was when we realized that the previous researchers,” Guess et al., “conducted a randomized control experiment during the same time that Facebook had made a systemic, short-term change to their news algorithm.”

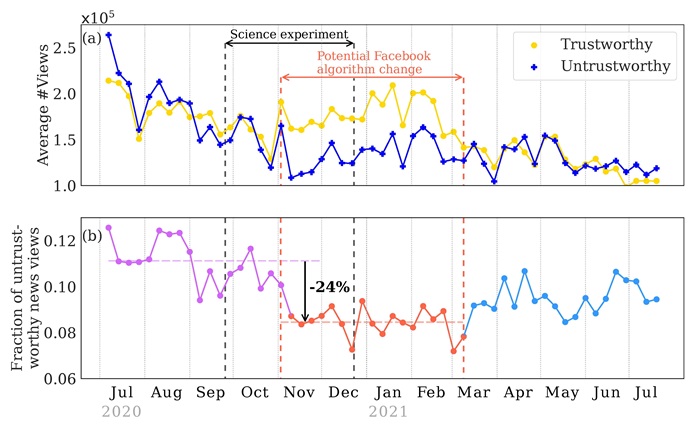

Beginning around the start of November 2020, Meta introduced 63 “break glass” changes to Facebook’s news feed that were expressly designed to diminish the visibility of untrustworthy news surrounding the 2020 U.S. presidential election. These changes were successful. “We applaud Facebook for implementing the more stringent news feed algorithm,” says Przemek Grabowicz, the paper’s senior author, who recently joined University College Dublin but conducted this research at UMass Amherst’s Manning College of Information and Computer Sciences. Chhandak, Grabowicz and their co-authors point out that the newer algorithm cut user views of misinformation by at least 24%. However, the changes were temporary, and in March 2021 the news algorithm reverted to its previous practice of promoting a higher fraction of untrustworthy news.

Guess et al.’s study ran from September 24 through December 23, 2020, and so substantially overlapped with the short window when Facebook’s news feed was determined by the more stringent algorithm. The Guess et al. paper, however, did not make clear that its data captured an exceptional moment for the social media platform. “Their paper gives the impression that the standard Facebook algorithm is good at stopping misinformation,” says Grabowicz, “which is questionable.”

Part of the problem, as Chhandak, Grabowicz and their co-authors write, is that experiments such as the one run by Guess et al. have to be “preregistered,” which means that Meta could have known well ahead of time what the researchers would be looking for. And yet social media companies are not required to give any public notification of significant changes to their algorithms. “This can lead to situations where social media companies could conceivably change their algorithms to improve their public image if they know they are being studied,” write the authors, who include Jennifer Lundquist (professor of sociology at UMass Amherst), Monideepa Tarafdar (Charles J. Dockendorff Endowed Professor at UMass Amherst’s Isenberg School of Management), Anthony Paik (professor of sociology at UMass Amherst) and Filippo Menczer (Luddy Distinguished Professor of Informatics and Computer Science at Indiana University).

Though Meta funded Guess et al.’s study and supplied 12 of its co-authors, the study’s authors write that “Meta did not have the right to prepublication approval.”

“Our results show that social media companies can mitigate the spread of misinformation by modifying their algorithms but may not have financial incentives to do so,” says Paik. “A key question is whether the harms of misinformation, to individuals, the public and democracy, should be more central in their business decisions.”
