has been like classical art. We used to commission a report from our data analyst (our Michelangelo) and wait patiently. Weeks later, we received an email with an impressive hand-carved masterpiece: a link to a 50-KPI dashboard or a 20-page report attached. We could admire the meticulous craftsmanship, but we couldn’t change it. What’s more, we couldn’t even ask follow-up questions: not of the report, and not of our analyst, since she was already busy with another project.

That’s why the future of data analysis doesn’t belong to an ‘analytical equivalent’ of Michelangelo. It’s probably closer to the art of Fujiko Nakaya.

Fujiko Nakaya is famous for her fog ‘sculptures’: breathtaking, living clouds of fog. But she doesn’t ‘sculpt’ the fog herself. She has the idea. She designs the concept. The actual, complex work of building the pipe systems and programming the water pressure to produce fog is done by engineers and plumbers.

The paradigm shift of Natural Language Visualization is the same.

Imagine that you need to understand a phenomenon: customer churn increasing, sales declining, or delivery times not improving. In that situation, you become the conceptual artist. You provide the idea:

What were our sales in the northeast, and how did that compare to last year?

The system becomes your master technician. It does all the complex painting, sculpting, or, as in Nakaya’s case, plumbing in the background. It builds the query, chooses visualizations, and writes the interpretation. Finally, the answer, like fog in Nakaya’s sculptures, appears right in front of you.

Computer, analyze all sensor logs from the last hour. Correlate for ion fluctuations.

Do you remember the bridge of the starship Enterprise? When Captain Kirk needed to research a historical figure or Commander Spock needed to cross-reference a new energy signature, they never had to open a complex dashboard. They spoke to the computer (or at least used the interface and buttons on the captain’s chair) [*].

There was no need to use a BI app or write a single line of SQL. Kirk or Spock needed only to state their need: ask a question, sometimes add a simple hand gesture. In return, they received an immediate visual or vocal response. For decades, that fluid, conversational power was pure science fiction.

Today, I ask myself a question:

Are we at the beginning of this particular reality of data analysis?

Data analysis is undergoing a significant transformation. We’re moving away from traditional software that requires endless clicking on icons, menus, and windows, learning query and programming languages, or mastering complex interfaces. Instead, we’re starting to have simple conversations with our data.

The goal is to replace the steep learning curve of complex tools with the natural simplicity of human language. This opens up data analysis to everyone, not just experts, allowing them to ‘talk with their data.’

At this point, you’re probably skeptical about what I’ve written.

And you have every right to be.

Many of us have tried using ‘modern era’ AI tools for visualizations or presentations, only to find the results inferior to what even a junior analyst could produce. These outputs were often inaccurate. Even worse, some were hallucinations: far from the answers we needed, or simply wrong.

This isn’t just a glitch; there are clear reasons for the gap between promise and reality, which we will address today.

In this article, I delve into a new approach called Natural Language Visualization (NLV). Specifically, I’ll describe how the technology actually works, how we can use it, and what the key challenges are that still need to be solved before we enter our own Star Trek era.

I recommend treating this article as a structured journey through our current knowledge on this topic. A sidenote: this article also marks a slight return for me to my earlier posts on data visualization, bridging that work with my more recent focus on storytelling.

What I discovered in the process of writing this particular piece (and what I hope you’ll discover while reading, too) is that this subject seemed perfectly obvious at first glance. However, it quickly revealed a surprising, hidden depth of nuance. Eventually, after reviewing all the cited and non-cited sources, weighing my own reflections, and carefully balancing the facts, I arrived at a rather unexpected conclusion. Taking this systematic, academic-like approach was a real eye-opener in many ways, and I hope it will be for you as well.

What is Natural Language Visualization?

A critical barrier to understanding this field is the ambiguity of its core terminology. The acronym NLV (Natural Language Visualization) carries two distinct, historical meanings.

- Historical NLV (Text-to-Scene): The older field of generating 2D or 3D graphics from descriptive text [1],[2].

- Modern NLV (Text-to-Viz): The contemporary field of generating data visualizations (like charts) from descriptive text [3].

To maintain precision and allow you to cross-reference the ideas and analysis presented in this article, I’ll use the specific academic terminology of the HCI and visualization communities:

- Natural Language Interface (NLI): A broad, overarching term for any human-computer interface that accepts natural language as an input.

- Visualization-oriented Natural Language Interface (V-NLI): A system that allows users to interact with and analyze visual data (like charts and graphs) using everyday speech or text. Its main purpose is to democratize data by serving as an easy, complementary input method for visual analytics tools, ultimately letting users focus entirely on their data tasks rather than grappling with the technical operation of complex visualization software [4],[5].

V-NLIs are interactive systems that facilitate visual analytics tasks through two main user interfaces: form-based or chatbot-based. A form-based V-NLI typically uses a text box for natural language queries, sometimes with refinement widgets, but is generally not designed for conversational follow-up questions. In contrast, a chatbot-based V-NLI features a named agent with anthropomorphic traits (such as personality, appearance, and emotional expression) that interacts with the user in a separate chat window, displaying the conversation alongside complementary outputs. While both are interactive, only the chatbot-based V-NLI is anthropomorphic; the form-based V-NLI lacks these human-like traits [6].

The value proposition of V-NLIs is best understood by contrasting the conversational paradigm with traditional data analysis workflows. These are presented in the infographic below.

This shift represents a move from a static, high-friction, human-gated process to a dynamic, low-friction, automated one. I further illustrate how this new approach could affect how we work with data in Table 1.

Table 1: Comparative Analysis: Traditional BI vs. Conversational Analytics

| Feature | Conversational Analytics | Traditional Analytics |
| --- | --- | --- |
| Focus | All customer-agent interactions and CRM data | Phone conversations and customer profiles |
| Data Sources | Recent conversations across calls, chat, text, and emails | Historical records (sales, customer profiles) |
| Timing | Real-Time / Recent | Retrospective / Historical |
| Immediacy | High (analyzes very recent data) | Low (insights developed over longer periods) |
| Insights | Deep understanding of specific pain points, emerging issues | High-level contact center insights over time |
| Use Case | Improving immediate customer satisfaction, agent behavior | Understanding long-term trends and business dynamics |

How does V-NLI work?

To analyze the V-NLI mechanics, I adopted the theoretical framework from the academic survey ‘The Why and The How: A Survey on Natural Language Interaction in Visualization’ [11]. This framework offers a powerful lens for classifying and critiquing V-NLI systems by distinguishing between user intent and dialogue implementation. It dissects two main axes of a V-NLI system: ‘The Why’ and ‘The How’. The ‘Why’ axis represents user intent: it examines why users interact with visualizations. The ‘How’ axis represents dialogue structure: it answers the question of how the human-machine dialogue is technically implemented. Each axis can be further divided: ‘Why’ into specific tasks, and ‘How’ into attributes. I list them below.

The four key high-level ‘Why’ tasks are:

- Present: Using visualization to communicate a narrative, for instance, for visual storytelling or explanation generation.

- Discover: Using visualization to find new information, for instance, writing natural language queries, performing keyword search, visual question answering (VQA), or analytical conversation.

- Enjoy: Using visualization for non-professional goals, such as augmentation of images or description generation.

- Produce: Using visualization to create or record new artifacts, for instance, by making annotations or creating additional visualizations.

The ‘How,’ on the other hand, has three main attributes:

- Initiative: Who drives the conversation? It can be user-initiated, system-initiated, or mixed-initiated.

- Duration: How long is the interaction? It might be a single turn for a simple query, or a multi-turn conversation for a complex analytical dialogue.

- Communicative Functions: What is the form of the language? The language model supports several interaction styles: users may issue direct commands, pose questions, or engage in a responsive dialogue in which they adjust their input based on suggestions from the NLI.
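The two axes listed above can be written down as a small data model, which makes it easier to compare tools along the same dimensions. This is only an illustrative sketch: the class names, the `InteractionProfile` type, and the `why_coverage` helper are my own assumptions, not part of the survey's framework.

```python
from dataclasses import dataclass
from enum import Enum


class Why(Enum):
    """The four high-level 'Why' tasks."""
    PRESENT = "present"
    DISCOVER = "discover"
    ENJOY = "enjoy"
    PRODUCE = "produce"


class Initiative(Enum):
    USER = "user-initiated"
    SYSTEM = "system-initiated"
    MIXED = "mixed-initiated"


class Duration(Enum):
    SINGLE_TURN = "single-turn"
    MULTI_TURN = "multi-turn"


class CommunicativeFunction(Enum):
    COMMAND = "command"
    QUESTION = "question"
    RESPONSIVE_DIALOGUE = "responsive dialogue"


@dataclass(frozen=True)
class InteractionProfile:
    """Hypothetical description of one tool along both axes."""
    name: str
    why: frozenset
    initiative: Initiative
    duration: Duration
    functions: frozenset

    def why_coverage(self) -> float:
        # Share of the four 'Why' tasks that the tool supports.
        return len(self.why) / len(Why)


# A made-up profile: a tool that only answers one user question at a time.
search_box = InteractionProfile(
    name="hypothetical search box",
    why=frozenset({Why.DISCOVER}),
    initiative=Initiative.USER,
    duration=Duration.SINGLE_TURN,
    functions=frozenset({CommunicativeFunction.QUESTION}),
)
print(search_box.name, search_box.why_coverage())
```

A profile like this is just a compact way to say where a tool sits on each axis; it does not measure quality.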

This framework can also help illustrate the most fundamental issue behind our distrust of NLIs. Historically, both commercial and non-commercial Visualization-oriented Natural Language Interfaces (V-NLIs) operated within a very narrow functional scope. The ‘Why’ was often reduced to the Discover task, while the ‘How’ was limited to simple, single-turn queries initiated by the user.

As a result, most ‘talk-to-your-data’ tools functioned as little more than basic ‘ask me a question’ search boxes. This model has proven consistently frustrating for users because it is overly rigid and brittle, often failing unless a query is phrased with perfect precision.

The entire history of this technology is the story of progress in two key ways.

- First, our interactions have been improving, moving from asking just one question at a time to having a full, back-and-forth conversation.

- Second, the reasons for using V-NLIs have been expanding. We’ve progressed from simply finding information to having the tool automatically create new charts for us, and even explain the data in a written story.

Making full use of all four ‘Why’ tasks and all three ‘How’ attributes will be the biggest leap of all. The system will stop waiting for us to ask a question and will start the conversation itself, proactively pointing out insights we may have missed. This journey, from a simple search box to a smart, proactive partner, is the main story connecting this technology’s past, present, and future.

Before going further, I would like to take a small detour and show you an example of how our interactions with AI can improve. For that purpose I’ll use a recent post published by my friend Kasia Drogowska, PhD, on LinkedIn.

AI models often become stereotyped, suffering from ‘mode collapse’ because they learn our own biases from their training data. A technique called ‘Verbalized Sampling’ (VS) offers a powerful solution by changing the prompt. Instead of asking for one answer (like ‘Tell me a joke’), you ask for a probability distribution of answers (like ‘Generate 5 different jokes and their probabilities’). This simple shift not only yields 1.6-2.1x more diverse and creative results but, more importantly, it teaches us to think probabilistically. It shatters the illusion of a single ‘correct answer’ in complex business decisions and puts the power of choice back in our hands, not the model’s.

The image shows a direct comparison between two AI prompting methods:

- The left side exemplifies direct prompting. Here I show what happens when you ask the AI the same simple question five times: ‘Tell me a joke about data visualization.’ The result is five very similar jokes, all following the same format.

- The right side exemplifies verbalized sampling. Here I show a different prompting strategy. The question is changed to ask for a range of answers: ‘Generate 5 responses with their corresponding probabilities…’ The result is five completely different jokes, each unique in its setup and punchline, and each assigned a probability by the AI (strictly speaking, it isn’t a true probability, but it gives you the idea).

The key benefit of a technique like VS is diversity. Instead of just getting the AI’s single ‘default’ answer, it forces the AI to explore a wider spectrum of creative possibilities, letting you choose from the most common to the most unique. This is a perfect example of my point: changing how we interact with these tools can yield very different results.

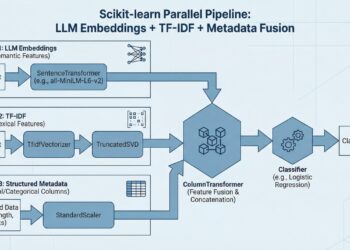
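To make the difference between the two prompting styles concrete, here is a minimal sketch of how a verbalized-sampling prompt could be built and how its reply could be parsed. The exact prompt wording, the `<probability> | <response>` line format, and the function names are my own assumptions for illustration; the sample reply is hand-written placeholder text, not real model output.

```python
from __future__ import annotations

import re


def verbalized_sampling_prompt(task: str, k: int = 5) -> str:
    """Ask for k candidate answers with self-reported probabilities."""
    return (
        f"Generate {k} different responses to the request below. "
        "Put each response on its own line in the form "
        "'<probability> | <response>', with the probability between 0 and 1.\n\n"
        f"Request: {task}"
    )


# One candidate per line: a number in [0, 1], a pipe, then the text.
CANDIDATE_LINE = re.compile(r"^\s*(0(?:\.\d+)?|1(?:\.0+)?)\s*\|\s*(.+?)\s*$")


def parse_candidates(reply: str) -> list[tuple[float, str]]:
    """Return (probability, text) pairs, most likely first; skip other lines."""
    candidates = []
    for line in reply.splitlines():
        match = CANDIDATE_LINE.match(line)
        if match:
            candidates.append((float(match.group(1)), match.group(2)))
    return sorted(candidates, key=lambda c: c[0], reverse=True)


prompt = verbalized_sampling_prompt("Tell me a joke about data visualization.")

# Hand-written stand-in for a model reply (hypothetical).
sample_reply = """0.25 | Joke B
0.40 | Joke A
this line does not follow the format and is ignored
0.10 | Joke C"""

for probability, text in parse_candidates(sample_reply):
    print(f"{probability:.2f}  {text}")
```

The reported numbers are the model's own estimates, so they are best treated as a rough ordering of candidates rather than calibrated probabilities.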

The V-NLI pipeline

To understand how a V-NLI translates a natural language query, such as ‘show me last quarter’s sales trend,’ into a precise and accurate data visualization, it is necessary to deconstruct its underlying technical architecture. Academics in the V-NLI community have proposed the classic information visualization pipeline as a structured model for these systems [5]. To illustrate the general mechanism of the process, I prepared the following infographic.

For a single ‘text-to-viz’ query, the two most critical and challenging stages are (1) Query Interpretation and (3/4) Visual Mapping/Encoding. In other words, the hard part is understanding exactly what the user means and turning it into the right picture. The other stages, particularly (6) Dialogue Management, become paramount in more advanced conversational systems.

The older systems consistently failed to achieve this understanding. The reason is that this task essentially means solving two problems at once:

- First, the system must guess the user’s intent (e.g., is the request to compare sales or to see a trend?).

- Second, it must translate casual phrases (like ‘best sellers’) into a perfect database query.

If the system misunderstood the user’s intent, it would display a table when the user wanted a chart. If it couldn’t parse the user’s words, it would just return an error or, worse, make something up out of the blue.

Once the system understands your question, it must create the visual answer. It should automatically select the best chart for the given intent (e.g., a line chart for a trend) and then map the appropriate fields to it (e.g., placing ‘Sales’ on the Y-axis and ‘Region’ on the X-axis). Interestingly, this chart-building part evolved in a similar way to the language-understanding part. Both transitioned from old, clunky, hard-coded rules to flexible, new AI models. This parallel evolution set the stage for modern Large Language Models (LLMs), which can now perform both tasks simultaneously.
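The two stages described above, query interpretation and visual mapping, can be sketched in the ‘old’ hard-coded style to show why such rules were brittle. Everything here is a simplified assumption: the keyword lists, the `ParsedQuery` type, and the dictionary-shaped chart spec are illustrative and do not correspond to any particular product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ParsedQuery:
    intent: str               # "trend", "compare", or "total"
    measure: str              # numeric field to aggregate
    dimension: Optional[str]  # categorical field to group by, if any


TREND_WORDS = ("trend", "over time", "daily", "monthly")
COMPARE_WORDS = ("compare", "versus", "by region", "best")
CHART_FOR_INTENT = {"trend": "line", "compare": "bar", "total": "text"}


def interpret(question: str, measures: list, dimensions: list) -> ParsedQuery:
    """Stage 1: guess intent and fields from keywords (brittle by design)."""
    q = question.lower()
    if any(word in q for word in TREND_WORDS):
        intent = "trend"
    elif any(word in q for word in COMPARE_WORDS):
        intent = "compare"
    else:
        intent = "total"
    measure = next((m for m in measures if m.lower() in q), measures[0])
    dimension = next((d for d in dimensions if d.lower() in q), None)
    return ParsedQuery(intent, measure, dimension)


def to_chart_spec(parsed: ParsedQuery, time_field: str = "Date") -> dict:
    """Stage 2: pick a chart type and map fields to axes."""
    spec = {
        "mark": CHART_FOR_INTENT[parsed.intent],
        "encoding": {"y": {"field": parsed.measure, "aggregate": "sum"}},
    }
    x_field = time_field if parsed.intent == "trend" else parsed.dimension
    if x_field:
        spec["encoding"]["x"] = {"field": x_field}
    return spec


trend = to_chart_spec(
    interpret("Show me last quarter's sales trend", ["Sales"], ["Region", "Product"])
)
comparison = to_chart_spec(
    interpret("Compare sales by region", ["Sales"], ["Region", "Product"])
)
print(trend)
print(comparison)
```

A query such as ‘how are we doing up north?’ matches none of these keywords and silently falls through to the default, which is exactly the kind of failure that made rule-based systems frustrating.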

In fact, the complex, multi-stage V-NLI pipeline described above, with its distinct modules for intent recognition, semantic parsing, and visual encoding, has been significantly disrupted by the arrival of LLMs. These models haven’t just improved one stage of the pipeline; they’ve collapsed the entire pipeline into a single, generative step.

Why is that, you may ask? Well, the parsers of the previous era were algorithm-centric. They required years of effort by computational linguists and developers to build, and they would break upon encountering a new domain or an unexpected query.

LLMs, in contrast, are data-centric. They offer a pre-trained, simplified solution to the most difficult problem in understanding natural language [13],[14]. This is the great unification: a single, pre-trained LLM can now execute all the core tasks of the V-NLI pipeline simultaneously. This architectural revolution has triggered an equal revolution in the V-NLI developer’s workflow. The core engineering challenge has undergone a fundamental shift. Previously, the challenge was to build a perfect, domain-specific semantic parser [11]. Now, the new challenge is to create the right prompt and curate the right data to guide a pre-trained LLM.

Three key techniques power this new, LLM-centric workflow. The first is Prompt Engineering, a new discipline focused on carefully structuring the text prompt (sometimes using advanced techniques like ‘Tree-of-Thoughts’) to help the LLM reason through a complex data query instead of just making a quick guess. A related technique is In-Context Learning (ICL), which primes the LLM by placing a few examples of the desired task (like sample text-to-chart pairs) directly into the prompt itself. Finally, for highly specialized fields, Fine-Tuning is used. This involves re-training the base LLM on a large, domain-specific dataset. These pillars, when in place, enable the creation of a powerful V-NLI that can handle complex tasks and specialized charts that would be impossible for any generic model.
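As an illustration of In-Context Learning, the sketch below assembles a few-shot prompt from sample text-to-chart pairs and validates the JSON that a model might send back. The example pairs, the JSON spec format, and the function names are assumptions made for this sketch, and no real LLM API is called; the reply passed to `parse_spec` is a hand-written stand-in.

```python
import json

COLUMNS = ["Date", "Region", "Salesperson", "Product", "Category", "TotalSales"]

# Hypothetical text-to-chart pairs used to prime the model.
FEW_SHOT_EXAMPLES = [
    ("Show total sales by region",
     {"chart": "bar", "x": "Region", "y": "TotalSales"}),
    ("How did daily sales develop over time?",
     {"chart": "line", "x": "Date", "y": "TotalSales"}),
]


def build_icl_prompt(question, columns, examples=FEW_SHOT_EXAMPLES):
    """Place worked examples before the new question (in-context learning)."""
    lines = [
        "You translate questions about a table into a chart specification.",
        "Answer with a single JSON object and nothing else.",
        f"Available columns: {', '.join(columns)}",
        "",
    ]
    for example_question, spec in examples:
        lines.append(f"Question: {example_question}")
        lines.append(f"Spec: {json.dumps(spec)}")
        lines.append("")
    lines.append(f"Question: {question}")
    lines.append("Spec:")
    return "\n".join(lines)


def parse_spec(reply, columns):
    """Reject replies that are not JSON or that reference unknown columns."""
    spec = json.loads(reply)
    for axis in ("x", "y"):
        if spec.get(axis) not in columns:
            raise ValueError(f"Unknown column for {axis}: {spec.get(axis)!r}")
    return spec


prompt = build_icl_prompt("Which product sells best?", COLUMNS)
print(prompt)

# Hand-written stand-in for a model reply (hypothetical).
spec = parse_spec('{"chart": "bar", "x": "Product", "y": "TotalSales"}', COLUMNS)
print(spec)
```

Validating the reply against the known column list is a cheap guard against a model inventing fields that do not exist in the data.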

This shift has profound implications for the scalability of V-NLI systems. The old approach (symbolic parsing) required building new, complex algorithms for every new domain. The new LLM-based approach requires a new dataset for fine-tuning. While creating high-quality datasets remains a significant challenge, it is a data-scaling problem that is far more solvable and economical than the previous algorithmic-scaling problem. This change in fundamental scaling economics is the true and most lasting impact of the LLM revolution.

What is the real meaning of this?

The single biggest promise of ‘talk-to-your-data’ tools is data democratization. They are designed to eliminate the steep learning curve of traditional, complex BI software, which often requires extensive training. ‘Talk-to-your-data’ tools provide a zero-learning-curve entry point for non-technical professionals (like managers, marketers, or sales teams), who can finally get their own insights without having to file a ticket with an IT or data team. This fosters a data-driven culture by enabling self-service for common, high-value questions.

For the business, value is measured in terms of speed and efficiency. The decision lag of waiting for an analyst, lasting days or sometimes weeks, is eliminated. This shift from a multi-day, human-gated process to a real-time, automated one saves an average of 2-3 hours per user per week, allowing the organization to react to market changes immediately.

However, this democratization creates a new and profound socio-technical tension within organizations. The anecdote below illustrates this perfectly: an HR Business Partner (a non-technical user) used one of these tools to present calculations to managers. The managers, however, started discussing how the calculation was produced instead of the actual conclusions, because they didn’t trust that HR could ‘actually do the math.’

This reveals the critical conflict: the tool’s primary value is in direct tension with the organization’s fundamental need for governance and trust. When a non-technical user is suddenly empowered to produce complex analytics, it challenges the authority of the traditional data gatekeepers, creating a conflict that is a direct consequence of the technology’s success.

Which current LLM-based AI assistant is the best ‘talk-to-your-data’ tool?

You might expect to see a ranking of the best assistants using LLMs for V-NLI here, but I chose not to include one. With numerous tools available, it’s impossible to review all of them and rank them objectively and in a trustworthy manner.

My own experience is mainly with Gemini, ChatGPT, and built-in assistants like Microsoft Copilot or Google Workspace. Still, using a few online sources, I’ve put together a brief overview to highlight the key factors you should consider when selecting the option that suits you best. In the end, you’ll have to explore the possibilities yourself and consider aspects such as performance, cost, payment model, and, above all, safety.

The table below outlines several tools with short descriptions. Later, I focus specifically on Gemini and ChatGPT, which I know best.

Table 2. Examples of LLMs that could serve as V-NLIs

| Tool | Description |
| --- | --- |
| BlazeSQL | An AI data analyst and chatbot that connects to SQL databases, letting non-technical users ask questions in natural language, visualize results, and build interactive dashboards. No coding is required. |
| DataGPT | A conversational analytics tool that answers natural language queries with visualizations, detects anomalies, and offers features like an AI onboarding agent and Lightning Cache for rapid query processing. |
| Gemini (Google) | Google Cloud’s conversational AI interface for BigQuery, enabling instant data analysis, real-time insights, and customizable dashboards through everyday language. |
| ChatGPT (OpenAI) | A flexible conversational tool capable of exploring datasets, running basic statistical analysis, generating charts, and producing custom reports, all through natural language interaction. |
| Lumenore | A platform focused on personalized insights and faster decision-making, with scenario analysis, an organizational data dictionary, predictive analytics, and centralized data management. |
| Dashbot | A tool designed to address the ‘dark data’ challenge by analyzing both unstructured data (e.g., emails, transcripts, logs) and structured data to turn previously unused information into actionable insights. |

Both Gemini and ChatGPT exemplify the new wave of powerful V-NLIs, each with a distinct strategic advantage. Gemini’s primary advantage is its deep integration within the Google ecosystem; it works directly with BigQuery and Google Suite. For example, you can open a PDF attachment directly from Gmail and perform a deep analysis using the Gemini assistant interface, using either a pre-built agent or ad-hoc prompts. Its core strength lies in translating simple, everyday language not just into data points, but directly into interactive visualizations and dashboards.

ChatGPT, in contrast, can serve as a more general-purpose yet equally powerful V-NLI for analytics, capable of handling various data formats, such as CSVs and Excel files. This makes it an ideal tool for users who want to make informed decisions without diving into complex software or coding. Its Natural Language Visualization (NLV) function is explicit, allowing users to ask it to summarize data, identify patterns, and even generate visualizations.

The true, shared strength of both platforms is their ability to handle interactive conversations. They allow users to ask follow-up questions and refine their queries. This iterative, conversational approach makes them highly effective V-NLIs that don’t just answer a single question, but enable a full, exploratory data analysis workflow.

Application example: Gemini as V-NLI

Let’s do a small experiment and see, step by step, how Gemini (version 2.5 Pro) works as a V-NLI. For the purpose of this experiment, I used Gemini to generate a set of artificial daily sales data, split by product, region, and sales representative. Then I asked it to simulate an interaction between a non-technical user (e.g., a sales manager) and a V-NLI. Let’s see what the result was.

Generated data sample:

Date,Region,Salesperson,Product,Category,Quantity,UnitPrice,TotalSales

2022-01-01,North,Alice Smith,Alpha-100,Electronics,5,1500,7500

2022-01-01,South,Bob Johnson,Beta-200,Electronics,3,250,750

2022-01-01,East,Carla Gomez,Gamma-300,Apparel,10,50,500

2022-01-01,West,David Lee,Delta-400,Software,1,1000,1000

2022-01-02,North,Alice Smith,Beta-200,Electronics,2,250,500

2022-01-02,West,David Lee,Gamma-300,Apparel,7,50,350

2022-01-03,East,Carla Gomez,Alpha-100,Electronics,3,1500,4500

2022-01-03,South,Bob Johnson,Delta-400,Software,2,1000,2000

2023-05-15,North,Eva Green,Alpha-100,Electronics,4,1600,6400

2023-05-15,East,Frank White,Epsilon-500,Services,1,5000,5000

2023-05-16,South,Bob Johnson,Beta-200,Electronics,5,260,1300

2023-05-16,West,David Lee,Gamma-300,Apparel,12,55,660

2023-05-17,North,Alice Smith,Delta-400,Software,1,1100,1100

2023-05-17,East,Carla Gomez,Epsilon-500,Services,1,5000,5000

2024-11-20,South,Grace Hopper,Alpha-100,Electronics,6,1700,10200

2024-11-20,West,David Lee,Beta-200,Electronics,10,270,2700

2024-11-21,North,Eva Green,Gamma-300,Apparel,15,60,900

2024-11-21,East,Frank White,Delta-400,Software,3,1200,3600

2024-11-22,South,Grace Hopper,Epsilon-500,Services,2,5500,11000

2024-11-22,West,Alice Smith,Alpha-100,Electronics,4,1700,6800

Experiment:

My typical workflow begins with a high-level query for a broad overview. If that initial view looks normal, I might stop. However, if I suspect an underlying issue, I’ll ask the tool to dig deeper for anomalies that aren’t visible on the surface.

Next, I focused on the North region to see if I could spot any anomalies.

For the last query, I shifted my perspective to analyze the daily sales trend. This new view serves as a launchpad for subsequent, more detailed follow-up questions.
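For readers who want to check the numbers behind these three views, the same questions (overview by region, drill-down into the North, daily trend) can be answered over the sample rows with plain Python. This is my own sketch of the underlying aggregations using only the standard library; it is not what Gemini runs internally, and the helper names are assumptions.

```python
import csv
import io
from collections import defaultdict

SAMPLE_CSV = """Date,Region,Salesperson,Product,Category,Quantity,UnitPrice,TotalSales
2022-01-01,North,Alice Smith,Alpha-100,Electronics,5,1500,7500
2022-01-01,South,Bob Johnson,Beta-200,Electronics,3,250,750
2022-01-01,East,Carla Gomez,Gamma-300,Apparel,10,50,500
2022-01-01,West,David Lee,Delta-400,Software,1,1000,1000
2022-01-02,North,Alice Smith,Beta-200,Electronics,2,250,500
2022-01-02,West,David Lee,Gamma-300,Apparel,7,50,350
2022-01-03,East,Carla Gomez,Alpha-100,Electronics,3,1500,4500
2022-01-03,South,Bob Johnson,Delta-400,Software,2,1000,2000
2023-05-15,North,Eva Green,Alpha-100,Electronics,4,1600,6400
2023-05-15,East,Frank White,Epsilon-500,Services,1,5000,5000
2023-05-16,South,Bob Johnson,Beta-200,Electronics,5,260,1300
2023-05-16,West,David Lee,Gamma-300,Apparel,12,55,660
2023-05-17,North,Alice Smith,Delta-400,Software,1,1100,1100
2023-05-17,East,Carla Gomez,Epsilon-500,Services,1,5000,5000
2024-11-20,South,Grace Hopper,Alpha-100,Electronics,6,1700,10200
2024-11-20,West,David Lee,Beta-200,Electronics,10,270,2700
2024-11-21,North,Eva Green,Gamma-300,Apparel,15,60,900
2024-11-21,East,Frank White,Delta-400,Software,3,1200,3600
2024-11-22,South,Grace Hopper,Epsilon-500,Services,2,5500,11000
2024-11-22,West,Alice Smith,Alpha-100,Electronics,4,1700,6800
"""


def load_rows(text):
    """Parse the CSV text and convert TotalSales to integers."""
    rows = list(csv.DictReader(io.StringIO(text.strip())))
    for row in rows:
        row["TotalSales"] = int(row["TotalSales"])
    return rows


def total_by(rows, key):
    """Sum TotalSales for each value returned by key(row)."""
    totals = defaultdict(int)
    for row in rows:
        totals[key(row)] += row["TotalSales"]
    return dict(totals)


rows = load_rows(SAMPLE_CSV)

# 1. High-level overview: total sales per region.
by_region = total_by(rows, lambda r: r["Region"])

# 2. Drill-down: the North region, split by year.
north = [r for r in rows if r["Region"] == "North"]
north_by_year = total_by(north, lambda r: r["Date"][:4])

# 3. Change of perspective: daily totals as a simple time series.
daily = total_by(rows, lambda r: r["Date"])

print(by_region)
print(north_by_year)
for day in sorted(daily):
    print(day, daily[day])
```

Having a reference calculation like this is one practical way to sanity-check what a conversational tool reports.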

Admittedly, the above examples were fairly simple and not far removed from ‘old-era’ NLIs. But let’s see what happens if the chatbot is empowered to take the initiative during the conversation.

This demonstrates a more advanced V-NLI capability: not just answering the question, but also providing context and identifying underlying patterns or outliers that the user might have missed.

This small experiment hopefully demonstrates that AI assistants, such as Gemini, can effectively serve as V-NLIs. The simulation began with the model successfully interpreting a high-level natural-language query about sales data and translating it into an appropriate visualization. The process showcased the model’s ability to handle iterative, conversational follow-ups, such as drilling down into a specific data segment or shifting the analytical perspective to a time series. Most significantly, the final experiment demonstrated proactive capability, in which the model not only answered the user’s query but also independently identified and visualized a critical data anomaly. This suggests that such AI tools can go beyond the role of simple executors, acting instead as interactive partners in the data exploration process. But they will not do this on their own: they must first be empowered through an appropriate prompt.

So is this world really so ideal?

Despite the promise of democratization, V-NLI tools are plagued by fundamental challenges that have led to their past failures. The first and most significant is the Ambiguity Problem, the ‘Achilles’ heel’ of all natural language systems. Human language is inherently imprecise, which manifests in several ways:

- Linguistic ambiguity: Words have multiple meanings. A query for ‘top customers’ could mean top by revenue, volume, or growth, and a wrong guess instantly destroys user trust.

- Under-specification: Users are often vague, asking ‘show me sales’ without specifying the timeframe, granularity, or analytical intent (such as a trend versus a total).

- Domain-specific context: A generic LLM might be useless for a specific business because it doesn’t understand internal jargon or company-specific business logic [16], [17].
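One common way to soften the ambiguity problem listed above is to ask a clarifying question instead of guessing. The sketch below flags a missing timeframe and an unspecified ranking metric; the keyword lists and the function name are illustrative assumptions, and a production system would need far richer language understanding.

```python
import re

# Illustrative phrase lists (assumptions, not an exhaustive vocabulary).
TIMEFRAME_PHRASES = (
    "today", "yesterday", "last week", "last month",
    "last quarter", "last year", "this year",
)
RANKING_WORDS = {"top", "best", "worst"}
RANKING_METRICS = {"revenue", "volume", "growth"}


def clarifying_questions(query: str) -> list:
    """Return follow-up questions for details the query leaves open."""
    text = query.lower()
    words = set(re.findall(r"[a-z0-9]+", text))
    questions = []

    # Under-specification: no recognizable time period.
    if not any(phrase in text for phrase in TIMEFRAME_PHRASES):
        questions.append("Which time period should I use?")

    # Linguistic ambiguity: a ranking word without a ranking metric.
    if words & RANKING_WORDS and not words & RANKING_METRICS:
        questions.append("Ranked by revenue, volume, or growth?")

    return questions


print(clarifying_questions("Show me top customers"))
print(clarifying_questions("Show me sales"))
print(clarifying_questions("Top customers by revenue last quarter"))
```

Asking costs the user one extra turn, but it avoids the wrong guess that would undermine trust in the answer.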

Second, even if a tool provides a correct answer, it is socially useless if the user can’t trust it. This is the ‘Black Box’ problem, as seen above in the story of the HR business partner. Because the HR user couldn’t explain the ‘why’ behind the ‘what,’ the insight was rejected. This ‘chain of trust’ is critical. When the V-NLI is an opaque black box, the user becomes a ‘data parrot,’ unable to defend the numbers, which renders the tool unusable in any high-stakes business context.

Finally, there is the ‘Last Mile’ problem of technical and economic feasibility. A user’s simple-sounding question (e.g., ‘show me the lifetime value of customers from our last campaign’) may require a hyper-complex, 200-line SQL query that no current AI can reliably generate. LLMs are not a magic fix for this. To be even remotely useful, they must be trained on a company-specific, prepared, cleaned, and properly described dataset. Unfortunately, this is still an enormous and recurring expense. This leads to a crucial conclusion:

The only viable path forward is a hybrid future.

An ungoverned ‘ask anything box’ is a no-go.

The future of V-NLI is not a generic, omnipotent LLM; it is a flexible LLM (for language) operating on top of a rigid, curated semantic model (for governance, accuracy, and domain-specific knowledge) [18], [19]. Instead of ‘killing’ BI and dashboards, LLMs and V-NLIs will do the opposite and act as a powerful catalyst. They won’t replace the dashboard or static report. They will enhance it. We should expect them to be integrated as the next generation of user interface, dramatically improving the quality and utility of data interaction.
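A minimal sketch of this hybrid idea: the language model is only allowed to choose names from a curated semantic model, and the SQL itself is assembled from governed definitions. The model contents, table and column names, and the `fake_llm_plan` stand-in are all assumptions for illustration; a real deployment would call an actual LLM and use a proper semantic layer.

```python
# Curated, governed definitions (hypothetical names).
SEMANTIC_MODEL = {
    "table": "sales",
    "metrics": {
        "total_sales": "SUM(TotalSales)",
        "units_sold": "SUM(Quantity)",
    },
    "dimensions": {
        "region": "Region",
        "product": "Product",
        "category": "Category",
    },
}


def fake_llm_plan(question: str) -> dict:
    """Stand-in for an LLM call that maps a question to model names."""
    return {"metric": "total_sales", "dimension": "region"}


def compile_sql(plan: dict, model: dict = SEMANTIC_MODEL) -> str:
    """Build SQL only from governed definitions; reject anything else."""
    metric = plan.get("metric")
    dimension = plan.get("dimension")
    if metric not in model["metrics"]:
        raise ValueError(f"Metric not in semantic model: {metric!r}")
    if dimension not in model["dimensions"]:
        raise ValueError(f"Dimension not in semantic model: {dimension!r}")
    metric_sql = model["metrics"][metric]
    dimension_sql = model["dimensions"][dimension]
    return (
        f"SELECT {dimension_sql}, {metric_sql} AS {metric} "
        f"FROM {model['table']} GROUP BY {dimension_sql}"
    )


plan = fake_llm_plan("What were our sales by region?")
sql = compile_sql(plan)
print(sql)
```

Because the LLM never writes SQL directly, every number can be traced back to a definition that someone in the organization has reviewed, which speaks to the ‘Black Box’ concern.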

What will the future bring?

The future of data interaction points toward a hypothetical paradigm shift, moving well beyond a simple search box to a Multi-Modal Agentic System. Imagine a system that operates more like a collaborator and less like a tool. A user, perhaps wearing an AR/VR headset, might ask, ‘Why did our last campaign fail?’ The AI agent would then reason over all available data: not just the sales database, but also unstructured customer feedback emails, the ad creative images themselves, and website logs. Instead of a simple chart, it would proactively present an augmented reality dashboard and offer a predictive conclusion, such as, ‘The creative performed poorly with your target demographic, and the landing page had a 70% bounce rate.’ The crucial evolution is the final ‘agentic’ step: the system wouldn’t stop at the insight but would bridge the gap to action, perhaps concluding:

I’ve already analyzed Q2’s top-performing creatives, drafted a new A/B test, and alerted DevOps to the page-load issue.

Would you like me to deploy the new test? Y/N_

As scary as it may sound, this vision completes the evolution from merely ‘talking to data’ to actively ‘collaborating with an agent about data’ to achieve an automated, real-world outcome [20].

I realize this last statement opens up even more questions, but this feels like the right place to pause and turn the conversation over to you. I’m eager to hear your opinions on this. Is a future like this realistic? Is it exciting, or frankly, a bit scary? And in this advanced agentic system, is that final human ‘yes or no’ really necessary? Or is it the safety mechanism we will always want, or need, to keep? I look forward to the discussion.

Concluding remarks

So, will conversational interaction make the data analyst (the one who painstakingly writes queries and manually builds charts) jobless? My conclusion is that the question isn’t about replacement but redefinition.

The pure ‘Star Trek’ vision of an ‘ask anything’ box will not happen. It’s plagued by its ‘Achilles’ heel’ of human language ambiguity and the ‘Black Box’ problem that destroys the trust it needs to function. The future, therefore, is not a generic, all-powerful LLM.

Instead, the only viable path forward is a hybrid system that combines the flexibility of an LLM with the rigidity of a curated semantic model. This new paradigm doesn’t replace the analysts; it elevates them. It frees them from being a ‘data plumber’. It empowers them as a strategic partner, working with a new, multi-modal agentic system that can finally bridge the chasm between data, insight, and automated action.

References

[1] Priyanka Jain, Hemant Darbari, Virendrakumar C. Bhavsar, Vishit: A Visualizer for Hindi Text – ResearchGate

[2] Christian Spika, Katharina Schwarz, Holger Dammertz, Hendrik Lensch, AVDT – Automated Visualization of Descriptive Texts

[3] Skylar Walters, Arthea Valderrama, Thomas Smits, David Kouřil, Huyen Nguyen, Sehi L’Yi, Devin Lange, Nils Gehlenborg, GQVis: A Dataset of Genomics Data Questions and Visualizations for Generative AI

[4] Rishab Mitra, Arpit Narechania, Alex Endert, John Stasko, Facilitating Conversational Interaction in Natural Language Interfaces for Visualization

[5] Shen Leixian, Shen Enya, Luo Yuyu, Yang Xiaocong, Hu Xuming, Zhang Xiongshuai, Tai Zhiwei, Wang Jianmin, Towards Natural Language Interfaces for Data Visualization: A Survey – PubMed

[6] Ecem Kavaz, Anna Puig, Inmaculada Rodríguez, Chatbot-Based Natural Language Interfaces for Data Visualisation: A Scoping Review

[7] Shah Vaishnavi, What is Conversational Analytics and How Does it Work? – ThoughtSpot

[8] Tyler Dye, How Conversational Analytics Works & How to Implement It – Thematic

[9] Apoorva Verma, Conversational BI for Non-Technical Users: Making Data Accessible and Actionable

[10] Ust Oldfield, Beyond Dashboards: How Conversational AI is Transforming Analytics

[11] Henrik Voigt, Özge Alacam, Monique Meuschke, Kai Lawonn and Sina Zarrieß, The Why and The How: A Survey on Natural Language Interaction in Visualization

[12] Jiayi Zhang, Simon Yu, Derek Chong, Anthony Sicilia, Michael R. Tomz, Christopher D. Manning, Weiyan Shi, Verbalized Sampling: How to Mitigate Mode Collapse and Unlock LLM Diversity

[13] Saadiq Rauf Khan, Vinit Chandak, Sougata Mukherjea, Evaluating LLMs for Visualization Generation and Understanding

[14] Paula Maddigan, Teo Susnjak, Chat2VIS: Generating Data Visualizations via Natural Language Using ChatGPT, Codex and GPT-3 Large Language Models – SciSpace

[15] Best 6 Tools for Conversational AI Analytics

[16] What are the challenges and limitations of natural language processing? – Tencent Cloud

[17] Arjun Srinivasan, John Stasko, Natural Language Interfaces for Data Analysis with Visualization: Considering What Has and Could Be Asked

[18] Will LLMs make BI tools obsolete?

[19] Fabi.ai, Addressing the limitations of traditional BI tools for complex analyses

[20] Sarfraz Nawaz, Why Conversational AI Agents Will Replace BI Dashboards in 2025

[*] The Star Trek analogy was generated in ChatGPT and might not accurately reflect the characters’ actions in the series. I haven’t watched it for roughly 30 years 😉.

Disclaimer

This post was written using Microsoft Word, and the spelling and grammar were checked with Grammarly. I reviewed and adjusted any changes to ensure that my intended message was accurately reflected. All other uses of AI (analogy, concept, image, and sample data generation) have been disclosed directly in the text.