Computer vision (CV) models are only as good as their labels, and those labels are traditionally expensive to produce. Industry research indicates that data annotation can consume 50–80% of a vision project’s budget and extend timelines beyond the original schedule. As companies in manufacturing, healthcare, and logistics race to modernize their stacks, the time and cost of data annotation are becoming a major burden.

To date, labeling has relied on manual, human effort. Auto-labeling systems now entering the market are promising and can offer orders-of-magnitude savings, thanks to significant progress in foundation models and vision-language models (VLMs) that excel at open-vocabulary detection and multimodal reasoning. Recent benchmarks report a ~100,000× cost and time reduction for large-scale datasets.

This deep dive first maps the true cost of manual annotation, then explains how a model-based approach can make auto-labeling practical. Finally, it walks through a novel workflow (called Verified Auto Labeling) that you can try yourself.

Why Vision Still Pays a Labeling Tax

Text-based AI leapt forward when LLMs learned to mine meaning from raw, unlabeled words. Vision models never had that luxury. A detector can’t guess what a “truck” looks like until someone has boxed thousands of trucks, frame by frame, and told the network, “this is a truck”.

Even today’s vision-language hybrids inherit that constraint: the language side is self-supervised, but human labels bootstrap the visual channel. Industry research has estimated the cost of that work at 50–60% of an average computer-vision budget, roughly equal to the cost of the entire model-training pipeline combined.

Well-funded operations can absorb the cost, but it becomes a blocker for the smaller teams that can least afford it.

Three Forces That Keep Costs High

Labor-intensive work – Labeling is slow, repetitive, and scales line-for-line with dataset size. At about $0.04 per bounding box, even a mid-sized project can cross six figures, especially when larger models trigger ever-bigger datasets and multiple revision cycles.

Specialized expertise – Many applications, such as medical imaging, aerospace, and autonomous driving, need annotators who understand domain nuances. These specialists can cost three to five times more than generalist labelers.

Quality-assurance overhead – Ensuring consistent labels often requires second passes, audit sets, and adjudication when reviewers disagree. Extra QA improves accuracy but stretches timelines, and a narrow reviewer pool can also introduce hidden bias that propagates into downstream models.

Together, these pressures drive up the costs that capped computer-vision adoption for years. Several companies are building solutions to address this growing bottleneck.
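
To make the arithmetic behind these three forces concrete, here is a minimal Python sketch of a manual-annotation cost estimate. Only the $0.04 per-box price and the three-to-five-times specialist premium come from the figures above; the dataset size, boxes per image, and the assumption that every QA pass costs the full per-box price are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class AnnotationJob:
    """Hypothetical description of a manual labeling job."""
    images: int
    boxes_per_image: int
    price_per_box: float = 0.04          # per-box price quoted in the article
    passes: int = 1                      # first pass plus any QA/revision passes (assumed full price each)
    specialist_multiplier: float = 1.0   # 3.0-5.0 for domain experts, per the article


def manual_cost(job: AnnotationJob) -> float:
    """Total cost in dollars, assuming every pass touches every box."""
    boxes = job.images * job.boxes_per_image
    return boxes * job.price_per_box * job.passes * job.specialist_multiplier


# Made-up project: 250k images, 7 boxes each, one labeling pass plus one QA pass.
generalist = AnnotationJob(images=250_000, boxes_per_image=7, passes=2)
specialist = AnnotationJob(images=250_000, boxes_per_image=7, passes=2,
                           specialist_multiplier=3.0)

print(f"generalist: ${manual_cost(generalist):,.0f}")
print(f"specialist: ${manual_cost(specialist):,.0f}")
```

Under these made-up inputs the generalist job already lands in six figures, and the specialist premium multiplies it from there.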

Classic Auto-Labeling Methods: Strengths and Shortcomings

Supervised, semi-supervised, and few-shot learning approaches, along with active learning and prompt-based training, have promised to reduce manual labeling for years. Effectiveness varies widely with task complexity and the architecture of the underlying model; the techniques below are simply among the most common.

Transfer learning and fine-tuning – Start with a pre-trained detector, such as YOLO or Faster R-CNN, and tweak it for a new domain. Once the task shifts to niche classes or pixel-tight masks, teams must gather new data and absorb a substantial fine-tuning cost.

Zero-shot vision–language models – CLIP and its cousins map text and images into the same embedding space so that you can tag new categories without extra labels. This works well for classification. However, balancing precision and recall can be more difficult in object detection and segmentation, making human-involved QA and verification all the more critical.

Active learning – Let the model label what it’s sure about, then bubble up the murky cases for human review. Over successive rounds, the machine improves, and the manual review pile shrinks. In practice, it can reduce hand-labeling by 30–70%, but only after several training cycles and once a reasonably solid initial model has been established.

All three approaches help, yet none of them alone can produce high-quality labels at scale.
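
The active-learning idea of "bubbling up the murky cases" can be illustrated with a short Python sketch of uncertainty sampling. This is one common selection rule (ranking by prediction entropy), chosen here for illustration; the function names, the review budget, and the probabilities are all hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy of a class-probability vector (higher means less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def split_for_review(predictions, budget):
    """Send the `budget` most uncertain samples to humans and auto-accept the rest.

    `predictions` maps a sample id to the model's class probabilities.
    """
    ranked = sorted(predictions, key=lambda sid: entropy(predictions[sid]), reverse=True)
    return ranked[:budget], ranked[budget:]


# Toy model outputs for four images over three classes (made-up numbers).
predictions = {
    "img_001": [0.98, 0.01, 0.01],
    "img_002": [0.40, 0.35, 0.25],
    "img_003": [0.70, 0.20, 0.10],
    "img_004": [0.34, 0.33, 0.33],
}

to_review, auto_accepted = split_for_review(predictions, budget=2)
print("human review:", to_review)
print("auto-accepted:", auto_accepted)
```

In a real loop, the reviewed samples would be added to the training set and the model retrained before the next round, which is why the savings only appear after several cycles.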

The Technical Foundations of Zero-Shot Object Detection

Zero-shot learning represents a paradigm shift from traditional supervised approaches that require extensive labeled examples for each object class. In conventional computer vision pipelines, models learn to recognize objects through exposure to thousands of annotated examples; for instance, a car detector requires car images, a person detector requires images of people, and so on. This one-to-one mapping between training data and detection capabilities creates the annotation bottleneck that plagues the field.

Zero-shot learning breaks this constraint by leveraging the relationships between visual features and natural language descriptions. Vision-language models, such as CLIP, create a shared space where images and text descriptions can be compared directly, allowing models to recognize objects they’ve never seen during training. The basic idea is simple: if a model knows what “four-wheeled vehicle” and “sedan” mean, it should be able to identify sedans without ever being trained on sedan examples.
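
The comparison in a shared space can be sketched in a few lines of Python. The vectors below are hand-written three-dimensional stand-ins, not outputs of CLIP or any real encoder, and `zero_shot_label` is a hypothetical helper; the point is only that the label is chosen by similarity between an image embedding and text-prompt embeddings, with no per-class training.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def zero_shot_label(image_embedding, text_embeddings):
    """Return (best_prompt, scores) by comparing one image to every text prompt."""
    scores = {prompt: cosine(image_embedding, vec)
              for prompt, vec in text_embeddings.items()}
    return max(scores, key=scores.get), scores


# Toy embeddings standing in for real text-encoder outputs (made-up numbers).
text_embeddings = {
    "a photo of a sedan": [0.9, 0.1, 0.2],
    "a photo of a truck": [0.2, 0.9, 0.1],
    "a photo of a bicycle": [0.1, 0.2, 0.9],
}
# Toy embedding standing in for an image-encoder output.
image_embedding = [0.8, 0.3, 0.1]

label, scores = zero_shot_label(image_embedding, text_embeddings)
print(label)
```

Adding a new category in this setup means adding a new text prompt, not collecting new labeled images.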

This is fundamentally different from few-shot learning, which still requires some labeled examples per class, and traditional supervised learning, which demands extensive training data per class. Zero-shot approaches, on the other hand, rely on compositional understanding, such as breaking down complex objects into describable components and relationships that the model has encountered in various contexts during pre-training.

However, extending zero-shot capabilities from image classification to object detection introduces additional complexity. Determining whether an entire image contains a car is one challenge; precisely localizing that car with a bounding box while simultaneously classifying it is a significantly more demanding task that requires sophisticated grounding mechanisms.
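
One way to see why detection is the harder task: a prediction has to get both the class and the box right. The Python sketch below scores localization with intersection over union (IoU), a standard overlap measure for boxes. The 0.5 cutoff is a widely used convention assumed here for illustration, and the boxes are made up.

```python
def iou(box_a, box_b):
    """Intersection over union for boxes given as (x_min, y_min, x_max, y_max)."""
    ix_min, iy_min = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix_max, iy_max = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_correct(pred, truth, iou_threshold=0.5):
    """A detection counts only if the class matches and the boxes overlap enough."""
    return (pred["label"] == truth["label"]
            and iou(pred["box"], truth["box"]) >= iou_threshold)


truth = {"label": "car", "box": (10, 10, 110, 60)}
tight = {"label": "car", "box": (12, 8, 108, 62)}    # right class, well-placed box
loose = {"label": "car", "box": (60, 10, 200, 60)}   # right class, poorly placed box

print(is_correct(tight, truth), is_correct(loose, truth))
```

The second prediction names the right class yet still fails, which is the kind of error a whole-image classifier never has to face.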

Voxel51’s Verified Auto Labeling: An Improved Approach

According to research published by Voxel51, the Verified Auto Labeling (VAL) pipeline achieves approximately 95% agreement with expert labels in internal benchmarks. The same study indicates a cost reduction on the order of 10⁵×, transforming a dataset that would have required months of paid annotation into a task completed in just a few hours on a single GPU.

Labeling tens of thousands of images in a workday shifts annotation from a long-running, line-item expense to a repeatable batch job. That speed opens the door to shorter experiment cycles and faster model refreshes.

The workflow ships in FiftyOne, the end-to-end computer vision platform that allows ML engineers to annotate, visualize, curate, and collaborate on data and models in a single interface.

While managed services such as Scale AI Rapid and SageMaker Ground Truth also pair foundation models with human review, Voxel51’s Verified Auto Labeling adds built-in QA, strategic data slicing, and full model evaluation analysis capabilities. This helps engineers not only improve the speed and accuracy of data annotation but also raise overall data quality and model accuracy.

Technical Components of Voxel51’s Verified Auto-Labeling

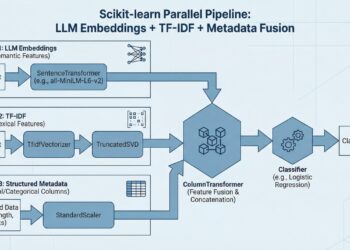

- Model & Class-Prompt Selection:

  - Choose an open- or fixed-vocabulary detector, enter class names, and set a confidence threshold; images are labeled immediately, so the workflow stays zero-shot even when choosing a fixed-vocabulary model.

- Automatic labeling with confidence scores:

  - The model generates boxes, masks, or tags and assigns a score to each prediction, allowing human reviewers to sort by certainty, review, and queue labels for approval.

- FiftyOne data and model analysis workflows:

  - After labels are in place, engineers can use FiftyOne workflows to visualize embeddings and identify clusters or outliers.

  - Once labels are approved, they’re ready for downstream model training and fine-tuning workflows performed directly in the tool.

  - Built-in evaluation dashboards help ML engineers drill down further into model performance metrics such as mAP, F1, and confusion matrices to pinpoint true and false positives, determine model failure modes, and identify which additional data will most improve performance.

In day-to-day use, this kind of workflow lets machines handle the more straightforward labeling cases while reallocating humans to the difficult ones, providing a pragmatic midpoint between push-button automation and frame-by-frame review.
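
The split between machine-handled and human-reviewed cases can be sketched as a simple triage over confidence scores. This is plain Python written for illustration, not FiftyOne's API or VAL's actual logic; the `Prediction` class, the `triage` helper, and both cutoffs are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    image_id: str
    label: str
    confidence: float


def triage(predictions, keep_above=0.2, accept_above=0.5):
    """Drop very weak predictions, accept strong ones, and queue the rest for review.

    Both cutoffs are illustrative assumptions, not settings taken from VAL.
    """
    kept = [p for p in predictions if p.confidence >= keep_above]
    accepted = [p for p in kept if p.confidence >= accept_above]
    # Least certain first, so reviewers see the riskiest labels early.
    needs_review = sorted((p for p in kept if p.confidence < accept_above),
                          key=lambda p: p.confidence)
    return accepted, needs_review


# Made-up predictions from a hypothetical detector run.
preds = [
    Prediction("img_001", "truck", 0.91),
    Prediction("img_002", "truck", 0.44),
    Prediction("img_003", "car", 0.12),
    Prediction("img_004", "car", 0.27),
]

accepted, needs_review = triage(preds)
print("accepted:", [p.image_id for p in accepted])
print("needs review:", [p.image_id for p in needs_review])
```

Sorting the review queue by confidence is a design choice: it puts reviewer time where the model is least sure, which is where corrections are most likely to matter.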

Performance in the Wild

Published benchmarks tell a clear story: on popular datasets like COCO, Pascal VOC, and BDD100K, models trained on VAL-generated labels perform virtually the same as models trained on fully hand-labeled data for the everyday objects these sets capture. The gap only shows up on rarer classes in LVIS and similarly long-tail collections, where a light touch of human annotation is still the fastest way to close the remaining accuracy gap.

Experiments suggest that confidence cutoffs between 0.2 and 0.5 balance precision and recall, though the sweet spot shifts with dataset density and class rarity. For high-volume jobs, lightweight YOLO variants maximize throughput. When subtle or long-tail objects require extra accuracy, an open-vocabulary model like Grounding DINO can be swapped in at the cost of additional GPU memory and latency.
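
The precision/recall trade-off across that cutoff range can be explored with a small threshold sweep. The Python sketch below assumes a hand-checked audit set in which each prediction has already been marked as a true or false positive; the data and the `precision_recall` helper are made up for illustration.

```python
def precision_recall(predictions, num_ground_truth, threshold):
    """Precision and recall if only predictions scoring >= threshold are kept.

    `predictions` is a list of (confidence, is_true_positive) pairs that have
    already been matched against ground truth on a small audit set.
    """
    kept = [is_tp for conf, is_tp in predictions if conf >= threshold]
    true_pos = sum(kept)
    precision = true_pos / len(kept) if kept else 1.0
    recall = true_pos / num_ground_truth if num_ground_truth else 0.0
    return precision, recall


# Made-up audit set: 8 predictions matched against 6 ground-truth objects.
audit = [(0.92, True), (0.81, True), (0.66, True), (0.48, False),
         (0.41, True), (0.33, True), (0.27, False), (0.15, False)]

for threshold in (0.2, 0.3, 0.4, 0.5):
    p, r = precision_recall(audit, num_ground_truth=6, threshold=threshold)
    print(f"threshold={threshold:.1f}  precision={p:.2f}  recall={r:.2f}")
```

Raising the cutoff trades recall for precision, so the right value depends on whether missed objects or spurious boxes are more expensive to fix in review.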

Either way, the downstream human-review step is limited to the low-confidence slice, and it’s far lighter than the full-image checks that traditional, manual QA pipelines still rely on.

Implications for Broader Adoption

Lowering the time and cost of annotation democratizes computer-vision development. A ten-person agritech startup could label 50,000 drone images for under $200 in spot-priced GPU time, rerunning overnight whenever the taxonomy changes. Larger organizations may combine in-house pipelines for sensitive data with external vendors for less-regulated workloads, reallocating saved annotation spend toward quality evaluation or domain expansion.
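
A back-of-the-envelope version of the startup example is sketched below in Python. Only the 50,000-image count and the $200 ceiling come from the text; the throughput and the hourly spot price are placeholder assumptions, not measurements, and the estimate ignores storage, data transfer, and reviewer time.

```python
def gpu_labeling_cost(images, images_per_second, gpu_hourly_rate):
    """Estimated (hours, dollars) to auto-label `images` on a single GPU."""
    hours = images / images_per_second / 3600
    return hours, hours * gpu_hourly_rate


# Assumed inputs: 2 images per second and $1.50 per GPU-hour are placeholders.
hours, dollars = gpu_labeling_cost(images=50_000,
                                   images_per_second=2.0,
                                   gpu_hourly_rate=1.50)
print(f"{hours:.1f} GPU-hours, about ${dollars:.2f}")
```

Swapping in a slower open-vocabulary model or a pricier instance changes the inputs, but the structure of the estimate stays the same.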

Together, zero-shot box labeling plus targeted human review offers a practical path to faster iteration. This approach leaves (expensive) humans to handle the edge cases where machines may stumble.

Auto-labeling shows that high-quality labeling can be automated to a level once thought impractical. This should bring advanced CV within reach of many more teams and reshape visual AI workflows across industries.

About our sponsor: Voxel51 provides an end-to-end platform for building high-performing AI with visual data. Trusted by millions of AI builders and enterprises like Microsoft and LG, FiftyOne makes it easy to explore, refine, and improve large-scale datasets and models. Our open source and commercial tools help teams ship accurate, reliable AI systems. Learn more at voxel51.com.