The release of the DeepSeek-R1 model sent ripples across the global AI community. It delivered breakthroughs on par with the reasoning models from Meta and OpenAI, achieving this in a fraction of the time and at a significantly lower cost.

Beyond the headlines and online buzz, how can we assess the model’s reasoning abilities using recognized benchmarks?

DeepSeek’s user interface makes it easy to explore its capabilities, but using it programmatically offers deeper insights and more seamless integration into real-world applications. Understanding how to run such models locally also provides enhanced control and offline access.

In this article, we explore how to use Ollama and OpenAI’s simple-evals to evaluate the reasoning capabilities of DeepSeek-R1’s distilled models on the well-known GPQA-Diamond benchmark.

Contents

(1) What are Reasoning Models?

(2) What is DeepSeek-R1?

(3) Understanding Distillation and DeepSeek-R1’s Distilled Models

(4) Selection of Distilled Model

(5) Benchmarks for Evaluating Reasoning

(6) Tools Used

(7) Results of Evaluation

(8) Step-by-Step Walkthrough

An accompanying GitHub repo is available for this article.

(1) What are Reasoning Models?

Reasoning models, such as DeepSeek-R1 and OpenAI’s o-series models (e.g., o1, o3), are large language models (LLMs) trained using reinforcement learning to perform reasoning.

Reasoning models think before they answer, producing a long internal chain of thought before responding. They excel in complex problem-solving, coding, scientific reasoning, and multi-step planning for agentic workflows.

(2) What is DeepSeek-R1?

DeepSeek-R1 is a state-of-the-art open-source LLM designed for advanced reasoning, introduced in January 2025 in the paper “DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning”.

The model is a 671-billion-parameter LLM trained with extensive use of reinforcement learning (RL), based on this pipeline:

- Two reinforcement learning stages aimed at discovering improved reasoning patterns and aligning with human preferences.

- Two supervised fine-tuning stages serving as the seed for the model’s reasoning and non-reasoning capabilities.

To be precise, DeepSeek trained two models:

- The first model, DeepSeek-R1-Zero, a reasoning model trained with reinforcement learning, generates data for training the second model, DeepSeek-R1.

- It achieves this by producing reasoning traces, from which only high-quality outputs are retained based on their final results.

- This means that, unlike most models, the RL examples in this training pipeline are not curated by humans but generated by the model.

The outcome is that the model achieved performance comparable to leading models like OpenAI’s o1 across tasks such as mathematics, coding, and complex reasoning.

(3) Understanding Distillation and DeepSeek-R1’s Distilled Models

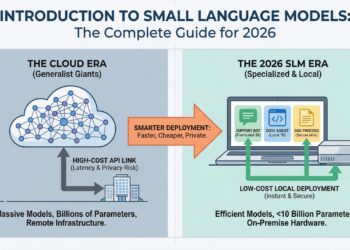

Alongside the full model, DeepSeek also open-sourced six smaller dense models (also named DeepSeek-R1) of different sizes (1.5B, 7B, 8B, 14B, 32B, 70B), distilled from DeepSeek-R1 with Qwen or Llama as the base model.

Distillation is a technique where a smaller model (the “student”) is trained to replicate the performance of a larger, more powerful pre-trained model (the “teacher”).

In this case, the teacher is the 671B DeepSeek-R1 model, and the students are the six models distilled using open-source Qwen and Llama base models.

DeepSeek-R1 was used as the teacher model to generate 800,000 training samples, a mix of reasoning and non-reasoning samples, for distillation via supervised fine-tuning of the base models (1.5B, 7B, 8B, 14B, 32B, and 70B).

So why do we do distillation in the first place?

The goal is to transfer the reasoning abilities of larger models, such as DeepSeek-R1 671B, into smaller, more efficient models. This enables the smaller models to handle complex reasoning tasks while being faster and more resource-efficient.

Additionally, DeepSeek-R1 has a massive number of parameters (671 billion), making it challenging to run on most consumer-grade machines.

Even the most powerful MacBook Pro, with a maximum of 128GB of unified memory, is inadequate to run a 671-billion-parameter model.

As such, distilled models open up the possibility of deployment on devices with limited computational resources.

Unsloth achieved an impressive feat by quantizing the original 671B-parameter DeepSeek-R1 model down to just 131GB, a remarkable 80% reduction in size. However, a 131GB VRAM requirement remains a significant hurdle.
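To make the memory argument concrete, here is a rough back-of-the-envelope sketch. It assumes that only the weights are counted (no KV cache, activations, or runtime overhead) and that 1 GB is 1e9 bytes; the precision levels listed are illustrative choices, not figures from DeepSeek or Unsloth.

```python
# Back-of-the-envelope weight memory for a 671B-parameter model.
# Assumption: only the weights are counted (no KV cache or runtime overhead).

PARAMS = 671e9            # parameter count quoted in the article
MACBOOK_MEMORY_GB = 128   # maximum unified memory quoted in the article


def weight_memory_gb(n_params: float, bits_per_param: float) -> float:
    """Return the approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


for label, bits in [("FP16/BF16", 16), ("FP8", 8), ("4-bit", 4)]:
    size = weight_memory_gb(PARAMS, bits)
    fits = "fits" if size <= MACBOOK_MEMORY_GB else "does not fit"
    print(f"{label:>9}: {size:7.1f} GB -> {fits} in {MACBOOK_MEMORY_GB} GB")

# Average bits per parameter implied by a 131 GB quantized file.
implied_bits = 131e9 * 8 / PARAMS
print(f"131 GB corresponds to about {implied_bits:.2f} bits per parameter")
```

Even at 4 bits per parameter the weights alone exceed 128GB, which is why a 131GB quantization implies an average of well under 2 bits per parameter.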

(4) Selection of Distilled Model

With six distilled model sizes to choose from, selecting the right one largely depends on the capabilities of the local machine’s hardware.

For those with high-performance GPUs or CPUs and a need for maximum performance, the larger DeepSeek-R1 models (32B and up) are ideal; even the quantized 671B version is viable.

However, if one has limited resources or prefers quicker generation times (as I do), the smaller distilled variants, such as 8B or 14B, are a better fit.

For this project, I will be using the DeepSeek-R1 distilled Qwen-14B model, which aligns with the hardware constraints I faced.

(5) Benchmarks for Evaluating Reasoning

LLMs are typically evaluated using standardized benchmarks that assess their performance across various tasks, including language understanding, code generation, instruction following, and question answering. Common examples include MMLU, HumanEval, and MGSM.

To measure an LLM’s capacity for reasoning, we need more challenging, reasoning-heavy benchmarks that go beyond surface-level tasks. Here are some popular examples focused on evaluating advanced reasoning capabilities:

(i) AIME 2024: Competition Math

- The American Invitational Mathematics Examination (AIME) 2024 serves as a strong benchmark for evaluating an LLM’s mathematical reasoning capabilities.

- It is a challenging math contest with complex, multi-step problems that test an LLM’s ability to interpret intricate questions, apply advanced reasoning, and perform precise symbolic manipulation.

(ii) Codeforces: Competition Code

- The Codeforces benchmark evaluates an LLM’s reasoning ability using real competitive programming problems from Codeforces, a platform known for algorithmic challenges.

- These problems test an LLM’s capacity to understand complex instructions, perform logical and mathematical reasoning, plan multi-step solutions, and generate correct, efficient code.

(iii) GPQA Diamond: PhD-Level Science Questions

- GPQA-Diamond is a curated subset of the most difficult questions from the broader GPQA (Graduate-Level Google-Proof Q&A) benchmark, specifically designed to push the boundaries of LLM reasoning in advanced PhD-level topics.

- While GPQA includes a range of conceptual and calculation-heavy graduate questions, GPQA-Diamond isolates only the most challenging and reasoning-intensive ones.

- It is considered Google-proof, meaning that the questions are difficult to answer even with unrestricted web access.

- Here is an example of a GPQA-Diamond question:

In this project, we use GPQA-Diamond as the reasoning benchmark, as OpenAI and DeepSeek used it to evaluate their reasoning models.

(6) Tools Used

For this project, we primarily use Ollama and OpenAI’s simple-evals.

(i) Ollama

Ollama is an open-source tool that simplifies running LLMs on our computer or a local server.

It acts as a manager and runtime, handling tasks such as downloads and environment setup. This allows users to interact with these models without requiring a constant internet connection or relying on cloud services.

It supports many open-source LLMs, including DeepSeek-R1, and is cross-platform compatible with macOS, Windows, and Linux. Additionally, it offers a straightforward setup with minimal fuss and efficient resource utilization.

Important: Ensure your local machine has GPU access for Ollama, as this dramatically accelerates performance and makes subsequent benchmarking exercises much more efficient compared to running on CPU. Run nvidia-smi in the terminal to check whether a GPU is detected.
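The same check can be scripted, which is handy before kicking off a long benchmark run. The sketch below is a minimal example that only covers NVIDIA GPUs (it assumes nvidia-smi is the relevant tool on your machine); the function name is made up for illustration.

```python
import shutil
import subprocess


def nvidia_gpu_detected() -> bool:
    """Return True if nvidia-smi is on PATH and lists at least one GPU."""
    if shutil.which("nvidia-smi") is None:
        return False  # NVIDIA driver tools are not installed or not on PATH
    try:
        result = subprocess.run(
            ["nvidia-smi", "-L"],  # -L lists the detected GPUs, one per line
            capture_output=True,
            text=True,
            timeout=30,
        )
    except (OSError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0 and bool(result.stdout.strip())


if __name__ == "__main__":
    print("GPU detected" if nvidia_gpu_detected() else "No NVIDIA GPU detected")
```

A False result does not necessarily mean Ollama will run on CPU only; other accelerators, such as Apple Silicon, are not covered by this check.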

(ii) OpenAI simple-evals

simple-evals is a lightweight library designed to evaluate language models using a zero-shot, chain-of-thought prompting approach. It includes well-known benchmarks like MMLU, MATH, GPQA, MGSM, and HumanEval, aiming to reflect realistic usage scenarios.

Some of you may know about OpenAI’s more well-known and comprehensive evaluation library called Evals, which is distinct from simple-evals.

In fact, the README of simple-evals specifically states that it is not intended to replace the Evals library.

So why are we using simple-evals?

The simple answer is that simple-evals comes with built-in evaluation scripts for the reasoning benchmarks we are targeting (such as GPQA), which are missing in Evals.

Additionally, I did not find any other tools or platforms, apart from simple-evals, that provide a straightforward, Python-native way to run numerous key benchmarks, such as GPQA, particularly when working with Ollama.

(7) Results of Evaluation

As part of the evaluation, I selected 20 random questions from the 198-question GPQA-Diamond set for the 14B distilled model to work on. The total time taken was 216 minutes, which is roughly 11 minutes per question.

The outcome was admittedly disappointing, as it scored only 10%, far below the reported 73.3% score for the 671B DeepSeek-R1 model.

The main issue I noticed is that during its extensive internal reasoning, the model often either failed to produce any answer (e.g., returning reasoning tokens as the final lines of output) or provided a response that did not match the expected multiple-choice format (e.g., Answer: A).

Many outputs ended up as None because the regex logic in simple-evals could not detect the expected answer pattern in the LLM response.
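To illustrate how a missing or misformatted final line turns into None, here is a minimal sketch of regex-based answer extraction. The pattern is an assumption that approximates the multiple-choice pattern in simple-evals; check the library’s source for the exact expression. The function name is made up for illustration.

```python
import re
from typing import Optional

# Assumption: this approximates the multiple-choice answer regex in simple-evals.
ANSWER_PATTERN = re.compile(r"(?i)Answer[ \t]*:[ \t]*\$?([A-D])\$?")


def extract_answer(response_text: str) -> Optional[str]:
    """Return the chosen letter, or None if no 'Answer: X' pattern is present."""
    match = ANSWER_PATTERN.search(response_text)
    return match.group(1) if match else None


# A well-formed final line is picked up.
print(extract_answer("So the correct option is B.\nAnswer: B"))

# Output that ends mid-reasoning has nothing to match.
print(extract_answer("<think>The answer could be A or D, let me reconsider"))

# Markdown decoration around the colon also breaks the match.
print(extract_answer("**Answer:** C"))
```

Under this pattern, only the first call yields a letter. The other two return None and would be scored as incorrect, even though the third response does contain a choice.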

While the human-like reasoning logic was interesting to observe, I had expected stronger performance in terms of question-answering accuracy.

I have also seen online users mention that even the larger 32B model does not perform as well as o1. This has raised doubts about the utility of distilled reasoning models, especially when they struggle to give correct answers despite producing long reasoning.

That said, GPQA-Diamond is a highly challenging benchmark, so these models could still be useful for simpler reasoning tasks. Their lower computational demands also make them more accessible.

Additionally, the DeepSeek team recommended conducting multiple tests and averaging the results as part of the benchmarking process, something I omitted due to time constraints.

(8) Step-by-Step Walkthrough

At this point, we have covered the core concepts and key takeaways.

If you are ready for a hands-on, technical walkthrough, this section provides a deep dive into the inner workings and step-by-step implementation.

Check out (or clone) the accompanying GitHub repo to follow along. The requirements for the virtual environment setup are listed in the repo.

(i) Initial Setup: Ollama

We begin by downloading Ollama. Visit the Ollama download page, select your operating system, and follow the corresponding installation instructions.

Once installation is complete, launch Ollama by double-clicking the Ollama app (for Windows and macOS) or running ollama serve in the terminal.

(ii) Initial Setup: OpenAI simple-evals

The setup of simple-evals is relatively unusual.

While simple-evals presents itself as a library, the absence of __init__.py files in the repository means it is not structured as a proper Python package, leading to import errors after cloning the repo locally.

Since it is also not published to PyPI and lacks standard packaging files like setup.py or pyproject.toml, it cannot be installed via pip.

Fortunately, we can use Git submodules as a straightforward workaround.

A Git submodule lets us include the contents of another Git repository inside our own project. It pulls the files from an external repo (e.g., simple-evals) but keeps its history separate.

You can choose one of two ways (A or B) to pull the simple-evals contents:

(A) If You Cloned My Project Repo

My project repo already includes simple-evals as a submodule, so you can just run:

git submodule update --init --recursive

(B) If You Are Adding It to a Newly Created Project

To manually add simple-evals as a submodule, run this:

git submodule add https://github.com/openai/simple-evals.git simple_evals

Note: The simple_evals at the end of the command (with an underscore) is crucial. It sets the folder name, and using a hyphen instead (i.e., simple-evals) can lead to import issues later, because a hyphen is not valid in a Python module name.

Final Step (For Both Methods)

After pulling the repo contents, you must create an empty __init__.py in the newly created simple_evals folder so that it is importable as a module. You can create it manually, or use the following command:

touch simple_evals/__init__.py

(iii) Pull DeepSeek-R1 model via Ollama

The next step is to download the distilled model of your choice (e.g., 14B) locally using this command:

ollama pull deepseek-r1:14b

The list of DeepSeek-R1 models available on Ollama can be found in the Ollama model library.
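Once the pull finishes, it can be useful to confirm from Python that the model is visible to the local Ollama server. The sketch below assumes Ollama is running at its default address (http://localhost:11434) and uses its /api/tags endpoint, which lists locally available models; the helper names are made up for illustration.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def model_names(tags_payload: dict) -> list[str]:
    """Extract model names from the JSON returned by Ollama's /api/tags endpoint."""
    return [model["name"] for model in tags_payload.get("models", [])]


def fetch_local_models(base_url: str = OLLAMA_URL) -> list[str]:
    """Ask a running Ollama server which models are already downloaded."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=10) as resp:
        return model_names(json.load(resp))


# Usage (requires a running Ollama server):
#   print("deepseek-r1:14b" in fetch_local_models())
```

Splitting the parsing (model_names) from the network call keeps the parsing logic easy to check without a server.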

(iv) Define configuration

We define the parameters in a configuration YAML file, as shown below:

The model temperature is set to 0.6 (versus the typical default value of 0). This follows DeepSeek’s usage recommendations, which suggest a temperature range of 0.5 to 0.7 (0.6 recommended) to prevent endless repetitions or incoherent outputs.

Do check out the rather unique DeepSeek-R1 usage recommendations, especially for benchmarking, to ensure optimal performance when using DeepSeek-R1 models.

EVAL_N_EXAMPLES is the parameter for setting the number of questions from the full 198-question set to use for evaluation.
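As a hedged illustration of how such settings might be validated once loaded, here is a small sketch. Only EVAL_N_EXAMPLES is a key named in this article; MODEL_NAME, MODEL_TEMPERATURE, and the EvalConfig class are hypothetical names chosen for the example, and the input dict stands in for whatever a YAML loader would return.

```python
from dataclasses import dataclass

GPQA_DIAMOND_SIZE = 198  # number of questions in GPQA-Diamond, per the article


@dataclass(frozen=True)
class EvalConfig:
    """Hypothetical in-code mirror of the YAML settings."""

    model_name: str = "deepseek-r1:14b"
    model_temperature: float = 0.6
    eval_n_examples: int = 20

    def __post_init__(self) -> None:
        # DeepSeek's usage recommendations suggest 0.5 to 0.7 (0.6 recommended).
        if not 0.5 <= self.model_temperature <= 0.7:
            raise ValueError("temperature outside the suggested 0.5-0.7 range")
        if not 1 <= self.eval_n_examples <= GPQA_DIAMOND_SIZE:
            raise ValueError(f"eval_n_examples must be between 1 and {GPQA_DIAMOND_SIZE}")

    @classmethod
    def from_mapping(cls, raw: dict) -> "EvalConfig":
        """Build a config from a dict such as the one a YAML loader returns."""
        return cls(
            model_name=raw["MODEL_NAME"],  # hypothetical key
            model_temperature=float(raw["MODEL_TEMPERATURE"]),  # hypothetical key
            eval_n_examples=int(raw["EVAL_N_EXAMPLES"]),
        )


config = EvalConfig.from_mapping(
    {"MODEL_NAME": "deepseek-r1:14b", "MODEL_TEMPERATURE": 0.6, "EVAL_N_EXAMPLES": 20}
)
print(config)
```

Failing fast on an out-of-range temperature or question count is cheaper than discovering the mistake hours into a run.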

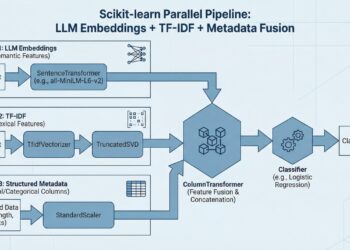

(v) Set up Sampler code

To support Ollama-based language models within the simple-evals framework, we create a custom wrapper class named OllamaSampler, stored in utils/samplers/ollama_sampler.py.

In this context, a sampler is a Python class that generates outputs from a language model based on a given prompt.

Since the existing samplers in simple-evals only cover providers like OpenAI and Claude, we need a sampler class that provides a compatible interface for Ollama.

The OllamaSampler extracts the GPQA question prompt, sends it to the model with a specified temperature, and returns the plain text response.

The _pack_message method is included to ensure the output format matches what the evaluation scripts in simple-evals expect.
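A minimal sketch of what such a sampler could look like is given below. It is not the repo’s implementation: it assumes Ollama’s REST /api/chat endpoint at the default local address, uses only the standard library, and adds a hypothetical transport parameter so the class can be exercised offline. The exact interface simple-evals expects (for example, the return type) may differ between versions of the library.

```python
import json
import urllib.request
from typing import Callable

Message = dict[str, str]


def _post_json(url: str, payload: dict) -> dict:
    """Send a JSON POST request and decode the JSON reply."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=3600) as resp:
        return json.load(resp)


class OllamaSampler:
    """Minimal sampler that forwards chat messages to a local Ollama server."""

    def __init__(
        self,
        model: str = "deepseek-r1:14b",
        temperature: float = 0.6,
        base_url: str = "http://localhost:11434",
        transport: Callable[[str, dict], dict] = _post_json,  # hypothetical hook
    ) -> None:
        self.model = model
        self.temperature = temperature
        self.base_url = base_url
        self._transport = transport  # injectable so the class can be tested offline

    def _pack_message(self, role: str, content: str) -> Message:
        """Wrap text in the role/content dict that chat-style evals pass around."""
        return {"role": role, "content": content}

    def __call__(self, message_list: list[Message]) -> str:
        payload = {
            "model": self.model,
            "messages": message_list,
            "stream": False,  # ask for one complete JSON reply instead of chunks
            "options": {"temperature": self.temperature},
        }
        reply = self._transport(f"{self.base_url}/api/chat", payload)
        return reply["message"]["content"]
```

With a running Ollama server, OllamaSampler()([{"role": "user", "content": "..."}]) would return the model’s full text, including any reasoning it emits before the final answer line.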

(vi) Create evaluation run script

The following code sets up the evaluation execution in main.py, including the use of the GPQAEval class from simple-evals to run GPQA benchmarking.

The run_eval() function is a configurable evaluation runner that tests LLMs via Ollama on benchmarks like GPQA.

It loads settings from the config file, sets up the appropriate evaluation class from simple-evals, and runs the model through a standardized evaluation process. It is saved in main.py, which can be executed with python main.py.
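The overall shape of such a runner can be sketched as follows. This is an illustration rather than the repo’s main.py: the eval class is passed in as eval_factory so the sketch runs without simple-evals installed, and the keyword arguments (n_repeats, num_examples), the callable eval interface, the result’s score attribute, and the MODEL_NAME and EVAL_N_REPEATS keys are all assumptions to verify against the library and your own config.

```python
from typing import Any, Callable


def run_eval(config: dict, sampler: Any, eval_factory: Callable[..., Any]) -> float:
    """Build an eval from the config, run it against the sampler, return the score.

    eval_factory stands in for an eval class such as GPQAEval; the keyword
    arguments used here are assumptions about that class.
    """
    evaluation = eval_factory(
        n_repeats=config.get("EVAL_N_REPEATS", 1),  # hypothetical config key
        num_examples=config["EVAL_N_EXAMPLES"],
    )
    result = evaluation(sampler)  # assumed: evals are callables that take a sampler
    print(f"{config['MODEL_NAME']}: score = {result.score:.1%}")
    return result.score
```

In the real project, eval_factory would be the GPQAEval class imported from the simple_evals folder and sampler would be an OllamaSampler instance. Passing both in as arguments keeps the runner easy to test with stand-ins.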

Following the steps above, we have successfully set up and executed the GPQA-Diamond benchmarking on the DeepSeek-R1 distilled model.

Wrapping It Up

In this article, we showed how to combine tools like Ollama and OpenAI’s simple-evals to explore and benchmark DeepSeek-R1’s distilled models.

The distilled models may not yet rival the 671B-parameter original model on challenging reasoning benchmarks like GPQA-Diamond. Nonetheless, they demonstrate how distillation can expand access to LLM reasoning capabilities.

Despite subpar scores on complex PhD-level tasks, these smaller variants may remain viable for less demanding scenarios, paving the way for efficient local deployment on a wider range of hardware.

Before you go

I welcome you to follow my GitHub and LinkedIn to stay updated with more engaging and practical content. Meanwhile, have fun benchmarking LLMs with Ollama and simple-evals!