Most of us assume that linear regression is about fitting a line to data.

But mathematically, that’s not what it’s doing.

It’s finding the closest possible vector to your target within the space spanned by the features.

To understand this, we need to change how we look at our data.

In Part 1, we got a basic idea of what a vector is and explored the concepts of dot products and projections.

Now, let’s apply these concepts to solve a linear regression problem.

We have this data: three houses (A, B, and C) with sizes 1, 2, and 3 and prices 4, 8, and 9.

The Usual Approach: Feature Space

When we try to understand linear regression, we usually start with a scatter plot of the dependent variable against the independent variable.

Each point on this plot represents a single row of data. We then try to fit a line through these points, with the goal of minimizing the sum of squared residuals.

To solve this mathematically, we write down the cost function and differentiate it to find the exact formulas for the slope and intercept.

As we discussed in my earlier blog on multiple linear regression (MLR), this is the standard way to understand the problem.

This is what we call feature space.

After going through that process, we get values for the slope and intercept. Here we need to notice one thing.

Let us say ŷᵢ is the predicted value at a certain point. We have the slope and intercept values, and now we need to predict the price from our data.

If ŷᵢ is the predicted price for House 1, we calculate it using

\[
\beta_0 + \beta_1 \cdot \text{size}
\]

What have we done here? We have a size value, and we scale it by a certain number, which we call the slope (β₁), to get as close to the original price as possible.

We also add an intercept (β₀) as a base value.
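As a rough sketch of the feature-space route described above, the snippet below applies the standard closed-form least-squares formulas for a single feature to the toy data. The function names `fit_line` and `predict` are made up for this illustration and do not come from the earlier blog.

```python
# Toy data from the article: sizes and prices of the three houses.
sizes = [1.0, 2.0, 3.0]
prices = [4.0, 8.0, 9.0]


def fit_line(x, y):
    """Closed-form least-squares intercept and slope for one feature."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    # slope = sum((x - x_mean) * (y - y_mean)) / sum((x - x_mean)^2)
    numerator = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    denominator = sum((xi - x_mean) ** 2 for xi in x)
    slope = numerator / denominator
    intercept = y_mean - slope * x_mean
    return intercept, slope


def predict(intercept, slope, size):
    """Scale the size by the slope, then add the base value."""
    return intercept + slope * size


beta_0, beta_1 = fit_line(sizes, prices)
predictions = [predict(beta_0, beta_1, s) for s in sizes]
print(beta_0, beta_1, predictions)
```

Each prediction is the size scaled by the slope plus the intercept, which is the pattern to keep in mind for the next section.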

Now let’s remember this point, and we will move on to the next perspective.

A Shift in Perspective

Let’s look at our data.

Now, instead of considering Price and Size as axes, let’s consider each house as an axis.

We have three houses, which means we can treat House A as the X-axis, House B as the Y-axis, and House C as the Z-axis.

Then, we simply plot our points.

When we consider the size and price columns as axes, we get three points, where each point represents the size and price of a single house.

However, when we consider each house as an axis, we get two points in a three-dimensional space.

One point represents the sizes of all three houses, and the other point represents the prices of all three houses.

This is what we call the column space, and this is where linear regression happens.
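The change of view is essentially a transpose of the data table. Here is a minimal sketch, assuming the table is stored as a dictionary keyed by house (the variable names are illustrative only):

```python
# Each entry is one house: (size, price). Values are the article's toy data.
rows = {"A": (1, 4), "B": (2, 8), "C": (3, 9)}

# Feature space: one 2D point per house, with size and price as the axes.
feature_space_points = list(rows.values())

# Column space: one 3D point per column, with one coordinate per house.
size_vector, price_vector = (tuple(column) for column in zip(*rows.values()))

print(feature_space_points)
print(size_vector, price_vector)
```

The same six numbers appear in both views; only the grouping changes, from three points with two coordinates to two points with three coordinates.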

From Points to Directions

Now let’s connect our two points to the origin and call them vectors.

Okay, let’s slow down and look at what we have done and why we did it.

Instead of a normal scatter plot where size and price are the axes (Feature Space), we considered each house as an axis and plotted the points (Column Space).

We are now saying that linear regression happens in this Column Space.

You might be thinking: Wait, we learn and understand linear regression using the traditional scatter plot, where we minimize the residuals to find a best-fit line.

Yes, that’s correct! But in Feature Space, linear regression is solved using calculus. We get the formulas for the slope and intercept using partial differentiation.

If you remember my earlier blog on MLR, we derived the formulas for the slopes and intercept when we had two features and a target variable.

You can see how messy it was to calculate those formulas using calculus. Now imagine you have 50 or 100 features; it gets complicated.

By switching to Column Space, we change the lens through which we view regression.

We look at our data as vectors and use the concept of projections. The geometry stays exactly the same whether we have 2 features or 2,000 features.

So, if calculus gets that messy, what’s the real benefit of this unchanging geometry? Let’s discuss exactly what happens in Column Space.

Why This Perspective Matters

Now that we have an idea of what Feature Space and Column Space are, let’s focus on the plot.

We have two points, where one represents the sizes and the other represents the prices of the houses.

Why did we connect them to the origin and consider them vectors?

Because, as we already discussed, in linear regression we are finding a number (which we call the slope or weight) to scale our independent variable.

We want to scale the Size so it gets as close to the Price as possible, minimizing the residual.

You cannot visually scale a point floating in space; you can only scale something when it has a length and a direction.

By connecting the points to the origin, they become vectors. Now they have both magnitude and direction, and we already know that we can scale vectors.

Okay, we established that we treat these columns as vectors because we can scale them, but there is something even more important to learn here.

Let’s look at our two vectors: the Size vector and the Price vector.

First, if we look at the Size vector (1, 2, 3), it points in a very specific direction based on the pattern of its numbers.

From this vector, we can see that House 2 is twice as large as House 1, and House 3 is three times as large.

There is a specific 1:2:3 ratio, which forces the Size vector to point in one exact direction.

Now, if we look at the Price vector (4, 8, 9), we can see that it points in a slightly different direction than the Size vector, based on its own numbers.

The direction of an arrow simply shows us the pure, underlying pattern of a feature across all our houses.

If our prices were exactly (2, 4, 6), then our Price vector would lie in exactly the same direction as our Size vector. That would mean size is a perfect, direct predictor of price.

But in real life, this is rarely possible. The price of a house does not depend on size alone; many other factors affect it, which is why the Price vector points slightly away.

That angle between the two vectors (1, 2, 3) and (4, 8, 9) represents the real-world noise.
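To put a number on that angle, here is a small sketch that uses the dot-product formula for the cosine of the angle between two vectors. The helper names `dot` and `angle_degrees` are assumptions made for this example.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def angle_degrees(u, v):
    """Angle between u and v, from cos(theta) = (u . v) / (|u| |v|)."""
    cosine = dot(u, v) / (math.sqrt(dot(u, u)) * math.sqrt(dot(v, v)))
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    cosine = max(-1.0, min(1.0, cosine))
    return math.degrees(math.acos(cosine))


size = (1, 2, 3)
price = (4, 8, 9)
perfect_price = (2, 4, 6)  # the hypothetical "perfect predictor" prices

angle_actual = angle_degrees(size, price)
angle_perfect = angle_degrees(size, perfect_price)
print(angle_actual, angle_perfect)
```

For the actual prices the angle is small but not zero, while for the (2, 4, 6) prices it is zero up to rounding, which matches the idea that the two vectors would then share one direction.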

The Geometry Behind Regression

Now, we use the concept of projections that we learned in Part 1.

Let’s consider our Price vector (4, 8, 9) as a destination we want to reach. However, we have only one direction we can travel in, which is the path of our Size vector (1, 2, 3).

If we travel along the direction of the Size vector, we can’t perfectly reach our destination because it lies in a different direction.

But we can travel to a specific point on our path that gets us as close to the destination as possible.

The shortest path from our destination down to that exact point makes a perfect 90-degree angle with our path.

In Part 1, we discussed this concept using the ‘highway and home’ analogy.

We are applying the very same concept here. The only difference is that in Part 1 we were in a 2D space, and here we are in a 3D space.

I referred to the feature as a ‘way’ or a ‘highway’ because we have only one direction to travel in.

This difference between a ‘way’ and a ‘direction’ will become much clearer later when we add multiple directions!

A Simple Way to See This

We can already see that this is the very same concept as vector projections.

We derived a formula for this in Part 1. So, why wait?

Let’s just apply the formula, right?

No. Not yet.

There is something important we need to understand first.

In Part 1, we were dealing with a 2D space, so we used the highway and home analogy. But here, we are in a 3D space.

To understand it better, let’s use a new analogy.

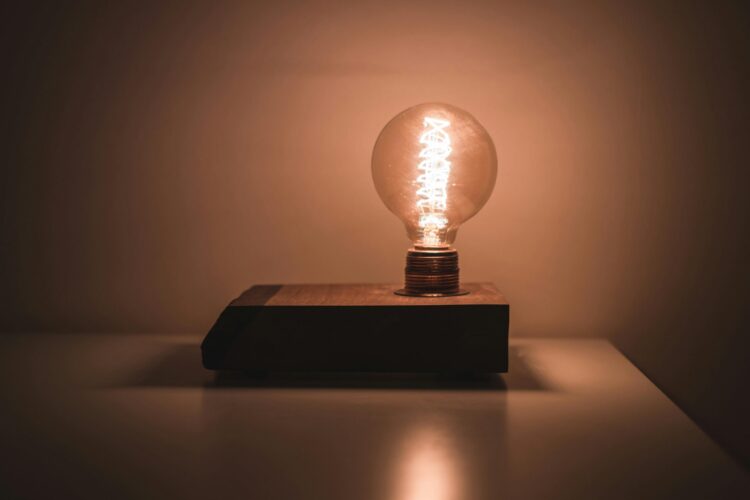

Consider this 3D space as a physical room. There is a lightbulb hovering in the room at the coordinates (4, 8, 9).

The path from the origin to that bulb is our Price vector, which we call the target vector.

We want to reach that bulb, but our movements are restricted.

We can only walk along the direction of our Size vector (1, 2, 3), moving either forward or backward.

Based on what we learned in Part 1, you might say, ‘Let’s just apply the projection formula to find the closest point on our path to the bulb.’

And you would be right. That is the absolute closest we can get to the bulb in that direction.
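Here is a minimal sketch of that single-direction projection, assuming the standard formula scale = (price · size) / (size · size). It uses exact fractions so the perpendicularity check has no rounding error; the variable names are illustrative.

```python
from fractions import Fraction


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


size = (1, 2, 3)    # the only direction we may walk along
price = (4, 8, 9)   # the lightbulb we want to reach

# How far to walk along the Size direction to get closest to the bulb.
scale = Fraction(dot(price, size), dot(size, size))

# The closest point on the path, and the gap from that point up to the bulb.
closest_point = tuple(scale * s for s in size)
gap = tuple(p - c for p, c in zip(price, closest_point))

print(scale, closest_point, gap)
print(dot(gap, size))  # zero means the gap is perpendicular to the path
```

Note that this walk uses the Size direction only, so it is not yet the final regression answer.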

Why We Need a Base Value

But before we move forward, we should notice one more thing here.

We already discussed that we are finding a single number (a slope) to scale our Size vector so we can get as close to the Price vector as possible. We can understand this with a simple equation:

Price = β₁ × Size

But what if the size is zero? Whatever the value of β₁ is, we get a predicted price of zero.

But is this right? We are saying that if the size of a house is 0 square feet, the price of the house is 0 dollars.

This is not correct, because there has to be a base value for each house. Why?

Because even when there is no physical building, the empty plot of land it sits on still has a value. The price of the final house depends heavily on this base plot value.

We call this base value β₀. In traditional algebra, we already know this as the intercept, which is the term that shifts a line up and down.

So, how do we add a base value in our 3D room? We do it by adding a Base Vector.

Combining Directions

Now we have added a base vector (1, 1, 1), but what does this base vector actually do?

From the plot above, we can see that by adding a base vector, we have one more direction to move in within that space.

We can move in the directions of both the Size vector and the Base vector.

Don’t get confused by thinking of them as “ways”; they are directions, and this will become clear once we reach a point by moving along both of them.

Without the base vector, our base value was zero. We started with a base value of zero for every house. Now that we have a base vector, let’s first move along it.

For example, let’s move 3 steps in the direction of the Base vector. By doing so, we reach the point (3, 3, 3). We are currently at (3, 3, 3), and we want to get as close as possible to our Price vector.

This means the base value of every house is 3 dollars, and our new starting point is (3, 3, 3).

Next, let’s move 2 steps in the direction of our Size vector (1, 2, 3). This means calculating 2 × (1, 2, 3) = (2, 4, 6).

Therefore, from (3, 3, 3), we move 2 steps along the House A axis, 4 steps along the House B axis, and 6 steps along the House C axis, which takes us to (5, 7, 9).

Basically, we are adding the vectors here, and the order doesn’t matter.

Whether we move first along the base vector or the size vector, we end up at the very same point. We just moved along the base vector first to understand the idea better!
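The walk above can be checked with a few lines of code. This is only a sketch; the helpers `scale` and `add` are names chosen for this example.

```python
def scale(c, v):
    """Multiply every entry of vector v by the number c."""
    return tuple(c * x for x in v)


def add(u, v):
    """Add two vectors entry by entry."""
    return tuple(a + b for a, b in zip(u, v))


base = (1, 1, 1)
size = (1, 2, 3)

# 3 steps along the Base vector, then 2 steps along the Size vector.
base_first = add(scale(3, base), scale(2, size))

# The same steps taken in the opposite order.
size_first = add(scale(2, size), scale(3, base))

print(base_first, size_first)
```

Both orders land on the same point, because vector addition is commutative.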

The Space of All Possible Predictions

This way, we use both directions to get as close as possible to our Price vector. In the previous example, we scaled the Base vector by 3, which means β₀ = 3, and we scaled the Size vector by 2, which means β₁ = 2.

From this, we can see that we need the best combination of β₀ and β₁, which tells us how many steps to travel along the base vector and how many steps along the size vector to reach the point closest to our Price vector.

If we try all the different combinations of β₀ and β₁, we get an infinite number of points. Let’s see what that looks like.

We can see that all the points formed by the different combinations of β₀ and β₁ along the directions of the Base vector and Size vector form a flat 2D plane in our 3D space.

Now, we have to find the exact point on that plane which is nearest to our Price vector.

We already know how to get to that point. As we discussed in Part 1, we find the shortest path by using the concept of geometric projections.

In Part 1, we used our ‘home and highway’ analogy, where the shortest path from the highway to the home formed a 90-degree angle with the highway.

There, we moved in one dimension, but here we are moving on a 2D plane. Nonetheless, the rule stays the same.

The shortest distance between the tip of our price vector and a point on the plane is where the path between them forms a perfect 90-degree angle with the plane.
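To get a feel for "trying different combinations", here is a brute-force sketch that samples a small grid of points on the plane and keeps the one nearest to the Price vector. This is not the projection method the article uses, only a sanity check, and the grid range (0 to 5 in steps of 0.5) is an arbitrary choice made for this illustration.

```python
base = (1.0, 1.0, 1.0)
size = (1.0, 2.0, 3.0)
price = (4.0, 8.0, 9.0)


def point_on_plane(b0, b1):
    """The point reached by b0 steps along base and b1 steps along size."""
    return tuple(b0 * b + b1 * s for b, s in zip(base, size))


def squared_distance(u, v):
    """Squared straight-line distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


# Hypothetical search grid: both coefficients from 0.0 to 5.0 in steps of 0.5.
candidates = [(i * 0.5, j * 0.5) for i in range(11) for j in range(11)]

best_b0, best_b1 = min(
    candidates,
    key=lambda c: squared_distance(point_on_plane(*c), price),
)
print(best_b0, best_b1, point_on_plane(best_b0, best_b1))
```

A grid can only find the best point among the samples it contains. The perpendicularity rule is what pins down the exact closest point on the whole plane without any searching.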

From a Point to a Vector

Before we dive into the math, let us clarify exactly what is happening so that it feels easy to follow.

Until now, we have been talking about finding the specific point on our plane that is closest to the tip of our target price vector. But what do we actually mean by this?

To reach that point, we have to travel across our plane.

We do this by moving along our two available directions, which are our Base and Size vectors, and scaling them.

When you scale and add two vectors together, the result is always a vector!

If we draw a straight line from the origin directly to that exact point on the plane, we create what is called the Prediction Vector.

Moving along this single Prediction Vector gets us to the very same destination as taking those scaled steps along the Base and Size directions.

The Vector Subtraction

Now we have two vectors: the Target (our Price vector) and the Prediction.

We want to know the exact difference between them. In linear algebra, we find this difference using vector subtraction.

When we subtract our Prediction from our Target, the result is our Residual Vector, also known as the Error Vector.

This means that the dotted red line is not just a measurement of distance. It is a vector itself!

When we work in feature space, we try to minimize the sum of squared residuals. Here, by finding the point on the plane closest to the price vector, we are looking for where the length of the residual vector is the smallest. The squared length of the residual vector is exactly the sum of squared residuals, so both views minimize the same thing!
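A short sketch of the subtraction, assuming the prediction is built as b0 × Base + b1 × Size. The function names are illustrative. It compares the (3, 2) example from the walk-through with the coefficients (2, 2.5) that the article derives further down.

```python
base = (1, 1, 1)
size = (1, 2, 3)
price = (4, 8, 9)


def residual(b0, b1):
    """Target minus prediction, where prediction = b0 * base + b1 * size."""
    prediction = [b0 * b + b1 * s for b, s in zip(base, size)]
    return [p - q for p, q in zip(price, prediction)]


def squared_length(v):
    """Squared length of a vector, i.e. the sum of its squared entries."""
    return sum(x * x for x in v)


first_try = residual(3, 2)    # the (3, 2) example from the walk-through
better = residual(2, 2.5)     # coefficients derived later in the article

print(first_try, squared_length(first_try))
print(better, squared_length(better))
```

The squared length of each residual vector is the sum of squared residuals for that choice of coefficients, so a shorter residual vector means a better fit.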

Linear Regression Is a Projection

Now let’s begin the maths.

Let’s start by representing everything in matrix form.

\[
X =
\begin{bmatrix}
1 & 1 \\
1 & 2 \\
1 & 3
\end{bmatrix}
\quad
y =
\begin{bmatrix}
4 \\
8 \\
9
\end{bmatrix}
\quad
\beta =
\begin{bmatrix}
b_0 \\
b_1
\end{bmatrix}
\]

Here, the columns of X represent the base and size directions, and we are trying to combine them to reach y.

\[
\hat{y} = X\beta
= b_0
\begin{bmatrix}
1 \\
1 \\
1
\end{bmatrix}
+
b_1
\begin{bmatrix}
1 \\
2 \\
3
\end{bmatrix}
\]

Every prediction is just a combination of these two directions.

\[
e = y - X\beta
\]

This error vector is the gap between where we want to be and where we actually reach.

For this gap to be the shortest possible, it must be perfectly perpendicular to the plane formed by the columns of X.

\[
X^T e = 0
\]

Now we substitute e into this condition.

\[
X^T (y - X\beta) = 0
\]

\[
X^T y - X^T X \beta = 0
\]

\[
X^T X \beta = X^T y
\]

Multiplying both sides by the inverse of X^T X, which exists here because the base and size columns point in different directions, we get the equation for β.

\[
\beta = (X^T X)^{-1} X^T y
\]
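To make the perpendicularity condition X^T e = 0 concrete, here it is written out for this example, assuming e_1, e_2, e_3 (names introduced only for this illustration) denote the three entries of e. Each row of X^T is one of our directions, so the condition is just two dot products that must both be zero:

\[
X^T e =
\begin{bmatrix}
1 & 1 & 1 \\
1 & 2 & 3
\end{bmatrix}
\begin{bmatrix}
e_1 \\
e_2 \\
e_3
\end{bmatrix}
=
\begin{bmatrix}
e_1 + e_2 + e_3 \\
e_1 + 2e_2 + 3e_3
\end{bmatrix}
=
\begin{bmatrix}
0 \\
0
\end{bmatrix}
\]

The first row says the error is perpendicular to the base vector, and the second row says it is perpendicular to the size vector.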

Now we compute each part step by step.

\[
X^T =
\begin{bmatrix}
1 & 1 & 1 \\
1 & 2 & 3
\end{bmatrix}
\]

\[
X^T X =
\begin{bmatrix}
3 & 6 \\
6 & 14
\end{bmatrix}
\]

\[
X^T y =
\begin{bmatrix}
21 \\
47
\end{bmatrix}
\]

Next, we compute the inverse of X^T X.

\[
\begin{aligned}
(X^T X)^{-1}
&=
\frac{1}{(3 \times 14 - 6 \times 6)}
\begin{bmatrix}
14 & -6 \\
-6 & 3
\end{bmatrix} \\
&=
\frac{1}{42 - 36}
\begin{bmatrix}
14 & -6 \\
-6 & 3
\end{bmatrix} \\
&=
\frac{1}{6}
\begin{bmatrix}
14 & -6 \\
-6 & 3
\end{bmatrix}
\end{aligned}
\]

Now we multiply this by X^T y.

\[
\begin{aligned}
\beta
&=
\frac{1}{6}
\begin{bmatrix}
14 & -6 \\
-6 & 3
\end{bmatrix}
\begin{bmatrix}
21 \\
47
\end{bmatrix} \\
&=
\frac{1}{6}
\begin{bmatrix}
14 \cdot 21 - 6 \cdot 47 \\
-6 \cdot 21 + 3 \cdot 47
\end{bmatrix} \\
&=
\frac{1}{6}
\begin{bmatrix}
294 - 282 \\
-126 + 141
\end{bmatrix}
=
\frac{1}{6}
\begin{bmatrix}
12 \\
15
\end{bmatrix} \\
&=
\begin{bmatrix}
2 \\
2.5
\end{bmatrix}
\end{aligned}
\]

With these values, we can finally compute the exact point on the plane.

\[
\hat{y} =
2
\begin{bmatrix}
1 \\
1 \\
1
\end{bmatrix}
+
2.5
\begin{bmatrix}
1 \\
2 \\
3
\end{bmatrix}
=
\begin{bmatrix}
4.5 \\
7.0 \\
9.5
\end{bmatrix}
\]

This is the closest possible point on the plane to our target.

We got the point (4.5, 7.0, 9.5). This is our prediction.

This point is the closest to the tip of the price vector, and to reach it, we need to move 2 steps along the base vector, which gives our intercept, and 2.5 steps along the size vector, which gives our slope.
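The hand calculation can be double-checked with a short script. This is a sketch using only the Python standard library and exact fractions; the helper functions (`transpose`, `matmul`, `inverse_2x2`) are written for this example and only cover the small matrices used here.

```python
from fractions import Fraction


def transpose(m):
    """Swap the rows and columns of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Matrix product of a and b (lists of rows)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def inverse_2x2(m):
    """Inverse of a 2x2 integer matrix via the adjugate formula."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("the columns of X are not independent")
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]


X = [[1, 1], [1, 2], [1, 3]]   # columns: base vector and size vector
y = [[4], [8], [9]]            # price vector as a column

Xt = transpose(X)
beta = matmul(inverse_2x2(matmul(Xt, X)), matmul(Xt, y))  # (X^T X)^-1 X^T y
y_hat = matmul(X, beta)                                   # prediction vector
e = [[yi[0] - pi[0]] for yi, pi in zip(y, y_hat)]         # residual vector
Xt_e = matmul(Xt, e)                                      # should be all zeros

print(beta, y_hat, e, Xt_e)
```

The last line of the computation checks that the residual is perpendicular to both the base and size directions, which is the condition the derivation started from.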

What Changed Was the Perspective

Let’s recap what we have done in this blog. We did not follow the usual method for solving the linear regression problem, which is the calculus method where we differentiate the loss function to get the equations for the slope and intercept.

Instead, we chose another method: vectors and projections.

We started with a Price vector, and we needed to build a model that predicts the price of a house based on its size.

In terms of vectors, that meant we initially had only one direction to move in to predict the price of the house.

Then, we added the Base vector after realizing there should be a baseline starting value.

Now we had two directions, and the question was how close we could get to the tip of the Price vector by moving in these two directions.

We are not just fitting a line; we are working inside a space.

In feature space: we minimize error

In column space: we drop perpendiculars

By using different combinations of the slope and intercept, we got an infinite number of points that formed a plane.

The closest point, which we needed to find, lies somewhere on that plane, and we found it using the concept of projections and the dot product.

Through that geometry, we found the right point and derived the Normal Equation!

You may ask, “Don’t we get this normal equation by using calculus as well?” You are exactly right! That is the calculus view, but here we are taking the geometric, linear algebra view to really understand the geometry behind the math.

Linear regression is not just optimization.

It is projection.

I hope you learned something from this blog!

If you think something is missing or could be improved, feel free to leave a comment.

If you haven’t read Part 1 yet, you can read it here. It covers the basic geometric intuition behind vectors and projections.

Thanks for reading!