Hands On For all the buzz surrounding them, AI agents are simply another form of automation that can perform tasks using the tools you've provided. Think of them as smart macros that make decisions and go beyond simple if/then rules to handle edge cases in input data. Fortunately, it's easy enough to code your own agents and below we'll show you how.

Since ChatGPT made its debut in late 2022, literally dozens of frameworks for building AI agents have emerged. Of them, LangFlow is one of the easier and more accessible.

Originally developed at Logspace before being acquired by DataStax, which was later bought by Big Blue, LangFlow is a low-code/no-code agent builder based on LangChain. The platform allows users to assemble LLM-augmented automations by dragging, dropping, and connecting various components and tools.

In this hands-on guide, we'll be taking a closer look at what AI agents are, how to build them, and some of the pitfalls you're likely to run into along the way.

The anatomy of an agent

Before we jump into building our first agent, let's talk about what comprises an agent.

At its most basic, an agent is comprised of three main components: a system prompt, tools, and the model.

Generally, AI agents are going to have three main components, not counting the output/action they ultimately perform or the input/trigger that started it all.

- A system prompt or persona: This describes, in natural language, the agent's purpose, the tools available to it, and how and when they should be used. For example: “You're a helpful AI assistant. You have access to the GET_CURRENT_DATE tool which can be used to complete time or date related tasks.”

- Tools: A set of tools and resources that the model can call upon to complete its task. This can be as simple as a GET_CURRENT_DATE or PERFORM_SEARCH function, or an MCP server that provides API access to a remote database.

- The Model: The LLM, which is responsible for processing the input data or trigger and using the tools available to it to complete a task or perform an action.

For more complex multi-step tasks, multiple models, tools, and system prompts may be used.
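To make the three components concrete, here is a minimal Python sketch of how a system prompt, a tool table, and a model fit together. The `stub_model` function is a hypothetical stand-in for a real LLM call, and the `{"tool": ...}` response shape is an assumption made for illustration, not LangFlow's internal format.

```python
from datetime import date
from typing import Callable

# System prompt: tells the model its purpose and which tools it may use.
SYSTEM_PROMPT = (
    "You're a helpful AI assistant. You have access to the GET_CURRENT_DATE "
    "tool which can be used to complete time or date related tasks."
)

# Tools: plain functions the model is allowed to call, keyed by name.
TOOLS: dict[str, Callable[[], str]] = {
    "GET_CURRENT_DATE": lambda: date.today().isoformat(),
}


def stub_model(system_prompt: str, user_input: str) -> dict:
    """Hypothetical stand-in for an LLM: requests a tool for date questions."""
    text = user_input.lower()
    if "date" in text or "today" in text:
        return {"tool": "GET_CURRENT_DATE"}
    return {"answer": "I can only help with date questions in this sketch."}


def run_agent(user_input: str) -> str:
    """One pass of the loop: ask the model, run any requested tool, reply."""
    decision = stub_model(SYSTEM_PROMPT, user_input)
    tool_name = decision.get("tool")
    if tool_name in TOOLS:
        return f"Today's date is {TOOLS[tool_name]()}."
    return decision.get("answer", "No answer produced.")
```

Calling `run_agent("What is today's date?")` routes through the tool table, while any other input falls back to the model's plain answer. A real agent would replace `stub_model` with a call to an LLM and feed the tool result back to it.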

What you'll need:

- To get started, you'll need a Mac, Windows, or Linux box to run LangFlow Desktop.

- Linux users will need to download and install Docker Engine first using the instructions here.

- Access to an LLM and embedding model. We'll be using Ollama to run these on a local system, but you can just as easily open an OpenAI developer account and use their models as well.

If you're using a Mac or PC, deploying LangFlow Desktop is as simple as downloading and running the installer from the LangFlow website.

If you're running Linux, you'll need to deploy the app as a Docker container. Thankfully this is a lot easier than it sounds. Once you've got the latest release of Docker Engine installed, you can launch the LangFlow server using the one-liner below:

sudo docker run -d -p 7860:7860 --name langflow langflowai/langflow:latest

After a few minutes, the LangFlow interface will be available at http://localhost:7860.

Warning: If you're deploying the Docker container on a server that's exposed to the web, make sure to adjust your firewall rules appropriately or you might end up exposing LangFlow to the net.

Getting to know LangFlow Desktop

LangFlow Desktop's homepage is divided into projects on the left and flows on the right.

Opening LangFlow Desktop, you'll see that the application is divided into two sections: Projects and Flows. You can think of projects as folders where your agents are stored.

Clicking “New Flow” will present you with a variety of pre-baked templates to get you started.

LangFlow's no-code interface allows you to build agents by dragging and dropping components from the sidebar into a playground on the right.

On the left side of LangFlow Desktop, we see a sidebar containing various first and third-party components that we'll use to assemble an agent.

Dragging these into the “Playground,” we can then configure and connect them to other components in our agent flow.

In the case of the Language Model component shown here, you'd want to enter your OpenAI API key and select your preferred version of GPT. Meanwhile, for Ollama you'll want to enter your Ollama API URL, which for most users will be http://localhost:11434, and select a model from the drop down.
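If the model drop down comes up empty, it can help to confirm that Ollama is reachable at that URL. The sketch below queries Ollama's `/api/tags` endpoint, which lists locally pulled models. The function names and the five-second timeout are our own choices, and it assumes the default address mentioned above.

```python
import json
from urllib.request import urlopen

OLLAMA_URL = "http://localhost:11434"  # default from the text; adjust as needed


def parse_model_names(payload: dict) -> list[str]:
    """Extract model names from the JSON returned by Ollama's /api/tags."""
    return [m["name"] for m in payload.get("models", [])]


def list_local_models(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> list[str]:
    """Ask a running Ollama server which models it has pulled."""
    with urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return parse_model_names(json.load(resp))
```

With Ollama running, `print(list_local_models())` should show the same model names LangFlow offers in the drop down. A connection error suggests the server isn't running or is listening on a different address.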

If you need help getting started with Ollama, check out our guide here.

Building your first agent

With LangFlow up and running, let's take a look at a couple of examples of how to use it. If you'd like to try these for yourself, we've uploaded our templates to a GitHub repo here.

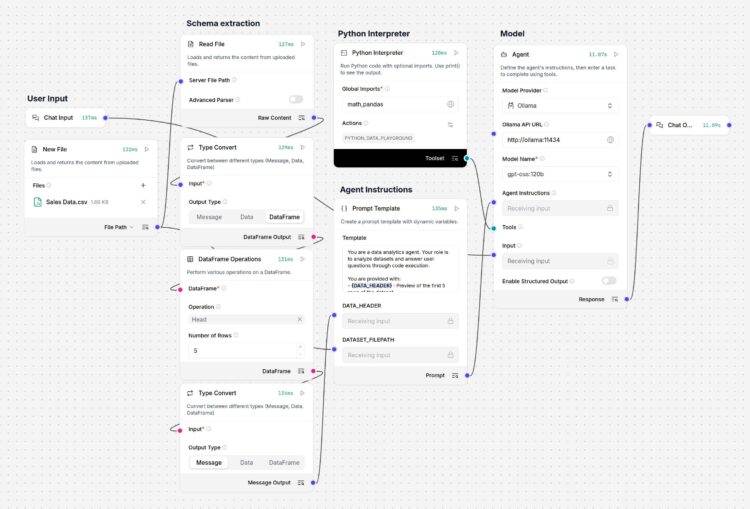

In this example, we've built a relatively basic agent to analyze spreadsheets stored as CSVs, based on user prompts.

This data analytics agent uses a Python interpreter and an uploaded CSV to answer user prompts.

To help the model understand the file's internal structure, we've used the Type Convert and DataFrame Operations components to extract the dataset's schema.

This, along with the document's full file path, is passed to a prompt template, which serves as the model's system prompt. Meanwhile, the user prompt is passed through to the model as an instruction.

LangFlow's Prompt Template enables multiple data sources to be combined into a single unified prompt.

Looking a bit closer at the prompt template, we see {DATA_HEADER} and {DATASET_FILEPATH} defined as variables. By placing these in {} we've created additional nodes to which we can connect our dataset schema and file path.
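The variable substitution works much like Python's own string formatting. As a rough analogy, the sketch below reads a CSV header and fills both variables with `str.format`. Only the two variable names and the PYTHON_DATA_PLAYGROUND tool name come from the flow described here; the template wording and helper functions are hypothetical, and this is not LangFlow's own code.

```python
import csv
import io

# Hypothetical template text; only the two variable names come from the article.
PROMPT_TEMPLATE = (
    "You are a data analyst. The dataset is stored at {DATASET_FILEPATH}.\n"
    "Its columns are: {DATA_HEADER}.\n"
    "Use the PYTHON_DATA_PLAYGROUND tool to answer questions about it."
)


def read_header(csv_text: str) -> list[str]:
    """Return the column names from the first row of CSV text."""
    return next(csv.reader(io.StringIO(csv_text)))


def build_system_prompt(csv_text: str, filepath: str) -> str:
    """Fill both template variables, as the connected nodes would."""
    header = ", ".join(read_header(csv_text))
    return PROMPT_TEMPLATE.format(DATA_HEADER=header, DATASET_FILEPATH=filepath)
```

Giving the model the column names up front means it doesn't have to guess at the schema before writing its first line of analysis code.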

Beyond this, the system prompt contains basic information on how to service user requests, including instructions on how to call the PYTHON_DATA_PLAYGROUND Python interpreter, which we see just above the prompt template.

This Python interpreter is connected to the model's “tool” node and, apart from allowlisting the Pandas and Math libraries, we haven't changed much more than the interpreter's slug and description.

The agent's data analytics functionality is achieved using a Python interpreter rather than relying on the model to make sense of the CSV on its own.

Based on the information provided to it in the system and user prompts, the model can use this Python sandbox to execute code snippets and extract insights from the dataset.
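To illustrate the idea behind allowlisting libraries, here is a small sketch that statically checks which modules a generated snippet imports. It is our own illustration using Python's `ast` module, not how LangFlow's interpreter component enforces its allowlist, and a static check like this is no substitute for a real sandbox.

```python
import ast

ALLOWED_MODULES = {"pandas", "math"}  # the two libraries named in the text


def disallowed_imports(source: str) -> set[str]:
    """Return top-level module names imported by `source` that are not allowed."""
    found: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module:
            found.add(node.module.split(".")[0])
    return found - ALLOWED_MODULES
```

A snippet that only imports `pandas` and `math` returns an empty set, while one that reaches for `os` or `subprocess` is flagged. Dynamic tricks such as `__import__` would slip past this check, which is why isolation still matters.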

If we ask the agent “how many displays we sold,” in the LangFlow playground's chat, we can see what code snippets the model executed using the Python interpreter and the raw response it got back.

As you can see, to answer our question, the model generated and executed a Python code snippet to extract the relevant information using Pandas.

Obviously, this introduces some risk of hallucinations, particularly on smaller models. In this example, the agent is set up to analyze documents it hasn't seen before. However, if you know what your input looks like (for example, if the schema doesn't change between reports) it's possible to preprocess the data, like we've done in the example below.

Rather than relying on the model to execute the right code every time, this example uses a modified Python interpreter to preprocess the data of two CSVs or Excel files before passing it to the model for review.

With a couple of tweaks to the Python interpreter, we've created a custom component that uses Pandas to compare a new spreadsheet against a reference sheet. This way the model only needs to use the Python interpreter to investigate anomalies or answer questions not already addressed by this preprocessing script.
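A preprocessing step along these lines might look like the following sketch. Note that it swaps Pandas for Python's standard `csv` module to stay dependency-free, and the function names, the key-column approach, and the output structure are all assumptions rather than the contents of our custom component.

```python
import csv
import io


def load_rows(csv_text: str, key: str) -> dict[str, dict[str, str]]:
    """Index CSV rows by the value in the `key` column."""
    return {row[key]: row for row in csv.DictReader(io.StringIO(csv_text))}


def compare_sheets(reference_csv: str, new_csv: str, key: str) -> dict[str, list]:
    """Summarize added, removed, and changed rows between two sheets."""
    ref, new = load_rows(reference_csv, key), load_rows(new_csv, key)
    changed = [
        {"key": k, "column": col, "reference": ref[k][col], "new": new[k].get(col)}
        for k in sorted(ref.keys() & new.keys())
        for col in ref[k]
        if ref[k][col] != new[k].get(col)
    ]
    return {
        "added": sorted(new.keys() - ref.keys()),
        "removed": sorted(ref.keys() - new.keys()),
        "changed": changed,
    }
```

Because the comparison is deterministic, the model receives a compact summary of differences instead of two raw files, leaving it less room to miscount or invent rows.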

Models and prompts matter

AI agents are just automations that should, in theory, be better at handling edge cases, fuzzy logic, or improperly formatted requests.

We emphasize “in theory,” because the process by which LLMs execute tool calls is a bit of a black box. What's more, our testing showed smaller models had a higher propensity for hallucinations and malformed tool calls.
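One common defense against malformed tool calls is to validate them before anything gets executed. The sketch below assumes, purely for illustration, that the model emits a JSON object with `name` and `arguments` fields. The `TOOL_SPECS` registry and its required arguments are hypothetical, and the actual wire format depends on the model and framework in use.

```python
import json

# Hypothetical registry: tool name -> required argument names.
TOOL_SPECS = {
    "PYTHON_DATA_PLAYGROUND": {"code"},
    "GET_CURRENT_DATE": set(),
}


def validate_tool_call(raw: str) -> tuple[bool, str]:
    """Check a model-emitted tool call before running it."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, f"not valid JSON: {exc.msg}"
    if not isinstance(call, dict):
        return False, "tool call must be a JSON object"
    name = call.get("name")
    if not isinstance(name, str) or name not in TOOL_SPECS:
        return False, f"unknown tool: {name!r}"
    args = call.get("arguments", {})
    if not isinstance(args, dict):
        return False, "arguments must be a JSON object"
    missing = TOOL_SPECS[name] - set(args)
    if missing:
        return False, f"missing arguments: {sorted(missing)}"
    return True, "ok"
```

Returning the reason alongside the verdict makes it possible to feed the error back to the model and ask it to try again, rather than failing silently.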

Switching from a locally-hosted version of OpenAI's gpt-oss-120b to GPT-5 Nano or Mini, we saw a drastic improvement in the quality and accuracy of our outputs.

If you're struggling to get reliable results from your agentic pipelines, it may be because the model isn't well suited to the role. In some cases, it may be necessary to fine tune a model specifically for that application. You can find our beginner's guide to fine tuning here.

Along with the model, the system prompt can have a significant impact on the agent's performance. The same written instructions and examples you might give an intern on how to perform a task are just as useful for guiding the model on what you do and don't want out of it.

In some cases, it may make more sense to break up a task and delegate the pieces to different models or system prompts in order to minimize the complexity of each step, reduce the likelihood of hallucinations, or optimize costs by having cheaper models tackle the easier pieces of the pipeline.

Next steps

Up to this point, our examples have been triggered by a chat input and returned a chat output. However, this isn't strictly required. You could set up the agent to detect new files in a directory, process them, and save its output as a file.
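Outside of LangFlow's own components, a file-triggered pipeline of that kind can be sketched in plain Python. Everything here is hypothetical scaffolding: the `agent` callable stands in for whatever invokes your flow, and the `*.csv` filter and `.report.txt` suffix are arbitrary choices.

```python
from pathlib import Path
from typing import Callable


def process_new_files(
    inbox: Path,
    outbox: Path,
    seen: set[str],
    agent: Callable[[str], str],
) -> list[Path]:
    """Run `agent` on each unseen file in `inbox`; write results to `outbox`."""
    outbox.mkdir(parents=True, exist_ok=True)
    written = []
    for path in sorted(inbox.glob("*.csv")):
        if path.name in seen:
            continue  # already handled on an earlier pass
        result = agent(path.read_text(encoding="utf-8"))
        out_path = outbox / f"{path.stem}.report.txt"
        out_path.write_text(result, encoding="utf-8")
        seen.add(path.name)
        written.append(out_path)
    return written
```

Calling this function in a loop with a short `time.sleep` between passes gives a simple polling watcher. Tracking processed names in `seen` keeps the agent from reprocessing the same report twice.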

LangFlow supports integrations with a wide variety of services that can be extended using agentic protocols or custom components written in Python. There's even a Home Assistant tie-in if you want to build an agent to automate your smart home lights and gadgets.

Speaking of third-party integrations, we've hardly scratched the surface of the kinds of AI-enhanced automation that are possible using frameworks like LangFlow. In addition to the examples we looked at here, we highly recommend checking out LangFlow's collection of templates and workflows.

Once you're done with that, you may want to explore what's possible using frameworks like Model Context Protocol (MCP). Originally developed by Anthropic, this agentic protocol is designed to help developers connect models to data and tools. You can find more on MCP in our hands-on guide here.

And while you're at it, you may want to brush up on the security implications of agentic AI, including threats like prompt injection or remote code execution, and ensure your environment is adequately sandboxed before deploying agents in production. ®
